Multiplexing

Multiplexers, often called muxes, are extremely important to telecommunications. Their main reason for being is to reduce network costs by minimizing the number of communications links needed between two points. Like all other computing systems, multiplexers have evolved. Each new generation has additional intelligence, and additional intelligence brings more benefits. The benefits that have accrued include the following:

- The capability to compress data in order to encode certain characters with fewer bits than normally required and free up additional capacity for the movement of other information.

- The capability to detect and correct errors between the two points being connected to ensure that data integrity and accuracy are maintained.

- The capability to manage transmission resources on a dynamic basis, with such things as priority levels. If you have only one 64Kbps channel left, who gets it? Or what happens when the link between San Francisco and Hong Kong goes down? How else can you reroute traffic to get the high-priority information where it needs to go? Multiplexers help solve such problems.

The more intelligent the multiplexer, the more actively and intelligently it can work to dynamically make use of the available transmission resources.

When you're working with network design and telecommunications, you need to consider line cost versus device cost. You can provide extremely high levels of service by ensuring that everybody always has a live and available communications link. But you must pay for those services on an ongoing basis, and their costs become extremely high. You can offset the costs associated with providing large numbers of lines by instead using devices such as multiplexers that help make more intelligent use of a smaller number of lines.

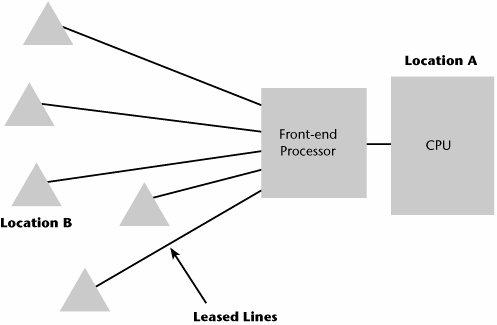

Figure 1.12 illustrates a network that has no multiplexers. Let's say this network is for Bob's department stores. The CPU is at Location A, a data center that's in New York that manages all the credit authorization functions for all the Bob's stores. Location B, the San Francisco area, has five different Bob's stores in different locations. Many customers will want to make purchases using their Bob's credit cards, so we need to have a communications link back to the New York credit authorization center so that the proper approvals and validations can be made. Given that it's a sales transaction, the most likely choice of communications link is the use of a leased line from each of the locations in San Francisco back to the main headquarters in New York.

Figure 1.12. A network without multiplexers

Remember that using leased lines is a very expensive type of network connection. Because this network resource is reserved for one company's usage only, nobody else has access to that bandwidth, and providers can't make use of it in the evenings or on the weekends to carry residential traffic, so the company pays a premium. Even though it is the most expensive approach to networking, the vast majority of data networking today still takes place using leased lines because they make the network manager feel very much in control of the network's destiny (but don't forget the added incentive for telco salespeople to push these highly profitable lines). With leased lines, the bandwidth is not affected by sudden shifts of traffic elsewhere in the network, the company can apply its own sophisticated network management tools, and the network manager feels a sense of security in knowing who the user communities are at each end of that link. But leased lines have another negative attribute: They are mileage sensitive, so the longer the communications link, the higher the cost. And in a network that doesn't efficiently use that communications link all day long, leased lines can be overkill, and expensive. Plus, beyond the local loop, even leased lines are often bundled together onto a provider's backbone, thus intermixing everyone's presumed-to-be-private data into one seething, heaving mass.

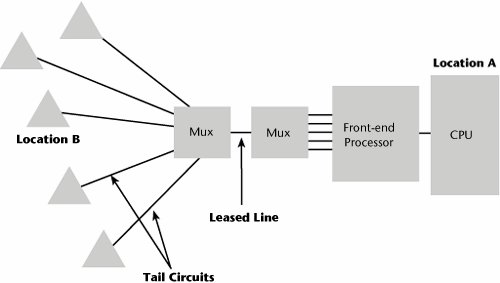

Because of the problems with leased lines, the astute network manager at Bob's thinks about ways to make the network less expensive. One solution, shown in Figure 1.13, is to use multiplexers. Multiplexers always come in pairs, so if you have one at one end, you must have one at the other end. They are also symmetrical, so if there are five outputs available in San Francisco, there must also be five inputs in the New York location. The key savings in this scenario comes from using only one leased line between New York and California. In San Francisco, short leased lines, referred to as tail circuits, run from the centrally placed multiplexer to each of the individual locations. Thus, five locations are sharing one high-cost leased line, rather than each having its own leased line. Intelligence embedded in the multiplexers allows the network manager to manage access to that bandwidth and to allocate network services to the endpoints.

Figure 1.13. A network with multiplexers
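To get a feel for the line cost versus device cost trade-off, here is a back-of-the-envelope sketch in Python. Only the topology (five stores sharing one data center) follows the Bob's example; the per-mile rate, the distances, and the monthly multiplexer cost are invented purely for illustration and do not reflect any real carrier tariff.

```python
# All figures below are hypothetical, chosen only to illustrate the comparison.
RATE_PER_MILE = 2.0          # assumed monthly cost per mile of leased line
LONG_HAUL_MILES = 2_900      # assumed New York to San Francisco line length
TAIL_MILES = 15              # assumed length of each local tail circuit
MUX_PAIR_MONTHLY = 1_000.0   # assumed amortized monthly cost of the mux pair
STORES = 5


def cost_without_muxes(stores: int) -> float:
    """Every store has its own long-haul leased line (as in Figure 1.12)."""
    return stores * LONG_HAUL_MILES * RATE_PER_MILE


def cost_with_muxes(stores: int) -> float:
    """One shared long-haul line, a tail circuit per store, and a mux pair
    (as in Figure 1.13)."""
    long_haul = LONG_HAUL_MILES * RATE_PER_MILE
    tails = stores * TAIL_MILES * RATE_PER_MILE
    return long_haul + tails + MUX_PAIR_MONTHLY


print(cost_without_muxes(STORES))  # 29000.0
print(cost_with_muxes(STORES))     # 6950.0
```

Because leased lines are mileage sensitive, the saving grows with distance. The sketch ignores the possibility that the shared line must be a higher-capacity (and higher-priced) facility than each individual line, which would narrow the gap somewhat.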

Various techniques, including Frequency Division Multiplexing (FDM), Time Division Multiplexing (TDM), Statistical Time Division Multiplexing (STDM), Code Division Multiple Access (CDMA), intelligent multiplexing, inverse multiplexing, Wavelength Division Multiplexing (WDM), Dense Wavelength Division Multiplexing (DWDM), and Coarse Wavelength Division Multiplexing (CWDM), enable multiple channels to coexist on one link. The following sections examine these techniques (except CDMA, which is covered in Chapter 13, "Wireless Communications Basics").

FDM

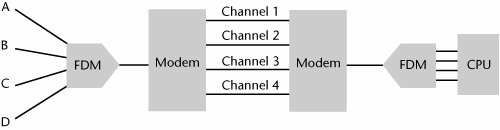

With FDM, the entire frequency band available on the communications link is divided into smaller individual bands or channels (see Figure 1.14). Each user is assigned to a different frequency. The signals all travel in parallel over the same communications link, but they are divided by frequency; that is, each signal rides on a different portion of the frequency spectrum. Frequency, which is an analog parameter, implies that the type of link used with FDM is usually an analog facility. A disadvantage of frequency division muxes is that they can be difficult to reconfigure in an environment in which there's a great deal of dynamic change. For instance, to increase the capacity of channel 1 in Figure 1.14, you would also have to tweak channels 2, 3, and 4 to accommodate that change.

Figure 1.14. FDM
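The reconfiguration problem can be sketched with a toy band plan in Python. The 4,000Hz total band, the channel widths, and the `band_plan` helper are assumptions made for this illustration, and guard bands between channels are ignored.

```python
TOTAL_BAND_HZ = 4_000  # assumed usable band on the link


def band_plan(widths_hz):
    """Lay channels end to end and return their (low, high) band edges in Hz."""
    if sum(widths_hz) > TOTAL_BAND_HZ:
        raise ValueError("channels do not fit in the available band")
    plan, low = [], 0
    for width in widths_hz:
        plan.append((low, low + width))
        low += width
    return plan


# Four equal channels, then the same link with channel 1 widened.
before = band_plan([1_000, 1_000, 1_000, 1_000])
after = band_plan([1_600, 800, 800, 800])

# Channels whose band edges changed and therefore need retuning.
retuned = [n for n, (old, new) in enumerate(zip(before, after), start=1)
           if old != new]
print(retuned)  # [1, 2, 3, 4]
```

Because the total band is fixed, giving channel 1 more room forces every other channel to move or shrink, which is why a change to one FDM channel ripples through the rest.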

In an enterprise that has a high degree of moves, additions, and changes, an FDM system would be expensive to maintain because it would require the additional expertise of frequency engineering and reconfiguration. Today's environment doesn't make great use of FDM, but it is still used extensively in cable TV and radio. In cable TV, multiple channels of programming all coexist on the coax coming into a home, and they are separated based on the frequency band in which they travel. When you enter a channel number on your set-top box or cable-ready TV, you're essentially selecting the channel (set of frequencies) that you want your television to decode into a picture and sound stream to watch.

TDM

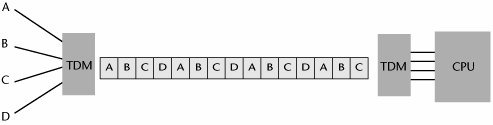

The second muxing technique to be delivered to the marketplace was TDM. There are various levels of TDM. In the plain-vanilla TDM model, as shown in Figure 1.15, a dedicated time slot is provided for each port, or point of interface, on the system. Each device in a predetermined sequence is allotted a time slot during which it can transmit. That time slot enables one character of data, or 8 bits of digitized voice, to be placed on the communications link. The allocated time slots have to be framed in order for the individual channels to be separated out.

Figure 1.15. TDM

A problem with a standard time division mux is that there is a one-to-one correlation between each port and time slot, so if the device attached to port 2 is out for the day, nobody else can make use of time slot 2. Hence, there is a tendency to waste bandwidth when vacant slots occur because of idle stations. However, this type of TDM is more efficient than standard FDM because more subchannels can be derived. Time division muxes are used a great deal in the PSTN, with two generations currently in place: those used in the Plesiochronous Digital Hierarchy (PDH) infrastructure, better known as T-/E-/J-carrier muxes, and those used in the SDH/SONET optical networks (described in Chapter 4).
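The fixed port-to-slot mapping, and the bandwidth it wastes, can be sketched as follows in Python. This is a simplified model, not a T-carrier implementation: each slot carries one byte, framing bits are left out, and the `IDLE` fill value and function names are assumptions for the example.

```python
IDLE = 0x00  # assumed fill pattern sent in a vacant time slot


def tdm_mux(ports, frames):
    """Interleave one byte per port per frame, always in the same port order."""
    link = bytearray()
    for f in range(frames):
        for data in ports:
            # A port with nothing to send still consumes its slot.
            link.append(data[f] if f < len(data) else IDLE)
    return bytes(link)


def tdm_demux(link, n_ports):
    """Recover each port's stream by taking every n-th byte."""
    return [link[p::n_ports] for p in range(n_ports)]


# Port 2 is "out for the day" and sends nothing.
ports = [b"AAA", b"", b"CCC", b"DDD"]
link = tdm_mux(ports, frames=3)

print(link)                    # b'A\x00CDA\x00CDA\x00CD'
print(tdm_demux(link, 4)[2])   # b'CCC'
print(link.count(IDLE))        # 3 wasted slots
```

One quarter of the link capacity in this run carries nothing useful, because nobody else is allowed to borrow port 2's slot.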

FDM and TDM can be combined. For example, you could use FDM to carve out individual channels and then within each of those channels apply TDM to carry multiple conversations on each channel. This is how some digital cellular systems work (e.g., Global System for Mobile Communications [GSM]). Digital cellular systems are discussed in Chapter 14, "Wireless WANs."

STDM

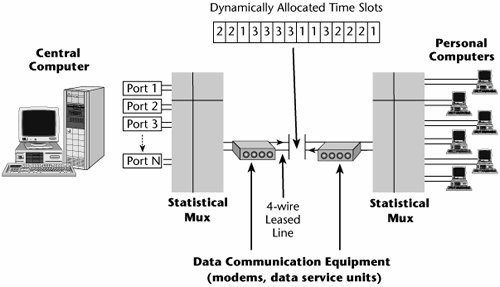

STDM was introduced to overcome the limitation of standard TDM, in which stations cannot use each other's time slots. Statistical time division multiplexers, sometimes called statistical muxes or stat muxes, dynamically allocate the time slots among the active terminals, which means you can actually have more terminals than you have time slots (see Figure 1.16).

Figure 1.16. STDM

A stat mux is a smarter mux, and it has more memory than other muxes, so if all the time slots are busy, excess data goes into a buffer. If the buffer fills up, the additional excess data gets lost, so it is important to think about how much traffic to put through a stat mux in order to maintain performance variables. Dynamically allocating the time slots enables you to make the most efficient use of bandwidth. Furthermore, because these are smarter muxes, they have the additional intelligence mentioned earlier in terms of compression and error control features. Because of the dynamic allocation of time slots, a stat mux can carry two to five times more traffic than a traditional time division mux. But, again, as you load the stat mux with traffic, you run the risk of delays and data loss.
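The buffering and loss behavior can be sketched with a small simulation in Python. It is a deliberately simplified model: all arriving data passes through one shared FIFO buffer, each unit is tagged with its terminal number so the far end can tell the streams apart, and the slot count, buffer size, and `stat_mux` function are assumptions chosen for the example.

```python
from collections import deque


def stat_mux(arrivals, slots_per_frame, buffer_size):
    """Simulate a stat mux over successive frames.

    arrivals: one list per frame of (terminal_id, data) units offered to the mux.
    Returns (sent_frames, dropped), where dropped counts units lost to overflow.
    """
    buffer = deque()
    sent, dropped = [], 0
    for frame_arrivals in arrivals:
        for unit in frame_arrivals:
            if len(buffer) < buffer_size:
                buffer.append(unit)
            else:
                dropped += 1  # buffer full: excess data is lost
        # Slots go to whatever is waiting, regardless of which terminal sent it.
        n = min(slots_per_frame, len(buffer))
        sent.append([buffer.popleft() for _ in range(n)])
    return sent, dropped


# Four terminals share two slots per frame; the buffer holds three units.
arrivals = [
    [(1, "a"), (3, "b")],
    [(1, "c"), (2, "d"), (3, "e"), (4, "f")],  # a burst from all four
    [],
]
sent, dropped = stat_mux(arrivals, slots_per_frame=2, buffer_size=3)
print(sent)     # [[(1, 'a'), (3, 'b')], [(1, 'c'), (2, 'd')], [(3, 'e')]]
print(dropped)  # 1
```

The burst in the second frame shows both risks at once: the unit from terminal 3 is delayed by one frame, and the unit from terminal 4 is dropped because the buffer is already full.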

Stat muxes are extremely important because they are the basis on which packet-switching technologies (e.g., X.25, IP, Frame Relay, ATM) are built. The main benefit of a stat mux is the efficient use of bandwidth, which leads to transmission efficiencies.

Intelligent Multiplexing

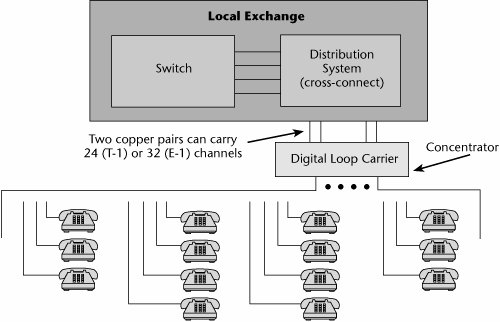

An intelligent multiplexer is often referred to as a concentrator, particularly in the telecom world. Intelligent muxes are not used in pairs; they are used alone. An intelligent mux is a line-sharing device whose purpose is to concentrate large numbers of low-speed lines to be carried over a high-speed line to a further point in the network.

A good example of a concentrator is in a device called the digital loop carrier (DLC), which is also referred to as a remote concentrator or remote terminal. In Figure 1.17, twisted-pairs go from the local exchange to the neighborhood. Before the advent of DLCs, each household needed a twisted-pair. If the demand increased beyond the number of pairs available for that local exchange, the users were out of luck until a new local exchange was added.

Figure 1.17. Intelligent multiplexing: concentrators

Digital technology makes better use of the existing pairs than does analog. Instead of using each pair individually per subscriber from the local exchange to the subscriber, you can put a DLC in the center. A series of either fiber-optic pairs or microwave beams connects the local exchange to this intermediate DLC, and those facilities then carry multiplexed traffic. When you get to the DLC, you break out the individual twisted-pairs to the households. This allows you to eliminate much of what used to be an analog plant leading up to the local exchange. It also allows you to provide service to customers who are outside the distance specifications between a subscriber and the local exchange. So, in effect, that DLC can be used to reduce the loop length.

Traditional DLCs are not interoperable with some DSL offerings, including ADSL and SDSL. This matters because about 30% to 40% of the U.S. population is serviced through DLCs. And in general, globally, the more rural or remote a city or neighborhood, the more likely that it is serviced via a DLC. For those people to be able to subscribe to high-bandwidth DSL services, the carrier has to replace the DLC with a newer generation of device. Lucent's xDSL Access Gateway, for example, is such a device; it offers a multiservice access system that provides Lite and full-rate ADSL, Integrated Services Digital Network (ISDN), Asynchronous Transfer Mode (ATM), and plain old telephone service (POTS), which is analog over twisted-pair lines or fiber. Or the carrier may simply determine that the market area doesn't promise enough revenue and leave cable modems or broadband wireless as the only available broadband access technique.

Inverse Multiplexing

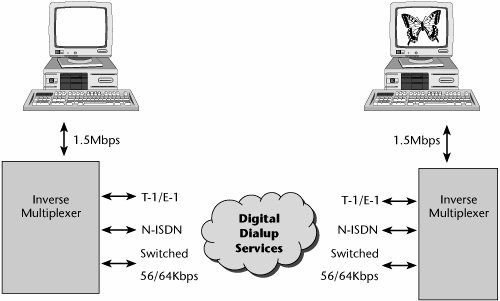

The inverse multiplexer, which arrived on the scene in the 1990s, does the opposite of what the multiplexers described so far do: Rather than combine lots of low-bit-rate streams to ride over a high-bit-rate pipe, an inverse multiplexer breaks down a high-bandwidth signal into a group of smaller-data-rate signals that can be dispersed over a range of channels to be carried over the network. A primary application for inverse multiplexers is to support high-bandwidth applications such as videoconferencing.

In Figure 1.18, a videoconference is to occur at 1.5Mbps. A good-quality, full-motion, long-session video requires substantial bandwidth. It's one thing to tolerate pixelation or artifacts in motion for a 15-minute meeting that saves you the time of driving or flying to meet colleagues. However, for a two-hour meeting to evaluate a new advertising campaign, the quality needs to parallel what most of us use as a reference point: television. Say that company policy is to hold a two-hour videoconferenced meeting twice each month. Very few customers are willing to pay for a 1.5Mbps to 2Mbps connection for an application that they're using just four hours each month. Instead, they want their existing digital facilities to carry that traffic. An inverse mux allows them to do so. In Figure 1.18, the 1.5Mbps video stream is introduced into the inverse multiplexer, the inverse mux splits that up into 24 channels of 64Kbps each, and each of these 24 channels occupies a separate channel on an existing T-1/E-1 facility or PRI ISDN. (PRI ISDN is discussed in Chapter 2, "Traditional Transmission Media.") The channels are carried across the network separately. At the destination point, a complementary inverse mux reaggregates, resynchronizes, and reproduces that high-bandwidth signal so that it can be projected on the destination video monitor.

Figure 1.18. Inverse multiplexing
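The split-and-reassemble idea can be sketched in a few lines of Python. The byte-by-byte round-robin distribution and the function names are assumptions for the illustration, and the sketch assumes all 24 channels arrive perfectly aligned; a real inverse mux must also compensate for the different delays the channels experience, which is the resynchronization step mentioned above.

```python
CHANNELS = 24
CHANNEL_KBPS = 64


def inverse_mux(stream: bytes, n: int = CHANNELS) -> list[bytes]:
    """Deal the high-rate stream out byte by byte across n low-rate channels."""
    return [stream[i::n] for i in range(n)]


def inverse_demux(channels: list[bytes]) -> bytes:
    """Reaggregate the channels into the original stream (assumes alignment)."""
    n = len(channels)
    out = bytearray(sum(len(c) for c in channels))
    for i, chan in enumerate(channels):
        out[i::n] = chan  # put each channel's bytes back in every n-th position
    return bytes(out)


video = bytes(range(100))          # stand-in for the video bit stream
channels = inverse_mux(video)

print(len(channels))                        # 24
print(CHANNELS * CHANNEL_KBPS)              # 1536 (Kbps, roughly 1.5Mbps)
print(inverse_demux(channels) == video)     # True
```

The arithmetic line shows why 24 channels are needed: 24 times 64Kbps is 1,536Kbps, the payload of the nominal 1.5Mbps stream.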

Inverse multiplexing therefore allows you to experience a bit of elastic bandwidth. You can allocate existing capacity to a high-bandwidth application without having to subscribe to a separate link just for that purpose.

WDM, DWDM, and CWDM

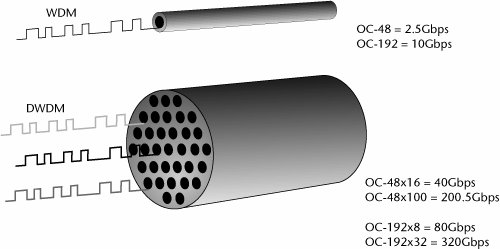

WDM was specifically developed for use with fiber optics. In the past, we could use only a fraction of the available bandwidth of a fiber-optic system. This was mainly because we had to convert the optical pulses into electrical signals to regenerate them as they moved through the fiber network. As optical signals travel through fiber, the impurities in the fiber absorb the signal's strength. Because of this, somewhere before the original signal becomes unintelligible, the optical signal must be regenerated (i.e., given new strength) so that it will retain its information until it reaches its destination or at least until it is amplified again. And because repeaters were originally electronic, data rates were limited to about 2.5Gbps. In 1994, something very important happened: Optical amplifiers called erbium-doped fiber amplifiers (EDFAs) were introduced. Erbium is a rare-earth element with which a section of the fiber is doped. As a light pulse passes through the erbium-doped section, the light is amplified and continues on its merry way, without having to be stopped and processed as an electrical signal. The introduction of EDFAs immediately opened up the opportunity to make use of fiber-optic systems operating at 10Gbps.

EDFAs also paved the way to developing wavelength division multiplexers. Before the advent of WDM, we were using only one wavelength of light within each fiber, although the visible light spectrum engages a large number of different wavelengths. WDM takes advantage of the fact that multiple colors, or frequencies, of light can be transmitted simultaneously down a single optical fiber. The data rate that each of the wavelengths supports depends on the type of light source. Today, the most common data rates supported are OC-48, which is shorthand for 2.5Gbps, and OC-192, which is equivalent to 10Gbps. 40Gbps systems are also now commercially available. In the future, we'll go beyond that. Part of the evolution of WDM is that every year, we double the number of bits per second that can be carried on a wavelength, and every year we double the number of wavelengths that can be carried over a single fiber. But we have just begun. Soon light sources should be able to pulse in the terabits-per-second range, and within a few years, light sources that produce petabits per second (1,000Tbps) will emerge.

One thing to clarify about the first use of WDM is that unlike the other types of multiplexing, where the goal is to aggregate smaller channels into one larger channel, WDM is meant to furnish separate channels for each service, at the full data rate. Increasingly, enterprises are making use of high-capacity switches and routers equipped with 2.5Gbps interfaces, so there's a great deal of desire within the user community to plug in to a channel of sufficient size to carry a high-bandwidth signal end to end, without having to break it down into smaller increments only to build them back out at the destination. WDM addresses this need by furnishing a separate channel for each service at the full rate.

Systems that support more than 8 wavelengths are referred to as DWDM (see Figure 1.19). Systems at both the OC-48 (2.5Gbps) and OC-192 (10Gbps) levels can today support upward of 128 channels, or wavelengths. New systems that operate at 40Gbps (OC-768) are also now available, and Bell Labs is working on a technique that might enable us to extract up to 15,000 channels or wavelengths on a single fiber. Meanwhile, CWDM has been introduced to address metro area and campus environments, where long distance is not an issue, but cost is. These systems rely on lower-cost light sources that reduce costs, but at the same time, they require increased spacing between the channels and therefore limit CWDM systems to 32 or 64 channels, at most. An important point to note is that at this time, DWDM and CWDM systems do not always interoperate; of course, this will be resolved in the near future, but for the time being, it is a limitation that needs to be acknowledged.

Figure 1.19. WDM and DWDM
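The aggregate capacity of a WDM fiber is simply the per-wavelength rate multiplied by the number of wavelengths. The short Python sketch below works through the figures quoted above; it uses the rounded rates from the text (the exact OC line rates are slightly lower), and the function and table names are made up for the example.

```python
# Rounded per-wavelength rates in Gbps, as quoted in the text.
OC_RATES_GBPS = {"OC-48": 2.5, "OC-192": 10.0, "OC-768": 40.0}


def fiber_capacity_gbps(level: str, wavelengths: int) -> float:
    """Aggregate capacity of one fiber: rate per wavelength times wavelengths."""
    return OC_RATES_GBPS[level] * wavelengths


print(fiber_capacity_gbps("OC-48", 128))   # 320.0 Gbps
print(fiber_capacity_gbps("OC-192", 128))  # 1280.0 Gbps, i.e., 1.28Tbps
```

Each wavelength remains a separate full-rate channel; the total is a measure of what the fiber carries, not of a single combined pipe.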

The revolution truly has just begun. The progress in this area is so great that each year we're approximately doubling performance while halving costs. Again, great emphasis is being placed on the optical sector, so many companies (traditional telecom providers, data networking providers, and new startups) are focusing attention on the optical revolution. (WDM, DWDM, CWDM, and EDFAs are discussed in more detail in Chapter 11, "Optical Networking.")
