Multitier DataSnap Applications

Overview

Large companies often have needs that are broader than applications using local database and SQL servers can meet. In the past few years, Borland Software Corporation has been addressing the needs of large corporations, and it even temporarily changed its own name to Inprise to underline this enterprise focus. The name was eventually changed back to Borland, but the focus on enterprise development remains.

Delphi targets many different technologies: three-tier architectures based on Windows NT and DCOM, TCP/IP and socket applications, and—most of all—SOAP- and XML-based web services. This chapter focuses on database-oriented multitier architectures; XML-oriented solutions will be discussed in Chapters 22 and 23, which are devoted to XML, SOAP, and web services.

Before proceeding, I should emphasize two important elements. First, the tools to support this kind of development are available only in the Enterprise version of Delphi; and second, with Delphi 7 you don't have to pay a deployment fee for DataSnap applications. You buy the development environment and then deploy your programs on as many servers as you want, without owing Borland any money. This is a very significant change (the most significant in Delphi 7) to the distribution policy of DataSnap, which used to require a per-server fee (initially very high, then significantly lowered over time). This new deployment license will certainly increase the appeal of DataSnap to developers, which is a good reason to cover it in some detail.

One, Two, Three Levels in Delphi's History

Initially, database PC applications were client-only solutions: The program and the database files were on the same computer. From there, adventuresome programmers moved the database files onto a network file server. The client computers still hosted the application software and the entire database engine, but the database files were now accessible to several users at the same time. You can still use this type of configuration with a Delphi application and Paradox files (or, of course, Paradox itself), but the approach was much more widespread just a few years ago.

The next big transition was to client/server development, embraced by Delphi since its first version. In the client/server world, the client computer requests the data from a server computer, which hosts both the database files and a database engine to access them. This architecture downplays the role of the client, but it also reduces the requirements for processing power on the client machine. Depending on how the programmers implement client/server, the server can do most (if not all) of the data processing. In this way, a powerful server can provide data services to several less powerful clients.

Naturally, there are many other reasons for using centralized database servers, such as concern for data security and integrity, simpler backup strategies, central management of data constraints, and so on. The database server is often called a SQL server, because SQL is the language most commonly used for making queries into the data; but it may also be called an RDBMS (relational database management system), reflecting the fact that the server provides tools for managing the data, such as support for backup and replication.

Of course, some applications you build may not need the benefits of a full RDBMS, so a simple client-only solution might be sufficient. On the other hand, you might need some of the robustness of an RDBMS, but on a single, isolated computer. In this case, you can use a local version of a SQL server, such as InterBase.

Traditional client/server development is done with a two-tier architecture. However, if the RDBMS is primarily performing data storage instead of data- and number-crunching, the client might contain both user interface code (formatting the output and input with customized reports, data-entry forms, query screens, and so on) and code related to managing the data (also known as business rules). In this case, it's generally a good idea to try to separate these two sections of the program and build a logical three-tier architecture. The term logical here means that there are still just two computers (that is, two physical tiers), but you've now partitioned the application into three distinct elements.

Delphi 2 introduced support for a logical three-tier architecture with data modules. As you should know by now, a data module is a nonvisual container for the data access components of an application (or indeed any other nonvisual components), but it often includes several handlers for database-related events. You can share a single data module among several different forms and provide different user interfaces for the same data; there might be one or more data-input forms, reports, master/detail forms, and various charting or dynamic output forms.

The logical three-tier approach solves many problems, but it also has a few drawbacks. First, you must replicate the data-management portion of the program on different client computers; doing so may hamper performance, but the bigger issue is the complexity it adds to code maintenance. Second, when multiple clients modify the same data, there's no simple way to handle the resulting update conflicts. Finally, for logical three-tier Delphi applications, you must install and configure the database engine (if any) and SQL server client library on every client computer.

The next logical step up from client/server is to move the data-module portion of the application to a separate server computer and design all the client programs to interact with it. This is exactly the purpose of remote data modules, which were introduced in Delphi 3. Remote data modules run on a server computer—generally called the application server. The application server in turn communicates with the DBMS (which can run on the application server or on another dedicated computer). Therefore, the client machines don't connect to the SQL server directly, but indirectly via the application server.

At this point there is a fundamental question: Do we still need to install the database access software? The traditional Delphi client/server architecture (even with three logical tiers) requires you to install the database access on each client, which is quite troublesome when you must configure and maintain hundreds of machines. In the physical three-tier architecture, you need to install and configure the database access only on the application server, not on the client computers. Because the client programs have only user interface code and are extremely simple to install, they now fall into the category of so-called thin clients. To use marketing-speak, we might even call this a zero-configuration thin-client architecture. But let's focus on technical issues instead of marketing terminology.

The Technical Foundation of DataSnap

When Borland introduced this physical multitier architecture in Delphi, it was called MIDAS (Middle-tier Distributed Application Services). For example, Delphi 5 included the third version of this technology, MIDAS 3. Afterward Borland renamed the technology DataSnap and extended its capabilities.

DataSnap requires the installation of specific libraries on the server (actually the middle-tier computer), which provides your client computers with the data extracted from the SQL server database or other data sources. DataSnap does not require a SQL server for data storage. DataSnap can serve up data from a wide variety of sources, including SQL, other DataSnap servers, or data computed on the fly.

As you would expect, the client side of DataSnap is extremely thin and easy to deploy. The only file you need is Midas.dll, a small DLL that implements the ClientDataSet and RemoteServer components and provides the connection to the application server. As an alternative to distributing the DLL, you can embed the code of this library in your executable file by including the MidasLib unit in your uses statements, as discussed in Chapter 13, "Delphi's Database Architecture."
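As a minimal sketch of the MidasLib alternative, the console program below creates an in-memory ClientDataSet with the library code linked into the executable. The program name and the CanCreateLocalDataSet helper are hypothetical, introduced only for this illustration.

```delphi
program EmbedMidas;

{$APPTYPE CONSOLE}

uses
  MidasLib,      // links the Midas code into the executable itself
  DB, DBClient;

// Hypothetical helper: returns True if a ClientDataSet can be
// created and activated using the statically linked Midas code.
function CanCreateLocalDataSet: Boolean;
var
  Cds: TClientDataSet;
begin
  Cds := TClientDataSet.Create(nil);
  try
    Cds.FieldDefs.Add('ID', ftInteger);
    Cds.CreateDataSet;   // relies on the Midas code, from the DLL or MidasLib
    Result := Cds.Active;
  finally
    Cds.Free;
  end;
end;

begin
  if CanCreateLocalDataSet then
    Writeln('ClientDataSet created with the embedded Midas code');
end.
```

Removing MidasLib from the uses clause should leave the program dependent on Midas.dll being available at run time.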

The IAppServer Interface

The two sides of a DataSnap application communicate using the IAppServer interface; this interface's definition appears in Listing 16.1. You'll seldom need to call the methods of the IAppServer interface directly, because Delphi includes components that implement this interface in server-side applications and components that call the interface in client-side applications. These components simplify the support of the IAppServer interface and at times even hide it completely. In practice, the server will make objects implementing this interface available to the client, possibly along with other custom interfaces.

Listing 16.1: The Definition of the IAppServer Interface

    type
      IAppServer = interface(IDispatch)
        ['{1AEFCC20-7A24-11D2-98B0-C69BEB4B5B6D}']
        function AS_ApplyUpdates(const ProviderName: WideString; Delta: OleVariant;
          MaxErrors: Integer; out ErrorCount: Integer;
          var OwnerData: OleVariant): OleVariant; safecall;
        function AS_GetRecords(const ProviderName: WideString; Count: Integer;
          out RecsOut: Integer; Options: Integer; const CommandText: WideString;
          var Params: OleVariant; var OwnerData: OleVariant): OleVariant; safecall;
        function AS_DataRequest(const ProviderName: WideString;
          Data: OleVariant): OleVariant; safecall;
        function AS_GetProviderNames: OleVariant; safecall;
        function AS_GetParams(const ProviderName: WideString;
          var OwnerData: OleVariant): OleVariant; safecall;
        function AS_RowRequest(const ProviderName: WideString; Row: OleVariant;
          RequestType: Integer; var OwnerData: OleVariant): OleVariant; safecall;
        procedure AS_Execute(const ProviderName: WideString;
          const CommandText: WideString; var Params: OleVariant;
          var OwnerData: OleVariant); safecall;
      end;

| Note |

A DataSnap server exposes an interface using a COM type library, a technology covered in Chapter 12, "From COM to COM+." |
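Although you rarely call IAppServer directly, a short sketch can make the interface less abstract. The unit below asks a connected server for its provider names through the connection component's AppServer property, which is a late-bound Variant. The unit name and the ListProviderNames procedure are hypothetical, and the code assumes a DataSnap server is registered and reachable through the connection you pass in.

```delphi
unit ListProviders;

interface

uses
  Classes, Variants, DBClient;

// Hypothetical helper: fills Names with the providers exported by
// the application server behind Conn (any DataSnap connection).
procedure ListProviderNames(Conn: TCustomRemoteServer; Names: TStrings);

implementation

procedure ListProviderNames(Conn: TCustomRemoteServer; Names: TStrings);
var
  Providers: OleVariant;
  I: Integer;
begin
  Conn.Connected := True;
  // AppServer wraps the server's dispatch interface in a Variant,
  // so AS_GetProviderNames is resolved at run time.
  Providers := Conn.AppServer.AS_GetProviderNames;
  Names.Clear;
  if VarIsArray(Providers) then
    for I := VarArrayLowBound(Providers, 1) to VarArrayHighBound(Providers, 1) do
      Names.Add(Providers[I]);
end;

end.
```

In everyday code the ProviderName property editor of the ClientDataSet performs an equivalent lookup for you.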

The Connection Protocol

DataSnap defines only the higher-level architecture and can use different technologies for moving the data from the middle tier to the client side. DataSnap supports many different protocols, including the following:

Distributed COM (DCOM) and Stateless COM (MTS or COM+): DCOM is directly available in Windows NT/2000/XP and 98/Me, and it requires no additional run-time applications on the server. DCOM is basically an extension of COM technology that allows a client application to use server objects that exist and execute on a separate computer. The DCOM infrastructure allows you to use stateless COM objects, available in COM+ and in the older MTS (Microsoft Transaction Server) architecture. Both COM+ and MTS provide features such as security, component management, and database transactions, and are available in Windows NT/2000/XP and in Windows 98/Me.

Due to the complexity of DCOM configuration and its problems in passing through firewalls, even Microsoft is abandoning DCOM in favor of SOAP-based solutions.

TCP/IP Sockets: These are available on most systems. Using TCP/IP, you might distribute clients over the Web, where DCOM cannot be taken for granted, and you'll have far fewer configuration headaches. To use sockets, the middle-tier computer must run the ScktSrvr.exe application provided by Borland, a single program that can run either as an application or as a service. This program receives the client requests and forwards them to the remote data module (executing on the same server) using COM. Sockets provide no protection against failure on the client side, because the server is not informed and might not release resources when a client unexpectedly shuts down.

HTTP and SOAP: The use of HTTP as a transport protocol over the Internet simplifies connections through firewalls or proxy servers (which generally don't like custom TCP/IP sockets). You need a specific web server application, httpsrvr.dll, which accepts client requests and creates the proper remote data modules using COM. These web connections can also use SSL security. Finally, web connections based on HTTP transport can use DataSnap object-pooling support.

| Note |

The DataSnap HTTP transport can use XML as the data packet format, enabling any platform or tool that can read XML to participate in a DataSnap architecture. This is an extension of the original DataSnap data packet format, which is also platform independent. The use of XML over HTTP is also the foundation of SOAP. There's more on SOAP in DataSnap in Chapter 23, "Web Services and SOAP." |

Until Delphi 6, you could also use CORBA (Common Object Request Broker Architecture) as a transport mechanism for DataSnap applications. Due to compatibility issues with the newer versions of Borland's VisiBroker CORBA solution, this feature has been discontinued in Delphi 7.

Finally, notice that as an extension to this architecture, you can transform the data packets into XML and deliver them to a web browser. In this case, you basically have one extra tier: the web server gets the data from the middle tier and delivers it to the client. I'll discuss this architecture, called Internet Express, in Chapter 22, "Using XML Technologies."

Providing Data Packets

The entire Delphi multitier data-access architecture centers around the idea of data packets. In this context, a data packet is a block of data that moves from the application server to the client or from the client back to the server. Technically, a data packet is a sort of subset of a dataset. It describes the data it contains (usually a few records of data), and it lists the names and types of the data fields. Even more important, a data packet includes the constraints, that is, the rules to be applied to the dataset. You'll typically set these constraints in the application server, and the server sends them to the client applications along with the data.

All communication between the client and the server occurs by exchanging data packets. The provider component on the server manages the transmission of several data packets within a big dataset, with the goal of responding faster to the user. As the client receives a data packet in a ClientDataSet component, the user can edit the records it contains. As mentioned earlier, during this process the client also receives and checks the constraints, which are applied during the editing operations.

When the client has updated the records and sends back a data packet, that packet is known as a delta. The delta packet tracks the difference between the original records and the updated ones, recording all the changes the client requested from the server. When the client asks to apply the updates to the server, it sends the delta to the server, and the server tries to apply each of the changes. I say tries because if a server is connected to several clients, the data may have changed already, and the update request may fail.

Because the delta packet includes the original data, the server can quickly determine if another client has already changed the data. If so, the server fires an OnReconcileError event, which is one of the vital elements for thin-client applications. In other words, the three-tier architecture uses an update mechanism similar to the one Delphi uses for cached updates. As you saw in Chapter 14, "Client/Server with dbExpress," the ClientDataSet manages data in a memory cache; it typically reads only a subset of the records available on the server side, loading more elements only as they're needed. When the client updates records or inserts new ones, it stores these pending changes in another local cache on the client, the delta cache.
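The change log behind the delta can be observed without any server. The following sketch, which uses an in-memory ClientDataSet with made-up field values, counts the pending changes after a single edit. The program name and the PendingChangesAfterEdit function are hypothetical names chosen for this illustration.

```delphi
program DeltaDemo;

{$APPTYPE CONSOLE}

uses
  DB, DBClient,
  MidasLib;      // avoids the dependency on Midas.dll for this demo

// Hypothetical helper: edits one record of a local dataset and
// returns the number of changes waiting in the change log.
function PendingChangesAfterEdit: Integer;
var
  Cds: TClientDataSet;
begin
  Cds := TClientDataSet.Create(nil);
  try
    Cds.FieldDefs.Add('EMP_NO', ftInteger);
    Cds.FieldDefs.Add('LAST_NAME', ftString, 20);
    Cds.CreateDataSet;

    // Simulate data received from a server: add a record, then merge
    // the log so the record counts as original data.
    Cds.AppendRecord([2, 'Nelson']);
    Cds.MergeChangeLog;

    // A user edit is logged locally; in a real client, the Delta
    // property would now hold what ApplyUpdates sends to the server.
    Cds.Edit;
    Cds.FieldByName('LAST_NAME').AsString := 'Young';
    Cds.Post;

    Result := Cds.ChangeCount;
  finally
    Cds.Free;
  end;
end;

begin
  Writeln('Pending changes: ', PendingChangesAfterEdit);
end.
```

Because the log keeps the original record alongside the modified one, a server receiving the delta has what it needs to detect that another client changed the same row in the meantime.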

The client can also save the data packets to disk and work offline, thanks to the MyBase support discussed in Chapter 13. Even error information and other data moves using the data packet protocol, so it is truly one of the foundation elements of this architecture.

| Note |

It's important to remember that data packets are protocol-independent. A data packet is merely a sequence of bytes, so anywhere you can move a series of bytes, you can move a data packet. This functionality was provided to make the architecture suitable for multiple transport protocols (including DCOM, HTTP, and TCP/IP) and for multiple platforms. |

Delphi Support Components (Client Side)

Now that we've examined the general foundations of Delphi's three-tier architecture, let's focus on the components that support it. For developing client applications, Delphi offers the ClientDataSet component, which provides all the standard dataset capabilities and embeds the client side of the IAppServer interface. In this case, the data is delivered through the remote connection.

The connection to the server application is made via another component you'll also need in the client application. You should use one of the three specific connection components (available in the DataSnap page):

- The DCOMConnection component can be used on the client side to connect to a DCOM and MTS server, located either on the current computer or on another computer indicated by the ComputerName property. The connection is with a registered object having a given ServerGUID or ServerName.

- The SocketConnection component can be used to connect to the server via a TCP/IP socket. You should indicate the IP address or the host name, and the GUID of the server object (in the ServerGUID property; the separate InterceptGUID property refers to an optional object that intercepts the data packets). This connection component has an extra property, SupportCallbacks, which you can disable if you are not using callbacks and want to deploy your program on Windows 95 client computers that don't have Winsock 2 installed.

| Note |

In the WebServices page, you can also find the SoapConnection component, which requires a specific type of server and will be discussed in Chapter 23. |

- The WebConnection component is used to handle an HTTP connection that can easily get through a firewall. You should indicate the URL where your copy of httpsrvr.dll is located and the name or GUID of the remote object on the server.

A few more client-side components were added to the DataSnap architecture in Delphi 6, mainly for managing connections:

- The ConnectionBroker component can be used as an alias of an actual connection component, which is useful when you have a single application with multiple client datasets. To change the physical connection of each dataset, you only need to change the Connection property of the ConnectionBroker. You can also use the events of this virtual connection component in place of those of the actual connections, so you don't have to change any code if you change the data transport technology. For the same reason, you can refer to the AppServer object of the ConnectionBroker instead of the corresponding property of a physical connection.

- The SharedConnection component can be used to connect to a secondary (or child) data module of a remote application, piggy-backing on an existing physical connection to the main data module. In other words, an application can connect to multiple data modules of the server with a single, shared connection.

- The LocalConnection component can be used to target a local dataset provider as the source of the data packet. The same effect can be obtained by hooking the ClientDataSet directly to the provider. However, using the LocalConnection, you can write a local application with the same code as a complete multitier application, using the IAppServer interface of the "fake" connection. Doing so will make the program easier to scale up, compared to a program with a direct connection.
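To illustrate the ConnectionBroker described above, here is a minimal sketch that routes several client datasets through one broker, so that switching transports later means changing a single property. The unit name and the WireThroughBroker procedure are hypothetical.

```delphi
unit BrokerSetup;

interface

uses
  DBClient;

// Hypothetical helper: points the broker at a physical connection
// and attaches every dataset to the broker rather than to the
// connection itself.
procedure WireThroughBroker(Broker: TConnectionBroker;
  Physical: TCustomRemoteServer; const DataSets: array of TClientDataSet);

implementation

procedure WireThroughBroker(Broker: TConnectionBroker;
  Physical: TCustomRemoteServer; const DataSets: array of TClientDataSet);
var
  I: Integer;
begin
  // The only place that knows which transport is in use.
  Broker.Connection := Physical;
  for I := Low(DataSets) to High(DataSets) do
    DataSets[I].ConnectionBroker := Broker;
end;

end.
```

A call such as WireThroughBroker(ConnectionBroker1, DCOMConnection1, [ClientDataSet1, ClientDataSet2]) could later pass a SocketConnection instead, leaving the datasets untouched.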

A few other components of the DataSnap page relate to the transformation of the DataSnap data packet into custom XML formats. These components (XMLTransform, XMLTransformProvider, and XMLTransformClient) will be discussed in Chapter 22.

Delphi Support Components (Server Side)

On the server side (actually the middle tier), you'll need to create an application or a library that embeds a remote data module, a special version of the TDataModule class. A second alternative is the use of a specialized remote data module for transactional COM. In the Multitier page of the New Items dialog box (obtained from the File → New → Other menu) there are specific wizards to create both types of remote data module.

The only specific component you need on the server side is the DataSetProvider. You need one of these components for every table or query you want to make available to the client applications, which will then use a separate ClientDataSet component for every exported dataset. The DataSetProvider was introduced in Chapter 13.

Building a Sample Application

Now you're ready to build a sample program. Doing so will let you observe some of the components I've just described in action, and will also allow you to focus on some other problems, shedding light on other pieces of the Delphi multitier puzzle. I'll build the client and application server portions of a three-tier application in two steps. The first step will simply test the technology using a minimum of elements. These programs will be very simple.

From that point, I'll add more power to the client and the application server. In each example, I'll display data from a local InterBase table using dbExpress and set up everything to allow you to test the programs on a stand-alone computer. I won't cover the steps you have to follow to install the examples on multiple computers with various technologies—that would be the subject of at least one other book.

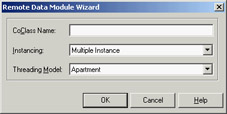

The First Application Server

The server side of the basic example is easy to build. Simply create a new application and add a remote data module to it using the corresponding icon in the Multitier page of the Object Repository. The Remote Data Module Wizard (see Figure 16.1) will ask you for a class name and the instancing style. When you enter a class name, such as AppServerOne, and click the OK button, Delphi will add a data module to the program. This data module will have the usual properties and events, but its class will have the following Delphi language declaration:

    type
      TAppServerOne = class(TRemoteDataModule, IAppServerOne)
      private
        { Private declarations }
      protected
        class procedure UpdateRegistry(Register: Boolean;
          const ClassID, ProgID: string); override;
      public
        { Public declarations }
      end;

Figure 16.1: The Remote Data Module Wizard

In addition to inheriting from the TRemoteDataModule base class, this class implements the custom IAppServerOne interface, which derives from the standard DataSnap interface (IAppServer). The class also overrides the UpdateRegistry method to add support for enabling the socket and web transports, as you can see in the code generated by the wizard. At the end of the unit, you'll find the class factory declaration, which should be clear if you read Chapter 12:

    initialization
      TComponentFactory.Create(ComServer, TAppServerOne,
        Class_AppServerOne, ciMultiInstance, tmApartment);
    end.

Now you can add a dataset component to the data module (I've used the dbExpress SQLDataSet), connect it to a database and a table or query, activate it, and finally add a DataSetProvider and hook it to the dataset component. You'll obtain a DFM file like this:

    object AppServerOne: TAppServerOne
      object SQLConnection1: TSQLConnection
        ConnectionName = 'IBLocal'
        LoginPrompt = False
      end
      object SQLDataSet1: TSQLDataSet
        SQLConnection = SQLConnection1
        CommandText = 'select * from EMPLOYEE'
      end
      object DataSetProvider1: TDataSetProvider
        DataSet = SQLDataSet1
        Constraints = True
      end
    end

The main form of this program is almost useless, so you can simply add a label to it indicating that it's the form of the server application. When you've built the server, you should compile it and run it once. This operation will automatically register it as an Automation server on your system, making it available to client applications. Of course, you should register the server on the computer where you want it to run, either the client or the middle tier.

The First Thin Client

Now that you have a working server, you can build a client that will connect to it. You'll again begin with a standard Delphi application and add a DCOMConnection component to it (or the proper component for the specific type of connection you want to test). This component defines a ComputerName property that you'll use to specify the computer that hosts the application server. If you want to test the client and application server from the same computer, you can leave this property blank.

Once you've selected an application server computer, you can simply display the ServerName property's combo box list to view the available DataSnap servers. This combo box shows the servers' registered names, by default the name of the executable file of the server followed by the name of the remote data module class, as in AppServ1.AppServerOne. Alternatively, you can enter the GUID of the server object as the ServerGUID property. Delphi will automatically fill this property as you set the ServerName property, determining the GUID by looking it up in the Registry.

At this point, if you set the DCOMConnection component's Connected property to True, the server form will appear, indicating that the client has activated the server. You don't usually need to perform this operation, because the ClientDataSet component typically activates the RemoteServer component for you. I've suggested this step simply to emphasize what's happening behind the scenes.

| Tip |

You should generally leave the DCOMConnection component's Connected property set to False at design time, so you can open the project in Delphi even on a computer where the DataSnap server is not already registered. |

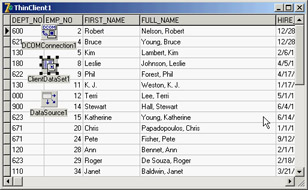

As you might expect, the next step is to add a ClientDataSet component to the form. You must connect the ClientDataSet to the DCOMConnection1 component via the RemoteServer property, and thereby to one of the providers it exports. You can see the list of available providers in the ProviderName property, via the usual combo box. In this example, you'll be able to select only DataSetProvider1, because it is the only provider available in the server you've just built. This operation connects the dataset in the client's memory with the dbExpress dataset on the server. If you activate the client dataset and add a few data-aware controls (or a DBGrid), you'll immediately see the server data appear in them, as illustrated in Figure 16.2.

Figure 16.2: When you activate a ClientDataSet component connected to a remote data module at design time, the data from the server becomes visible as usual.

Here is the DFM file for the minimal client application, ThinCli1:

    object Form1: TForm1
      Caption = 'ThinClient1'
      object DBGrid1: TDBGrid
        Align = alClient
        DataSource = DataSource1
      end
      object DCOMConnection1: TDCOMConnection
        ServerGUID = '{09E11D63-4A55-11D3-B9F1-00000100A27B}'
        ServerName = 'AppServ1.AppServerOne'
      end
      object ClientDataSet1: TClientDataSet
        Aggregates = <>
        Params = <>
        ProviderName = 'DataSetProvider1'
        RemoteServer = DCOMConnection1
      end
      object DataSource1: TDataSource
        DataSet = ClientDataSet1
      end
    end
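The same wiring can also be done at run time. The following sketch sets the properties listed in the DFM from code and opens the dataset; the unit name and the OpenRemoteEmployees procedure are hypothetical, and the code assumes the AppServ1 server has already been registered on the local machine.

```delphi
unit ThinClientSetup;

interface

uses
  DBClient, MConnect;

// Hypothetical helper: connects a client dataset to the provider
// exported by the AppServ1 application server and opens it.
procedure OpenRemoteEmployees(Conn: TDCOMConnection; Cds: TClientDataSet);

implementation

procedure OpenRemoteEmployees(Conn: TDCOMConnection; Cds: TClientDataSet);
begin
  Conn.ComputerName := '';                    // blank: server on this computer
  Conn.ServerName := 'AppServ1.AppServerOne'; // registered server name
  Cds.RemoteServer := Conn;
  Cds.ProviderName := 'DataSetProvider1';     // the only provider exported
  Cds.Open;   // opening the dataset activates the connection if needed
end;

end.
```

Setting the properties in code keeps Connected and Active off at design time, which fits the earlier tip about opening the project on machines where the server is not registered.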

Obviously, the programs for this first three-tier application are quite simple, but they demonstrate how to create a dataset viewer that splits the work between two different executable files. At this point, the client is only a viewer. If you edit the data on the client, it won't be updated on the server. To accomplish this, you'll need to add more code to the client. However, before you do that, let's add some features to the server.

Adding Constraints to the Server

When you write a traditional data module in Delphi, you can easily add some of the application logic, or business rules, by handling the dataset events and by setting field object properties and handling their events. You should avoid doing this work on the client application; instead, write your business rules on the middle tier.

In the DataSnap architecture, you can send some constraints from the server to the client and let the client program impose those constraints during the user input. You can also send field properties (such as minimum and maximum values and the display and edit masks) to the client and (using some of the data access technologies) process updates through the dataset used to access the data (or a companion UpdateSQL object).

Field and Dataset Constraints

When the provider interface creates data packets to send to the client, it includes the field definitions, the table and field constraints, and one or more records (as requested by the ClientDataSet component). This implies that you can customize the middle tier and build distributed application logic by using SQL-based constraints.

The constraints you create using SQL expressions can be assigned to an entire dataset or to specific fields. The provider sends the constraints to the client along with the data, and the client applies them before sending updates back to the server. This process reduces network traffic, compared to having the client send updates back to the application server and eventually up to the SQL server, only to find that the data is invalid. Another advantage of coding the constraints on the server side is that if the business rules change, you need to update the single server application and not the many clients on multiple computers.

But how do you write constraints? You can use several properties:

- BDE datasets have a Constraints property, which is a collection of TCheckConstraint objects. Every object has a few properties, including the expression and the error message.

- Each field object defines the CustomConstraint and ConstraintErrorMessage properties. There is also an ImportedConstraint property for constraints imported from the SQL server.

- Each field object has a DefaultExpression property, which can be used locally or passed to the ClientDataSet. This is not an actual constraint; it's only a suggestion to the end user.

The next example, AppServ2, adds a few constraints to a remote data module connected to the sample EMPLOYEE InterBase database. After connecting the table to the database and creating the field objects for it, you can set the following special properties:

    object SQLDataSet1: TSQLDataSet
      ...
      object SQLDataSet1EMP_NO: TSmallintField
        CustomConstraint = 'x > 0 and x < 10000'
        ConstraintErrorMessage = 'Employee number must be a positive integer below 10000'
        FieldName = 'EMP_NO'
      end
      object SQLDataSet1FIRST_NAME: TStringField
        CustomConstraint = 'x <> '#39#39
        ConstraintErrorMessage = 'The first name is required'
        FieldName = 'FIRST_NAME'
        Size = 15
      end
      object SQLDataSet1LAST_NAME: TStringField
        CustomConstraint = 'not x is null'
        ConstraintErrorMessage = 'The last name is required'
        FieldName = 'LAST_NAME'
      end
    end

| Note |

The expression 'x <> '#39#39 is the DFM transposition of the string x <> '', indicating that you don't want to have an empty string. The final constraint, not x is null, instead allows empty strings but not null values. |
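If you prefer to assign the constraints in code rather than in the Object Inspector, a sketch like the following sets the same values at run time. Note how the empty-string test is written in source code with doubled quotes instead of the #39 notation used in the DFM. The unit name and the AssignEmployeeConstraints procedure are hypothetical, and the code assumes the dataset already has field objects with these names.

```delphi
unit EmployeeRules;

interface

uses
  DB;

// Hypothetical helper: applies the AppServ2 constraints to the
// field objects of a dataset. FieldByName raises an exception
// if a field is missing.
procedure AssignEmployeeConstraints(DataSet: TDataSet);

implementation

procedure AssignEmployeeConstraints(DataSet: TDataSet);
var
  F: TField;
begin
  F := DataSet.FieldByName('EMP_NO');
  F.CustomConstraint := 'x > 0 and x < 10000';
  F.ConstraintErrorMessage :=
    'Employee number must be a positive integer below 10000';

  F := DataSet.FieldByName('FIRST_NAME');
  F.CustomConstraint := 'x <> ''''';   // the string x <> '' with quotes doubled
  F.ConstraintErrorMessage := 'The first name is required';

  F := DataSet.FieldByName('LAST_NAME');
  F.CustomConstraint := 'not x is null';
  F.ConstraintErrorMessage := 'The last name is required';
end;

end.
```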

Including Field Properties

You can control whether the properties of the field objects on the middle tier are sent to the ClientDataSet (and copied into the corresponding field objects of the client side) by using the poIncFieldProps value of the Options property of the DataSetProvider. This flag controls the download of the field properties Alignment, DisplayLabel, DisplayWidth, Visible, DisplayFormat, EditFormat, MaxValue, MinValue, Currency, EditMask, and DisplayValues, if they are available in the field. Here is an example of another field of the AppServ2 example with some custom properties:

    object SQLDataSet1SALARY: TBCDField
      DefaultExpression = '10000'
      FieldName = 'SALARY'
      DisplayFormat = '#,###'
      EditFormat = '####'
      Precision = 15
      Size = 2
    end

With this setting, you can write your middle tier the way you usually set the fields of a standard client/server application. This approach also makes it faster to move existing applications from a client/server to a multitier architecture. The main drawback of sending fields to the client is that transmitting all the extra information takes time. Turning off poIncFieldProps can dramatically improve network performance of datasets with many columns.

A server can generally filter the fields sent to the client; it does so by declaring persistent field objects with the Fields editor and omitting some of the fields. Because a field you're filtering out might be required to identify the record for future updates (if the field is part of the primary key), you can also use the field's ProviderFlags property on the server to send the field value to the client but make it unavailable to the ClientDataSet component (this provides some extra security, compared to sending the field to the client and hiding it there).
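As a minimal sketch of the ProviderFlags technique, the following handler marks a key field as hidden when the remote data module is created. The data module class name and the OnCreate handler are assumptions made for illustration; the field component is the persistent EMP_NO field shown earlier, and hiding that particular field is only an example.

```delphi
// Hypothetical OnCreate handler of the remote data module (the class
// name TAppServerTwo is an assumption, not taken from the example).
procedure TAppServerTwo.RemoteDataModuleCreate(Sender: TObject);
begin
  // pfInKey: the field identifies the record when updates are resolved
  // pfHidden: the value travels in the data packet, but the field
  //           is not available to the ClientDataSet on the client
  SQLDataSet1EMP_NO.ProviderFlags :=
    SQLDataSet1EMP_NO.ProviderFlags + [pfInKey, pfHidden];
end;
```

The same flags can be set at design time in the Object Inspector, which is probably the more common choice.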

Field and Table Events

You can write middle-tier dataset and field event handlers as usual and let the dataset process the updates received from the client in the traditional way. This means updates are considered to be operations on the dataset, exactly as when a user is directly editing, inserting, or deleting records locally.

This update process is requested by setting the ResolveToDataSet property of the TDataSetProvider component, again connecting either the dataset used for input or a second dataset used for the updates. This approach is possible with datasets supporting editing operations. These include BDE, ADO, and InterBase Express datasets, but not those of the new dbExpress architecture.

With this technique, the updates are performed by the dataset, which implies a lot of control (the standard events are being triggered) but generally slower performance. Flexibility is much greater, because you can use standard coding practices. Also, porting existing local or client/server database applications, which use dataset and field events, is much more straightforward with this model. However, keep in mind that the user of the client program will receive your error messages only when the local cache (the delta) is sent back to the middle tier. Saying to the user that some data prepared half an hour ago is not valid might be a little awkward. If you follow this approach, you'll probably need to apply the updates in the cache at every AfterPost event on the client side.
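A minimal sketch of applying the cache at every post follows. It assumes a ClientDataSet named cds on a form class TForm1; both names are placeholders.

```delphi
// Client side: send each change to the middle tier as soon as it is
// posted, so server-side errors show up immediately.
procedure TForm1.cdsAfterPost(DataSet: TDataSet);
begin
  // MaxErrors = 0: the update fails as soon as one error occurs
  cds.ApplyUpdates(0);
end;
```

The trade-off is one round trip per posted record, so this suits programs where prompt feedback matters more than network traffic.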

Finally, if you decide to let the dataset and not the provider do the updates, Delphi helps you a lot in handling possible exceptions. Any exceptions raised by the middle-tier update events (for example, BeforePost) are automatically transformed by Delphi into update errors, which activate the OnReconcileError event on the client side (more on this event later in this chapter). No exception is shown on the middle tier, but the error travels back to the client.
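To make this concrete, here is a sketch of a middle-tier BeforePost handler that rejects an update by raising an exception. The data module class, the dataset name, and the business rule are all hypothetical; the dataset must be one that supports editing (for example, a BDE table) and the provider must have ResolveToDataSet enabled.

```delphi
// Middle tier: hypothetical BeforePost handler of an editable dataset.
// The exception is not displayed on the server; it reaches the client
// as an update error.
procedure TAppServerTwo.TableEmployeeBeforePost(DataSet: TDataSet);
begin
  if DataSet.FieldByName('SALARY').AsFloat < 0 then
    raise Exception.Create('The salary cannot be negative');
end;
```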

Adding Features to the Client

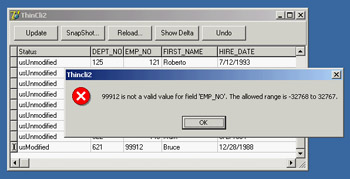

After adding some constraints and field properties to the server, let's return our attention to the client application. The first version was very simple, but now you can add several features to make it work well. In the ThinCli2 example, I've embedded support for checking the record status and accessing the delta information (the updates to be sent back to the server), using some of the ClientDataSet techniques already discussed in Chapter 13. The program also handles reconcile errors and supports the briefcase model.

Keep in mind that while you're using this client to edit the data locally, you'll be reminded of any failure to match the business rules of the application, which are set up on the server side using constraints. The server will also provide you with a default value for the Salary field of a new record and pass along the value of its DisplayFormat property. In Figure 16.3, you can see one of the error messages this client application can display, which it receives from the server. This message is displayed when you're editing the data locally, not when you send it back to the server.

Figure 16.3: The error message displayed by the ThinCli2 example when the employee ID is too large

The Update Sequence

This client program includes a button to apply the updates to the server and a standard reconcile dialog. Here is a summary of the complete sequence of operations related to an update request and the possible error events:

- The client program calls the ApplyUpdates method of a ClientDataSet.

- The delta is sent to the provider on the middle tier. The provider fires the OnUpdateData event, where you have a chance to look at the requested changes before they reach the database server. At this point you can modify the delta, which is passed in a format compatible with the data of a ClientDataSet.

- The provider (technically, a part of the provider called the resolver) applies each row of the delta to the database server. Before applying each update, the provider receives a BeforeUpdateRecord event. If you've set the ResolveToDataSet flag, this update will eventually fire local events of the dataset in the middle tier.

- In case of a server error, the provider fires the OnUpdateError event (on the middle tier) and the program has a chance of fixing the error at that level.

- If the middle-tier program doesn't fix the error, the corresponding update request remains in the delta. The error is returned to the client side at this point or after a given number of errors have been collected, depending on the value of the MaxErrors parameter of the ApplyUpdates call.

- The delta packet with the remaining updates is sent back to the client, firing the OnReconcileError event of the ClientDataSet for each remaining update. In this event handler, the client program can try to fix the problem (possibly prompting the user for help), modifying the update in the delta, and later reissuing it.
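The client side of this sequence can be sketched as follows. The form class, the button name, and the messages are assumptions; ApplyUpdates and the OnReconcileError signature are those of the ClientDataSet in Delphi 7. A real program would typically show the standard reconcile dialog instead of a plain message.

```delphi
// Client side: apply the delta and report how many updates failed.
procedure TClientForm.ButtonApplyClick(Sender: TObject);
var
  Errors: Integer;
begin
  // -1 means no limit on the number of errors collected before
  // the provider stops; 0 makes the first error abort the update
  Errors := cds.ApplyUpdates(-1);
  if Errors > 0 then
    ShowMessage(IntToStr(Errors) + ' update(s) could not be applied');
end;

// Called once for each update that came back with an error.
procedure TClientForm.cdsReconcileError(DataSet: TCustomClientDataSet;
  E: EReconcileError; UpdateKind: TUpdateKind;
  var Action: TReconcileAction);
begin
  ShowMessage('Update failed: ' + E.Message);
  // raSkip leaves the failed change in the change log, so the user
  // can fix the data and apply the updates again
  Action := raSkip;
end;
```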

Refreshing Data

You can obtain an updated version of the data, which other users might have modified, by calling the Refresh method of the ClientDataSet. However, this operation can be done only if there are no pending update operations in the cache, because calling Refresh raises an exception when the change log is not empty:

if cds.ChangeCount = 0 then cds.Refresh;

If only some records have been changed, you can refresh the others by calling RefreshRecord. This method refreshes only the current record, and it should be used only if the user hasn't modified that record. In this case, RefreshRecord leaves the unapplied changes in the change log. As an example, you can refresh a record every time it becomes the active one, unless it has been modified and the changes have not yet been posted to the server:

    procedure TForm1.cdsAfterScroll(DataSet: TDataSet);
    begin
      if cds.UpdateStatus = usUnModified then
        cds.RefreshRecord;
    end;

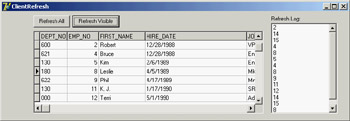

When the data is subject to frequent changes by many users and each user should see changes right away, you should apply any change immediately in the AfterPost and AfterDelete event handlers, and call RefreshRecord for the active record (as shown previously) or each of the records visible inside a grid. This code is part of the ClientRefresh example, connected to the AppServ2 server. For debugging purposes, the program also logs the EMP_NO field for each record it refreshes, as you can see in Figure 16.4.

Figure 16.4: The form of the ClientRefresh example, which automatically refreshes the active record and allows more extensive updates by clicking the buttons

I've done this by adding a button to the ClientRefresh example. The handler of this button moves from the current record to the first visible record of the grid and then to the last visible record. This is accomplished by noting that there are RowCount - 1 rows visible, assuming that the first row is the fixed one hosting the field names. The program doesn't call RefreshRecord every time, because each movement will trigger an AfterScroll event with the code shown previously. The following code refreshes the visible rows, which might be triggered by a timer:

    // protected access hack
    type
      TMyGrid = class (TDBGrid)
      end;

    procedure TForm1.Button2Click(Sender: TObject);
    var
      i: Integer;
      bm: TBookmarkStr;
    begin
      // refresh visible rows
      cds.DisableControls;
      // start with the current row
      i := TMyGrid (DbGrid1).Row;
      bm := cds.Bookmark;
      try
        // get back to the first visible record
        while i > 1 do
        begin
          cds.Prior;
          Dec (i);
        end;
        // return to the current record
        i := TMyGrid(DbGrid1).Row;
        cds.Bookmark := bm;
        // go ahead until the grid is complete
        while i < TMyGrid(DbGrid1).RowCount do
        begin
          cds.Next;
          Inc (i);
        end;
      finally
        // set back everything and refresh
        cds.Bookmark := bm;
        cds.EnableControls;
      end;
    end;

This approach generates a huge amount of network traffic, so you might want to trigger updates only when there are actual changes. You can implement this process by adding a callback technology to the server, so that it can inform all connected clients that a given record has changed. The client can determine whether it is interested in the change and eventually trigger the update request.

Advanced DataSnap Features

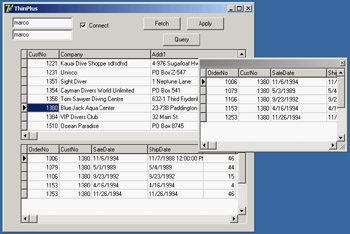

DataSnap includes many more features than I've covered up to now. Here is a quick tour of some of the more advanced features of the architecture, partially demonstrated by the AppSPlus and ThinPlus examples. Unfortunately, demonstrating every piece of functionality would turn this chapter into an entire book, so I'll limit myself to an overview.

| Warning | The ThinPlus example requires Delphi's socket server (provided in Delphi's bin folder) to run. Without this program, you'll see a socket error when the client tries to connect with the server. The plus side is that you can easily deploy the program over a network by modifying the IP address of the server in the client program. |

Besides the features discussed in the following sections, the AppSPlus and ThinPlus examples demonstrate the use of a socket connection, limited logging of events and updates on the server side, and direct fetching of records on the client side. The last feature is accomplished with this call, which shows the number of records fetched in the button caption:

    procedure TClientForm.ButtonFetchClick(Sender: TObject);
    begin
      ButtonFetch.Caption := IntToStr (cds.GetNextPacket);
    end;

This allows you to get more records than are required by the client user interface (the DBGrid). In other words, you can fetch records directly, without waiting for the user to scroll down in the grid. I suggest you study the details of these complex examples after reading the rest of this section.

Parametric Queries

If you want to use parameters in a query or stored procedure, then instead of building a custom solution (with a custom method call to the server), you can let Delphi help you. First define the query on the middle tier with a parameter:

select * from customer where Country = :Country

Use the Params property to set the type and default value of the parameter. On the client side, you can use the Fetch Params command from the ClientDataSet's shortcut menu, after connecting the component to the proper provider. At run time, you can call the equivalent FetchParams method of the ClientDataSet component.

Now you can provide a local default value for the parameter by acting on the Params property. The value of the parameter will be sent to the middle tier when you fetch the data. The ThinPlus example refreshes the parameter with the following code:

    procedure TFormQuery.btnParamClick(Sender: TObject);
    begin
      cdsQuery.Close;
      cdsQuery.Params[0].AsString := EditParam.Text;
      cdsQuery.Open;
    end;

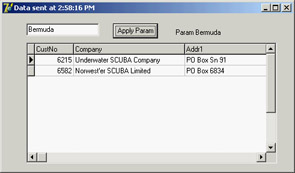

You can see the secondary form of this example, which shows the result of the parametric query in a grid, in Figure 16.5. The figure also shows some custom data sent by the server, as explained in the section "Customizing the Data Packets."

Figure 16.5: The secondary form of the ThinPlus example, showing the data of a parametric query

Custom Method Calls

Because the server has a normal COM interface, you can add more methods or properties to it and call them from the client. Simply open the server's type library editor and use it as with any other COM server. In the AppSPlus example, I've added a custom Login method with the following implementation:

    procedure TAppServerPlus.Login(const Name, Password: WideString);
    begin
      // TODO: add actual login code...
      if Password <> Name then
        raise Exception.Create ('Wrong name/password combination received')
      else
        Query.Active := True;
      ServerForm.Add ('Login:' + Name + '/' + Password);
    end;

The program performs a simple test, instead of checking the name/password combination against a list of authorizations as a real application should do. Also, disabling the Query doesn't really work, because it can be activated by the provider; disabling the DataSetProvider is a more robust approach. The client has a simple way to access the server: the AppServer property of the remote connection component. Here is a sample call from the ThinPlus example, which takes place in the AfterConnect event of the connection component:

    procedure TClientForm.ConnectionAfterConnect(Sender: TObject);
    begin
      Connection.AppServer.Login (Edit2.Text, Edit3.Text);
    end;

Note that you can call extra methods of the COM interface through DCOM and also using a socket-based or HTTP connection. Because the program uses the safecall calling convention, the exception raised on the server is automatically forwarded and displayed on the client side. This way, when a user selects the Connect check box, the event handler used to enable the client datasets is interrupted, and a user with the wrong password won't be able to see the data.

| Note | Besides direct method calls from the client to the server, you can also implement callbacks from the server to the client. You can use this approach, for example, to notify every client of specific events. COM events are one way to do this. As an alternative, you can define a new interface, implement it on the client, and have the client pass the implementing object to the server. This way, the server can call the method on the client computer. Callbacks are not possible with HTTP connections, though. |

Master/Detail Relations

If your middle-tier application exports multiple datasets, you can retrieve them using multiple ClientDataSet components on the client side and connect them locally to form a master/detail structure. This approach will create quite a few problems for the detail dataset unless you retrieve all the records locally.

This solution also makes it quite complex to apply the updates; you cannot usually cancel a master record until all related detail records have been removed, and you cannot add detail records until the new master record is properly in place. (Different servers handle this situation differently, but in most cases where a foreign key is used, this is the standard behavior.) To solve this problem, you can write complex code on the client side to update the records of the two tables according to the specific rules.

A completely different approach is to retrieve a single dataset that already includes the detail as a dataset field, a field of type TDataSetField. To accomplish this, you need to set up the master/detail relation on the server application:

    object TableCustomer: TTable
      DatabaseName = 'DBDEMOS'
      TableName = 'customer.db'
    end
    object TableOrders: TTable
      DatabaseName = 'DBDEMOS'
      MasterFields = 'CustNo'
      MasterSource = DataSourceCust
      TableName = 'ORDERS.DB'
    end
    object DataSourceCust: TDataSource
      DataSet = TableCustomer
    end
    object ProviderCustomer: TDataSetProvider
      DataSet = TableCustomer
    end

On the client side, the detail table will show up as an extra field of the ClientDataSet, and the DBGrid control will display it as an extra column with an ellipsis button. Clicking the button will display a secondary form with a grid presenting the detail table (see Figure 16.6). If you need to build a flexible user interface on the client, you can then add a secondary ClientDataSet connected to the dataset field of the master dataset, using the DataSetField property. Simply create persistent fields for the main ClientDataSet and then hook up the property:

    object cdsDet: TClientDataSet
      DataSetField = cdsTableOrders
    end

Figure 16.6: The ThinPlus example shows how a dataset field can either be displayed in a grid in a floating window or extracted by a ClientDataSet and displayed in a second form. You'll generally do one of the two things, not both!

With this setting, you can show the detail dataset in a separate DBGrid placed as usual in the form (the bottom grid of Figure 16.6) or any other way you like. Note that with this structure, the updates relate only to the master table, and the server should handle the proper update sequence even in complex situations.
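The same hookup can be sketched at run time. This assumes the master ClientDataSet is called cds, that the dataset field takes its name from the detail component on the server (TableOrders), and that the form has an OnCreate handler; all three are assumptions for illustration.

```delphi
// Client side: connect the detail ClientDataSet to the nested
// dataset field of the master at run time.
procedure TClientForm.FormCreate(Sender: TObject);
begin
  // the field exists once the master is open (or if it has been
  // defined as a persistent field at design time)
  cds.Open;
  cdsDet.DataSetField :=
    cds.FieldByName('TableOrders') as TDataSetField;
end;
```

Setting the property at design time, as in the DFM listing, is simpler; the run-time version is mainly useful when the master dataset is chosen dynamically.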

Using the Connection Broker

I've already mentioned that the ConnectionBroker component can be helpful when you want to change the physical connection used by many ClientDataSet components of a single program. By hooking each ClientDataSet to the ConnectionBroker, you can change the physical connection of all of them by updating the Connection property of the broker.

The ThinPlus example uses these settings:

    object Connection: TSocketConnection
      ServerName = 'AppSPlus.AppServerPlus'
      AfterConnect = ConnectionAfterConnect
      Address = '127.0.0.1'
    end
    object ConnectionBroker1: TConnectionBroker
      Connection = Connection
    end
    object cds: TClientDataSet
      ConnectionBroker = ConnectionBroker1
    end
    // in the secondary form
    object cdsQuery: TClientDataSet
      ConnectionBroker = ClientForm.ConnectionBroker1
    end

That's basically all you have to do. To change the physical connection, drop a new DataSnap connection component onto the main form and set the Connection property of the broker to it.
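Switching the connection in code might look like the following sketch. DCOMConnection1 is a hypothetical second connection component, and UseDcomConnection is a made-up method that would need to be declared in the form class; any other client dataset attached to the broker (such as cdsQuery in the secondary form) should be closed as well.

```delphi
// Client side: redirect every dataset attached to the broker
// to a different physical connection.
procedure TClientForm.UseDcomConnection;
begin
  // close the datasets and the old connection first
  cds.Close;
  Connection.Connected := False;
  // one assignment redirects all datasets attached to the broker
  ConnectionBroker1.Connection := DCOMConnection1;
  cds.Open;
end;
```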

More Provider Options

I've already mentioned the Options property of the DataSetProvider component, noting that you can use it to add the field properties to the data packet. There are several other options you can use to customize the data packet and the behavior of the client program. Here is a short list:

- You can minimize downloading BLOB data with the poFetchBlobsOnDemand option. In this case, the client application can download BLOBs by setting the FetchOnDemand property of the ClientDataSet to True or by calling the FetchBlobs method for specific records. Similarly, you can disable the automatic downloading of detail records by setting the poFetchDetailsOnDemand option. Again, the client can use the FetchOnDemand property or call the FetchDetails method.

- When you are using a master/detail relation, you can control cascades with either of two options. The poCascadeDeletes flag controls whether the provider should delete detail records before deleting a master record. You can set this option if the database server performs cascaded deletes for you as part of its referential integrity support. Similarly, you can set the poCascadeUpdates option when the server can automatically update key values of a master/detail relation.

- You can limit the operations on the client side. The most restrictive option, poReadOnly, disables any update. If you want to give the user a limited editing capability, use poDisableInserts, poDisableEdits, or poDisableDeletes.

- You can use poAutoRefresh to resend to the client a copy of the records the client has modified; doing so is useful in case other users have simultaneously made other, nonconflicting changes. You can also specify the poPropogateChanges option to send back to the client changes done in the BeforeUpdateRecord or AfterUpdateRecord event handler. This option is also handy when you are using autoincrement fields, triggers, and other techniques that modify data on the server or middle tier beyond the changes requested from the client tier.

- Finally, if you want the client to drive the operations, you can enable the poAllowCommandText option. It lets you set the SQL query or table name of the middle tier from the client, using the GetRecords or Execute method.
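A few of these options can be sketched in code. The provider name ProviderQuery, the data module handler, and the button are assumptions; the first handler belongs to the middle tier, and the second runs on the client and works only if poAllowCommandText is enabled on the provider.

```delphi
// Middle tier: enable some provider options when the module is created.
procedure TAppServerPlus.RemoteDataModuleCreate(Sender: TObject);
begin
  ProviderQuery.Options := ProviderQuery.Options +
    [poIncFieldProps, poFetchBlobsOnDemand, poAllowCommandText];
end;

// Client side: replace the SQL statement executed on the middle tier.
procedure TFormQuery.btnCustomSqlClick(Sender: TObject);
begin
  cdsQuery.Close;
  // the new statement has no parameters, so drop the old ones
  cdsQuery.Params.Clear;
  cdsQuery.CommandText :=
    'select * from customer where Country = ''US''';
  cdsQuery.Open;
end;
```

Allowing the client to send arbitrary SQL moves control away from the middle tier, so this option is best reserved for trusted clients.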

The Simple Object Broker

The SimpleObjectBroker component provides an easy way to locate a server application among several server computers. You provide a list of available computers, and the client will try each of them in order until it finds one that is available.

Moreover, if you enable the LoadBalanced property, the component will randomly choose one of the servers; when many clients use the same configuration, the connections will be automatically distributed among the multiple servers. If this seems like a "poor man's" object broker, consider that some highly expensive load-balancing systems don't offer much more than this.
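As a rough sketch, the broker could be configured in code as follows. The computer names are invented, and the handler assumes a SimpleObjectBroker1 component on the client form next to the Connection component; you would normally fill the Servers collection at design time instead.

```delphi
// Client side: list the candidate servers and let the broker pick one.
procedure TClientForm.FormCreate(Sender: TObject);
begin
  // hypothetical computer names
  (SimpleObjectBroker1.Servers.Add as TServerItem).ComputerName :=
    'appserver1';
  (SimpleObjectBroker1.Servers.Add as TServerItem).ComputerName :=
    'appserver2';
  // choose a server at random instead of always starting with the first
  SimpleObjectBroker1.LoadBalanced := True;
  // let the connection ask the broker which computer to use
  Connection.ObjectBroker := SimpleObjectBroker1;
end;
```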

Object Pooling

When multiple clients connect to your server at the same time, you have two options. The first is to create a remote data module object for each of them and let each request be processed in sequence (the default behavior for a COM server with the ciMultiInstance style). Alternatively, you can let the system create a different instance of the server application for every client (ciSingleInstance). This approach requires more resources and more SQL server connections (and possibly licenses).

An alternate approach is offered by DataSnap's support for object pooling. All you need to do to request this feature is add a call to RegisterPooled in the overridden UpdateRegistry method. Combined with the stateless support built in to this architecture, the pooling capability allows you to share some middle-tier objects among a much larger number of clients. A pooling mechanism is built in to COM+, but DataSnap makes it available for HTTP and socket-based connections as well.
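A sketch of the overridden UpdateRegistry method follows. The structure mirrors the code the Remote Data Module wizard generates; the pool size and timeout values are arbitrary assumptions, and you should check the documentation of RegisterPooled for the exact meaning and units of its parameters.

```delphi
// Middle tier: register the remote data module for socket access
// and object pooling.
class procedure TAppServerPlus.UpdateRegistry(Register: Boolean;
  const ClassID, ProgID: string);
begin
  if Register then
  begin
    inherited UpdateRegistry(Register, ClassID, ProgID);
    EnableSocketTransport(ClassID);
    // assumed values: up to 16 pooled instances, with an idle
    // timeout of 30
    RegisterPooled(ClassID, 16, 30);
  end
  else
  begin
    DisableSocketTransport(ClassID);
    UnregisterPooled(ClassID);
    inherited UpdateRegistry(Register, ClassID, ProgID);
  end;
end;
```

Because a pooled module can serve a different client on every call, it must not keep client-specific state in its fields between calls.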

The users on the client computers will spend most of their time reading data and typing in updates, and they generally don't continue asking for data and sending updates. When the client is not calling a method of the middle-tier object, this same remote data module can be used for another client. Being stateless, every request reaches the middle tier as a brand-new operation, even when a server is dedicated to a specific client.

Customizing the Data Packets

There are many ways to include custom information within the data packet handled by the IAppServer interface. The simplest is to handle the OnGetDataSetProperties event of the provider. This event has a Sender parameter, a dataset parameter indicating where the data is coming from, and an OleVariant array Properties parameter, in which you can place the extra information. You need to define one variant array for each extra property and include the name of the extra property, its value, and whether you want the data to return to the server along with the update delta (the IncludeInDelta parameter).

Of course, you can pass properties of the related dataset component, but you can also pass any other value (extra fake properties). In the AppSPlus example, I pass to the client the time the query was executed and its parameters:

    procedure TAppServerPlus.ProviderQueryGetDataSetProperties(
      Sender: TObject; DataSet: TDataSet; out Properties: OleVariant);
    begin
      Properties := VarArrayCreate([0,1], varVariant);
      Properties[0] := VarArrayOf(['Time', Now, True]);
      Properties[1] := VarArrayOf(['Param', Query.Params[0].AsString, False]);
    end;

On the client side, the ClientDataSet component has a GetOptionalParam method to retrieve the value of the extra property with the given name. The ClientDataSet also has the SetOptionalParam method to add more properties to the dataset. These values will be saved to disk (in the briefcase model) and eventually sent back to the middle tier (if the IncludeInDelta member of the variant array is set to True). Here is a simple example that retrieves the values set in the previous code:

    Caption := 'Data sent at ' + TimeToStr (TDateTime (
      cdsQuery.GetOptionalParam('Time')));
    Label1.Caption := 'Param ' + cdsQuery.GetOptionalParam('Param');

The effect of this code was visible in Figure 16.5. An alternative and more powerful approach for customizing the data packet sent to the client is to handle the OnGetData event of the provider, which receives the outgoing data packet in the form of a client dataset. Using the methods of this client dataset, you can edit data before it is sent to the client. For example, you might encode some of the data or filter out sensitive records.
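A minimal sketch of such an OnGetData handler is shown here. It is not part of the AppSPlus example; the handler name and the idea of masking a Phone column are assumptions used only to illustrate editing the outgoing packet.

```delphi
// Middle tier: edit the outgoing data packet before it is sent.
procedure TAppServerPlus.ProviderQueryGetData(Sender: TObject;
  DataSet: TCustomClientDataSet);
begin
  DataSet.First;
  while not DataSet.Eof do
  begin
    DataSet.Edit;
    // replace a sensitive value (hypothetical field name)
    DataSet.FieldByName('Phone').AsString := '(hidden)';
    DataSet.Post;
    DataSet.Next;
  end;
end;
```

Since the handler visits every record of every packet, it adds work on the middle tier in proportion to the amount of data sent.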

What's Next?

Borland originally introduced its multitier technology in Delphi 3 and has kept extending it from version to version. In addition to further updates and the change of the MIDAS name to DataSnap, Delphi 6 saw the introduction of SOAP support, introducing an alternate and extended architecture for multitier applications. We'll fully explore this topic in Chapter 23. Delphi 7, on the other hand, cut back on CORBA support.

However, with the introduction of a new licensing scheme (basically, free deployment), Delphi 7 has paved the way for increased adoption of this technology. This is particularly true of DataSnap's SOAP variant, but socket connections also provide a very good balance of data transfer efficiency and ease of deployment.

For the moment, we'll continue with database programming, discussing data-aware controls and custom datasets. In the final part of the book we'll explore sockets, Internet programming, and SOAP, so you'll have a complete picture of possible multitier architectures based on Delphi—not to mention the availability of third-party tools providing features similar to DataSnap.
