Working with the File System

Overview

Everything happens to everybody sooner or later if there is time enough.-George Bernard Shaw

One reason I welcome the .NET Framework is because it reworks, rationalizes, and simplifies a large part of the old, and still underlying, Win32 API. Within the .NET Framework, the whole Win32 API—with very few exceptions—has been redesigned and made available to programmers in an object-oriented fashion. Not only do you have more effective and handy programming tools, but the object orientation of the .NET Framework makes programming easier, and makes code more reusable than ever.

ASP.NET applications are not specifically related to file-system functions; however, many ASP.NET applications happen to work with local files and need to explore the contents of folders. In this chapter, we'll review the main aspects of file-system programming using ASP.NET. By reading on, you'll see how to (finally) manage path names with ad hoc methods and properties, how to extract as much information as possible about files and directories, and how to read and write files, including XML files.

The file system is definitely a huge topic. So let's start by grouping the main categories of functionality in the API that you're most likely to encounter.

File I O in the NET Framework

The key namespace for operations and classes that relate to file-system activity is System.IO. Within this namespace, you find four logical groups of specialized types. These classes allow programmers to:

- Get information and perform basic operations on files and directories

- Perform string-based manipulation on path names

- Read and write operations on data streams and files

- Be notified of dynamic changes that occur to files and folders in a given directory tree

A bunch of classes let you access each of these features through methods, properties, and fairly easy-to-use enumeration types.

Working with Files

To manage the contents of files and directories, the .NET Framework provides two global static classes, named File and Directory. To use them, you don't need to create specific instances of the classes. File and Directory are just the repository of global, type-specific functions that you call to create, copy, delete, move, and open files and directories. All these functions require a file or a directory name to operate. Table 16-1 shows the methods of the File class.

|

Method Name |

Description |

|---|---|

|

AppendText |

Creates and returns a stream object for the specified file. The stream allows you to append UTF-8 encoded text. |

|

Copy |

Copies an existing file to a new file. The destination cannot be a directory name or an existing file. |

|

Create |

Creates a new file. |

|

CreateText |

Creates (or opens if one exists) a new file for writing UTF-8 text. |

|

Delete |

Deletes the file specified. |

|

Exists |

Determines whether the specified file exists. |

|

GetAttributes |

Gets the attributes of the file. |

|

GetCreationTime |

Returns the creation date and time of the specified file. |

|

GetLastAccessTime |

Returns the last access date and time for the specified file. |

|

GetLastWriteTime |

Returns the last write date and time for the specified file. |

|

Move |

Moves a specified file to a new location. Also provides the option to specify a new file name. |

|

Open |

Opens a file on the specified path. |

|

OpenRead |

Opens an existing file for reading. |

|

OpenText |

Opens an existing UTF-8 encoded text file for reading. |

|

OpenWrite |

Opens an existing file for writing. |

|

SetAttributes |

Sets the specified attributes for the given file. |

|

SetCreationTime |

Sets the date and time the file was created. |

|

SetLastAccessTime |

Sets the date and time the specified file was last accessed. |

|

SetLastWriteTime |

Sets the date and time that the specified file was last written. |

The path parameter that all methods require can indicate a relative or absolute path. A relative path is interpreted as relative to the current working directory. To obtain the current working directory, you use the GetCurrentDirectory method on the Directory class. Path names are case insensitive and can also be expressed in the Universal Naming Convention (UNC) format if they contain a server and share name. Writing methods create the specified file if it doesn't exist; if the file does exist, it gets overwritten as long as it isn't marked read-only.

| Note |

Most path names contain a backslash () character, which has a special meaning to C-based languages such as C#. In C#, the backslash is used in conjunction with other characters to specify escape sequences, such as the tab character. To indicate that a single backslash character is needed, you can use a double backslash (\). C# also provides a more elegant solution—prefixing the path name with the @ symbol. The following two path names are equivalent within a C# source file: string path1 = "c:\path\file.txt"; string path2 = @"c:pathfile.txt"; The @ character tells the C# compiler to consider the following string as literal text and to process it verbatim. |

The global Directory class exposes static methods for creating, copying, and moving directories and for enumerating their files and subdirectories. Table 16-2 lists the methods of the Directory class.

|

Method Name |

Description |

|---|---|

|

CreateDirectory |

Create all directories and subdirectories as specified by the path. |

|

Delete |

Deletes a directory and, optionally, all of its contents. |

|

Exists |

Determines whether the given directory exists. |

|

GetCreationTime |

Gets the creation date and time of the specified directory. |

|

GetCurrentDirectory |

Gets the current working directory of the application. |

|

GetDirectories |

Returns an array of strings filled with the names of the child subdirectories of the specified directory. |

|

GetDirectoryRoot |

Gets volume and root information for the specified path. |

|

GetFiles |

Returns the names of files in the specified directory. |

|

GetFileSystemEntries |

Returns an array of strings filled with the names of all files and subdirectories contained in the specified directory. |

|

GetLastAccessTime |

Returns the date and time the specified directory was last accessed. |

|

GetLastWriteTime |

Returns the date and time the specified directory was last written. |

|

GetLogicalDrives |

Returns an array of strings filled with the names of the logical drives found on the computer. Strings have the form ":". |

|

GetParent |

Retrieves the parent directory of the specified path. The directory is returned as a DirectoryInfo object. |

|

Move |

Moves a directory and its contents to a new location. An exception is thrown if you move the directory to another volume or if a directory with the same name exists. |

|

SetCreationTime |

Sets the creation date and time for the specified directory. |

|

SetCurrentDirectory |

Sets the application's current working directory. |

|

SetLastAccessTime |

Sets the date and time the specified file or directory was last accessed. |

|

SetLastWriteTime |

Sets the date and time a directory was last written to. |

You should note that the Delete method has two overloads. By default, it deletes only empty directories and throws an IOException exception if the directory is not empty or marked as read-only. The second overload includes a Boolean argument that, if set to true, enables the method to recursively delete the entire directory tree.

// Clear a directory tree Directory.Delete(dirName, true);

The global classes File and Directory provide you with a lot of helpful methods. However, many of those methods are also available on helper data structures that collect information about a particular file or directory. These classes are named FileInfo and DirectoryInfo.

| Caution |

Each time a method of the Directory or File class is invoked, a security check is performed on the involved file-system elements. The check is aimed at verifying that the current user has the permission to operate. This might result in a slight performance hit, especially if you use the same files or directories several times. In similar situations, consider using the corresponding instance method of the FileInfo or DirectoryInfo class. In this case, in fact, the security check would occur only once. In contrast, for single, one-shot use the global classes are preferable because their internal implementation results in more direct code. |

The FileInfo Class

If you look at the overall functionality, the FileInfo class looks very similar to the static File class. However, the internal implementation and the programming interface is slightly different. The FileInfo class works on a particular file and requires that you first instantiate the class to access methods and properties.

FileInfo fi = new FileInfo("info.txt");

When you create an instance of the class, you specify a file name, either fully or partially qualified. The file you indicate is checked only for the name consistency and not for existence. The class, in fact, can also be used to create a new file, as shown here:

FileInfo fi = new FileInfo("info.txt");

FileStream stream = fi.Create();

If the file name you indicate through the class constructor is clearly an unacceptable name, an exception is thrown. Common pitfalls are colons in the middle of the string, invalid characters, blank names, and path and file names that exceed the maximum length. Table 16-3 lists the properties of the FileInfo class.

|

Property Name |

Description |

|---|---|

|

Attributes |

Gets or sets the attributes of the current file. |

|

CreationTime |

Gets or sets the time when the current file was created. |

|

Directory |

Gets a DirectoryInfo object representing the parent directory. |

|

DirectoryName |

Gets a string representing the directory's full path. |

|

Exists |

Gets a value that indicates whether a file with the current name exists. |

|

Extension |

Gets the string representing the extension of the file name, including the period (.). |

|

FullName |

Gets the full path name of the current file. |

|

LastAccessTime |

Gets or sets the time when the current file was last accessed. |

|

LastWriteTime |

Gets or sets the time when the current file was last written. |

|

Length |

Gets the size in bytes of the current file. |

|

Name |

Gets the name of the file. |

The methods available for the FileInfo class are summarized in Table 16-4. As you can see, methods can be grouped in two categories: methods to perform simple stream-based operations on the contents of the file, and methods to copy or delete the file itself.

|

Method |

Description |

|---|---|

|

AppendText |

Creates and returns a stream object for the current file. The stream allows you to append UTF-8 encoded text. |

|

CopyTo |

Copies the current file to a new file. |

|

Create |

Creates a file. It's a simple wrapper for the File.Create method. |

|

CreateText |

Creates a file, and returns a stream object to write text. |

|

Delete |

Permanently deletes the current file. Fails if the file is open. |

|

MoveTo |

Moves the current file to a new location, providing the option to specify a new file name. |

|

Open |

Opens the file with various read/write and sharing privileges. |

|

OpenRead |

Creates and returns a read-only stream for the file. |

|

OpenText |

Creates and returns a stream object to read text from the file. |

|

OpenWrite |

Creates and returns a write-only stream object that can be used to write text to the file. |

|

Refresh |

Refreshes the information that the class can have about the file. |

|

ToString |

Returns a string that represents the fully qualified path of the file. |

The FileInfo class represents a logical wrapper for a system element that is continuously subject to concurrent changes. Can you be sure that the information returned by the FileInfo object is always up to date? Properties such as Exists, Length,Attributes, and LastAccessTime can easily contain inconsistent values if other users can concurrently access the machine and modify files.

Keeping File Information In Sync

When you create an instance of FileInfo, no information is actually read from the file system. As soon as you attempt to read the value of one of the aforementioned critical properties, the class invokes the Refresh method, reads the current state of the file, and caches that information. For performance reasons, though, the FileInfo class doesn't automatically refresh the state of the object each time properties are read. It does that only the first time one of the properties is read.

To counteract for this built-in behavior, you should call Refresh whenever you need to read up-to-date information about the attributes or the length of a file. However, you don't necessarily need to do that all the time. Whether you need it or not mostly depends on the characteristics and requirements of the application.

The Refresh method makes a call to a Win32 API function—FindFirstFile—and uses the information contained in the returned WIN32_FIND_DATA structure to populate the properties of the FileInfo class.

Copying and Deleting Files

To make a copy of the current file, you use the CopyTo method. CopyTo comes with two overloads. Both copy the file to another file, but the first overload disallows overwriting, while the other gives you a chance to control overwriting through a Boolean parameter.

FileInfo fi = fi.CopyTo("NewFile.txt", true);

Note that both overloads consider the file name argument as a file name. You cannot pass just the name of a directory where you want the file to be copied. If you do so, the directory name will be interpreted as a file name.

The Delete method permanently deletes the file from disk. Using this method, there is no way to programmatically send the deleted file to the recycle bin. To accomplish this, you must resort to creating a .NET wrapper for the Win32 API function designed to do that. The API function needed is named SHFileOperation.

Attributes of a File

The Attributes property indicates the file system attributes of the given file. For an attribute to be read or set, the file must exist and be accessible. To write an attribute value to a file, you must also have write permissions; otherwise, a FileIOPermissionAccess exception is raised. The attributes of a file are expressed using the FileAttributes enumeration. Table 16-5 lists the values for the FileAttributes enumeration.

|

Attribute |

Description |

|---|---|

|

Archive |

Indicates that the file is an archive. |

|

Compressed |

The file is compressed. |

|

Device |

Not currently used. Reserved for future use. |

|

Directory |

The file is a directory. |

|

Encrypted |

The file or directory is encrypted. For a file, this means that all data in the file is encrypted. For a directory, this means that encryption is the default for newly created files and directories but all current files are not necessarily encrypted. |

|

Hidden |

The file is hidden and doesn't show up in directory listings. |

|

Normal |

The file has no other attributes set. Note that this attribute is valid only if used alone. |

|

NotContentIndexed |

The file should not be indexed by the system indexing service. |

|

Offline |

The file is offline and its data is not immediately available. |

|

ReadOnly |

The file is read-only. |

|

ReparsePoint |

The file contains a reparse point, which is a block of user-defined data associated with a file or a directory. Requires an NTFS file system. |

|

SparseFile |

The file is a sparse file. Sparse files are typically large files whose data are mostly zeros. Requires an NTFS file system. |

|

System |

The file is a system file, part of the operating system, or used exclusively by the operating system. |

|

Temporary |

The file is temporary and can be deleted by the application when it is no longer needed. |

Note that not all attributes in Table 16-5 are applicable to both files and directories.

| Note |

The FileAttributes type is an enumerated type marked with the [Flags] attribute.

[Flags]public enum FileAttributes {...}

The [Flags] attribute set for an enumeration type allows programmers to use a bitwise combination of the member values as if the resultant value were itself a native member of the enumeration. |

You set attributes on a file by using code as in the following code snippet:

// Make the file read-only and hidden

FileInfo fi = new FileInfo("info.txt")

fi.Attributes = FileAttributes.ReadOnly | FileAttributes.Hidden;

Note that not all attributes listed in Table 16-5 can be set through the Attributes property. For example, the Encrypted and Compressed attributes can be assigned only if the file is contained in an encrypted folder or is programmatically encrypted. Likewise, a file can be given a reparse point or can be marked as a sparse file only through specific API functions and only on NTFS volumes.

The FileAttributes type has another nice feature. When you call the ToString method, the class returns a string with a description of the attributes. The returned text consists of a comma-separated string in which each attribute is automatically translated into descriptive text. This following text shows the output of ToString when called to operate on a read-only and hidden file:

Readonly, Hidden

Working with Directories

To manage a directory as an object, you resort to the DirectoryInfo class. The class supplies methods and properties to read attributes and performs basic operations on directories. Let's look at it in a bit more detail.

The DirectoryInfo Class

The DirectoryInfo class represents the instance-based counterpart of the Directory class we explored earlier. The class works on a particular directory.

DirectoryInfo di = new DirectoryInfo(@"c:");

To create an instance of the class, you specify a fully qualified path name. Just as for FileInfo, the path name is checked for consistency but not for existence. Note that the path name can also be a file name or a UNC name. If you create a DirectoryInfo object passing a file name, the directory that contains the specified file will be considered for use. Table 16-6 shows the properties available with the DirectoryInfo class.

|

Property |

Description |

|---|---|

|

Attributes |

Gets or sets the attributes of the current directory |

|

CreationTime |

Gets or sets the creation time of the current directory |

|

Exists |

Gets whether the directory exists |

|

Extension |

Gets the extension (if any) in the directory name |

|

FullName |

Gets the full path of the directory |

|

LastAccessTime |

Gets or sets the time when the current directory was last accessed |

|

LastWriteTime |

Gets or sets the time when the current directory was last written |

|

Name |

Gets the name of the directory bound to this object |

|

Parent |

Gets the parent of the directory bound to this object |

|

Root |

Gets the root portion of the directory path |

Note that the Name property of the file and directory classes is read-only and can't be used to rename the corresponding file system's element.

The methods you can use in the DirectoryInfo class are listed in Table 16-7.

|

Method Name |

Description |

|---|---|

|

Create |

Creates a directory. It's a simple wrapper for the Directory.Create method. |

|

CreateSubdirectory |

Creates a subdirectory on the specified path. The path can be relative to this instance of the DirectoryInfo class. |

|

Delete |

Deletes the directory. |

|

GetDirectories |

Returns an array of DirectoryInfo objects, each pointing to a subdirectory of the current directory. |

|

GetFiles |

Returns an array of FileInfo objects, each pointing to a file contained in the current directory. |

|

GetFileSystemInfos |

Retrieves an array of FileSystemInfo objects representing all the files and subdirectories in the current directory. |

|

MoveTo |

Moves a directory and all of its contents to a new path. |

|

Refresh |

Refreshes the state of the DirectoryInfo object. |

The method GetFileSystemInfos returns an array of objects, each pointing to a file or a subdirectory contained in the directory bound to the current DirectoryInfo object. Unlike the GetDirectories and GetFiles methods, which simply return the names of subdirectories and files as plain strings, GetFileSystemInfos return a strongly typed object for each entry—either DirectoryInfo or FileInfo. The return type of the method is an array of FileSystemInfo objects.

public FileSystemInfo[] GetFileSystemInfos()

FileSystemInfo is the base class for both FileInfo and DirectoryInfo. GetFileSystemInfos has an overloaded version that can accept a string with search criteria.

Listing the Contents of a Directory

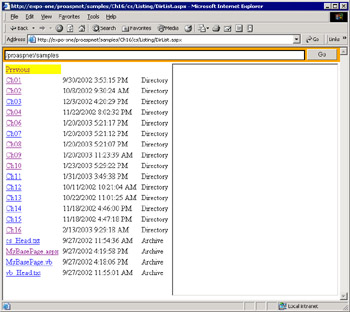

Let's see how to use the FileInfo and DirectoryInfo classes to write a small application that creates a navigation mechanism across Web folders. Figure 16-1 shows the page in action.

Figure 16-1: An ASP.NET application that navigates through Web folders.

The user interface consists of a text box, in which the user enters the virtual path to search in, and a button to start the search. The results are displayed using a Repeater control within a scrollable area. (We're reusing some of the tricks we've learned in Chapter 9, "ASP.NET Iterative Controls.") Finally, an inline frame is used to display the contents of listed .aspx pages.

When the user clicks on the Go button, the following code runs and displays all the files and subdirectories contained in the physical folder that maps to the given virtual path.

public void OnGo(object sender, EventArgs e) {

// Reset the current URL

SetFullUrl("");

// Command to list the contents of the directory

string url = folderName.Text;

ListDirectory(url);

}

The ListDirectory method retrieves all the information available using the GetFileSystemInfos method. As mentioned, the GetFileSystemInfos method returns an array of FileSystemInfo objects. The array is then bound to the Repeater.

private void ListDirectory(string url) {

// Build an absolute URL using the cached "current URL"

string tmp = GetFullUrl(url);

// Refresh the address bar

folderName.Text = tmp;

// Set this URL as the new "current URL"

SetFullUrl(tmp);

// Reset the contents of the frame (in case a page was displayed)

SetView(null);

// Obtain a physical path from the URL and instantiate the

// DirectoryInfo class

string path = Server.MapPath(tmp);

DirectoryInfo di = new DirectoryInfo(path);

// Bind to data

Listing.DataSource = di.GetFileSystemInfos();

Listing.DataBind();

}

Any public property on the FileSystemInfo object can be accessed through DataBinder.Eval and used in the resulting user interface. The following code snippet shows the template of the Repeater.

<%# DataBinder.Eval(Container.DataItem, "Name") %> <%# DataBinder.Eval(Container.DataItem, "LastWriteTime") %> <%# DataBinder.Eval(Container.DataItem, "Attributes") %>

The template generates a link button for each selected file or directory. Directories are given a command name of SELECT, whereas files are associated with the OPEN command. When the user clicks on any of them, the page responds by either recursively running the ListDirectory method or updating the frame view. The first option is chosen when the user clicks on a directory; the second option is reserved for when the click hits an .aspx page. The following code illustrates the process that updates the user interface of the application.

string GetCommandName(object fsInfo) {

FileSystemInfo fsi = (FileSystemInfo) fsInfo;

if (fsi is DirectoryInfo)

return "Select";

else

return "Open";

}

void ItemCommand(object sender, RepeaterCommandEventArgs e) {

switch(e.CommandName) {

case "Select":

ListDirectory(e.CommandArgument.ToString());

break;

case "Open":

SetView(GetFullUrl(e.CommandArgument.ToString()));

break;

}

return;

}

void SetView(string url) {

if (url == null)

view.Attributes["src"] = "about:blank";

else

view.Attributes["src"] = url;

}

Filtering the Contents of a Directory

The GetFileSystemInfos method accepts a filter string by means of which you set some criteria. The filter string can contain commonly used wild-card characters such as ? and *. The ? character is a placeholder for any individual character, while * represents any string of one or more characters. Characters other than the wild-card specifiers just represent themselves. The following statement demonstrates how to scan a given directory searching for text files only:

foreach(FileSystemInfo fsi in di.GetFileSystemInfos("*.txt"))

{

}

}

A bit more problematic is selecting all files that belong to one group or another. Likewise, there's no direct way to obtain all directories plus all the files that match certain criteria. In similar cases, you must query each result set individually and then combine them in a single array of FileSystemInfo objects. The following code snippet shows how to select all the subdirectories and all the .aspx pages in a given folder:

FileSystemInfo fsiDirs = (FileSystemInfo[]) di.GetSubdirectories();

FileSystemInfo fsiAspx = (FileSystemInfo[]) di.GetFiles(".aspx");

You can fuse the two arrays together using the methods of the Array class.

Working with Path Names

Although path names are nothing more than strings, it's widely agreed that they deserve their own tailor-made set of functions for easier and handy manipulation. This old dream of programmers finally came true with the .NET Framework, particularly thanks to the Path class. The Path type provides programmers with the unprecedented ability to perform operations on instances of a string class that contain file or directory path information. Path is a single-instance class that contains only static methods. A path can contain either absolute or relative location information for a given file or folder. If the information about the location is incomplete and partial, the class completes it using the current location, if applicable.

The Programming Interface of the Path Class

The members of the Path class let you perform, quickly and effectively, everyday operations such as determining whether a given file name has a certain extension, changing only the extension and leaving the remainder of the path intact, combining partial path strings into one valid path name, and more. The Path class doesn't work in conjunction with the operating system and should be simply viewed as a highly specialized string manipulation class.

No members of the class ever interact with the file system to verify the correctness of a file name. From this point of view, you simply manipulate a string of text. So even though you successfully verified that a fully qualified file name has a given extension, nothing could give you the certainty that the file really exists. Likewise, even though you can combine two strings to get a valid directory name, that would not be sufficient to actually create that new directory. On the other hand, the members of the Path class are smart enough to throw an exception if they detect that a path string contains invalid characters. Table 16-8 summarizes the public fields of the class.

|

Property Name |

Description |

|---|---|

|

AltDirectorySeparatorChar |

The alternate character used to separate directory levels in a path. It is the forward slash (/) in Windows. |

|

DirectorySeparatorChar |

The platform-specific character used to separate directory levels in a path. It is the backslash () in Windows. |

|

InvalidPathChars |

Array of characters that are not permitted in a path string on the current platform. |

|

PathSeparator |

The character used to separate path strings in environment variables. It is the semicolon (;) in Windows. |

|

VolumeSeparatorChar |

The character used to separate a volume from the remainder of the path. It is the colon (:) in Windows. |

Table 16-9, on the other hand, lists the methods of the Path class.

|

Method Name |

Description |

|---|---|

|

ChangeExtension |

Changes the extension of the specified path string. |

|

Combine |

Concatenates two path strings together. |

|

GetDirectoryName |

Extracts and returns the directory information for the specified path string. |

|

GetExtension |

Returns the extension of the specified path string. |

|

GetFileName |

Returns the file name and extension of the specified path string. |

|

GetFileNameWithoutExtension |

Returns the file name of the specified path string without the extension. |

|

GetFullPath |

Returns the absolute path for the specified path string. |

|

GetPathRoot |

Returns the root directory for the specified path. |

|

GetTempFileName |

Returns a unique temporary file name, and creates a zero-byte file by that name on disk. |

|

GetTempPath |

Returns the path of the temporary folder. |

|

HasExtension |

Determines whether the specified path string includes an extension. |

|

IsPathRooted |

Returns a value that indicates whether the specified path string contains an absolute path. |

It's interesting to note that any call to GetTempFileName promptly results in a zero-length file actually being created on disk, and specifically in the user's temporary folder. This is the only case in which the Path class happens to interact with the operating system.

Combining Paths

A couple of considerations apply to the behavior of the Combine method, whose prototype is as follows:

public static string Combine(string path1, string path2)

If one of the specified arguments is an empty string, the method returns the other argument. If any of the arguments contains an absolute path, the first argument with an absolute path is returned. If any of the two arguments is a null string, the ArgumentNullException exception is thrown. Generally, the exception is thrown whenever a member of the Path class happens to work with a null argument.

In addition, if the first argument does not terminate with a valid separator, the character is appended to it before the concatenation takes place. A valid separator is either the character that DirectorySeparatorChar indicates or the character returned by AltDirectorySeparatorChar. In all other cases, the Combine method fuses the two strings together. However, under some circumstances (such as spaces and UNC paths in the second argument), the Combine method does not throw any exception, but the result should be programmatically verified prior to making use of it.

| Note |

Although useful and effective, the Path class doesn't seem to have a method to verify whether a given path is a URL. A similar method exists in Win32 API and in particular in the Shell Lightweight API (shlwapi.dll). The function is named PathIsURL and has the following prototype: bool PathIsURL(string path) To make use of it in managed code, you must resort to the P/Invoke platform and connect to the DLL from within managed code. |

Readers and Writers

In the .NET Framework, the classes in the System.IO namespace provide for both synchronous and asynchronous operations (for example, reading and writing) on two distinct categories of data: streams and files. A file is an ordered and named collection of bytes and is persistently stored to a disk. A stream represents a block of bytes that is read from and written to a data store, which can be based on a variety of storage media. So a stream is a kind of superset of a file, or, if you prefer, a stream is just a file that can be saved to a variety of storage media, including memory. To work with streams, the .NET Framework defines reader and writer classes.

Readers and writers are generic software components that provide a common programming interface for both synchronous and asynchronous operations on streams and files. Readers and writers work in isolated compartments in the sense that readers work in a read-only fashion while writers operate in writing only. A reader works in much the same way as a client-side database cursor. The underlying stream is seen as a logical sequence of units of information whose size and layout depend on the particular reader. The reader moves through the data in a read-only, forward-only way. Just as a database cursor reads one record at a time and stores the content in some internal data structure, a reader working on a disk file stream would consider as its own atomic unit of information the single byte, whereas a text reader would perhaps specialize in extracting one row of text at a time.

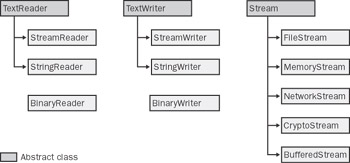

To work with streams, the .NET Framework defines several flavors of reader and writer classes. Figure 16-2 shows how each class relates to the others.

Figure 16-2: Various reader, writer, and stream classes.

The base classes are TextReader, TextWriter, BinaryReader, BinaryWriter, and StreamTextReader. , TextWriter, and Stream are marked abstract and cannot be directly instantiated in code. You can use abstract classes, though, to reference living instances of derived classes.

Working with Streams

The Stream class supports three basic operations: reading, writing, and seeking. Reading and writing operations entail transferring data from a stream into a data structure and vice versa. Seeking consists of querying and modifying the current position within the stream of data.

The .NET Framework provides a number of predefined stream classes, including FileStream, MemoryStream, and the fairly interesting CryptoStream, which automatically encrypts and decrypts data as you write or read. As you can see, the .NET Framework lacks a compressed stream class, but some third-party vendors already provide that. Overall, the methods and properties of the Stream base class are designed for a byte I/O. The Read and Write methods that you find in any derived class have the following signatures:

public abstract int Read( in byte[] buffer, int offset, int count ); public abstract void Write( byte[] buffer, int offset, int count );

A stream class works on top of a back-end storage medium, such as a disk or memory. Each different storage medium implements its own stream as an implementation of the Stream class. Each stream type reads and writes bytes from and to its given backing store. The constructors of a Stream class have the parameters necessary to connect the stream to the backing store. For example, FileStream has constructors that specify a path, whereas the CryptoStream class requires information about the cryptographic transformation to apply.

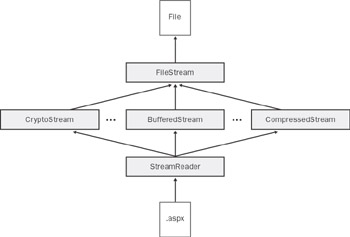

An interesting aspect of .NET streams is that they can be composed and piled up. Base streams can be attached to one or more pass-through streams that provide the desired functionality. Base streams are those which provide connectivity with the physical data source; pass-through streams are those which connect to an existing stream, modify data, and expose the new contents as a stream. A typical example of a pass-through stream is CryptoStream. You can't create a CryptoStream directly from a file; however, you can encrypt the contents of any object exposed as a stream. While composing streams, you end up building a chain of objects at the end of which there's the calling application. For the application's ease, a reader or writer can be attached to the end of the chain so that the desired types can be read or written more easily. The overall architecture is depicted in Figure 16-3.

Figure 16-3: Composing streams to connect an ASP.NET page with a target file.

Working with Readers and Writers

The TextReader and TextWriter classes are designed to be the base classes for specialized readers and writers. In particular, they are designed for character I/O and, especially, to handle Unicode characters. For example, using the TextReader class, you can read an entire line of text in a single shot. The method that does this is ReadLine. A line of characters is intended as the sequence that flows from the current position to the end of the line. The end of the line is marked by a carriage return (0x000d), a line feed (0x000a), or the physical end of stream.

Along with ReadLine, the TextReader class provides a couple of more specialized methods for reading. They are ReadBlock and ReadToEnd. ReadBlock reads a given number of characters from the current stream and writes them to the specified buffer beginning at the given index. ReadToEnd, on the other hand, just reads from the current position to the end of the stream. To write text, you use either the Write method of the TextWriter class or the more specialized, and extremely handy, WriteLine method.

Specialized Readers and Writers

The TextReader and TextWriter classes act as the foundation for more specialized readers and writers. The StreamReader and StreamWriter classes are just examples of classes that provide a unified programming interface to read and write the contents of a stream. The .NET Framework also provides the StringReader and StringWriter classes to let you read and write characters on strings.

The .NET Framework uses the concept of reader and writer classes extensively. So you find ad hoc writer classes such as HttpWriter and HtmlWriter, which write bytes from ASP.NET pages to the browser and prepare HTML pages, respectively. Other ad hoc reader and writer classes exist throughout the framework, although they have a different programming interface and do not directly inherit from TextReader or TextWriter. In this category you find a pair of classes for binary data management: BinaryReader and BinaryWriter. These classes read and write primitive data types as binary values in the specified encoding. Other reader and writer classes can be found in particular areas of the framework, including XML (XmlReader and XmlWriter) and ADO.NET (DataReader).

We've already met ADO.NET data readers in Chapter 5, "The ADO.NET Object Model." We'll discuss XML readers and writers with practical examples later in this chapter.

Reading Local Files

In the .NET Framework, all files can be read and written only through streams. You transform the contents of a file into a stream by using the FileStream class. The following code shows how to open a file for reading:

FileStream fs = new FileStream(filename, FileMode.Open, FileAccess.Read);

Streams supply a rather low-level programming interface which, although functionally effective, is not always an appropriate interface for classes that need to perform more high-level operations such as reading the entire content or an entire line. For this reason, the .NET Framework provides you with reader classes. In particular, two types of file readers are available—text readers and binary readers.

To manipulate the contents of a file as a binary stream, you just pass the file stream object down to a specialized reader object that knows how to handle it.

BinaryReader bin = new BinaryReader(fs);

If you want to process the file's contents in a text-based way, you can use the StreamReader class. Note that unlike BinaryReader, the StreamReader class can be directly instantiated on a file, thus saving one step of programming. So if your goal is manipulating text files, you might find the following code more compact:

StreamReader reader = new StreamReader(fileName);

Finally, you should note that all the File and FileInfo methods that allow for data manipulation return stream objects. This code snippet shows how to get a stream reader directly from a FileInfo object using the OpenText method.

FileInfo fi = new FileInfo(fileName); StreamReader reader = fi.OpenText();

The StreamReader Class

The StreamReader class inherits from TextReader and is designed for character input in a particular encoding scheme. You use it to read lines of information from a text file. StreamReader defaults to UTF-8 encoding unless otherwise specified. UTF-8 has the advantage of managing Unicode characters, which allows it to provide consistent results on localized versions of the operating system. The source that StreamReader works on doesn't have to be a disk file; it can be any store that supports access via streams.

Streams can be null objects. A quick test to see whether you're using a reader built around a null stream involves the static Null field.

StreamReader reader = new StreamReader(fileName);

if (!reader.Equals(StreamReader.Null)) {

reader.BaseStream.Seek(0, SeekOrigin.Begin);

string text = reader.ReadToEnd();

}

reader.Close();

}

reader.Close();

The StreamReader class defines several constructors and can be created on top of a disk file or an existing stream. Other parameters you can specify are the character encoding, byte-order mark, and buffer size.

| Note |

When instantiating the StreamReader class, you can indicate whether to look for byte-order marks at the beginning of the file. The class automatically recognizes UTF-8, little-endian Unicode, and big-endian Unicode text if the file starts with the appropriate byte-order marks. Otherwise, the user-provided encoding is used. |

The StreamReader class exposes only two properties—BaseStream and CurrentEncoding. The BaseStream property returns the underlying stream the class is working on; the CurrentEncoding property gets the current character encoding being used.

Accessing the Underlying Stream

The programming interface of the StreamReader class doesn't include any method to seek data or perform position-based reading. Similar functions are, instead, available on the underlying stream object. You use the method on the BaseStream object to move the internal pointer to a particular position.

// Skip the first 14 bytes StreamReader reader = new StreamReader(fileName); reader.BaseStream.Seek(14, SeekOrigin.Begin);

Note that reading methods on the StreamReader class doesn't update the pointer on the stream class. For this reason, the position of the underlying stream normally doesn't match the StreamReader position. The StreamReader maintains the current position internally, but this information is not made public and can be reset using the DiscardBufferedData method. To keep the pointers in sync, you must manually move the base stream pointer ahead after each reading. The following code snippet shows how to proceed:

StreamReader reader = new StreamReader(fileName);

// Set the file pointer to the beginning

reader.BaseStream.Seek(0, SeekOrigin.Begin);

reader.BaseStream.Position = 0;

// Read one character at a time

char[] buffer = new char[1];

while(reader.BaseStream.Position < reader.BaseStream.Length) {

reader.Read(buffer, 0, 1);

reader.BaseStream.Position ++;

DoSomething(buffer[0].ToString());

}

// Reset and close

reader.DiscardBufferedData();

reader.Close();

If you continue reading from the reader after a call to DiscardBufferedData is made, you read back from the origin of the stream.

Character Encoding

The CurrentEncoding property returns the current character encoding the reader is using. The encoding scheme is expressed through an object that inherits from the Encoding class. Characters are abstract entities that can be represented using many different character schemes or code pages. An encoding scheme is a way of mapping source characters to an effective representation that the application understands.

In the .NET Framework, specifically in the System.Text namespace, the encoding classes that are defined are shown in Table 16-10.

|

Class |

Description |

|---|---|

|

ASCIIEncoding |

Encodes Unicode characters as single 7-bit ASCII characters. Supports character values between 0 and 127. |

|

UnicodeEncoding |

Encodes each Unicode character as two consecutive bytes (16-bit). Both little-endian (code page 1200) and big-endian (code page 1201) byte orders are supported. Also known as UTF-16. |

|

UTF7Encoding |

Encodes Unicode characters using the UCS Transformation Format, 7-bit form (UTF-7) encoding. Supports all Unicode character values, and can also be accessed as code page 65000. |

|

UTF8Encoding |

Encodes Unicode characters using the UTF-8 encoding. Supports all Unicode character values, and can also be accessed as code page 65001. |

If the StreamReader object is created using one of the constructors with the byte-order detection option turned on, the actual encoding scheme is determined only the first time you read from the stream. In this case, the encoding class you pass to the constructor is considered as a default scheme and can be different from the actual encoding detected looking at the first three bytes in the stream.

Methods of the StreamReader Class

The methods of the StreamReader class are detailed in Table 16-11.

|

Method |

Description |

|---|---|

|

Close |

Closes the StreamReader object, and releases any system resources associated with the reader. |

|

DiscardBufferedData |

Resets all internal variables that track the current position and buffered data. |

|

Peek |

Reads the next available character, but does not consume it. The next reading operation returns only this character. The method acts as a preview and always forward-checks one character. |

|

Read |

Reads the next character or next set of characters from the input stream. |

|

ReadBlock |

Reads up to the specified maximum of characters from the current stream and writes the data to buffer, beginning at a given index. |

|

ReadLine |

Reads a line of characters from the current stream, and returns the data as a string. |

|

ReadToEnd |

Reads characters from the current position to the end of the stream. Returns a string. |

The preview capabilities of the Peek method can be used to control the loop that is typically used to scan a stream.

// Loop until the end of the stream is reached

while (reader.Peek() > -1) {

}

}

The Peek method returns -1 if there's nothing else to read or if the stream does not support seeking.

Reading a Text File

If an ASP.NET application deals with a text file, chances are good that the file contains configuration data. As we saw in Chapter 12, "Configuration and Deployment," ASP.NET touts the use of .config files with an XML syntax. However, many developers feel quite comfortable with probably less expressive but terrifically handy INI files. The typical syntax of an INI file is as follows:

Server=http://localhost Machine=expo-one Options=Debug, Trace

Introduced with Windows 3.x, the INI syntax has never changed over time and has resisted change in spite of the invention of the registry and then XML configuration stores. In the simplest case, an INI file consists of any number of lines of text expressed in the form of key=value.

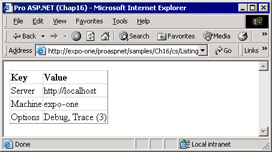

As we mentioned in Chapter 12, a similar collection of key/value pairs can be easily managed using the AppSettingsReader class and a .config file. However, with the tools available in the .NET Framework, even the old-fashioned, but still effective, pure INI approach becomes straightforward to implement. Let's see how to read from an INI-like file and build a key/value collection to display in a DataGrid control.

void Page_Load(object sender, EventArgs e)

{

// File to process

string path = Server.MapPath(@"info.txt");

// Read and caches the contents

StreamReader reader = new StreamReader(path);

string text = reader.ReadToEnd();

reader.Close();

// Split data and builds a name/value collection

string[] tokens = text.Split('

');

NameValueCollection coll = new NameValueCollection();

foreach(string s in tokens) {

string[] parts = s.Split('=');

coll.Add(parts[0], parts[1]);

}

// Print data

grid.DataSource = coll;

grid.DataBind();

}

The contents of the file are read in a single shot thanks to the ReadToEnd method and cached in a local string variable. By using the Split method of the String class, we can tokenize the original string in individual substrings by using the specified character as the separator. We use the newline ( ) character and obtain an array of strings, each of which represents a line of text. (Note that you can obtain the same result by reading one line at a time, but this would probably lock the file for a longer time.)

The next step consists in calling Split on each line of text using the equal (=) symbol. The two parts of the string—left and right of the = symbol—are copied into a newly created NameValueCollection object as the key and the value, respectively. Finally, the collection is bound to a DataGrid control for display.

Binding the Contents of a File to a DataGrid

If automatically bound, a DataGrid control displays only the Name column of a name/value collection. To display it correctly, use a couple of templated columns.

<%# Container.DataItem %> <%# ExtractData(Container.DataItem) %>

The Container.DataItem expression evaluates to a string—specifically, the string that represents the key. To extract the physical data, you must access the key within the collection, as shown here:

string ExtractData(object dataItem) {

// Get the collection object

NameValueCollection coll = (NameValueCollection) grid.DataSource;

// Get the text to display

string displayText = coll[dataItem.ToString()];

// Map the contents to a custom enum type

if ((string) dataItem == "Options") {

MyOptions opt;

opt = (MyOptions) Enum.Parse(typeof(MyOptions), displayText);

displayText = String.Format("{0} ({1})", displayText, (int) opt);

}

return displayText;

}

The collection is just what the DataSource property of the DataGrid returns. Once you hold the collection, getting the value is a child's game. The preceding code also demonstrates a very nice feature about enumeration and the persistence of the enumeration values.

Suppose you need to configure some options for your application. In practice, you never use strings to indicate features; instead, you use numbers. Subsequently, when you persist those settings, only numbers are saved to a file. This wouldn't be a big deal except for the fact that it makes it harder for administrators and developers to edit settings offline. Look at the Options element in the sample file we just discussed—wouldn't it be more confusing if we used, say, 3 instead of descriptive and human-readable text like "Debug, Trace"? How can you translate that easily editable string into a number? Let's start by defining a custom enumeration type named MyOptions.

[Flags]

enum MyOptions {

Debug = 1,

Trace = 2

}

Once the enumeration is defined, you can have the static Enum.Parse method parse any string trying to match it to a combination of values in the enumeration. A comma-separated list of strings that represent elements of the enumeration is automatically recognized as the AND of the corresponding values. Enum.Parse returns an object that evaluates to an element (or a combination of elements) in the enumeration. Figure 16-4 shows the output of the sample page we have built so far.

Figure 16-4: The contents of a text file that has been read, copied into a collection, and displayed using a DataGrid control.

The BinaryReader Class

A binary reader works by reading primitive data types from a stream with a specific encoding. The default encoding is UTF-8. The set of methods includes Read, PeekChar, and a long list of ReadXxx methods specialized to extract data of the given type. The generic Read method works by extracting only one character from the stream and moving the internal pointer ahead according to the current encoding.

Reading Binary Information

Binary readers are ideal for extracting information out of a binary file. The following code snippet shows how to read width, height, and color depth for a .bmp file.

void DisplayBmpInfo(string bmpFile) {

FileStream fs = new FileStream(bmpFile, FileMode.Open,

FileAccess.Read);

BinaryReader reader = new BinaryReader(fs);

// Skip the file header (14 bytes)

reader.ReadBytes(14);

// Skip the structure's size

reader.ReadUInt32();

// Read width and height of the BMP file

int width = reader.ReadInt32();

int height = reader.ReadInt32();

// Skip info about BMP planes of colors

Int16 planes = reader.ReadInt16();

// Read color depth (bit per pixel)

Int16 bitsPerPixel = reader.ReadInt16();

// Clean-up

reader.Close();

fs.Close();

// Print information

Response.Write(width + " x " + height + " x " + bitsPerPixel * planes);

}

If you want to skip over the specified number of bytes, you just indicate the number of bytes explicitly.

reader.ReadBytes(14);

However, this is not always a smart approach. If you know what data structure the bytes represent, you can just skip the size of the type. For example, the file header of a .bmp file is given by a structure named BITMAPFILEHEADER, which counts a total of 14 bytes: three UInt16 and two UInt32. How can you indicate the number of bytes in a parametric way?

Skipping Bytes While Reading

You cannot rely on handy functions such as sizeof (in C#) or Len (in Visual Basic) to indicate the number of bytes to skip in a parametric way. The sizeof function, in particular, works only on unmanaged resources. A more viable alternative would be to use the Marshal.SizeOf function, which works on a type or an object. In any case, you must import the type declaration straight from the Win32 API.

// Must import System.Runtime.InteropServices // Skip the BITMAPFILEHEADER BMP's file header reader.ReadBytes(Marshal.SizeOf(typeof(BITMAPFILEHEADER)));

When imported in the managed world, the BITMAPFILEHEADER structure looks like the following class:

[StructLayout(LayoutKind.Sequential, Pack=1)]

public class BITMAPFILEHEADER {

public UInt16 bfType;

public UInt32 bfSize;

public UInt16 bfReserved1;

public UInt16 bfReserved2;

public UInt32 bfOffBits;

}

Pay attention to the role of the Pack attribute, which is set to 8 by default. The attribute determines the size of the minimum block of memory that is allocated for the structure. In other words, the size of the class can only be a multiple of the Pack value. It goes without saying that setting Pack to 1 is the only way to get an exact size with a byte precision.

More important than how you get the size to skip, though, is the fact that as long as you use a ReadXxx method, the bytes are read and not skipped. To really jump over the specified number of bytes, you must resort to the methods of the underlying stream object. You get the current stream by using the BaseStream property. Here is how you skip the header of a .bmp file:

int bytesToSkip = Marshal.SizeOf(typeof(BITMAPFILEHEADER)); reader.BaseStream.Seek(bytesToSkip, SeekOrigin.Begin);

You can also choose to move a given offset from the bottom of the stream or from the current position. The following code shows how to actually skip a short integer:

reader.BaseStream.Seek(2, SeekOrigin.Current);

Finally, if you need to read a complex structure from a binary stream, you must do that sequentially on a per-field basis.

Writing Local Files

All reader classes have a writing counterpart that works according to the same model. To write a text file, you use the StreamWriter class. To create a binary file, you use the BinaryWriter class. Writing local files is a bit more problematic than just reading in ASP.NET because of the default security model. Personally, I consider the need to create a local file as an alarm bell, which prompts me to think about possible alternatives. Let's quickly review a couple of common scenarios.

If you need to persist user settings, you're better off using a database, which provides more speed and flexibility in terms of search and manipulation. As for global settings, they are a type of information individual users should never access and modify. ASP.NET applications can be programmatically driven by a configuration file, but you will likely edit that file offline and not through an ASP.NET front-end.

The StreamWriter Class

The StreamWriter class implements a text writer and outputs characters to a stream in a particular encoding. The default encoding is UTF-8. The constructor of the class lets you specify the output stream as a Stream object or a file name. If you indicate a file name, you're also given a chance to specify whether you want to write it from scratch or just append text.

The Programming Interface of the StreamWriter Class

Table 16-12 details the properties available on the StreamWriter class.

|

Property |

Description |

|---|---|

|

AutoFlush |

Gets or sets whether the writer will flush its buffer to the underlying stream after every call to a writing method. |

|

BaseStream |

Gets the underlying stream that interfaces with a backing store. |

|

Encoding |

Gets the encoding scheme in which the output is written. |

|

FormatProvider |

Gets an object that controls formatting for the text according to a specific culture. |

|

NewLine |

Gets or sets the line terminator string used by the writer. The default string is a carriage return followed by a line feed ( ). |

When AutoFlush is set to true, only the data is flushed from the buffer to the stream. The encoder state is retained so that it can encode the next block of characters correctly. Especially when working with UTF-8 and UTF-7 encodings, the writer might happen to manage characters that can only be encoded when having adjacent characters available. The AutoFlush state is checked at the bottom of all WriteXXX methods defined on the class. Setting AutoFlush to true should be used when the user expects immediate feedback on the output device, such as the console. In ASP.NET, you might get better performance by setting AutoFlush to false and calling Close (or at least Flush) when you're done writing.

The line terminator string is appended to the output stream whenever the WriteLine method is called. Although in theory you can use any string to terminate the line, be aware that the resulting text would be correctly readable through a StreamReader only if either

or

is used. If you set NewLine to null, the default new line sequence is used. If you're using StreamWriter to dynamically create, say, HTML pages, you can use the HTML break tag

instead.

Table 16-13 details the methods exposed by the StreamWriter class.

|

Method |

Description |

|---|---|

|

Close |

Closes the current writer and the underlying stream |

|

Flush |

Clears all buffers for the current writer, and causes any buffered data to be written to the underlying stream |

|

Write |

Writes data to the stream |

|

WriteLine |

Differs from Write because it always adds a line terminator |

Both Write and WriteLine have several overloads, each of which enables you to write data of a particular value type—Boolean, character, string, decimal, and so forth. In all cases, what the methods really write is a textual representation of the value. For example, if you write a Boolean, the whole False or True strings are written, not a single byte!

Persisting a Collection of Data

Let's see how to write a sample text file. If you're going to write a text file from within an ASP.NET application, chances are that you are just persisting a collection of data. So to exemplify, let's dump the contents of the Request.ServerVariables collection.

Note that for this code to work, the account running the ASP.NET service must have writing permissions on the folder. If not, writing permissions must be granted. You can do that either manually, through the Explorer's Properties dialog box, or programmatically using the cacls.exe utility. (See Chapter 15, "ASP.NET Security.")

Writing Binary Files

There are a couple of interesting scenarios in ASP.NET in which you might need to write binary files. The first scenario has to do with the creation of dynamic images—that is, images that are created while users work with the application. Dynamic images are typically obtained from the contents of binary large object (BLOB) fields in a database or from the output of charting applications. In many cases, it's dynamically generated output that doesn't necessarily need to be cached. For this reason, you implement the generation of the image as a new page with a graphical content type. In this case, page output caching is the only possibility you have to reuse an image over and over again. In some cases, if the number of images is not that big, and the likelihood of reusing them is quite significant, you can persist the dynamically generated stream of bytes to a disk file.

Creating Images Dynamically

Suppose that you manage a Web site for registered users. Whenever a user is authenticated and connected to the system, you might want to display a welcome message. You can compose the message by using static graphics and rich-formatted HTML text, or you could generate the message dynamically, obtaining effects not otherwise possible with the normal tools. For example, if you use GDI+ you can easily draw any text using a gradient brush. (You'll probably never use this technique in a real-world application to generate messages, but persisting dynamically generated images can be a useful technique to know.)

In the current directory, the following method creates a GIF file that is the graphical representation of the specified text. The text is written using a cyan-to-yellow gradient of colors on a black background.

void CreateWelcomeBitmap(string userID, string file) {

// Prepare the drawing context

Rectangle area = new Rectangle(0,0,200,30);

Font f = new Font("Verdana", 12);

Bitmap bmp = new Bitmap(200, 30);

Graphics g = Graphics.FromImage(bmp);

// Prepare the text

StringFormat sf = new StringFormat();

sf.Alignment = StringAlignment.Center;

sf.LineAlignment = StringAlignment.Center;

// Draw the text

LinearGradientBrush brForeColor = new LinearGradientBrush(area,

Color.SkyBlue, Color.Yellow, LinearGradientMode.Horizontal);

g.DrawString(userID, f, brForeColor, area, sf);

// Create the file

FileStream fs = new FileStream(file, FileMode.Create);

bmp.Save(fs, ImageFormat.Gif);

fs.Close();

// Clean-up

brForeColor.Dispose();

f.Dispose();

bmp.Dispose();

g.Dispose();

}

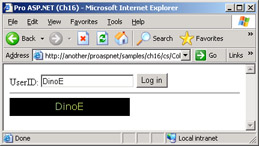

The picture is created executing a few GDI+ operations on a logical canvas represented by a Bitmap object. The content of the object is then saved to the specified stream compressed as a GIF stream. The FileStream class is used to create and fill the new file. A reference to the stream is passed to the Save method of the Bitmap class for the actual writing. For the sake of completeness, you should note that the Bitmap Save method can also accept a direct file name, which would save you from creating a file stream. Figure 16-5 shows an example of the output.

Figure 16-5: A dynamically generated image.

The user enters the user ID and clicks to log in. The system looks at the user ID and determines whether an appropriate bitmap is already present. If not, a new GIF file is generated and displayed through a server-side tag.

void OnWelcome(object sender, EventArgs e)

{

string userID = theUserID.Text;

string file = Server.MapPath(userID + ".gif");

if (!File.Exists(file))

CreateWelcomeBitmap(userID, file);

picture.src=file;

}

The layout of the sample page is as follows:

UserID:

| Caution |

Before attempting to create a new file, the Save method of the Bitmap class demands the write permission on the resource. It does that by calling the Demand method on a file-specific instance of the FileIOPermission class. The method forces a security exception at run time if all callers higher in the call stack (including your code) have not been granted the permission requested. Normally, though, no callers would prevent the GDI+ method from writing, so the Save method successfully passes the common language runtime (CLR) permission check and yields to a COM library, which is actually in charge for creating the file. At this point, the COM library operates on behalf of the ASP.NET account, and if no write permissions have been granted to that account, a system exception is raised. The module that catches the exception is the COM Interop infrastructure, which bubbles it up as an ExternalException exception because of some generic failure in the underlying COM code. The GDI+ layer can't translate that exception into a more meaningful message, and all that you get is a "Generic GDI+ Error". There might be several reasons for that message, one of which is the lack of write permissions. |

In Chapter 22, "Working with Images in ASP.NET," we'll return to image processing in ASP.NET and cover the topic of dynamic images more in detail.

The BinaryWriter Class

The BinaryWriter class is another alternative to writing binary data to a stream or a file. The class writes primitive types in a binary format according to the specified encoding. BinaryWriter doesn't have a complex and rich programming interface. It features only one property—BaseStream—and a few methods, including Close, Flush, and Write. The BinaryWriter class also exposes the Seek method, which allows you to set the current position within the stream. As usual, you set the position by indicating a byte position and an origin—beginning, current position, or end of the stream.

Serializing Data Structures

Persisting binary data is a problem that can be approached in at least two different ways. You can write all the necessary pieces of data to disk one after the next, or you can group them all into a more manageable, all-encompassing data structure and save it to disk in a single step. The difference between the two approaches is not particularly relevant in terms of the file creation. The final output, in fact, is nearly identical. More significant is the difference when reading data back. When reading binary data in pieces, you are responsible for transforming all the pieces of data into a common memory structure that represents the data. When reading binary data in one chunk, the transformation step is unnecessary, as you can read data directly into a new instance of the class.

Working with objects and binary data exploits a key feature of the .NET Framework—run-time object serialization and deserialization. Object serialization and deserialization is offered through the classes exposed by the System.Runtime.Serialization namespace. It allows you to store public, protected, and private fields, and it automatically handles circular references. A circular reference occurs when a child object references a parent object, and the parent object also references the child object.

Serialization can generate output in multiple formats by using different formatter modules. A formatter is a sort of pass-through stream that accepts raw data and formats it for storage in a particular scheme. The .NET Framework comes with two predefined formatters—the BinaryFormatter and SoapFormatter classes—which write the object's state in binary format and SOAP format, respectively.

The run-time serialization engine works only with classes explicitly declared as serializable. Classes make themselves serializable in two ways. They can either support the [Serializable] attribute or implement the ISerializable interface. If the class supports the [Serializable] attribute, the class doesn't need to do anything else, and the serialization takes place automatically through reflection. The ISerializable interface, on the other hand, lets the class author exercise closer control on how the bits of the living object are actually persisted. Object serialization is a large topic that deserves much more space than is available in this context. In the rest of the section, we'll examine serialization only from the perspective of an ASP.NET application that wants to persist information in a format that is both compact and easy to manage.

The BinaryFormatter Class

To serialize an object to a file, you first select a serialization formatter. This is normally the binary or the SOAP formatter. Each formatter has its own class.

IFormatter binFormatter = new BinaryFormatter(); IFormatter soapFormatter = new SoapFormatter();

Once you hold a living instance of the serializer, you simply call the Serialize method, passing the stream to write to and the object to save.

MyClass obj = new MyClass();binFormatter.Serialize(stream, obj);

Rebuilding objects from a storage medium is easy too. You simply call the Deserialize method on the specified formatter.

MyClass obj = (MyClass) binFormatter.Deserialize(stream);

It goes without saying that you cannot serialize to, say, SOAP and then deserialize through the binary formatter.

An object can only be serialized to, and deserialized from, a stream. However, a stream can be, in turn, attached to a disk file, a more specialized stream, a memory buffer, and even to a string.

Serializing Data Through Classes

Let's go through a practical example to see how the contents of a data structure can be serialized to a binary file. We'll consider the following class—MenuItem—which represents a menu item to display on the page.

[Serializable]

public class MenuItem {

private int _key;

private string _displayText;

public MenuItem(string text, int value) {

_key = value;

_displayText = text;

}

public string DisplayText {

get {return _displayText;}

}

public int Key {

get {return _key;}

}

}

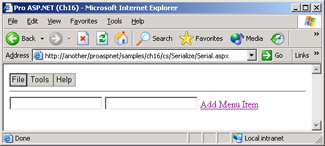

The page reads out of a disk file the structure of its menu. The disk file simply consists of an array of MenuItem objects.

void Page_Load(object sender, EventArgs e) {

string fileName = Server.MapPath(@"menu.dat");

if (!File.Exists(fileName))

BindMenu();

else {

ArrayList values = LoadFromFile();

BindMenu(values);

}

}

private void BindMenu() {

ArrayList values = new ArrayList();

values.Add(new MenuItem("File", 1));

values.Add(new MenuItem("Tools", 2));

values.Add(new MenuItem("Help", 3));

Repeater1.DataSource = values;

Repeater1.DataBind();

SaveToFile(values);

}

private ArrayList LoadFromFile() {

string fileName = Server.MapPath(@"menu.dat");

FileStream stream = new FileStream(fileName, FileMode.Open,

FileAccess.Read);

BinaryFormatter reader = new BinaryFormatter();

ArrayList values = (ArrayList) reader.Deserialize(stream);

stream.Close();

return values;

}

The deserialization process is straightforward once you know the structure of the disk file. The first time the page is accessed, it creates and persists the default menu. The menu is serialized as an array of MenuItem objects. You can also edit the structure of the menu, and any changes will be promptly detected.

private void SaveToFile(ArrayList values) {

string fileName = Server.MapPath(@"menu.dat");

FileStream stream = new FileStream(fileName, FileMode.Create);

BinaryFormatter writer = new BinaryFormatter();

writer.Serialize(stream, values);

stream.Close();

}

Figure 16-6 shows the page in action.

Figure 16-6: The structure of the menu is serialized to disk using a binary formatter.

Each class involved in the serialization process must be serializable; otherwise, a run-time exception is thrown. In this case, the rule applies to both ArrayList and MenuItem. ArrayList is serializable by design.

The resulting file contains more than just the class information. The .NET Framework, in fact, also inserts a signature and information about the assembly from which the class was read. Using the binary formatter is helpful because it lets you save and restore data using the same memory pattern. On the other hand, the layout of the data follows a well-known pattern decided by the .NET Framework and requires more space than if you write the data yourself.

Watching for File Changes

It's not uncommon that ASP.NET applications based on local files have to update their user interface and behavior if one of those files changes. Since Windows 95, the operating system has offered a feature that can be extremely useful in many situations in which you need to detect changes in a certain portion of the file system. This feature is called file notification and basically consists of the system's ability to notify through events several types of changes that affect files and folders under a given tree. ASP.NET exploits this feature extensively to detect changes in the source .aspx files.

The file notification mechanism works in a slightly different manner under the operating systems of the Windows 9x (including Windows ME) and Windows NT, Windows 2000, and Windows XP families. Under Windows 9x, the notification mechanism does not provide information about the actual files or directories involved in the change. What the programmer gets is the notification that a certain change occurred. To detect what really changed, you must scan the contents of the watched tree of directories and figure it out yourself. Windows NT 4.0 added an extra bunch of API functions to read the details of any directory changes. The whole notification mechanism was extended in particular by the ReadDirectoryChangesW function.

There's good news and bad news about the integration of the file notification mechanism in .NET. The good news is that all the intricacies of the underlying ReadDirectoryChangesW function have been straightened out and wrapped into an easy-to-use and elegant class named FileSystemWatcher. The bad news is that the file notification support built into .NET is completely based on the features of the ReadDirectoryChangesW function. This means that you can use the FileSystemWatcher class only if your code runs on a Windows NT 4.0, Windows 2000, or Windows XP machine. This is not an issue for ASP.NET code, though.

The FileSystemWatcher Class

The FileSystemWatcher class watches a specified directory for changes that involve contained files and subdirectories. The class can monitor what happens to the file system of a local computer, network drive, or remote computer. The FileSystemWatcher class does not raise events for CD and DVD drives because nothing can happen on these drives to change contents or timestamps.

You can initialize the FileSystemWatcher class using three possible constructors. You can use the default, parameterless constructor, but you can also create a new instance of the FileSystemWatcher class indicating the directory to watch and even the search string for the files to watch.

FileSystemWatcher fsw = new FileSystemWatcher(path, "*.txt");

Table 16-14 lists the properties of the class.

|

Property Name |

Description |

|---|---|

|

EnableRaisingEvents |

Gets or sets a value that indicates whether the component is watching |

|

Filter |

Gets or sets the filter string used to determine what files are monitored in a directory |

|

IncludeSubdirectories |

Gets or sets a value that indicates whether subdirectories should be monitored too |

|

InternalBufferSize |

Gets or sets the size of the internal buffer used to retrieve file system information |

|

NotifyFilter |

Gets or sets the type of changes to watch for |

|

Path |

Gets or sets the path of the directory to watch |

You set up a file system watcher in three steps. First, you define the root directory to monitor and the criteria for the files to watch for. Next, you indicate the events you want to be notified of and write event handlers accordingly. Finally, you start watching.