Hack 45. Process Web Server Logs

You can pull your web log into the database. Once it's in the database, you can investigate who is looking at what and when they did so.

If you use Apache on a Linux system, your web log is likely to be in a location such as /var/log/httpd/access_log. The format of the logfile is determined by entries in the Apache configuration file.

On a Windows system, you will find Apache logs in C:Program FilesApache GroupApachelogsaccess.log. The IIS logs are likely to be in C:WINDOWSSystem32LogFilesW3SVC1ex yymmdd .log, where yymmdd represents the date.

The two lines that control the format for the logs on a typical Linux/Apache server are in /etc/httpd/conf/httpd.conf (/etc/httpd/httpd.conf on Mac OS X):

LogFormat "%h %l %u %t "%r" %>s %b "%{Referer}i" "%{User-Agent}i""

combined

CustomLog logs/access_log combined

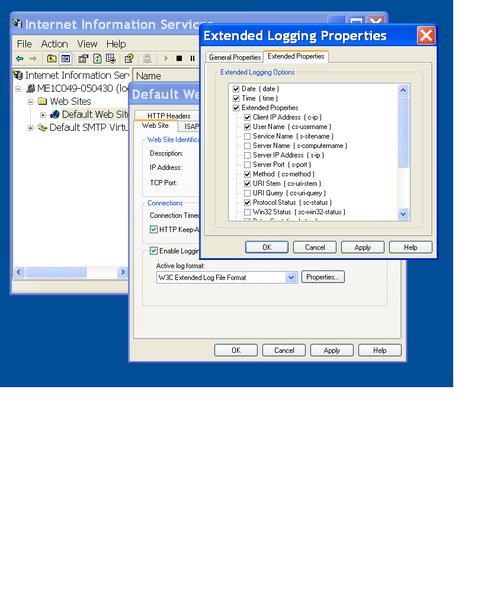

For IIS, you can control the format of the web log using the Microsoft Management Console IIS snap-in. On the properties page of the Web Server choose the Web Site tab; select Enable Logging, set the Active log format to W3C Extended Log File Format, and then click on Properties to choose the fields, as shown in Figure 6-8.

Figure 6-8. Determining the format of the logfile

Here is a sample line from the logfile:

192.116.140.62 - - [09/Jul/2006:13:00:37 +0100] "GET /xml.css HTTP/1.1" 200 1619 "http://xmlzoo.net/sax/?xml=01.xml" "Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.1; SV1; InfoPath.1)"

The logfile includes the fields shown in Table 6-2.

| Field | Sample | Meaning |

|---|---|---|

| IP address | 192.116.140.62 | The address of the visitor. |

| Identity | - | Ignored. |

| User | - | User ID: when HTTP authentication is enabled. |

| Date and Time | 09/Jul/2006:13:00:37 +0100 | Date and time, with time zone of the server. |

| HTTP Request | GET /xml.css HTTP/1.1 | The method, page, and protocol. |

| Response Code | 200 | 200 means request was successful. |

| Bytes Served | 1619 | Volume of data sent. |

| Referrer | http://xmlzoo.net/sax/?xml=01.xml | Visitor's previous web page. |

| User Agent | Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.1; SV1; InfoPath.1) | Browser being used. |

You can create a table suitable for holding the log entries. It helps to have some indexes as well:

CREATE TABLE access_log (ip VARCHAR(15) ,skp VARCHAR(15) ,usr VARCHAR(15) ,whn TIMESTAMP ,mthd VARCHAR(5) ,page VARCHAR(255) ,ver VARCHAR(10) ,resp INTEGER ,bytes INTEGER ,refr VARCHAR(255) ,agnt VARCHAR(255) ); CREATE INDEX pageidx ON access_log(page); CREATE INDEX ipidx ON access_log(ip); CREATE INDEX whnidx ON access_log(whn); CREATE INDEX refridx ON access_log(refr);

You can convert each line from the logfile into an SQL INSERT statement with a Perl program:

use strict;

my %month=(Jan=>'01',Feb=>'02',Mar=>'03',Apr=>'04',May=>'05',Jun=>'06',

Jul=>'07',Aug=>'08',Sep=>'09',Oct=>'10',Nov=>'11',Dec=>'12');

while (<>){

my $pattern =

'^(S*) (S*) (S*) '. #Fields ip, - , username

'[(..)/(...)/(....):(..):(..):(..) .....]'.#Date and time

' "(S*) (S*) (S*)" '. #Request: GET or POST

'(d*) (-|d*) "([^"]*)" "([^"]*)"'; #resp, bytes, refr, agnt

if (m/$pattern/){

my ($tgt,$ref,$agt) = (esc($11),esc($15),esc($16));

my $byt = $14 eq '-'?'NULL':$14;

print "INSERT INTO access_log VALUES ('$1','$2','$3',".

" TIMESTAMP '$6/$month{$5}/$4 $7:$8:$9','$10','$tgt',".

"'$12',$13,$byt,'$ref','$agt');

";

}else{

print "--Skipped line $.

";

}

}

sub esc{

my ($p) = @_;

$p =~ s/'/''/g;

return $p;

}

Once the table has been created, pipe in a logfile from the Linux prompt:

perl access_log2sql.pl /var/log/httpd/access_log | mysql u scott ptiger -f dbname

If you're using IIS in Windows, select the following fields for your log format:

#Fields: date time c-ip cs-username cs-method cs-uri-stem sc-status sc-bytes cs-version cs(User-Agent) cs(Referer)

Use this modified version of the Perl script (saved as log2sql):

use strict;

while (<>){

my $pattern =

'^(....)-(..)-(..) (..):(..):(..) '. #Date and time

'(S*) (S*) '. #ip, username

'(S*) (S*) (d*) '. #GET/POST page resp

'(-|d*) (S+) (S+) (S+)'; #bytes, ,version, agnt, refr

if (m/$pattern/){

my ($tgt,$ref,$agt) = (esc($10),esc($15),esc($14));

my $byt = $12 eq '-'?'NULL':$12;

print "INSERT INTO access_log VALUES ('$7','','$8',".

" TIMESTAMP '$1/$2/$3 $4:$5:$6','$9','$tgt',".

"'$13',$11,$byt,'$ref','$agt')

GO

";

}else{

print "--Skipped line $.

";

}

}

sub esc{

my ($p) = @_;

$p =~ s/'/''/g;

return $p;

}

At the Windows prompt, enter the following:

C:>perl log2sql.pl C:Windowssystem32LogfilesW3SVC1ex060911.log >l.sql C:>sqlcmd U scott d dbname i l.sql

6.5.1. Queries

First, check how many rows you have, and check the range of times:

mysql> SELECT COUNT(*), MIN(whn), MAX(whn) -> FROM access_log; +----------+---------------------+---------------------+ | COUNT(*) | MIN(whn) | MAX(whn) | +----------+---------------------+---------------------+ | 319300 | 2006-07-02 04:03:53 | 2006-07-09 04:03:21 | +----------+---------------------+---------------------+

You can filter out the robot spiders. Well-behaved robots always visit the page /robots.txt, so you can find out how many robot visits you've had. This query shows the most active robot visitors:

mysql> SELECT LEFT(agnt,50), COUNT(*) -> FROM access_log -> WHERE page='/robots.txt' -> GROUP BY agnt -> ORDER BY 2 DESC; +----------------------------------------------------+----------+ | LEFT(agnt,50) | COUNT(*) | +----------------------------------------------------+----------+ | Mozilla/5.0 (compatible; Yahoo! Slurp; http://help | 871 | | msnbot/1.0 (+http://search.msn.com/msnbot.htm) | 169 | | Krugle/Krugle,Nutch/0.8+ (Krugle web crawler; http | 63 | | Mozilla/5.0 (Windows; U; Windows NT 5.1; en-US; rv | 62 | | Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.1; | 50 | | NaverBot-1.0 (NHN Corp. / +82-31-784-1989 / nhnbot | 46 | | Mozilla/2.0 (compatible; Ask Jeeves/Teoma; +http:/ | 32 | | - | 25 | | Mozilla/5.0 (compatible; Charlotte/1.0b; charlotte | 25 | | Mozilla/5.0 (compatible; Googlebot/2.1; +http://ww | 21 | ...

Of course, each robot makes many requests in addition to the /robots.txt request, and you need to delete all of them if you are interested only in human visitors. To get rid of all rows associated with the robots you might try to DELETE with a subquery. Most engines will accept this, but MySQL will not:

mysql> DELETE FROM access_log -> WHERE ip IN (SELECT ip -> FROM access_log -> WHERE page='/robots.txt'); ERROR 1093 (HY000): You can't specify target table 'access_log' for update in FROM clause

For MySQL, you can first mark the robots by setting the agnt field to robot, and then in a separate statement you can delete them:

mysql> UPDATE access_log x JOIN access_log y ON x.ip=y.ip -> SET x.agnt = 'robot' -> WHERE y.page='/robots.txt'; Query OK, 44039 rows affected (8.99 sec) Rows matched: 670233 Changed: 44039 Warnings: 0 mysql> DELETE FROM access_log WHERE agnt='robot'; Query OK, 44039 rows affected (36.31 sec)

6.5.1.1. Check for broken links

You can look through the error numbers for "404" errors. These indicate pages which were requested but could not be found. If the cause is a link from an internal page, you can fix it. If it is an external link pointing to a page you have moved, you can redirect the traffic:

mysql> SELECT LEFT(page,20), refr, COUNT(*) -> FROM access_log -> WHERE resp BETWEEN 400 AND 499 -> GROUP BY page -> ORDER BY 3 DESC -> LIMIT 5; +----------------------+---------------------------------------+----------+ | LEFT(page,20) | refr | COUNT(*) | +----------------------+---------------------------------------+----------+ | /_vti_bin/owssvr.dll | - | 70 | | /MSOffice/cltreq.asp | - | 70 | | /MSOffice/cltreq.asp | - | 36 | | /_vti_bin/owssvr.dll | - | 36 | | /favicon.ico | http://coredump.biz/~mnowak/education | 27 | +----------------------+---------------------------------------+----------+ 5 rows in set (0.78 sec)

|

6.5.1.2. Investigate users' actions

If you remove the nonpage files from the log, it is easier to analyze people's actions on the web site. You can delete all of the image files, JavaScript, and stylesheet references:

mysql> DELETE FROM access_log -> WHERE page LIKE '%.css' -> OR page LIKE '%.png' -> OR page LIKE '%.js' -> OR page LIKE '%.ico'; Query OK, 119566 rows affected (31.65 sec)

You can now see your most popular pages:

mysql> SELECT page, COUNT(*) FROM access_log -> GROUP BY page -> ORDER BY 2 DESC -> LIMIT 6; +--------------------------------+----------+ | page | COUNT(*) | +--------------------------------+----------+ | / | 19248 | | /ernest/gisq.htm | 17904 | | /1.htm | 2362 | | /~scott/cgi-bin/wendy/gisq.htm | 1894 | | /priya/gisq.htm | 1282 | | /1a.htm | 1148 | +--------------------------------+----------+ 6 rows in set (0.57 sec)

You can see how users move from page to page within your site:

mysql> SELECT page,refr, COUNT(*) -> FROM access_log -> WHERE refr LIKE 'http://sqlzoo.net%' -> GROUP BY page,refr -> ORDER BY 3 DESC -> LIMIT 10; +----------------------------------+---------------------------+----------+ | page | refr | COUNT(*) | +----------------------------------+---------------------------+----------+ | /ernest/gisq.htm | http://sqlzoo.net/1.htm | 4509 | | /ernest/gisq.htm | http://sqlzoo.net/1a.htm | 2214 | | /1.htm | http://sqlzoo.net/ | 1633 | | /ernest/gisq.htm | http://sqlzoo.net/1b.htm | 1556 | | /ernest/gisq.htm | http://sqlzoo.net/2.htm | 1157 | | /ernest/gisq.htm | http://sqlzoo.net/3.htm | 1104 | | /howto/source/z.dir/i01select.xml| http://sqlzoo.net/ | 663 | | /3a.htm | http://sqlzoo.net/ | 557 | | /ernest/gisq.htm | http://sqlzoo.net/3a.htm | 512 | | /ernest/gisq.htm | http://sqlzoo.net/de/1.htm| 409 | +----------------------------------+---------------------------+----------+ 10 rows in set (6.84 sec)

Each row shows the number of people who followed a link from refr to page.

Suppose you want to know whether the links from page 1.htm to 1a.htm and 1b.htm are being used. The following answers the question "How do most people get to page 1a.htm and 1b.htm from another page on this site?"

mysql> SELECT page,refr, COUNT(*) -> FROM access_log -> WHERE refr LIKE 'http://sqlzoo.net%' -> AND page LIKE '/1_.htm' -> GROUP BY page,refr -> ORDER BY 3 DESC -> LIMIT 10; +---------+----------------------------------+----------+ | page | refr | COUNT(*) | +---------+----------------------------------+----------+ | /1a.htm | http://sqlzoo.net/ | 402 | | /1b.htm | http://sqlzoo.net/ | 366 | | /1a.htm | http://sqlzoo.net/1.htm | 165 | | /1b.htm | http://sqlzoo.net/1.htm | 93 | | /1b.htm | http://sqlzoo.net/1.htm?answer=1 | 16 | | /1a.htm | http://sqlzoo.net/1.htm?answer=1 | 14 | | /1a.htm | http://sqlzoo.net | 11 | | /1b.htm | http://sqlzoo.net | 6 | +---------+----------------------------------+----------+ 8 rows in set (0.16 sec)

6.5.2. Hacking the Hack

The Webalizer tool, available from http://www.mrunix.net/webalizer, provides useful reports on web usage. If you use Webalizer, you can use the DNS cache that it builds. That means you can translate IP addresses such as 65.54.188.29 into more meaningful hostnames such as msnbot.msn.com.

The DNS cache Webalizer uses is called dns_cache.db, and you can access it from Perl if you install the DB_File Perl module. Here is the Perl program to convert the web log to SQL again. It puts the hostname into the ip column. You will need to make that column rather large because the hostname may be a longer string. Also, be sure that you run Webalizer on the logfile before you try to process it with this Perl program; otherwise, the DNS cache will not have translated any new visitors:

use strict;

use DB_File;

my %dns_cache;

dbmopen(%dns_cache,'dns_cache.db',undef);

my %month=(Jan=>'01',Feb=>'02',Mar=>'03',Apr=>'04',May=>'05',Jun=>'06',

Jul=>'07',Aug=>'08',Sep=>'09',Oct=>'10',Nov=>'11',Dec=>'12');

while (<>){

my $pattern =

'^(S*) (S*) (S*) '. #Fields ip, - , username

'[(..)/(...)/(....):(..):(..):(..) .....]'.#Date and time

' "(S*) (S*) (S*)" '. #Request: GET or POST...

'(d*) (-|d*) "([^"]*)" "([^"]*)"'; #resp, bytes, refr, agnt

if (m/$pattern/){

my ($tgt,$ref,$agt) = (esc($11),esc($15),esc($16));

my $byt = $14 eq '-'?'NULL':$14;

my $ip = $1;

my $host = substr($dns_cache{$ip},8)||$ip;

print "INSERT INTO access_log VALUES ('$host','$2','$3',".

" TIMESTAMP '$6/$month{$5}/$4 $7:$8:$9','$10','$tgt',".

"'$12',$13,$byt,'$ref','$hst');

";

}else{

print "--Skipped line $.

";

}

}

sub esc{

my ($p) = @_;

$p =~ s/'/''/g;

return $p;

}

SQL Fundamentals

- SQL Fundamentals

- Hack 1. Run SQL from the Command Line

- Hack 2. Connect to SQL from a Program

- Hack 3. Perform Conditional INSERTs

- Hack 4. UPDATE the Database

- Hack 5. Solve a Crossword Puzzle Using SQL

- Hack 6. Dont Perform the Same Calculation Over and Over

Joins, Unions, and Views

- Joins, Unions, and Views

- Hack 7. Modify a Schema Without Breaking Existing Queries

- Hack 8. Filter Rows and Columns

- Hack 9. Filter on Indexed Columns

- Hack 10. Convert Subqueries to JOINs

- Hack 11. Convert Aggregate Subqueries to JOINs

- Hack 12. Simplify Complicated Updates

- Hack 13. Choose the Right Join Style for Your Relationships

- Hack 14. Generate Combinations

Text Handling

- Text Handling

- Hack 15. Search for Keywords Without LIKE

- Hack 16. Search for a String Across Columns

- Hack 17. Solve Anagrams

- Hack 18. Sort Your Email

Date Handling

- Date Handling

- Hack 19. Convert Strings to Dates

- Hack 20. Uncover Trends in Your Data

- Hack 21. Report on Any Date Criteria

- Hack 22. Generate Quarterly Reports

- Hack 23. Second Tuesday of the Month

Number Crunching

- Number Crunching

- Hack 24. Multiply Across a Result Set

- Hack 25. Keep a Running Total

- Hack 26. Include the Rows Your JOIN Forgot

- Hack 27. Identify Overlapping Ranges

- Hack 28. Avoid Dividing by Zero

- Hack 29. Other Ways to COUNT

- Hack 30. Calculate the Maximum of Two Fields

- Hack 31. Disaggregate a COUNT

- Hack 32. Cope with Rounding Errors

- Hack 33. Get Values and Subtotals in One Shot

- Hack 34. Calculate the Median

- Hack 35. Tally Results into a Chart

- Hack 36. Calculate the Distance Between GPS Locations

- Hack 37. Reconcile Invoices and Remittances

- Hack 38. Find Transposition Errors

- Hack 39. Apply a Progressive Tax

- Hack 40. Calculate Rank

Online Applications

- Online Applications

- Hack 41. Copy Web Pages into a Table

- Hack 42. Present Data Graphically Using SVG

- Hack 43. Add Navigation Features to Web Applications

- Hack 44. Tunnel into MySQL from Microsoft Access

- Hack 45. Process Web Server Logs

- Hack 46. Store Images in a Database

- Hack 47. Exploit an SQL Injection Vulnerability

- Hack 48. Prevent an SQL Injection Attack

Organizing Data

- Organizing Data

- Hack 49. Keep Track of Infrequently Changing Values

- Hack 50. Combine Tables Containing Different Data

- Hack 51. Display Rows As Columns

- Hack 52. Display Columns As Rows

- Hack 53. Clean Inconsistent Records

- Hack 54. Denormalize Your Tables

- Hack 55. Import Someone Elses Data

- Hack 56. Play Matchmaker

- Hack 57. Generate Unique Sequential Numbers

Storing Small Amounts of Data

- Storing Small Amounts of Data

- Hack 58. Store Parameters in the Database

- Hack 59. Define Personalized Parameters

- Hack 60. Create a List of Personalized Parameters

- Hack 61. Set Security Based on Rows

- Hack 62. Issue Queries Without Using a Table

- Hack 63. Generate Rows Without Tables

Locking and Performance

- Locking and Performance

- Hack 64. Determine Your Isolation Level

- Hack 65. Use Pessimistic Locking

- Hack 66. Use Optimistic Locking

- Hack 67. Lock Implicitly Within Transactions

- Hack 68. Cope with Unexpected Redo

- Hack 69. Execute Functions in the Database

- Hack 70. Combine Your Queries

- Hack 71. Extract Lots of Rows

- Hack 72. Extract a Subset of the Results

- Hack 73. Mix File and Database Storage

- Hack 74. Compare and Synchronize Tables

- Hack 75. Minimize Bandwidth in One-to-Many Joins

- Hack 76. Compress to Avoid LOBs

Reporting

- Reporting

- Hack 77. Fill in Missing Values in a Pivot Table

- Hack 78. Break It Down by Range

- Hack 79. Identify Updates Uniquely

- Hack 80. Play Six Degrees of Kevin Bacon

- Hack 81. Build Decision Tables

- Hack 82. Generate Sequential or Missing Data

- Hack 83. Find the Top n in Each Group

- Hack 84. Store Comma-Delimited Lists in a Column

- Hack 85. Traverse a Simple Tree

- Hack 86. Set Up Queuing in the Database

- Hack 87. Generate a Calendar

- Hack 88. Test Two Values from a Subquery

- Hack 89. Choose Any Three of Five

Users and Administration

- Users and Administration

- Hack 90. Implement Application-Level Accounts

- Hack 91. Export and Import Table Definitions

- Hack 92. Deploy Applications

- Hack 93. Auto-Create Database Users

- Hack 94. Create Users and Administrators

- Hack 95. Issue Automatic Updates

- Hack 96. Create an Audit Trail

Wider Access

- Wider Access

- Sharing Data Across the Internet

- Hack 97. Allow an Anonymous Account

- Hack 98. Find and Stop Long-Running Queries

- Hack 99. Dont Run Out of Disk Space

- Hack 100. Run SQL from a Web Page

Index

EAN: 2147483647

Pages: 147