Understanding SQL Basics and Creating Database Files

Understanding the Definition of a Database

Many people use the term database to mean any collection of data items. Working as a consultant, I've been called onsite to repair a database, only to find that the client was referring to a customer list in a Corel WordPerfect document that appeared "corrupted" because someone had changed the document's margins. Microsoft and Lotus have also blurred the lines between application data and a database by referring to "database" queries in help screens about searching the information stored in the cells that make up their competing spreadsheet products.

As the name implies, a database contains data. The data is organized into records that describe a physical or conceptual object. Related database records are grouped together into tables. A customer record, for example, could consist of data items, or attributes, such as name, customer number, address, phone number, credit rating, birthday, anniversary, and so on. In short, a customer record is any group of attributes or characteristics that uniquely identify a person (or other business), making it possible to market the customer for new business or to deliver goods or services. A customer table, then, is a collection of customer records. Similarly, if a business wants to track its inventory (or collection of goods for sale), it would create an inventory table consisting of inventory records. Each inventory record would contain multiple attributes that uniquely describe each item in the inventory. These attributes might include item number, description, cost, date manufactured or purchased, and so on.

While a flat file (which we'll discuss in Tip 2, "Understanding Flat Files,") contains only data, a database contains both data and metadata. Metadata is a description of:

- The fields in each record (or columns in a table)

- The location, name, and number of records in each table

- The indexes used to find records in tables

- The value constraints that define the range of values that can be assigned to individual record attributes (or fields)

- The key constraints that define what records can be added to a table and that limit the way in which records can be removed; also the relationship between records in different database tables

While the data in a database is organized into related records within multiple tables, the metadata for a database is placed in a single table called the data dictionary.

In short, a database is defined as a self-describing collection of records organized into tables. The database is self-describing because it contains metadata in a data dictionary table that describes the fields (or attributes) in each record (or table row) and the structure that groups related records into tables.

Understanding Flat Files

Flat files are collections of data records. When looking at the contents of a flat file, you will not find any information (metadata) that describes the data in the file. Instead, you will see row after row of data such as the following:

010000BREAKFAST JUICES F00.000000 010200TREE TOP APPLE JUICE 120ZF01.100422 010400WELCHES GRAPE JUICE 12OZF00.850198 010600MINUTE MAID LEMONADE 12OZF00.850083 010800MINUTE MAID PINK LEMONADE 12OZF00.890099 011000MINUTE MAID ORANGE JUICE 12OZF01.260704 011400MINUTE MAID FRUIT PUNCH 120ZF00.820142 011600CAMPBELLS CAN TOMATO JUICE 46OZG01.200030 020000FAMOUS BRAND CEREALS G01.200000 020200GENERAL MILLS CHEERIOS 15OZG03.010050

Looking at the flat file listing, you can see that the file contains only data. Spaces are used to separate one field from another and each non-blank line is a record. Each application program reading the data file must "know" the number of characters in each "field" and what the data means. As such, programs must have lines of code that read the first 6 characters on a line as an item number and the next 32 characters as a description, followed by a 1-character department indicator, followed by a 5-character sales price, and ending with a 4-digit average count delivered each week. COBOL programs using flat files had a "File Description" that described the layout of each line (or record) to be read. Modern programming languages such as Pascal, C, and Visual Basic let you read each line of the flat file as a text string that you can then divide into parts and assign to variables whose meanings you define elsewhere in the application. The important thing to understand is that every program using a flat file must have its own description of the file's data. Conversely, the description of the records in a database table is stored in the data dictionary within the database itself. When you change the layout of the records in a flat file (by inserting a five-character item cost field after the sales price, for example), you must change all of the programs that read data from the flat file. If you change the fields in a database record, you need change only the data dictionary. Programs reading database records need not be changed and recompiled.

Another difference between flat files and a database is the way in which files are managed. While a database file (which consists of one or more tables) is managed by the database management system (DBMS), flat files are under the control of the computer operating system's file management system. A file management system, unlike a DBMS, does not keep track of the type of data a file contains. As such, the file system handles word-processing documents, spreadsheets, and graphic images the same way—it keeps track of each file's location and size. Every program that works with a flat file must have lines of code that define the type of data inside the file and how to manipulate it. When developing applications that work with database tables, the programmer needs to specify only what is to be done with the data. While the programmer working with a flat file must know how and where the data is stored, the database programmer is freed from having to know these details. Instead, of having to program how the file manager is to read, add, or remove records, the database programmer needs to specify only which actions the DBMS is to take. The DBMS takes care of the physical manipulation of the data.

Unfortunately, each operating system (DOS, Windows, Unix, and OS2, to name a few) has a different set of commands that you must use to access files. As a result, programs written to use flat file data are not transportable from one operating system to another since the data-manipulation code is often specific to a particular hardware platform. Conversely, programs written to manipulate database data are transportable because the applications make use of high-level read, write, and delete commands sent to the DBMS, which performs the specific steps necessary to carry them out. A delete command sent to the DBMS by an application running on a Unix system is the same delete command a DBMS running on Windows NT expects to see. The physical steps taken to carry out the command differ, but these steps are handled by the DBMS and hidden from the application program.

Thus, the major differences between a flat file and a database are that the flat file is managed by the operating system's file management system and contains no description of its contents. As a result, application programs working with a flat file must include a definition of the flat file record layout, code that specifies the activity (read, write, delete) to be performed, and low-level operating system-specific commands to carry out the program's intent. A database, on the other hand, is managed by the DBMS that handles the low-level commands that manipulate the database file data. In short, programs that work with flat files define the data and the commands that specify what to do and how to do it. Programs that work with a database specify only what is to be done and leave the details of how it is to be done to the DBMS.

Understanding the Hierarchical Database Model

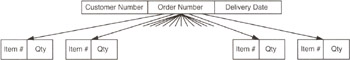

A hierarchical database model consists of data arranged into a structure that looks a lot like a family tree or company organizational chart. If you need to manage data that lends itself to being represented as parent/child relationships, you can make use of the hierarchical database model. Suppose, for example, that you have a home food delivery service and need to know how much of each grocery item you have to purchase in order to fill your customer orders for a particular delivery date. You might design your database using the hierarchical model similar to that shown in Figure 3.1.

Figure 3.1: Hierarchical database model with ORDER/ITEM parent/child relationships

In a hierarchical database, each parent record can have multiple child records; however, each child must have one and only one parent. The hierarchical database for the home food delivery service orders consists of two tables: ORDER (with fields: CUSTOMER NUMBER, ORDER NUMBER, DELIVERY DATE) and ITEM (with fields: ITEM NUMBER, QUANTITY). Each ORDER (parent) record has multiple ITEM (child) records. Conversely, each ITEM (child) record has one parent—the ORDER record for the date on which the item is to be delivered. As such, the database conforms to the hierarchical database model.

To work with data in the database, a program must navigate its hierarchical structure by:

- Finding a particular parent or child record (that is, find an ORDER record by date, or find an ITEM by ITEM NUMBER)

- Moving "down," from parent to child (from ORDER to ITEM)

- Moving "up," from child to parent (from ITEM to ORDER)

- Moving "sideways," from child to child (from ITEM to ITEM) or parent to parent (from ORDER to ORDER)

Thus, to generate a purchase order for the items needed to fill all customer orders for a particular date, the program would:

- Find an ORDER record for a particular date.

- Move down to the first ITEM (child) record and add the amount in the quantity field to the count of that item number to be delivered. For example, if the first item were item number 10 with a quantity of 5, the program would add 5 to the count of item 10s to be delivered on the delivery date.

- Move sideways to the next ITEM (child) record and add the amount in its quantity field to the count of that item number to be delivered. For example, if the next ITEM (child) record for this order were 15 with a quantity of 4, the program would add 4 to the count of item 15s to be delivered on the delivery date.

- Repeat Step 3 until there are no more child records.

- Move up to the ORDER (parent) record.

- Move sideways to the next ORDER (parent) record. If the ORDER record has a delivery equal to the one for which the program is generating the purchase order, continue at Step 2. If there are no more ORDER records, or if the delivery date in the ORDER record is not equal to the date for which the program is generating a purchase order, continue at Step 7.

- Output the purchase order by printing the item number and quantity to be delivered for each of the items with a nonzero delivery count.

The main advantages of the hierarchical database are:

- Performance. Navigating among the records in a hierarchical database is very fast because the parent/child relationships are implemented with pointers from one data record to another. The same is true for the sideways relationships from child to child and parent to parent. Thus, after finding the first record, the program does not have to search an index (or do a table scan) to find the next record. Instead, the application needs only to follow one of the multiple child record pointers, the single sibling record pointer, or the single parent record pointer to get to the "next" record.

- Ease of understanding. The organization of the database parallels a corporate organization chart or family tree. As such, it has a familiar "feel" to even nonprogrammers. Moreover, it easily depicts relationships where A is a part of B (as was the case with the order database we discussed, where each item was a part of an order).

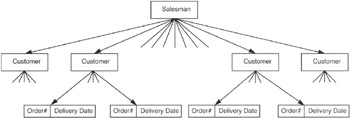

The main disadvantage of the hierarchical database is its rigid structure. If you want to add a field to a table, the database management system must create a new table for the larger records. Unlike an SQL database, the hierarchical model has no ALTER TABLE command. Moreover, if you want to add a new relationship, you will have to build a new and possibly redundant database structure. Suppose, for example, that you want to track the orders for both a customer and all of the customers for a salesperson; you would have to create a hierarchical structure similar to that shown in Figure 3.2.

Figure 3.2: Hierarchical database model with SALESMAN, CUSTOMER, and ORDER relationships

If you just rebuild the ORDER records to include the salesman and leave the database structure as shown in Figure 3.1, your application would have to visit each and every ORDER record to find all of the customers for a particular salesman or all of the orders for a particular customer. Remember, each record in the hierarchical model has only one sibling pointer for use in moving laterally through the database. In our example database, ORDER records are linked by delivery date to make it easy to find all orders for a particular delivery date. Without knowing the date range in which a particular customer placed his or her order(s), you have to visit every ORDER record to see if it belongs to a specific customer. If you decide to restructure the original database instead of creating the redundant ORDER table, you increase the time it takes to find all of the orders for a particular delivery date. In the restructured database, moving laterally at the ORDER record level of the tree gives you only the ORDER records for a particular customer, since ORDER records are now children of a CUSTOMER record parent.

Understanding the Network Database Model

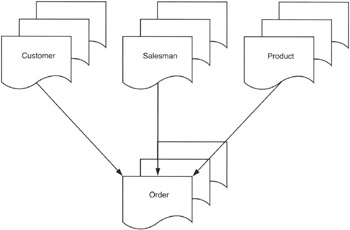

The network database model extends the hierarchiear model by allowing a record to participate in multiple parent/child relationships. In order to be helpful, a database model must be able to represent data relationships in a database to mirror those we see in the real world. One of the shortcomings of the hierarchical database model was that a child record could have one and only one parent. As a result, if you needed to model a more complex relationship, you had to create redundant tables. For example, suppose you were implementing an order-processing system. You would need at least three parent/child relationships for the same ORDER record, as shown in Figure 4.1.

Figure 4.1: Database requiring multiple parent/child relationships

You need to be able to print out an invoice for the orders placed by your customers, so you need to know which orders belong to which customer. The salesmen need to be paid commissions, so you need to know which orders each of them generated. Finally, the production department needs to know which parts are allocated to which orders so that it can assemble the orders and maintain an inventory of products to fill future orders.

If you were to use the hierarchical model, you would have to produce three ORDER tables, one for each of the three parents of each ORDER record. Redundant tables take up additional disk space and increase the processing time required to complete a transaction. Consider what happens if you need to enter an order into a hierarchical database that has redundant ORDER tables. In the current example with three parent/child relationships to ORDER records, the program must insert each new ORDER record into three tables. Conversely, if you had a database that allowed a record to have more than one parent, you would have to do only a single insert.

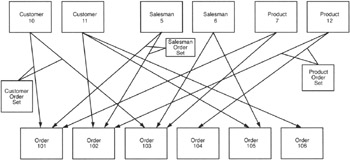

In addition to allowing child records to have multiple parents, the network database model introduced the concepts of "sets" to the database processing. Using the network database model, you could structure the order-processing database relationships shown in Figure 4.1 as shown in Figure 4.2.

Figure 4.2: A database for an order-processing system based on the network database model

Look at Figure 4.2, and you will see that ORDER 101 and ORDER 103 belong to (or are the children of) CUSTOMER 10. Meanwhile, ORDER 102, ORDER 105, and ORDER 106 belong to CUSTOMER 11. As mentioned previously, the network database model applies set concepts to database processing. Refer again to Figure 4.2, and note that the orders that belong to CUSTOMER 10 (ORDER 101 and ORDER 103) are defined as the Customer Order Set for CUSTOMER 10. Similarly, ORDERS 102, 105, and 106 are the Customer Order Set for CUSTOMER 11. Moving next to the SALESMEN records, you can see that SALESMAN 5 was responsible for ORDER 101 and ORDER 102. Meanwhile SALESMAN 6 was responsible for ORDER 103 and ORDER 105. Thus, the Salesman Order Set for SALESMAN 5 consists of ORDERS 101 and 102, and the Salesman Order Set for SALESMAN 6 includes ORDERS 103 and 105. Finally, moving on to the PRODUCTS table, you can see that that PRODUCT 7 is on ORDER 101 and ORDER 103. PRODUCT 12, meanwhile, is on ORDER 102 and ORDER 104. As such, the Product Order Set for PRODUCT 7 consists of ORDERS 102 and 104; while the Product Order Set for PRODUCT 12 includes ORDERS 102 and 104.

| Note |

The company will, of course, have more customers, salesmen, products, and orders than those shown in Figure 4.2. The additional customers, salesmen, and products would be represented as additional parent records in their respective tables. Meanwhile, each of the additional ORDER (child) records in the ORDER table would be an element in each of the three record sets (Customer Order Set, Salesman Order Set, and Product Order Set). |

To retrieve the data in the database, a program must navigate the hierarchical structure by:

- Finding a parent or child record (finding a SALESMAN by number, for example)

- Moving "down," from parent to the first child in a particular set (from SALESMAN to ORDER, for example)

- Moving "sideways," from child to child in the same set (from ORDER to ORDER, for example), or from parent to parent (from CUSTOMER to CUSTOMER, SALESMAN to SALESMAN, or PRODUCT to PRODUCT)

- Moving "up," from child to parent in the same set, or from child to parent in another set (from ORDER to SALESMAN or from ORDER to PRODUCT or from ORDER to CUSTOMER)

Thus, getting information out of a network database is similar to getting data out of the hierarchical database-the program moves rapidly from record to record using a pointer to the "next" record. In the network database, however, the programmer must specify not only the direction of the navigation (down, sideways, or up), but also the set (or relationship) when traveling up (from child record to parent) and sideways (from child to child in the same set).

Because the network database model allows a child record to have multiple parent records, an application program can use a single table to report on multiple relationships. Using the order-processing database example, a report program can use the ORDER table to report which orders include a particular product, which customers bought the product, and which salesmen sold it by performing the following steps:

- Find a PRODUCT record by description or product number.

- Move down to the first ORDER record (which contains the product) in the Product Order Set.

- Find the CUSTOMER that ordered the product (placed the order) by moving up to the parent of the Customer Order Set.

- Return to the child ORDER record by moving back down the link ascended in Step 3.

- Find the SALESMAN that sold the product (got the customer to place the order) by moving up to the parent of the Salesman Order Set.

- Return to the child ORDER record by moving back down the link ascended in Step 5.

- Move sideways to the next ORDER (child) record in the Product Order Set. If there is another child record, continue at Step 2.

The main advantages of the hierarchical database are:

- Performance. Although the network database model is more complex than the hierarchical database model (with several additional pointers in each record), its overall performance is comparable to that of its predecessor. While the DBMS has to spend more time maintaining record pointers in the network model, it spends less time inserting and removing records due to the elimination of redundant tables.

- Ability to represent complex relationships. By allowing more than one parent/child link in each record, the network database model lets you extract data based on multiple relationships using a single table. While we explored using the network database to get a list of all customers that purchased a product and all salesmen that sold the product, you could also get a list of the orders placed by one or all of the customers and a list of sales made by one salesman or the entire sales force using the same network database structure and the same set of tables.

Unfortunately, the network database model, like its hierarchical rival, has the disadvantage of being inflexible. If you want to add a field to a table, the DBMS must create a new table for the larger records. Like the hierarchical model (and, again, unlike an SQL relational database), the network model has no ALTER TABLE command. Moreover, rebuilding a table to accommodate a change in a record's attributes or adding a new table to represent another relationship requires that a majority of the network database's record links be recalculated and updated-this translates into the database being inaccessible for extended periods of time to make even a minor change to a single table's fields.

Understanding the Relational Database Model

While the relational database model did not appear in commercial products until the 1980s, Dr. Edgar F. Codd of IBM defined the relational database in 1970. The relational model simplifies database structures by eliminating the explicit parent/child relationship pointers. In a relational database, all data is organized into tables. The hierarchical and network database records are represented by table rows, record fields (or attributes) are represented as table columns, and pointers to parent and child records are eliminated.

A relational database can still represent parent/child relationships, it just does it based on data values in its tables. For example, you can represent the complex Network database model data shown in Figure 4.2 of Tip 4 with the tables shown in Figure 5.1.

|

Customer Table |

||

|---|---|---|

|

CUST_NO |

LAST_NAME |

FIRST_NAME |

|

10 |

FIELDS |

SALLY |

|

11 |

CLEAVER |

WARD |

|

Salesman Table |

||

|---|---|---|

|

SALESMAN_NO |

LAST_NAME |

FIRST_NAME |

|

5 |

KING |

KAREN |

|

6 |

HARDY |

ROBERT |

|

Product Table |

|||

|---|---|---|---|

|

PRODUCT_NO |

DESCRIPTION |

SALES_PRICE |

INV_COUNT |

|

7 |

100 WATT SPEAKER |

75.00 |

25 |

|

8 |

DVD PLAYER |

90.00 |

15 |

|

9 |

AMPLIFIER |

450.00 |

305 |

|

10 |

RECEIVER |

750.00 |

25 |

|

11 |

REMOTE CONTROL |

25.00 |

15 |

|

12 |

50 DVD PACK |

500.00 |

25 |

|

Order Table |

||||

|---|---|---|---|---|

|

ORDER NO |

DEL DATE |

PRODUCT_NO |

SALESMAN_NO |

CUST_NO |

|

101 |

01/15/2000 |

7 |

5 |

10 |

|

102 |

01/22/2000 |

12 |

5 |

11 |

|

103 |

03/15/2000 |

7 |

6 |

10 |

|

104 |

04/05/2000 |

12 |

7 |

12 |

|

105 |

07/05/2000 |

9 |

6 |

11 |

|

106 |

08/09/2000 |

7 |

8 |

11 |

Figure 5.1: ORDER_ TABLE with relationships to three other tables

In place of pointers, the relational database model uses common columns to establish the relationship between tables. For example, looking at Figure 5.1, you will see that the CUSTOMER, SALESMAN, and PRODUCT tables are not related because they have no columns in common. However, the ORDER table is related to the CUSTOMER table by the CUSTOMER_NO column. As such, an ORDER table row is related to a CUSTOMER table row where the CUSTOMER_NO column has the same value in both tables. Figure 5.1 shows CUSTOMER 10 owns ORDER 101 and ORDER 103 since the CUST_NO column for these two ORDER rows is equal to the CUSTOMER_NO column in the CUSTOMER table. The SALESMAN and PRODUCT tables are related to the ORDER table in the same manner. The SALESMAN table is related to the ORDER table by the common SALESMAN_NO column, and the PRODUCT table is related to the ORDER table by the PRODUCT_NO column.

As you may have noticed from the discussion of the hierarchical model in Tip 3, "Understanding the Hierarchical Database Model," and the network model in Tip 4, applications written to extract data from either of these models had the database structures "hard-coded" into them. To navigate the records in the network model, for example, the programmer had to know what pointers were available and what type of data existed at each level of the database tree structure. Knowing the names of the pointers let the programmer move up, down, or across the database tree; knowing what data was available at each level of the tree told the programmer the direction in which to move. Because record relationships based on pointers are hard-coded into the application, adding a new level to the tree structure requires that you change the application program's logic. Even just adding a new attribute to a network database record changes the location of the pointer within the record changes. As a result, any changes to a database record require that the application programs accessing the database be recompiled or rewritten.

When working with a relational database, you can add tables and add columns to tables to create new relationships to the new tables without recompiling existing applications. The only time you need to recompile an application is if you delete or change a column used by that program. Thus, if you want to relate an entry in the SALESMAN table to a customer (in the CUSTOMER table), you need only add a SALESMAN_NO column to the CUSTOMER table.

Understanding Codd s 12 Rule Relational Database Definition

Dr. Edgar F. Codd published the first theoretical model of a relational database in an article entitled "A Relational Model of Data for Large Shared Data Banks" in the Communications of the ACM in 1970. The relational model was theoretical at the time because all commercially available database management systems were based on either the hierarchical or the network database models. Although Dr. Codd worked for IBM, it was Oracle that brought the first database based on the relational model to market in 1980—10 years later! While Dr. Codd's 12 rules are the semi-official definition of a relational database, and while many commercial databases call themselves relational today, no relational database follows all 12 rules.

Codd's 12 rules to define a relational database are:

- The Information Rule. All information in a relational database must be represented in one and only one way, by values in columns within rows of tables. SQL satisfies this rule.

- The Guaranteed Access Rule. Each and every datum (or individual column value in a row) must be logically addressable by specifying the name of the table, primary key value, and column name. When addressing a data item, the name of the table identifies which database table contains the item, the column identifies a specific item in a row of the named table, and the primary key identifies a single row within a table. SQL follows this rule for tables with primary keys. However, SQL does not require that a table have a key.

- Systematic Treatment of Null Values. The relational database management system must be able to represent missing and inapplicable information in a systematic way that is independent of data type, different than that used to show empty character strings or a strings of blank characters, and distinct from zero or any other number. SQL uses NULL to represent both missing and inapplicable information—as you will learn later, NULL is not zero, nor is it an empty string.

- Dynamic Online Catalog Based on the Relational Model. The database catalog (or description) is represented in the same manner as ordinary data (using tables), so authorized users can use the same relational language to work with the online catalog and regular data. SQL does this through system tables whose columns describe the structure of the database.

- Comprehensive Data Sublanguage Rule. The system may support more than one language, but at least one language must have a well-defined syntax that is based on character strings and that can be used both interactively and within application programs. The language must support:

- Data definitions

- View definitions

- Data manipulation (both update and retrieval)

- Security

- Integrity constraints

- Transaction management operations (Begin, Commit, Rollback)

SQL Data Manipulation Language (DML) (which can be used both interactively and in application programs) has statements that perform all of the required operations.

- View Updating Rule. All views that are theoretically updateable must be updateable by the system. (Views are virtual tables that give users different "pictures" or representations of the database structure.) SQL does not fully satisfy this rule in that it limits updateable views to those based on queries on a single table without GROUP BY or HAVING clauses; it also has no aggregate functions, no calculated columns, and no SELECT DISTINCT clause. Moreover, the view must contain a key of the table, and any columns excluded from the view must be NULL-able in the base table.

- High-Level Insert, Update, and Delete. The system must support multiple-row and table (set-at-a-time) Insert, Update, and Delete operations. SQL does this by treating rows as sets in Insert, Update, and Delete operations. Rule 7 is designed to exclude systems that support only row-at-a-time navigation and modification of the database, such as that required by the hierarchical and network database models. SQL fully satisfies this rule.

- Physical Data Independence. Application programs and interactive database access methods don't have to change due to a change in the physical storage device or method used to retrieve data from that device. SQL does this well.

- Logical Data Independence. Application programs and interactive database access methods don't have to change if tables are changed in a way that preserves the original table values. SQL satisfies this requirement—the results of queries and action taken by statements do not depend on the arrangement of columns in a row, the position of rows in a table, or the structure used to represent the table inside the computer system.

- Integrity Independence. All integrity constraints specific to a particular relational database must be definable in the relational sub-language, be specified outside of the application programs, and stored in the database catalogue. SQL-92 has integrity independence.

- Distribution Independence. Applications and end users should not be aware of whether the database data exists in a single location or whether it is replicated on and distributed among many computers on a network. Thus, the database language must be able to use the same commands to query and manipulate distributed data located on both local and remote computer systems. Distributed SQL database products are relatively new, so the jury is still out as to how well they will satisfy this criterion.

- The Nonsubversion Rule. If the system provides a low-level (record-at-a-time) interface, the low-level statements cannot be used to bypass integrity rules and constraints expressed in the high-level (set-at-a-time) language. SQL-92 complies with this rule. Although one can write statements that affect individual table rows, the system will still enforce security and referential integrity rules.

Just as no SQL DBMS complies with all of the specifications in the SQL-92 standard, none of the commercially available relational databases follow all of Codd's 12 rules. Rather than comparing scorecards on the number of Codd's rules a relational database satisfies, companies normally select a particular database product based on performance, features, availability of development tools, and quality of vendor support. However, Codd's rules are important from a historical prospective, and they do help you decide whether a DBMS is based on the relational model.

Understanding Terms Used to Define an SQL Database

Tables

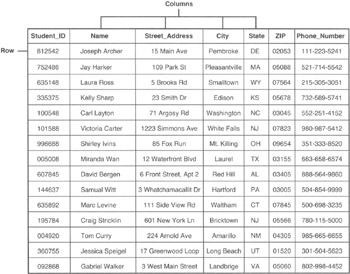

Every SQL database is based on the relational database model. As such, the individual data items in an SQL database are organized into tables. An SQL table (sometimes called a relation), consists of a two-dimensional array of rows and columns. As you learned in Codd's first two rules in Tip 6, "Understanding Codd's 12-Rule Relational Database Definition," each cell in a table contains a single valued entry, and no two rows are identical. If you've used a spreadsheet such as Microsoft Excel or Lotus 1-2-3, you're already familiar with tables, since spreadsheets typically organize their data into rows and columns. Suppose, for example, that you were put in charge of organizing your high school class reunion. You might create (and maintain) a table of information on your classmates similar to that shown in Figure 7.1.

Figure 7.1: Relational database table of student information

Notice that when you look vertically down the table, all of the values in any one column have the same meaning in each and every row of the table. As such, if you see a student ID in the first column of the tenth row of the table, you know that every row in the table has a student ID in its first column. Similarly, if you find a street address in the third column of the second row of a table, you know that the remaining rows of the table have a street address in the third column. In addition to a column having a consistent data type throughout the rows of the table, each column's data is also independent of other columns in the row. For example, the NAME column will contain student names whether it is the second column (as shown in Figure 7.1), the fifth column, or the tenth column in the table.

The "sameness" of the values in a column and the independence of the columns, allow SQL tables to satisfy Codd's relational database Rule 9 (Logical Data Independence). Neither the order of the rows in the table nor the order of its columns matters to the database management system (DBMS). When you tell the DBMS to execute an SQL statement such as

SELECT NAME, PHONE_NUMBER FROM STUDENT

the DBMS will look in the system table (or catalog) to determine which column contains the NAME data and which column has the PHONE_NUMBER information. Then the DBMS will go through the rows of the table and retrieve the NAME and PHONE_NUMBER value from each row. If you later rearrange the table's rows or its columns, the original SQL statement will still retrieve the same NAME and PHONE_NUMBER data values—only the order of the displayed data might change if you changed the order of the rows in the table.

If you look horizontally across the table, you will notice that all of the columns in a single row are the attributes of a single entity. In fact, we often refer to individual table rows as records (or tuples), and the column values in the row as fields (or attributes). Thus, you might say that Figure 7.1 consists of 15 customer records and that each record has the fields STUDENT_ID, NAME, STREET_ADDRESS, CITY, STATE, ZIP_CODE, and PHONE_NUMBER.

Views

A database view is not an opinion, nor is it what you see when you look out of a window in your home. Rather, a database view is the set of columns and rows of data from one or more tables presented to a user as if it were all of the rows and columns in a single table. Views are sometimes called "virtual" tables because they look like tables; you can execute most SQL statements on views as if they were tables. For example, you can query a view and update its data using the same SQL statements you would use to query and update the tables from which the view was generated. Views, however, are "virtual" tables because they have no independent existence. Views are a way of looking at the data, but they are not the data itself.

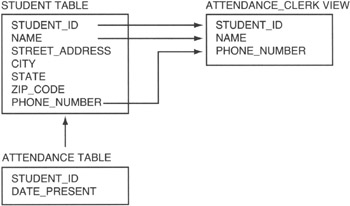

Suppose, for example, that your school had a policy of calling the homes of students too sick to attend classes (why else would you miss school, right?). The attendance clerk would need only a few columns (or attributes) from the STUDENT table and only those rows (or records) in which the student is absent. Figure 7.2 shows the attendance clerk's view of the data.

Figure 7.2: Attendance clerk database view derived from a single table

Although the student database has seven fields, the attendance clerk sees only three on his screen: STUDENT_ID, NAME, and PHONE_NUMBER. Since the attendance clerk is to call the homes of only the students absent from school, you would write a query that selected the rows of the STUDENT table that did not have a matching row in the ATTENDANCE table. Then you would have your query display only the three fields shown in the ATTENDANCE_CLERK view in Figure 7.2. Thus, the attendance clerk would see only the STUDENT_ID, NAME, and PHONE_NUMBER fields of absent students.

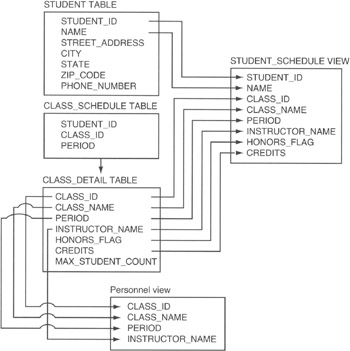

Now, suppose you needed to print the class schedule for the students. Well, each student needs only his or her own class information, as show in Figure 7.3.

Figure 7.3: Student schedule and personnel views derived from multiple tables

The STUDENT_SCHEDULE view includes the majority of columns from the CLASS_ DETAIL table and only two columns from the STUDENT_TABLE. Thus, one student's view of the database is very different than that shown to the attendance clerk. While the attendance clerk sees the database as a list of names and phone numbers of students absent on a particular day, the student sees the database as a list of classes he is scheduled to attend. As such, you can hide table columns from view, combine columns from multiple tables, and display only some of the rows in one or more tables.

As far as the user is concerned, the view itself is a table. As mentioned previously in the current example, the student thinks there is a table with his name and class schedule, and the attendance clerk thinks there is a table of absent students. In addition to displaying data as if it were a table, a view also allows a user with update access to change values in the base tables. (Base tables are those tables from which the view [or virtual table] is derived.) Notice the PERSONNEL view shown in Figure 7.3. Suppose that you had a personnel clerk responsible for entering the names of the instructors for each of the classes. The clerk's screen (view) would show the information on a particular class and allow the clerk to update the name of the instructor for that class. When the clerk changes the name in the INSTRUCTOR_NAME column of the PERSONNEL view, the DBMS actually updates the value in the INSTRUCTOR_NAME column of the CLASS_DETAIL table in the row from which the PERSONNEL view was derived.

Schemas

The database schema is a set of tables (often called the system catalog) that contain a full description of the entire database. Although Figure 7.1 shows the names of the columns as part of the table, and Figure 7.2 and Figure 7.3 show the names of the columns in place of data, actual database data tables contain only data values. Thus, the actual database table shown in Figure 7.1 would have only the information shown below the column headings. Similarly, the table rows (or records) represented by the rectangles in Figure 7.2 and Figure 7.3 would have the actual student, attendance, and class information. The database schema has tables that contain:

- The name of each data table

- The names of each data table's columns, the type of data the column can hold, and the range of values that a column can take on

- A list of database views, how the views are derived, and which users are allowed to use which views

- A list of constraints, or rules, that limit the range of values one can enter into a column, rows one can delete from a table, and rows one can add

- Security information on who can view (query) an existing table, remove a table, or create a new one

- Security information on who can update each table's contents and which columns he or she can change

- Security information on who can add rows to or delete rows from each table

You will learn more about the database schema in Tip 12, "Understanding Schemas." For now, the important thing to know is that the database schema contains a complete description of the database.

Domains

Each column of a table (or attribute of a relation) contains some finite number of values. The domain of the table column (or attribute) is the set of all possible values one could find in that column. Suppose, for example, that you had a table of coins in a U.S. coin collection. The DENOMINATION column could have only the values 0.01, 0.05, 0.10, 0.25, 0.50, and 1.00. Thus, the "domain" of the DENOMINATION table is [0.01, 0.05, 0.10, 0.25, 0.50, 1.00], and all of the rows in the table must have one of these values in the DENOMINATION column.

Constraints

Constraints are the rules that limit what can be done to the rows and columns in a table and the values that can be entered into a table's attributes (columns). While the domain is the range of all values that a column can assume, a column constraint (such as the CHECK constraint, which you will learn about in Tip 193, "Using the CHECK Constraint to Validate a Column's Value") is what prevents a user from entering a value outside the column's domain.

In addition to limiting the values entered into a field, constraints specify rules that govern what rows can be added to or removed from a table. For example, you can prevent a user from adding duplicate rows to a table by applying the PRIMARY KEY constraint (which you will learn about in Tip 173, "Understanding Foreign Keys") to one of a table's columns. If you apply the PRIMARY KEY constraint to the STUDENT_ID column of the STUDENT table in Figure 7.1, the DBMS will make sure that every value in the STUDENT_ID column remains unique. If you already have a STUDENT_ID 101 in the STUDENT table, no user (or application program) can add another row with 101 in the STUDENT_ID column to the table. Similarly, you can apply the FOREIGN KEY constraint (which you will learn about in Tip 174, "Understanding Referential Data Integrity Checks and Foreign Keys") to a column to prevent related rows in another table from being deleted. Suppose, for example, that you had a CUSTOMER and ORDER table similar to that shown in Figure 7.4.

|

CUSTOMER table |

||

|---|---|---|

|

CUSTOMER_ID |

NAME |

ADDRESS |

|

10 |

Konrad King |

765 Wally Way |

|

ORDER table |

||||

|---|---|---|---|---|

|

Order_No |

CUSTOMER_ID |

Item |

Quantity |

Order Date |

|

1 |

10 |

789 |

12 |

4/12/2000 |

|

2 |

||||

|

3 |

||||

|

4 |

||||

|

5 |

||||

Figure 7.4: ORDER and CUSTOMER table related by CUSTOMER_ID

The rows (or records) in the ORDER table are related to the CUSTOMER table by the value in the CUSTOMER_ID column. Thus, a row (or order) in the ORDER table with a CUSTOMER_ID of 10 was placed by Customer 10 (the row in the CUSTOMER table with a 10 in the CUSTOMER_ID column). If someone removed Customer 10 from the CUSTOMER table, you would no longer have any information (other than customer number) on the person that placed Order 1. You can prevent the loss of information by placing the FOREIGN KEY constraint on the CUSTOMER_ID column of the ORDER table. Once in place, the constraint will prevent anyone from deleting Customer 10 from the CUSTOMER table, as long as at least one row (order) in the ORDER table has a 10 in the CUSTOMER_ID field.

In short, constraints are the rules that maintain the domain, entity, and referential integrity of your database. You will learn all about the database integrity and the importance of maintaining it in Tips 175–190.

Understanding the Components of a Table

An SQL table consists of scalar (single-value) data arranged in columns and rows. Relational database tables have the following components:

- A unique table name

- Unique names for each of the columns in the table

- At least one column

- Data types, domains, and constraints that specify the type of data and its range of values for each column in the table

- A structure in which data in one column of the table has the same meaning in every row of the table

- Zero or more rows that represent physical or logical entities

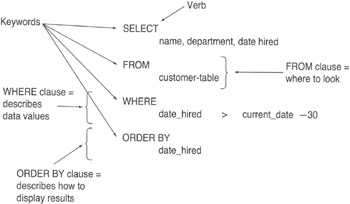

When naming a table, bear in mind that no two tables you create can have the same name. However, table names in the SQL database need be unique only among all of the tables created (or owned) by an individual user. As such, if two users—Joe and Mark, for example—were to create tables in an SQL database, both of them could create a table named Stocks. However, neither of them could create two tables named Stock in the same schema. In Tip 9, "Understanding Table Names," you'll learn more about table names and how the DBMS uses the owner name and schema name to make the names unique across all of the tables in the database. For now, the important thing to know is that you must give your table a name, and you don't have to worry about what other people have named their tables. When selecting a table name, analyze the columns you plan to include in the table, and use a name that summarizes the table's contents. Figure 8.1, for example, contains columns of data that deal with phone call data: PHONE_REP_ID (who made the call), PHONE_NUMBER (the phone number called), DATE_TO_CALL and TIME_TO_CALL (the date and time the call was to be made), DATE_CALLED and TIME_CALLED (the date and time the call was made), HANGUP_TIME (the time the call ended), and DISPOSITION (what happened as a result of the call). The column titles indicate that the table will contain phone call data. Therefore, CALL_HISTORY is an appropriate table name since the name describes the type of data that can be retrieved from the table.

|

CALL_HISTORY table |

|||||||

|---|---|---|---|---|---|---|---|

|

PHONE_REP_ID |

PHONE_NUMBER |

DATE_TO_CALL |

TIME_TO_CALL |

DATE_CALLED |

TIME_CALLED |

HANGUP_TIME |

DISPOSITION |

Figure 8.1: Relational database table of phone call history data

Each horizontal row in a relational database table represents a physical or logical entity. In the CALL_HISTORY table, for example, each row represents a phone call. The columns in a row represent data items. Although neither the relational database rules nor the SQL-92 specification dictates that columns in a table must be somehow related, you will seldom (if ever) see a database table where the columns are just a random mix of data. Typically (if not by convention), data in the columns of a row details the attributes of the entity represented by that row. Notice that the columns in the CALL_HISTORY table shown in Figure 8 all describe some attribute of a phone call.

All relational database tables have at least one column. (A table may have no rows but must have at least one column.) The SQL standard does not specify the maximum number of columns, but most commercial databases normally limit the number of columns in a table to 255. Similarly, the SQL standard places no limit on the number of rows a table may contain. As a result, most SQL products will allow a table to grow until it exhausts the available disk space—or, if they impose a limit, they will set it to a number in the billions.

The order of the columns in a table has no effect on the results of SQL queries on the database. When creating a table, you do, however, have to specify the order of the columns, give each column a unique name, specify the type of data that the column will contain, and specify any constraints (or limits) on the column's values. To create the table shown in Figure 8.1, you could use this SQL statement:

CREATE TABLE CALL_HISTORY (PHONE_REP_ID CHAR(3) NOT NULL, PHONE_NUMBER INTEGER NOT NULL, DATE_TO_CALL DATE, TIME_TO_CALL INTEGER, DATE_CALLED DATE NOT NULL, TIME_CALLED INTEGER NOT NULL, HANGUP_TIME INTEGER NOT NULL, DISPOSITION CHAR(4) NOT NULL)

When you look vertically down the columns in a relational database table, you will notice that the column data is self-consistent, meaning that data in the column has the same meaning in every row of the column. Thus, while the order of the columns is immaterial to the query, the table must have some set arrangement of columns that does not change from row to row. After you create the table, you can use the ALTER TABLE command to rearrange its columns; doing so will have no effect on subsequent SQL queries on the data in the table.

Each column in the table has a unique name, which you assign to the column when you execute the CREATE TABLE statement. In the current example, the column heading names are shown at the top of each column in Figure 8.1. Notice that the DBMS assigns column names from left to right in the table and in the order in which the names appear in the CREATE TABLE statement. All of the columns in a table must have a different (unique) name. However, the same column name may appear in more than one table. Thus, I can have only one PHONE_NUMBER column in the CALL_HISTORY table, but I can have a PHONE_NUMBER column in another table, such as CALLS_TO_MAKE, for example.

Understanding Table Names

When selecting the name for a table, make it something short but descriptive of the data the table will contain. You will want to keep the name short since you will be typing it in SQL statements that work with the table's data. Keeping the name descriptive will make it easy to remember which table has what data, especially in a database system with many (perhaps hundreds) of tables. If you are working on a personal or departmental database, you normally have carte blanche to name your tables whatever you wish—within the limits imposed by your DBMS, of course. SQL does not specify that table names begin with a certain letter or set of letters. The only demand is that table names be unique by owner. (We'll discuss table ownership further in a moment.) If you are working in a large, corporatewide, shared database, your company will probably have some restrictions on table names to organize the tables by department (perhaps) and to avoid name conflicts. In a large organization, for example, tables for sales may all begin with "SALES_," those for human resources might begin with "HR_," and those for customer service might start with "SERVICE_." Again, SQL makes no restrictions on the table names other than they be unique by owner—a large company with several departments may want to define its own set of restrictions to make it easier to figure out where the data in a table came from and who is responsible for maintaining it.

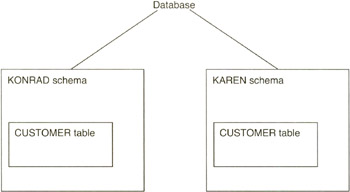

In order to create a table, you must be logged in to the SQL DBMS, and your username must have authorization to use the CREATE TABLE statement. Once you are logged in to the DBMS, the system knows your username and automatically makes you the owner of any table you create. Therefore, if you are working in a multi-user environment, the DBMS may indeed have more than one table named CUSTOMER—but it has only one CUSTOMER table owned by any one user. Suppose, for example, that DBMS users Karen and Konrad each create a CUSTOMER table. The DBMS will automatically adds the owner's name (by default, the table owner is the user ID of the person creating the table) to the name of the table to form a qualified table name that is then stored in the system catalog. Thus, Karen's CUSTOMER table would be stored in the system catalog as KAREN.CUSTOMER, and Konrad's table would be stored as KONRAD.CUSTOMER. As such, all of the table names in the system catalog are still unique even though both Konrad and Karen executed the same SQL statement: CREATE TABLE CUSTOMER.

When you log in to the DBMS and enter an SQL statement that references a table name, the DBMS will assume that you are referring to a table that you created. As such, if Konrad logs in and enters the SQL statement SELECT * FROM CUSTOMER, the DBMS will return the values in all columns of all rows in the KONRAD.CUSTOMER table. Likewise, if Karen logs in and executes the same statement, the DBMS will display the data in KAREN.CUSTOMER. If another user (Mark, for example) logs in and enters the SQL statement SELECT * FROM CUSTOMER without having first created a CUSTOMER table, the system will return an error, since the DBMS does not have a table named MARK.CUSTOMER.

In order to work with a table created by another user, you must have the proper authorization (access rights), and you must enter the qualified table name. A qualified table name specifies the name of the table's owner, followed by a period (.) and then the name of the table (as in .

). In the previous example, if Mark had the proper authorization, he could type the SQL statement SELECT * FROM KONRAD.CUSTOMER to display the data in Konrad's CUSTOMER table, or SELECT * FROM KAREN.CUSTOMER to display the contents of Karen's CUSTOMER table. You can use a qualified table name in an SQL statement wherever a table name can appear.

The SQL-92 standard further extends the DBMS's ability to work with duplicate tables by allowing a user to create tables within a schema. (You will learn more about schemas in Tip 12, "Understanding Schemas," and about creating tables within schemas in Tip 506, "Using the CREATE SCHEMA Statement to Create Tables and Grant Access to Those Tables.") The fully qualified name of a table created within a schema becomes the schema name, followed by a period (.) and then the name of the table (for example, .

). Thus an individual user could create multiple tables with the same name by putting each of the tables in a different schema. For now, the important thing to know is that every table must have a unique qualified table name. As such, a user cannot use the same name for two tables unless he creates the tables in different schemas (which you will learn how to do in Tip 506).

Understanding Column Names

The SQL DBMS stores the names of the columns along with the table names in its system catalog. Column names must be unique within a table but can appear in multiple tables. For example, you can have a STUDENT_ID column in both a STUDENT table and a CLASS_SCHEDULE table. However, you cannot have more than one STUDENT_ID column in either table. When selecting a column name, use a short, unique (to the table being created) name that summarizes the kind of data the column will contain. If you plan to store an address in a column, name the column ADDRESS or STREET_ADDRESS, use CITY as the name for a column that holds the city names, and so on. The SQL specification does not limit your choice as to the name you use for a column (other than that a column name can appear only once in any one table). However, using descriptive column names makes it easier to know which columns to use when you write SQL statements to extract data from the table.

When you specify a column name in an SQL statement, the DBMS can determine the table to which you are referring if the column name is unique to a single table in the statement. Suppose, for example, that you had two tables with column names defined as shown in Figure 10.1.

|

STUDENT table |

|---|

|

STUDENT_ID |

|

STUDENT_NAME |

|

STREET_ADDRESS |

|

CITY |

|

STATE |

|

ZIP_CODE |

|

PHONE_NUMBER |

|

CLASS1_TEACHER |

|

CLASS2_TEACHER |

|

CLASS3_TEACHER |

|

CLASS4_TEACHER |

|

TEACHER table |

|---|

|

TEACHER_ID |

|

TEACHER_NAME |

|

SUBJECT |

|

PHONE_NUMBER |

Figure 10.1: Example STUDENT table and TEACHER table with duplicate column names

The DBMS would have no trouble determining which columns to display in the following SQL statement:

SELECT STUDENT_ID, STUDENT_NAME, SUBJECT, TEACHER_NAME FROM STUDENT, TEACHER WHERE CLASS1_TEACHER = TEACHER_ID

Since STUDENT_ID and STUDENT_NAME appear only in the STUDENT table, the DBMS would display the values in the STUDENT_ID and STUDENT_NAME columns of the STUDENT table. Similarly, SUBJECT and TEACHER_NAME are found only in the TEACHER table, so the DBMS would display SUBJECT and TEACHER_NAME information from the TEACHER table as it executes the SELECT statement. Thus, if you use columns from more than one table in an SQL statement, the DBMS can figure out which column name refers to which table if none of the column names in the SQL statement appears in more than one table listed in the FROM clause.

If you want to display data from one or more columns that have the same name in more than one table used in an SQL statement, you will need to use the qualified column name for each of the duplicate columns. The qualified column name is the name of the table, followed by a period (.) and then the name of the column. As such, if you wanted to list the student's phone number (found in the PHONE_NUMBER column in the STUDENT table), you could use the following SQL statement:

SELECT STUDENT_ID, STUDENT_NAME, STUDENT.PHONE_NUMBER, SUBJECT, TEACHER_NAME FROM STUDENT, TEACHER WHERE CLASS1_TEACHER = TEACHER_ID

If you specified only PHONE_NUMBER after STUDENT_NAME, the DBMS would not know if it were supposed to display the student's phone number or the teacher's phone number, since both tables have the column named PHONE_NUMBER. By using the qualified column name (STUDENT.PHONE_NUMBER, in this example), you specify not only the column whose data you want, but also the table whose data the DBMS is to use. In general, you can use qualified column names in an SQL statement wherever unqualified column names can appear.

As you learned in Tip 9, "Understanding Table Names," you need to use qualified table names whenever you want to work with a table that you do not own. Thus, you must use the qualified table name (the name of the table, followed by a period [.] and then the table name) in your SQL statement wherever the name of the table that you do not own appears. Thus, in the current example, if you own the TEACHER table but you did not create the STUDENT table (and Konrad, who created the table, gave you access to the table but did not assign its ownership to you), you would modify the SQL statement as follows:

SELECT STUDENT_ID, STUDENT_NAME, KONRAD.STUDENT.PHONE_NUMBER, SUBJECT, TEACHER_NAME FROM KONRAD.STUDENT, TEACHER WHERE CLASS1_TEACHER = TEACHER_ID

By using the qualified table name KONRAD.STUDENT in place of STUDENT in the SELECT statement, you tell the DBMS to extract data from the STUDENT table owned by Konrad instead of trying to get data from a nonexistent STUDENT table created (or owned) by you.

Understanding Views

As you learned in Tip 7, "Understanding Terms Used to Define an SQL Database," views are virtual tables. A view looks like a table because it appears to have all of the essential components of a table-it has a name, it has rows of data arranged in named columns, and its definition is stored in the database catalog right along with all of the other "real" tables. Moreover, you can use the name of a view in many SQL statements wherever a table name can appear. What makes a view a virtual vs. real table is that the data seen in a view exists in the tables used to create the view, not in the view itself.

The easiest way to understand views is to see how they are created, what happens when you use the name of a view in an SQL query, and what happens to the view upon completion of the SQL statement.

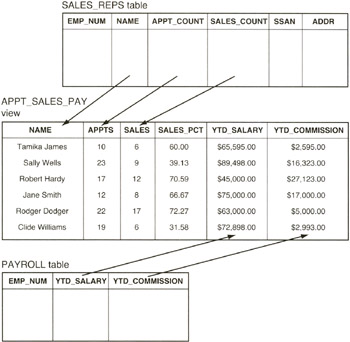

To create a view, use the SQL CREATE VIEW statement. Suppose, for example, that you have relational database tables with salesman and payroll data similar to that shown in Figure 11.1.

|

SALES_REPS table |

|||||

|---|---|---|---|---|---|

|

EMP_NUM |

NAME |

APPT_COUNT |

SALES_COUNT |

SSAN |

ADDR |

|

1 |

Tamika James |

10 |

6 |

||

|

2 |

Sally Wells |

23 |

9 |

||

|

3 |

Robert Hardy |

17 |

12 |

||

|

4 |

Jane Smith |

12 |

8 |

||

|

5 |

Rodger Dodger |

22 |

17 |

||

|

6 |

Clide Williams |

19 |

16 |

||

|

PAYROLL table |

||

|---|---|---|

|

EMP_NUM |

YTD_SALARY |

YTD_COMMISSION |

|

1 |

$69,595.00 |

$2,595.00 |

|

2 |

$89,498.00 |

$16,323.00 |

|

3 |

$45,000.00 |

$27,123.00 |

|

4 |

$75,000.00 |

$17,000.00 |

|

5 |

$63,000.00 |

$5,000.00 |

|

6 |

$72,898.00 |

$2,993.00 |

Figure 11.1: Example relational database tables to use as base tables for a view

When you execute this SQL statement

CREATE VIEW APPT_SALES_PAY (NAME,APPTS,SALES,SALES_PCT,YTD_SALARY,YTD_COMMISSION) AS SELECT NAME, APPT_COUNT, SALES_COUNT, ((APPT_COUNT / SALES_COUNT) * 100), YTD_SALARY, YTD_COMMISSION FROM SALES_REPS, PAYROLL WHERE SALES_REPS.EMP_NUM = PAYROLL.EMP_NUM

the DBMS stores the definition of the view in the database under the name APPT_SALES_PAY. Unlike the CREATE TABLE statement that creates an actual empty database table in addition to storing the definition of the table in the system catalog, the CREATE VIEW statement only stores the definition of the view.

The DBMS does not create an actual table when you create a view because, unlike a real table, the view does not exist in the database as a set of values in a table. Instead, the rows and columns of data you see through a view are the results produced by the query that defines the view.

After you create a view, you can use it in a SELECT statement as if it were a real table. For example, after you create the APPT_SALES_PAY view (using the CREATE statement that follows Figure 11.1), you can display the results of the query that defines the view by using this SQL statement:

SELECT * FROM APPT_SALES_PAY

When the DBMS sees the reference to a view in an SQL statement, it finds the definition of the view in its system tables. The DBMS then transforms the view references into an equivalent request against the base tables and executes the equivalent SQL statements. For the current example, the DBMS will execute a multi-table select statement (which you will learn about in Tip 205, "Using a SELECT Statement with a FROM Clause for Multi-table Selections") to form the virtual table shown in Figure 11.2, and then display the values in all of the columns in each row of the virtual table.

Figure 11.2: APPT_SALES_PAY view generated from base tables SALES_REPS and PAYROLL

For simple views, the DBMS will construct each row of the view's virtual table "on the fly." Thus, in the current example, the DBMS will extract data from the columns specified in the view definition from one row in the SALES_REPS and PAYROLL tables to create a row in the virtual APPT_SALES_PAY table. Next, the DBMS will execute the SELECT statement to display the fields in the newly created row (of the virtual table). The DBMS will then repeat the procedure (create a virtual row from the two tables and then display the columns of the virtual row), until no more rows in the base tables satisfy the query in the view.

To execute more complex views (such as those used in SQL statements that update data in the base tables), the DBMS must actually materialize the view, meaning that the DBMS will perform the query that defines the view and store the results in a temporary table. The DBMS will then use the temporary table to execute the SQL statement (such as SELECT * FROM APPT_SALES_PAY) that references the view. When the DBMS no longer needs the temporary table (at the completion of the SQL statement), the DBMS discards it. Remember, views do not hold data; they merely display the data stored in their base tables (SALES_REPS and PAYROLL, in the current example).

Whether the DBMS handles a particular view by creating rows on the fly or by pulling the view data into a temporary table, the end result is the same-the user can reference views in SQL statements as if they were real tables in the database.

There are several advantages in using views:

- Provide security. When you don't want a user to see all of the data in a table, you can use a view to let the user see only specific columns. Thus, someone working in the personnel department can see the employee name and address information through a view, while the salary or hourly pay can remain hidden by being excluded from the view.

- Simplify data structures. You can present the database as a "personalized" set of tables. Suppose, for example, that you have separate employee and payroll tables. You can use a view to display employee names and pay figures in a single virtual table for the company's managers.

- Abstract data structures. As time goes on, some users will save SQL queries that they use often, and others may even write Visual Basic or C++ programs that extract data from the database to produce reports. If someone (such as the table owner or database administrator) changes the physical structure of a table by splitting it into two tables, for example, saved user queries may no longer function and application programs may try to access columns that no longer exist. However, if users write their queries or application programs to access data in the "virtual" view tables, you can insulate them from changes to the underlying database structures. When you split a table, for example, you need change the view's query so that it recombines the split tables into the set of columns found in the original view.

- Simplify queries. By using a view to combine the data from several tables into a single virtual table, you make it possible for a user to write SQL queries based on a single table, thus avoiding the complexity of using multi-table SELECT and JOIN statements.

While views provide several advantages, there are two main disadvantages to using them:

- Performance. Since a view is a virtual table, the DBMS must either materialize the data in a view as a temporary table or extract the data in the view's rows on the fly (one row at a time) whenever you use a view in an SQL statement. Thus, each time you use an SQL statement that contains a view reference, you are telling the DBMS to perform the query that defines the view in addition to performing the query or update in SQL statement you just entered.

- Update restrictions. Unfortunately, SQL violates Rule 6 of Codd's rules (you learned about this in Tip 6, "Understanding Codd's 12-Rule Relational Database Definition"), in that not all views are updateable. Currently, SQL limits updateable views to those based on queries on a single table without GROUP BY or HAVING clauses. In addition, to be updateable a view cannot have aggregate functions, calculated columns, or a SELECT DISTINCT clause. And, finally, the view must contain a table key column, and any columns excluded from the view must be NULL-able in the base table.

Due to SQL limitations on what views you can use for updating base tables, you cannot always create views to use in place of base tables. Moreover, in those cases where you can use a view, always weigh the advantages of using the view against the performance hit you take in having the DBMS create virtual tables every time it executes an SQL statement that references a view.

Understanding Schemas

A table consists of rows and columns of data that deal with a specific type of entity such as marketing calls, sales statistics, customers, orders, payroll, and so on. A schema is the collection of related tables. Thus, a schema is to tables what tables are to individual data items. While a table brings together related data items so that they describe an entity when considered a row at a time in a table, the schema is the set of related tables and organizational structure that describe your department or company.

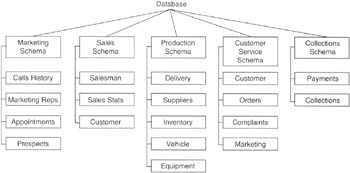

Suppose that you worked in a sales organization with five departments as shown in Figure 12.1.

Figure 12.1: Database with tables grouped into five schemas, one for each department in the company

The tables in the marketing department, for example, might include tables showing the history of marketing calls made, marketing representative appointment setting statistics, an appointment list, and demographic data on prospects. The collections department, meanwhile, would have tables that deal with customer information, payments made on accounts, and collections activity such as scheduling dunning letters and field calls for payment pickups. All of the tables shown in Figure 12.1 would exist in a single database. However, each department has its own set of activities, as reflected in the set tables of data it maintains. Each department's tables could be organized into a separate schema within the company's database.

The schema is more of a "container" for objects than just a "grouping" of related tables because a schema includes:

- Tables. Related data items arranged in columns of rows that describe a physical or logical entity. The schema includes the tables as well as all of the components of a table (described in Tip 8, "Understanding the Components of a Table"), which include the column domains, check constraints, primary and foreign keys, and so on).

- Views. Virtual tables defined by an SQL query that display data from one or more base tables (as described in Tip 11, "Understanding Views"). The schema includes the definition of all views that use base tables of "real" data included in the schema. (As you learned in Tip 11, the virtual tables exist only for the duration of the SQL statement that references the view.)

- Assertions. Database integrity constraints that place restrictions on data relationships between tables in a schema. You will learn more about assertions in Tip 33, "Understanding Assertions."

- Privileges. Access rights that individual users and groups of users have to create or modify table structures, and to query and/or update database table data (or data in only specific columns in tables through views). You will learn all about the SQL security privileges in Tips 135-158.

- Character sets. Database structures used to allow SQL to display non-Roman characters such as Cyrillic (Russian), Kanji (Asian), and so on.

- Collations. Define the sorting sequences for a character set.

- Translations. Control how text character sequences are to be translated from one character set to another. Translations let you store data in Kanji, for example, and display it in Cyrillic, Kanji, and Roman-depending on the user's view of the data. In addition to showing which character(s) in one character set maps to which character(s) in another, translations define how text strings in one character set compare to text strings in another when used in comparison operations.

In short, the schema is a container that holds a set of tables, the metadata that describes the data (columns) in those tables, the domains and constraints that limit what data can be put into a table's columns, the keys (primary and foreign) that limit the rows that can be added to and removed from a table, and the security that defines who is allowed to do what to objects in the schema.

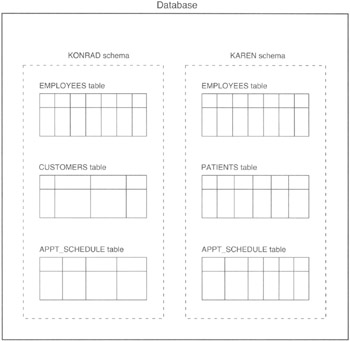

When you use the CREATE TABLE statement (which you will learn about in Tip 46, "Using the CREATE TABLE Statement to Create Tables"), the DBMS automatically creates your table in the default schema for your interactive session, the schema named . Thus, if users Konrad and Karen each log in to the database and execute the SQL statement

CREATE TABLE CALL_HISTORY (PHONE_REP_ID CHAR(3) NOT NULL, PHONE_NUMBER INTEGER NOT NULL, DATE_TO_CALL DATE, TIME_TO_CALL INTEGER, DATE_CALLED DATE NOT NULL, TIME_CALLED INTEGER NOT NULL, HANGUP_TIME INTEGER NOT NULL, DISPOSITION CHAR(4) NOT NULL)

the DBMS will add a table to each of two schemas, as shown in Figure 12.2.

Figure 12.2: Database with two schemas, KONRAD and KAREN

In short, anytime you use the CREATE statement to create a database object such as a table, view, domain, assertion, and so on, the DBMS will create that object in the default "container" schema.

If you want to create an object in a specific schema "container," the container must exist and you must use the qualified name for the object you are creating. Qualified object names are an extension of the qualified table names you learned about in Tip 9, "Understanding Table Names." Instead of using ., use .to place an object in a specific schema.

For example, to create the MARKETING schema shown in Figure 12.1, you could use the SQL statement

CREATE SCHEMA MARKETING AUTHORIZATION KONRAD CREATE TABLE CALLS_HISTORY (PHONE_REP_ID CHAR (3) NOT NULL, PHONE_NUMBER INTEGER, NOT NULL, DATE_CALLED DATE) CREATE TABLE MARKETING REPS (REP_ID CHAR(3), REP_NAME CHAR(25)) CREATE TABLE APPOINTMENTS (APPOINTMENT_DATE DATE, APPOINTMENT_TIME INTEGER, PHONE_NUMBER INTEGER) CREATE TABLE PROSPECTS PHONE_NUMBER INTEGER, NAME CHAR(25), ADDRESS CHAR(35))

to create the MARKETING schema and the structure for its four tables. The AUTHORIZATION predicate in the CREATE SCHEMA statement authorizes Konrad to modify the schema and its objects. As such, Konrad could then use an ALTER TABLE MARKETING.

statement to change the columns, domains, and constraints of columns in the tables included in the MARKETING schema. Moreover, Konrad can create additional tables in the MARKETING schema by specifying the schema name in a CREATE TABLE statement, such as:

CREATE TABLE MARKETING.CONTESTS (DESCRIPTION CHAR(25), RULES VARCHAR(100), WIN_LEVEL1 MONEY, WIN_LEVEL2 MONEY, WIN_LEVEL3 MONEY)

All DBMS products have schema, or "containers," that hold a collection of tables and related objects. However, the name you can give to a schema varies from product to product. Oracle, Informix, and Sybase, for example, require that the schema name and username be the same. Each also limits the types of objects you can define in the CREATE SCHEMA statement. Thus, you must check the syntax of the CREATE SCHEMA statement in your DBMS manual (or Help system) to see what objects can be grouped together in schema "containers" and what names you can give to schema itself.

Understanding the SQL System Catalog

The system catalog is a collection of tables that the DBMS itself owns, creates, and maintains in order to manage the user-defined objects (tables, domains, constraints, schemas, other catalogs, security, and so on) in the database. As a collection, the tables in the system catalog are often referred to as the system tables because they contain data that describes the structure of the database and all of its objects and are used by the database management system in some way during the execution of every SQL statement.

When processing SQL statements, the DBMS constantly refers to the tables in the system catalog to:

- Make sure that all tables or views referenced in a statement actually exist in the database

- Make sure the columns referenced in the SQL statement exist in the tables listed in the target tables list portion of the statement (for example, the FROM section of a SELECT statement)

- Resolve unqualified column names to one of the tables or views referenced in the statement

- Determine the data type of each column

- Check the system security tables to make sure that the user has the privilege necessary to carry out the action described in the SQL statement on the target table (or column)

- Find and apply any primary key, foreign key, domain, and constraint definitions during INSERT, UPDATE, or DELETE operations

By storing the database description as a set of system tables, SQL meets the requirement for a "Dynamic Online Catalog Based on the Relational Model," listed as Rule 4 of Codd's 12 rules that define a relational database (which you learned about in Tip 6, "Understanding Codd's 12-Rule Relational Database Definition").

Not only does an SQL database use the system tables in the system catalog to validate and then execute SQL statements issued by the user or application programs, but the DBMS also makes the data in the system catalog available either directly or through views.

| Note |

The database administrator may limit access to the tables in the system catalog due to security concerns. After all, if you knew the primary key constraints, column name, and data domains for a particular table, you could determine the contents of the column(s) in the primary key-even if you did not have SELECT access to the table itself. Moreover, the structure of the database may itself be a trade secret if table and/or column names give information as the types of data a company in a particular market would find important enough to collect. |

Since the DBMS maintains the data in the system tables so that it accurately describes the structure and contents of the database, user access to the system catalog is strictly read-only. Allowing a user to change the values in system tables would destroy the integrity of the system catalog. After all, if the DBMS is doing its job of maintaining the tables to accurately describe the database, any changes made to the catalog by the user would, by definition, change a correct value into an incorrect one.