The Test Manager

"A writer asked me, 'What makes a good manager?' I replied, 'Good players.'"

— Yogi Berra

What Is Management?

Henry Mintzberg wrote a classic article called "The Manager's Job: Folklore and Fact" for the Harvard Business Review in 1975. In the article, Mintzberg concluded that many managers don't really know what they do. When pressed, many managers fall back on a mantra that many of us have been taught: managers plan, organize, coordinate, and control. And indeed, managers do spend time doing all of these things.

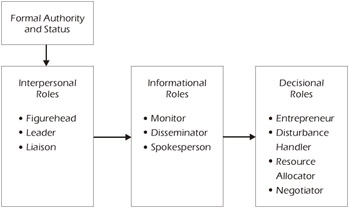

Rather than discuss management in terms of tasks or activities, Mintzberg defined management by a series of roles that managers fulfill. A manager, states Mintzberg, is vested with formal authority over an organization. From this authority comes status, which leads the manager into the various interpersonal roles shown in Figure 10-1. These roles give the manager access to information, which ultimately allows him or her to make decisions. It's instructive to take this model and apply it to a software testing manager.

Figure 10-1: The Manager's Roles

Interpersonal Roles

The test manager is the figurehead for the testing group and, as such, can shape how people outside the group perceive the testing group and its members. In most companies, the test manager is very visible within the entire Information Technology (IT) division and may even be well known in other organizations outside of IT. Examples of this visibility include the manager's role on the Configuration Control Board, Steering Council, or Corporate Strategy Group.

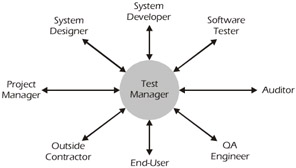

Because of the unique nature of software testing (i.e., evaluating the work of another group, the developers, for use by a third group, the users), the test manager will have to coordinate and work with people from many organizations throughout the company and even with groups and individuals outside of the company. Figure 10-2 shows just a few of the people with whom a test manager may have to deal on a daily basis.

Figure 10-2: Interpersonal Roles of a Test Manager

Another interpersonal role that the test manager plays is, of course, that of leader. As such, the manager is trusted to accomplish the mission of the organization and ensure the welfare of the staff. Refer to the section The Test Manager As a Leader later in this chapter.

Informational Roles

The test manager presents information on a daily basis and is expected to provide status reports, plans, estimates, and summary reports. Additionally, the test manager must serve as a trainer of the testing staff, upper management, development, and the user community. In these roles, the test manager acts as the spokesperson for the testing group.

Decisional Roles

Test managers make hundreds of decisions every day:

- Which tester should get this assignment?

- What tool should we use?

- Should we work overtime this weekend?

- And so on…

One of the major decisional roles that the test manager may take part in is the decision on what to do at the conclusion of testing. Should the software be released or should it undergo further testing? This is primarily a business decision, but the test manager is in a unique position to participate in this decision-making process by advising (the CCB, users, or management) on the quality of the software, the likelihood of failure, and the potential impact of releasing the software.

Management vs Leadership

According to Robert Kreitner and Angelo Kinicki in their book Organizational Behavior, "Management is the process of working with and through others to achieve organizational objectives in an efficient and ethical manner." Effective managers are team players who have the ability to creatively and actively coordinate daily activities through the support of others. Management is about dealing with complexity. Test managers routinely have to work within complicated organizations that possess many formal and informal lines of communication. They deal with complex processes and methodologies, staffs with varying degrees of skill, tools, budgets, and estimates. This is the essence of management.

Key Point

Management is about dealing with complexity. Leadership is about dealing with change.

Kreitner and Kinicki state that leadership is "a social influence process in which the leader seeks the voluntary participation of subordinates in an effort to achieve organizational goals." It's about dealing with change. Leadership is what people possess when they are able to cope with change by motivating people to adopt and benefit from the change. Leadership is more than just wielding power and exercising authority. Leadership depends on a million different little things (e.g., coaching, effectively wandering around, consistency, enthusiasm, etc.) that work together to help achieve a common goal. Management controls people by pushing them in the right direction; leadership motivates them by satisfying basic human needs. In his essay "What Leaders Really Do," John Kotter explains that "management and leadership are complementary." That is, test managers don't need to become test leaders instead of test managers, but they do need to be able to lead as well as manage their organization.

Key Point

"Leadership is a potent combination of strategy and character. But if you must be without one, be without strategy." - General H. Norman Schwarzkopf

Leadership Styles

A debate that has been going on for thousands of years (if you read Thucydides' The Peloponnesian War, you'll see that they were debating the issue over 2000 years ago) is whether leaders are made or born. There are many references on this topic, but, at the end of the day, most conclude that while there are certain personal characteristics that make it easier to be a leader, almost everyone has the potential to be a leader. For example, some people are naturally better communicators than others. Good communication skills are a definite asset to any leader. Still, a determined person can overcome or compensate for a personal deficiency in this area and most others. Certainly, the Marine Corps believes that all Marines are or can become leaders.

We think that what's important for you is to determine what style of leadership best fits your personality. If you're naturally quiet and prefer one-on-one communications, then your leadership style should focus on this strength (of course, you'll still have to talk in front of groups and should work on this skill). If you're naturally extroverted, your leadership style should reflect this trait.

Case Study 10-1: Choosing the correct leadership style is an important part of being an effective leader.

Leadership As a Platoon Commander

As a young Lieutenant stationed in Japan in the 1970s, I was getting my first real taste of leadership as a platoon commander in charge of 30 or so hard-charging Marines. Even after 4 years at the Naval Academy and the Training Program for Marine Lieutenants (appropriately enough, called The Basic School), my leadership style was still very much in the formative stages. There was, however, one Captain whom I thought was a really good leader. The one thing I remember about him was that he yelled a lot at his troops. "Move that cannon over there! Do it now! Hurry up!" You get the picture. At that point, I assumed that one of the reasons he was a good leader was that he was very vocal and yelled at his Marines. So, for the first time in my life, I began yelling.

After a couple of days of this surprising tirade, one of my sergeants came up to me and said, "Sir, you know all that yelling crap? Cut it out, it ain't you" (these may not have been his exact words). Now, it takes some courage for a sergeant to criticize his platoon commander, especially in light of the fact that many new Lieutenants are not yet all that confident in their leadership skills anyway. But, of course, he was right. Yelling was not my style. So I (almost) never yelled at my Marines again, and I like to think that I was a better leader for it. Thank you, Sergeant.

P.S. There's another lesson hidden in the story above: Good leaders learn from their employees.

— Rick Craig

Marine Corps Principles of Leadership

The following list contains a set of leadership principles used by the Marine Corps, but pertinent to every leader:

- Know yourself and continually seek self-improvement.

- Be technically and tactically proficient (know your job).

- Develop a sense of responsibility among your subordinates.

- Make sound and timely decisions.

- Set the example.

- Know your Marines and look out for their welfare.

- Keep your Marines informed.

- Seek responsibility and take responsibility for your actions and the actions of your Marines.

- Ensure that tasks are understood, supervised, and accomplished.

- Train your Marines as a team.

- Employ your command (i.e., testing organization) in accordance with your team's capabilities. (Set goals you can achieve.)[1]

It's easy to see that these principles are as valid for your organization as they are for the Marine Corps. Rick wrote these principles down in his day-timer so he could review them periodically and apply them to his daily routine. It always seems to help him keep what's really important in perspective.

[1] Derived from NAVMC 2767, User's Guide to Marine Corps Leadership Training.

The Test Manager As a Leader

Military-style training for business executives seems to be all the rage these days. There are boot camps and training programs for executives and numerous books on using military leadership skills in the corporate setting. This section uses the model of leadership taught by the Marine Corps.

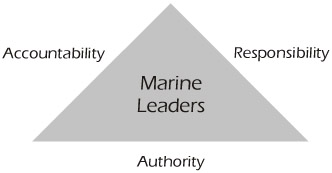

Cornerstones of Leadership

Over the years, as a Marine officer, Rick has had the opportunity to teach many leadership forums for enlisted personnel and officers of various ages and ranks. Authority, responsibility, and accountability (Figure 10-3) are the cornerstones that the Marine Corps uses to teach Marines how to become leaders.

Figure 10-3: Cornerstones of Marine Leaders

Authority

Authority is the legitimate power of a leader. In the case of the test manager, this authority is vested in the manager as a result of his or her position in the organization and the terms of employment with the company that he or she works for. In some test organizations, informal leaders also exist. For example, in the absence of an appointed test lead, an experienced tester may take over the management of a small group of testers and their work. Informal leaders don't receive any kind of power from the organizational hierarchy, but rather they're able to lead due to their influence over the people they're leading. This influence normally exists due to the experience level of the informal leader and his or her willingness to employ some or all of the principles of leadership outlined in this chapter.

Key Point

Some military books are now commonly found in the business section of many libraries and book stores:

Other popular "business" books have a military theme or background:

Responsibility

Responsibility is the obligation to act. The test manager is obligated to perform many actions in the course of each day. Some of these actions are mandated (e.g., daily meetings, status reporting, performance appraisals). Other responsibilities are not mandated, but are required because the leader knows and understands that they will support the ultimate accomplishment of the mission of the organization and its people. Examples include developing metrics and reports to manage the testing effort, rewarding staff members for a job well done, and mentoring new employees.

Accountability

Accountability means answering for one's actions. Test managers are held accountable for maximizing the effectiveness of their organization in determining the quality of the software they test. This means that the manager must test what's most important first (i.e., risk management); they must make use of innovations to achieve greater effectiveness (i.e., tools and automation); they must have a way to measure the effectiveness of their testing group (i.e., test effectiveness metrics); they must ensure the training, achievement, and welfare of their staff; and they must constantly strive to improve the effectiveness of their efforts.

Key Point

Test managers are held accountable for maximizing the effectiveness of their organization in determining the quality of the software they test.

Politics

To many people, the word politics evokes an image of some slick, conniving, controlling person. Really, though, there's nothing negative about the word or business we know as politics. One of the definitions of politics in Webster's Dictionary is "the methods or tactics involved in managing a state or government." For our purposes, we can replace the words "state or government" with "organization" and we'll have a workable definition of politics.

Politics is how you relate to other people or groups. If you, as a manager, say, "I don't do politics," you're really saying, "I don't do any work." Politics is part of the daily job of every manager and includes his or her work to secure resources, obtain buy-in, sell the importance of testing, and generally coordinate and work with other groups such as the development group.

Key Point

The Politics of Projects by Bob Block provides some good insights about the role that politics plays in organizations. Mr. Block's definition of politics is, "those actions and interventions with people outside your direct control that affect the achievement of your goals."

Span of Control

Key Point

A typical effective leader can successfully manage the work of 4 direct reports, while some leaders can effectively manage up to 8.

There's a limit to the number of people that can be effectively managed by a single person. This span of control varies, of course, with the skill of the manager and of the people being managed, and with the environment in which they work. Several years ago, a government agency conducted a study to determine the ideal span of control for a proficient leader. Its conclusion was that a typical effective leader can successfully manage the work of 4 direct reports and some leaders can effectively manage up to 8 (note that "direct reports" do not include administrative personnel such as clerks and secretaries). The Commandant of the U.S. Marine Corps ultimately has over 200,000 active-duty and reserve "employees," but he directs them through only four (three active-duty and one reserve) Division Commanders (i.e., his span of control is four).

We know that some of you are already saying, "That rule doesn't apply to me. I have 20 direct reports and I'm a great manager." Well then, good for you. But we believe that if you have large numbers of direct reports and you're a good and effective manager, there's something else happening: informal leaders have emerged to coordinate, mentor, counsel, or even supervise some of their colleagues.

There's nothing inherently wrong with these informal "chains of command," other than the extra burden they put on the informal leaders. These informal leaders have to function without organizational authority and prestige, and consequently have to work even harder to succeed. They are also, of course, not being paid for this extra work, which can have long-term implications for their overall morale. One last thought on the subject: this situation is an opportunity to create the career path that we talked about in the Software Tester Certifications section of Chapter 9.
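A narrow span of control does not cap the size of an organization; it only adds layers. The sketch below makes that arithmetic concrete. It is a minimal illustration, not a model of any real chain of command: the helper name `levels_needed` is hypothetical, and it assumes a perfectly uniform hierarchy in which every leader has exactly `span` direct reports. It counts how many layers below the top leader are needed before the hierarchy can hold a given number of people.

```python
def levels_needed(people: int, span: int) -> int:
    """Return the smallest number of layers below the top leader needed so
    that a uniform hierarchy with the given span of control holds at least
    `people` members (the top leader is not counted).

    Assumption: every leader has exactly `span` direct reports. This is an
    idealization used only to illustrate the arithmetic of span of control.
    """
    if people < 0 or span < 1:
        raise ValueError("people must be >= 0 and span must be >= 1")
    if span == 1:
        return people  # a simple chain: one new person per layer
    levels = 0
    layer_size = 1  # the top leader alone
    total = 0       # people below the top leader counted so far
    while total < people:
        layer_size *= span  # each person in the previous layer leads `span` people
        total += layer_size
        levels += 1
    return levels


if __name__ == "__main__":
    # Hypothetical comparison of spans for an organization of 200,000 people.
    for span in (4, 8, 20):
        print(f"span {span}: {levels_needed(200_000, span)} layers")
```

Under these assumptions, a span of four needs nine layers to reach 200,000 people and a span of eight needs six; stretching the span to 20 removes only one more layer while putting far more load on every leader. That trade-off is one way to see why the informal leaders described above tend to appear when a manager's direct reports grow well beyond the recommended range.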

Effective Communication

Poor communication is one of the more frequently cited frustrations of testers. It's important to establish good communications channels to resolve conflicts and clarify requirements. Some of these communications channels will naturally develop into an informal network, while others (e.g., configuration management, defect reporting, status reporting) need to be made formal.


One of the most important jobs of the test manager is to provide feedback to the team members. Testers are anxious to know when they've done a good job or, on the other hand, if they missed a critical bug. In particular, testers need feedback on the metrics that they're required to collect. Without the feedback loop, they'll feel like they're feeding a "black hole." If no feedback is provided, some testers will stop collecting metrics and others will record "any old data."

Feedback loops don't just go down through the ranks. Staff members must feel comfortable communicating with their manager. One way to foster this communication is to establish an open-door policy. Basically, the test manager should make it known that any staff member can visit him or her at any time to discuss job-related or personal issues. This open-door policy goes a long way toward developing rapport with the staff and creating the loyalty that all leaders need.

The Test Manager's Role in the Team

Test managers play an important role in the testing team. These managers are responsible for justifying testers' salaries, helping to develop career paths, building morale, and selling testing to the rest of the organization.

Equal Pay for Equal Jobs

Traditionally, test engineers have received less pay than their counterparts in the development group. Lately, we've seen a gradual swing toward parity in pay between the two groups. If your test engineers receive less pay than the developers, it's important to determine why:

- Is it because the developers have more experience?

- Is it because the developers require more training or have to be certified?

- Is it because software development is seen as a profession and testing is not?

- Or is it just culture or tradition that developers receive more pay?

Key Point

A good source of salary information is www.salary.com.

As a test manager, you should determine if a pay disparity exists. If it does exist and the reason is only culture or tradition, you should fight the battle for parity of pay for your staff. Even if you don't succeed, your fight will help earn the loyalty and respect of your staff. If your engineers receive less pay because they have less training or are perceived as less professional, you have some work to do before lobbying for equal pay. You might consider:

- implementing a formal training curriculum for your testers.

- providing an opportunity for certification using one of the programs described in the Software Tester Certifications section of Chapter 9.

- developing a career path so that test engineers have a logical way to advance in the organization without transferring out of the testing organization.

- embarking on a "marketing" campaign that extols the benefits and return on investment of testing to the rest of the company.

- developing and using metrics that measure the value of testing and measure test effectiveness.

The only way you can fail is to throw up your hands and declare, "This is the way it has always been and this is the way it will always be!"

Career Path Development

It's desirable to have a clear career path within the testing organization. If the only way testers can advance in pay and prestige is by transferring to development or some other area within your organization, many of your best people will do just that. In larger organizations, it may be possible to establish different testing roles or positions with established criteria for moving from one role to another. For example, one company that we visit frequently has established the formal positions listed in Table 10-1 for various testing jobs.

| Position | Primary Function |
|---|---|
| Tester | Executes tests. |
| Test Engineer | Develops and executes test cases. |
| Test Analyst | Participates in risk analysis, inventories, and test design. |
| Lead Test Analyst | Acts as a mentor and manages one of the processes above. |
| Test Lead | Supervises a small group of testers. |
| Test Manager | Supervises the entire test group. |

Even if you work in a smaller test organization with only one or two testers, it's possible to create different job titles, advancement criteria, and possibly step increases in pay to reward testers for their achievements and progress toward becoming a testing professional.

Desktop Procedures

It is the mark of a good manager if his or her subordinate can step in and take over the manager's job without causing a disruption to the organization. One tool that Rick uses in the Marine Corps (when he's stuck at a desk job) to help ease this transition is the desktop procedure. Desktop procedures are simple instructions that describe all of the routine tasks that must be accomplished by a manager on a daily or weekly basis. These tasks may include reports that must be filed, meetings attended, performance appraisals written, etc.

Key Point

Desktop procedures are simple instructions that describe all of the routine tasks that must be accomplished by a manager on a daily or weekly basis.

Desktop procedures are often supplemented by what are called turnover files. Turnover files are examples of reports, meeting minutes, contact lists, etc. that, along with the desktop procedures, facilitate a smooth transition from one manager to another. We're certain that many of our readers already use similar tools, possibly with different names. But if you don't, we urge you to create and use these simple and effective tools.

Staying Late

Many managers have reached their position because they're hard workers, overachievers, or in a few cases just plain workaholics. For them, becoming a manager is an opportunity to continue the trend to work longer and longer hours. This may be done out of love for the job, dedication, inefficiency, or for some other reason. No matter what the reason is for the manager routinely putting in 12-hour days, it sends a signal to the staff that needs to be understood. How does your staff "see" you? Are you seen as:

- dedicated for working long hours?

- inefficient and having to work late to make up for this inefficiency?

- untrusting of the staff to do their job?

Are you sending a signal that if they become managers, they will have to forgo their personal lives altogether, which will no doubt discourage some of them from following in your footsteps?

One final thought on this subject. There's a culture that exists in a few organizations (including some parts of the military) that urges staff members to arrive as early as the boss and not leave until he or she departs. This is a true morale buster. Often staff members stay late just because the boss is there, even if they have nothing to do or are too burned out to do it. This can foster resentment, plummeting morale, and eventually lower efficiency. Luckily, this culture is not too prevalent, but if it describes your culture and you're the boss, then maybe it's time to go home and see your family!

Motivation

In a Marine Corps Leadership symposium, motivation was simply defined as "the influences that affect our behavior." In his essay "What Leaders Really Do," John Kotter explains that leaders motivate by:

- articulating the organization's vision in a manner that stresses the values of the audience they are addressing.

- involving the members in deciding how to achieve the vision.

- helping employees improve their self-esteem and grow professionally by coaching, feedback and role modeling.

- rewarding success.

We believe that John Kotter's model of what motivates people is accurate and usable. We hope, though, that test managers will remember that different people are motivated by different things. Some people are motivated by something as simple as a pat on the back or an occasional "well done." Other people expect something more tangible such as a pay raise or promotion. Test managers need to understand what motivates their testers in general and, specifically, what motivates each individual staff member. Good leaders use different motivating techniques for different individuals.

Key Point

Good leaders use different motivating techniques for different individuals.

At testing conferences, we often hear discussions about what motivates testers as opposed to developers. Developers seem to be motivated by creating things (i.e., code), while testers are motivated by breaking things (i.e., test). While we agree that some testers are motivated by finding bugs, we don't think they're really motivated by the fact that a bug was found as much as they're motivated by the belief that finding that bug will ultimately lead to a better product (i.e., they helped build a better product).

While we're talking about rewards and motivation, we would like to reward you for taking the time to read this book. Send an e-mail to and we'll send you a coupon good for 25% off your next bill at Rick's restaurant, MadDogs and Englishmen, located in Tampa, Florida. Sorry, plane tickets are not included.

Key Point

Don't forget to e-mail Rick to get a free coupon for 25% off your next bill at his restaurant, MadDogs and Englishmen.

Building Morale

Morale can be defined as "an individual's state of mind," or morale can refer to the collective state of mind of an entire group (e.g., the testing organization). The major factor affecting the morale of an organization is the individual and collective motivation of the group. Test managers need to be aware of the morale of their organization and be on the lookout for signs of poor morale, which can rob an organization of its effectiveness. Signs of declining morale include:

- Disputes between workers.

- Absenteeism (especially on Friday afternoon and Monday morning).

- An unusual amount of turnover in staff.

- Requests for transfer.

- Incomplete work.

- Poor or shoddy work.

- Change in appearance (dress, weight, health).

- Lack of respect for equipment, work spaces, etc.

- Disdain for authority.

- Clock-watching.

Key Point

Morale can be defined as "an individual's state of mind" or refer to the collective state of mind of an entire group (e.g., the testing organization).

Just because some of these signs exist doesn't necessarily mean that you have a morale problem. But if the trend of one or more of these signs is negative, you may have cause for concern.

Managers who see morale problems should discuss them with their staff, try to determine the causes, and try to find solutions. Sometimes, morale can be affected by a seemingly trivial event that an astute test manager can nip in the bud. For example, we encountered one organization that had a morale problem because a new performance appraisal system was instituted without adequately explaining it to the staff members who were being measured. Another organization had a morale problem because the testers thought that the way bonuses were allocated discounted their efforts when compared to the developers. Promoting frequent and open dialogue between the test manager and his or her staff can go a long way toward maintaining the morale of the group. Or, as the Marines would say, "Keep your Marines informed."

Key Point

Promoting frequent and open dialogue between the test manager and his or her staff can go a long way toward maintaining the morale of the group.

Selling Testing

An important part of the test manager's job is to sell the importance of testing to the rest of the Information Technology (IT) division, the user community, and management. This is obviously an instance of trying to achieve buy-in from the aforementioned groups and others. The test manager should always be on the lookout for opportunities to demonstrate the value of testing. This might mean speaking up in a meeting, making a presentation, or customizing metrics for the developer, user, or whomever.

Key Point

If a test manager has a mentor, he or she may be able to use the mentor as a sponsor (i.e., a member of management who supports and promotes the work of the test group).

The test manager must focus on the value of testing to the entire organization and show what testing can do for each of the various organizational entities. For example, not only does creating test cases support the testing of future releases, but the test cases themselves can also be used as documentation of the application. When selling testing, many managers fail to emphasize the larger role that testing can play in producing and maintaining quality software.

This job of promoting the value of testing will have an impact on staffing and budget requests, the morale of the testing group, the role of the test manager in decision-making groups such as the Change Control Board (CCB), working relationships with other groups such as development, and the general perception of the testing group by the rest of the organization.

Manager's Role in Test Automation

It's not the job of the test manager to become an expert on testing tools, but he or she does have an important role in their implementation and use. There was a fairly extensive discussion in Chapter 6 on the pitfalls of testing tools and, essentially, it's up to the test manager to avoid those pitfalls. Additionally, the test manager should:

- be knowledgeable on the state of the practice.

- obtain adequate resources.

- ensure that the correct tool is selected.

- ensure that the tool is properly integrated into the test environment.

- provide adequate training.

- identify and empower a champion.

- measure the return on investment (ROI) of the tool.

Knowledgeable on State of Practice

Each test manager should understand the basic categories of tools and what they can and cannot do. For example, a test manager should know what the capabilities and differences are between a capture-replay tool, a load testing tool, a code coverage tool, etc. This knowledge of tools should include an awareness of the major vendors of tools, the various types of licensing agreements available, and how to conduct tool trials.

Obtain Adequate Resources

Part of the test manager's job is to obtain the funding necessary to procure the tool, implement it, maintain it, and provide training and ongoing support. Remember that the cost of implementing the tool and providing training often exceeds the cost of the tool itself. While a large part of the training cost is typically incurred early on, the manager must budget for ongoing training throughout the life of the tool because of staff turnover and updates to the tool.

Ensure Correct Tool Is Selected

Even if your company has an organization that is assigned the primary job of selecting and implementing testing tools, the test manager must still ensure that the correct tool is chosen for his or her testing group. We know of many test managers who have had very bad experiences with some tools that were "forced upon them" by the resident tool group. A tool that works well for one testing group may not work well for another.

Key Point

Just because a tool works well for one testing organization doesn't mean that it will work well for your organization.

The good news for those of you who don't have a tools group in your company is that you don't have to worry about having the tools gurus force you to use the wrong tool. On the other hand, it's now up to you to do everything:

- Formulate the requirements for the tool.

- Develop the strategy for the tool use.

- Create the implementation, maintenance, and training plans.

- And so forth…

Ensure Proper Integration of Tool

In tool implementation, one of the most important roles of the test manager is to ensure that the tool is properly integrated into the test environment. For the tool to be successfully used, it must support the testing strategy outlined by the testing manager in the organizational charter and in the master test plan(s). This will require the testing manager to determine what's most useful to automate, who should do the automation, and how the automated scripts will be executed and maintained.

Provide Adequate Training

Many tools are difficult to use, especially if the testers who will be using them don't have any experience in programming or have a fear of changing the way they do business. Training should include two things:

- Teaching testers how to use the tool (literally, which keys to push).

- Teaching testers how to use the tool to support the testing strategy outlined by the test manager.

The first type of training (mentioned above) will most likely be provided by the vendor of the tool. The second type of training may have to be created and conducted within the testing organization, since it's very specific to the group that's using the tool.

Identify and Empower a Champion

Tool users, especially less experienced ones, can easily get frustrated if there's no one readily available to answer their questions. The tool vendor's customer support staff can help with routine questions regarding the mechanics of the tool, but many of them are unable to answer questions about how the tool is being used at a particular company or organization. That's where the champion comes in. The test manager should seek an enthusiastic employee who wants to become an expert on the tool, and then provide that employee with advanced training, time to help in the tool implementation, and ultimately the time to help peers who have difficulty using the selected tool(s).

Measure the Return on Investment

We've already explained that tools are expensive to purchase and implement. A surprising number of tools that are purchased are never used or are used sporadically or ineffectively. It's up to the test manager to determine if the cost and effort to continue to use the tool (e.g., maintenance, upgrades, training, and the actual use) are worthwhile.

It's very painful to go to management and present a powerful argument to spend money for the procurement of a tool and then later announce that the return on investment of that tool doesn't warrant further use. Unfortunately, some test managers throw good money after bad by continuing to use a tool that has proven to be ineffective.
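The continue-or-drop decision described above can be framed as simple arithmetic. The sketch below is a minimal illustration, assuming you can put a price on the ongoing costs the text lists (maintenance, upgrades, training, and the effort of actually using the tool) and can estimate the savings, here assumed to be manual testing hours no longer needed. The function name `tool_roi`, the cost categories, and every figure are hypothetical.

```python
def tool_roi(annual_savings: float, annual_costs: dict[str, float]) -> float:
    """Return return on investment as a fraction: (savings - costs) / costs.

    A positive value means the tool pays for itself over the period;
    a negative value means continued use costs more than it saves.
    """
    total_cost = sum(annual_costs.values())
    if total_cost <= 0:
        raise ValueError("total cost must be positive to compute ROI")
    return (annual_savings - total_cost) / total_cost


# Hypothetical yearly costs of keeping the tool in service.
costs = {
    "maintenance": 5_000.0,
    "upgrades": 2_000.0,
    "training": 3_000.0,
    "script_upkeep": 10_000.0,  # staff time spent maintaining automated scripts
}

# Hypothetical savings: 400 manual test hours avoided at 60 per hour.
savings = 400 * 60.0

print(f"ROI: {tool_roi(savings, costs):.0%}")  # prints "ROI: 20%"
```

Recomputing this figure every period, rather than only once at purchase time, is what lets a manager notice a tool that has stopped earning its keep.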

The Test Manager's Role in Training

According to the Fleet Marine Force Manual 1 (FMFM1), commanders should see the development of their subordinates as a direct reflection on themselves. Training has been mentioned repeatedly in this book under various topics (e.g., test planning, implementation of tools, process improvement, etc.). This section deals specifically with the test manager's role in training, common areas of training, and some useful training techniques.

| Key Point |

According to the Fleet Marine Force Manual 1 (FMFM1), commanders should see the development of their subordinates as a direct reflection on themselves. |

One common theme that appears repeatedly in most leadership and management books is that the leader is a teacher. Ultimately a manager or leader is responsible for the training of his or her staff. This is true even in companies that have a training department, formal training curriculum, and annual training requirements. Just because the manager is responsible for the training doesn't mean that he or she must personally conduct formal training, but it does mean that he or she should ensure that all staff members receive adequate training in the required areas, using an appropriate technique.

Topics That Require Training

The topics that managers should provide training in include software testing, tools, technical skills, business knowledge, and communication skills.

Testing

Many of us are proud to proclaim that we're professional testers and, certainly, testing is now regarded by some as a "profession." Since this is the case, it only follows that testers, as professionals, should be trained in their chosen career field. There are formal training seminars, conferences on testing and related topics, Web sites, certification programs and books that deal specifically with testing. In fact, a few universities are now providing classes with a strong testing or QA focus. Managers need to provide the time, money, and encouragement for their testers to take advantage of these and other opportunities.

Tools

In order to be effective, testing tools require testers to obtain special training. Test managers will want to arrange formal training classes with the vendor of the tools they use, as well as informal coaching from the vendor and/or champion. On-site training will need to be conducted to show your team how the tool is supposed to be used to support the organization's testing strategy.

Technical Skills

Testers who possess certain skills, such as programming, are often more effective because they better understand how a system works rather than just whether it works. Some testers have also told us that they have more confidence speaking to developers and other technical people if they understand exactly what the developers do. Other testers have also told us that even though they never had to use their programming skills, just having those skills seemed to give them more credibility when talking with developers. Programming skills can also help testers understand how to automate test scripts.

| Key Point |

Some types of applications (e.g., Web) require testers to have some technical knowledge just to be able to properly do functional testing. |

Business Knowledge

A common complaint that we hear about testers (usually from the business experts) is that they don't really understand the business aspects of the software that they're testing. In particular, doing risk analysis, creating inventories and employing preventive testing techniques require testers to understand the underlying business needs that the application is supporting. To alleviate this problem, test managers should arrange to have their staffs trained in the business functions. A test manager at an insurance company that we often visit, for example, encouraged his staff members to study insurance adjusting and underwriting. Other test managers arrange to have their staff visit customers at their site, work the customer support desk for a day or two, or attend a college course related to their company's industry.

Communication Skills

Testers have to communicate, both verbally and in writing, with people (e.g., developers, QA, users, managers, etc.) who have a wide range of backgrounds and viewpoints. Providing training in speaking and writing skills can help facilitate the interaction of the testing staff with each other, and with people throughout the rest of the company and even other companies. One good way to improve the communication skills of the testers (and test manager) is to urge them to submit papers and participate as speakers at a conference. Nothing improves communication skills better than practice.

Methods of Training

Test managers have several options available to them in choosing a method for training their staff members: mentoring, on-site commercial training, training in a public forum, in-house training, and specialty training.

Mentoring

Mentoring is a powerful technique for training staff members, especially newer ones. It's a process where a less experienced person (e.g., a new-hire in a discipline such as testing) works with a more experienced staff member. The mentor's job is to help train and promote the career of his or her understudy. Mentoring includes teaching understudies about the politics of the organization, rules, etc., as well as teaching them about the methods, tools, and processes in place within the organization. Assigning a mentor to a new hire also sends a clear message to the new employee, "You're important to us." There are few things worse than reporting to a new organization or company and sitting around for days or weeks without anything productive to do.

| Key Point |

Understudies are not the only ones who benefit from mentoring programs. People who volunteer or are chosen to be mentors also benefit by gaining recognition as experts in their field and the opportunity to hone their communication and interpersonal skills. |

In some companies, mentors are assigned. In this case, mentors have to be trained in how to be mentors and what they have to teach their understudies. Some people seem to be naturally good mentors, while others are less effective. No one should be forced to be a mentor if they don't want to be one. If they're assigned against their wishes, unwilling mentors will almost always be ineffective in their roles because they impart a negative attitude to their understudies.

Some people voluntarily (and without any organizational support) seek out understudies to mentor. Similarly, some employees will seek out a person to be their mentor. Both of these scenarios are signs of motivated employees and normally exist in organizations with high morale.

On-Site Commercial Training

It's possible to contract with an individual or another company to bring training into your organization. This training can be particularly valuable because it allows the employees of a test group to train as a team. Sometimes, the training can even be customized to meet the unique needs of the group being trained. Even if the training materials are not customized in advance, a good commercial trainer should be able to customize the training/presentation to a certain degree based on the interaction of the participants.

| Key Point |

Even if the training materials are not customized in advance, a good commercial trainer should be able to customize the training/presentation to a certain degree based on the interaction of the participants. |

One common problem with on-site training is that participants are often "pulled out" of the class to handle emergencies (and sometimes just to handle routine work). The loss of continuity greatly degrades the value of the training for the person who is called out of class, and also reduces the effectiveness of the training for the other participants by disrupting the flow of the presentation. One way to overcome this problem is to conduct "on-site" training at a local hotel conference room or some other venue removed from the workplace.

Training in a Public Forum

In some cases, it's a good idea to send one or more of your staff members to a public training class. This is especially useful if your group is too small to warrant bringing in a professional instructor to teach your entire team, or if you have a special skill that only one or two staff members need (e.g., the champion of a testing tool). The downside of public training classes is that the instructor has little opportunity to customize the material for any one student, because the audience is composed of people from many different backgrounds and companies. On the positive side, public forum training classes allow your staff to interact with and learn from people from other companies. Some employees will also feel that their selection to attend a public training class is a reward for a job well done, or recognition of the value of the employee.

| Key Point |

Some employees will feel that their selection to attend a public training class is a reward for a job well done, or recognition of the value of the employee. |

In-House Training

Sometimes, due to budget constraints, the uniqueness of the environment, or the small size of an organization, it may be necessary to conduct in-house training. The obvious drawback here is that it can be expensive to create training materials and to train the trainer. Another drawback is that an in-house trainer may not be as skilled a presenter as a professional trainer.

Remember that the biggest cost of training is usually the time your staff spends in class, not the cost of the professional trainer. So, having your staff sit through an ineffective presentation may cost more in the long run than hiring a professional trainer. Also, just like on-site commercial training, in-house training is subject to disruptions caused by participants leaving to handle emergencies in the workplace.

| Key Point |

Remember that the biggest cost of training is usually the time your staff spends in class, not the cost of the professional trainer. So, having your staff sit through an ineffective presentation may cost more in the long run than hiring a professional trainer. |

On the positive side, having one of your team members create and present the training class can be a great learning experience and motivator for the person doing the presentation.

Test managers from larger companies may have an entire training department dedicated to IT or even software testing. In that case, your in-house training will bear more resemblance to commercial training that happens to be performed in-house, than it does to typical in-house training.

Specialty Training

There are many types of specialty training programs available today: Web-enabled training, virtual training conferences, and distance learning are just a few. While all of these are valuable training techniques, they seldom replace face-to-face training.

Case Study 10-2: Will distance learning ever truly replace face-to-face learning in a classroom setting?

Is Distance Learning a Viable Solution?

To: Mr. James Fallows

The Industry Standard

September 20, 2000

Dear James,

I always read your column with interest, and the one on September 18 really grabbed my attention. I travel every week to a different city, state, or country to give seminars on software testing. You would think that being a consultant and trainer in a technical area would encourage me to buy into some of the new "distance learning" techniques, but so far I am unimpressed. And believe me when I say that I'm tired of traveling and want to be convinced that there is a better way of communicating than having me board a plane every week.

Over the years, I have seen several stages of evolution that were going to replace face-to-face meetings and training. First we had programmed learning in the old "green screen" days. This was useful for rote learning but didn't encourage much creative thought. Next, my talks were videotaped. Most of my students think I'm a fairly dynamic speaker, but occasionally they doze even when I'm there in person interacting with them, so you can imagine how effective a two-dimensional CRT is. So video didn't work, at least not very well. We tried the live video feed with interactive chat rooms. That was only marginally more successful. Recently, I was asked to speak at a virtual computer conference. I can't even imagine how that would succeed…

Still, I'm not totally a Neanderthal. I have participated in many successful video-teleconferences. These seem to work best with people who are comfortable on the phone and in front of a camera. A well-defined agenda is a must, and the meetings have to be short. My biggest complaint about all forms of "interactive" distance communications is the tendency for many of the participants (okay, I'm talking about me) to lose their train of thought without the visual and emotional stimulation of a human being in close proximity.

I do have high hopes for distance education as opposed to training. By this I mean education in which assignments, projects, and research are interspersed among short recorded or interactive lectures, ultimately leading to a broad understanding of a topic or even a degree. For a traveler like me, this is an ideal way to continue to learn without having to be physically present. I could, for example, continue my education while in my hotel room in Belfast or Copenhagen or wherever. The idea is to use short periods of time at the convenience of the student. Training, on the other hand, often requires a fairly intensive infusion of information on a specific topic geared particularly to the audience at hand. To me, this needs to be highly interactive and face-to-face.

Even in the area of sales, I have found no substitute for face-to-face meetings. We have a reasonable record of closing training/consulting deals using the Internet, mail, etc. but have an almost perfect record when we have actually gone to visit the client in person. Is it the medium? Is it training in how to use the technology? Is it that by actually arriving on the client's doorstep, we show that we're serious? I don't really know. But for the moment, I'm going to rack up some serious frequent-flier miles. I personally believe there will never come a time when advanced technology replaces face-to-face human interaction.

- Rick Craig

Published in the Industry Standard, September 2000.

Metrics Primer for Test Managers

It's very difficult for a test manager to do his or her job well without timely, accurate metrics to help estimate schedules, track progress, make decisions, and improve processes. The test manager is in a very powerful position, because testing is itself a measurement activity that results in the collection of metrics relating to the quality of the software developed (usually by a different group). These metrics, though, can be a double-edged sword for the manager. It's relatively easy to create dissatisfaction or organizational dysfunction, make decisions based on incorrect metrics, and cause your staff to be very, very unhappy if metrics are not used judiciously.

| Key Point |

Testing is itself a measurement activity that results in the collection of metrics relating to the quality of the software developed. |

Software Measurements and Metrics

Many people (including us) do not normally differentiate between the terms software measurement and software metrics. In his book Making Software Measurement Work, Bill Hetzel has defined each of these terms as well as the terms software meter and meta-measure. While we don't think it's imperative that you add these terms to your everyday vocabulary, it is instructive to understand how each of the terms describes a different aspect of software measurement.

| Term | Definition |
| --- | --- |
| Software Measure | A quantified observation about any aspect of software: product, process, or project (e.g., raw data, lines of code). |
| Software Metric | A measurement used to compare two or more products, processes, or projects (e.g., defect density [defects per line of code]). |
| Software Meter | A metric that acts as a trigger or threshold. That is, if a certain threshold is met, then some action is warranted (e.g., exit criteria, suspension criteria). |
| Meta-Measure | A measure of a measure, usually used to determine the return on investment (ROI) of a metric. Example: in software inspections, a measure is usually made of the number of defects found per inspection hour. This is a measure of the effectiveness of another measurement activity (an inspection is a measurement activity). Test effectiveness is another example, since test effectiveness is a measure of the testing, which is itself a measurement activity. |

Benefits of Using Metrics

Metrics can help test managers identify risky areas that may require more testing, training needs, and process improvement opportunities. Metrics can also help test managers control and track project status; provide a basis for estimating how long testing will take; justify requests for budget, tools, or training; and provide meters that flag when an action must be taken.

Lord Kelvin has a famous quote that addresses using measures for control:

"When you can measure what you are speaking about and express it in numbers, you know something about it; but when you cannot measure, when you cannot express it in numbers, your knowledge is of a meager and unsatisfactory kind: it may be the beginning of knowledge, but you have scarcely, in your thoughts, advanced to the stage of science."

It seems that about half of the speakers at software metrics conferences use the preceding quote at some point in their talk. Indeed, this quote is so insightful and appropriate to our topic that we too decided to jump on the bandwagon and include it in this book.

Identify Risky Areas That Require More Testing

This is what we called the Pareto Analysis in Chapter 7. Our experience (and no doubt yours as well) has shown that areas of a system that have been the source of many defects in the past will very likely be a good place to look for defects now (and in the future). So, by collecting and analyzing the defect density by module, the tester can identify potentially risky areas that warrant additional testing. Similarly, using a tool to analyze the complexity of the code can help identify potentially risky areas of the system that require a greater testing focus.

| Key Point |

By collecting and analyzing defect density by module, the tester can identify potentially risky areas that warrant additional testing. |
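The defect-density analysis just described can be sketched in a few lines. This is a minimal illustration, not a prescribed method: it assumes you already have, per module, a defect count from earlier releases and a size in KLOC. The 80% cutoff in `pareto_modules` is the conventional Pareto rule of thumb and is an assumption; the class, function names, and data are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ModuleStats:
    name: str
    defects: int  # defects attributed to the module (e.g., from earlier releases)
    kloc: float   # module size in thousands of lines of code


def rank_by_defect_density(modules: list[ModuleStats]) -> list[tuple[str, float]]:
    """Return (module, defects per KLOC) pairs, highest density first."""
    ranked = [(m.name, m.defects / m.kloc) for m in modules if m.kloc > 0]
    return sorted(ranked, key=lambda pair: pair[1], reverse=True)


def pareto_modules(modules: list[ModuleStats], share: float = 0.8) -> list[str]:
    """Return the fewest modules (most defects first) that account for `share` of all defects."""
    total = sum(m.defects for m in modules)
    selected: list[str] = []
    running = 0
    for m in sorted(modules, key=lambda m: m.defects, reverse=True):
        if running >= share * total:
            break
        selected.append(m.name)
        running += m.defects
    return selected


# Hypothetical defect history.
modules = [
    ModuleStats("billing", 42, 6.0),
    ModuleStats("claims", 30, 12.0),
    ModuleStats("reports", 5, 4.0),
    ModuleStats("login", 3, 1.5),
]

print(rank_by_defect_density(modules))
# [('billing', 7.0), ('claims', 2.5), ('login', 2.0), ('reports', 1.25)]
print(pareto_modules(modules))  # ['billing', 'claims']
```

The two views can disagree: claims has the second-highest defect count but only a modest density, so a manager would usually look at both before deciding where to add tests.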

Identify Training Needs

In particular, metrics that measure information about the type and distribution of defects in the software, testware, or process can identify training needs. For example, if a certain type of defect, say a memory leak, is encountered on a regular basis, it may indicate that training is required on how to prevent the creation of this type of bug. Or, if a large number of "testing" defects are discovered (e.g., incorrectly constructed test cases), it may indicate a need to provide training in test case design.
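A simple way to spot a defect type that keeps recurring is to look at each type's share of all recorded defects. The sketch below assumes each defect in the log has already been assigned a category; the 25% threshold, the function name, and the sample categories are hypothetical choices for illustration only.

```python
from collections import Counter


def recurring_defect_types(defect_types: list[str], min_share: float = 0.25) -> list[tuple[str, int]]:
    """Return defect types that make up at least `min_share` of all defects, most frequent first."""
    counts = Counter(defect_types)
    total = sum(counts.values())
    if total == 0:
        return []
    return [(kind, n) for kind, n in counts.most_common() if n / total >= min_share]


# Hypothetical defect log, one category per recorded defect.
log = (
    ["memory leak"] * 5
    + ["incorrect test case"] * 3
    + ["UI layout"]
    + ["off-by-one"]
)

for kind, n in recurring_defect_types(log):
    print(f"{kind}: {n} of {len(log)} defects -> candidate training topic")
```

A flagged category is only a prompt for a closer look; as the next section notes, the better response may be a process change rather than training.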

Identify Process Improvement Opportunities

The same kind of analysis described above can be used to locate opportunities for process improvement. In all of the examples in the section above, rather than providing training, maybe the process can be improved or simplified, or maybe a combination of the two can be used. Another example would be that if the test manager found that a large number of the defects discovered were requirements defects, the manager might conclude that the organization needed to implement requirements reviews or preventive testing techniques.

Provide Control/Track Status

Test managers (and testers, developers, development managers, and others) need to use metrics to control the testing effort and track progress. For example, most test managers use some kind of measurement of the number, severity, and distribution of defects, and number of test cases executed, as a way of marking the progress of test execution.
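The status measures mentioned above (test cases executed, and the number and severity of defects) can be summarized from the same records testers already keep. This sketch assumes each test case carries one of four status values and that each defect is recorded as a (severity, is-open) pair; those status names, the decision to count blocked cases as not executed, and all data are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class CaseResult:
    case_id: str
    status: str  # assumed values: "passed", "failed", "blocked", "not run"


def execution_progress(cases: list[CaseResult]) -> dict[str, float]:
    """Summarize test execution; blocked and not-run cases count as not executed."""
    counts = Counter(c.status for c in cases)
    total = len(cases)
    executed = counts["passed"] + counts["failed"]
    return {
        "total": total,
        "executed": executed,
        "pct_executed": round(100 * executed / total, 1) if total else 0.0,
        "pct_passed_of_executed": round(100 * counts["passed"] / executed, 1) if executed else 0.0,
    }


def open_defects_by_severity(defects: list[tuple[int, bool]]) -> Counter:
    """Count open defects per severity level; each defect is a (severity, is_open) pair."""
    return Counter(severity for severity, is_open in defects if is_open)


statuses = ["passed"] * 6 + ["failed"] * 2 + ["blocked", "not run"]
cases = [CaseResult(f"TC-{i}", s) for i, s in enumerate(statuses, start=1)]
defects = [(1, True), (2, True), (2, False), (3, True), (2, True)]

print(execution_progress(cases))
print(open_defects_by_severity(defects))
```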

Provide a Basis for Estimating

Without some kind of metrics, managers and practitioners are helpless when it comes to estimation. Estimates of how long the testing will take, how many defects are to be expected, the number of testers needed, and other variables have to be based upon previous experiences. These previous experiences are "metrics," whether they're formally recorded or just lodged in the head of a software engineer.
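Formally recorded experience can be turned into a first-cut estimate by scaling historical rates to the size of the new work. The sketch below makes a strong simplifying assumption, that defects and test effort grow linearly with size in KLOC, and it only makes sense when the earlier releases are comparable to the new one. The function name and the historical figures are hypothetical.

```python
def estimate_from_history(history: list[tuple[float, int, float]], new_kloc: float) -> tuple[float, float]:
    """Scale historical rates to a new release.

    history: (KLOC, defects found, test hours) for completed, comparable releases.
    Returns (expected defects, expected test hours) for a release of new_kloc.
    """
    total_kloc = sum(kloc for kloc, _, _ in history)
    if total_kloc <= 0:
        raise ValueError("history must contain at least one release with positive size")
    defects_per_kloc = sum(d for _, d, _ in history) / total_kloc
    hours_per_kloc = sum(h for _, _, h in history) / total_kloc
    return defects_per_kloc * new_kloc, hours_per_kloc * new_kloc


# Hypothetical records from two earlier releases of the same product.
history = [(10.0, 40, 300.0), (30.0, 120, 900.0)]
defects, hours = estimate_from_history(history, new_kloc=15.0)
print(f"Expect about {defects:.0f} defects and {hours:.0f} test hours")
```

Pooling the releases (total defects over total KLOC) rather than averaging per-release rates keeps a small release from skewing the estimate; either choice is defensible, and neither replaces judgment about how the new release differs.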

Justify Budget, Tools, or Training

Test managers often feel that they're understaffed and need more people, or they "know" that they need a larger budget or more training. However, without metrics to support their "feeling," their requests will often fall on deaf ears. Test managers need to develop metrics to justify their budgets and training requests.

Provide Meters to "Flag" Actions

One sign of a mature use of metrics is the use of meters or flags that signal that an action must be taken. Examples include exit criteria, smoke tests, and suspension criteria. We consider these to be mature metrics because for meters to be effective, they must be planned in advance and based upon some criteria established earlier in the project or on a previous project. Of course, there are exceptions. Some organizations ship the product on a specified day, no matter what the consequences. This is also an example of a meter because the product is shipped when the date is reached.
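A meter is essentially a threshold check that was agreed on before the data arrived. The sketch below encodes a few exit criteria as planned thresholds and reports which ones are unmet. The specific criteria, their default values, and the field names in the status dictionary are hypothetical; real values would come from the master test plan.

```python
from dataclasses import dataclass


@dataclass
class ExitCriteria:
    # Hypothetical thresholds, fixed in advance (e.g., in the master test plan).
    min_pct_tests_passed: float = 95.0
    max_open_critical_defects: int = 0
    min_requirements_coverage: float = 100.0


def check_exit_criteria(status: dict[str, float], criteria: ExitCriteria) -> list[str]:
    """Return the unmet criteria; an empty list means the meter signals 'go'."""
    unmet = []
    if status["pct_tests_passed"] < criteria.min_pct_tests_passed:
        unmet.append("tests passed below threshold")
    if status["open_critical_defects"] > criteria.max_open_critical_defects:
        unmet.append("open critical defects")
    if status["requirements_coverage"] < criteria.min_requirements_coverage:
        unmet.append("requirements coverage below threshold")
    return unmet


status = {"pct_tests_passed": 97.5, "open_critical_defects": 1, "requirements_coverage": 100.0}
print(check_exit_criteria(status, ExitCriteria()))  # ['open critical defects']
```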

Rules of Thumb for Using Metrics

Ask the Staff

Ask the staff (developers and testers) what metrics would help them to do their jobs better. If the staff don't believe in the metrics or believe that management is cramming another worthless metric down their throats, they'll "rebel" by not collecting the metric, or by falsifying the metric by putting down "any old number." Bill Hetzel and Bill Silver have outlined an entire metrics paradigm known as the practitioner paradigm, which focuses on the observation that for metrics to be effective, the practitioners (i.e., developers and testers) must be involved in deciding what metrics will be used and how they will be collected. For more information on this topic, refer to Bill Hetzel's book Making Software Measurement Work.

| Key Point |

Taxation without representation is tyranny and so is forcing people to use metrics without proper explanation and implementation. |

Use a Metric to Validate the Metric

Rarely do we have enough confidence in any one metric that we would want to make major decisions based upon that single metric. In almost every instance, managers would be well advised to try to validate a metric with another metric. For example, in Chapter 7 where we discussed test effectiveness, we recommended that this key measure be accomplished by using more than one metric (e.g., a measure of coverage and Defect Removal Efficiency [DRE], or another metric such as defect age). Similarly, we would not want to recommend the release of a product based on a single measurement. We would rather base this decision on information about defects encountered and remaining, the coverage and results of test cases, and so forth.
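As an illustration of validating one metric with another, the sketch below computes Defect Removal Efficiency using a common definition (defects found before release divided by all defects eventually found, as a percentage) and compares it with a coverage figure. Because post-release defects keep arriving over time, a DRE computed soon after release can only stay the same or fall later, which is one reason a second metric is useful. The 90% and 80% targets and the function names are hypothetical.

```python
def defect_removal_efficiency(found_before_release: int, found_after_release: int) -> float:
    """DRE as a percentage: defects removed before release / all defects eventually found."""
    total = found_before_release + found_after_release
    if total == 0:
        raise ValueError("no defects recorded; DRE is undefined")
    return 100.0 * found_before_release / total


def cross_check(dre_pct: float, coverage_pct: float,
                dre_target: float = 90.0, coverage_target: float = 80.0) -> str:
    """Compare two views of test effectiveness; disagreement is a cue to dig deeper."""
    dre_ok = dre_pct >= dre_target
    coverage_ok = coverage_pct >= coverage_target
    return "consistent" if dre_ok == coverage_ok else "investigate"


dre = defect_removal_efficiency(found_before_release=90, found_after_release=10)
print(dre)                     # 90.0
print(cross_check(dre, 60.0))  # investigate: high DRE but low coverage
```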

Normalize the Values of the Metric

Since every project, system, release, person, etc. is unique, all metrics will need to be normalized. It's desirable to reduce the amount of normalization required by comparing similar objects rather than dissimilar objects (e.g., it's better to compare two projects that are similar in size, scope, complexity, etc. to each other than to compare two dissimilar projects and have to attempt to quantify [or normalize] the impact of the differences).
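A small example shows why normalization matters: raw counts and size-normalized counts can rank the same projects in opposite order. The sketch normalizes only by size (defects per KLOC), which is an assumption; it does not account for the scope or complexity differences the text also mentions. Project names and figures are hypothetical.

```python
# Hypothetical (defects found, size in KLOC) for two releases.
projects = {"release_a": (120, 40.0), "release_b": (60, 10.0)}

raw = {name: defects for name, (defects, _) in projects.items()}
normalized = {name: defects / kloc for name, (defects, kloc) in projects.items()}

print(max(raw, key=raw.get))                # prints release_a (more defects in absolute terms)
print(max(normalized, key=normalized.get))  # prints release_b (higher defects per KLOC)
print(normalized)                           # {'release_a': 3.0, 'release_b': 6.0}
```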

As a rule of thumb, the further the metric is from the truth (i.e., the oracle to which it's being compared), the less reliable the metric becomes. For example, if you don't have a reservoir of data, you could compare your project to industry data. This may be better than nothing, but you would have to try to account for differences in the company cultures, methodologies, etc. in addition to the differences in the projects. A better choice would be to compare a project to another project within the same company. Even better would be to compare your project to a previous release of the same project.

| Key Point |

As a rule of thumb, the further the metric is from the truth (i.e., the oracle to which it's being compared), the less reliable the metric becomes. |

Measure the Value of Collecting the Metric

It can take a significant amount of time and effort to collect and analyze metrics. Test managers are urged to try to gauge the value of each metric collected versus the effort required to collect and analyze the data. Software inspections are a perfect example. A normal part of the inspection process is to measure the number of defects found per inspector hour. We have to be a little careful with this data, though, because a successful inspection program should help reduce the number of defects found on future efforts, since the trends and patterns of the defects are fed back into process improvement (i.e., the number of defects per inspector hour should go down). A more obvious example is the collection of data that no one is using. There might be a field on the incident report, for example, that the report's author must complete even though no one uses the information.
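The inspection measure described above is simple to compute; the hard part is interpreting its trend. This sketch computes defects per inspector hour for a series of inspections, assuming effort is the number of inspectors multiplied by the hours each spent. The function name and the inspection records are hypothetical.

```python
def defects_per_inspector_hour(defects_found: int, inspectors: int, hours: float) -> float:
    """Defects found divided by total inspector effort (inspectors x hours each)."""
    effort = inspectors * hours
    if effort <= 0:
        raise ValueError("inspection effort must be positive")
    return defects_found / effort


# Hypothetical inspections, oldest first: (defects found, inspectors, hours per inspector).
inspections = [(12, 4, 2.0), (9, 4, 2.0), (6, 3, 2.0)]
rates = [defects_per_inspector_hour(*i) for i in inspections]
print(rates)  # [1.5, 1.125, 1.0]

# A falling rate is ambiguous: it may mean inspections are losing value,
# or that process improvements are preventing defects upstream.
falling = all(earlier > later for earlier, later in zip(rates, rates[1:]))
print("falling" if falling else "not falling")
```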

Periodically Revalidate the Need for Each Metric

Even though good test managers may routinely weigh the value of collecting a metric, some of these same test managers may be a little lax in re-evaluating their metrics to see if there's a continuing need for them. Metrics that are useful for one project, for example, may not be as valuable for another (e.g., the amount of time spent writing test cases may be useful for systematic testing approaches, but has no meaning if exploratory testing techniques are used). A metric that's quite useful at one point in time may eventually outlive its usefulness. For example, one of our clients used to keep manual phone logs to record the time spent talking to developers, customers, etc. Even after an automated phone log was implemented that recorded this information automatically, the manual log was kept for several months before someone finally said, "Enough is enough."

Make Collecting and Analyzing the Metric Easy

| Key Point |

Collecting metrics as a by-product of some other activity or data collection activity is almost as good as collecting them automatically. |

Ideally, metrics would be collected automatically. The phone log described in the previous section is a good example of automatically collecting metrics. Another example is counting the number of lines of code, which is done automatically for many of you by your compiler. Collecting metrics as a by-product of some other activity or data collection activity is almost as good as collecting them automatically. For example, defect information must be collected in order to isolate and correct defects, but this same information can be used to provide testing status, identify training and process improvement needs, and identify risky areas of the system that require additional testing.

Finally, some metrics will have to be collected manually. For example, test managers may ask their testers to record the amount of time they spend doing various activities such as test planning. For most of us, this is a manual effort.

Respect Confidentiality of Data

It's important that test managers be aware that certain data may be sensitive to other groups or individuals, and act accordingly. Test managers could benefit by understanding which programmers have a tendency to create more defects or defects of a certain type. While this information may be useful, the potential to alienate the developers should cause test managers to carefully weigh the benefit of collecting this information. Other metrics can be organizationally sensitive. For example, in some classified systems (e.g., government) information about defects can itself be deemed classified.

Look for Alternate Interpretations

There's often more than one way to interpret the same data. If, for example, you decide to collect information (against our recommendation) on the distribution of defects by programmers, you could easily assume that the programmers with the most defects are lousy programmers. Upon further analysis, though, you may determine that they just write a lot more code, are always given the hardest programs, or have received particularly poor specifications.

| Key Point |

We highly recommend that anyone interested in metrics read the humorous book How to Lie with Statistics by Darrell Huff. |

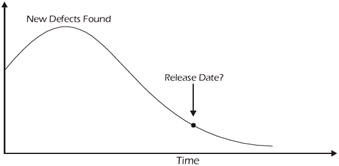

Consider the defect discovery rate shown in Figure 10-4. Upon examining this graph, many people would conclude that the steady downward trend indicates that the quality of the software is improving, and many would even go so far as to say that we must be approaching the time to ship the product. Indeed, this analysis could be true. But are there other interpretations? Maybe some of the testers have been pulled away to work on a project with a higher priority. Or maybe we're running out of valid test cases to run. This is another example of where having more than one metric to measure the same thing is useful.

Figure 10-4: Declining Defect Discovery Rate
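One way to test the "testers were pulled away" interpretation of a chart like Figure 10-4 is to normalize weekly defect counts by the tester hours actually spent. The sketch below uses hypothetical weekly figures chosen so that the raw count declines while the rate per tester hour stays flat; the helper `is_declining` is likewise hypothetical.

```python
def is_declining(values: list[float]) -> bool:
    """True if every value is strictly lower than the one before it."""
    return all(earlier > later for earlier, later in zip(values, values[1:]))


# Hypothetical weekly data behind a chart like Figure 10-4.
defects_found = [40, 30, 20, 10]
tester_hours = [200, 150, 100, 50]  # testers were gradually pulled onto another project

rate_per_hour = [d / h for d, h in zip(defects_found, tester_hours)]

print(is_declining(defects_found))  # True: the raw trend looks like improving quality
print(is_declining(rate_per_hour))  # False: defects per tester hour stayed flat at 0.2
```

A flat rate does not rule out improving quality on its own, but it does show that the raw decline could be explained entirely by reduced test effort.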

Present the Raw Data

Presenting the raw data is really a continuation of looking for alternative ways to interpret the data. We've found it very useful to present the raw data that we collected, in addition to the processed or interpreted data. As a matter of style, we often ask our audience (whoever we're presenting the data to) to tell us what the raw data means to them. Sometimes, their interpretation is something that we haven't thought of. At the very least, though, the raw data makes the audience reflect on the results and gives the test manager some insight into how their audience is thinking. This insight is very useful if you're trying to use the data to achieve buy-in for some aspect of the product or process.

Format the Data for the Audience

No doubt, the test manager will have occasion to brief developers, users, upper management, marketing, and many other interested parties. When presenting the data, it's important for the manager to consider the background and needs of the audience. For example, data that shows testing progress might be presented to the users in a different format than it would be to the developers. The users may want to know how much of the functionality has passed testing, while the developers might want to see the data presented in terms of how much of the code was tested.

Beware of the Hawthorne Effect

The test manager has at his or her disposal a powerful weapon, metrics, that can be used for good or evil because of a phenomenon called the Hawthorne Effect.

Case Study 10-3: Showing people that you care about them spurs them on to greater productivity.

Does Lighting Affect Productivity?

Between 1924 and 1927, Harvard professor Elton Mayo conducted a series of experiments at the Western Electric Company's Hawthorne Works in Chicago. Professor Mayo's original study was intended to determine the effect of lighting on the productivity of workers. From 1927 through 1932, the work was expanded to include other topics such as rest breaks and hours worked. Before each change was made, its likely impact on the workers and their work was discussed with them. It seemed that no matter what change was implemented, the productivity of the workers continuously improved. Mayo eventually concluded that the mere process of observing the workers (and therefore showing concern about them) spurred them on to greater productivity. We have encountered many definitions of the so-called Hawthorne Effect, named after the plant where the studies took place, but most of them sound something like this: Showing people that you care about them spurs them on to greater productivity.

Now, I'm not a professor at Harvard and I have not conducted any formal experiments on productivity, but I have observed a more far-reaching phenomenon: observing (measuring) people changes their behavior. The Hawthorne study shows that people are more productive when someone observes them (and sends the message that "what you're doing is important"). I have found that observing or measuring an activity changes the behavior of the people conducting that activity, but the change is not always for the better. It depends on what you're measuring. For example, if you spread the word in your company that "defects are bad; we're finding way too many defects," the number of defects reported would surely drop. People would try to find other ways to resolve problems without recording a defect. Notice that the number of defects didn't really go down, just the number reported.

In particular, if you set individual objectives or goals for your staff, you'll probably discover that they focus on achieving those objectives at the expense of other tasks. If you were to tell the developers that the most important goal is to get the coding done on time, they would maximize their efforts to meet the deadline, possibly without regard to quality (since quality was not stressed).

So you see, any time you measure some aspect of a person or their work, the mere process of collecting this metric can change the person's behavior and therefore ultimately change the metric measuring their behavior.

So, let's define the Hawthorne Effect for Software Engineers as "The process of measuring human activities can itself change the result of the measurement." Okay, maybe we're not ready for Harvard, but this observation is often true and needs to be considered by test managers every time they implement a new metric.

- Rick Craig

Key Point

Hawthorne Effect for Software Engineers: The process of measuring human activities can itself change the result of the measurement.

Provide Adequate Training

To many software engineers, metrics are mysterious and even threatening. It's important that everyone affected by a metric (i.e., those who collect it, those whose work is being measured, and those who make decisions based upon it) receive training. The training should include why the metric is being collected, how it will be used, how often it will be collected, who will see and use it, and how to change its parameters. One good training aid is a metrics worksheet such as the one described below.

Create a Metrics Worksheet

The metrics worksheet shown in Table 10-2 takes much of the mystery out of each metric and, therefore, is a useful tool to assist in training and buy-in for metrics. It also promotes consistency in the collection and analysis of metrics, and provides a vehicle to periodically review each metric to see if it's still necessary and accurately measures what it was designed to measure.

| Item | Description |
|---|---|
| Handle | Shorthand name for the metric (e.g., number of defects per line of code could be called the quality metric). |
| Description | Brief description of what is being measured and why. |
| Observation | How do we obtain the measurement? |
| Frequency | How often does the metric need to be collected or updated? |
| Scale | What units of measurement are used (e.g., lines of code, test cases, hours, days, etc.)? |
| Range | What range of values is possible? |
| Past | What values have we seen in the past? This gives us a sense of perspective. |
| Current | What is the current or last result? |
| Expected | Do we anticipate any changes? If so, why? |
| Meters | Are there any actions to expect as a result of hitting a threshold? |

NOTE: Adapted from a course called Test Measurement, created by Bill Hetzel.
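Because the worksheet is essentially a fixed set of fields, it can also be kept as a simple record alongside the metric itself. The following Python sketch is hypothetical: the class names, the `value_range` field (renamed to avoid shadowing Python's built-in `range`), and the modeling of a meter as a threshold plus an expected action are assumptions for illustration, not part of Hetzel's course material.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class Meter:
    """A threshold and the action expected when the metric crosses it (assumed model)."""
    threshold: float
    action: str
    trigger_above: bool = True  # True: fire when current > threshold; False: fire when current < threshold


@dataclass
class MetricWorksheet:
    handle: str                       # shorthand name for the metric
    description: str                  # what is measured and why
    observation: str                  # how the measurement is obtained
    frequency: str                    # how often it is collected or updated
    scale: str                        # unit of measurement
    value_range: Tuple[float, float]  # possible values as (min, max)
    past: List[float] = field(default_factory=list)    # earlier values, for perspective
    current: Optional[float] = None                    # current or last result
    expected: str = ""                                 # anticipated changes, and why
    meters: List[Meter] = field(default_factory=list)  # thresholds with expected actions

    def triggered_actions(self) -> List[str]:
        """Return the actions whose thresholds the current value has crossed."""
        if self.current is None:
            return []
        actions = []
        for meter in self.meters:
            if meter.trigger_above:
                crossed = self.current > meter.threshold
            else:
                crossed = self.current < meter.threshold
            if crossed:
                actions.append(meter.action)
        return actions


# Hypothetical worksheet for a defect-density metric; all values are made up.
ws = MetricWorksheet(
    handle="quality",
    description="Defects per thousand lines of code, to track product quality",
    observation="Defect tracking system counts divided by code size",
    frequency="weekly",
    scale="defects per KLOC",
    value_range=(0.0, float("inf")),
    past=[2.5, 3.1],
    current=4.2,
    expected="Should fall as the final test cycles complete",
    meters=[Meter(threshold=3.0, action="Review with development lead")],
)
print(ws.triggered_actions())  # ['Review with development lead']
```

Modeling the meters explicitly makes "what do we do when this crosses a line?" part of the periodic worksheet review, rather than something decided after the fact.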

What Metrics Should You Collect?

We're often asked, "What metrics should I collect?" Of course, the correct answer is, "It depends." Every development and testing organization has different needs. The Software Engineering Institute (SEI) has identified four core metrics areas:

- Schedule

- Quality

- Resources

- Size

Key Point

In Software Metrics: Establishing a Company-Wide Program, Robert Grady and Deborah Caswell define rework as "all efforts over and above those required to produce a defect-free product correctly the first time."

The Air Force has a similar list, but they've added another metric, "Rework," to the SEI's list. Even though it's impossible for us to say exactly what metrics are needed in your organization, we believe that you'll need at least one metric for each of the four areas outlined above. The Air Force metrics shown in Table 10-3, for example, were designed more for a development or project manager, but with a few changes they're equally applicable to a testing effort. These metrics are only examples. You could come up with many other valid examples for each of the five metrics outlined in the table.

| Metric | Development Example | Test Example |
|---|---|---|
| Size | Number of modules or lines of code. | Number of modules, lines of code, or test cases. |
| Schedule | Number of modules completed versus the timeline. | Number of test cases written or executed versus the timeline. |
| Resources | Dollars spent, hours worked. | Dollars spent, hours worked. |
| Quality | Number of defects per line of code. | Defect Removal Efficiency (DRE), coverage. |
| Rework | Lines of code written to fix bugs. | Number of test cycles to test bug fixes. |
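Table 10-3 lists Defect Removal Efficiency as a test quality metric. A commonly used formulation is the number of defects found by testing divided by the sum of those defects and the defects found after release. The Python function below is a minimal sketch of that ratio; the function and parameter names are illustrative, not taken from any particular tool.

```python
def defect_removal_efficiency(found_in_testing: int, found_after_release: int) -> float:
    """Fraction of all known defects that were found before release.

    DRE = found_in_testing / (found_in_testing + found_after_release)
    """
    total = found_in_testing + found_after_release
    if total == 0:
        raise ValueError("no defects recorded yet, so DRE is undefined")
    return found_in_testing / total


# Hypothetical counts: 90 defects found in test, 10 reported by users after release.
print(defect_removal_efficiency(90, 10))  # 0.9
```

Note that the after-release count keeps growing as the product is used, so a DRE computed shortly after release is provisional and tends to fall as users report more defects.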

Metrics Used by the Best Projects

It's interesting to look at the metrics actually employed at various companies. Bill Hetzel and Rick Craig conducted a study in the early 1990s to determine what metrics were being collected and used by the "best" projects in the "best" companies. Part of the summary results included a list of those metrics seldom used by the "best" projects and those metrics used by all or most of the best projects involved in the study.


The projects chosen for this study were based on the following criteria:

- Perceived as using better practices and measures.

- Perceived as producing high-quality results.

- Implemented recently or in the final test, with the project team accessible for interview.

- Had to have one of the highest scores on a survey of practices and measures.

Were these truly the very best projects? Maybe not. Were they truly superior to the average project available at that time and therefore representative of the best projects available? Probably.

The data in Table 10-4 is based upon the results of a comprehensive survey (more comprehensive than the one used to select the projects for the study), interviews with project participants, and a review of project documentation. The data from the survey is now a decade old, and even though we have no supporting proof, we believe that its validity has probably not changed remarkably since 1991.

| Common Metrics | Uncommon Metrics |
|---|---|

We don't offer any analysis of this list and don't necessarily recommend that you base your metric collection on these lists. They are merely provided so you can see what some other quality companies have done.

Measurement Engineering Vision

Readers who have studied engineering know that metrics are an everyday part of being an engineer and that measurement is a way of life. In fact, the distinguishing characteristics of an engineering discipline are a repeatable and measurable process that leads to a predictable result. Those of us who call ourselves software engineers can see that we don't always meet these criteria. We're confident, though, that as a discipline, we're making progress. This book has outlined many repeatable processes (e.g., test planning, risk analysis, etc.), but to continue our evolution toward a true engineering discipline, we have to reach the point where metrics are an integral part of how we do business, not just an afterthought.

Key Point

The distinguishing characteristics of an engineering discipline are a repeatable and measurable process that leads to a predictable result.

This vision includes:

- Building good measurements into our processes, tools and technology.

- Requiring good measurements before taking action.

- Expecting good measurements.

- Insisting on good measurement of the measurement.
