The Test Organization

Overview

"A house divided against itself cannot stand. Our cause must be entrusted to, and conducted by, its undoubted friends - whose hands are free, whose hearts are in the work - who do care for the result."

— Abraham Lincoln

Most software testing books (this one included) devote a lot of pages to the technical issues of testing, even though most of us realize that the human element may be the most important part of the testing process. It's understandable why this happens. We focus on the process and technical side of testing in our classes and in this book because most people (especially managers) think that they already understand the art of communicating and interacting with other people, in spite of the fact that many of them have had no formal management training. Process and technical change are also easier to understand and implement, so testers (and managers) feel more in control when they focus on them.

Key Point

There are at least a few books that deal with the human dynamics of software development. Tom DeMarco and Tim Lister published a wonderful book called Peopleware, which talks about various aspects of the human side of software engineering. An earlier book called The Psychology of Computer Programming by Gerald M. Weinberg also has a lot to offer on the subject.

In our Test Management class, for example, we spend about 1/3 of the class time talking about the structure of a test organization, leadership, management, and how to hire testers. Most of the attendees in the class tell us in advance that this is one of their weakest areas, but in the post-class review many say that they really wanted to spend all three days talking about technical topics and the testing process. In other words, they know that the human side is critical, but they still prefer to talk about "concrete" topics.

Test Organizations


There are as many ways to organize for software testing as there are organizations that test. There's really no right or wrong way to organize for test and, in fact, we've seen most of the sample organizational structures discussed below work in some companies and fail in others. How the test organization is internally structured and positioned within the overall organization is very much dependent on politics, corporate culture, quality culture, skill and knowledge level of the participants, and risk of the product.

Case Study 8-1: A Few Words from Petronius Arbiter

This quote is commonly attributed to Petronius Arbiter in 210 B.C., but some sources place the date as 1st Century B.C. or 1st Century A.D. Even though we're not sure of the exact attribution, its message is clear.

Words of Wisdom That Still Hold True

We trained hard, but it seems that every time we were beginning to form up into teams we would be reorganized. I was to learn later in life that we meet any new situation by reorganizing, and a wonderful method it can be for creating the illusion of progress while producing confusion, inefficiency, and demoralization.

- Petronius Arbiter 210 BC

I love the quote above by Petronius Arbiter. After over two millennia, it seems the only thing that is the same is "change." I've used this quote for so long that sometimes I feel like I wrote it. I was surprised to find it in Ed Kit's book Software Testing in the Real World. It seems likely that I may have started using Petronius' quote (Petronius and I are on a first-name basis) after hearing my friend Ed use it. Ed, if you're reading this book, thanks. The quote is too good not to share.

- Rick Craig

Sample Test Organizations

Table 8-1 lists some of the pros and cons of various types of test organizations. It's possible that a company could have more than one organizational style. For example, they could have an independent test team and a SWAT team, or a test coordinator and a QA group that performs testing. There are many other strategies for organizing the testing functions - this is only intended to be a sampling.

| Type of Organization | Pros | Cons |
|---|---|---|
| Independent Test Teams | Professional testers with fresh, objective viewpoints | Potential conflict between developers and testers, hard to begin testing early enough |
| Integrated Test Teams | Teamwork, sharing of resources, facilitates early start | Pressure to ship in spite of quality |
| Developers (as the principal testers) | Expert on software, no conflict with testers | Lack fresh perspective, lack knowledge of business, may lack software testing skills, pressure to ship, focus on the code, requires rigorous procedures and discipline |
| SWAT Teams | Extra professional resources | Expensive, hard to create and retain; not for small organizations |
| Test Coordinator | Don't need permanent test infrastructure | Staffing, matrix management, testing skill, lack of credibility |
| QA / QC | Existing organization, some existing skill, infrastructure | More to worry about than just testing |
| Outsourcing | Professional testers (maybe), don't need to hire/retain staff | Still requires management of outsourcers, need to have a good contract |
| Independent Verification and Validation (IV&V) | Lowers risk, professional testers | Too expensive for most; at the very end |

Key Point

A SWAT team is what our colleague Steven Splaine calls "a reserve group of expert testers that can be rapidly called in an emergency to help put out a testing fire."

Independent Test Teams

An independent test team is a team whose primary job is testing. They may be tasked with testing just one product or many. The test manager does not report to the development manager and should be organizationally equal (i.e., a peer).

Independent test teams have been around for a long time, but they really gained in popularity starting in the early 1980s. Prior to the creation of independent test teams, most of the testing was done by the programmers or by the QA function, if one existed. All too frequently during that era (and even today), products were shipped that didn't satisfy the user's needs and, in some cases, just flat-out didn't work. One of the major reasons for this failure was the overwhelming pressure to get the product out the door on time, regardless of the consequences. Since the developers did the testing as well as the development, they were pretty much able to ship the software without any oversight. And the software was shipped because it was clear that the date was the most important measure of success. Too many of the developers had received very little (if any) training in testing since their primary job was writing code.

The popularity of independent test teams grew largely out of frustration with this situation. Independent test teams have helped testing become a recognized discipline within software engineering. Testing techniques, standards, and methodologies were created, and some people became full-time professional testers. These professionals focused on testing and, therefore, became experts in their field. The independent test team provides an objective look at the software being tested. Too often, programmers who test their own code are only able to prove that the code does what the code does, rather than what it's supposed to do.

Key Point

Independent test teams provide an objective look at the software being tested.

On the negative side, the creation of independent test teams often results in the creation of a "brick wall" between the developers and the testers. Developers are less eager to test their code, since they know that the testers are going to do it anyway. One of the biggest challenges facing managers of independent test groups today is getting started early enough in the product lifecycle. Often, the developers balk at having the testers get involved early because they fear that the testers will slow their progress. This means that the testers may be stuck testing at the end of the lifecycle, where they're the least effective (refer to Chapter 1 for more information about preventive testing).

Integrated Test Teams

Integrated test teams are teams made up of developers and testers who all report to the same manager. Lately, integrated teams seem to be resurging in popularity. We've talked to several testers who indicated that, organizationally, they're trying to move the testing function closer to the development function. We believe that this is occurring because integrated teams are used to working together and are physically collocated, which greatly facilitates communications.

Key Point

Many developers have a tendency to assume that the system will work and, therefore, do not focus on how it might break.

Some organizations also find that it's easier to get buy-in to start the testing early (i.e., during requirements specification) when the teams work together. Independent test teams often find it much more difficult to begin testing early, because developers fear that the testers "will be in the way" and slow down their development effort.

Just as in the Independent Test Teams model, integrated team members who conduct the testing are (or should be) professional testers. We've found that in practice, testers who are part of integrated teams sometimes have to fight harder for resources (especially training and tools) than their counterparts in independent test teams. This is probably because there's no dedicated test manager at a level equal to the development manager.

Since the manager is in charge of both development and testing in an integrated team, one of the downsides is that when under schedule pressure he or she may feel compelled to ship the product prematurely.

Developers

In this type of test organization, the developers and testers are the same people. There are no full-time testers. In the good old days, the developers did it all - requirements, design, code, test, etc. Even today, there are a large number of organizations where the same individuals write and test the code. While there has been a lot of negative press written about organizations that don't use independent and/or professional testers, we've encountered several organizations that seem to do a good job of testing using only developers. Most of these organizations tend to be smaller groups, although we've seen larger groups where all of the testing was done (and done well) by the developers.

Key Point

While there has been a lot of negative press written about organizations that don't use independent and/or professional testers, we've encountered several organizations that seem to do a good job of testing using only developers.

On the plus side, when the developers do all of the testing, there's no need to worry about communications problems with the testing group! Another bonus is that the developers have intimate knowledge of the design and code. Decisions on the prioritization of bug fixes are generally easier to make since there are fewer parties participating.

The obvious downside of this organizational strategy is the lack of a fresh, unbiased look at the system. When testing, many developers tend to look at the system the way they do when they're building it. There's often a tendency to assume that the system will work, or that no user would be dumb enough to try "that." It's also asking a lot to expect developers to be expert designers, coders, and testers. When the developers do all the testing, there may be less expertise in testing and less push to get the expertise, because most developers we know still see programming as their primary job and testing as an "also ran." Finally, developers may not have a good understanding of the business aspect of the software. This is a frequent complaint even in unit testing, but can be very serious in system or acceptance testing.

For this organizational strategy to be successful, we believe that the following things need to be considered:

- A rigorous test process needs to be defined and followed.

- Adequate time must be allocated for testing.

- Business expertise must be obtained from the users or the training department.

- Configuration management needs to be enforced.

- Buddy testing, XP, or some other type of team approach should be used.

- Training developers about testing is mandatory.

- Exit criteria need to be established and followed.

- The process needs continuous monitoring (e.g., QA) to ensure that it doesn't break down.

Test Coordinator

In this style of organizing for test, there's no standing test group. A test coordinator is hired or selected from the development, QA, or user community. The test coordinator then builds a temporary team, typically by "borrowing" users, developers, technical writers, or help desk personnel (or sometimes any other warm bodies that are available).

We've seen this strategy employed at several organizations, especially at companies that have large, mature, transaction-oriented systems (e.g., banks, insurance companies, etc.) where a major new product or revision has occurred and the existing test organization (if any) is inadequate or there's no permanent testing group. In particular, we saw this happening a lot during Y2K because the existing testing infrastructure was inadequate to conduct both normal business and Y2K testing.

Obviously, this strategy is chosen because there's an immediate need for testing but no time, money, or expertise to acquire and develop a team. Sometimes, the temporary team that's assembled becomes so valuable that it's made into a permanent test group.

Being the test coordinator in the scenario described above is a tough assignment. If you're ever selected for this position, we recommend that you "just say no," unless, of course, you like a challenge. Indeed, the first major obstacle in using this strategy is selecting a coordinator who has the expertise, communication skills, credibility, and management skills to pull it off. The test coordinator is faced with building a testing infrastructure from the ground up. Often, there will be no test environment, tools, methodology, existing test cases, or even testers. The coordinator has to ask (sometimes beg) for people to use as testers from other groups such as the developers or users. All too often, the development or user group manager will not give the coordinator the very best person for the job. And even if the people are good employees, they may not have any testing experience. Then, there's the issue of matrix management. Most of these temporary testers know that they'll eventually go back to their original manager, so where does their loyalty really lie?

Key Point

Using a test coordinator can be a successful strategy for testing, but hinges on the selection of a very talented individual to fill the role.

Quality Assurance (QA)

In this organizational strategy, all or part of the testing is done by the staff of an existing Quality Assurance (QA) group. Some of the earliest testing organizations were formed from, or within, an existing QA group. This was done because some of the skills possessed by the QA staff members were similar to the skills needed by testers. Today, there are many groups that call themselves QA, but don't do any traditional quality assurance functions; they only do software testing. That is to say, they're not really a QA group, but rather a testing group with the name QA.

The downside of this strategy is that a true QA organization has more to worry about than the testing of software. The additional responsibilities may make it difficult for the QA group to build an effective test organization.

Outsourcing

In this type of test organization, all or some of the testing is assigned to another organization in exchange for compensation. Outsourcing has received a lot of visibility in recent years and is a good way to get help quickly. The key to making outsourcing work is to have a good contract, hire the right outsourcer, have well-defined deliverables and quality standards, and have excellent oversight of the outsourcer's work.

Key Point

The key to making outsourcing of testing work is to have a good contract, hire the right outsourcer, have well-defined deliverables and quality standards, and have excellent oversight of the outsourcer's work.

Companies often hire an outsourcer to do the testing because they lack the correct type of funding, environment, or expertise to do it in-house. These are all valid reasons for outsourcing, but outsourcing the testing effort does not necessarily relieve your company of the responsibility for producing high-quality software, nor does it guarantee the results achieved by the outsourcer. We've occasionally encountered a situation where a company develops a system and wants not only to outsource the testing, but also to "wash their hands" of the entire testing process and transfer the responsibility for delivering a quality product to the outsourcer.

Key Point

For more information on outsourcing, refer to the article "Getting the Most from Outsourcing" by Eric Patel in the Nov/Dec 2001 issue of STQE Magazine.

Outsourcers often have great expertise in testing and many have excellent tools and environments, but they rarely have a clear vision of the functional aspects of your business. So even if your testing is outsourced, your organization must maintain oversight of the testing process. Ideally, a liaison will be provided who will take part in periodic progress reviews, walkthroughs or inspections, configuration control boards, and even the final run of the tests.

Outsourcing is ideal for certain kinds of testing, such as performance testing of Web applications. Many organizations may also find that outsourcing load testing is the right economic choice. In our opinion, lower levels of test are also easier to outsource because they are more likely to be based on structural rather than functional techniques.

Independent Verification & Validation (IV&V)

Independent Verification and Validation (IV&V) is usually only performed on certain large, high-risk projects within the Department of Defense (DoD) or some other government agency. IV&V is usually conducted by an independent contractor, typically at the end of the software development lifecycle, and is done in addition to (not instead of) other levels of test. In addition to testing the software, IV&V testers check for contract compliance and work to prevent fraud, waste, and abuse.

Key Point

Independent Verification and Validation (IV&V) is usually only performed on certain large, high-risk projects within the Department of Defense (DoD) or some other government agency.

IV&V may reduce the risk on some projects, but the cost can be substantial. Most commercial software developers are unwilling or unable to hire an independent contractor to conduct a completely separate test at the end. As we've explained in this book, the end of the lifecycle is usually the most inefficient and expensive time to test. Most of our readers should leave IV&V testing to the contractors who perform it on government projects, where loss of life, national prestige, or some other huge risk is at stake.

Office Environment

This may not seem like the most important issue to many testers and managers, but the environment that the testers work in can play an important part in their productivity and long-term success.

Office Space

Testers have certain basic needs in order to perform their job. They need a place to call their own (a cube or office or at least a desk), a comfortable chair, a telephone, a computer (not a cast-off), and easy access to an area where they can take a break away from their "home." Offices that are too warm or cold, smoky, poorly lit, too small, or noisy can greatly reduce the effectiveness of the workers. Not only do the situations mentioned above affect the ability of the workers to concentrate, they send a message to the testers that "they are not important enough to warrant a better work environment." If you're a test manager, don't dismiss this issue as trivial, because it will have a big impact on the effectiveness of your group.

Key Point

In their book Peopleware: Productive Projects and Teams, Tom DeMarco and Timothy Lister explained that staff members who performed in the upper quartile were much more likely to have a quiet, private workplace than those in the bottom quartile.

Case Study 8-2: Not all workspaces are ideal.

The Imprisoned Tester

On one project that I worked on, my desk was situated in a hallway. On another project, we were housed in the document vault of a converted prison - the windows still had bars on them.

- Steven Splaine

Location Relative to Other Participants

We've had the opportunity to conduct numerous post-project reviews over the years. One opportunity for improvement that we have recommended more than once is to collocate testers with the developers and/or the user representatives. Now, we understand that if your developers are in India and you're located in California, you can't easily move India any closer to California. On the other hand, if you're all located in the same building or campus, it just might be possible to move the teams closer together. Having the testers, developers, and user representatives located within close proximity of each other fosters better communications among the project participants. So if geography and politics allow, you should consider collocating these staffs. If not, then you'll have to be more creative in coming up with ways to improve communications (e.g., interactive test logs, status meetings, conference calls, intranet pages, site visits, and so on).

Key Point

Having the testers, developers, and user representatives located within close proximity of each other fosters better communications among the project participants.

Cube vs. Office vs. Common Office

This issue is way too sensitive and complicated for us to solve here. It seems that every few years we read an article extolling the virtues of private offices, cubes, common work areas, or whatever the flavor of the month is. Every time we think we understand what the best office design is, someone convinces us that we're wrong. However, we're pretty sure that the style that works for one individual or group is probably not the ideal setup for another.

Key Point

There are times when engineers need some privacy to do their best work and there are other times when they need the stimulus of their coworkers.

We're not totally clueless, though. We do know that there are times when engineers need some privacy to do their best work and there are other times when they need the stimulus of their coworkers. An ideal setup would provide, even if on a temporary basis, both private and common areas. We'll leave it up to you to determine the best way to set up your office for day-in, day-out operations. Unfortunately, some of you will find that you actually have very little to say about the office arrangement, since it may be an inherent part of your corporate culture or be confined by the layout of your office spaces.

Immersion Time

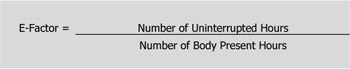

Managers have learned that most 8-hour days result in much less than 8 hours of actual work from each of their employees. We've had clients who say they expect to get 6 hours of work per 8-hour day per employee. Others use 4 hours, or 3 hours, or whatever. We're not sure where these values came from, but we're betting that most of them were guesses rather than measured values. In their book Peopleware, Tom DeMarco and Tim Lister introduce a metric known as the Environmental Factor, or E-Factor, which formalizes this idea to some extent. The E-Factor shown in Figure 8-1 is one way to quantify what percentage of a workday is actually productive.

Figure 8-1: Formula for Calculating the Productive Percentage of a Workday

Multiplying the E-Factor by the number of body-present hours tells you how many productive hours were spent on a particular task. You could measure uninterrupted work every day, but if the E-Factor is relatively consistent, it's easier to measure it on a sampling basis, record the number of hours spent on the job each day, and multiply the two values.
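Assuming the E-Factor is the ratio of uninterrupted hours to body-present hours (the way DeMarco and Lister define it in Peopleware), the sampling approach described above can be sketched in a few lines of Python. The function names and the sample figures (12 uninterrupted hours in a 40-hour week) are hypothetical; they only illustrate measuring the factor once and then applying it to ordinary hours on the job.

```python
def e_factor(uninterrupted_hours: float, body_present_hours: float) -> float:
    """Fraction of body-present time spent in uninterrupted work (assumed definition)."""
    if body_present_hours <= 0:
        raise ValueError("body_present_hours must be positive")
    return uninterrupted_hours / body_present_hours


def productive_hours(factor: float, body_present_hours: float) -> float:
    """Estimate productive hours by applying a sampled E-Factor to hours on the job."""
    return factor * body_present_hours


# Hypothetical sample: over one measured week, a tester logged
# 12 uninterrupted hours out of 40 hours on the job.
sample = e_factor(12, 40)                # 0.3
# Apply the sampled factor to a later, unmeasured 8-hour day.
estimate = productive_hours(sample, 8)   # 2.4
print(f"E-Factor: {sample:.2f}, productive hours today: {estimate:.1f}")
```

Measuring uninterrupted time is the expensive part, so the sketch keeps it in a separate step that can be repeated only occasionally, while the cheap multiplication runs every day.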

According to DeMarco and Lister, "What matters is not the amount of time you're present, but the amount of time that you're working at full potential. An hour in flow really accomplishes something, but ten six-minute work periods sandwiched between eleven interruptions won't accomplish anything."

No doubt, most of you have experienced days where you seem to accomplish nothing due to constant interruptions. How long it takes you to get fully back into the flow of a task after an interruption depends very much on the nature of the task, your personal work habits, the environment, your state of mind, and many other things we don't pretend to understand. We call the amount of time it takes to become productive after an interruption immersion time. It occurred to us that if it were possible to reduce the immersion time for an individual or group through some kind of training (although we certainly don't know where you would go to get this training), then productivity would rise. But since we don't really have any good ideas on how to lessen immersion time, we have to achieve our productivity gains by reducing interruptions.

Key Point

Immersion time is the amount of time it takes to become productive after an interruption.

Case Study 8-3: How long does it take for you to truly immerse yourself in your work?

No More Interruptions, Please!

We visit client sites where they still routinely use the overhead speakers located in every room to blast out every trivial message imaginable: "Connie, please call your mother at home." Now this message is not just broadcast in Connie's work area, it's also sent throughout the building. When the message is broadcast, everyone in the building looks up from their desks and wonders, "What has Connie done now?"

If there are 1,000 engineers in the building and it takes each of them 15 minutes to truly immerse themselves in their work, that little message to Connie may have cost the company up to 250 engineering hours. Actually, it probably cost much less, because many of those workers were likely not immersed in their work at that moment; they had probably already been interrupted for some other reason. But you get the point. If Connie's mother only wanted her to pick up a loaf of bread, the cost to Connie's company made it a very expensive loaf of bread indeed!
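The arithmetic behind the 250-hour figure can be written out as a small Python function. The `share_immersed` parameter is our own illustrative assumption, added to reflect the point that many people were probably not immersed when the page went out. The 40% figure in the second call is made up, not measured.

```python
def interruption_cost_hours(people: int, immersion_minutes: float,
                            share_immersed: float = 1.0) -> float:
    """Estimate engineering hours lost to one building-wide interruption.

    people            -- number of people who hear the interruption
    immersion_minutes -- time each person needs to get back into flow
    share_immersed    -- fraction who were actually in flow when interrupted
                         (1.0 gives the upper bound; this parameter is an
                         illustrative assumption, not a measured value)
    """
    if not 0.0 <= share_immersed <= 1.0:
        raise ValueError("share_immersed must be between 0 and 1")
    return people * share_immersed * immersion_minutes / 60


# Upper bound from the example: 1,000 engineers x 15 minutes each.
print(interruption_cost_hours(1_000, 15))        # 250.0
# If only 40% of them were immersed at that moment (a made-up figure):
print(interruption_cost_hours(1_000, 15, 0.4))   # 100.0
```

With the default of 1.0, the function gives the "up to" figure. Any realistic estimate falls somewhere below it.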

Quiet Time

One manager from a large European telecom company that we frequently work with told us that he had noticed that many of his staff members were staying later and later every day. Some others were coming in early each day. When queried about why they had voluntarily extended their days, most of these employees responded with a comment like, "I come in early so I can get some work done." When asked by the manager what they did all day during normal business hours, these employees explained, "The time that wasn't spent attending meetings was spent constantly answering e-mails, phone calls, or responding to colleagues' questions."

Shortly thereafter, the manager implemented a policy whereby every staff member had to designate a daily 2-hour window as quiet time. During quiet time, they couldn't attend meetings, receive phone calls (although they could call another colleague who was not in his or her quiet time), or be interrupted by colleagues. The quiet times were staggered for the staff members so that not everyone was "quiet" at the same time. After all, someone still had to talk to the customers. They also kept one 2-hour window when no one was on quiet time. This was reserved for group meetings and other activities. The manager said it was very successful and productivity rose significantly, although the policy was modified later to only have quiet time three days a week. We guess they didn't really need to do all that much work after all.

Key Point

One innovative test manager that we know required each of his testers to declare a 2-hour period of time each day when no interruptions were allowed.

Another innovative software engineer that we know "borrowed" a "Do Not Cross - Police Line" banner and drapes it across her door when she needs some quiet time (please note that we are not advocating swiping the police banners from a crime scene). Still another engineer had a sign designed like a clock (like you see in a restaurant window), where the hands can be moved to indicate a time - in this case the time when the engineer is ready to talk to her fellow engineers. These are all different approaches to achieving the same goal of getting a little quiet time to do real work. If you're a test manager, it should be clear to you that your staff already knows the importance of quiet time and so should you!

Key Point

Even though "Do Not Cross" banners and "clocks" may provide needed privacy, we worry that they may also label the individuals who use them as loners, rather than team players.

Meetings

Key Point

Many meetings are too long, have the wrong attendees, start and end late, and some have no clearly defined goal.

Most test managers and testers spend a great deal of time in meetings, and many of these meetings are, without a doubt, valuable. Some meetings, however, are not as effective as they could be. Many meetings are too long, have the wrong attendees, start and end late, and some have no clearly defined goal. We offer the following suggestions to make your meetings more productive:

- Start the meeting on time.

- Publish an agenda (in writing, if possible - an e-mail is fine) and objectives of the meeting.

- Specify who should attend (by name, title, or need).

- Keep the attendees to a workable number.

- Limit conversations to one at a time.

- Have someone take notes and publish them at the conclusion of the meeting.

- Urge participation, but prevent monopolization (by the people who just like the sound of their own voice).

- Choose a suitable location (properly equipped and free from interruptions).

- Review the results of the meeting against the agenda and objectives.

- Assign follow-up actions.

- Schedule a follow-up meeting, if necessary.

- End the meeting on time.

We realize that there are many times when an impromptu meeting will be called or may just "happen." We certainly approve of this communication medium and don't mean to suggest that every meeting has to follow the checklist above.

Case Study 8-4: Some organizations use meeting critique forms to measure the effectiveness of their meetings.

Nobody Told Me There Would Be a Critique Form

I was conducting a meeting at a client site several years ago. At the conclusion of the meeting, all of the participants began to fill out a form, which I learned later was a meeting critique form. This company critiqued every meeting much as they would critique a seminar or training class. I'm not really convinced that most organizations need to go to this level of formality when conducting meetings, but I do admit that it made me re-evaluate how I conducted meetings in the future. Oh, by the way, I didn't get a great rating on my first meeting, but subsequent meetings were graded higher. Perhaps this is a good example of the Hawthorne Effect at work?

— Rick Craig
