Example: Comparing Map Performance

The single-threaded performance of ConcurrentHashMap is slightly better than that of a synchronized HashMap, but it is in concurrent use that it really shines. The implementation of ConcurrentHashMap assumes that the most common operation is retrieving a value that already exists, and is therefore optimized to provide the highest performance and concurrency for successful get operations.

The major scalability impediment for the synchronized Map implementations is that there is a single lock for the entire map, so only one thread can access the map at a time. On the other hand, ConcurrentHashMap does no locking for most successful read operations, and uses lock striping for write operations and those few read operations that do require locking. As a result, multiple threads can access the Map concurrently without blocking.
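To make the idea of lock striping concrete, here is a minimal sketch. It is not how ConcurrentHashMap is actually implemented: the class name `StripedMapSketch`, the stripe count, and the use of one `HashMap` per stripe are all assumptions made for illustration. Unlike ConcurrentHashMap, this sketch also takes a lock on reads. The point is only that threads touching keys in different stripes never contend for the same lock.

```java
import java.util.HashMap;
import java.util.Map;

/** Hypothetical illustration of lock striping; not the real ConcurrentHashMap design. */
class StripedMapSketch<K, V> {
    private final Object[] locks;        // one lock per stripe
    private final Map<K, V>[] segments;  // one plain HashMap per stripe

    @SuppressWarnings("unchecked")
    StripedMapSketch(int stripes) {
        locks = new Object[stripes];
        segments = (Map<K, V>[]) new Map[stripes];
        for (int i = 0; i < stripes; i++) {
            locks[i] = new Object();
            segments[i] = new HashMap<K, V>();
        }
    }

    // Map a key to a stripe; masking the sign bit keeps the index non-negative.
    private int stripeFor(Object key) {
        return (key.hashCode() & 0x7fffffff) % locks.length;
    }

    V get(K key) {
        int s = stripeFor(key);
        synchronized (locks[s]) {        // only this stripe is locked
            return segments[s].get(key);
        }
    }

    V put(K key, V value) {
        int s = stripeFor(key);
        synchronized (locks[s]) {
            return segments[s].put(key, value);
        }
    }

    V remove(K key) {
        int s = stripeFor(key);
        synchronized (locks[s]) {
            return segments[s].remove(key);
        }
    }
}
```

With 16 stripes, up to 16 threads can hold locks at once as long as their keys fall into different stripes, whereas a map wrapped with `Collections.synchronizedMap` serializes every access on a single lock.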

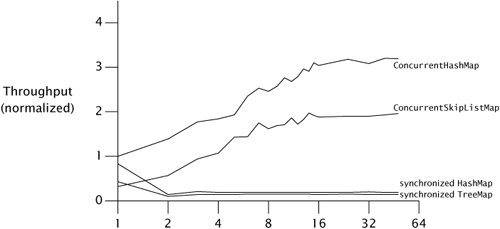

Figure 11.3 illustrates the differences in scalability between several Map implementations: ConcurrentHashMap, ConcurrentSkipListMap, and HashMap and TreeMap wrapped with synchronizedMap. The first two are thread-safe by design; the latter two are made thread-safe by the synchronized wrapper. In each run, N threads concurrently execute a tight loop that selects a random key and attempts to retrieve the value corresponding to that key. If the value is not present, it is added to the Map with probability p = 0.6, and if it is present, it is removed with probability p = 0.02. The tests were run under a pre-release build of Java 6 on an 8-way Sparc V880, and the graph displays throughput normalized to the one-thread case for ConcurrentHashMap. (The scalability gap between the concurrent and synchronized collections is even larger on Java 5.0.)
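The workload described above can be sketched roughly as follows. This is not the original test program: the class name `MapScalabilitySketch`, the key range, the operation count, and the use of `ThreadLocalRandom` (which postdates Java 6) are assumptions chosen for illustration. The sketch also omits the warm-up and repeated runs that a careful benchmark needs, so its numbers are only indicative.

```java
import java.util.Collections;
import java.util.HashMap;
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.ThreadLocalRandom;

/** Hypothetical harness approximating the workload described in the text. */
class MapScalabilitySketch {
    static final int KEY_RANGE = 10_000;        // assumed; not given in the text
    static final int OPS_PER_THREAD = 100_000;  // assumed; not given in the text

    /** Runs the workload with nThreads threads; returns total operations per second. */
    static double run(final Map<Integer, Integer> map, int nThreads) {
        final CountDownLatch start = new CountDownLatch(1);
        Thread[] workers = new Thread[nThreads];
        for (int i = 0; i < nThreads; i++) {
            workers[i] = new Thread(() -> {
                try {
                    start.await();  // release all threads at the same moment
                } catch (InterruptedException e) {
                    Thread.currentThread().interrupt();
                    return;
                }
                ThreadLocalRandom rnd = ThreadLocalRandom.current();
                for (int op = 0; op < OPS_PER_THREAD; op++) {
                    Integer key = rnd.nextInt(KEY_RANGE);
                    if (map.get(key) == null) {
                        if (rnd.nextDouble() < 0.6) map.put(key, key);  // add with p = 0.6
                    } else if (rnd.nextDouble() < 0.02) {
                        map.remove(key);                                // remove with p = 0.02
                    }
                }
            });
            workers[i].start();
        }
        long t0 = System.nanoTime();
        start.countDown();
        for (Thread t : workers) {
            try {
                t.join();
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
                throw new IllegalStateException("interrupted while waiting for workers", e);
            }
        }
        double seconds = (System.nanoTime() - t0) / 1e9;
        return (double) nThreads * OPS_PER_THREAD / seconds;
    }

    public static void main(String[] args) {
        // Normalize to the one-thread ConcurrentHashMap case, as the figure does.
        double baseline = run(new ConcurrentHashMap<Integer, Integer>(), 1);
        for (int n : new int[] {1, 2, 4, 8}) {
            double chm = run(new ConcurrentHashMap<Integer, Integer>(), n);
            double sync = run(Collections.synchronizedMap(new HashMap<Integer, Integer>()), n);
            System.out.printf("%d threads: ConcurrentHashMap %.2f, synchronized HashMap %.2f%n",
                    n, chm / baseline, sync / baseline);
        }
    }
}
```

The get-then-put sequence is not atomic, so two threads may both insert the same key. That is harmless here because the loop only measures throughput and does not depend on the map's final contents.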

The data for ConcurrentHashMap and ConcurrentSkipListMap shows that they scale well to large numbers of threads; throughput continues to improve as threads are added. While the numbers of threads in Figure 11.3 may not seem large, this test program generates more contention per thread than a typical application because it does little other than pound on the Map; a real program would do additional thread-local work in each iteration.

Figure 11.3. Comparing Scalability of Map Implementations.

The numbers for the synchronized collections are not as encouraging. Performance for the one-thread case is comparable to ConcurrentHashMap, but once the load transitions from mostly uncontended to mostly contended (which happens here at two threads), the synchronized collections suffer badly. This is common behavior for code whose scalability is limited by lock contention. So long as contention is low, time per operation is dominated by the time to actually do the work and throughput may improve as threads are added. Once contention becomes significant, time per operation is dominated by context switch and scheduling delays, and adding more threads has little effect on throughput.
