A Practical Approach to Configuration Verification and Audit

OVERVIEW

EIA-649, the standard for configuration management, requires the organization to verify that a product's stated requirements have been met. Verification can be accomplished by a systematic comparison of requirements with the results of tests, analyses, and inspections.

There are three components to establishing a rigorous configuration verification and audit methodology:

- Establishing and implementing a standard design and document verification methodology

- Establishing and implementing a standard configuration audit methodology

- Establishing and implementing a standard testing methodology

This chapter correlates the CM process of verification and audit with traditional software engineering testing methodology.

COMPONENTS OF A DESIGN AND DOCUMENT VERIFICATION METHODOLOGY

The basis of configuration management is the rigorous control and verification of all system artifacts. These artifacts include:

- The feasibility study

- The project plan

- The requirements specification

- The design specification

- The database schemas

- The test plan

EIA-649 states that the documentation must be accurate and sufficiently complete to permit the reproduction of the product without further design effort. What this means is that if the set of documents described in the list above was given to a different set of programmers, the same exact system would be produced.

Activities that can accomplish this end include:

- Rigorous review of all documentation by inspection teams.

- Continuous maintenance of documentation using an automated documentation library with check-in and check-out facilities.

- Maintenance of the product's baseline.

- Implementation of requirements traceability review. Every requirement originated as a need stated by a person or persons. Traceability back to this person or persons is critical if the product is to be accurately verified.

- Implementation of data dictionary and/or repository functionality to manage digital data.
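A requirements traceability review of the kind listed above can be sketched in a few lines of C++. This is only an illustration under stated assumptions, not something EIA-649 prescribes: the `Requirement` and `TestResult` types, their field names, the identifier scheme, and the `unverified_requirements` function are all hypothetical. The sketch compares each stated requirement with the recorded results and reports the requirements that have no passing result.

```cpp
#include <cassert>
#include <set>
#include <string>
#include <vector>

// Hypothetical record of a stated requirement and the person who originated it.
struct Requirement {
    std::string id;          // e.g., "REQ-001" (illustrative identifier scheme)
    std::string originator;  // person or group that stated the need
};

// Hypothetical record of one test, analysis, or inspection result.
struct TestResult {
    std::string requirement_id;  // requirement the result traces back to
    bool passed;
};

// Returns the IDs of requirements that have no passing result, in input order.
std::vector<std::string> unverified_requirements(
    const std::vector<Requirement>& requirements,
    const std::vector<TestResult>& results) {
    std::set<std::string> verified;
    for (const TestResult& r : results) {
        if (r.passed) verified.insert(r.requirement_id);
    }
    std::vector<std::string> missing;
    for (const Requirement& req : requirements) {
        if (verified.count(req.id) == 0) missing.push_back(req.id);
    }
    return missing;
}
```

An empty return value means every requirement traces to at least one passing result. Keeping the originator alongside each requirement lets a reviewer go back to the person who stated the need when a requirement turns up as unverified.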

COMPONENTS OF A CONFIGURATION AUDIT METHODOLOGY

Configuration audit requires the following resources:

- Appropriately assigned staff, including a team leader as well as representatives of the systems and end-user groups

- A detailed audit plan

- Availability of all documentation discussed in the prior section (it is presumed that this documentation is readily available in a controlled, digitized format)

Audits can be performed upon implementation of a new system or the maintenance of an existing one. Prior to conducting the audit, the audit plan, which details the scope of the effort, is created and approved by all appropriate personnel.

The audit process itself is not unlike the testing process described later in this chapter. An audit plan, therefore, is very similar to a test plan. During the audit, auditors:

- Compare the specification to the product and record discrepancies and anomalies.

- Review the output of the testing cycle and record discrepancies and anomalies.

- Review all documentation and record discrepancies and anomalies. In a CM (configuration management) environment, the following should be verified for each document:

  - A documentation library control system is being utilized.

  - The product identifier is unique.

  - All interfaces are valid.

  - Internal audit records of CM processes are maintained.

- Record questions, if any, about what was observed. Obtain answers to these questions.

- Make recommendations as to action items to correct any discovered discrepancies and anomalies.

- Present formal findings.

Audit minutes provide a detailed record of the findings, recommendations, and conclusions of the audit committee. The committee follows up until all required action items are complete.
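Some of the per-document checks an auditor performs lend themselves to automation. The following is a minimal sketch, assuming a hypothetical `DocumentRecord` type and `audit_documents` function; it covers library control, identifier uniqueness, and the presence of audit records, and leaves interface validity to human review because that check depends on knowledge of the product.

```cpp
#include <cassert>
#include <map>
#include <string>
#include <vector>

// Hypothetical description of one controlled document under audit.
struct DocumentRecord {
    std::string product_id;      // identifier that must be unique
    bool under_library_control;  // held in the documentation library control system
    bool has_audit_records;      // internal CM audit records are maintained
};

// Returns one human-readable finding per discrepancy; empty means no findings.
std::vector<std::string> audit_documents(const std::vector<DocumentRecord>& docs) {
    std::vector<std::string> findings;
    std::map<std::string, int> id_counts;
    for (const DocumentRecord& d : docs) ++id_counts[d.product_id];

    // Per-document findings, reported in the order the documents were given.
    for (const DocumentRecord& d : docs) {
        if (!d.under_library_control)
            findings.push_back(d.product_id + ": not under library control");
        if (!d.has_audit_records)
            findings.push_back(d.product_id + ": no internal audit records");
    }
    // Identifier uniqueness is checked once per identifier, after the loop above.
    for (const auto& entry : id_counts) {
        if (entry.second > 1)
            findings.push_back(entry.first + ": product identifier is not unique");
    }
    return findings;
}
```

The list of findings could feed directly into the audit minutes as recorded discrepancies.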

COMPONENTS OF A TESTING METHODOLOGY

The goal of testing is to uncover and correct errors. Because software is so complex, it is reasonable to assume that software testing is a labor- and resource-intensive process. Automated software testing helps to improve testers' productivity and reduce the resources that may be required. By its very nature, automated software testing increases test coverage levels, speeds up test turnaround time, and cuts costs of testing.

The classic software development life-cycle model suggests a systematic, sequential approach to software development that progresses through software requirements analysis, design, code generation, and testing. That is, once the source code has been generated, program testing begins with the goal of finding differences between the expected behavior specified by system models and the observed behavior of the system.

The process of creating software applications that are error-free requires technical sophistication in the analysis, design, and implementation of that software, proper test planning, as well as robust automated testing tools. When planning and executing tests, software testers must consider the software and the function it performs, the inputs and how they can be combined, and the environment in which the software will eventually operate.

There are a variety of software testing activities that can be implemented, as discussed below.

Inspections

Software development is, for the most part, a team effort, although the programs themselves are coded individually. Periodically throughout the coding of a program, the project leader or project manager will schedule inspections of a program (see Appendices F and G). An inspection is the process of manually examining the code in search of common errors.

Each programming language has its own set of common errors. It is therefore worthwhile to spend a bit of time in search of these errors.

An example of what an inspection team would look for follows:

```cpp
int i;
for (i = 0; i < 10; i++)
{
    cout << "Enter velocity for "
         << i << "numbered data point: "
    cin >> data_point[i].velocity;
    cout << "Enter direction for that data point"
         << " (N, S, E or W): ";
    cin >> data_point[i].direction;
}
```

The C++ code displayed above looks correct. However, the semicolon is missing from the end of the fifth line of code. The corrected code is:

```cpp
int i;
for (i = 0; i < 10; i++)
{
    cout << "Enter velocity for "
         << i << "numbered data point: ";  // the ; was missing
    cin >> data_point[i].velocity;
    cout << "Enter direction for that data point"
         << " (N, S, E or W): ";
    cin >> data_point[i].direction;
}
```

Walk-Throughs

A walk-through is a manual testing procedure that examines the logic of the code. This is somewhat different from an inspection, where the goal is to find syntax errors. The walk-through procedure attempts to answer the following questions:

- Does the program conform to the specification for that program?

- Is the input being handled properly?

- Is the output being handled properly?

- Is the logic correct?

The best way to handle a walk-through is:

- Appoint a chairperson who schedules the meeting, invites appropriate staff, and sets the agenda.

- The programmer presents his or her code.

- The code is discussed.

- Test cases can be used to "walk through" the logic of the program for specific circumstances.

- Disagreements are resolved by the chairperson.

- The programmer goes back and fixes any problems.

- A follow-up walk-through is scheduled.

Unit Testing

During the early stages of the testing process, the programmer usually performs all tests. This stage of testing is referred to as unit testing. Here, the programmer usually works with the debugger that accompanies the compiler. For example, Visual Basic, as shown in Figure 9.1, enables the programmer to "step through" a program's (or object's) logic one line of code at a time, viewing the value of any and all variables as the program proceeds.

Figure 9.1: Visual Basic, along with Other Programming Toolsets, Provides Unit Testing Capabilities to Programmers

Daily Build and Smoke Test

McConnell [1996] describes a testing methodology commonly used at companies such as Microsoft that sell shrink-wrapped software. The "daily build and smoke" test is a process whereby every file is compiled, linked, and combined into an executable program on a daily basis. The executable is then put through a "smoke" test, a relatively simple check, to see if the product "smokes" when it runs.

According to McConnell [1996], this simple process has some significant benefits, including:

- It minimizes integration risk by ensuring that disparate code, usually developed by different programmers, is well integrated.

- It reduces the risk of low quality by forcing the system to a minimally acceptable standard of quality.

- It supports easier defect diagnosis by requiring the programmers to solve problems as they occur rather than waiting until the problem is too large to solve.

For this to be a successful effort, the build part of this task should:

- Compile all files, libraries, and other components successfully

- Link successfully all files, libraries, and components

- Not contain any "show-stopper bugs" that prevent the program from operating properly

The "smoke" part of this task should:

- Exercise the entire system from end to end with a goal of exposing major problems

- Evolve as the system evolves: as the system becomes more complex, the smoke test should become more complex
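The "smoke" part of the task can be sketched as an ordered list of end-to-end checks that is run against each daily build. This is a minimal illustration rather than McConnell's own tooling: the `SmokeStep` and `SmokeResult` types and the `run_smoke_test` function are hypothetical, and stopping at the first failure is a design choice of this sketch.

```cpp
#include <cassert>
#include <functional>
#include <string>
#include <utility>
#include <vector>

// One end-to-end step: a name and a check that returns true on success.
using SmokeStep = std::pair<std::string, std::function<bool()>>;

struct SmokeResult {
    bool passed;
    std::string failed_step;  // empty when every step passed
};

// Runs the steps in order and stops at the first failure (a choice made in
// this sketch so that a broken build is reported as early as possible).
SmokeResult run_smoke_test(const std::vector<SmokeStep>& steps) {
    for (const SmokeStep& step : steps) {
        if (!step.second()) return SmokeResult{false, step.first};
    }
    return SmokeResult{true, ""};
}
```

Because the steps are held in a plain list, the smoke test can evolve with the system: new checks are appended as new features are integrated.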

Integration Testing

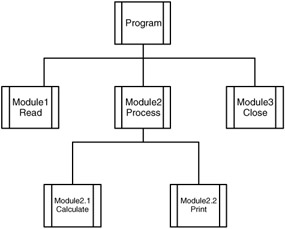

A particular program is usually made up of many modules. An OO (object-oriented) system is composed of many objects. Programmers usually architect their programs in a top-down, modular fashion. Integration testing proves that the module interfaces are working properly. For example, in Figure 9.2, a programmer doing integration testing would ensure that the Module2 (the Process module) correctly interfaces with its subordinate, Module2.1 (the Calculate process).

Figure 9.2: Integration Testing Proves that Module Interfaces Are Working Properly

If Module2.1 had not yet been written, it would have been referred to as a stub. It would still be possible to perform integration testing if the programmer inserts two or three lines of code in the stub, which would act to prove that it is well integrated to Module2.

On occasion, a programmer will code all the subordinate modules first and leave the higher-order modules for last. This is known as bottom-up programming. In this case, Module2 would be empty, save for a few lines of code to prove that it is integrating correctly with Module2.1, etc. In this case, Module2 would be referred to as a driver.
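The roles of stub and driver can be made concrete with a short sketch. The names below (`CalculateModule`, `CalculateStub`, `CalculateReal`, `module2_process`, `module2_driver`) are hypothetical, as are the canned value and the doubling calculation; the sketch shows only the shape of the technique.

```cpp
#include <cassert>

// Interface between Module2 (Process) and its subordinate Module2.1 (Calculate).
class CalculateModule {
public:
    virtual ~CalculateModule() = default;
    virtual double calculate(double input) = 0;
};

// Top-down case: Module2.1 is not yet written, so a stub stands in for it.
// The stub records that it was called and returns a canned value.
class CalculateStub : public CalculateModule {
public:
    double calculate(double input) override {
        last_input = input;
        ++calls;
        return 42.0;  // canned result, not a real calculation
    }
    double last_input = 0.0;
    int calls = 0;
};

// A finished Module2.1 (the doubling rule is purely illustrative).
class CalculateReal : public CalculateModule {
public:
    double calculate(double input) override { return input * 2.0; }
};

// The real Module2: passes its input to Module2.1 and returns the result.
double module2_process(CalculateModule& calc, double input) {
    return calc.calculate(input);
}

// Bottom-up case: Module2 is not yet written, so a driver of a few lines
// calls Module2.1 and reports whether the interface behaves as expected.
bool module2_driver(CalculateModule& calc) {
    return calc.calculate(2.0) == 4.0;
}
```

In the top-down case, the test passes a `CalculateStub` to `module2_process` and then inspects `calls` and `last_input` to confirm that Module2 invoked its subordinate correctly. In the bottom-up case, `module2_driver` is run against `CalculateReal`.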

System Testing

Where integration testing is performed on the discrete programs or objects within a master program, system testing refers to testing the interfaces between programs within a system. Because a system can be composed of hundreds of programs, this is a vast undertaking.

Parallel Testing

It is quite possible that the system being developed is a replacement for an existing system. In this case, parallel testing is performed. The goal here is to compare outputs generated by each of the systems (old versus new) and determine why there are differences, if any.

Parallel testing requires that the end user(s) be part of the testing team. If the end user determines that the system is working correctly, one can say that the customer "has accepted" the system. This, then, is a form of customer acceptance testing.
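The comparison at the center of parallel testing can be sketched as follows. The sketch assumes, for illustration only, that each system can be called as a function from an input string to an output string; the `Difference` type and `run_in_parallel` function are hypothetical names.

```cpp
#include <cassert>
#include <functional>
#include <string>
#include <vector>

// One mismatch between the old and new systems, kept for later investigation.
struct Difference {
    std::string input;
    std::string old_output;
    std::string new_output;
};

using System = std::function<std::string(const std::string&)>;

// Feeds the same inputs to both systems and collects every mismatch.
std::vector<Difference> run_in_parallel(const System& old_system,
                                        const System& new_system,
                                        const std::vector<std::string>& inputs) {
    std::vector<Difference> differences;
    for (const std::string& input : inputs) {
        std::string old_out = old_system(input);
        std::string new_out = new_system(input);
        if (old_out != new_out) differences.push_back({input, old_out, new_out});
    }
    return differences;
}
```

The function only finds the differences. Determining why each one exists, and whether the old or the new system is correct, remains a task for the testing team and the end user.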

THE QA PROCESS

As the testing progresses, testing specialists may become involved (see Appendix H for a sample QA Handover Document). Within the vernacular of IT, staff members who are dedicated to performing testing are referred to as quality assurance (QA) engineers and reside within the quality assurance department. QA testers must have a good understanding of the program being tested, as well as the programming language in which the program was coded. In addition, the QA engineer must be methodical and able to grasp complex logic. Generally speaking, technical people with these attributes are hard to come by and even harder to keep, as most of them aspire to become programmers themselves.

Even simple software can present testers with obstacles. Couple this complexity with the difficulty of attracting and keeping QA staff and one has the main reason why many organizations now automate parts of the testing process.

THE TEST PLAN

Software testing is one critical element of software quality assurance (SQA) that aims to determine the quality of the system and its related models. In such a process, a software system will be executed to determine whether it matches its specification and executes in its intended environment. To be more precise, the testing process focuses on both the logical internals of the software, ensuring that all statements have been tested, and on the functional externals by conducting tests to uncover errors and ensure that defined input will produce actual results that agree with required results.

To ensure that the testing process is complete and thorough, it is necessary to create a test plan (Appendix E).

A thorough test plan consists of the items listed in Table 9.1. A sample test plan, done by this author's students for an OO dog grooming system, can be found in Appendix E. While all components of this test plan are important, one notes that the test plan really focuses on three things:

- The test cases

- Metrics that will determine whether there has been testing success or failure

- The schedule


The test cases are the heart of the test plan. A test case specifies the exact steps the tester must take to test a particular function of the system. A sample test case appears in Table 9.2.

| C1. Log-in Page (based on case A1) |
|---|
| Description/Purpose: Test the proper display of the login, user verification, and that the user is redirected to the correct screen. |
| Stubs Required: |
| Steps: |
| Expected Results: |

Test plans must be carefully created. A good test plan contains a test case for every facet of the system to be tested.
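A test case with the fields shown in the sample (identifier, purpose, stubs required, steps, and expected results) maps naturally onto a small data structure. The following sketch is illustrative only: the type names, the `Performer` hook that carries out a step against the system, and the `first_failing_step` function are assumptions, not part of any particular test plan format.

```cpp
#include <cassert>
#include <cstddef>
#include <functional>
#include <string>
#include <vector>

// One step of a test case: what the tester does and what should be observed.
struct TestStep {
    std::string action;
    std::string expected_result;
};

// A test case with the same fields as the sample in the test plan.
struct TestCase {
    std::string id;
    std::string purpose;
    std::vector<std::string> stubs_required;
    std::vector<TestStep> steps;
};

// Hypothetical hook: performs one action against the system under test and
// returns what was actually observed.
using Performer = std::function<std::string(const std::string& action)>;

// Returns the zero-based index of the first step whose actual result differs
// from the expected result, or -1 if every step matches.
int first_failing_step(const TestCase& test_case, const Performer& perform) {
    for (std::size_t i = 0; i < test_case.steps.size(); ++i) {
        const TestStep& step = test_case.steps[i];
        if (perform(step.action) != step.expected_result) return static_cast<int>(i);
    }
    return -1;
}
```

Recording the exact steps and expected results in this form gives a tester, or a testing tool, the same instructions on every run.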

Some organizations purchase automated testing tools that assist the developer in testing the system. These are particularly useful for:

- Automatically creating test cases. Testing tools can "watch" what a person does at the keyboard and then translate what they record into a testing script. For example, a loan application transaction consists of logging into a system, calling up a particular screen, and then entering some data. Automatic testing tools can "watch" someone key in this transaction and create a script that details the steps that person took to complete the transaction.

- Simulating large numbers of end users. If a system will ultimately have dozens, hundreds, or even thousands of end users, then testing the system with a handful of testers will be insufficient. An automatic testing tool has the capability to simulate any number of end users. This is referred to as testing the "load" of a system.

Success must be measured. The test plan should contain metrics that assess the success or failure of the system's components. Examples of viable metrics include:

- For each class, indicators of test failure (as identified in the test cases)

- Number of failure indicators per class

- Number of failure indicators per sub-system

- A categorization of failure indicators by severity

- Number of repeat failures (not resolved in the previous iteration)

- Hours spent by test team in test process

- Hours spent by development team in correcting failures
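Several of the metrics listed above are simple tallies over the recorded failure indicators. The sketch below shows one way they might be computed; the `FailureIndicator` and `FailureMetrics` types, the `summarize` function, and the numeric severity scale are assumptions made for illustration.

```cpp
#include <cassert>
#include <map>
#include <string>
#include <vector>

// Hypothetical record of one failure indicator raised by a test case.
struct FailureIndicator {
    std::string class_name;
    std::string subsystem;
    int severity;  // assumed scale: 1 is the most severe
    bool repeat;   // true if the failure was not resolved in the previous iteration
};

// Tallies corresponding to the metrics listed in the test plan.
struct FailureMetrics {
    std::map<std::string, int> per_class;
    std::map<std::string, int> per_subsystem;
    std::map<int, int> by_severity;
    int repeat_failures = 0;
};

// Counts the failure indicators by class, by sub-system, and by severity,
// and counts how many are repeats.
FailureMetrics summarize(const std::vector<FailureIndicator>& failures) {
    FailureMetrics m;
    for (const FailureIndicator& f : failures) {
        ++m.per_class[f.class_name];
        ++m.per_subsystem[f.subsystem];
        ++m.by_severity[f.severity];
        if (f.repeat) ++m.repeat_failures;
    }
    return m;
}
```

The hours spent by the test and development teams are not covered here; they would come from time records rather than from test output.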

TEST AUTOMATION

The usual practice in software development is that the software is written as quickly as possible, and once the application is done, it is tested and debugged. However, this is a costly and ineffective approach because the software testing process is difficult, time consuming, and resource intensive. With manual test strategies, this can be even more complicated and cumbersome. A better alternative is to perform unit testing that is independent of the rest of the code. During unit testing, developers compare the object design model with each object and sub-system. Errors detected at the unit level are much easier to fix; one only has to debug the code in that small unit. Unit testing is widely recognized as one of the most effective ways to ensure application quality. It is a laborious and tedious task, however. The workload is so large that performing unit testing manually is practically impossible; hence the need for automatic unit testing. Another good reason to automate unit testing is that when performing manual unit testing, one runs the risk of making mistakes [Aivazis 2000].

In addition to saving time and preventing human errors, automatic unit testing helps facilitate integration testing. After unit testing has removed errors in each sub-system, combinations of sub-systems are integrated into larger sub-systems and tested. When tests do not reveal new errors, additional sub-systems are added to the group, and another iteration of integration testing is performed. The re-execution of some subset of tests that have already been conducted is regression testing. This ensures that no errors are introduced as a result of adding new modules or modifying the software [Kolawa 2001].
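An automatic unit test is simply code that exercises one small unit and checks the results without anyone stepping through a debugger. The following is a minimal sketch using only the standard `assert` macro rather than any commercial tool; the unit under test, `is_valid_direction`, is a hypothetical helper based on the direction codes (N, S, E, or W) from the inspection example earlier in this chapter.

```cpp
#include <cassert>

// Unit under test (hypothetical): accepts only the direction codes N, S, E, W.
bool is_valid_direction(char c) {
    return c == 'N' || c == 'S' || c == 'E' || c == 'W';
}

// Automated unit test for the unit above. Each assert aborts the program if
// its condition is false (asserts are compiled out when NDEBUG is defined).
void test_is_valid_direction() {
    assert(is_valid_direction('N'));
    assert(is_valid_direction('S'));
    assert(is_valid_direction('E'));
    assert(is_valid_direction('W'));
    assert(!is_valid_direction('n'));  // lowercase is rejected in this sketch
    assert(!is_valid_direction('X'));
}
```

Because the test is itself a function, it can be called from a test program after every change to the unit, which is what makes repeated unit testing affordable.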

As integration testing proceeds, the number of regression tests can grow very large. Therefore, it is inefficient and impractical to re-execute every test manually once a change has occurred. The use of automated capture/playback tools may prove useful in this case. Such tools enable the software engineer to capture test cases and results for subsequent playback and comparison.
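The capture/playback idea can be sketched without reference to any particular tool. In the illustration below, which assumes for simplicity that the system under test maps an input string to an output string, `capture` records a baseline of outputs and `replay` re-executes the same inputs after a change and reports those whose output differs. All names are hypothetical.

```cpp
#include <cassert>
#include <functional>
#include <map>
#include <string>
#include <vector>

using SystemUnderTest = std::function<std::string(const std::string&)>;
using Baseline = std::map<std::string, std::string>;  // input -> captured output

// Capture: run each input once and record the output as the baseline.
Baseline capture(const SystemUnderTest& system,
                 const std::vector<std::string>& inputs) {
    Baseline baseline;
    for (const std::string& input : inputs) baseline[input] = system(input);
    return baseline;
}

// Playback: re-run every captured input and return the inputs whose output
// no longer matches the baseline (in sorted order, because Baseline is a map).
std::vector<std::string> replay(const SystemUnderTest& system,
                                const Baseline& baseline) {
    std::vector<std::string> regressions;
    for (const auto& entry : baseline) {
        if (system(entry.first) != entry.second) regressions.push_back(entry.first);
    }
    return regressions;
}
```

An empty result from `replay` indicates that the change introduced no differences for the captured cases; it says nothing about behavior that was never captured.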

Test automation can improve testers' productivity. Testers can apply one of several types of testing tools and techniques at various points of code integration. Some examples of automatic testing tools in the marketplace include:

- C++Test for automatic C/C++ unit testing by ParaSoft

- Cantata++ for dynamic testing of C++ by IPL

- WinRunner for unit and system tests by Mercury Interactive

WinRunner is probably one of the more popular tools in use today because it automates much of the painful process of testing. Used in conjunction with a series of test cases (see Appendix E, Section 5), a big chunk of the manual processes that constitute the bulk of testing can be automated. The WinRunner product actually records a particular business process by recording the keystrokes a user makes (e.g., emulates the user actions of placing an order). The QA person can then directly edit the test script that WinRunner generates and add checkpoints and other validation criteria.

When done correctly, and with appropriate testing tools and strategies, automated software testing provides worthwhile benefits such as repeatability and significant time savings. This is true especially when the system moves into system test. Higher quality is also a result because less time is spent in tracking down test environmental variables and rewriting poorly written test cases [Raynor 1999].

Pettichord [2001] describes several principles that testers should adhere to in order to succeed with test automation. These principles include:

- Taking testing seriously

- Being careful who you choose to perform these tests

- Choosing what parts of the testing process to automate

- Being able to build maintainable and reliable test scripts

- Using error recovery

Testers must realize that test automation itself is a software development activity and thus it needs to adhere to standard software development practices. That is, test automation systems themselves must be tested and subjected to frequent review and improvement to make sure that they are indeed addressing the testing needs of the organization.

Because automating test scripts is part of the testing effort, good judgment is required in selecting appropriate tests to automate. Not everything can or should be automated. For example, overly complex tests are not worth automating. Manual testing is still necessary for this situation. Zambelich [2002] provides a guideline to make automated testing cost-effective. He says that automated testing is expensive and does not replace the need for manual testing or enable one to "down-size" a testing department. Automated testing is an addition to the testing process. Some pundits claim that it can take between three and ten times as long (or longer) to develop, verify, and document an automated test case than to create and execute a manual test case. Zambelich indicates that this is especially true if one elects to use the "record/playback" feature (contained in most test tools) as the primary automated testing methodology. In fact, Zambelich says that record/playback is the least cost-effective method of automating test cases.

Automated testing can be made cost-effective, according to Zambelich, if some common sense is applied to the process:

- Choose a test tool that best fits the testing requirements of your organization or company. An "Automated Testing Handbook" is available from the Software Testing Institute (http://www.softwaretestinginstitute.com).

- Understand that it does not make sense to automate everything. Overly complex tests are often more trouble than they are worth to automate. Concentrate on automating the majority of tests, which are probably fairly straightforward. Leave the overly complex tests for manual testing.

- Only automate tests that are going to be repeated; one-time tests are not worth automating.

Isenberg [1994] explains the requirements for success in automated software testing. To succeed, the following four interrelated components must work together and support one another:

- Automated testing system. It must be flexible and easy to update.

- Testing infrastructure. This includes a good bug tracking system, standard test case format, baseline test data, and comprehensive test plans.

- Software testing life cycle. This defines a set of phases outlining what test activities to do and when to do them. These phases are planning, analysis, design, construction, testing (initial test cycles, bug fixes, and retesting), final testing and implementation, and post implementation.

- Corporate support. Automation cannot succeed without the corporation's commitment to adopting and supporting repeatable processes.

Automated testing systems should have the ability to adjust and respond to unexpected changes to the software under test, which means that the testing systems will stay useful over time. Some of the practical features of automated software testing systems suggested by Isenberg [1994] include:

- Run all day and night in unattended mode

- Continue running even if a test case fails

- Write out meaningful logs

- Keep test environment up-to-date

- Track tests that pass, as well as tests that fail
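Three of these features (continuing after a failure, writing meaningful logs, and tracking passes as well as failures) can be illustrated with a very small test runner. This is a sketch under stated assumptions, not Isenberg's design: the `NamedTest` and `RunReport` types and the `run_all` function are hypothetical, and a test is assumed to signal failure by throwing an exception.

```cpp
#include <cassert>
#include <exception>
#include <functional>
#include <sstream>
#include <stdexcept>
#include <string>
#include <utility>
#include <vector>

// A test is a name plus a callable that throws to signal failure.
using NamedTest = std::pair<std::string, std::function<void()>>;

struct RunReport {
    std::vector<std::string> passed;
    std::vector<std::string> failed;
    std::string log;  // one line per test, in execution order
};

// Runs every test, even after a failure, and records the outcome of each.
RunReport run_all(const std::vector<NamedTest>& tests) {
    RunReport report;
    std::ostringstream log;
    for (const NamedTest& t : tests) {
        try {
            t.second();
            report.passed.push_back(t.first);
            log << "PASS " << t.first << "\n";
        } catch (const std::exception& e) {
            report.failed.push_back(t.first);
            log << "FAIL " << t.first << ": " << e.what() << "\n";
        } catch (...) {
            report.failed.push_back(t.first);
            log << "FAIL " << t.first << ": unknown error\n";
        }
    }
    report.log = log.str();
    return report;
}
```

Catching the exception inside the loop is what allows an unattended overnight run to finish: one failing test case produces a log entry instead of stopping the whole run.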

When automated testing tools are introduced, there may be some difficulties that test engineers must face. Project management should be used to plan the implementation of testing tools. Without proper management and selection of the right tool for the job, automated test implementation will fail [Hendrickson 1998]. Dustin [1999] has accumulated a list of "Automated Testing Lessons Learned" from his experiences with real projects and test engineer feedback. Some include:

- The various tools used throughout the development life cycle do not integrate easily if they are from different vendors.

- An automated testing tool can speed up the testing effort; however, it should be introduced early in the testing life cycle to gain benefits.

- Duplicate information may be kept in multiple repositories and difficult to maintain. As a matter of fact, in many instances, the implementation of more tools can result in decreased productivity.

- The automated testing tool drives the testing effort. When a new tool is used for the first time, more time is often spent on installation, training, initial test case development, and automating test scripts than on actual testing.

- It is not necessary for everyone on the testing staff to spend his or her time automating scripts.

- Sometimes, elaborate test scripts are developed through overuse of the testing tool's programming language, which duplicates the development effort. That is, too much time is spent on automating scripts without much additional value gained. Therefore, it is important to conduct an automation analysis and to determine the best approach to automation by estimating the highest return.

- Automated test script creation is cumbersome. It does not happen automatically.

- Tool training needs to be initiated early in the project so that test engineers have the knowledge to use the tool.

- Testers often resist new tools. When first introducing a new tool to the testing program, mentors and advocates of the tool are very important.

- There are expectations of early payback. When a new tool is introduced to a project, project members anticipate that the tool will narrow down the testing scope right away. In reality, it is the opposite; that is, initially the tool will increase the testing scope.

SUMMARY

Test engineers can enjoy productivity increases as the testing task becomes automated and a thorough test plan is implemented. Creating a good and comprehensive automated test system requires an additional investment of time and consideration, but it is cost-effective in the long run. More tests can be executed while the amount of tedious work on construction and validation of test cases is reduced.

Automated software testing is by no means a complete substitute for manual testing. That is, manual testing cannot be totally eliminated; it should always precede automated testing. In this way, the time and effort that will be saved from the use of automated testing can now be focused on more important testing areas.

Configuration management insists that we create and follow procedures for verification of a product's adherence to the specification from which it was derived. A combination of rigorously defined testing, documentation, and audit methodologies fulfills this requirement.

REFERENCES

Aivazis, M., "Automatic Unit Testing," Computer, 33(5), back cover, May 2000.

Bruegge, B. and A.H. Dutoit, Object-Oriented Software Engineering: Conquering Complex and Changing Systems, Prentice Hall, Upper Saddle River, NJ, 2000.

Dustin, E., "Lessons in Test Automation," STQE Magazine, September/October 1999, and from the World Wide Web: http://www.stickyminds.com/pop_print.asp?ObjectId=1802&ObjectType=ARTCO

Hendrickson, E., The Difference between Test Automation Failure and Success, Quality Tree Software, August 1998, retrieved from http://www.qualitytree.com/feature/dbtasaf.pdf

Isenberg, H.M., "The Practical Organization of Automated Software Testing," Multi Level Verification Conference 95, December 1994, retrieved from http://www.automated-testing.com/PATfinal.htm

Kolawa, A., "Regression Testing at the Unit Level?," Computer, 34(2), back cover, February 2001.

McConnell, S., "Daily Build and Smoke Test," IEEE Software, 13(4), July 1996.

Pettichord, B., "Success with Test Automation," June 2001, retrieved from http://www.io.com/~wazmo/succpap.htm

Pressman, R.S., Software Engineering: A Practitioner's Approach, 5th ed., McGraw-Hill, Boston, MA, 2001.

Raynor, D.A., "Automated Software Testing," retrieved from http://www.trainers-direct.com/resources/articles/ProjectManagement/AutomatedSoftwareTestingRaynor.html

Whittaker, J.A., "What Is Software Testing? And Why Is It So Hard?," IEEE Software, January/February 2000, 70–79.

Zallar, K., "Automated Software Testing - A Perspective," retrieved from http://www.testingstuff.com/autotest.html

Zambelich, K., "Totally Data-Driven Automated Testing," 2002, retrieved from http://www.sqa-test.com/w_paper1.html
