Identifying Project Scope Risk

Overview

"Well begun is half done."

—ARISTOTLE

While beginning well never actually completes half of a project, beginning poorly leads to disappointment, rework, stress, and potential failure. A great deal of project risk can be discovered at the earliest stages of project work, when project leaders are defining the scope of the project.

For risks associated with the elements of the project management triple constraint (scope, schedule, and resources), scope risk generally is considered first. Of the three types of doomed projects—those that are beyond your capabilities, those that are over-constrained, and those that are ineffectively executed—the first type is the most significant, because this type of project is literally impossible. Identification of scope risks reveals either that your project is probably feasible or that it lies beyond the state of your art. Early decisions to shift the scope or abandon the project are essential on projects with significant scope risks.

There is little consensus in project management circles on a precise definition of "scope." Very broad definitions use scope to refer to everything in the project, and very narrow definitions limit project scope to include only project deliverables. For the purposes of this chapter, project scope is defined to be consistent with the Guide to the Project Management Body of Knowledge, 2000 edition (PMBOK Guide). The type of scope risk considered here relates primarily to the project deliverable(s). Other types of project risk are covered in later chapters.

The principal risk ideas covered in this chapter are:

- Sources of scope risk

- Deliverable definition

- High-level risk assessment

- Setting limits

- Work breakdown structure

- Market and confidentiality risk

Sources of Scope Risk

While scope risks represent roughly one-third of the data in the Project Experience Risk Information Library (PERIL) database, they account for close to half of the total impact. The two broad categories of scope risk in PERIL relate to changes and to defects. By far the most damage was due to poorly managed change, but all scope risks represent significant exposure in typical high-tech projects. While some of the risk situations, particularly in the category of defects, were legitimately "unknown" risks, quite a few common problems could have been identified in advance through better definition of deliverables and a more thorough work breakdown structure. The summary:

| SCOPE RISKS | COUNT | CUMULATIVE IMPACT (WEEKS) | AVERAGE IMPACT (WEEKS) |
|---|---|---|---|
| Changes | 46 | 280 | 6.1 |
| Defect | 30 | 198 | 6.6 |
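The averages in the summary can be reproduced from the counts and cumulative impacts. The short Python sketch below does that arithmetic; the dictionary layout and the function names are illustrative choices, not part of the PERIL database itself.

```python
# Counts and cumulative impacts copied from the PERIL summary table.
SCOPE_RISKS = {
    "Changes": {"count": 46, "cumulative_weeks": 280},
    "Defect": {"count": 30, "cumulative_weeks": 198},
}


def average_impact(count, cumulative_weeks):
    """Average schedule impact per reported risk, in weeks (one decimal)."""
    return round(cumulative_weeks / count, 1)


def share_of_count(category):
    """Fraction of all reported scope risks that fall in `category`."""
    total = sum(row["count"] for row in SCOPE_RISKS.values())
    return SCOPE_RISKS[category]["count"] / total


if __name__ == "__main__":
    for name, row in SCOPE_RISKS.items():
        avg = average_impact(row["count"], row["cumulative_weeks"])
        print(f"{name}: {avg} weeks average, {share_of_count(name):.0%} of scope risks")
```

Dividing cumulative impact by count gives the per-risk averages in the last column, and the count share shows how heavily the scope data is weighted toward change risks.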

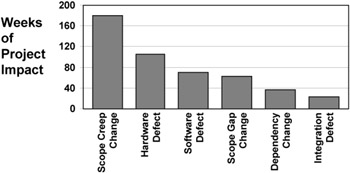

These root causes were further characterized by type, and a Pareto chart of overall impact by type of risk appears in Figure 3-1.

Figure 3-1: Scope risks in the PERIL database.

Change Risks

Change risk represents well over half the scope risks reported in the PERIL database. There are three categories of scope change risks:

- Scope creep: requirements that evolve and mutate as a project runs

- Gaps: specifications or activities added to the project late

- Scope dependencies: inputs or other needs of the project not anticipated at the start of a project

Scope creep is the most serious category and the one in which the majority of the change risks in PERIL fall. Nearly all of these incidents represented unanticipated additional investment of time and money that could have been predicted with clearer scope definition. The average impact on projects that reported scope creep was more than two months of slip. Some of the changes were a result of a shift in the specifications. Others were specifications above and beyond the stated project objective that were added as the project ran. While in some, perhaps even most, of these cases, the changes represented good business decisions, there is no question that the projects would have been shorter, less expensive, and easier to run if the definitions ultimately used had been determined earlier. In some particularly severe cases, the changes in scope delayed the project so much that the product had little or no value. The need was no longer pressing, or it had been met by some other means. The tool that uncovers these problems most effectively is early establishment of a better, clearer, more thorough definition.

Scope creep also comes from within, generally from well-intentioned attempts by some part of the project team to "improve" the deliverable. While internally generated changes may have value, often they do not have enough to warrant the project impact they cause. Whatever the source, meandering definition of scope creates a great deal of scope risk. Scope creep is a universal and pervasive issue for technical projects.

Other project changes in the PERIL database resulted from gaps in the project scope that were discovered late in the project. Most of these risks were due to overlooked requirements—work required for the project objective that went unrecognized until late in the project. In a small number of cases, the project objective was so unlike earlier work that the gaps were probably unavoidable. The additional insights provided in midproject by customers, managers, team members, or other project stakeholders were not available at the start of the project, so the work required was not visible. In most of the cases, however, the gaps came from incomplete analysis and could have been avoided. More thorough scope definition and project work breakdown would have clearly shown the missing and incomplete portions of the project plan.

A third category of change relates to unexpected scope dependencies. (Dependency risks that primarily affect the project timeline, rather than the scope, are included with schedule risks in the database.) Again, there were some changes that no amount of realistic analysis would have uncovered. While the legal requirements and other factors external to the project are generally stable, they may occasionally shift quickly and without warning. In the risk database, however, most of the situations were due to factors that should not have come as complete surprises. Some were due to changes in the infrastructure the project was depending upon, such as a new version of system or application software or hardware upgrades. Some projects in the database were hurt by unplanned delays in access to new software versions or product releases. Investigation and more thorough analysis of the systems, software, and the infrastructure your project requires can uncover many dependency risks.

Defect Risks

Technical projects rely on many complicated things to work as expected. Unfortunately, new things do not always operate as promised or as required. Even normally reliable things may break down or fail to perform as desired in a novel application. Hardware failure was the most common defect risk of this sort in the PERIL database, followed by software problems. In several cases, the root cause was new, untried technology that the project could not use because it lacked functionality or reliability. In other cases, a component created by the project (such as a custom integrated circuit, a board, or a software module) did not work initially and had to be redone. In still other cases, critical purchased components delivered to the project failed to work and required replacement. Nearly all of these risks are visible, at least as possibilities, through adequate analysis and planning.

Some hardware and software functional failures related to quality. In many projects, some components may work but fail to meet a stated standard of performance. Hardware may fail to meet a throughput standard or require too much power or emit excessive electromagnetic interference. Software may not be easy enough to operate or may not work in unusual circumstances. As with other defects, the definition, planning, and analysis of project work helps in anticipating many of these potential quality risks.

The third type of defect risk, after hardware and software problems, occurs above the component level. In many large technical programs, the work is decomposed into smaller, related subprojects that execute in parallel. Successful integration of the outputs of each of the subprojects into a single system deliverable requires not only that each of the components delivered operate as specified but also that the combination of all these parts work as a functioning system. As computer users, we are more familiar with this exposure than we would care to be. When the various software programs we use fail to play nicely together, we see a characteristic "blue screen of death" or the crash of a system or application with notification of some sort of "illegal operation." Integration risk is particularly problematic in projects, as it generally occurs very near the deadline and is rarely simple to diagnose and correct. Again, thorough analysis using disciplines such as software architecture and systems engineering can reveal much of this sort of risk early.

A more complete listing of the scope risks in the PERIL database is included in the Appendix. While uncovering scope risks begins with a review of past problems such as these, each new project you take on will also pose unique scope risks that can be uncovered only as you define deliverables and develop the project work breakdown structure.

Defining Deliverables

Defining deliverables thoroughly is a powerful tool for uncovering potential change-oriented scope risks.

The process for specifying deliverables for a project varies greatly depending on the type and the scale of the project. For small projects, informal methods can work well, but for most projects, adopting a more rigorous approach is where good risk management begins. Defining the deliverables for a project gives the project leader and the team their first indication of the risks in the proposed project. Whatever the process, the goal is to develop specific, written requirements that are clear, unambiguous, and agreed to by all project stakeholders and project contributors.

A good, thorough process for defining project deliverables begins with identifying the people who should participate, including all who must agree with the definition. Much project scope risk arises because key project contributors are not involved with the project early, when initial definition work is done. Some scope problems become visible only late in the project, when these staff members finally join the project team. Whenever it is not possible to work with the specific people who will later be part of the project team, locate and work with people who are available and who can represent each needed perspective and functional area. In establishing this team, strive to include all functions that are essential to the project: call in favors, beg, plead, or do whatever you need to do to get qualified people involved. The definition effort includes all of the core project team, but it rarely ends there. You nearly always need to include others from outside your project who may not be directly involved with the work, from functions such as marketing, sales, and support. Outside your organization, you probably also need input from customers, users, other related project teams, and potential subcontractors. Consider the project over its entire development life cycle. Think about who will be involved with all stages of design, development, manufacturing or assembly, testing, documentation, selling, installation, distribution, support, and other aspects of the work.

Even when the right people are available and involved early in the project definition activities, it is difficult to be thorough. The answers for many questions may not yet be available, and much of your data may be ranges or even guesses. Specifics concerning new methods and technologies add more uncertainty. Three useful techniques for managing scope risk are:

- Using a documented definition process

- Developing a straw-man definition document

- Adopting a rigorous evolutionary methodology

Deliverable Definition Process

Processes for defining deliverables vary depending on the nature of the project. For product development projects, a list of guidelines similar to the one that follows is a typical starting point. By reviewing such a list and documenting both what is known and what is still needed, you build a firm foundation for defining project scope and also begin to capture activities that need to be integrated into the project plan.

Topics for a typical deliverable definition process are:

- Alignment with business strategy (How does this project contribute to stated high-level business objectives?)

- User and customer needs (Has the project team captured the ultimate end user requirements that must be met by the deliverable?)

- Compliance (Has the team identified all relevant regulatory, environmental, and manufacturing requirements, as well as any relevant industry standards?)

- Competition (Has the team identified both current and projected alternatives to the proposed deliverable, including not undertaking the project?)

- Positioning (Is there a clear and compelling benefit-oriented project objective that supports the business case for the project?)

- Decision criteria (Does this project team have an agreed-upon hierarchy of measurable priorities for cost, time, and scope?)

- Delivery (Are logistical requirements understood and manageable? These include, but are not limited to, sales, distribution, installation, sign-off, and support.)

- Sponsorship (Does the management hierarchy collectively support the project, and will it provide timely decisions and ongoing resources?)

- Resources (Does the project have, and will it continue to have, the staffing and funding needed to meet the project goals within the allotted time?)

- Technical risk (Has the team assessed the overall level of risk it is taking? Are technical and other exposures well documented?)

(This list is based on the 1972 SAPPHO Project at the University of Sussex, England.)

While this list is not exhaustive, a thorough examination of each criterion and written documentation of available information provides a firm foundation for defining specific requirements. Assessment of the degree to which each element of the list is adequately understood (on a scale ranging from "Clueless" on one extreme to "Omniscient" on the other) also begins to identify what is missing. The gaps identified in the process may be used to define tasks and activities that will be added to the project plan. While some level of uncertainty will always remain, this sort of analysis makes it clear where the biggest exposures are. It also allows the project team and sponsor to make intelligent choices and decide whether the level of risk is inappropriately high. The last point on this list, technical risk, is most central to the subject at hand. High-level project risk assessment is discussed in some detail later in this chapter.
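One way to make the "Clueless" to "Omniscient" assessment concrete is to score each topic and list the weakest ones as candidate planning activities. The sketch below is only an illustration: the text names just the two endpoints of the scale, so the five-point numbering, the intermediate labels, and the threshold of 3 are all assumptions.

```python
# Hypothetical 0-4 scale. Only the endpoints "Clueless" and "Omniscient"
# come from the text; the middle labels are placeholders.
UNDERSTANDING = {0: "Clueless", 1: "Sketchy", 2: "Partial", 3: "Solid", 4: "Omniscient"}

# Topic names taken from the deliverable definition list.
TOPICS = [
    "Alignment with business strategy",
    "User and customer needs",
    "Compliance",
    "Competition",
    "Positioning",
    "Decision criteria",
    "Delivery",
    "Sponsorship",
    "Resources",
    "Technical risk",
]


def find_gaps(scores, threshold=3):
    """Return (topic, score) pairs below `threshold`, least understood first."""
    missing = [topic for topic in TOPICS if topic not in scores]
    if missing:
        raise ValueError(f"unscored topics: {missing}")
    gaps = [(topic, scores[topic]) for topic in TOPICS if scores[topic] < threshold]
    # sorted() is stable, so ties keep the order of the original list.
    return sorted(gaps, key=lambda pair: pair[1])


if __name__ == "__main__":
    scores = {topic: 4 for topic in TOPICS}
    scores["Compliance"] = 1
    scores["Resources"] = 2
    for topic, score in find_gaps(scores):
        print(f"{topic}: {UNDERSTANDING[score]} -> add investigation work to the plan")
```

Refusing to run with unscored topics is deliberate: an item nobody assessed is itself a gap, and silently skipping it would hide the exposure the checklist is meant to reveal.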

Straw Man Definition Document

Most books on project management prattle on about identifying and documenting all the known project requirements. This is much easier said than done for projects in the real world; it is very hard to get users and stakeholders of technical projects to cooperate with this strategy. When too little about a project is clear, many people see only two options: accept the risks associated with incomplete definition, or abandon the project. Between these, however, lies a third option. By constructing a straw-man definition, instead of simply accepting the lack of data, the project team defines the specific requirements. These requirements may be based on earlier projects, assumptions, guesses, or the team's best understanding of the problem that the project deliverable is supposed to solve. Any definition constructed this way is certain to be inaccurate and incomplete, but formalizing requirements leads to one of two very beneficial results.

The first possibility is that the invented requirements will be accepted and approved, giving the project team a solid basis for planning. Once sign-off has occurred, anything that is not quite right or deemed incomplete can be changed only through a formal project change management process. (Some contracting firms use this to get rich. They win business by quoting fixed fees that are below the cost of delivering all the stated requirements, knowing full well that there will be changes. They then make their profits by charging for the inevitable changes that occur, generating large incremental billings for the project.) Even for projects where the sponsors and project team are in the same organization, the sign-off process gives the project team a great deal of leverage when negotiating changes. (This whole process brings to mind the old riddle: "How do you make a statue of an elephant?" Answer: "You get an enormous chunk of marble and chip off anything that does not look like an elephant.")

The second possible outcome is a flood of criticism, corrections, edits, and "improvements." While most people are intimidated by a blank piece of paper or an open-ended question, everyone seems to enjoy being a critic. Once a straw-man requirements document is created, the project leader can circulate it far and wide as "pretty close, but everything is not exactly right yet." Using such a document to gather comments (and providing big, red pens to get things rolling) is very effective for the project, even if it is humbling to the original authors. In any case, it is better to identify these issues early than to find out what you missed during acceptance testing.

Evolutionary Methodologies

A third approach to scope definition used for software development relies on firm definitions for project scope, but only for the short-term future. Evolutionary (or cyclic) methodologies used for this have enjoyed some popularity since the 1980s and are still widely discussed and applied to development of software applications by small project teams that have ready access to their end users. Rather than defining a system as a whole, these methodologies set out a more general objective and then describe incremental stages, each producing a functional deliverable. The system built at the end of each cycle adds more functionality, and each release brings the project closer to its destination. As the work continues, very specific scope is defined for the next several cycles (each varying from about two to six weeks, depending on the specific methodology), but the deliverables for later cycles are defined only in more general terms. These will be revised and specified later, on the basis of evaluations by users during preceding development cycles and other data collected along the way.

While this can be an effective technique for managing revolutionary projects where fundamental definition is initially not possible, it does carry the risk of institutionalizing scope creep (a significant source of scope risk in the PERIL database) and may even be an invitation to "gold plating." It also starts the project with no certain end or budget, as the number of cycles is indeterminate and, without careful management, can even be perpetual.

Historically, evolutionary methodologies have carried higher costs than other project approaches. Compared with projects that are able to define project deliverables with good precision in the early stages (within the first 10 to 15 percent of the proposed schedule) and then execute using a more traditional "waterfall" life cycle, evolutionary development has been both slower and more expensive. Due to rework and other effort associated with a meandering definition process and the need to deliver to users every cycle and then evaluate their feedback, evolutionary methodologies may triple a project's cost and double its timeline. From a risk standpoint, evolutionary methodologies have a tendency to focus exclusively on scope risk, while accepting potentially unlimited schedule and resource risk. Risk management using an evolutionary approach for technical projects is more incremental, and it requires frequent reevaluation of the current risks, as well as extremely disciplined use of scope change assessment. In managing risk using evolutionary methodologies, it is also prudent to set limits for both time and money, not only for the complete project but also for key checkpoints no more than a few months apart.

Current thinking on evolutionary software development includes a number of methodologies described as agile, adaptive, or lightweight. These methods adopt more robust scope control and incorporate project management practices intended to avoid the "license to hack" nature of earlier evolutionary development models. "Extreme programming" (XP) is a good example of this. XP is intended for use on relatively small software development projects by project teams collocated with their users. It adopts effective project management principles for estimating, scope management, setting acceptance criteria, planning, and project communication. XP puts pressure on the users to determine the overall scope initially, and on this basis the project team determines the effort required for the work. Development cycles of a few weeks are used to implement the scope incrementally, as prioritized by the users, but the quantity of scope (which is carved up into "stories") in each cycle is determined exclusively by the programmers. XP allows revision of scope as the project runs, but only as a zero-sum game—any additions cause something to be bumped out or deferred until later. XP rigorously avoids scope creep in the current cycle.
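The zero-sum rule can be sketched as a small planning function: a cycle has a fixed capacity set by the programmers, and adding a story forces the lowest-priority work out until the cycle fits again. This is an illustration of the idea only, not XP's prescribed procedure; the `Story` fields, the numeric priority convention, and the capacity units are assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Story:
    name: str
    estimate: int  # effort estimate, owned by the programmers
    priority: int  # user-assigned; a lower number means more important


def add_story(planned, new_story, capacity):
    """Add `new_story` to a cycle without exceeding `capacity`.

    Returns (kept, deferred). Lowest-priority stories are deferred first,
    which may include the new story itself.
    """
    if new_story.estimate > capacity:
        raise ValueError("story is larger than a whole cycle; split it first")
    kept = sorted(list(planned) + [new_story], key=lambda s: s.priority)
    deferred = []
    while sum(s.estimate for s in kept) > capacity:
        deferred.append(kept.pop())  # bump the least important remaining story
    return kept, deferred


if __name__ == "__main__":
    cycle = [Story("login", 3, 1), Story("search", 5, 2), Story("reports", 4, 3)]
    kept, deferred = add_story(cycle, Story("export", 4, 2), capacity=12)
    print("kept:", [s.name for s in kept])
    print("deferred:", [s.name for s in deferred])
```

The point of the sketch is that capacity never grows when scope is added; every addition shows up immediately as something deferred, which keeps the cost of a change visible to the users who requested it.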

Even for projects adopting this incremental approach to scope definition, you must outline the overall project objective, as in XP, in order to identify scope risk.

Scope Documentation

However you go about defining scope, the next step is to write it down. Managing scope risk requires a clear scope statement that defines both what you will deliver and what you will not deliver. Without thorough scope definition, you cannot even understand the scope risks, let alone manage them. One common type of inadequate scope definition lists project requirements as "musts" and "wants." While it may be fine to have some flexibility during the earliest project investigation, the longer final decisions are delayed, the more problematic they become. Dragging a list of desirable, "want to have" features well into the development phase of a project is a major scope risk faced by many high-tech projects. Because project scope is not well-defined, project schedules and resource plans will be unclear, and estimates will be inexact.

From a risk management standpoint, the "is/is not" technique is far superior to "musts and wants." The "is" list is equivalent to the "musts," but the "is not" list serves to limit scope. Determining what is not in the project specification is never easy, but if you fail to do it, many of your scope risks will remain hidden behind a moving target. The "is/is not" technique is particularly important for projects that will have a fixed deadline and limited resources in order to establish a constraint for scope that is consistent with the limits on timing and budget. It is nearly always better to define the minimum requirements and deliver them as early as possible than to be aggressive with scope and either deliver late or have to drop promised features late in the project in order to meet the deadline. As you document your project scope, establish boundaries that define what the project will not include, to minimize scope creep.
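A minimal sketch of why the "is not" list matters: with both lists written down, any request can be sorted into one of three bins, and the third bin (mentioned on neither list) is where hidden scope risk lives. The function name and the example features are hypothetical, and real scope statements would need more than exact text matching.

```python
def classify_request(request, is_list, is_not_list):
    """Classify a requested feature against an "is/is not" scope statement."""
    key = request.strip().lower()
    if key in {item.lower() for item in is_list}:
        return "in scope"
    if key in {item.lower() for item in is_not_list}:
        return "out of scope"
    # Neither list mentions it: this needs an explicit decision before work starts.
    return "undecided"


if __name__ == "__main__":
    # Hypothetical scope statement for illustration only.
    is_list = ["Export to CSV", "Single sign-on"]
    is_not_list = ["Mobile client", "Offline mode"]
    for request in ["single sign-on", "Offline mode", "Audit log"]:
        print(request, "->", classify_request(request, is_list, is_not_list))
```

With a "musts and wants" list, the second and third bins collapse into one, so there is no quick way to tell a deliberate exclusion from something nobody has considered yet.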

There are dozens of formats for a document that defines scope. In product development, it may be a reference specification or a product data sheet. In a custom solution project (and for many other types of projects), it may be a key portion of the project proposal. For information technology projects, it may be part of the project charter document. In other types of projects, it may be included in a statement of work or a plan of record. For agile software methodologies, it may be a brief summary on a Web page and a collection of index cards tacked to a wall or forms taped to a whiteboard. Whatever it is called, and whatever larger document it is part of, an effective definition for project deliverables must be in writing. Specific information typically includes:

- A description of the project (what are you doing?)

- Project purpose (why are you doing it?)

- Completion criteria (project end, acceptance criteria)

- Planned project start

- Intended customer(s) and/or users

- What the project will and will not include ("is/is not")

- Dependencies (both internal and external)

- Staffing requirements (in terms of skills and experience)

- High-level risks

- Cost (rough order-of-magnitude, at least)

- Technology required

- Hardware, software, and other infrastructure required

- Detailed requirements, outlining functionality, usability, reliability, performance, supportability, and any other significant issues

- Other data customary and appropriate to your project

High-Level Risk Assessment Tools

Technical project risk assessment is part of the earliest phases of project work. (The last item of the deliverable definition process, technical risk, states this need.) While there is usually very little concrete information on which to base an early project risk assessment, there are several tools that do provide useful insight into project risk even in the beginning stages. These tools are:

- Risk framework

- Risk complexity index

- Risk assessment grid

The first two are useful in any project that creates a tangible, physical deliverable through technical development processes. The third is appropriate for projects that have less tangible results, such as software modules, new processes, commercial applications, network architectures, or Internet service offerings. These tools all start by asking the same question: "How much experience do you have with the work the project will require?" How the tools use this information differs, and each builds on the assessment of technical risk in different ways. These tools are not mutually exclusive; depending on the type of project, one or more of them may help in assessing risk.

While any of these tools may be used at the start of a project to get an indication of project risk, none of the three is very precise. The purpose of each is to provide information about the relative risk of a new project. Each of these three techniques does have the advantage of being quick, and each can provide an assessment of project risk very early in a new project. None of the three is foolproof, but the results provide as good a basis as you are likely to have for deciding whether to go further with investigation and other project work. (You may also use these three tools to reassess project risk later in the project. Chapter 9 discusses reusing these three tools, as well as several additional project risk assessment methods that require planning details, to refine project risk assessment.)

Risk Framework

This is the simplest of the three high-level techniques. To assess risk, consider the following three project factors:

- Technology (the work)

- Marketing (the user)

- Manufacturing (the production and delivery)

For each of these factors, assess the amount of change required by the project. For technology, does the project use only well-understood methods and skills, or will new skills be required (or developed)? For marketing, will the deliverable be used by someone (or by a class of users) you know well, or does this project address a need for someone unknown to you? For manufacturing, consider what is required to provide the intended end user with your project deliverable: are there any unresolved or changing manufacturing or delivery channel issues?

For each factor, the assessment is binary: change is either trivial (small) or significant (large). Assess conservatively; if the change required seems somewhere between these choices, treat it as significant.

Nearly all projects will require significant change to at least one of these three factors. Projects representing no (or very little) change may not be worth doing, and they may not even meet the requirement for a project that the effort be unique. Some projects, however, may require large changes in two or even all three factors. For technical projects, changes correlate with risk. The more change inherent in a project, and the more different types of change, the higher the risk.

In general, if your project has significant changes in only one factor, it probably has an acceptable, manageable level of risk. Evolutionary-type projects, where existing products or solutions are upgraded, leveraged, or improved, often fall into this category. If your project changes two factors simultaneously, it has higher relative risk, and the management decision to proceed, even into further investigation and planning, ought to reflect this. Projects that develop new platforms intended as the foundation of future project work frequently depend upon new methods for both technical development and manufacturing. For projects in this category, the higher risks must be balanced against potential business benefits.

If your project requires large shifts in all three categories, the risks are greatest of all. Many, if not most, projects in this risk category are unsuccessful. Projects that represent this much change are revolutionary and are justified by the very substantial financial or other benefits that will result from successful completion. Often the risks seem so great—or so unknowable—that a truly revolutionary project requires the backing of a very high-level sponsor with a vision.
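The framework reduces to counting how many of the three factors require significant change. The sketch below encodes that count and the relative risk level the text attaches to it; the function name and the wording of the labels are illustrative, and the result is a relative indication rather than a measurement.

```python
FACTORS = ("technology", "marketing", "manufacturing")

# Labels paraphrase the guidance in the text for each count of changed factors.
RISK_BY_CHANGE_COUNT = {
    0: "minimal change; may not be worth doing as a project",
    1: "acceptable, manageable risk",
    2: "higher relative risk; weigh against business benefits",
    3: "greatest risk; needs very substantial benefits and a strong sponsor",
}


def framework_risk(significant_change):
    """Map binary change assessments for the three factors to a risk level.

    `significant_change` maps each factor name to True (significant change)
    or False (trivial change). When in doubt, assess a factor as True.
    """
    missing = [factor for factor in FACTORS if factor not in significant_change]
    if missing:
        raise ValueError(f"unassessed factors: {missing}")
    count = sum(1 for factor in FACTORS if significant_change[factor])
    return count, RISK_BY_CHANGE_COUNT[count]


if __name__ == "__main__":
    # A new-platform project: new development methods and new manufacturing.
    platform = {"technology": True, "marketing": False, "manufacturing": True}
    print(framework_risk(platform))
```

Because the assessment is binary and conservative, borderline factors should be entered as `True`, which pushes the result toward the higher-risk categories.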

A commonly heard story around Hewlett-Packard from the early 1970s involves a proposed project pitched to Bill Hewlett, the more technical of the two HP founders. The team brought a mockup of a handheld device capable of scientific calculations with ten significant digits of accuracy. The model was made out of wood, but it had all the buttons labeled and was weighted to feel like the completed device. Bill Hewlett examined the functions and display, lifted the device, slipped it in his shirt pocket, and smiled. The HP-35 calculator represented massive change in all three factors; the market was unknown, manufacturing for it was unlike anything HP had done before, and it was even debatable whether the electronics could be developed on the small number of chips that could fit in the tiny device. The HP-35 was developed primarily because Bill Hewlett wanted one. It was also a hugely successful product, selling more units in a month than had been forecasted for the entire year and yielding a spectacular profit. The HP-35 also changed the direction of the calculator market completely, and it destroyed the market for mechanical slide rules and gear-driven computing machines forever.

This story is known because the project was successful. Similar stories surround many other revolutionary products, like the Apple Macintosh, the Yahoo search engine, and home video-cassette recorders. Stories about the risky projects that fail (or fall far short of their objectives) are harder to uncover; most people and companies would prefer to forget them. The percentage of revolutionary ideas that crash and burn is usually estimated to be at least 90 percent. The higher risks of such projects should always be justified by very substantial benefits and a strong, clear vision.

Risk Complexity Index

A second technique for assessing risk on projects that develop technical products is the risk complexity index. As in the risk framework tool, technology is the starting point. This tool looks more deeply at the technology being employed, separating it into three parts and assigning to each an assessment of difficulty. In addition to the technical complexity, the index also looks at another source of project risk: the complexity arising from larger project teams, or scale. The following formula combines these four factors:

Index = (Technology + Architecture + System) × Scale

For the index, Technology is defined as new, custom development unique to this project. Architecture refers to the high-level functional components and any external interfaces, and System is the internal software and hardware that will be used in the product. Assess each of these three against your experience and capabilities, assigning each a value from 0 to 5:

- 0—Only existing technology required

- 1—Minor extensions to existing technology needed in a few areas

- 2—Significant extensions to existing technology needed in a few areas

- 3—Almost certainly possible, but innovation needed in some areas

- 4—Probably feasible, but innovation required in many areas

- 5—Completely new, technological feasibility in doubt

The three technology factors generally correlate, but some variation is common. Add the three values to get a sum between 0 and 15.

For Scale, assign a value based on the number of people (including all full-time contributors, both internal and external) expected on the project:

- 0.8—Up to twelve people

- 2.4—Thirteen to forty people

- 4.3—Forty-one to one hundred people

- 6.6—More than one hundred people

The calculation for the index yields a result between 0 and 99. Projects with an index below 20 are generally low-risk projects with durations of well under a year. Projects assessed between 20 and 40 are medium risk. These projects are more likely to get into trouble and often take a year or longer. Most projects with an index above 40 finish long past their stated deadline, if they complete at all.
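The index arithmetic described above can be sketched in a few lines of Python. This is a minimal illustration, not a standard tool: the function names (`scale_factor`, `risk_complexity_index`, `risk_band`) are hypothetical, and the treatment of an index of exactly 20 or 40 as medium risk is an assumption, since the text gives only approximate bands.

```python
def scale_factor(people: int) -> float:
    """Return the Scale value for a head count, using the bands listed above."""
    if people < 1:
        raise ValueError("people must be at least 1")
    if people <= 12:
        return 0.8
    if people <= 40:
        return 2.4
    if people <= 100:
        return 4.3
    return 6.6


def risk_complexity_index(technology: int, architecture: int,
                          system: int, people: int) -> float:
    """Index = (Technology + Architecture + System) x Scale."""
    factors = {"technology": technology,
               "architecture": architecture,
               "system": system}
    for name, value in factors.items():
        if not 0 <= value <= 5:
            raise ValueError(f"{name} must be between 0 and 5")
    return (technology + architecture + system) * scale_factor(people)


def risk_band(index: float) -> str:
    """Map an index to the low / medium / high bands described in the text.

    Assumption: the boundary values 20 and 40 are counted as medium.
    """
    if index < 20:
        return "low"
    if index <= 40:
        return "medium"
    return "high"


# Example: some innovation needed (3), modest extensions elsewhere (2, 2),
# and a team of 25 people.
index = risk_complexity_index(3, 2, 2, people=25)
print(round(index, 1), risk_band(index))  # prints: 16.8 low
```

Note how strongly team size drives the result: the same technical scores with a team of 60 give 7 × 4.3, or about 30, which moves the project into the medium band.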

Risk Assessment Grid

The first two high-level risk tools are appropriate for hardware deliverables. Technical projects with intangible deliverables may not easily fit these models, so the risk assessment grid is a better approach to use for early risk assessment on these projects.

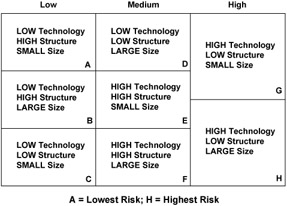

This tool examines three project factors, similar to the risk framework technique. Assessment here also provides two choices for each factor, and technology is again the first. The other factors are different, and here the three factors carry different weights. The factors, in order of priority, are:

- Technology

- Structure

- Size

The highest weight factor, Technology, is based on required change, and it is either Low or High, depending on whether the required technology is well known to the project team and whether it is well established for uses similar to the current project.

The second factor, Structure, is also assessed as either Low or High, on the basis of factors such as solid formal specifications, project sponsorship, and organizational practices appropriate to the project. Structure is Low when there are significant unknowns in staffing, responsibilities, infrastructure issues, objectives, or decision processes. Good up-front definition indicates high structure.

The third factor, Size, is similar to the Scale factor in the risk complexity index. A project is considered either Large or Small. For this tool, size is not an absolute assessment; it is measured relative to the size of teams that the project leader has successfully led in the past. A team that is even 20 percent larger than any the project leader has successfully led should be considered Large. Other considerations in assessing size are the expected length of the project, the overall budget for the project, and the number of separate locations where project work will be performed.

After you have assessed each of the three factors, the project will fall into one of the sections of the grid, A through H (see Figure 3-2). Projects in the right column are most risky; those to the left are more easily managed.

Figure 3-2: Risk assessment grid.

This technique has been used to assess risk on a wide variety of project types. A consulting team in England used it very effectively by making it a central part of their decision process when considering whether to respond to a Request for Proposal (RFP). After an initial review of the RFP, they used the risk assessment grid to decide whether to respond, to "No Bid," or to develop and counterpropose a somewhat different solution.

Beyond risk assessment, these tools may also guide early project risk management, indicating ways to lower project risk by using alternative technologies, making changes to reduce staffing, decomposing longer projects into a sequence of shorter projects with less aggressive goals, or improving the proposed structure. Use of these and other tools to manage project risk is the topic of Chapter 10.

Setting Limits

While many scope risks come from specifics of the deliverable and the overall technology, scope risk also arises from failure to establish firm, early limits for the project.

When running workshops on risk management, I demonstrate this aspect of scope risk using an exercise that begins by getting out a single U.S. one-dollar bill. After showing it to the group, I establish two rules:

- The dollar bill will go to the highest bidder, who will pay the amount bid. All bids must be for a real amount—no fractional cents. The first bid must be at least a penny, and each succeeding bid must be higher than earlier bids. (This is the same as in any auction.)

- The second-highest bidder also pays the amount he or she bid (the bid just prior to the winning bid) but gets nothing in return. (This is unlike a normal auction.)

As the auctioneer, I start by asking whether anyone wants to buy the dollar for one cent. Following the first bid, I solicit a second low bid: "Does anyone think the dollar is worth five cents?" After two low bids are made, the auction is off and running. The bidding is allowed to proceed to (and nearly always past) one dollar, until it ends. If one dollar is bid and things slow down, a reminder to the person who has the next highest bid that he or she will spend almost one dollar to buy nothing will usually get things moving again. The bidding ends when no new bids are made. The two final bids nearly always total well over two dollars.

By now everything is quite exciting. Someone has bought a dollar for more than a dollar. A second person has bought nothing but paid nearly as much. To calm things down, I put the dollar away, explain that this is a lesson in risk management (not a robbery), and apologize to people who seem upset.

So, what does the dollar auction have to do with risk management? This game's outcome is very similar to what happens when a project that hits its deadline (or budget) creeps past and just keeps going. "But we are so close. It's almost done; we can't stop now."

People point out that the dollar auction is not fair and is unrealistic. They are only half right; while it is not fair, it does approximate quite a few common situations in real life. It effectively models any case where people have, or think they have, too much invested in an undertaking to quit. The dollar auction was originally developed in the 1950s as a part of game theory, to study decision making in situations similar to the scenario of the game. In social settings, the inventors of the game found that the sum of the two highest bids in the auction generally rose to between three and five dollars.

Decisions to continue or to quit in situations that involve spending more time, more money, or both are common: Do you hold or hang up in a telephone queue for airlines, catalog merchants, or help desks? Continue to wait for a bus or hail a taxi? Repair an old car or invest in a new one? Continue or quit at a slot machine? Hold or sell a falling stock investment? Even submitting a competitive proposal where only the winner will get any reward is a variant of the dollar auction.

Dollar auction losses can be minimized by anticipating the possibility of an uncompensated investment, setting limits in advance, and then enforcing them. Rationally, the dollar auction has an expected return of half a dollar (the total return, one dollar, spread between the two active participants), so a sensible limit is to bid no more than that amount. If everyone participating adopted this rule, the auctioneer would always lose. For projects, clearly defining limits and then monitoring intermediate results will provide early indication that you may be in trouble. Project metrics, such as earned value (described in Chapter 9), are very useful in detecting project overrun early enough to abort or modify impossible or unjustified projects, minimizing unproductive investments. Defining project scope with sufficient detail and limits is an essential foundation for risk management and project planning.
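The payoff arithmetic of the dollar auction rules can be made concrete with a short sketch. The function name `dollar_auction_outcome` and its dictionary keys are hypothetical, chosen only for illustration; amounts are in whole cents, matching the rule that bids cannot include fractional cents.

```python
def dollar_auction_outcome(high_bid_cents: int, second_bid_cents: int,
                           prize_cents: int = 100) -> dict:
    """Net result, in cents, for each party under the two auction rules.

    The highest bidder pays the bid and receives the prize; the
    second-highest bidder pays the bid and receives nothing.
    """
    if not 0 < second_bid_cents < high_bid_cents:
        raise ValueError("bids must be positive, with the high bid larger")
    return {
        "winner_net": prize_cents - high_bid_cents,
        "runner_up_net": -second_bid_cents,
        "auctioneer_net": high_bid_cents + second_bid_cents - prize_cents,
    }


# Bidding that creeps past the value of the prize: everyone but the
# auctioneer loses.
print(dollar_auction_outcome(105, 100))

# Both bidders hold to a 50-cent limit set in advance: the auctioneer
# collects less than the dollar is worth.
print(dollar_auction_outcome(50, 49))
```

In the first case the winner is down 5 cents, the runner-up is down a full dollar, and the auctioneer is ahead by $1.05. In the second case the two bids total 99 cents, so the auctioneer loses a cent.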

Work Breakdown Structure (WBS)

While scope definition reveals some risks, scope planning digs deeper into the project and uncovers even more. Product definition documents, scope statements, and other written materials provide the basis for decomposing project work into increasingly finer detail so that it can be understood, delegated, estimated, and tracked. The process used to do this, creating the project work breakdown structure (WBS), also identifies potential defect risks.

One common approach to developing a WBS starts at the scope or objective statement and proceeds to carve the project into smaller parts, working "top-down" from the whole project concept. Decomposition of work that is well understood is straightforward and quickly done. Project risk becomes visible whenever it is confusing or difficult to decompose project work into smaller, more manageable pieces. The most common starting point for the process is the project deliverable or deliverables, which is why the WBS process is considered part of scope management in the PMBOK Guide. Developing a project WBS often begins by breaking the expected project results into components, features, or specifications that represent subsets of major deliverables for the overall project. There are other possible organizing principles for decomposing project work:

- By organizational function (marketing, R&D, manufacturing)

- By discipline (hardware, software, quality, support)

- By skill set (programming, accounting, assembly)

- By geography (Stuttgart, Bangalore, Boise, Taipei)

- By life-cycle phase (investigation, design, development, test)

If any part of the project resists breakdown using these ideas, that portion of the project is not well understood, and it is inherently risky. As with many aspects of project management, there is significant variation in how the WBS concept is applied. The PMBOK Guide takes a hard line, insisting that a project WBS be deliverable-oriented, and suggests an alphabet soup of acronyms for breakdowns of other types. However, the examples offered in the PMBOK Guide, and eleven more in the PMI Practice Standard for Work Breakdown Structures (2001), are not organized exclusively on the basis of project deliverables. Most are project phase-oriented, or at least partially organized by discipline or skill set. In practice, it is very difficult to include all the project work in a strictly deliverable-oriented WBS.

From a risk management standpoint, any name you choose and any basis for organizing the work that helps you decompose your project thoroughly into more understandable pieces will provide the foundation you need.

Work Packages

The ultimate goal of the WBS process is to describe the entire project in much smaller pieces, often called work packages. The WBS is typically developed by breaking the overall project deliverable into major subsets of work and then continuing the process of decomposition down through multiple levels into a hierarchy, where each portion of the project is described at the lowest level by work packages of modest size. However your WBS may be organized at higher levels, each lowest-level work package must be deliverable-oriented, having a clearly defined output. General guidelines for the size of the work represented by the work packages at the lowest level of the WBS are usually in terms of duration (between two and twenty workdays) or effort (roughly eighty person-hours or less). While guidelines such as these are common, WBS standards for projects vary widely. These guidelines illustrate the connections among scope, schedule, and resource risk and foreshadow the discussions of estimating in Chapters 4 and 5. Project work broken down to this level of detail gives the project leader and team the information they need to understand, estimate, schedule, and monitor project work. It also provides a powerful tool for identifying the parts of the project most likely to cause trouble.

The terminology used for the work at the lowest level also varies a good deal for projects of various types. It is common practice to refer to the lowest-level work package as an "activity" or "task," but project methodologies adopt many other names. The extreme programming methodology has adopted the term "story" for the work packages that constitute project work in each development cycle. What you call the results of the decomposition process does not matter much (though inconsistency between related projects can increase communication risks); what matters from a risk management standpoint is that the work be defined with sufficient detail that it is clear what you must do to complete it. Any portion of a project that you do not understand well enough to break into small, clearly defined work packages is risky. Whenever a portion of your project resists logical decomposition, resulting in work at the lowest level of the WBS that is likely to last longer than a month or will probably require more than eighty hours of effort, you lack an adequate understanding of that part of the project. Note such work as a project risk.
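The size guidelines above lend themselves to a simple automated check. The following is a minimal sketch, assuming each lowest-level work package has already been given rough duration and effort estimates; the `WorkPackage` class, the field names, and the sample data are all hypothetical.

```python
from dataclasses import dataclass

# Thresholds taken from the guidelines in the text: about a month
# (twenty workdays) of duration or eighty hours of effort.
MAX_WORKDAYS = 20
MAX_EFFORT_HOURS = 80


@dataclass
class WorkPackage:
    name: str
    workdays: float       # estimated duration in workdays
    effort_hours: float   # estimated effort in person-hours


def oversized_packages(packages: list[WorkPackage]) -> list[str]:
    """Return names of work packages that exceed either size guideline."""
    return [
        p.name
        for p in packages
        if p.workdays > MAX_WORKDAYS or p.effort_hours > MAX_EFFORT_HOURS
    ]


# Illustrative data only.
packages = [
    WorkPackage("Draft user guide", workdays=10, effort_hours=60),
    WorkPackage("Convert database", workdays=35, effort_hours=200),
    WorkPackage("Integrate vendor module", workdays=15, effort_hours=120),
]
print(oversized_packages(packages))
# prints: ['Convert database', 'Integrate vendor module']
```

Each name the check returns is a candidate for further decomposition or, if it cannot be broken down, an entry for the project risk list.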

Bottom Up

Work breakdown can also be approached from the other direction, "bottom-up." Instead of starting at the top and carving the project into pieces, some teams prefer to brainstorm a list of required activities and organize it into a WBS after the initial list is developed. Risky portions of the project will emerge from this process, also. To develop the WBS, the identified activities that are similar are clustered, using affinity groupings or some other method to aggregate them. The groupings are then organized further, building up a hierarchy similar to that in the top-down method. Examination of the resulting WBS often reveals gaps—parts of the project for which there are no defined activities—and (again) a few relatively large, hard-to-decompose pieces at the lowest level of the WBS. Note any gaps in the WBS that you are unable to fill in and any work you cannot sufficiently decompose as project risks.

Aggregation

The principle of "aggregation" in a WBS provides one method for detecting missing work, as the defined work at each level of the hierarchical decomposition must plausibly include everything needed at the next higher level. If the work you identified in decomposing a higher-level work package does not represent a complete "to do" list for the higher level, your WBS is incomplete. You need to describe the missing work and add it to the WBS. All the work in the WBS that you cannot describe well contributes to your growing accumulation of identified risks.

Your initial WBS will seldom be complete. Another method for identifying missing activities is to take all the activities at the lowest level and reorganize them using a different method at the first level (for example, convert from a life-cycle model to a functional view). The resulting WBS often reveals holes and gaps, identifying additional work and potential scope risks.

If you are asked to dig a hole in the ground, the work is sometimes easy. A typical approach is to remove the soil, a small amount at a time, until you have cleared out the size hole you need. If there is nothing in the volume to be cleared except soft, moist earth, a good shovel and some effort will get the job done quickly. However, anyone who digs holes regularly knows that it is rarely that easy. The place where you want the hole may have roots growing through it, or, even worse, it may be filled with rocks. Small rocks and roots may not be much trouble for a good shovel, but they will slow you down (just as small risks do in projects). Larger tree roots may require additional technology: an ax, or perhaps a saw. Rocks, depending on the size, may require the assistance of others, levers, power excavation equipment, a hammer and chisel, or even explosives. These obstacles that cannot be broken apart with a shovel are problematic, time-consuming, and potentially expensive. In some cases, you may decide to move the location of the hole or give up completely after assessing the situation.

Like the obstacles hidden beneath the ground, parts of a project that resist easy decomposition are not visible until you systematically seek them. The WBS development process provides a tool for separating the parts of the project that you understand well from those that you do not. As with the large, unruly objects in the hole, you may be able to break activities down using new approaches, technologies, or resources. Before proceeding with a project filled with such challenges, you also must determine whether the associated costs and other consequences are justified.

Ownership

There are many reasons why some project work is difficult to break into smaller parts, but the root cause is often a lack of experience with the work required. While this is a very common sort of risk discovered in developing a WBS, it is not the only one. A key objective in completing the project WBS is the delegation of each lowest-level work package (or whatever you may choose to call it) to someone who will take responsibility for the completion of that part of the project. Delegation and ownership are well established in management theory as motivators, and they also contribute to team development and broader project understanding.

Delegation is most effective when responsibilities are assumed by team members voluntarily. It is fairly common on projects to allow people to assume ownership of project activities in the WBS by signing up for them, at least on the first pass. While there is generally some conflict over activities that more than one person wants, sorting this out by balancing the workload, selecting the more experienced person, or using some other logical decision process is rarely difficult. The risk discovery opportunity here is when the opposite occurs—when no one wants to be the owner.

Activities where no one volunteers to be responsible are risky, and you need to probe to find out why. There are a number of common root causes, including the one discussed before: no one understands the work very well. It may be that no one currently on the project has developed key skills that the work requires or that the work is technically so uncertain that no one believes it can be done at all. It could even be the case that the work is feasible but that no one believes that it can be completed in the "roughly two weeks" expected for activities defined at the lowest level of the WBS. In other cases, the description of deliverables may be so fuzzy or unclear that no one wants to be involved.

There are many other possible reasons, and these are also risks. Of these, availability is usually the most common. If everyone on the project is already working beyond full capacity on other work and other projects, no one will volunteer.

Another possible cause might be that the activity requires working with people that no one wants to work with. If the required working relationships are likely to be difficult or unpleasant, no one will volunteer, and successful completion of the work is uncertain. Some activities may depend on outside support or require external inputs that the project team is skeptical about. Few people willingly assume responsibility for work that is likely to fail due to causes beyond their control.

In addition, the work itself might be the problem. Even easy work can be risky, if people see it as thankless or unnecessary. All projects have at least some required work that no one likes to do. It may involve documentation or some other dull, routine part of the work. If it is done successfully, no one notices; this is simply expected. If something goes wrong, though, there is a lot of attention. The activity owner has managed to turn an easy part of the project into a disaster, and he or she will at least get yelled at. Most people avoid these activities.

Another situation is the "unnecessary" activity. Projects are full of these, too, at least from the perspective of the team. Lifecycle, phase gate, and project methodologies place requirements on projects that seem to be (and, in many cases, may actually be) unnecessary overhead. Other project work may be scheduled primarily because it is part of a planning template or because "that's the way we always do it." If the work is actually not needed, good project managers seek to eliminate it.

To the project risk list, add clear descriptions of each risk identified while developing the WBS, including your best understanding of the root cause for each. These risks may emerge from difficulties in developing the WBS to an appropriate level of detail or in finding willing owners for the lowest-level activities. A typical risk listed might be: "The project requires conversion of an existing database from Sybase to Oracle, and no one on the project staff has relevant experience."

WBS Size

Project risk correlates with size; when projects get too large, risk becomes overwhelming. Scope risk rises with complexity, and one measure of complexity is the size of the WBS. Once your project work has been decomposed into work packages that are sufficiently detailed (for example, with an average duration of about two weeks), count up the number at the lowest levels. When the number exceeds about 200, project risk rises very rapidly.
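Counting lowest-level work packages is easy to automate once the WBS is captured as a hierarchy. The sketch below assumes the WBS is stored as nested dictionaries in which an empty dictionary marks a lowest-level work package; that representation, the function names, and the sample WBS are assumptions made for illustration.

```python
# Threshold from the text: risk rises rapidly above about 200 work packages.
WBS_SIZE_THRESHOLD = 200


def count_work_packages(node: dict) -> int:
    """Count lowest-level work packages in a nested-dict WBS.

    Each key is a WBS element; its value is a dict of child elements.
    An empty dict is treated as a single lowest-level work package.
    """
    if not node:
        return 1
    return sum(count_work_packages(child) for child in node.values())


def wbs_is_too_large(wbs: dict, threshold: int = WBS_SIZE_THRESHOLD) -> bool:
    """True when the number of work packages exceeds the threshold."""
    return count_work_packages(wbs) > threshold


# Illustrative WBS only.
wbs = {
    "Design": {"Write specification": {}, "Review specification": {}},
    "Development": {"Build prototype": {}},
    "Test": {"System test": {}, "Acceptance test": {}},
}
print(count_work_packages(wbs), wbs_is_too_large(wbs))  # prints: 5 False
```

A count well above the threshold suggests the effort may be better managed as a sequence of shorter projects or as a program.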

The more separate bits of work that a single project leader is responsible for, the more likely it becomes that he or she will miss something crucial to the project. As the volume of work and project complexity expands, the tools and practices of basic project management, as applied by a single project leader, become more and more inadequate.

At high levels of complexity, the overall effort is best managed in one of two ways: as a series of shorter projects in sequence that deliver what is required in stages or as a program made up of a collection of smaller projects. In both cases, the process of decomposing the total project into sequential or parallel parts is done using a decomposition very like a WBS. In the case of sequential execution, the process is essentially similar to the evolutionary methodologies discussed previously in this chapter. For programs, the resulting decomposition creates a number of projects, each of which will be managed by a separate project leader using project management principles, and the overall effort will be the responsibility of a program manager. Project risk is managed by the project leaders, and overall program risk is the responsibility of the program manager.

When excessively lengthy or complex projects are left as the responsibility of a single project leader to plan, manage risk, and execute, the probability of successful completion is low.

Other Risks

Not all scope risks are strictly within the practice of project management. Examples are market risk and confidentiality risk. These risks are related, and, although they may not show up in all projects, they are often present at least to some degree. Ignoring these risks is inappropriate and dangerous.

A business balance sheet has two sides: assets and liabilities. Project management primarily focuses on "liabilities," the expense and execution side, using measures related to "scope/schedule/resources." Market and confidentiality risks tend to be on the asset, or value, side of the business ledger, where project techniques and teams are involved indirectly, if at all. Project management is primarily about delivering what you have been asked to deliver, and this does not always equate to "success" in the marketplace. While it is obvious that "on time, on budget, within scope" does not necessarily make a project an unqualified success, managing these aspects alone is a big job, and in a perfect world it is really all the project team ought to be held responsible for. The primary owners for market and confidentiality risks may not even be active participants in the project, although trends in many kinds of technical projects are toward assigning projects to cross-functional business teams, making these risks more central to the project. In any case, these risks are real, and they relate to scope. Unless identified and managed, they too can lead to project failure.

Market Risk

This first type of risk is mostly about getting something in the definition wrong. Market risk relates to features, to timing, to cost, or to almost any facet of the deliverable. It can happen when long development efforts are initiated, during which time the problem to be solved changes, goes away, or is addressed by an unexpected new technology. It can happen because a satisfactory deliverable is brought to market a week after an essentially identical offering from a competitor. It can even result when a project produces exactly what was requested by a sponsor or economic buyer but that product is rejected by the intended end user. Sometimes the people responsible for promoting and selling a good product do not (or cannot) follow through. Many paths can lead to a result that meets the specifications and is delivered on time and on budget, yet is never deployed or fails to achieve the expectations set at the beginning of the project.

The longer and the more complicated the project is, the greater the market risk tends to be. Project leaders contribute to the management of these risks through active, continuing participation in any market research and customer interaction and by frequently communicating with (ideally, without annoying) all the people surrounding the project who will be involved with deployment of the deliverable.

Some of the techniques already discussed may help manage market risk. A thorough process for deliverable definition probes for many of the sources of this sort of risk, and the high-level risk tools outlined previously also provide opportunities to understand the environment surrounding the project.

In addition, ongoing contact with the intended users, through interviews, surveys, market research, and other techniques, will help to uncover problems and shifts in the assumptions the project is based upon. Agile methodologies employ ongoing user involvement in the definition of short, sequential project cycles, minimizing the "wrong" deliverable risk greatly for small project teams colocated with their users.

If the project is developing a product that will compete with similar offerings from competitors, ongoing competitive analysis to predict what others are planning can be useful (but, of course, competitors will not make this straightforward or easy—confidentiality risks are addressed next). Responsibility for this work may be fully within the project, but if it is not, the project team must still review what is learned and, if necessary, encourage the marketing staff (or other stakeholders) to keep the information up-to-date.

The project team should always probe beyond the specific requirements (the stated need) to understand where the specifications come from (the actual need). Understanding what is actually needed is generally much more important than simply understanding what was requested. Early use of models, prototypes, mockups, and other simulations of the deliverable will help you find out whether the requested specifications are in fact likely to provide what is needed. Short cycles of development with periodic releases of meaningful functionality (and value) throughout the project also minimize this category of risk. Standards, testing requirements, and acceptance criteria need to be established in clear, specific terms and periodically reviewed with those who will certify the deliverable.

Confidentiality Risk

The second type of risk that is generally not exclusively in the hands of the project team relates to secrecy. While some projects are done in an open and relatively unconstrained environment, confidentiality is crucial to many high-tech product development projects, particularly long ones. If the information on what is under way is made public, the value of the project might decrease or even disappear. Better-funded competitors with more staff might learn what you are working on and build it first, making your work irrelevant. Of course, managing this risk well potentially increases the market risk, as you will be less free to gather information from end users and the market. The use of prototypes, models, mock-ups, or even detailed descriptions might provide data to competitors that you want to keep in the dark. On some technical projects, the need for secrecy may also be a specific contractual obligation, as with government projects. Even if the product is not a secret, you may be using techniques or methodologies that are proprietary competitive advantages, and loss of this sort of intellectual property also represents a confidentiality risk.

Within the project team, several techniques may help. Some projects work on a "need to know" basis and provide to team members only the information required to do their current work. While this usually hurts teamwork and motivation, and may even lead to substandard results (people will optimize only for what they know, not for the overall project), it can effectively protect confidential information.

Emphasizing the importance of confidentiality also helps. Periodically reinforce the need for confidentiality with all team members, and especially with contractors and other outsiders. Be specific on the requirements for confidentiality in contract terms when you bring in outside help, and discuss the requirements with them to ensure that the terms are clearly understood. Any external market research or customer contact also requires effective non-disclosure agreements, again with enough discussion to make the need for secrecy clear.

In addition to all of this, project documents and other communication must be appropriately marked "confidential" (or according to the requirements set by your organization). Restrict distribution of project information, particularly electronic versions, to people who need it and who understand, and agree with, the reasons for secrecy. Protect information stored on computer networks or the Internet with passwords that are changed often enough to limit inappropriate access. Use legal protections such as copyrights and patents as appropriate to establish ownership of intellectual property. (Timing of patents can be tricky. On the one hand, they protect your work. On the other hand, they are public and may reveal to competitors what you are working on.)

While the confidentiality risks are partially the responsibility of the project team, many lapses are well out of their control. Managers, sponsors, marketing staffs, and favorite customers are the sources for many leaks. Project management tools address principally execution of the work, not secrecy. Effective project management relies heavily on good, frequent communication, so projects with heavy confidentiality requirements can be very difficult and inefficient to lead. Managing confidentiality risk requires discipline, frequent reminders of the need for secrecy to all involved (especially those involved indirectly), limits on the number of people involved, and more than a little luck.

Document the Risks

As the requirements, scope definition documents, WBS, and other project data start to take shape, you can begin to develop a list of specific issues, concerns, and risks related to the scope and deliverables of the project. When the definitions are completed, review the risk list, and inspect it for missing or incomplete information. If some portion of the project scope seems likely to change, note this in the list, as well. Typical scope risks involve performance, reliability, untested methods or technology, or combinations of deliverable requirements that are beyond your experience base. Make very clear why each item listed is an exposure for the project. In the risk description, cite any relevant specifications and measures that go beyond those successfully achieved in the past, using explicitly quantified criteria. An example might be, "The system delivered must execute at double the fastest speed achieved in the prior generation."

Sources of specific scope risks include:

- Requirements that seem likely to change

- Mandatory use of new technology

- Requirements to invent or discover new capabilities

- Unfamiliar or untried development tools or methods

- Extreme reliability or quality requirements

- External sourcing for a key subcomponent or tool

- Incomplete or poorly defined acceptance tests or criteria

- Technical complexity

- Conflicting or inconsistent specifications

- Incomplete product definition

- Very large WBS

Using the processes for scope planning and definition will reveal many specific technical and other potential risks. List these risks for your project, with information about causes and consequences. The list of risks will expand throughout the project planning process and will serve as your foundation for project risk analysis and management.
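One way to keep such a list reviewable is to record each risk in a fixed structure and check it for missing information. The following is a minimal sketch in Python, not a prescribed format: the class name, field names, and the two sample entries are illustrative assumptions, and only the quantified criterion in the first entry is taken from the example given earlier in this section.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ScopeRisk:
    """One entry in a scope risk list (all field names are hypothetical)."""
    description: str                  # what could go wrong
    cause: str                        # why this is an exposure for the project
    consequence: str                  # effect on the project if it occurs
    source: str                       # category from the list of risk sources
    criterion: Optional[str] = None   # explicitly quantified measure, if any
    likely_to_change: bool = False    # this part of the scope seems unstable


def incomplete_entries(risks: List[ScopeRisk]) -> List[ScopeRisk]:
    """Return entries missing a cause, a consequence, or a quantified criterion."""
    return [
        r for r in risks
        if not r.cause.strip() or not r.consequence.strip() or not r.criterion
    ]


# Illustrative entries only; the second is deliberately left incomplete.
risk_list = [
    ScopeRisk(
        description="Performance target exceeds anything achieved before",
        cause="No prior deliverable has reached this speed",
        consequence="Late redesign or a reduction in scope",
        source="Requirements to invent or discover new capabilities",
        criterion="Must execute at double the fastest speed achieved "
                  "in the prior generation",
    ),
    ScopeRisk(
        description="Key subcomponent supplied externally",
        cause="",
        consequence="",
        source="External sourcing for a key subcomponent or tool",
    ),
]

for risk in incomplete_entries(risk_list):
    print(f"Needs more detail: {risk.description}")
```

Running the check after each planning step flags entries that still lack a cause, a consequence, or a measurable criterion, which mirrors the review for missing or incomplete information described above.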

Key Ideas for Identifying Scope Risks

- Clearly define all project deliverables, and note challenges.

- Set limits on the project based on the value of the deliverables.

- Decompose all project work into small pieces, and identify work not well understood.

- Assign ownership for all project work, and probe for reasons behind any reluctance.

- Note risk arising from expected project duration or complexity.

Panama Canal: Setting the Objective (1905-1906)

One of the principal differences between the earlier unsuccessful attempt to build the Panama Canal and the later project was the application of good project management practices. While this was ultimately true, the new project had a shaky beginning. The scope and objectives of the revived Panama Canal project, conceived as a military project and funded by the U.S. government, should have been very clear, even at the start. They were not.

The initial manager for the project when work commenced in 1904 was John Findlay Wallace, formerly the general manager of the Illinois Central Railroad. Wallace was visionary; he did a lot of investigating and experimenting, but he accomplished very little in Panama. His background included no similar project experience. In addition to his other difficulties, he could do almost nothing without the consent of a seven-man commission set up back in the United States, a commission that rarely agreed on anything. Also, nearly every decision, regardless of size, required massive amounts of paperwork. A year later, in 1905, $128 million had been spent but still there was no final plan, and most of the workers were still waiting for something to do. The project had in most ways picked up just where the earlier French project had left off, problems and all. Even after a year, it was still not clear whether the canal would be at sea level or constructed with locks and dams. In 1905, mired in red tape, Wallace announced that the canal was a mistake, and he resigned.

John Wallace was promptly replaced by John Stevens. Stevens was also from the railroad business, but his experience was on the building side, not the operating side. He built a reputation as one of the best engineers in the United States by constructing railroads throughout the Pacific frontier. Prior to appointing Stevens, Theodore Roosevelt eliminated the problematic seven-man commission, and he significantly reduced the red tape, complication, and delay. As chief engineer, Stevens, unlike Wallace, effectively had full control of the work. Arriving in Panama, Stevens took stock and immediately stopped all work on the canal, stating, "I was determined to prepare well before construction, regardless of clamor of criticism. I am confident that if this policy is adhered to, the future will show its wisdom." And so it did.

With the arrival of John Stevens, managing project scope became the highest priority. He directed all his initial efforts at preparation for the work. He built dormitories for workers to live in, dining halls to feed them, warehouses for equipment and materials, and other infrastructure for the project. The doctor responsible for the health of the workers on the project, William Crawford Gorgas, had been trying for over a year to gain support from John Wallace for measures needed to deal with the mosquitoes, by then known to spread both yellow fever and malaria. Stevens quickly gave this work his full support, and Dr. Gorgas proceeded to attack both diseases. Yellow fever was conquered in Panama just six months after Dr. Gorgas received Stevens's support, and Gorgas made good progress combating malaria as well.

Under the guidance of Stevens, all the work was defined and planned employing well-established, modern project management principles. He said, "Intelligent management must be based on exact knowledge of facts. Guesswork will not do." He did not talk much, but he asked lots of questions. People commented, "He turned me inside out and shook out the last drop of information." His meticulous documentation served as the basis for work throughout the project.

Stevens also determined exactly how the canal should be built, to the smallest detail. The objective for the project was ultimately set in 1907 according to his recommendations: The United States would build an eighty-kilometer (fifty-mile) lock-and-dam canal at Panama connecting the Atlantic and Pacific oceans, with a budget of $375 million, to open in 1915. With the scope defined, the path forward became clear.
