Computers as Persuasive Social Actors

Overview

Shortly after midnight, a resident of a small town in southern California called the police to report hearing a man inside a house nearby screaming “I’m going to kill you! I’m going to kill you!” Officers arrived on the scene and ordered the screaming man to come out of the house. The man stepped outside, wearing shorts and a Polo shirt. The officers found no victim inside the house. The man had been yelling at his computer. [1 ]

No studies have shown exactly how computing products trigger social responses in humans, but as the opening anecdote demonstrates, at times people do respond to computers as though they were living beings. The most likely explanation is that social responses to certain types of computing systems are automatic and natural; human beings are hardwired to respond to cues in the environment, especially to things that seem alive in some way. [2 ] At some level we can’t control these social responses; they are instinctive rather than rational. When people perceive social presence, they naturally respond in social ways, such as feeling empathy or anger or following social rules like taking turns. Social cues from computing products are important to understand because they trigger such automatic responses in people.

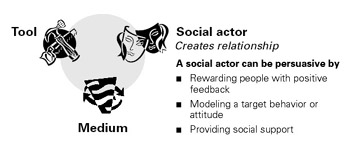

This chapter will explore the role of computing products as persuasive social actors—the third corner in the functional triad (Figure 5.1). These products persuade by giving a variety of social cues that elicit social responses from their human users.

Figure 5.1: Computers as social actors.

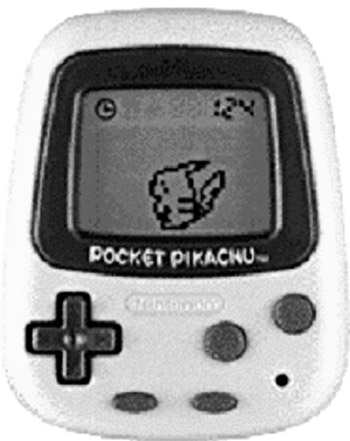

The Tamagotchi craze in the late 1990s was perhaps the first dramatic demonstration of how interacting directly with a computer could be a social experience. [3 ] People interacted with these virtual pets as though they were alive: they played with them, fed them, bathed them, and mourned them when they “died.” Tamagotchi was soon followed by Nintendo’s Pocket Pikachu (Figure 5.2), a digital pet designed to persuade. Like other digital pets, Pikachu required care and feeding, but with a twist: the device contained a pedometer that could register and record the owner’s movements. For the digital creature to thrive, its owner had to be physically active on a consistent basis. The owner had to walk, run, or jump; anything that activated the pedometer would do. Pocket Pikachu is a simple example of a computing device functioning as a persuasive social actor.

Figure 5.2: Nintendo’s Pocket Pikachu was designed to motivate users to be physically active.
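The link between the owner's activity and the pet's well-being can be sketched in a few lines of code. This is only an illustrative toy model, not a description of how the actual device works: the class name `PedometerPet`, the daily step goal, and the mood labels are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class PedometerPet:
    """Toy model of a digital pet whose well-being tracks the owner's activity.

    Every name and threshold here is hypothetical, chosen for illustration.
    """
    daily_step_goal: int = 5000  # assumed target, not taken from the real device
    mood: int = 0                # negative = neglected, positive = thriving

    def end_of_day(self, steps_recorded: int) -> str:
        """Update the pet's mood from one day of pedometer data."""
        if steps_recorded >= self.daily_step_goal:
            self.mood += 1  # an active day helps the pet thrive
        else:
            self.mood -= 1  # an inactive day counts as neglect
        return self.status()

    def status(self) -> str:
        """Translate the numeric mood into the social cue shown to the owner."""
        if self.mood > 0:
            return "thriving"
        if self.mood < 0:
            return "neglected"
        return "content"


pet = PedometerPet()
week = [6200, 7100, 1500, 5300, 800, 900, 400]  # made-up step counts
statuses = [pet.end_of_day(steps) for steps in week]
print(statuses)
```

The point of the sketch is that the persuasive pressure comes from the social cue (the pet's apparent state), while the pedometer reading is the only input the owner can actually change.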

[1 ]Based on the preliminary report of the Seal Beach, CA, Police Department (June 8, 2002) and the police log of the Long Beach (CA) News-Enterprise (June 12, 2002), p. 18.

[2 ]B. Reeves and C. Nass, The Media Equation: How People Treat Computers, Television, and New Media Like Real People and Places (New York: Cambridge University Press, 1996).

[3 ]In many ways the Tamagotchi’s success was helpful for those of us working with Cliff Nass at Stanford University. Since the early 1990s we had been researching people’s social responses to computers (see Reeves and Nass (1996) for a summary). Before the Tamagotchi craze, many people didn’t fully understand our research, or they didn’t understand why we would study computers in this way. However, after the Tamagotchi became widely known, our research made more sense to people; they had seen how the human-computer relationship could take on social qualities.

Five Types of Social Cues

The fact that people respond socially to computer products has significant implications for persuasion. It opens the door for computers to apply a host of persuasion dynamics that are collectively described as social influence, the type of influence that arises from social situations. [4 ] These dynamics include normative influence (peer pressure) and social comparison (“keeping up with the Joneses”) as well as less familiar dynamics such as group polarization and social facilitation. [5 ]

When perceived as social actors, computer products can leverage these principles of social influence to motivate and persuade. [6 ] My own research, discussed later in this chapter, confirms that people respond to computer systems as though the computers were social entities that used principles of motivation and influence. [7 ]

As shown in Table 5.1, I propose that five primary types of social cues cause people to make inferences about social presence in a computing product: physical, psychological, language, social dynamics, and social roles. The rest of this chapter will address these categories of social cues and explore their implications for persuasive technology.

| Cue | Examples |
|---|---|
| Physical | Face, eyes, body, movement |
| Psychological | Preferences, humor, personality, feelings, empathy, “I’m sorry” |
| Language | Interactive language use, spoken language, language recognition |
| Social dynamics | Turn taking, cooperation, praise for good work, answering questions, reciprocity |
| Social roles | Doctor, teammate, opponent, teacher, pet, guide |

[4 ]P. Zimbardo and M. Leippe, The Psychology of Attitude Change and Social Influence (New York: McGraw-Hill, 1991).

[5 ]“Group polarization” is a phenomenon whereby people, when part of a group, tend to adopt a more extreme stance than they would if they were alone. “Social facilitation” is a phenomenon whereby the presence of others increases arousal. This helps a person to perform easy tasks but hurts performance on difficult tasks.

[7 ]B. J. Fogg, Charismatic Computers: Creating More Likable and Persuasive Interactive Technologies by Leveraging Principles from Social Psychology, doctoral dissertation, Stanford University (1997).

Persuasion through Physical Cues

One way a computing technology can convey social presence is through physical characteristics. A notable example is Baby Think It Over, described in Chapter 4. This infant simulator conveys a realistic social presence to persuade teenagers to avoid becoming teen parents.

Another example of how technology products can convey social presence comes from the world of gambling, in the form of Banana-Rama. This slot machine (Figure 5.3) has two onscreen characters—a cartoon orangutan and a monkey—whose goal is to persuade users to keep playing by providing a supportive and attentive audience, celebrating each time the gambler wins.

Figure 5.3: The Banana-Rama slot machine interface has onscreen characters that influence gamblers to keep playing.

As the examples of Baby Think It Over and Banana-Rama suggest, computing products can convey physical cues through eyes, a mouth, movement, and other physical attributes. These physical cues can create opportunities to persuade.

The Impact of Physical Attractiveness

Simply having physical characteristics is enough for a technology to convey social presence. But it seems reasonable to suggest that a more attractive technology (interface or hardware) will have greater persuasive power than an unattractive technology.

Physical attractiveness has a significant impact on social influence. Research confirms that it’s easy to like, believe, and follow attractive people. All else being equal, attractive people are more persuasive than those who are unattractive. [8 ] People who work in sales, advertising, and other high-persuasion areas know this, and they do what they can to be attractive, or they hire attractive models to be their spokespeople.

Attractiveness even plays out in the courtroom. Mock juries treat attractive defendants with more leniency than unattractive defendants (unless attractiveness is relevant to the crime, such as in a swindling case). [9 ]

Psychologists do not agree on why attractiveness is so important in persuasion, but a plausible explanation is that attractiveness produces a “halo effect.” If someone is physically attractive, people tend to assume they also have a host of admirable qualities, such as intelligence and honesty. [10 ]

Principle of Attractiveness

A computing technology that is visually attractive to target users is likely to be more persuasive as well.

Similarly, physically attractive computing products are potentially more persuasive than unattractive products. If an interface, device, or onscreen character is physically attractive (or cute, as the Banana-Rama characters are), it may benefit from the halo effect; users may assume the product is also intelligent, capable, reliable, and credible. [11 ]

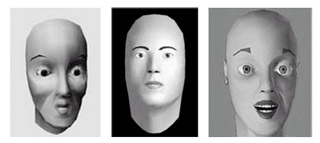

Attractiveness issues are prominent in one of the most ambitious (and frustrating) efforts in computing: creating human-like faces that interact with people in real time. Over the past few decades, researchers have taken important steps forward in making these faces more technically competent, with better facial expressions, voices, and lip movements. However, because of the programming challenges involved, many of these interactive faces are not very attractive, as shown in Figure 5.4. If interactive faces are to be used for persuasive purposes, such as counseling, training, or advertising, they need to be visually pleasing to be most effective. [12 ]

Figure 5.4: These interactive faces, created in the mid- to late 1990s, show that researchers have a long way to go in developing attractive, human-like interactive onscreen characters.

Two studies performed at the School of Management at Boston University reinforce the power of attractiveness. In the initial study, in 1996, researchers had participants play a two-person social dilemma game, in which participants could cooperate with an onscreen character or could choose to serve their own selfish purposes.

In this initial study, the onscreen character (representative of the technology at the time) looked unattractive, even creepy, in my view. And it received rather low cooperation rates: just 32%.

A couple of years later, the researchers repeated the study. Thanks to technology developments, in this second study, the onscreen character looked less artificial and, I would argue, more attractive and less creepy. This new and improved character garnered cooperation rates of a whopping 92%—a figure that in this study was statistically indistinguishable from cooperation rates for interacting with real human beings. The researchers concluded that “the mere appearance of a computer character is sufficient to change its social influence.” [13 ]

Of course, people have different opinions about what is attractive. Evaluations vary from culture to culture, generation to generation, and individual to individual. (However, judging attractiveness is not entirely subjective; some elements of attractiveness, such as symmetry, are predictable.) [14 ]

Because people have different views of what’s attractive, designers need to understand the aesthetics of their target audiences when creating a persuasive technology product. The more visually attractive the product is to its target audience, the more likely it is to be persuasive. The designer might review the magazines the audience reads and music they listen to, observe the clothes they wear, determine what trends are popular with them, and search for other clues to what they might find attractive. With this information, the designer can create a product and test it with the target group.

Computing technology also can convey social presence without using physical characters. We confirmed this in laboratory experiments at Stanford. We designed interface elements that were simple dialog boxes—no onscreen characters, no computer voices, no artificial intelligence. Yet participants in the experiments responded to these simple computer interfaces as though they were responding to a human being. Among other things, they reported feeling better about themselves when they were praised by a computer and reciprocated favors from a computer. (These experiments are discussed in detail later in this chapter.)

[8 ]For academic work on computers as persuasive social actors, see the following:

a. B. J. Fogg, Charismatic Computers: Creating More Likable and Persuasive Interactive Technologies by Leveraging Principles from Social Psychology, doctoral dissertation, Stanford University (1997).

b. B. J. Fogg and C. I. Nass, How users reciprocate to computers: An experiment that demonstrates behavior change, in Extended Abstracts of the CHI97 Conference of the ACM/SIGCHI (New York: ACM Press, 1997).

c. B. J. Fogg and C. I. Nass, Silicon sycophants: The effects of computers that flatter, International Journal of Human-Computer Studies, 46: 551–561 (1997).

d. C. I. Nass, B. J. Fogg, and Y. Moon, Can computers be teammates? Affiliation and social identity effects in human-computer interaction, International Journal of Human-Computer Studies, 45(6): 669–678 (1996).

[9 ]For more information on the role of attractiveness in persuasion, see the following:

a. E. Berscheid and E. Walster, Physical attractiveness, in L. Berkowitz (ed.), Advances in Experimental Social Psychology, Vol. 7, (New York: Academic, 1974), pp. 158–216.

b. S. Chaiken, Communicator physical attractiveness and persuasion, Journal of Personality and Social Psychology, 37: 1387–1397 (1979).

[10 ]H. Sigall and N. Ostrove, Beautiful but dangerous: Effects of offender attractiveness and nature of the crime on juridic judgment, Journal of Personality and Social Psychology, 31: 410–414 (1975).

[11 ]For more on the connection between attractiveness and other qualities, see the following:

a. K. L. Dion, E. Berscheid, and E. Walster, What is beautiful is good, Journal of Personality and Social Psychology, 24: 285–290 (1972).

b. A. H. Eagly, R. D. Ashmore, M. G. Makhijani, and L. C. Longo, What is beautiful is good, but . . . : A meta-analytic review of research on the physical attractiveness stereotype, Psychological Bulletin, 110: 109–128 (1991).

[12 ]The principle of physical attractiveness probably applies to Web sites as well, although my research assistants and I could find no quantitative public studies on how attractiveness of Web sites affects persuasion—a good topic for future research.

[13 ]These interactive faces typically have not been created for persuasive purposes—at least not yet. Researchers in this area usually focus on the significant challenge of simply getting the faces to look and act realistically, with an eye toward developing a new genre of human-computer interaction. In the commercial space, people working on interactive talking faces are testing the waters for various applications, ranging from using these interactive faces for delivering personalized news to providing automated customer support online.

[14 ]S. Parise, S. Kiesler, L. Sproull, and K. Waters, Cooperating with life-like interface agents, Computers in Human Behavior, 15(2): 123–142 (1999). Available online as an IBM technical report at http://domino.watson.ibm.com/cambridge/research.nsf/2b4f81291401771785256976004a8d13/ce1725c578ff207d8525663c006b5401/$FILE/decfac48.htm.

Using Psychological Cues to Persuade

Psychological cues from a computing product can lead people to infer, often subconsciously, that the product has emotions, [15 ] preferences, motivations, and personality; in short, that the computer has a psychology. Psychological cues can be simple, as in text messages that convey empathy (“I’m sorry, but . . .”) or onscreen icons that portray emotion, such as the smiling face of the early Macintosh computer. Or the cues can be more complex, such as those that convey personality. Such complex cues may become apparent only after the user interacts with technology for a period of time; for example, a computer that keeps crashing may convey a personality of being uncooperative or vengeful.

It’s not only computer novices who infer these psychological qualities; my research with experienced engineers showed that even the tech savvy treat computing products as though the products had preferences and personalities. [16 ]

The Stanford Similarity Studies

In the area of psychological cues, one of the most powerful persuasion principles is similarity. [17 ] Simply stated, the principle of similarity suggests that, in most situations, people we think are similar to us (in personality, preferences, or other attributes) can motivate and persuade us more easily than people who are not similar to us. [18 ] Even trivial types of similarity, such as having the same hometown or rooting for the same sports teams, can lead to more liking and more persuasion. [19 ] In general, the greater the similarity, the greater the potential to persuade.

In the mid-1990s at Stanford, my colleagues and I conducted two studies that showed how similarity between computers and the people who use them makes a difference when it comes to persuasion. One study examined similarity in personalities. The other investigated similarity in group affiliation, specifically in belonging to the same team. Both studies were conducted in a controlled laboratory setting.

The Personality Study

In the first study, my colleagues and I investigated how people would respond to computers with personalities. [20 ] All participants would work on the same task, receiving information and suggestions from a computer to solve the Desert Survival Problem. [21 ] This is a hypothetical problem-solving situation in which you are told you have crash-landed in the desert in the southwestern part of the United States. You have various items that have survived the crash with you, such as a flashlight, a pair of sunglasses, a quart of water, salt tablets, and other items. You have to rank the items according to how important each one is to surviving in the desert situation. In our study, participants would have to work with computers to solve the problem.

To prepare for the research, we designed two types of computer “personalities”: one computer was dominant, the other submissive. We focused on dominance and submissiveness because psychologists have identified these two traits as one of five key dimensions of personality. [22 ]

How do you create a dominant or submissive computer? For our study, we created a dominant computer interface by using bold, assertive typefaces for the text. Perhaps more important, we programmed the dominant computer to go first during the interaction and to make confident statements about what the user should do next. Finally, to really make sure people in the study would differentiate between the dominant and submissive computers, we added a “confidence scale” below each of these messages, indicating on a scale of 1 to 10 how confident the computer was about the suggestion it was offering. The dominant computer usually gave confidence ratings of 7, 8, and 9, while the submissive computer offered lower confidence ratings.

For example, if a participant was randomly assigned to interact with the dominant computer, he or she would read the following on the screen while working with the computer on the Desert Survival Task:

In the desert, the intense sunlight will clearly cause blindness by the second day. The sunglasses are absolutely important.

This assertion from the computer was in a bold font, with a high confidence rating.

In contrast, if a person was assigned to the submissive computer, he or she would read a similar statement, but it would be presented in this way:

In the desert, it seems that the intense sunlight could possibly cause blindness by the second day. Without adequate vision, don’t you think that survival might become more difficult? The sunglasses might be important.

This statement was made in an italicized font, along with a low ranking on the confidence meter. To further reinforce the concept of submissiveness, the computer let the user make the first move in the survival task.
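The design choices described above (typeface, who moves first, wording, and the confidence scale) can be gathered into a small sketch. It is not the software used in the study; the class `ComputerPersonality`, the use of `**`/`*` markers to stand in for bold and italic type, and the specific confidence values are assumptions made for illustration. Only the two sunglasses messages are quoted from the study description.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ComputerPersonality:
    """Hypothetical bundle of the cues that distinguished the two interfaces."""
    name: str
    font_style: str   # "bold" or "italic", following the study description
    goes_first: bool  # the dominant computer moved first; the submissive one did not
    confidence: int   # value shown on the 1-10 confidence scale

    def render(self, message: str) -> str:
        """Return the message plus its confidence rating as plain text."""
        marker = "**" if self.font_style == "bold" else "*"
        return f"{marker}{message}{marker}\nConfidence: {self.confidence}/10"


# The text reports ratings of 7, 8, and 9 for the dominant computer.
DOMINANT = ComputerPersonality("dominant", "bold", goes_first=True, confidence=8)
# The text says only that submissive ratings were "lower"; 3 is an assumed value.
SUBMISSIVE = ComputerPersonality("submissive", "italic", goes_first=False, confidence=3)

# Same information, different interaction style (wording quoted from the text).
SUNGLASSES = {
    "dominant": (
        "In the desert, the intense sunlight will clearly cause blindness "
        "by the second day. The sunglasses are absolutely important."
    ),
    "submissive": (
        "In the desert, it seems that the intense sunlight could possibly "
        "cause blindness by the second day. Without adequate vision, don't "
        "you think that survival might become more difficult? "
        "The sunglasses might be important."
    ),
}

for personality in (DOMINANT, SUBMISSIVE):
    print(personality.render(SUNGLASSES[personality.name]))
    print()
```

Keeping the factual content in one place and the style in another mirrors the logic of the experiment: the only thing that varies between conditions is how the computer presents itself.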

Another step in preparing for this study was to find dominant and submissive people to serve as study participants. We asked potential participants to fill out personality assessments. Based on the completed assessments, we selected 48 people who were on the extreme ends of the continuum—the most dominant and the most submissive personalities.

We found these participants by having almost 200 students take personality tests. Some, but not all, were engineers, but all participants had experience using computers. In the study, half of the participants were dominant types and half were submissive.

In conducting the study, we mixed and matched the dominant and submissive people with the dominant and submissive computers. In half the cases, participants worked with a computer that shared their personality type. In the other half, participants worked with a computer having the opposite personality.
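The selection and mix-and-match steps amount to a balanced two-by-two design, which can be sketched as follows. This is a reconstruction under stated assumptions, not the procedure actually used: the screening scores are randomly generated, the pool size of 200 stands in for "almost 200," and the function names `select_extremes` and `assign_conditions` are hypothetical.

```python
import random


def select_extremes(scores: dict[str, float], per_group: int) -> tuple[list[str], list[str]]:
    """Return (most dominant, most submissive) participant IDs by screening score."""
    ranked = sorted(scores, key=scores.get)  # ascending: most submissive first
    return ranked[-per_group:], ranked[:per_group]


def assign_conditions(
    dominant: list[str], submissive: list[str], rng: random.Random
) -> dict[str, tuple[str, str]]:
    """Give half of each group a matching computer and half the opposite one.

    Each value is a (user personality, computer personality) pair.
    """
    assignment: dict[str, tuple[str, str]] = {}
    for group, own, other in (
        (dominant, "dominant", "submissive"),
        (submissive, "submissive", "dominant"),
    ):
        shuffled = list(group)
        rng.shuffle(shuffled)  # random assignment within each personality type
        half = len(shuffled) // 2
        for pid in shuffled[:half]:
            assignment[pid] = (own, own)    # matched condition
        for pid in shuffled[half:]:
            assignment[pid] = (own, other)  # mismatched condition
    return assignment


rng = random.Random(0)
# Made-up dominance scores for a pool of 200 students (higher = more dominant).
scores = {f"P{i:03d}": rng.gauss(0.0, 1.0) for i in range(200)}
dominant, submissive = select_extremes(scores, per_group=24)
assignment = assign_conditions(dominant, submissive, rng)

matched = sum(1 for user, computer in assignment.values() if user == computer)
print(len(assignment), "participants,", matched, "in matched conditions")
```

With 24 people at each extreme, every cell of the design (dominant or submissive user, paired with a dominant or submissive computer) holds 12 participants, for 48 in total.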

The information provided by all the computers was essentially the same. Only the computer’s style of interacting differed, as conveyed through text in dialog boxes: either the computer was dominant (“The intense sunlight will clearly cause blindness”), or it was submissive (“It seems that the intense sunlight could possibly cause blindness”).

After we ran the experiment and analyzed the data, we found a clear result: participants preferred working with a computer they perceived to be similar to themselves in personality style. Dominant people preferred the dominant computer. Submissive people preferred the submissive computer.

Specifically, when working with a computer perceived to be similar in personality, users judged the computer to be more competent and the interaction to be more satisfying and beneficial. In this study we didn’t measure persuasion directly, but we did measure key predictors of persuasion, including likability and credibility.

Research Highlights: The Personality Study

- Created dominant and submissive computer personalities

- Chose as participants people who were at extremes of dominant or submissive

- Mixed and matched computer personalities with user personalities

- Result: Participants preferred computers whose “personalities” matched their own.

The evidence from this study suggests that computers can motivate and persuade people more effectively when they share personality traits with them—at least in terms of dominance and submission. For designers of persuasive technology, the findings suggest that products may be more persuasive if they match the personality of target users or are similar in other ways.

The Affiliation Study

While running the personality study, we set out to conduct another study to examine the persuasive effects of other types of similarity between people and computers. [23 ] For this second study we investigated similarity in affiliation, specifically the persuasive impact of being part of the same group or team. The study included 56 participants, mostly Stanford students along with a few people from the Silicon Valley community. All the participants were experienced computer users.

In this study, we gave participants the same Desert Survival Problem to solve. We assigned them to work on the problem either with a computer we said was their “teammate” or with a computer that we gave no label. To visually remind them of their relationships with the computers, we asked each participant to wear a colored wristband during the study. If the participant was working with a computer we had labeled as his or her teammate, the participant wore a blue wristband, which matched the color of the frame around the computer monitor. The other participants—the control group—wore green wristbands while working with the blue-framed computers. For both groups, the interaction with the computer was identical: the computer gave the same information, in the same style.

Research Highlights: The Affiliation Study

- Participants were given a problem to solve and assigned to work on the problem either with a computer they were told was a “teammate” or a computer that was given no label.

- For all participants, the interaction with the computer was identical; the only difference was whether or not the participant believed the computer was a teammate.

- Result: Compared with other participants, people who worked with a computer labeled as their teammate reported that the computer was more similar to them, that it was smarter, and that it offered better information. These participants also were more likely to choose the problem solutions recommended by the computer.

After completing the study, we examined the data and found significant differences between the conditions. When compared with other participants, people who worked with a computer labeled as their teammate reported that the computer was more similar to them, in terms of approach to the task, suggestions offered, interaction style, and similarity of rankings of items needed for survival. Even more interesting, participants who worked with a computer labeled as a teammate thought the computer was smarter and offered better information.

In addition, participants who worked on the task with a computer labeled as a teammate reported that the computer was friendlier and that it gave higher quality information. Furthermore, people who perceived the computer to be similar to themselves reported that the computer performed better on the task. [24 ]

During the study we also measured people’s behavior. We found that computers labeled as teammates were more effective in influencing people to choose problem solutions that the computer advocated. In other words, teammate computers were more effective in changing people’s behavior.

All in all, the study showed that the perception of shared affiliation (in this case, being on the same “team”) made computers seem smarter, more credible, and more likable—all attributes that are correlated with the ability to persuade.

Among people, similarity emerges in opinions and attitudes, personal traits, lifestyle, background, and membership. [25 ] Designers of persuasive technology should be aware of these forms of similarity and strive to build them into their products.

One company that has done a good job of this is Ripple Effects, Inc., which “helps schools, youth-serving organizations, and businesses change social behavior in ways that improve performance.” [26 ] The company’s Relate for Teens CD-ROM leverages the principle of similarity to make its product more persuasive to its target audience: troubled teens. It conveys similarity through the language it uses, the style of its art (which includes graffiti and dark colors), audio (all instructions are given by teen voices), and photos and video clips that feature other, similar teens. Researchers at Columbia University and New York University have shown that the product produces positive effects on teen behavior, including significant reductions in aggressive acts, increases in “prosocial” acts, and improvements in educational outcomes. [27 ]

Principle of Similarity

People are more readily persuaded by computing technology products that are similar to themselves in some way.

As this example and the Stanford research suggest, designers can make their technology products more persuasive by making them similar to the target audience. The more that users can identify with the product, the more likely they will be persuaded to change their attitudes or behavior in ways the product suggests.

Ethical and Practical Considerations

The two studies just described suggest that people are more open to persuasion from computers that seem similar to themselves, in personality or affiliation. In addition to similarity, a range of other persuasion principles comes into play when computers are perceived to have a psychology. Computers can motivate through conveying ostensible emotions, such as happiness, anger, or fear. [28 ] They can apply a form of social pressure. [29 ] They can negotiate with people and reach agreements. Computers can act supportively or convey a sense of caring.

Designing psychological cues into computing products can raise ethical and practical questions. Some researchers suggest that deliberately designing computers to project psychological cues is unethical and unhelpful. [30 ] They argue that psychological cues mislead users about the true nature of the machine (it’s not really having a social interaction with the user). Other researchers maintain that designing computer products without attention to psychological cues is a bad idea because users will infer a psychology to the technology one way or another. [31 ]

While I argue that designers must be aware of the ethical implications of designing psychological cues into their products, I side with those who maintain that users will infer a psychology to computing products, whether or not the designers intended this. For this reason, I believe designers must embed appropriate psychological cues in their products. I also believe this can be done in an ethical manner.

The Oscilloscope Study

My belief that users infer a psychology to computing technology stems in part from research I conducted in the mid-1990s for a company I’ll call Oscillotech, which made oscilloscopes. The purpose of the research was to determine how the engineers who used the scopes felt about them.

What I found surprised Oscillotech’s management. The scopes’ text messages, delivered on a single line at the bottom of the scopes’ displays, were somewhat harsh and at times unfriendly, especially the error messages. I later found out that the engineers who wrote these messages, more than a decade earlier, didn’t consider what impact the messages would make on the scope users; they didn’t think people would read the messages and then infer a personality to the measuring device.

They were wrong. My research showed that Oscillotech’s scopes made a much less favorable impression on users than did a competitor’s scopes. This competitor had been gaining market share at the expense of Oscillotech. While many factors led to the competitor’s success, one clear difference was the personality its scopes projected: the messages from the competitor’s scopes were invariably warm, helpful, and friendly, but not obsequious or annoying.

What was more convincing was a controlled study I performed. To test the effects of simply changing the error messages in Oscillotech’s scopes, I had a new set of messages—designed to portray the personality of a helpful senior engineer—burned into the Oscillotech scopes and tested users’ reactions in a controlled experiment.

The result? On nearly every measure, people who used the new scope rated the device more favorably than people who used the previous version of the scope, with the unfriendly messages. Among other things, users reported that the “new” scope gave better information, was more accurate, and was more knowledgeable. In reality, the only difference between the two scopes was the personality of the message. This was the first time Oscillotech addressed the issue of the “personality” of the devices it produced.
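The manipulation in the controlled study, identical hardware with two different message sets, can be illustrated with a short sketch. The original messages are not reproduced in this chapter, so every error code and message string below is invented for illustration, as are the names `ErrorCode`, `TERSE`, `HELPFUL`, and `status_line`.

```python
from enum import Enum


class ErrorCode(Enum):
    """Hypothetical error conditions; not taken from any real oscilloscope."""
    TRIGGER_NOT_FOUND = 1
    INPUT_OVERRANGE = 2


# Invented example of a harsh, unfriendly message set.
TERSE = {
    ErrorCode.TRIGGER_NOT_FOUND: "ERROR: NO TRIGGER",
    ErrorCode.INPUT_OVERRANGE: "ERROR: INPUT OUT OF RANGE",
}

# Invented example of the "helpful senior engineer" personality.
HELPFUL = {
    ErrorCode.TRIGGER_NOT_FOUND: "No trigger found yet. Try lowering the trigger level.",
    ErrorCode.INPUT_OVERRANGE: "The signal exceeds this range. Try a larger volts/div setting.",
}


def status_line(code: ErrorCode, messages: dict[ErrorCode, str], width: int = 80) -> str:
    """Return the single-line message for the bottom of the display.

    Messages longer than the (assumed) display width are truncated.
    """
    text = messages[code]
    return text if len(text) <= width else text[: width - 3] + "..."


for message_set in (TERSE, HELPFUL):
    print(status_line(ErrorCode.INPUT_OVERRANGE, message_set))
```

Because both tables cover the same error conditions, swapping one for the other changes only the personality the device projects, which is the single variable the experiment set out to test.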

This example illustrates the potential impact of psychological cues in computing products. While it is a benign example, the broader issue of using computer technology to convey a human-like psychology—especially as a means to persuade people—is a controversial area that has yet to be fully explored and that is the subject of much debate. (Chapter 9 will address some of the ethical issues that are part of the debate.)

[15 ]For more on the predictability of attractiveness, see the following:

a. M. Cunningham, P. Druen, and A. Barbee, Evolutionary, social and personality variables in the evaluation of physical attractiveness, in J. Simpson and D. Kenrick (eds.), Evolutionary Social Psychology (Mahwah, NJ: Lawrence Erlbaum, 1997), 109–140.

b. J. H. Langlois, L. A. Roggman, and L. Musselman, What is average and what is not average about attractive faces? Psychological Science, 5: 214–220 (1994).

[16 ]When it comes to emotions and computers, Dr. Rosalind Picard’s Affective Computing Research Group at MIT has been blazing new trails. For more information about the group’s work, see http://affect.media.mit.edu/.

[17 ]My research involving engineers and social responses to computer devices was performed for HP Labs in 1995.

[18 ]H. Tajfel, Social Identity and Intergroup Relations (Cambridge, England: Cambridge University Press, 1982).

[19 ]R. B. Cialdini, Influence: Science and Practice, 3rd ed. (New York: HarperCollins, 1993).

[20 ]Persuasion scholar Robert Cialdini writes, “As trivial as . . . similarities may seem, they appear to work. . . . even small similarities can be effective in producing a positive response to another.” Robert B. Cialdini, Influence: Science & Practice (Boston: Allyn and Bacon, 2000). See also H. Tajfel, Human Groups and Social Categories (Cambridge: Cambridge University Press, 1981).

See also H. Tajfel, Social Identity and Intergroup Relations (Cambridge, England: Cambridge University Press, 1982).

[21 ]C. I. Nass, Y. Moon, B. J. Fogg, B. Reeves, and D. C. Dryer, Can computer personalities be human personalities? International Journal of Human-Computer Studies, 43: 223–239 (1995).

[22 ]For each study, we adapted the desert survival task from J. C. Lafferty and P. M. Eady, The Desert Survival Problem (Plymouth, MI: Experimental Learning Methods, 1974).

[23 ]J. M. Digman, Personality structure: An emergence of the five-factor model, The Annual Review of Psychology, 41: 417–440 (1990).

[24 ]C. I. Nass, B. J. Fogg, and Y. Moon, Can computers be teammates? Affiliation and social identity effects in human-computer interaction, International Journal of Human-Computer Studies, 45(6): 669–678 (1996).

[25 ]B. J. Fogg, Charismatic Computers: Creating More Likable and Persuasive Interactive Technologies by Leveraging Principles from Social Psychology, doctoral dissertation, Stanford University (1997).

[26 ]Part of my list about similarity comes from S. Shavitt and T. C. Brock, Persuasion: Psychological Insights and Perspectives (Needham Heights, MA: Allyn and Bacon, 1994).

[27 ]http://www.rippleeffects.com.

[28 ]To read about the research, see http://www.rippleeffects.com/research/studies.html.

[29 ]To get a sense of how computers can use emotions to motivate and persuade, see R. Picard, Affective Computing (Cambridge, MA: MIT Press, 1997). See also the readings on Dr. Picard’s Web site: http://affect.media.mit.edu/AC_readings.html.

[30 ]C. Marshall and T. O. Maguire, The computer as social pressure to produce conformity in a simple perceptual task, AV Communication Review, 19: 19–28 (1971).

[31 ]For more about the advisability (pro and con) of incorporating social elements into computer systems, see the following:

a. B. Shneiderman, Designing the User Interface: Strategies for Effective Human-Computer Interaction (Reading, MA: Addison Wesley Longman, 1998).

b. B. Shneiderman and P. Maes, Direct manipulations vs. interface agents, Interactions, 4(6): 42–61 (1997).

Influencing through Language

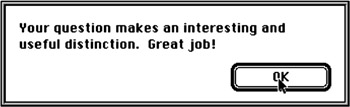

Computing products also can use written or spoken language (“You’ve got mail!”) to convey social presence and to persuade. Dialogue boxes are a common example of the persuasive use of language. Whether asking questions (“Do you want to continue the installation?”), offering congratulations for completing a task (Figure 5.5), or reminding the user to update software, dialog boxes can lead people to infer that the computing product is animate in some way.

Figure 5.5: Quicken rewards users with a celebratory message each time they reconcile their accounts.

E-commerce sites such as Amazon.com make extensive use of language to convey social presence and persuade users to buy more products.

Amazon.com is a master of this art. When I log on, the site welcomes me by name, offers recommendations, and lists a host of separate stores tailored to my preferences. Each page I click on addresses me by name and lists more recommendations. To keep me online, I’m asked if the site’s recommendations are “on target” and to supply more information if they are not. The designers’ goal, it’s safe to say, is to persuade me and other users to maximize our online purchases.

Iwin.com, a leading site for online gaming and lotteries, uses language in a very different way. The site conveys a young, brash attitude in an attempt to persuade users to log in, amass iCoins (a type of online currency) by performing various activities on the site, and use that currency to play lotteries.

When you arrive at the homepage, you can answer a “Daily Poll” and gain 25 iCoins. One sample question was “Who’s eaten more?” You can choose from two answers: “PacMan” or “Dom DeLuise.” Obviously this question is not serious, more of a teaser to get people to start clicking and playing. The submit button for the survey doesn’t even say “submit.” Instead, it reads “Hey big daddy” or something similarly hip (the message changes each time you visit the site).

If you keep playing games on the main page without logging in, you’ll see this message in large type:

What’s the deal? You don’t call, you don’t log in . . . Is it me?

And if you continue to play games without logging in, again you’ll get a prompt with attitude:

Well, you could play without logging in, but you won’t win anything. It’s up to you.

Later, as you log out of your gaming session, the Web site says:

You’re outta here! Thanks for playing!

The use of language on this Web site is very different from Amazon. Note how the creators of Iwin.com had the option to choose standard language to move people through the transactional elements (register, log in, log out, enter lotteries for prizes) of the online experience. Instead, they crafted the language to convey a strong online personality, one that has succeeded in acquiring and keeping users logging in and playing games. [32 ]

Persuading through Praise

One of the most powerful persuasive uses of language is to offer praise. Studies on the effect of praise in various situations have clearly shown its positive impact.[33]My own laboratory research concludes that, offered sincerely or not, praise affects people’s attitudes and their behaviors. My goal in this particular line of research was to determine if praise from a computer would generate positive effects similar to praise from people. The short answer is “yes.” [34 ]

My colleagues and I set up a laboratory experiment in which Stanford students who had significant experience using computers played a “20 Questions” game with computers. As they played this game, they could make a contribution to the computer’s database. After they made a contribution, the computer would praise them via text in a dialog box (Figure 5.6). Half of the people in the study were previously told this feedback was a true evaluation of their contribution (this was the “sincere” condition). The other half were told that the positive feedback had nothing to do with their actual contribution (the “insincere” condition).

Figure 5.6: Dialog boxes were a key element in our research on the impact of positive feedback from computers.

In all, each study participant received 12 messages from the computer during the game. They saw text messages such as:

Very smart move. Your new addition will enhance this game in a variety of ways.

Your question makes an interesting and useful distinction. Great job!

Great! Your suggestions show both thoroughness and creativity.

Ten of the messages were pure praise. The other two were less upbeat: All the players received the following warning message after their fourth contribution to the game: “Be careful. Your last question may steer the game in the wrong direction.” After their eighth contribution, players received this somewhat negative message: “Okay, but your question will have a negligible effect on overall search efficiency.” Previous studies had shown that adding the non-praise messages to the mix increased the credibility of the 10 praise messages.
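As a rough illustration of the message mix described above, the following Python sketch builds a 12-message sequence in which the fourth and eighth messages are the two non-praise messages quoted in the text and the other ten are distinct praise messages. The function name `build_schedule`, the random ordering of the praise, and the placeholder strings standing in for the praise messages not quoted here are assumptions made for illustration; this is not the software used in the study.

```python
import random

# The two non-praise messages quoted in the text, keyed by contribution number.
NON_PRAISE = {
    4: "Be careful. Your last question may steer the game in the wrong direction.",
    8: "Okay, but your question will have a negligible effect on overall search efficiency.",
}

# Three praise messages quoted in the text, plus labeled placeholders for the
# seven that the text does not quote (the placeholders are hypothetical).
PRAISE = [
    "Very smart move. Your new addition will enhance this game in a variety of ways.",
    "Your question makes an interesting and useful distinction. Great job!",
    "Great! Your suggestions show both thoroughness and creativity.",
] + [f"[placeholder praise message {i}]" for i in range(4, 11)]


def build_schedule(praise_messages, total=12, seed=None):
    """Return `total` messages: non-praise at slots 4 and 8, unique praise elsewhere."""
    needed = total - len(NON_PRAISE)
    if len(praise_messages) < needed:
        raise ValueError(f"need at least {needed} distinct praise messages")
    rng = random.Random(seed)
    # sample() draws without replacement, so no praise message appears twice.
    praise_iter = iter(rng.sample(praise_messages, needed))
    return [
        NON_PRAISE[n] if n in NON_PRAISE else next(praise_iter)
        for n in range(1, total + 1)
    ]


if __name__ == "__main__":
    for number, message in enumerate(build_schedule(PRAISE, seed=1), start=1):
        print(f"{number:2d}. {message}")
```

Drawing the praise without replacement reflects the design goal of never showing a participant the same praise twice; the order in which the praise messages appeared in the actual study is not stated, so the shuffle here is only one plausible choice.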

After participants finished playing the 20 Questions game with the computer, they responded to a questionnaire about their experience. The questionnaire had a few dozen questions about how they felt, their view of the computer, their view of the interaction, and their view of the computer’s evaluations of their work.

In analyzing the data, we compared the two praise conditions (sincere and insincere) along with a third condition that offered no evaluation, just the text, “Begin next round.” The findings were clear. Except for two questions that focused on the sincerity of the computer, participants responded to true praise and flattery identically—and these responses were positive.

In essence, after people received computer praise—sincere or not—they responded significantly more positively than did people who received no evaluation. Specifically, compared to the generic, “no evaluation” condition, the data show that people in both conditions who received praise

- Felt better about themselves

- Were in a better mood

- Felt more powerful

- Felt they had performed well

- Found the interaction engaging

- Were more willing to work with the computer again

- Liked the computer more

- Thought the computer had performed better

Principle of Praise

By offering praise, via words, images, symbols, or sounds, computing technology can lead users to be more open to persuasion.

Although these aren’t direct measures of persuasion, these positive reactions open the door to influence. These findings illustrate the importance of using language in ways that will set the stage for persuasive outcomes, rather than in ways that build barriers to influence. Language—even language used by a computing system—is never neutral. It can promote or hinder a designer’s persuasion goals.

[32 ]For more about the advisability of incorporating social elements into computer systems, see the following:

a. B. Reeves and C. Nass, The Media Equation: How People Treat Computers, Television, and New Media Like Real People and Places (New York: Cambridge University Press, 1996).

b. B. Shneiderman and P. Maes, Direct manipulations vs. interface agents, Interactions, 4(6): 42–61 (1997).

[33]As of this writing, the Web rankings and search directory top9.com ranks iwin.com as the #1 site in the sweepstakes and lottery category, and trafficranking.com ranks the site above americanexpress.com, nih.gov, hotjobs.com, and nfl.com.

[34 ]For more on the effects of praise, see the following:

a. E. Berscheid and E. H. Walster, Interpersonal Attraction, 2nd ed. (Reading, MA: Addison-Wesley, 1978).

b. E. E. Jones, Interpersonal Perception (New York: W. H. Freeman, 1990).

c. J. Pandey and P. Singh, Effects of Machiavellianism, other-enhancement, and power position on affect, power feeling, and evaluation of the ingratiator, Journal of Psychology, 121: 287–300 (1987).

Social Dynamics

Most cultures have set patterns for how people interact with each other: rituals for meeting people, taking turns, forming lines, and many others. These rituals are social dynamics, the unwritten rules for interacting with others. Those who don’t follow the rules pay a social price; they risk being alienated.

Computing technology can apply social dynamics to convey social presence and to persuade. One example is Microsoft’s Actimates characters, a line of interactive toys introduced in the late 1990s. The Microsoft team did a great deal of research into creating toys that would model social interactions. [35 ]The goal of the toys, of course, is to entertain kids, but it also seems that the toys are designed to use social rituals to persuade kids to interact with the characters.

Consider the Actimates character named DW (Figure 5.7). This interactive plush toy says things like “I love playing with you” and “Come closer. I want to tell you a secret.” These messages cue common social dynamics and protocols.

Figure 5.7: DW, an interactive plush toy developed by Microsoft, puts social dynamics into motion.

By cueing social dynamics, DW affects how children feel and what they do. DW’s expressions of friendship may lead children to respond with similar expressions or feelings. DW’s invitation to hear her secret sets up a relationship of trust and support.

E-commerce sites also use social dynamics to help interactions succeed. They greet users, guide people to products they may like, confirm what’s being purchased, ask for any needed information, and thank people for making the transaction. [36 ]In short, they apply the same social dynamics users might encounter when shopping in a brick-and-mortar store.

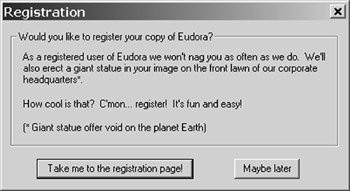

Eudora, an email program, offers another application of social dynamics. If you don’t register the product immediately, every week or so the program will bring up a dialogue box inviting you to register. The registration screen (also shown in the Introduction, as an example of how computers can be persistent) has some funny, informal text: “As a registered user of Eudora we won’t nag you as often as we do. We’ll also erect a giant statue in your image on the front lawn of our corporate headquarters” (with a note below: “Giant statue offer void on the planet Earth.”).

All of this text is designed to persuade you to choose one of two buttons in the dialogue box (Figure 5.8):

Take me to the registration page!

or

Maybe later.

Figure 5.8: The dialogue box that Eudora uses to persuade users to register.

Eudora doesn’t give users the option of clicking “no” (although you can avoid choosing either option above by simply clicking the “close” button). Instead, to get the dialogue box to vanish and get on with their task at hand, people most likely click on “Maybe later.” By clicking this button, the user has made an implicit commitment that maybe he or she will register later. This increases the likelihood that the user will feel compelled to register at some point.
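The pattern can be sketched in a few lines of Python. This is a hypothetical reconstruction, not Eudora's actual code: the seven-day interval stands in for "every week or so," and the names `REMIND_EVERY`, `CHOICES`, and `should_prompt` are invented for illustration.

```python
from datetime import datetime, timedelta

# Assumption: "every week or so" is modeled as exactly seven days.
REMIND_EVERY = timedelta(days=7)

# The two buttons quoted in the text. Note that there is no outright "no".
CHOICES = ("Take me to the registration page!", "Maybe later.")


def should_prompt(registered, last_prompt, now):
    """Decide whether to show the registration dialogue box again."""
    if registered:
        return False          # registered users are no longer asked
    if last_prompt is None:
        return True           # never asked before
    return now - last_prompt >= REMIND_EVERY


if __name__ == "__main__":
    first = datetime(2002, 1, 1)
    print(should_prompt(False, first, first + timedelta(days=3)))
    print(should_prompt(False, first, first + timedelta(days=8)))
```

Because “Maybe later.” only postpones the question, the request comes back on the next interval, and each return builds on the user's earlier implicit commitment.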

The Eudora dialogue box seems simple-minded—even goofy. But it’s actually quite clever. The goofy content and language serve a few purposes: elevating the mood of the user, making the request seem fun and easy, positioning the requestor as approachable and good-humored. Perhaps the main purpose of the goofiness is to serve as a distraction, just as people can use distractions effectively during negotiations.

The truth is that Eudora is making a very serious request. Getting people to eventually say yes is vital to the future of the product. And the dynamic that plays out with this dialogue box is not so different from the social dynamics that play out during serious human-human exchanges, such as asking for a raise. (If you can get the boss to say “maybe later” rather than “no” in response to your request for a raise, you’re in a much more powerful position when you raise the issue again later, as the boss has made a subtle commitment to considering it.)

Other social dynamics can be set in motion when people interact with computing products. Users may succumb to “peer pressure” from computers. Or they may judge information as more accurate when it comes from multiple computing sources. These and many other social dynamics have yet to be tested, but based on early efforts by Amazon.com, Eudora, and others, the potential for using technology to leverage social dynamics appears to be strong.

The Reciprocity Study

One social dynamic that may have potential for persuasive technology is the rule of reciprocity. This unwritten social rule states that after you receive a favor, you must pay it back in some way. Anthropologists report that the reciprocity rule is followed in every human society. [37 ]

In my laboratory research, my colleagues and I set out to see if the rule of reciprocity could be applied to interactions between humans and computers. Specifically, we set up an experiment to see if people would reciprocate to a computer that had provided a favor for them. [38 ]

I recruited Stanford students and people living in the Silicon Valley area to be participants in the research. In total, 76 people were involved in the reciprocity study.

Each participant entered a room that had two identical computers. My research assistants and I gave each person a task that required finding specific types of information, using the computer. In this study, we again used the context of the Desert Survival Problem. The study participants were given a modified version of the challenge. They needed to rank seven items according to their survival value (a compress kit, cosmetic mirror, flashlight, salt tablets, sectional air map, topcoat, and vodka). To do this, they could use a computer to find information about each item.

Half the participants used a computer that was extremely helpful. We had preprogrammed the computer to provide information we knew would be useful: Months before the study, we tested lots of information about these items in pilot studies and selected the bits of information people had found most useful. We put this information into the computer program.

As a result, when study participants performed a search on any of the survival items, they received information that we had already confirmed would be helpful to them in ranking the items (“The beam from an ordinary flashlight can be seen as far as 15 miles away on a clear night.”). The computer also claimed to be searching many databases to get the best information for the participants (this experiment took place just before the Web was popular, though the search we designed was much like searching on Google today).

The user could search for information on five of the seven items to be ranked. Users had to make separate requests for each search. The idea behind all of this was to set up a situation in which study participants would feel that the computer had done them a favor: it had searched many databases on the user’s behalf and had come up with information that was useful.

Only half of the participants worked with computers that provided this high quality of help and information. The other half also went into the lab alone and were given the same task but used a computer that provided low-quality help. The computer looked identical and had the same interface. But when these participants asked the computer to find information on the items, the information that came back was not very helpful.

We again had pretested information and knew what would seem a plausible result of an information search, but because of our pilot tests we knew the information would not be useful to the participants (“Small Flashlight: Easy to find yellow Lumilite flashlight is there when you need it. Batteries included.”).

In setting up the experiment this way, our goal was to have two sets of participants. One group would feel the computer had done a favor for them; the other group would feel the computer had not been helpful.

In a subsequent, seemingly unrelated task (it was related, but we hid this fact from participants), each participant was given the opportunity to help a computer create a color palette that matched human perception. The computer would show three colors, and the participants would rank the colors, light to dark. The participants could do as many, or as few, of these comparisons as they wished for the computer.

Because this was a controlled study, half of the participants worked with the same computer on the second task, the color perception task (the reciprocity condition), and half of the participants worked with a different computer (the control condition).

Those who worked with the same helpful computer on the second task—the color perception task—had an opportunity to reciprocate the help the computer had provided earlier. (During the experiment, we never mentioned anything about reciprocity to participants.) In contrast, those who worked with a different computer served as a control group.

After completing the study, we analyzed the data and found that people did indeed reciprocate to the computer that helped them. Participants who returned to work with the initially helpful computer performed more work for that computer on the second task. Specifically, participants in the reciprocity condition performed almost double the number of color evaluations performed by those who worked with a different, although identical, computer on the second task. In summary, the study showed that people observed a common social dynamic, the rule of reciprocity; they repaid a favor that a computer had done for them.

Research Highlights: The Reciprocity Study

- Participants entered a room with two computers and were assigned a task of finding information, with the help of one of the computers.

- Half of the participants used the computer that was helpful in finding the information; the other half used the computer that was not helpful.

- In a subsequent task, participants were asked to help one of the computers to create a color palette. Half of the participants worked with the same computer they’d worked with on the initial task; half worked with the other computer.

- Result: Those participants who worked with the same helpful computer on both tasks performed almost twice as much work for their computers on the second task as did the other participants.

This reciprocity study included control conditions that rule out other possible explanations for the results. One such explanation is that getting good information during the first task made participants happy, so they did more work in the second task. This explanation is ruled out because half of those who received good information from a computer for the first task used a different but identical-looking computer on the second task, but only those who used the same computer for both tasks showed the reciprocity effect.

The other alternative explanation is that people who remained at the same workstation for the second task were more comfortable and familiar with the chair or the setup, leading to an increase in work on the second task. This explanation can be ruled out because participants who received bad information from the computer in the first task and used the same computer on the second task did less work, not more, indicating that people may have retaliated against the computer that failed to help them on the previous task. (The retaliation effect may be even more provocative than the reciprocity effect, though it is not very useful for designing persuasive computer products.) With the alternative explanations ruled out, the evidence suggests that the rule of reciprocity is such a powerful social dynamic that people followed it when working with a machine.

Principle of Reciprocity

People will feel the need to reciprocate when computing technology has done a favor for them.

The implication for designers of persuasive technology is that the rule of reciprocity—an important social dynamic—can be applied to influence users. A simple example that leverages the rule of reciprocity is a shareware program that, after multiple uses, might query the user with a message such as “You have enjoyed playing this game ten times. Why not pay back the favor and register?”
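A minimal sketch of such a shareware prompt, in Python, might look like the following. The function name `reciprocity_prompt`, the `every` parameter, and the choice to repeat the request at every tenth use are assumptions for illustration; the text specifies only the wording of the message.

```python
def reciprocity_prompt(play_count, registered, every=10):
    """Return a registration request after every `every` uses, else None.

    Hypothetical helper: the prompt appears only once the program has
    already delivered value (the "favor") to an unregistered user.
    """
    if registered or play_count <= 0 or play_count % every != 0:
        return None
    return (
        f"You have enjoyed playing this game {play_count} times. "
        "Why not pay back the favor and register?"
    )


if __name__ == "__main__":
    for count in (9, 10, 11):
        print(count, reciprocity_prompt(count, registered=False))
```

The key design point is the ordering: the favor comes first and the request second, which mirrors the structure of the reciprocity study.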

[35 ]For more on investigations into praise from computers, see the following:

a. B. J. Fogg, Charismatic Computers: Creating More Likable and Persuasive Interactive Technologies by Leveraging Principles from Social Psychology, doctoral dissertation, Stanford University (1997).

b. B. J. Fogg and C. I. Nass, Silicon sycophants: The effects of computers that flatter, International Journal of Human-Computer Studies, 46: 551–561 (1997).

[36 ]For more on designing interfaces for children, see the following:

a. Erik Strommen and Kristin Alexander, Emotional interfaces for interactive aardvarks: Designing affect into social interfaces for children, Proceedings of the CHI 99 Conference on Human Factors in Computing Systems: The CHI Is the Limit, 528–535 (1999).

b. Erik Strommen, When the interface is a talking dinosaur: Learning across media with ActiMates Barney, Conference Proceedings on Human Factors in Computing Systems, 228–295 (1998).

[37 ]An online article in InfoWorld (Online ‘agents’ evolve for customer service, Dec. 11, 2000, http://www.itworld.com/AppDev/1248/IWD001211hnenabler/online) notes that “The new breed of agent is highly customizable, allowing e-tailers to brand them as they wish. The ‘agents’ also aim to assist and reassure customers by emoting: If an agent can’t answer a question, it expresses disappointment and tries again or it pushes the user toward another channel of service. If the customer expresses interest in a product, the agent can suggest related items. If a transaction is completed, the agent can thank the customer and urge him or her to come back soon.”

[38 ]A. W. Gouldner, The norm of reciprocity: A preliminary statement, American Sociological Review, 25: 161–178 (1960).

Persuading by Adopting Social Roles

In the mid-1960s, MIT’s Joseph Weizenbaum created ELIZA, a computer program that acted in the role of a psychotherapist. ELIZA was a relatively simple program, with less than 300 lines of code. It was designed to replicate the initial interview a therapist would have with a patient. A person could type in “I have a problem,” and ELIZA would respond in text, “Can you elaborate on that?” The exchange would continue, with ELIZA continuing to portray the role of a therapist.

The impact of a computer adopting this human role surprised many, including Weizenbaum. Even though people knew intellectually that ELIZA was software, they sometimes treated the program as though it were a human therapist who could actually help them. The response was so compelling that Weizenbaum was distressed over the ethical implications and wrote a book on the subject. [39 ]Even though Weizenbaum was disturbed by the effects of his creation, the controversial domain of computerized psychotherapy continues today, with the computer playing the role of a therapist. [40 ]

Computers in Roles of Authority

Teacher, referee, judge, counselor, expert—all of these are authority roles humans play. Computers also can act in these roles, and when they do, they gain the automatic influence that comes with being in a position of authority, as the example of ELIZA suggests. In general, people expect authorities to lead them, make suggestions, and provide helpful information. They also assume authorities are intelligent and powerful. By playing a role of authority convincingly, computer products become more influential.

That’s why Symantec’s popular Norton Utilities program includes Norton Disk Doctor and WinDoctor. The doctor metaphor suggests smart, authoritative, and trustworthy—more persuasive than, say, “Disk Helper” or “Disk Assistant.”

That’s also why Broderbund used the image of a teacher when creating its popular software program Mavis Beacon Teaches Typing (Figure 5.9). Mavis Beacon is a marketing creation, not a real person. But her physical image, including prim hairdo, and her name suggest a kindly, competent high school typing teacher. By evoking the image of a teacher, Broderbund probably hoped that its software would gain the influence associated with that role.

Figure 5.9: Broderbund created a fictional authority figure—a teacher—to persuade users of its typing program.

Although the power of authority has received the most attention in formal persuasion studies, authority roles aren’t the only social roles that influence people. Sometimes influence strategies that don’t leverage power or status also can be effective. Consider the roles of “friend,” “entertainer,” and “opponent,” each of which can cause people to change their attitudes or behavior.

Principle of Authority

Computing technology that assumes roles of authority will have enhanced powers of persuasion.

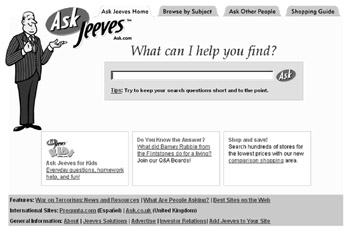

Ask Jeeves is a search engine that takes on the role of a butler (Figure 5.10) to distinguish its product from competing search engines. [41 ]When you visit ask.com, you ask a simple question, and Jeeves the butler is at your service, searching his own database and the most common Web search engines.

Figure 5.10: The Ask Jeeves Web search engine adopts a social role of butler or servant.

It’s likely that setting up the search engine in the role of a butler was a deliberate attempt to influence. In terms of attitude, the creators likely wanted people to feel the site would be easy to use, the service was helpful, and the site would treat them as special and important—all attributes associated with a butler or servant.

In terms of behavior, the Ask Jeeves creators probably hoped a butler-based Web site would influence users to return and use the site frequently, developing a kind of ongoing social relationship with the character, something the other search engines don’t provide. If the popularity of Web sites is any indication, the Ask Jeeves strategy is working. The site consistently ranks—according to some accounts—in the top 20 Web sites in terms of unique visitors. [42 ]

Another example, the Personal Aerobics Trainer (PAT), takes the concept of persuasiveness one step further. PAT is a virtual interactive fitness trainer created by James Davis of MIT. The system lets users choose the type of coach they will find the most motivational (including the “Virtual Army Drill Sergeant” shown in Figure 5.11). The virtual coach uses computer vision technology to watch how the person is performing and offers positive feedback (“Good job!” and “Keep up the good work!”) or negative feedback (“Get moving!”). [43 ]

Figure 5.11: The Personal Aerobics Trainer (PAT) is an interactive fitness trainer that lets users choose from a variety of coaches, including a virtual army drill sergeant.

For computers that play social roles to be effective in motivating or persuading, it’s important to choose the role model carefully or it will be counterproductive. Adult authority figures might work well for traditional business types, but teenagers may not respond. One user may prefer the “Army Drill Sergeant,” and another may find it demotivating. The implication for designers of persuasive technology that incorporates social role-playing: know your target audience. As the PAT system suggests, a target audience can have multiple user groups. Designers should provide a way for different groups of users to choose the social roles they prefer.
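One way to give different user groups that choice is to keep each social role as a separate, selectable profile. The Python sketch below is illustrative only and is not based on PAT's implementation: the `CoachRole` class, the `ROLES` table, the "encourager" role and its prodding phrase, and the pairing of the quoted feedback phrases with particular coaches are all assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CoachRole:
    label: str    # name shown to the user when choosing a coach
    praise: str   # said when the user is keeping up
    prod: str     # said when the user is falling behind


# Hypothetical role table. The drill sergeant is named in the text; the
# second role and the assignment of phrases to roles are invented here.
ROLES = {
    "drill_sergeant": CoachRole(
        "Virtual Army Drill Sergeant", "Good job!", "Get moving!"
    ),
    "encourager": CoachRole(
        "Encouraging Coach (hypothetical)",
        "Keep up the good work!",
        "Let's pick up the pace a little.",
    ),
}


def feedback(role_key, user_is_keeping_up):
    """Return the chosen coach's feedback for the user's current performance."""
    role = ROLES[role_key]
    return role.praise if user_is_keeping_up else role.prod


if __name__ == "__main__":
    print(feedback("drill_sergeant", user_is_keeping_up=False))
    print(feedback("encourager", user_is_keeping_up=True))
```

Keeping the roles in a table rather than hard-coding one persona means a new audience can be served by adding an entry, without changing the feedback logic.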

[39 ]For more on investigations into computers and reciprocity, see B. J. Fogg, Charismatic Computers: Creating More Likable and Persuasive Interactive Technologies by Leveraging Principles from Social Psychology, doctoral dissertation, Stanford University (1997).

[40 ]His book is entitled Computer Power and Human Reason (San Francisco: W. H. Freeman, 1976).

[41 ]For a good introductory discussion of computerized therapy with pointers to other sources, see the Web page of John Suler, Ph.D., at http://www.rider.edu/users/suler/psycyber/eliza.html.

[42 ]www.ask.com.

[43 ]In March 2002, top9.com rated AskJeeves.com just above Google, Excite, and About.com. In May 2002, the Search Engine Watch ranked AskJeeves as the #3 search engine, behind Google and Yahoo (source: http://searchenginewatch.com/sereport/02/05-ratings.html). Although these and other reports differ, the main point remains the same: AskJeeves is a popular site.

Social Cues: Handle with Care

Although people respond socially to computer products that convey social cues, to be effective in persuasion, designers must understand the appropriate use of those cues. In my view, when you turn up the volume on the “social” element of a persuasive technology product, you increase your bet: you either win bigger or lose bigger, and the outcome often depends on the user. If you succeed, you make a more powerful positive impact. If you fail, you make users irritated or angry.

With that in mind, when is it appropriate to make the social quality of the product more explicit? In general, I believe it’s appropriate to enhance social cues in leisure, entertainment, and educational products (smart toys, video games, kids’ learning applications). Users of such applications are more likely to indulge, accept, and perhaps even embrace an explicit cyber social actor, either embodied or not. The Actimates toys are a good example. In the case of Actimates, one purpose of the toys is to teach social dynamics, so designers can rightly focus on maximizing the use of social cues.

When is it not appropriate to enhance social cues? When the sole purpose of the technology product is to improve efficiency.

When I buy gas for my car, I choose a station with gas pumps that take credit cards directly. I don’t want to deal with a cashier; I’m not looking for a social experience. I believe the analogy applies to interactive technologies, such as word processing programs or spreadsheets, that people use to perform a task more efficiently. For such tasks, it’s best to minimize cues for social presence, as social interactions can slow things down. This is probably why Amazon.com and other e-commerce sites use social dynamics but do not have an embodied agent that chats people up. As in brick-and-mortar stores, when people buy things they are often getting work done; it’s a job, not a social event. Enhancing social cues for such applications could prove to be distracting, annoying, or both.

The quality and repetition of the social cue should be of concern to designers as well. For example, dialogue boxes designed to motivate need to be crafted with care to avoid being annoyingly repetitious. When I created my experiment to study praise in dialogue boxes, I started out with dozens of possible ways to praise users and winnowed down the options, through user testing and other means, to 10 praise messages that would show up during the task. Users never got the same type of praise twice; they were praised many times, but the message was varied so it didn’t feel repetitious.
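The no-repeat rule described above can be sketched in a few lines of Python. This is only an illustration, not the code used in the experiment: the message texts, the `PraiseRotation` class, and its `next_message` method are hypothetical names invented here, and the source does not list the actual 10 messages.

```python
import random

# Placeholder messages for illustration only; the experiment's real
# praise messages are not given in the text.
PRAISE_MESSAGES = [
    "Nice work on that step.",
    "You handled that search well.",
    "That was a thoughtful choice.",
    "Your approach is very efficient.",
]


class PraiseRotation:
    """Hand out each praise message at most once, in shuffled order."""

    def __init__(self, messages, seed=None):
        # Copy the list so the caller's messages are left untouched.
        self._remaining = list(messages)
        random.Random(seed).shuffle(self._remaining)

    def next_message(self):
        """Return an unused message, or None once all have been shown."""
        if not self._remaining:
            return None
        return self._remaining.pop()
```

A caller would request one message at each praise point in the task and skip the dialogue box when `None` comes back, so a user is praised repeatedly without ever seeing the same message twice.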

For updates on the topics presented in this chapter, visit www.persuasivetech.info.
