2.2 Hardware


There are many hardware components that go into making an IBM Cluster 1350. At a minimum, the following hardware is delivered to the customer:

-

One or more 19-inch racks

-

One management node for cluster management and administration

-

Between 4 and 512 nodes. Each node is tailored to the customer's application requirements and to its function (compute node, user node, storage node, and so on)

-

A cluster network connecting all nodes in the cluster. This is used for management and installation purposes and, optionally, user traffic

-

A private management network connecting the management node securely to hardware used for cluster administration

-

A KVM switch for a local keyboard, mouse, and display

-

A terminal server network for remote console support

In addition, some of the following optional components may be installed:

-

A reserved Inter-Process Communication (IPC) network specifically for application traffic. The following hardware configurations are available:

  - An additional 10/100/1000 Mbit Ethernet network
  - Gigabit Fibre Ethernet SX
  - A high-performance Myrinet 2000 cluster interconnect

-

External disks to provide storage node function (particularly if GPFS is used).

2.2.1 Racks

Clusters are housed in industry-standard 42U, 19-inch racks, suitable for shipping fully integrated clusters.

A cluster consists of one primary rack and, if necessary, a number of expansion racks. For a 128-node cluster, up to five racks may be required (one primary and four expansion). Additional racks may also be required to house optional Fibre Channel RAID controllers and external storage.

The primary rack houses cluster infrastructure hardware (management node, KVM switch, keyboard, display, and the optional Myrinet 2000 switch). It may also hold other cluster nodes, depending on the configuration options selected by the user.

All racks (primary and expansion) house Ethernet switches and terminal servers that provide connectivity to all nodes within that rack.

2.2.2 Cluster nodes

The cluster is built from Intel-based, rack-optimized servers. Each can be configured to match the requirements of the application(s) that will run on the cluster.

Typically, a Cluster 1350 is made up of IBM xSeries Models 335 and 345. Model 345s are usually employed for management and storage nodes, because their high number of PCI slots and drive bays is useful for attaching large volumes of storage and/or extra network adapters. The Model 335 is generally used for compute nodes due to its compact size.

Model 345 nodes

The Model 345 is a 2U server featuring one or two Intel Xeon processors. The system has a 533 MHz front-side bus and uses PC2100 ECC RAM. It has an integrated Ultra320 SCSI interface that supports mirroring, two integrated 10/100/1000 Ethernet adapters, and five PCI slots (two 64-bit/133 MHz PCI-X, two 64-bit/100 MHz low-profile PCI-X, and one 32-bit/33 MHz half-length PCI). The node also includes an Integrated Systems Management processor for hardware control and management, as shown in Figure 2-2.

Figure 2-2: Model 345 for cluster (storage or management) nodes

The Model 345 supports up to 8 GB of memory per node (Maximum of 4 GB until 2 GB DIMMs become available). Up to six internal hot-swap Ultra320 SCSI disks can be configured in sizes of 18, 36, or 72 GB, giving a total maximum of 440 GB. These can optionally be mirrored using the on-board LSI-Logic SCSI controller, which supports hardware level mirroring (RAID1). If increased throughput and/or capacity is desired, an optional RAID5i adapter can be installed that works in conjunction with the on-board controller.

The five PCI slots may optionally be populated with a combination of SCSI RAID cards, Fibre Channel cards, network adapters, and Myrinet 2000 adapters (only one Myrinet adapter is allowed per node).

The node must also include a Remote Supervisor Adapter for remote hardware control and management.

A Linux cluster on which GPFS is deployed would most likely use Model 345 nodes as storage nodes. These storage nodes could use multi-attached Fibre Channel disks or network twin-tailed disks. SAN support, and support for Fibre Channel and SCSI disks on up to 32 nodes, is included.

Model 335 nodes

The Model 335 is a 1U server with one or two Intel Xeon processors. The system has a 266 MHz front-side bus, an integrated Ultra320 SCSI interface, two integrated 10/100/1000 Ethernet adapters, and two 64-bit/100 MHz PCI-X slots (one full-length and one half-length). The Model 335 is designed for space efficiency and usability in a rack environment, as shown in Figure 2-3.

Figure 2-3: Model 335 for cluster (compute) nodes

Each node can be configured with any of the following options:

-

One or two Xeon processors (running at 2.4, 2.6, 2.8, or 3.0 GHz).

-

0.5, 1, 2, 4, or 8 GB of memory (8 GB requires 2 GB SDRAM DIMMs in all four slots).

-

One or two disks per node. Disks can be either IDE (40, 60, 80, and 120 GB sizes available) or hot-swap SCSI (18, 36, or 72 GB operating at 15K rpm, and 146 GB operating at 10K rpm), but SCSI and IDE disks cannot be mixed in the same node. SCSI disks can be mirrored using the on-board Ultra320 SCSI controller; if IDE mirroring is needed, a software RAID solution must be used. At least one disk is always required.

-

At least one in every sixteen Model 335 nodes must have a Remote Supervisor Adapter (RSA) installed to support remote monitoring and control via the cluster management VLAN.

-

PCI slots can optionally be populated with ServeRAID™, Fibre Channel, network, and Myrinet 2000 adapters. At most, one Myrinet adapter is allowed per node.
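The Model 335 option rules above can be expressed as a simple configuration check. The Python sketch below is illustrative only. The `Node335Config` class, the `validate_335` and `min_rsa_count` functions, and their field names are hypothetical and do not correspond to any IBM configuration tool. The allowed values are copied from the option list above, and the RSA count applies the "one in every sixteen nodes" rule.

```python
import math
from dataclasses import dataclass

# Allowed values taken from the Model 335 option list.
CPU_SPEEDS_GHZ = {2.4, 2.6, 2.8, 3.0}
MEMORY_GB = {0.5, 1, 2, 4, 8}
IDE_SIZES_GB = {40, 60, 80, 120}
SCSI_SIZES_GB = {18, 36, 72, 146}


@dataclass
class Node335Config:
    """Hypothetical description of one Model 335 node's options."""
    cpus: int
    cpu_ghz: float
    memory_gb: float
    disk_type: str            # "ide" or "scsi"; a node cannot mix the two
    disk_sizes_gb: list       # one entry per installed disk
    myrinet_adapters: int = 0


def validate_335(cfg: Node335Config) -> list:
    """Return a list of rule violations; an empty list means the config is valid."""
    errors = []
    if cfg.cpus not in (1, 2):
        errors.append("one or two processors required")
    if cfg.cpu_ghz not in CPU_SPEEDS_GHZ:
        errors.append(f"unsupported processor speed {cfg.cpu_ghz} GHz")
    if cfg.memory_gb not in MEMORY_GB:
        errors.append(f"unsupported memory size {cfg.memory_gb} GB")
    if not 1 <= len(cfg.disk_sizes_gb) <= 2:
        errors.append("one or two disks required")
    allowed = {"ide": IDE_SIZES_GB, "scsi": SCSI_SIZES_GB}.get(cfg.disk_type)
    if allowed is None:
        errors.append("disk type must be 'ide' or 'scsi' (no mixing)")
    elif any(size not in allowed for size in cfg.disk_sizes_gb):
        errors.append(f"unsupported {cfg.disk_type.upper()} disk size")
    if cfg.myrinet_adapters > 1:
        errors.append("at most one Myrinet adapter per node")
    return errors


def min_rsa_count(num_335_nodes: int) -> int:
    """At least one in every sixteen Model 335 nodes must carry an RSA."""
    return math.ceil(num_335_nodes / 16)


cfg = Node335Config(cpus=2, cpu_ghz=3.0, memory_gb=4, disk_type="scsi", disk_sizes_gb=[36, 36])
print(validate_335(cfg))    # []
print(min_rsa_count(128))   # 8
```

Returning a list of violations, rather than stopping at the first error, lets a planner report every problem in a proposed node configuration at once.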

Model 360 nodes

The Model 360 is a 3U server with one, two, or four Intel Xeon MP processors running at up to 2.8 GHz each. The x360 supports up to 16 GB of PC1600 DDR SDRAM memory and has a front-side bus of up to 400 MHz. The system has an integrated Ultra160 SCSI interface and three hot-swappable disk drive bays that hold up to 220.2 GB. The x360 also has six PCI-based expansion slots, and a large range of add-on cards is currently offered for it. The Model 360 is designed to pack as much processing power as possible into 3U of rack space. The x360 is shown in Figure 2-4.

Figure 2-4: Model 360 for cluster (storage) nodes

Model HS20 nodes

The Model HS20 is a blade server (shown in the left-hand portion of Figure 2-5 on page 27) that is housed inside the 7U BladeCenter chassis (shown in the right-hand portion of the same figure). The HS20 is a 1U vertically mounted blade server with one or two Intel Xeon DP processors at speeds up to 2.8 GHz. It supports up to 4 GB of PC2100 ECC DDR memory and has a 533 MHz front-side bus. The HS20 can house an Ultra320 SCSI card and two internal drives. It also has two integrated 10/100/1000 Ethernet adapters and a daughter-board-based expansion slot. The Model HS20 is compact enough that 14 blades fit into a single BladeCenter chassis.

Figure 2-5: Model HS20 for cluster (compute) nodes

2.2.3 Remote Supervisor Adapters

Each compute node has an Integrated Systems Management processor (also known as a service processor). It monitors node environmental conditions (such as fan speed, temperature, and voltages) and provides remote BIOS console access, power management, and event reporting.

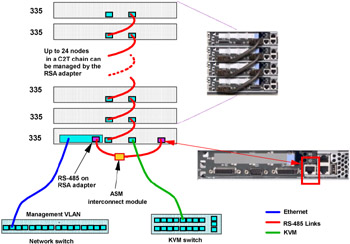

As shown in Figure 2-6 on page 29, remote access to the service processors is provided through an RS-485 bus carried over the Cable Chain Technology (C2T chain) connection. One node in each chain (usually the first) is fitted with a Remote Supervisor Adapter (RSA). This PCI card is externally powered, features an on-board processor, and is connected to the management VLAN via its Ethernet port. The card allows remote control (power, BIOS console, and so on) and event reporting (fan failures, temperature alarms, and so on) of connected nodes over the management VLAN. Because the card has its own external power supply, this works even when the node in which it resides has no power. Each RSA can manage up to 24 x335- or x345-based compute nodes.

Figure 2-6: Management processor network

Note: Here are a couple of things to keep in mind when you are dealing with RSA / C2T.

2.2.4 External storage

The IBM Cluster 1350 may be configured with IBM FAStT200 or FAStT700 Fibre Channel storage subsystems.

The FAStT200 controller supports up to 60 Fibre Channel drives, which are available in 18, 36, 72, and 146 GB sizes (speeds vary from 10K to 15K rpm). This gives a total maximum storage capacity of approximately 8.7 TB. The storage can be configured as RAID 0, 1, 3, 5, or 10 with one or two Fibre Channel RAID controllers.

The FAStT700 controller supports the new FC2 standard, allowing a 2 Gb end-to-end solution. It may be connected to up to 224 Fibre drives, giving a maximum total storage capacity of approximately 32 TB. It comes with two RAID controllers as standard that support RAID 0, 1, 3, 5, or 10.

-

Supports up to 8 EXP700 expansion enclosures on each of its two FC loops

-

Up to 112 disk drives can be used in each loop (that is, 224 drives across both loops)

-

Supports up to 11 EXP500 expansion enclosures on each of its two FC loops

-

Up to 110 disk drives can be used in each loop (that is, 220 drives across both loops)
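The capacity figures quoted above come from simple multiplication using the largest (146 GB) drive. The Python sketch below reproduces them and derives the number of drives per enclosure implied by the loop figures. The function names are hypothetical. Capacities are raw decimal terabytes before RAID overhead. The RAID formulas are the standard ones for a single array, not anything specific to FAStT firmware.

```python
LARGEST_DRIVE_GB = 146  # largest drive size listed for these subsystems


def raw_capacity_tb(num_drives: int, drive_gb: int = LARGEST_DRIVE_GB) -> float:
    """Raw (pre-RAID) capacity in decimal terabytes."""
    return num_drives * drive_gb / 1000


def drives_per_enclosure(drives_per_loop: int, enclosures_per_loop: int) -> int:
    """Enclosure size implied by the per-loop drive and enclosure limits."""
    return drives_per_loop // enclosures_per_loop


def usable_gb(raid_level: str, drives: int, drive_gb: int) -> float:
    """Usable capacity of one array, using the standard RAID formulas."""
    if raid_level == "0":
        return drives * drive_gb              # striping only, no redundancy
    if raid_level in ("1", "10"):
        return drives * drive_gb / 2          # every block is mirrored
    if raid_level in ("3", "5"):
        return (drives - 1) * drive_gb        # one drive's worth of parity
    raise ValueError(f"unsupported RAID level {raid_level}")


print(raw_capacity_tb(60))             # FAStT200, 60 drives: 8.76
print(raw_capacity_tb(2 * 112))        # FAStT700, two loops of EXP700: 32.704
print(drives_per_enclosure(112, 8))    # EXP700: 14 drives per enclosure
print(drives_per_enclosure(110, 11))   # EXP500: 10 drives per enclosure
print(usable_gb("5", 5, 146))          # 5-drive RAID 5 array: 584
```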

Chapter 4, "Introducing General Parallel File System for Linux" on page 75 provides more information about the IBM TotalStorage® FAStT Storage Servers.

2.2.5 Networking

Depending on the needs of the cluster application(s), the IBM Cluster 1350 provides flexibility in the networking options that may be configured.

Cluster network

All the nodes in a Cluster 1350 must be connected together on a common network, often referred to as the cluster network. The management software uses this network to administer and monitor the nodes and to perform network installations. If there is no separate IPC network, the cluster network also carries IPC traffic. The cluster network supports Ethernet connection speeds of 10, 100, or 1000 Mbit; typically, the speed is chosen to match the size of the cluster.

If 100 Mbit Ethernet is used for the cluster network, one 10/100/1000 switch is installed at the top of each rack. If the cluster consists of multiple racks, these 10/100/1000 switches are connected together via a small gigabit switch, usually located in the primary rack.

Typically, the management node connects to the cluster network via gigabit Ethernet, either to the backbone switch or directly to a single 10/100/1000 switch. This higher bandwidth connection allows parallel network installation of a large number of nodes.
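A back-of-the-envelope calculation shows the benefit of the gigabit uplink. This Python sketch assumes an idealized network in which the management node's uplink and the per-node links are the only limits. Each link is assumed to run at its nominal rate, with no protocol overhead, disk bottleneck, or multicast. The 1000 MB image size is a hypothetical example, not a figure from the Cluster 1350 documentation.

```python
def nodes_at_line_rate(uplink_mbit: float, node_link_mbit: float) -> int:
    """How many node links the management node's uplink can fill at once."""
    return int(uplink_mbit // node_link_mbit)


def install_time_s(num_nodes: int, image_mb: float,
                   uplink_mbit: float, node_link_mbit: float) -> float:
    """Idealized time to push one image to every node in parallel.

    Each node receives at most node_link_mbit, and all nodes share the uplink
    equally. Protocol overhead, disk speed, and multicast are ignored.
    """
    per_node_mbit = min(node_link_mbit, uplink_mbit / num_nodes)
    return image_mb * 8 / per_node_mbit


print(nodes_at_line_rate(1000, 100))          # 10
print(install_time_s(64, 1000, 1000, 100))    # 512.0 s with a gigabit uplink
print(install_time_s(64, 1000, 100, 100))     # 5120.0 s with a 100 Mbit uplink
```

Under these assumptions, the gigabit uplink can keep ten 100 Mbit node links busy at once, and installation completes ten times faster than with a 100 Mbit uplink.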

Management network

All the management hardware (terminal servers, RSAs, and so on) is connected together via a separate management network, usually just a separate VLAN on the same switch as the cluster network. This second network is used because the management hardware relies on simple plain-text passwords or no authentication at all. Isolating this network from all but the management node minimizes the risk of unauthorized access.

Gigabit Ethernet

If a faster connection between the nodes in the cluster is desired, Gigabit Ethernet can be used.

Because the x335s and x345s have two on-board copper 10/100/1000 Mbit ports, there are two options. The 10/100/1000 cluster network can be reconfigured as an entirely switched gigabit network, or a separate gigabit Interprocess Communication (IPC) network can be added. A separate IPC network is preferred when the cluster will run network-intensive jobs. An overloaded cluster network can interfere with management functions, for example, by causing the Cluster Systems Management software to erroneously report nodes as "down".

Myrinet

Parallel computing jobs often require the ability to transfer high volumes of data among the participating nodes without delay. The Myrinet network from Myricom has been designed specifically for this kind of high-speed and low-latency requirement.

Myrinet 2000 usually runs over Fibre connections, providing speeds of 2 Gbit/s full-duplex and delivering application latency as low as 10 microseconds. Switches consist of a number of "line cards" or blades in a chassis; blades may be moved into a larger chassis if expansion beyond the current capacity is required. The largest single chassis supports up to 128 connections, but multiple switches may be linked together for larger clusters.
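Both latency and bandwidth matter because the time to deliver a message is roughly a fixed start-up latency plus the message size divided by the bandwidth. This is the simple latency-bandwidth (Hockney) model. The Python sketch below plugs in the Myrinet 2000 figures quoted above: 10 microseconds and 2 Gbit/s in each direction. It ignores protocol overhead and contention, so real transfer times will be somewhat higher.

```python
LATENCY_S = 10e-6          # application latency quoted for Myrinet 2000
BANDWIDTH_BIT_S = 2e9      # 2 Gbit/s in each direction (full-duplex)


def transfer_time_s(message_bytes: int) -> float:
    """Latency-bandwidth estimate: t = latency + size / bandwidth."""
    return LATENCY_S + message_bytes * 8 / BANDWIDTH_BIT_S


for size in (64, 4096, 1_000_000):
    t = transfer_time_s(size)
    latency_share = LATENCY_S / t
    print(f"{size:>9} bytes: {t * 1e6:8.1f} us ({latency_share:.0%} of it latency)")
```

For a 64-byte message, the latency term is over 95% of the estimated total. For a 1 MB message, the bandwidth term dominates. This is why jobs that exchange many small messages benefit most from low latency.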

A Myrinet network is optional in the IBM Cluster 1350, because the need for this specialist network depends on the application(s) running on the cluster. It is most commonly required where highly network-intensive applications are used, although the high network throughput offered by Myrinet is also desirable for network file servers, including GPFS.

2.2.6 Terminal servers

The IBM Cluster 1350 includes one or more terminal servers. The supported models are the Equinox ESP-16 and the MRV In-Reach 8000 series (IR-8020-10120 and IR-8020-10140).

Each Equinox Ethernet Serial Provider (ESP) box adds 16 serial ports (ttys) to a server. Each port can be used to access a node as if the node were connected to a local serial port. Multiple ESP boxes can be used to extend serial port capacity to match the total number of nodes.

The MRV boxes provide a simple IP based connection to the serial port. Each physical port on the box (there are 20 on the 10120 and 40 on the 10140) has a TCP port assigned to it. Connecting to that TCP port is equivalent to accessing the corresponding serial port.

Either of these products is used in the cluster to give an administrator full access to the nodes as if each one had a serially-connected display, even when she or he is not physically at the cluster. This is particularly useful when the cluster is situated in a different room and almost essential when the cluster is located off-site.

Through a telnet or secure shell (SSH) connection to the management node, an administrator can use the terminal server to gain console access to any node, whether or not its network card is active. This is useful when the system has been accidentally misconfigured and for diagnosing and correcting boot-time errors.

The terminal server can also be used to monitor the installation of each cluster node. This allows the administrator to confirm the installation is progressing and perform problem determination in the event of installation problems.
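When planning console access, each node needs to be assigned a terminal server and a physical port on it. The Python sketch below spreads nodes across terminal servers using the port counts given above: 16 for the ESP-16, 20 for the IR-8020-10120, and 40 for the IR-8020-10140. Two details are assumptions. First, physical ports are assumed to be numbered from 1. Second, the `TCP_BASE` offset used to derive an MRV TCP port number is a hypothetical placeholder; the actual numbering scheme must be taken from the terminal server's own documentation. The function names are also hypothetical.

```python
import math

PORTS_PER_BOX = {"ESP-16": 16, "IR-8020-10120": 20, "IR-8020-10140": 40}
TCP_BASE = 2000  # HYPOTHETICAL: TCP port = TCP_BASE + physical port; check the MRV manual


def boxes_needed(num_nodes: int, model: str) -> int:
    """Number of terminal servers needed to give every node a serial port."""
    return math.ceil(num_nodes / PORTS_PER_BOX[model])


def console_map(nodes: list, model: str) -> dict:
    """Map each node to (box index, physical port, TCP port or None).

    Only the MRV boxes expose serial ports as TCP ports; for the ESP-16 the
    TCP entry is None.
    """
    ports = PORTS_PER_BOX[model]
    is_mrv = model.startswith("IR-")
    mapping = {}
    for index, node in enumerate(nodes):
        box, offset = divmod(index, ports)
        physical_port = offset + 1                     # assumed: ports numbered from 1
        tcp_port = TCP_BASE + physical_port if is_mrv else None
        mapping[node] = (box, physical_port, tcp_port)
    return mapping


nodes = [f"node{n:03d}" for n in range(1, 65)]
print(boxes_needed(len(nodes), "ESP-16"))                # 4
print(boxes_needed(len(nodes), "IR-8020-10140"))         # 2
print(console_map(nodes, "IR-8020-10140")["node041"])    # (1, 1, 2001)
```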

2.2.7 Hardware console: Keyboard, video, and mouse (KVM)

Traditional PCs require a monitor, keyboard and mouse for local operations; however, purchasing one for each node in a cluster would be very expensive, especially considering there will be virtually no local interaction! The Cluster 1350 allows a single monitor and keyboard to be attached to the entire cluster using a combination of the following:

-

C2T technology

x335 nodes include a unique IBM feature known as Cable Chain Technology or C2T. With C2T, a number of short jumper cables "daisy-chain" the KVM signals through the nodes. A different cable, used on the bottom node in the rack, breaks out to standard keyboard, monitor, and mouse connectors. The single physical monitor, keyboard, and mouse are then logically attached to a single node by pressing a button on the front of that node.

-

KVM switch

Multiple x335 chains and any x345 nodes are connected to a single keyboard, monitor, and mouse via a KVM switch, located in the primary rack. A KVM switch is connected to a single monitor, keyboard, and mouse, but has connectors that accept a number of standard PC keyboard, video, and mouse inputs. By pressing a hot key (Print Screen), the user may select which of the inputs the physical devices access.

A standard IBM switch has eight ports, each of which may be connected to a rack of x335 nodes or to an individual x345. Multiple switches may be connected together for particularly large systems.
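The number of KVM switch ports follows from the rule that each port serves either one rack of x335 nodes (through its C2T chain) or one individual x345. The Python sketch below counts ports and switches for the standard eight-port switch. It deliberately ignores any ports consumed when switches are cascaded, so treat the result as a lower bound. The function names are hypothetical.

```python
import math

KVM_PORTS_PER_SWITCH = 8  # standard IBM switch, as described above


def kvm_ports_needed(racks_of_x335: int, num_x345: int) -> int:
    """One port per rack of x335s (C2T chain) plus one port per x345 node."""
    return racks_of_x335 + num_x345


def kvm_switches_needed(racks_of_x335: int, num_x345: int) -> int:
    """Lower bound: ports used to cascade switches together are not counted."""
    return math.ceil(kvm_ports_needed(racks_of_x335, num_x345) / KVM_PORTS_PER_SWITCH)


# Four racks of x335 compute nodes, plus x345 management and storage nodes.
print(kvm_switches_needed(4, 3))   # 1 (7 ports)
print(kvm_switches_needed(4, 6))   # 2 (10 ports)
```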

Monitor and keyboard

The single physical console is installed in the management rack along with the KVM switch(es). A special 2U drawer houses a flat-panel monitor and a "space saver" keyboard that features a built-in TrackPoint mouse, like the one found on IBM ThinkPads.
