A General Surveillance Game

We now take these lessons and use them to construct a more general model. We begin with what we take to be obvious: employees will not react to all surveillance in the same way. As an employee of a large institution, for example, I react very differently to surveillance cameras in our parking lots and to surveillance software on our computer networks. I approve of and cooperate with the former but perhaps not the latter. We would like our model to capture this complexity and to provide some ethical guidance in navigating it.

From the perspective of strategic interaction, we can immediately distinguish two quite different contexts in which workplace surveillance might be used: welcome and adverse.

- Welcome surveillance. Video conferencing is an example of welcome surveillance. When I use video conferencing software to communicate better, I want you to see me as much as I want to see you. This is a simple coordination problem in which we both want the same outcome. A more complex example is video surveillance of parking lots, which protects a core shared value, physical safety, but also threatens privacy and may be used to enforce less widely valued workplace rules (e.g., against smoking). Similarly, telephone monitoring protects employees by deterring harassment and unfair customer complaints about service, but it comes at a risk to employees' privacy.

- Adverse surveillance. The Panopticon Game in Figure 1 exemplifies this category. Turning to a workplace example, when installing a nanny-cam or e-mail monitoring software, the employer hopes to view or deter behavior that the other party does not want observed. Because the two sides want opposite outcomes, their interaction is purely conflictual. The lesson learned from the game-theoretic analysis of our first model is to look not merely at strategies and preferences, but ahead to the likely equilibria of the game or social situation. In games of pure conflict, we expect randomized behavior (mixed strategies) and deception, such as dummy cameras and bluffs about the extent of network monitoring.
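To see why pure conflict pushes both sides toward randomization, consider a minimal Python sketch of a hypothetical 2x2 inspection game between Employer (Monitor or Not) and Employee (Comply or Evade). The payoff numbers and the function name `solve_2x2_zero_sum` are our own illustrative assumptions, not the payoffs of Figure 1. Payoffs are to the Employer, and the Employee receives their negative. Each side's equilibrium probability is the one that leaves the other side indifferent between its two options.

```python
from fractions import Fraction


def solve_2x2_zero_sum(a, b, c, d):
    """Mixed-strategy equilibrium of a 2x2 zero-sum game (illustrative sketch).

    Payoffs to the row player (Employer) are laid out as
        [[a, b],   # row 1: Monitor      (col 1: Comply, col 2: Evade)
         [c, d]]   # row 2: Not monitor
    The column player (Employee) receives the negative of each entry.
    Returns (p, q, value): p = Pr(Employer monitors),
    q = Pr(Employee complies), value = Employer's expected payoff.
    """
    a, b, c, d = (Fraction(x) for x in (a, b, c, d))
    maximin = max(min(a, b), min(c, d))
    minimax = min(max(a, c), max(b, d))
    if maximin == minimax:
        # A saddle point means a pure strategy is optimal; no randomizing needed.
        raise ValueError("game has a pure-strategy saddle point")
    denom = a - b - c + d
    p = (d - c) / denom  # leaves the Employee indifferent between Comply and Evade
    q = (d - b) / denom  # leaves the Employer indifferent between Monitor and Not
    value = (a * d - b * c) / denom
    return p, q, value


# Hypothetical payoffs to the Employer:
# Monitor/Comply -1 (wasted effort), Monitor/Evade 2 (caught),
# Not/Comply 0, Not/Evade -2 (undetected evasion).
p, q, value = solve_2x2_zero_sum(-1, 2, 0, -2)
print(p, q, value)  # 2/5 4/5 -2/5
```

With these assumed numbers the Employer monitors 2/5 of the time and the Employee complies 4/5 of the time. Any predictable pure strategy could be exploited by the other side, which is why bluffs and dummy cameras fit naturally into this kind of game.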

Next, we identify two complicating elements: dynamic countermeasures and mixed populations.

Dynamic Countermeasures

Since adverse surveillance is a competitive situation, not a game with fixed rules, we should expect countermeasures. These add a dynamic element to the situation. For example, if e-mail surveillance is resented and resisted, we may find employees adding trigger terms to innocuous e-mail as a tactic to overload the software. Just how elaborate these adverse arms races can become is seen in the current non-employment examples of unwanted commercial e-mail (spam) and computer viruses. In both cases, automated surveillance software is the primary remedy, but as the intruders continue to develop their techniques, the problems remain unsolved and become increasingly expensive.

Mixed Populations

In many cases, different agents will react differently to the same situation. For example, one employee may welcome e-mail and Web filtering that removes spam and blocks access to non-work-related sites, while another may find surveillance in this situation adverse. Or, at a more sophisticated level, if I expect the system to work against my interests or to treat me unfairly, I might refuse to cooperate with it.

An Assurance Game

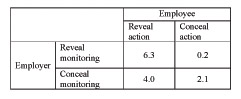

Figure 2: A general surveillance game
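Because the payoffs of Figure 2 are not reproduced here, the following minimal Python sketch uses hypothetical payoffs chosen only to give an assurance-game structure, and checks every strategy pair for a profitable unilateral deviation. The strategy labels (Employer: N = do not employ surveillance, E = employ it; Employee: W = work cooperatively, S = shirk or resist) and all payoff numbers are our own assumptions.

```python
from itertools import product

# Hypothetical assurance-game payoffs: (Employer, Employee).
EMPLOYER = ("N", "E")  # N: do not employ surveillance, E: employ it
EMPLOYEE = ("W", "S")  # W: work cooperatively, S: shirk or resist
PAYOFFS = {
    ("N", "W"): (4, 4),  # mutual cooperation is best for both
    ("N", "S"): (0, 3),  # unmonitored shirking hurts the Employer
    ("E", "W"): (3, 1),  # surveilling a cooperator costs both sides
    ("E", "S"): (1, 2),  # mutual distrust
}


def pure_nash_equilibria(payoffs, rows, cols):
    """Return every (row, col) pair where neither player gains by deviating alone."""
    equilibria = []
    for r, c in product(rows, cols):
        u_row, u_col = payoffs[(r, c)]
        row_ok = all(payoffs[(r2, c)][0] <= u_row for r2 in rows)
        col_ok = all(payoffs[(r, c2)][1] <= u_col for c2 in cols)
        if row_ok and col_ok:
            equilibria.append((r, c))
    return equilibria


print(pure_nash_equilibria(PAYOFFS, EMPLOYER, EMPLOYEE))
# [('N', 'W'), ('E', 'S')]
```

With these numbers the search finds two pure-strategy equilibria, one cooperative and one non-cooperative; the payoffs alone do not say which one the players will reach.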

Lessons from the Model

We can draw some lessons from this analysis of a workplace surveillance situation. First, in our model of the general surveillance game, our expectations of the other player's behavior determine which equilibrium we end up with. This suggests that it would be a mistake to see the technology, by itself, as determining outcomes. This is clearest if we consider the first step in the introduction of workplace surveillance, where the cooperative equilibrium might be "do not employ." Surveillance technology does not determine the choice to use it. Deployment is rational only if one expects the other party to choose non-cooperatively. This point generalizes to the choice between more and less cooperative surveillance technologies.
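The claim that deployment is rational only if one expects non-cooperation can be sketched as a best-response calculation. The Python below assumes hypothetical employer payoffs with an assurance-game structure (the labels N/E and W/S and all numbers are our own), and represents the employer's expectation as a probability q that the employee works cooperatively.

```python
from fractions import Fraction

# Hypothetical Employer payoffs, keyed by (employer_move, employee_move).
# N: do not employ surveillance, E: employ it; W: work, S: shirk or resist.
U_EMPLOYER = {("N", "W"): 4, ("N", "S"): 0, ("E", "W"): 3, ("E", "S"): 1}


def expected_payoff(move, q):
    """Employer's expected payoff if the Employee plays W with probability q."""
    return q * U_EMPLOYER[(move, "W")] + (1 - q) * U_EMPLOYER[(move, "S")]


def best_response(q):
    """Employ surveillance only when it strictly beats not employing it."""
    return "E" if expected_payoff("E", q) > expected_payoff("N", q) else "N"


def threshold():
    """Belief q at which the Employer is indifferent between N and E."""
    a, b = U_EMPLOYER[("N", "W")], U_EMPLOYER[("N", "S")]
    c, d = U_EMPLOYER[("E", "W")], U_EMPLOYER[("E", "S")]
    return Fraction(d - b, (a - c) + (d - b))


for q in (Fraction(1, 4), Fraction(3, 4)):
    print(q, best_response(q))  # 1/4 E, then 3/4 N
print("threshold:", threshold())  # threshold: 1/2
```

Under these assumptions the employer's choice flips at q = 1/2. The technology and the payoffs are the same on both sides of the threshold; only the expectation about the other player changes. Ties are resolved in favor of not employing surveillance, which is a further assumption of the sketch.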

Second, in a situation with two equilibria, one should treat neither everyone as a problem nor everyone as a well-intentioned cooperator. Varied populations and different reactions to different contexts are to be expected. It is particularly important not to overlook ways to reinforce the fragile but socially important cooperative (NW) equilibrium.

Third, the cost of a technology with an adversarial aspect will tend to be underestimated for several reasons:

- Countermeasures may not be taken into account. This is especially relevant in the case of workplace surveillance, because the time and effort put into these countermeasures will largely be taken away from organizational goals. That is, even if the employer wins the battle against, say, devious Web-browsing techniques, the employer will have paid for the time and effort of both sides in this arms race.

- This tendency to underestimate costs becomes more important as we move from a simple to a more realistic model. We need to consider the additional complexity introduced by additional observers (third parties) of the surveillance data. This complicates some of the promised benefits of the technology. For example, surveillance may deter some instances of bribery and sexual harassment, but the instances that do occur are now known to additional agents, creating new opportunities for loss of privacy or even extortion. Putting the point most generally, surveillance confirms the centrality of principal-agent problems in organizational ethics (Buchanan, 1996). As we elaborate our simple model, we are forced to unpack technological black boxes to reveal the agents needed to staff them. Each of these agents must be motivated to do what is required, which raises new strategic issues.

- In addition, obtaining information often has unexpected costs due to the strategic element. For example, if others rely on surveillance, acquiring information creates new responsibilities toward them. Green (1999) discusses the case where third parties rely on surveillance cameras to bring them assistance. Another example is harassment, which ceases to be a private matter once it is known to management. New information may also be unexpected in a stronger sense, surprising the organization and straining established institutional procedures. For example, a device installed to deter an expected class of activity may catch evidence of completely unsuspected illicit activity.
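The first reason above, that countermeasures are left out of the accounting, can be made explicit with simple arithmetic. The Python sketch below is illustrative only: the cost categories, the class name `SurveillanceCosts`, the figures, and the simplification that both sides are paid the same hourly wage are all hypothetical assumptions.

```python
from dataclasses import dataclass


@dataclass
class SurveillanceCosts:
    """Hypothetical annual costs of a surveillance deployment."""
    system_cost: float      # licences, hardware, maintenance
    admin_hours: float      # routine operation by employer staff
    arms_race_hours: float  # employer staff time spent countering evasion
    evasion_hours: float    # paid employee time spent on countermeasures
    hourly_wage: float      # assumed identical for both sides

    def naive_estimate(self) -> float:
        # Counts only the system and its routine operation.
        return self.system_cost + self.admin_hours * self.hourly_wage

    def full_estimate(self) -> float:
        # The employer also pays for both sides of the arms race.
        arms_race = (self.arms_race_hours + self.evasion_hours) * self.hourly_wage
        return self.naive_estimate() + arms_race


costs = SurveillanceCosts(system_cost=10_000, admin_hours=200,
                          arms_race_hours=300, evasion_hours=500, hourly_wage=40)
print(costs.naive_estimate(), costs.full_estimate())  # 18000 50000
```

With these invented figures, the naive estimate of 18,000 rises to 50,000 once the paid hours both sides spend on the arms race are counted, since the employer funds the evasion as well as the response to it.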

Finally, we emphasize that our model is exploratory and open to criticism and refinement as we proceed. For example, while the model treats Employer and Employee as occupying unequal roles, much more needs to be said about the difference this makes for questions such as leadership. It is obviously much more difficult for an employee to move an organization to a cooperative equilibrium than it is for an employer. More generally, our model is of the simplest kind: a two-player game between an Employer and an Employee. A more realistic account would need to look at populations of agents, for instance where an employer must deal with employees who use different strategies. Danielson (2002b) discusses some of these more complex relationships.
