Configuration Verification and Audit

OVERVIEW

A variety of things can go wrong with the CM (configuration management) process. Brown et al. [1999] list a set of "antipatterns," that is, commonly repeated flawed practices:

- Reliance on a software configuration tool to implement an SCM program.

- The CM manager becomes a controlling force beyond his or her planned role. This leads to the CM manager dictating the delivery sequence and dominating all other processes.

- Delegating CM functions to whoever happens to be available. Project managers, often strapped for resources, frequently parcel out the CM function to developers. CM really needs to be a process that stands apart from development. Brown et al. argue that a developer's role compromises the role of software configuration manager, because the developer's primary responsibility is the development of software. Developers regard the software as the "product." From a CM perspective, the product is not just the software, but also the documentation.

- Use of decentralized repositories. The key behind CM is shared information. This requires a shared repository. Many organizations utilize a decentralized mode of operation. Decentralization negates shared information.

- Object-oriented development poses granularity problems. CM must happen at a detailed level of the interaction of a few objects and at a higher level where component interfaces are deployed.

The configuration verification and audit process includes:

- Configuration verification of the initial configuration of a CI, and the incorporation of approved engineering changes, to assure that the CI meets its required performance and documented configuration requirements

- Configuration audit of configuration verification records and the physical product to validate that a development program has achieved its performance requirements and that the configuration documentation for the system/CI being audited is consistent with the product that meets those requirements

The common objective is to establish a high level of confidence in the configuration documentation used as the basis for configuration control and support of the product throughout its life cycle. Configuration verification should be an embedded function of the process for creating and modifying the CI or CSCI.

As shown in Figure 8.1, inputs to the configuration verification and audit activity include:

- Configuration, status, and schedule information from status accounting

- Approved configuration documentation (which is a product of the configuration identification process)

- The results of testing and verification

- The physical hardware CI or software CSCI and its representation

- Manufacturing/build instructions and engineering tools, including the software engineering environment, used to develop, produce, test, and verify the product

Figure 8.1: Configuration Verification and Audit Activity Model

Successful completion of verification and audit activities results in a verified system/CI(s) and a documentation set that can be confidently considered a product baseline. It also results in a validated process to maintain the continuing consistency of product to documentation. Appendices V and W provide sample checklists for performing a functional configuration audit and a physical configuration audit, respectively.

CONFIGURATION VERIFICATION AND AUDIT CONCEPTS AND PRINCIPLES

There is a functional and a physical attribute to both configuration verification and configuration audit. Configuration verification is an ongoing process. The reward for effective release, baselining, and configuration/change verification is delivery of a known configuration that is consistent with its documentation and meets its performance requirements. These are precisely the attributes needed to satisfy the ISO 9000 series requirements for design verification and design validation as well as the ISO 10007 requirement for configuration audit.

Configuration Verification

Configuration verification is a process that is common to configuration management, systems engineering, design engineering, manufacturing, and quality assurance. It is the means by which a developer verifies his or her design solution.

The functional aspect of configuration verification encompasses all of the tests and demonstrations performed to meet the quality assurance sections of the applicable performance specifications. The tests include verification/qualification tests performed on a selected unit or units of the CI, and repetitive acceptance testing performed on each deliverable CI, or on a sampling from each lot of CIs, as applicable. The physical aspect of configuration verification establishes that the as-built configuration conforms to the as-designed configuration. The developer accomplishes this verification by physical inspection, process control, or a combination of the two.
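
The physical aspect described above amounts to comparing two configuration listings. The following is a minimal sketch of that comparison, not a method prescribed by the handbook; the Part structure, the part numbers, and the function name verify_as_built are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Part:
    """One entry in a configuration listing (hypothetical structure)."""
    part_number: str
    revision: str


def verify_as_built(as_designed: set, as_built: set) -> dict:
    """Return parts the design calls for but the build lacks, and vice versa."""
    return {
        "missing": as_designed - as_built,      # in the design, not in the build
        "unexpected": as_built - as_designed,   # in the build, not in the design
    }


# Illustrative data only: one part was built at a different revision.
designed = {Part("PN-100", "B"), Part("PN-200", "A")}
built = {Part("PN-100", "B"), Part("PN-200", "C")}

result = verify_as_built(designed, built)
conforms = not result["missing"] and not result["unexpected"]
```

Here the revision mismatch on PN-200 appears once under "missing" (revision A) and once under "unexpected" (revision C), so the as-built unit would not be judged to conform until the difference is resolved.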

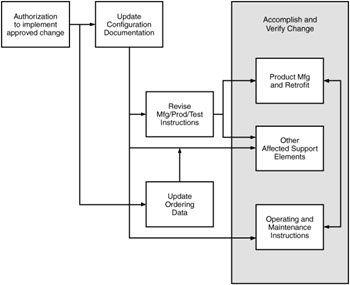

Once the initial configuration has been verified, approved changes to the configuration must also be verified. Figure 8.2 illustrates the elements in the process of implementing an approved change.

Figure 8.2: Change Implementation and Verification

Change verification may involve a detailed audit, a series of tests, a validation of operation, maintenance, installation, or modification instructions, or a simple inspection. The choice of the appropriate method depends on the nature of the CI, the complexity of the change, and the support commodities that the change impacts.

Configuration Audit

The dictionary definition of the word "audit" as a final accounting gives some insight into the value of conducting configuration audits. Configuration management is used to define and control the configuration baselines for the CIs and the system. In general, a performance specification is used to define the essential performance requirements and constraints that the CI must meet.

For complex systems and CIs, a "performance" audit is necessary to determine whether the CI actually meets those requirements and constraints. Also, because development of an item involves the generation of product documentation, it is prudent to ascertain that the documentation is an accurate representation of the design being delivered.

Configuration audits provide the framework, and the detailed requirements, for verifying that the development effort has successfully achieved all the requirements specified in the configuration baselines. If there are any problems, it is the auditing activity's responsibility to ensure that all action items are identified, addressed, and closed out before the design activity can be deemed to have successfully fulfilled the requirements.

There are three phases to the audit process, and each is very important. The pre-audit part of the process sets the schedule, agenda, facilities, and rules of conduct and identifies the participants for the audit. The actual audit itself is the second phase; and the third phase is the post-audit phase, in which diligent follow-up of the audit action items must take place. For complex products, the configuration audit process may be a series of sequential/parallel audits of various CIs conducted over a period of time to verify all relevant elements in the system product structure. Audit of a CI can include incremental audits of lower-level items to assess the degree of achievement of requirements defined in specifications/documentation.

Functional Configuration Audit

The functional configuration audit (FCA) is used to verify that the actual performance of the CI meets the requirements stated in its performance specification and to certify that the CI has met those requirements. For systems, the FCA is used to verify that the actual performance of the system meets the requirements stated in the system performance specification. In some cases, especially for very large, complex CIs and systems, the audits can be accomplished in increments. Each increment can address a specific functional area of the system/CI and will document any discrepancies found in the performance capabilities of that increment. After all the increments have been completed, a final (summary) FCA can be held to address the status of all the action items that have been identified by the incremental meetings and to document the status of the FCA for the system or CI in the minutes and certifications. In this way, the audit is effectively accomplished with minimal complications.
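
The roll-up performed at the final (summary) FCA can be pictured as a simple aggregation over the incremental audits. The sketch below is illustrative only; the functional areas, action item identifiers, status strings, and the function name summarize_fca are assumptions, not terms defined by the handbook.

```python
from collections import Counter

# Hypothetical record of incremental FCAs: functional area -> action items,
# each action item given as (identifier, status).
increments = {
    "navigation": [("AI-1", "closed"), ("AI-2", "open")],
    "communications": [("AI-3", "closed")],
}


def summarize_fca(increments):
    """Tally action item statuses and list every item that is not yet closed."""
    status_counts = Counter()
    open_items = []
    for area, items in increments.items():
        for item_id, status in items:
            status_counts[status] += 1
            if status != "closed":
                open_items.append((area, item_id))
    return status_counts, open_items


status_counts, open_items = summarize_fca(increments)
# The summary FCA could be certified only when no action items remain open.
fca_ready_to_certify = not open_items
```

Keeping the functional area with each open item lets the summary meeting route it back to the increment that raised it.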

Physical Configuration Audit

The physical configuration audit (PCA) is used to examine the actual configuration of the CI that is representative of the product configuration in order to verify that the related design documentation matches the design of the deliverable CI. It is also used to validate many of the supporting processes that were used in the production of the CI. The PCA is also used to verify that any elements of the CI that were redesigned after the completion of the FCA also meet the requirements of the CI's performance specification.

Application of Audits During Life Cycle

It is extremely unlikely that FCAs or PCAs will be accomplished during the Concept Exploration and Definition phase or the Program Definition and Risk Reduction phase of the life cycle. Audits are intended to address the acceptability of a final, production-ready design and that is hardly the case for any design developed this early in the life cycle.

It is during the Engineering and Manufacturing Development (EMD) phase that the final, production, operationally ready design is developed. Thus, this phase is normally the focus for the auditing activity. A PCA will be performed for each HW CI that has completed the FCA process to "lock down" the detail design by establishing a product baseline. Hardware CIs built during this phase are sometimes "pre-production prototypes" and are not necessarily representative of the production hardware. Therefore, it is very common for the PCAs to be delayed until early in the Production phase of the program.

Requirements to accomplish FCAs for systems and CIs are included in the Statement of Work (SOW) tasking. The FCA is accomplished to verify that the requirements in the system and CI performance specifications have been achieved in the design. It does not focus on the results of the operational testing that is often accomplished by operational testing organizations in the services, although some of the findings from the operational testing may highlight performance requirements in the baselined specification that have not been achieved. Deficiencies in performance capability, as defined in the baselined specification, result in FCA action items requiring correction without a change to the contract. Deficiencies in the operational capability, as defined in user-prepared need documents, usually result in Engineering Change Proposals (ECPs) to incorporate revised requirements into the baselined specifications or to fund the development of new or revised designs to achieve the operational capability.

Because the final tested software design verified at the FCA normally becomes the production design, the PCAs for CSCIs are normally included as a part of the SOW tasking for the EMD phase. CSCI FCAs and PCAs can be conducted simultaneously to conserve resources and to shorten schedules.

During a PCA, the deliverable item (hardware or software) is compared to the product configuration documentation to ensure that the documentation matches the design. This ensures that the exact design that will require support is documented. The intent is that an exact record of the configuration will be maintained as various repair and modification actions are completed. The basic goal is sometimes compromised in the actual operation and maintenance environment. Expediency, unauthorized changes, cannibalization, overwork, failure to complete paperwork, and carelessness can cause the record of the configuration of operational software or hardware to become inaccurate. In some situations, a unit cannot be maintained or modified until its configuration is determined. In these kinds of circumstances, it is often necessary to inspect the unit against approved product configuration documentation, as in a PCA, to determine where differences exist. Then the unit can be brought back into conformance with the documentation, or the records corrected to reflect the actual unit configuration.
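
The inspection and the two resolutions described above (restore the unit, or correct the records) can be sketched as follows. This is a minimal illustration under assumed data: the item names, revision strings, and both function names are hypothetical.

```python
def find_differences(documented: dict, inspected: dict) -> dict:
    """Map each differing item to (documented revision, inspected revision).

    None marks an item that is absent from one side.
    """
    keys = documented.keys() | inspected.keys()
    return {
        key: (documented.get(key), inspected.get(key))
        for key in sorted(keys)
        if documented.get(key) != inspected.get(key)
    }


def correct_records(inspected: dict) -> dict:
    """Second resolution: rewrite the record to reflect the actual unit."""
    return dict(inspected)


# Illustrative data: approved documentation versus the inspected unit.
documented = {"controller-firmware": "2.1", "power-supply": "A"}
inspected = {"controller-firmware": "2.3", "power-supply": "A", "cooling-fan": "B"}

differences = find_differences(documented, inspected)

# First resolution: the differences become the rework list for bringing the
# unit back into conformance with the documentation.
rework_list = list(differences)

# Second resolution: accept the unit as found and update the records instead.
updated_record = correct_records(inspected)
```

Which resolution applies is a configuration control decision; the comparison only identifies where the unit and its records disagree.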

As discussed, configuration audits address two major concerns:

- The ability of the developed design to meet the specified performance requirements (the FCA addresses this concern)

- The accuracy of the documentation reflecting the production design (the PCA addresses this concern)

Audit checklists are provided in Table 8.1.

- Audit Planning Checklist

- Audit Agenda Checklist

- Audit Teams Checklist

- Conducting Configuration Audits Introductory Briefings Checklist

- Conduct Reviews. Prepare Audit Findings (problem write-ups) Checklist: Sub-teams facilitate the conduct of the audit by enabling parallel effort; auditors are assigned to work in their area of expertise.

- Problem Write-up Checklist

- Disposition Audit Findings Checklist

- Documenting Audit Results Checklist

SUMMARY

Testing is a critical component of software engineering. It is the final step taken prior to deploying the system. Configuration Verification and Audit organizes this process to ensure that the deployed system is as expected by the end users.

REFERENCES

This chapter is based on the following report: MIL-HDBK-61A(SE), February 7, 2001, Military Handbook: Configuration Management Guidance.

[Brown 1999] Brown, William, Hays McCormick, and Scott Thomas, AntiPatterns and Patterns in Software Configuration Management, John Wiley & Sons, New York, 1999.
