Reading and Writing XML

This first section of the chapter looks at how you can manipulate lexical XML (XML as a character or byte stream) from a Java application. You look first at the input side (parsing), then at output (serialization).

Because this is a bottom-up approach to the chapter, it risks giving too much prominence to these interfaces. Remember that these are low-level interfaces. They represent the foundation on which other things are built. You may find that you never have to use these interfaces directly because you can take advantage of the superstructure built on top of them. But it's worth knowing that they are there and that they offer things you can't achieve at a higher level of the stack.

Parsing from Java

Until recently, the only standard low-level interface for parsing from Java was the SAX interface. SAX is sufficiently important that the whole of Chapter 13 is devoted to it. This section starts with a quick overview of SAX and then moves on to a new contender in this area, the StAX interface.

SAX: Push Parsing

SAX is a push interface. The parser is in control: It reads the XML input stream, and when it finds something of interest like a start tag, an end tag, or a processing instruction, it notifies the application by calling an appropriate method. The application registers a ContentHandler with the parser; the ContentHandler implements methods such as startElement(), endElement(), characters(), and processingInstruction(), while comments are reported through a separate LexicalHandler interface with its own comment() method. The parser calls these methods when the relevant events occur.
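To make the callback style concrete, here is a minimal sketch using the SAX parser that ships with the JDK through JAXP. The class name SaxEventLog and the sample document are illustrative only. The handler extends DefaultHandler, which provides empty implementations of the ContentHandler methods, so only the events of interest need to be overridden.

```java
import java.io.StringReader;
import java.util.ArrayList;
import java.util.List;
import javax.xml.parsers.SAXParser;
import javax.xml.parsers.SAXParserFactory;
import org.xml.sax.Attributes;
import org.xml.sax.InputSource;
import org.xml.sax.helpers.DefaultHandler;

public class SaxEventLog {

    // Records each event the parser pushes at it.
    static class LoggingHandler extends DefaultHandler {
        final List<String> events = new ArrayList<>();

        @Override
        public void startElement(String uri, String localName, String qName, Attributes atts) {
            events.add("start " + qName);
        }

        @Override
        public void endElement(String uri, String localName, String qName) {
            events.add("end " + qName);
        }

        @Override
        public void characters(char[] ch, int start, int length) {
            // A parser may split one text node across several calls.
            events.add("text " + new String(ch, start, length));
        }

        @Override
        public void processingInstruction(String target, String data) {
            events.add("pi " + target);
        }
    }

    public static List<String> parse(String xml) throws Exception {
        SAXParser parser = SAXParserFactory.newInstance().newSAXParser();
        LoggingHandler handler = new LoggingHandler();
        // The parser drives: it calls back into the handler as it reads.
        parser.parse(new InputSource(new StringReader(xml)), handler);
        return handler.events;
    }

    public static void main(String[] args) throws Exception {
        parse("<invoice><?audit on?><total>42</total></invoice>").forEach(System.out::println);
    }
}
```

Note that the application never asks for the next event; it only reacts to whatever the parser delivers.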

That's a very cursory overview, of course, but you can afford to take a bird's eye view because all the detail is covered in Chapter 13.

Writing Java applications to use the SAX interface is traditionally considered to be rather difficult. The reasons for this include the following:

- Programmers like to be in control. It's difficult to write an application as a set of methods without knowing which method is going to be called next. Of course, that's exactly what you have to do when you write a GUI application that responds to events such as button clicks; but knowing that doesn't make it any easier.

- Closely related to this, it can be hard to keep track of the context. If an element representing, say, an invoice has a flexible structure, it can be hard to know what processing to perform as each event occurs, and where to put the logic, for example, that assigns a value to fields that were absent from the input.

SAX is, however, extremely efficient. The interface is designed to avoid unnecessary creation of objects, which is always an expensive operation in Java. If you're not careful you can throw away all these performance benefits at the application level, but that's a general characteristic of low-level interfaces.

One of the nice features of SAX is that it lends itself very well to the construction of pipelines. A pipeline consists of a sequence of processing stages, each of which takes XML as its input and produces XML as its output. In principle, the XML that passes from one stage to another could be represented any way you like: It could be a file of lexical XML or a DOM tree in memory, for example. It might seem like an abstract notion to represent an XML document as a sequence of events pushed from one stage of the pipeline to the next, and it certainly isn't one that comes naturally to many people. But it's actually a very effective way of doing it. Compared with passing lexical XML, you avoid the overhead of having one stage in the pipeline serialize the XML while the next stage parses it again. And compared with a DOM or other tree representation, you don't need to tie down memory; this becomes increasingly important as the size of your documents increases. (It's also important if you are processing many concurrent transactions.)

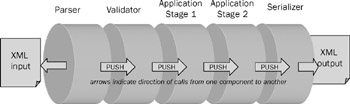

Figure 16-1 shows a typical push pipeline implemented using SAX. At the source of the pipeline is a SAX parser followed by a schema validation stage, followed by two application-level processing stages that manipulate the content before passing it to a SAX-based serializer that acts as the final destination. One of the advantages of such a pipeline is that the different stages don't all have to use the same technology: You can write SAX-based pipeline filters in XSLT or XQuery as well as in Java.

Figure 16-1
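As a minimal sketch of such a pipeline, the following wires a SAX parser to a filter stage and then to a JAXP identity TransformerHandler acting as the serializer. The filter (a hypothetical DropNotes class built on XMLFilterImpl, which removes any <note> element and its content) stands in for the application-level stages of Figure 16-1; the validation stage is omitted. No tree is built at any point: each event passes straight through.

```java
import java.io.StringReader;
import java.io.StringWriter;
import javax.xml.parsers.SAXParserFactory;
import javax.xml.transform.OutputKeys;
import javax.xml.transform.TransformerFactory;
import javax.xml.transform.sax.SAXTransformerFactory;
import javax.xml.transform.sax.TransformerHandler;
import javax.xml.transform.stream.StreamResult;
import org.xml.sax.Attributes;
import org.xml.sax.InputSource;
import org.xml.sax.SAXException;
import org.xml.sax.XMLReader;
import org.xml.sax.helpers.XMLFilterImpl;

public class SaxPipeline {

    // Hypothetical application stage: swallows every <note> element and its
    // content, passing all other events unchanged to the next stage.
    static class DropNotes extends XMLFilterImpl {
        private int noteDepth = 0; // greater than 0 while inside a <note>

        @Override
        public void startElement(String uri, String local, String qName, Attributes atts)
                throws SAXException {
            if (noteDepth > 0 || local.equals("note")) {
                noteDepth++;
            } else {
                super.startElement(uri, local, qName, atts);
            }
        }

        @Override
        public void endElement(String uri, String local, String qName) throws SAXException {
            if (noteDepth > 0) {
                noteDepth--;
            } else {
                super.endElement(uri, local, qName);
            }
        }

        @Override
        public void characters(char[] ch, int start, int length) throws SAXException {
            if (noteDepth == 0) {
                super.characters(ch, start, length);
            }
        }
    }

    public static String run(String xml) throws Exception {
        // Source stage: a namespace-aware SAX parser.
        SAXParserFactory pf = SAXParserFactory.newInstance();
        pf.setNamespaceAware(true);
        XMLReader parser = pf.newSAXParser().getXMLReader();

        // Destination stage: an identity TransformerHandler acting as serializer.
        SAXTransformerFactory tf = (SAXTransformerFactory) TransformerFactory.newInstance();
        TransformerHandler serializer = tf.newTransformerHandler();
        serializer.getTransformer().setOutputProperty(OutputKeys.OMIT_XML_DECLARATION, "yes");
        StringWriter out = new StringWriter();
        serializer.setResult(new StreamResult(out));

        // Wire the stages: parser -> filter -> serializer.
        DropNotes filter = new DropNotes();
        filter.setParent(parser);
        filter.setContentHandler(serializer);
        filter.parse(new InputSource(new StringReader(xml)));
        return out.toString();
    }

    public static void main(String[] args) throws Exception {
        System.out.println(run("<doc><p>keep</p><note>drop <b>me</b></note></doc>"));
    }
}
```

Adding another stage is just a matter of inserting another filter between the parser and the serializer.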

SAX parsers are widely available. The field has consolidated so that most people nowadays use Xerces, a product that originated at IBM and was donated to the Apache project. If you're using J2SE 5.0, it is probably already installed on your machine as a built-in component of the Sun JDK. In JDK 1.3 and 1.4, a different parser, called Crimson, was bundled in. Of course, one of the reasons for having standard interfaces is that it's possible to swap one implementation for another without applications noticing. Other SAX parsers you might come across include one from Oracle (part of the Oracle XDK toolkit), and if you use the GNU Classpath library, its default SAX parser is Ælfred2.

You come back to pipelines in a moment, after looking at the new alternative for low-level parsing, namely StAX.

StAX: Pull Parsing

When Microsoft came out with its XML tools for the .NET platform (see Chapter 15), it decided to include a parser with a very different API, referred to as a pull API. Instead of having the parser in control, calling the application when it comes across something of interest, the application is now in control, executing a series of getNext() calls to ask the parser for more data. It's not clear to what extent StAX was actually based on the .NET ideas, but it's certainly true to say that .NET popularized this alternative approach to low-level parsing, and the Java community responded to the interest that Microsoft created.

For many programmers, this style of interface comes naturally. It's easier to call on a service than to be called by it; it makes it easier to understand the flow of control and the sequence of events, and easier to see where to put the conditional logic that says, "If the next thing is an X, do this; if it's a Y, then do that."

When it was first proposed, many advocates of pull parsing claimed that it was potentially faster. I think the case for that is unproven. In the quest for ultimate parsing speed, many apparently small things can make a big difference. For example, it's important to minimize the number of times characters are moved from one buffer to another. This is affected by the fine detail of the API design, but it's unclear that either pull or push interfaces are superior in this regard. The best parsers in both categories seem to be within 20 percent of each other in processing speed (I won't say which way, because they are playing leapfrog with each other), and in my view this is unlikely to be significant in terms of overall application performance.

The StAX interface, also known as JSR (Java Specification Request) 173, has been in gestation for a long time. The initiative came from BEA Systems back in 2002, bringing together earlier projects that had lacked critical mass. But StAX only enters the Java mainstream with J2SE 6.0, which many users won't be adopting until 2008 or beyond. The years in between have been rather frustrating for users keen to try the technology: parser releases came at long intervals, often turned out to be rather buggy, and interoperated poorly with one another. One of the best implementations at the time of writing is probably Woodstox, written by Tatu Saloranta (woodstox.codehaus.org).

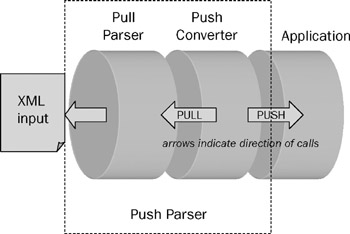

One of the attractions of a pull interface for parser vendors is that you can layer a push interface on top of a pull interface, but not the other way around. This is illustrated in Figure 16-2.

Figure 16-2

Here a control program pulls data from the parser (by calling its getNext() method) and pushes it to the application (by calling the SAX ContentHandler methods such as startElement(), endElement(), and so on).
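A minimal sketch of such a control program follows, assuming the StAX implementation bundled with Java 6 and later. The class PullToPush is hypothetical, and for brevity it ignores namespace declaration events, comments, and processing instructions, which a real bridge would also forward.

```java
import java.io.StringReader;
import javax.xml.stream.XMLInputFactory;
import javax.xml.stream.XMLStreamConstants;
import javax.xml.stream.XMLStreamReader;
import org.xml.sax.Attributes;
import org.xml.sax.ContentHandler;
import org.xml.sax.helpers.AttributesImpl;
import org.xml.sax.helpers.DefaultHandler;

public class PullToPush {

    // The control loop: pull each event from the StAX reader and push the
    // equivalent call into a SAX ContentHandler.
    public static void drive(XMLStreamReader r, ContentHandler h) throws Exception {
        h.startDocument();
        while (r.hasNext()) {
            switch (r.next()) {
                case XMLStreamConstants.START_ELEMENT: {
                    AttributesImpl atts = new AttributesImpl();
                    for (int i = 0; i < r.getAttributeCount(); i++) {
                        String local = r.getAttributeLocalName(i);
                        atts.addAttribute(nz(r.getAttributeNamespace(i)), local,
                                qname(r.getAttributePrefix(i), local), "CDATA", r.getAttributeValue(i));
                    }
                    h.startElement(nz(r.getNamespaceURI()), r.getLocalName(),
                            qname(r.getPrefix(), r.getLocalName()), atts);
                    break;
                }
                case XMLStreamConstants.END_ELEMENT:
                    h.endElement(nz(r.getNamespaceURI()), r.getLocalName(),
                            qname(r.getPrefix(), r.getLocalName()));
                    break;
                case XMLStreamConstants.CHARACTERS:
                case XMLStreamConstants.CDATA:
                case XMLStreamConstants.SPACE:
                    // Hand over the reader's buffer directly; valid until the next next().
                    h.characters(r.getTextCharacters(), r.getTextStart(), r.getTextLength());
                    break;
                default:
                    break; // other event types are dropped in this sketch
            }
        }
        h.endDocument();
        r.close();
    }

    private static String nz(String s) { return s == null ? "" : s; }

    private static String qname(String prefix, String local) {
        return (prefix == null || prefix.isEmpty()) ? local : prefix + ":" + local;
    }

    // Usage: bridge a StAX reader to a simple SAX handler that echoes the events.
    public static String trace(String xml) throws Exception {
        StringBuilder sb = new StringBuilder();
        DefaultHandler h = new DefaultHandler() {
            @Override public void startElement(String u, String l, String q, Attributes a) {
                sb.append('<').append(q);
                for (int i = 0; i < a.getLength(); i++) {
                    sb.append(' ').append(a.getQName(i)).append('=').append(a.getValue(i));
                }
                sb.append('>');
            }
            @Override public void endElement(String u, String l, String q) { sb.append("</").append(q).append('>'); }
            @Override public void characters(char[] ch, int s, int n) { sb.append(ch, s, n); }
        };
        drive(XMLInputFactory.newInstance().createXMLStreamReader(new StringReader(xml)), h);
        return sb.toString();
    }

    public static void main(String[] args) throws Exception {
        System.out.println(trace("<a><b x='1'>hi</b></a>"));
    }
}
```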

Note how this is another example of a pipeline. In a pipeline, the components can either pull data from the previous stage in the pipeline, or they can push data to the next stage. The problem is that only one component can be in charge. Upstream from that component, everyone has to pull; downstream, everyone has to push. Figure 16-3 illustrates this.

Figure 16-3

If you want to connect a pipeline that pushes data to one that pulls it, you can do this in two ways.

- The first pipeline can build a tree in memory (for example, a DOM), and the second pipeline can read from the tree, as shown in Figure 16-4.

- The two pipelines can operate in different threads or processes, which allows more than one control loop. This requires some fairly difficult concurrent programming and is not to be attempted lightly; the overhead of coordinating multiple threads can quickly eliminate any performance gains. Typically, the two threads communicate via a cyclic buffer holding a queue of events, as shown in the diagram that follows.

Figure 16-4
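The two-thread approach can be sketched with a bounded java.util.concurrent.ArrayBlockingQueue, which is backed by a fixed-size circular array, standing in for the cyclic buffer. This is only an illustration under simplifying assumptions: events are reduced to element-name strings, the class PushPullBridge is hypothetical, and error handling is minimal. The SAX parser pushes into the queue from its own thread, while the calling thread pulls at its own pace.

```java
import java.io.StringReader;
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.ArrayBlockingQueue;
import java.util.concurrent.BlockingQueue;
import javax.xml.parsers.SAXParserFactory;
import org.xml.sax.Attributes;
import org.xml.sax.InputSource;
import org.xml.sax.helpers.DefaultHandler;

public class PushPullBridge {
    // Sentinel marking end of input; a NUL character can never occur in an element name.
    private static final String END = "\0end-of-input";

    public static List<String> elementNames(String xml) throws Exception {
        // Bounded queue: the producer blocks whenever the consumer is 16 events behind.
        BlockingQueue<String> queue = new ArrayBlockingQueue<>(16);
        Exception[] failure = new Exception[1];

        // Producer thread: the SAX parser is in control and pushes events.
        Thread producer = new Thread(() -> {
            try {
                SAXParserFactory.newInstance().newSAXParser().parse(
                        new InputSource(new StringReader(xml)),
                        new DefaultHandler() {
                            @Override
                            public void startElement(String uri, String local, String qName, Attributes atts) {
                                put(queue, qName);
                            }
                        });
            } catch (Exception e) {
                failure[0] = e;
            } finally {
                put(queue, END); // always release the consumer
            }
        });
        producer.start();

        // Consumer (the calling thread): pulls events when it is ready for them.
        List<String> names = new ArrayList<>();
        for (String ev = queue.take(); !ev.equals(END); ev = queue.take()) {
            names.add(ev);
        }
        producer.join(); // join() also makes failure[0] safely visible here
        if (failure[0] != null) {
            throw failure[0];
        }
        return names;
    }

    private static void put(BlockingQueue<String> q, String ev) {
        try {
            q.put(ev);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
    }

    public static void main(String[] args) throws Exception {
        System.out.println(elementNames("<a><b/><c><d/></c></a>"));
    }
}
```

Even this toy version has to deal with sentinels, exception hand-off, and thread joining, which gives some idea of why the author advises caution.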

One of the potential attractions of the StAX pull interface is that in time, you can pull data not only from a parser analyzing lexical XML, but from other sources of XML. For example, an XSLT or XQuery engine enables you to read the results of a transformation or query into your application using this style of API. Of course, this is equally true of SAX, but it might well be an area where the added programming convenience proves decisive.

So far I've discussed the principles of StAX. Because it's not yet well-established enough to justify a chapter of its own in this book, the following sections go into a little more detail to make the ideas more concrete.

StAX, in fact, offers two pull APIs, the cursor API and the iterator API. Why two? Because one optimizes performance, whereas the other optimizes usability. The team that defined the specification wasn't prepared to trade one for the other. You can regard the iterator API as a layer on top of the cursor API, making it a bit more user-friendly. The implementation, however, doesn't necessarily work that way internally.

In the lower-level cursor API, the interface offered by the parser is called XMLStreamReader. Its main methods are hasNext(), which tests whether there are more parsing events to come, and next(), which gets the next such event. The next() method returns an integer identifying the event, for example START_ELEMENT, END_ELEMENT, CHARACTERS, or COMMENT. You can request further details of the current event from the XMLStreamReader. For example, if you are positioned on a START_ELEMENT event, you can call getName() to determine the name of the element. One reason this API is efficient is that it doesn't give you any information unless you actually ask for it. (However, the efficiency is limited by the fact that a conformant XML parser is obliged to check that the XML is well-formed: for example, it must detect when an element name contains invalid characters even if the application doesn't ask to see the element name.)
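Here's a minimal sketch of the cursor style, assuming the StAX implementation bundled with Java 6 or later; any implementation of the standard javax.xml.stream interfaces should behave the same way. The class name CursorDemo is illustrative. The application decides what to do with each event, and fetches the element name only when it wants it.

```java
import java.io.StringReader;
import java.util.ArrayList;
import java.util.List;
import javax.xml.stream.XMLInputFactory;
import javax.xml.stream.XMLStreamConstants;
import javax.xml.stream.XMLStreamException;
import javax.xml.stream.XMLStreamReader;

public class CursorDemo {
    public static List<String> events(String xml) throws XMLStreamException {
        XMLStreamReader reader = XMLInputFactory.newInstance()
                .createXMLStreamReader(new StringReader(xml));
        List<String> out = new ArrayList<>();
        try {
            // The application is in control: it asks for each event in turn.
            while (reader.hasNext()) {
                int event = reader.next();
                switch (event) {
                    case XMLStreamConstants.START_ELEMENT:
                        // Details such as the name are fetched only on request.
                        out.add("start " + reader.getName().getLocalPart());
                        break;
                    case XMLStreamConstants.END_ELEMENT:
                        out.add("end " + reader.getName().getLocalPart());
                        break;
                    case XMLStreamConstants.CHARACTERS:
                        if (!reader.isWhiteSpace()) {
                            out.add("text " + reader.getText());
                        }
                        break;
                    case XMLStreamConstants.COMMENT:
                        out.add("comment " + reader.getText());
                        break;
                    default:
                        break; // other event types are ignored in this sketch
                }
            }
        } finally {
            reader.close();
        }
        return out;
    }

    public static void main(String[] args) throws XMLStreamException {
        events("<a><!--c--><b>x</b></a>").forEach(System.out::println);
    }
}
```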

Attributes and namespaces can also be read directly from the XMLStreamReader. After a START_ELEMENT event, a call on getAttributeCount() tells you how many attributes the element has, and you can then call methods such as getAttributeName(N) and getAttributeValue(N) to find details of the Nth attribute. Similar methods are available for namespace declarations (which in StAX are not treated as attributes).
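The following sketch, again with an illustrative class name, positions the reader on the first start tag and reads its namespace declarations and attributes by index. With the default namespace-aware setting, the xmlns declarations are reported through getNamespaceCount() and are not included in getAttributeCount().

```java
import java.io.StringReader;
import java.util.LinkedHashMap;
import java.util.Map;
import javax.xml.stream.XMLInputFactory;
import javax.xml.stream.XMLStreamException;
import javax.xml.stream.XMLStreamReader;

public class CursorAttributes {
    public static Map<String, String> describe(String xml) throws XMLStreamException {
        XMLStreamReader r = XMLInputFactory.newInstance()
                .createXMLStreamReader(new StringReader(xml));
        Map<String, String> found = new LinkedHashMap<>();
        try {
            // nextTag() skips whitespace, comments and PIs up to the first tag.
            r.nextTag();
            for (int i = 0; i < r.getNamespaceCount(); i++) {
                String prefix = r.getNamespacePrefix(i); // null (or "") for the default namespace
                String key = (prefix == null || prefix.isEmpty()) ? "xmlns" : "xmlns:" + prefix;
                found.put(key, r.getNamespaceURI(i));
            }
            for (int i = 0; i < r.getAttributeCount(); i++) {
                found.put(r.getAttributeLocalName(i), r.getAttributeValue(i));
            }
        } finally {
            r.close();
        }
        return found;
    }

    public static void main(String[] args) throws XMLStreamException {
        System.out.println(describe("<inv xmlns='urn:x' xmlns:p='urn:p' id='7' p:kind='std'/>"));
    }
}
```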

The higher-level API in StAX is the iterator API, presented by the interface XMLEventReader. It has two similar methods, hasNext() and nextEvent(), that you can use to read through the input document.

Unlike the next() method of the cursor API, however, nextEvent() returns an XMLEvent object, which gives direct access to the properties of the current event. When the reader encounters an element start tag, the event can be cast to a StartElement (or converted with asStartElement()), which offers a method getAttributeByName() to find the attribute with a given qualified name. The iterator API also maintains the full namespace context on your behalf. So if the document contains attributes such as xsi:type, whose value contains a QName, you can use this namespace context to see what namespace the prefix in the value refers to, without having to track all the namespace declarations in your application. Clearly, this interface is likely to be a bit less efficient because it is collecting information just in case you happen to need it.
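As a sketch of the iterator style, the following looks for an xsi:type attribute using getAttributeByName() and then resolves the prefix in its value through the namespace context carried by the StartElement event. The class name EventDemo and the namespace URI urn:example:invoice are made up for the illustration.

```java
import java.io.StringReader;
import javax.xml.namespace.QName;
import javax.xml.stream.XMLEventReader;
import javax.xml.stream.XMLInputFactory;
import javax.xml.stream.XMLStreamException;
import javax.xml.stream.events.Attribute;
import javax.xml.stream.events.StartElement;
import javax.xml.stream.events.XMLEvent;

public class EventDemo {
    private static final String XSI = "http://www.w3.org/2001/XMLSchema-instance";

    // Returns the namespace URI bound to the prefix used in the first
    // xsi:type value, or null if no element has an xsi:type attribute.
    public static String typeNamespace(String xml) throws XMLStreamException {
        XMLEventReader reader = XMLInputFactory.newInstance()
                .createXMLEventReader(new StringReader(xml));
        try {
            while (reader.hasNext()) {
                XMLEvent event = reader.nextEvent();
                if (event.isStartElement()) {
                    StartElement start = event.asStartElement();
                    Attribute type = start.getAttributeByName(new QName(XSI, "type"));
                    if (type != null) {
                        String value = type.getValue();          // for example "inv:Invoice"
                        int colon = value.indexOf(':');
                        String prefix = colon < 0 ? "" : value.substring(0, colon);
                        // The event carries the in-scope namespaces, including
                        // those declared on ancestor elements.
                        return start.getNamespaceContext().getNamespaceURI(prefix);
                    }
                }
            }
            return null;
        } finally {
            reader.close();
        }
    }

    public static void main(String[] args) throws XMLStreamException {
        System.out.println(typeNamespace(
            "<root xmlns:inv='urn:example:invoice'>"
            + "<item xmlns:xsi='http://www.w3.org/2001/XMLSchema-instance' xsi:type='inv:Invoice'/>"
            + "</root>"));
    }
}
```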

Both the iterator and the cursor API allow you to do something that's not possible in SAX, namely to skip forward. For example, if you hit the start tag of an element that you're not interested in, you can fast-forward to the corresponding end tag. This gives a potential performance boost by reducing unnecessary chit-chat and also simplifies your application code, which no longer has to deal with the events for the unwanted subtree.
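As far as I know, the standard javax.xml.stream interfaces don't define a single skip-subtree call (some implementations add one as an extension), but with the cursor API it takes only a few lines: count start and end tags until you arrive back at the matching end tag. This is a minimal sketch with a hypothetical helper, skipElement(). Bear in mind that the parser still reads, and checks the well-formedness of, the skipped content; what you save is the application-level handling.

```java
import java.io.StringReader;
import java.util.ArrayList;
import java.util.List;
import javax.xml.stream.XMLInputFactory;
import javax.xml.stream.XMLStreamConstants;
import javax.xml.stream.XMLStreamException;
import javax.xml.stream.XMLStreamReader;

public class SkipSubtree {
    // Assumes the reader is on a START_ELEMENT; leaves it on the matching END_ELEMENT.
    static void skipElement(XMLStreamReader r) throws XMLStreamException {
        int depth = 1;
        while (depth > 0) {
            int ev = r.next();
            if (ev == XMLStreamConstants.START_ELEMENT) {
                depth++;
            } else if (ev == XMLStreamConstants.END_ELEMENT) {
                depth--;
            }
        }
    }

    // Lists element names, fast-forwarding over the content of any "unwanted" element.
    public static List<String> namesSkipping(String xml, String unwanted) throws XMLStreamException {
        XMLStreamReader r = XMLInputFactory.newInstance()
                .createXMLStreamReader(new StringReader(xml));
        List<String> names = new ArrayList<>();
        try {
            while (r.hasNext()) {
                if (r.next() == XMLStreamConstants.START_ELEMENT) {
                    names.add(r.getLocalName());
                    if (r.getLocalName().equals(unwanted)) {
                        skipElement(r);
                    }
                }
            }
        } finally {
            r.close();
        }
        return names;
    }

    public static void main(String[] args) throws XMLStreamException {
        System.out.println(namesSkipping("<doc><a/><appendix><x><y/></x></appendix><b/></doc>", "appendix"));
    }
}
```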

Another thing that's much easier to do cleanly in a pull API rather than a push API is to abandon processing. If you have read as much of the document as you need to see, in StAX you just stop reading. (It's a good idea to issue a close() to give the parser a chance to tidy up, but if you don't, the garbage collector will take care of it eventually.) In SAX, the only way an application can ask the parser to stop is to throw an exception. That's much messier: For a start, exceptions are expensive, and also, it can be difficult to distinguish it from a real application error, especially when the application is part of a complex pipeline.
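The contrast is easy to see in code. This sketch (class and method names are illustrative) finds the text of the first <title> element both ways: the StAX version simply returns and closes the reader, while the SAX version has to throw a dedicated SAXException subclass and catch it again so that it can be told apart from a genuine error. Because both stop early, neither notices that the rest of the second test document is malformed.

```java
import java.io.StringReader;
import javax.xml.parsers.SAXParserFactory;
import javax.xml.stream.XMLInputFactory;
import javax.xml.stream.XMLStreamConstants;
import javax.xml.stream.XMLStreamException;
import javax.xml.stream.XMLStreamReader;
import org.xml.sax.Attributes;
import org.xml.sax.InputSource;
import org.xml.sax.SAXException;
import org.xml.sax.helpers.DefaultHandler;

public class EarlyExit {
    // StAX: read until we have what we need, then just stop.
    public static String firstTitleStax(String xml) throws XMLStreamException {
        XMLStreamReader r = XMLInputFactory.newInstance()
                .createXMLStreamReader(new StringReader(xml));
        try {
            while (r.hasNext()) {
                if (r.next() == XMLStreamConstants.START_ELEMENT && r.getLocalName().equals("title")) {
                    return r.getElementText(); // text content up to </title>
                }
            }
            return null;
        } finally {
            r.close(); // lets the parser tidy up; the remaining input is never parsed
        }
    }

    // SAX: the only way out is to throw, so use a distinctive exception type.
    static class FoundIt extends SAXException {
        final String value;
        FoundIt(String value) { super("found"); this.value = value; }
    }

    public static String firstTitleSax(String xml) throws Exception {
        DefaultHandler h = new DefaultHandler() {
            private StringBuilder text;
            @Override public void startElement(String u, String l, String q, Attributes a) {
                if (q.equals("title")) text = new StringBuilder();
            }
            @Override public void characters(char[] ch, int s, int n) {
                if (text != null) text.append(ch, s, n);
            }
            @Override public void endElement(String u, String l, String q) throws SAXException {
                if (q.equals("title")) throw new FoundIt(text.toString());
            }
        };
        try {
            SAXParserFactory.newInstance().newSAXParser().parse(new InputSource(new StringReader(xml)), h);
            return null;
        } catch (FoundIt f) {
            return f.value; // not an error: this is our "stop" signal
        }
    }

    public static void main(String[] args) throws Exception {
        String doc = "<book><title>Early</title><oops></book>";
        System.out.println(firstTitleStax(doc) + " / " + firstTitleSax(doc));
    }
}
```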

I hope you've learned enough about pull parsing to give you a feel for whether this is an interface you should look at more closely. If it is, then you can find plenty of reference information on the Web. The best place to look is probably the J2SE 6.0 JavaDoc specification in package javax.xml.stream and its subpackages. You don't actually need J2SE 6.0 to use StAX, however. Parsers such as Woodstox come with a copy of the interface definitions that you need.

Writing XML (Serialization)

Having looked at the interfaces available to a Java program for reading XML, you can now turn to the other side of the coin: How do you write a file containing lexical XML?

One option is simply to create a PrintWriter and write to it:

    PrintWriter w = new PrintWriter(new File("output.xml"));
    w.write("<a>here is some XML</a>");
    w.close();

This isn't something I would recommend, although I have to admit I've done it often enough myself when I was in a hurry. The main traps to avoid are the following:

- Make sure that special characters in text and attribute nodes are properly escaped: for example, & must be written as &amp; and < as &lt;.

- You need to make sure that the character encoding of the file as written to disk matches the character encoding specified in the XML declaration.

- It's entirely your responsibility to make sure that the document is well-formed, for example that all namespaces are properly declared.
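For comparison, here is a minimal sketch of writing similar content through a StAX XMLStreamWriter instead of a PrintWriter (the class name EscapingDemo is illustrative). The writer escapes & and < in text, and & in attribute values, without any help from the application. When writing to a byte stream, the encoding trap is addressed by passing the encoding to createXMLStreamWriter(OutputStream, String); a StringWriter is used here only so the result is easy to inspect.

```java
import java.io.StringWriter;
import javax.xml.stream.XMLOutputFactory;
import javax.xml.stream.XMLStreamException;
import javax.xml.stream.XMLStreamWriter;

public class EscapingDemo {
    public static String write(String text, String title) throws XMLStreamException {
        StringWriter out = new StringWriter();
        XMLStreamWriter w = XMLOutputFactory.newInstance().createXMLStreamWriter(out);
        w.writeStartElement("a");
        w.writeAttribute("title", title); // escaped for attribute context
        w.writeCharacters(text);          // & and < escaped for text context
        w.writeEndElement();
        w.flush();
        w.close();                        // does not close the StringWriter itself
        return out.toString();
    }

    public static void main(String[] args) throws XMLStreamException {
        System.out.println(write("AT&T < BT", "R&D"));
    }
}
```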

There's also a more subtle reason why this is not the preferred interface. After you've committed your code to writing lexical XML (angle brackets, escaped ampersands and all), you won't be able to deploy your application so readily in a pipeline. Pipelines are the key to writing reusable software components in an XML-based application (which is why I keep coming back to the subject), and you should always bear in mind that someone else might one day want to modify the output of your program by adding a postprocessing stage to the pipeline. Unless you write your XML using a higher-level interface than basic print statements, this won't be possible without expensive reparsing.

Furthermore, if you use an XML serialization library, you can probably tweak the output in many ways without changing your application. An obvious example is switching indentation on or off: Indented output makes life much easier if the XML must be read by human beings, but it can add significant overhead when transmitted over a network.
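As a quick illustration, this sketch (class name illustrative) runs the same document through a JAXP identity transformer twice, once with the INDENT output property set to yes and once with it set to no. The exact indentation produced is implementation-dependent, but only the application's configuration changes, not the code that produces the content.

```java
import java.io.StringReader;
import java.io.StringWriter;
import javax.xml.transform.OutputKeys;
import javax.xml.transform.Transformer;
import javax.xml.transform.TransformerException;
import javax.xml.transform.TransformerFactory;
import javax.xml.transform.stream.StreamResult;
import javax.xml.transform.stream.StreamSource;

public class IndentDemo {
    public static String serialize(String xml, boolean indent) throws TransformerException {
        // newTransformer() with no stylesheet gives an identity transformer.
        Transformer t = TransformerFactory.newInstance().newTransformer();
        t.setOutputProperty(OutputKeys.OMIT_XML_DECLARATION, "yes");
        t.setOutputProperty(OutputKeys.INDENT, indent ? "yes" : "no");
        StringWriter out = new StringWriter();
        t.transform(new StreamSource(new StringReader(xml)), new StreamResult(out));
        return out.toString();
    }

    public static void main(String[] args) throws TransformerException {
        String doc = "<a><b>1</b><b>2</b></a>";
        System.out.println(serialize(doc, false));
        System.out.println(serialize(doc, true));
    }
}
```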

So now, look at the alternatives. In this section, you see approaches that enable your Java application to write directly to a serializer. If you've got the data in a tree representation such as DOM or one of the other tree models discussed later in the chapter, you can also serialize directly from the tree. But you don't want to build a tree in memory just so that you can serialize it.

Using a JAXP Serializer

In its very first incarnations, the JAXP (Java API for XML Processing) suite of interfaces provided control over two aspects of XML processing: XML parsing, and XSLT transformation. You look at the transformation API more closely later in this chapter. It so happens that the XSLT specification includes the definition of a serializer that converts XSLT's internal tree representation of XML into lexical XML output.

It uses an <xsl:output> declaration in the stylesheet to control the details of how this is done. The designers of the JAXP interface decided to structure the interface so that you can invoke the serialization component whether or not you have done a transformation.

In fact, no class or interface in JAXP explicitly calls itself a serializer. Instead, something called an identity transformer (the Transformer you get by calling newTransformer() on a TransformerFactory without supplying a stylesheet) can convert one representation of XML (provided as a Source) into a different representation (the Result), without modifying the XML en route. Three kinds of Source objects are defined: DOMSource, SAXSource, and StreamSource, as well as three kinds of Result objects: DOMResult, SAXResult, and StreamResult. An identity transformer can convert any kind of Source into any kind of Result. Moreover, implementers can provide additional kinds of Source or Result, further adding to the possibilities.

Because a StreamResult represents XML lexically, any identity transformer that produces a StreamResult as its output is acting as an XML serializer.
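Here's a small sketch showing an identity transformer in both roles, assuming the JAXP implementation bundled with the JDK (the class name and the checked attribute are illustrative). First it turns a StreamSource into a DOMResult, effectively acting as a parser; then, after the tree has been modified, it turns a DOMSource into a StreamResult, acting as a serializer.

```java
import java.io.StringReader;
import java.io.StringWriter;
import javax.xml.transform.OutputKeys;
import javax.xml.transform.Transformer;
import javax.xml.transform.TransformerException;
import javax.xml.transform.TransformerFactory;
import javax.xml.transform.dom.DOMResult;
import javax.xml.transform.dom.DOMSource;
import javax.xml.transform.stream.StreamResult;
import javax.xml.transform.stream.StreamSource;
import org.w3c.dom.Document;

public class IdentityDemo {
    public static String roundTrip(String xml) throws TransformerException {
        TransformerFactory tf = TransformerFactory.newInstance();

        // StreamSource -> DOMResult: lexical XML in, tree out.
        DOMResult dom = new DOMResult();
        tf.newTransformer().transform(new StreamSource(new StringReader(xml)), dom);
        Document doc = (Document) dom.getNode();

        // Change the tree, just to show it is an ordinary DOM.
        doc.getDocumentElement().setAttribute("checked", "yes");

        // DOMSource -> StreamResult: tree in, lexical XML out (serialization).
        Transformer serializer = tf.newTransformer();
        serializer.setOutputProperty(OutputKeys.OMIT_XML_DECLARATION, "yes");
        StringWriter out = new StringWriter();
        serializer.transform(new DOMSource(doc), new StreamResult(out));
        return out.toString();
    }

    public static void main(String[] args) throws TransformerException {
        System.out.println(roundTrip("<a><b/></a>"));
    }
}
```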

To serialize XML from a Java application, you want the identity transformer in the form of a TransformerHandler. You obtain one like this:

    TransformerFactory factory = TransformerFactory.newInstance();
    TransformerHandler serializer =
        ((SAXTransformerFactory) factory).newTransformerHandler();
    serializer.setResult(new StreamResult(new File("output.xml")));

Technically, before doing this, you should check that the TransformerFactory is one that offers this optional feature (by testing factory.getFeature(SAXTransformerFactory.FEATURE)), but as far as I know all the implementations in common use do.

The interface TransformerHandler extends the SAX ContentHandler interface, so you can now write your output by calling the ContentHandler methods:

    serializer.startDocument();
    serializer.startElement("", "a", "a", new AttributesImpl());
    String s = "some XML content";
    serializer.characters(s.toCharArray(), 0, s.length());
    serializer.endElement("", "a", "a");
    serializer.endDocument();

This approach has a number of advantages. The serialization library takes care of all the details of escaping and character encoding, reducing the risk of bugs in your application. And because you are writing to the standard ContentHandler interface, it's easy to change your application so it pipes the output into a different ContentHandler, one which performs further application processing rather than doing immediate serialization.

You can also set serialization properties using this interface. Here's an example that illustrates how to do this. The output is serialized as HTML (which will only be useful, of course, if the elements you are writing are valid HTML elements, but that applies equally to any vocabulary).

    Transformer trans = serializer.getTransformer();
    trans.setOutputProperty(OutputKeys.METHOD, "html");
    trans.setOutputProperty(OutputKeys.INDENT, "yes");
    trans.setOutputProperty(OutputKeys.ENCODING, "iso-8859-1");
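Putting the pieces together, here is a self-contained sketch (the class name and the attribute value are illustrative) that performs the feature check, sets output properties, and then sends the events. It keeps the default XML output method, since the element isn't HTML. It's safest to set output properties before the first event is sent. Output goes to a StringWriter for easy inspection, so the encoding property only affects the XML declaration here.

```java
import java.io.StringWriter;
import javax.xml.transform.OutputKeys;
import javax.xml.transform.Transformer;
import javax.xml.transform.TransformerFactory;
import javax.xml.transform.sax.SAXTransformerFactory;
import javax.xml.transform.sax.TransformerHandler;
import javax.xml.transform.stream.StreamResult;
import org.xml.sax.helpers.AttributesImpl;

public class SaxSerializerDemo {
    public static String write() throws Exception {
        TransformerFactory factory = TransformerFactory.newInstance();
        // The optional feature check: can this factory produce TransformerHandlers?
        if (!factory.getFeature(SAXTransformerFactory.FEATURE)) {
            throw new IllegalStateException("TransformerFactory does not support SAX");
        }
        TransformerHandler serializer = ((SAXTransformerFactory) factory).newTransformerHandler();

        // Configure the serializer before sending any events.
        Transformer trans = serializer.getTransformer();
        trans.setOutputProperty(OutputKeys.INDENT, "yes");
        trans.setOutputProperty(OutputKeys.ENCODING, "iso-8859-1");

        // A StringWriter receives characters, so the encoding only shows up in the
        // XML declaration; with a File or OutputStream it also determines the bytes.
        StringWriter out = new StringWriter();
        serializer.setResult(new StreamResult(out));

        AttributesImpl atts = new AttributesImpl();
        atts.addAttribute("", "id", "id", "CDATA", "A&B");
        serializer.startDocument();
        serializer.startElement("", "a", "a", atts);
        String s = "some XML content & more";
        serializer.characters(s.toCharArray(), 0, s.length());
        serializer.endElement("", "a", "a");
        serializer.endDocument();
        return out.toString(); // both & characters come out escaped
    }

    public static void main(String[] args) throws Exception {
        System.out.println(write());
    }
}
```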

Serializing Using StAX

I've already discussed StAX as a pull parser API, which is how most people think of it. But in fact, StAX has a push API as well. The SAX ContentHandler interface, used in the previous section, was primarily designed as an interface allowing an XML parser to push events to a Java application. This explains why it's a little bit clumsy when you use it, as you just did, to push events from a Java application to a serializer. By contrast, the StAX push API is designed primarily to allow applications to push events to other components, such as serializers, so it is more user-friendly from the point of view of the component doing the pushing.

As with the pull API, the StAX push API comes in two flavors. The cursor-level interface is called XMLStreamWriter (mirroring XMLStreamReader), whereas the iterator-level interface is XMLEventWriter (mirroring XMLEventReader).

Here's how you might serialize a simple document using the XMLStreamWriter interface:

    XMLOutputFactory factory = XMLOutputFactory.newInstance();
    try (OutputStream out = new FileOutputStream(new File("output.xml"))) {
        // Create the writer with the same encoding that the XML declaration states
        XMLStreamWriter serializer = factory.createXMLStreamWriter(out, "iso-8859-1");
        serializer.writeStartDocument("iso-8859-1", "1.0");
        serializer.writeStartElement("a");
        serializer.writeCharacters("some XML content");
        serializer.writeEndElement();
        serializer.writeEndDocument();
        serializer.close(); // flushes, but does not close the underlying stream
    }

You can also call setProperty() on the XMLOutputFactory, before creating the writer, to set serialization properties, but unlike the JAXP interface, StAX standardizes very few property names (the one standard output property, javax.xml.stream.isRepairingNamespaces, concerns namespace declarations rather than layout). You have to look in the documentation to see what properties are available for your chosen implementation. Woodstox, for example, has a property that allows you to control whether empty elements should be written in minimized form (such as <empty/>).
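For completeness, here is a sketch of similar output through the iterator-level XMLEventWriter, in which each piece of output is an event object created by an XMLEventFactory. The class name is illustrative, and the output goes to a StringWriter so it can be inspected.

```java
import java.io.StringWriter;
import javax.xml.stream.XMLEventFactory;
import javax.xml.stream.XMLEventWriter;
import javax.xml.stream.XMLOutputFactory;
import javax.xml.stream.XMLStreamException;

public class EventWriterDemo {
    public static String write() throws XMLStreamException {
        StringWriter out = new StringWriter();
        XMLEventWriter writer = XMLOutputFactory.newInstance().createXMLEventWriter(out);
        XMLEventFactory events = XMLEventFactory.newInstance();

        // Each call builds an event object and hands it to the writer.
        writer.add(events.createStartDocument());
        writer.add(events.createStartElement("", "", "a"));
        writer.add(events.createAttribute("id", "1"));
        writer.add(events.createCharacters("some XML content"));
        writer.add(events.createEndElement("", "", "a"));
        writer.add(events.createEndDocument());
        writer.flush();
        writer.close();
        return out.toString();
    }

    public static void main(String[] args) throws XMLStreamException {
        System.out.println(write());
    }
}
```

The event objects are the same types an XMLEventReader produces, which is what makes it straightforward to copy or filter events from a reader to a writer.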

The StAX serialization interface is slightly more convenient to use than the JAXP ContentHandler interface, but I will probably continue to use the SAX interface until StAX implementations are more widely available and offer serialization properties similar to those available in JAXP. Some features, such as HTML serialization, are available only through the JAXP interface.

This completes a tour of the lowest level of XML interfaces for Java, the interfaces for reading and writing lexical XML. In the next section, you explore the next level: generic tree models for XML.
