Building and Delivering Reports

| | ||

| | ||

| | ||

Building and Delivering Reports

At last, its time to put together a standard reporting environment. This section concentrates on the process of building the environment and the reports themselves . As always, our process starts with a bit of planning and preparation. We then proceed to the creation of the initial set of standard reports based on the prioritized list presented in Chapter 8. Once the individual reports are in place, we examine an approach to creating the BI portal. This is followed by setting up the standard report operations process. Finally, we talk a bit about ongoing maintenance of the standard reporting system. Although this is not intended to be a step-by-step tutorial, we will build an example report based on the updated Adventure Works data warehouse relational and Analysis Services databases described in Chapters 3 and 7. You should be able to recreate the example report once you get a little Reporting Services experience.

Planning and Preparation

The temptation to dive in and start building reports is almost irresistible at this point. Be strong. Even if youre a Reporting Services pro, its worth taking a few days to set up your reporting environment and figure out the overall reporting process before you start creating reports. You should also have done all the application specification work described in Chapter 8. The major items you need to address are setting up the development environment, creating standard templates and styles, setting up the delivery and notification metadata and processes, and setting up a usage tracking system.

Setting Up the Development Environment

We discussed setting up the development environment for Reporting Services in Chapter 4. The main challenge lies in finding the optimal combination of services and machines. Reporting Services keeps its metadata in a SQL Server database called ReportServer. While it is possible to have Reporting Services and the ReportServer database on the same machine, moving the catalog to a SQL Server instance on another machine is the easiest way to improve Reporting Services performance.

Creating Standard Templates

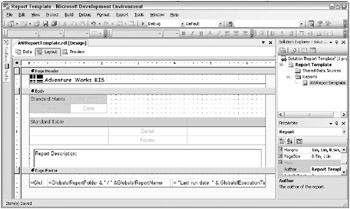

Once the development environment is in place, you need to set up the standard report layout templates we described in Chapter 8. The easiest way to create a report template is to create a blank report with the standard elements you and your business partners defined and laid out according to the specification from Chapter 8 (see Figure 8.2). We wont go through the step-by-step process of creating the template because its the same process as creating a report, which we do in the next section. Figure 9.6 shows what the simple report template from Chapter 8 might look like in the Report Designer. It includes both a Table and Matrix with the appropriate styles (fonts, background colors, and the like) predefined, but there are no data fields in these items. Usually, the developer uses the appropriate item for the report and deletes the other item.

Figure 9.6: Example Adventure Works Cycles layout template in the Report Designer

After youve defined your layout template, use your source control system to make it available to the development team. If youd like to have it appear in the dialog box as a choice when you use the Add/New Item Report dialog box to add a new item, you need to save the template to a special directory. Save the completed layout template report to its project directory and copy the resulting .rdl file to the template directory.

| Tip | The location of the template directory depends on how your system is configured. Its usually located under the Visual Studio folder inside Program Files. Search for the ReportProject directory in the Program Files directory. |

In most cases, experienced report designers will start each report from the standard layout template by selecting the Add/New Item Report choice in the Project menu, or by right-clicking on the project in the Solution Explorer pane. One of the report item choices will be your standard layout template, if you put it in the right directory.

| Note | The Report Wizard is a great place to start if you are just learning Reporting Services because it provides a structured step-by-step framework for creating a report. However, you will soon move beyond its capabilities as you begin to create real-world reports. Also, the wizard does not provide the opportunity to select a layout template. |

One drawback of the standard layout template in Reporting Services is that it does not include a master style sheet. The developer can define the style of each individual element in the templatebold type, font, color , and so onbut he or she cannot set defaults styles for any new elements that are added once the template is being used to create a specific report. New elements added to the report take on the bland styles of the generic report.

Interestingly enough, while the Report Wizard does not access the layout template, it does allow the selection of a style template. You can choose from at least four predefined styles in the Report Wizard: Bold, Casual, Compact, and Corporate. If these dont work for you, you can add to the XML document that defines the available styles. This XML document is called StyleTemplates.xml.

| Reference | Look in Books Online in the Creating a Report Using Report Wizard topic for more information on finding and editing the StyleTemplates.xml document. |

Creating Reports

Now that youre ready to build some reports, the question is where to start. The Business Dimensional Lifecycle provides the answer. Revisit the report specifications you created in the End User Application Specification step of the Lifecycle described in Chapter 8. These specifications list the standard reports in priority order along with mock-ups and documentation on the definitions and contents of the reports. After reviewing the specification document, start building the report set. When the set is complete, deploy them to the test server for testing. Finally, deploy them to the production server. Well go through each of these steps.

Revisit the Report Specifications

In Chapter 8, the BI Application Specification step of the Lifecycle involved creating a list of candidate reports based on the requirements interviews and then prioritizing the list with the business folks. In reviewing the standard report specifications for Adventure Works Cycles, recall that senior management decided that orders data is the top priority. During the requirements gathering process, it became clear that everyone wanted to see orders data, but they all wanted to see it sliced differently. Because you did such a great job capturing the standard reporting needs, all you need to do at this point is pull out the prioritized list and the associated mock-ups and start at the top.

The specifications from Figure 8.3 in Chapter 8 list the Sales Rep Performance Ranking report as the top priority report. The specifications include a report mock-up for this report, illustrated in Figure 9.7.

| Adventure Works Cycles | | ||||||

|---|---|---|---|---|---|---|---|

| Sales Rep Performance Ranking <Year> Compared to {Prior Year} | |||||||

| Sales Rep | <Year> Sales | <Year> Rank | Prior Sales | Prior Rank | Change in Rank | ||

| ID | Last Name | First Name | |||||

| xx | xxxxxx | xxxxxx | \\xxx,xxx\\ [1] | xx.x | xxx,xxx | xx.x | x.x [2] |

| xx | xxxxxx | xxxxxx | \\xxx,xxx\\ | xx.x | xxx,xxx | xx.x | x.x |

| xx | xxxxxx | xxxxxx | \\xxx,xxx\\ | xx.x | xxx,xxx | xx.x | x.x |

| xx | xxxxxx | xxxxxx | \\xxx,xxx\\ | xx.x | xxx,xxx | xx.x | x.x |

| xx | xxxxxx | xxxxxx | \\xxx,xxx\\ | xx.x | xxx,xxx | xx.x | x.x |

| NOTES: | |||||||

| Report Description: The Sales Rep Performance Ranking report lists each sales rep with their total sales in the selected Year and Prior Year. The report is ordered by the selected year rank and calculates a change in rank. | |||||||

| Report Information | |||||||

| Report Category: {Sales Analysis} | |||||||

| Report Name: {Sales Rep Performance Ranking | -current vs. prior year} | ||||||

| Source: {DW - Sales Performance} | Run on: {Run_Date} Page {1} | ||||||

| [1] The current Sales field links to the Sales Rep Detail report. Parameters: Sales Rep ID and Year. [2] The background color of each row is conditionally set based on the Change in Rank field. Rule: Change > 2 = light green, Change <-2 = light red. | |||||||

Figure 9.7: Sales Rep Performance Ranking report mock-up

This report is not very creative or clever and the fact that its top priority could indicate who holds the most power in Adventure Works Cycles. It could also be first because the report provides a good demonstration of several Reporting Services features. As youll see as you work through this section, even a straightforward report like this one presents some development challenges.

| |

Your standard template should include both the layout of standard elements on the page (headers, titles, footers), and the standard formatting styles (fonts, sizes, colors). Reporting Services can use standard layout templates or custom report styles, but not both at the same time. If youre willing to go the extra mile, you can have both a layout template and a style template. Create a metadata table with the standard style entries in your database, and then create a dataset to retrieve the style information into your report. You can then use this to set style properties based on the query results. If the dataset is named StyleDataSet, and it has a column called FontFamily, then an expression to assign the FontFamily property of a Textbox might look like the following:

=First(Fields!FontFamily.Value,"StyleDataSet"))

This option is probably overkill for most DW/BI projects, but it does give you the best of both worlds . You can create a standard layout template and include a dataset that reads the style information along with the code that applies it. You need to create this only once and save it out to the template directory. From then on, all reports built from this template will have the standard layout and style. An extra benefit comes from the fact that the styles are applied dynamically, every time the report is run. When you change the standard styles in the metadata table, all the standard reports will automatically change to the new style the next time they are run.

| |

| Tip | To get the most out of this section, you should be at your computer with SQL Server 2005 and the Adventure Works relational and Analysis Services sample databases installed. This section uses a walk-through format to describe the process of creating a report. We dont describe every mouse click, but if youve at least worked through the SQL Server 2005 Reporting Services tutorials, there should be enough information in each step for you to follow along. |

Creating Your First Standard Report

Begin by creating a new report project in BI Studio. Rename the Solution to Sales Reports, and rename the Project to Sales Rep Performance. To add a new report to the project, right-click on the Reports directory in the Solution Explorer pane and select Add New Item from the popup menu. Be careful not to use the Add New Report choice because it will bring up the Report Wizard, and you wont be able to select your standard template.

Select your standard template in the Add New Item dialog window. When the report appears in the Solution Explorer, rename it Sales Rep Performance Ranking. Click the Add button, and the new report should open up in the Report Designer design surface with the Layout tab selected and all the standard report elements in place. At this point, it should look much like your version of the standard report template in Figure 9.6. If you havent defined a standard template, just add a new blank report item.

Creating the Data Source and Query

Reporting Services uses the same kind of data sources that Integration Services and Analysis Services use. Share data sources across the projects in your solution to make it easy to change connection information. Create a shared data source called AdventureWorksAS that references the Adventure Works DW Analysis Services database that ships with SQL Server 2005. Be sure to test the connection before you proceed. While youre at it, create another shared data source called AdventureWorksDW that references the AdventureWorksDW SQL Server database.

| Tip | In general, it makes sense to use the production data warehouse databases as the sources, even for report development ( assuming the data has already been loaded into production). Report development is essentially the same as ad hoc querying and poses no significant additional risks to the database server. It makes the development job easier because the data used for creating the report is the final data. In addition, you dont need to change the data sources when you move from development to test to production. |

Now create a dataset called SalesRankData for the Sales Rep Performance Ranking report based on the AdventureWorksAS data source. Click the Data tab in the upper-left corner of the report template, select the dropdown menu box in the header bar labeled Dataset:, and select <New Dataset >.

Change the name of the dataset to SalesRankData, and make sure the data source for the dataset is AdventureWorksAS. When you click OK, BI Studio displays the Analysis Services query designer in the design surface. The Analysis Services query designer lets you build simple MDX queries with a drag-and-drop interface. You can view the MDS or enter it directly, by switching out of design mode in the upper-right corner of the designer.

If your data source was a SQL Server relational database, the query designer would allow you to enter SQL into the upper pane and view the results in the lower pane. The relational query design also has a more graphical interface mode called the query builder. It looks suspiciously like the Microsoft Access query builder. If the data source were a Report Builder model, youd use the Report Builder tool to define datasets.

The query building tools in the Report Designer are not so great in the initial release of SQL Server 2005. In many cases, developers will end up creating the SQL or MDX in some other tool and pasting it in. Even in our simple example, the fields for the Sales Rep Performance Ranking report require the creation of calculated members in MDX.

| Warning | If you enter your SQL or MDX directly, do not try to switch over to the query builder or into design mode. The designer will try to rewrite your query and may fail because of its complexity, ruining the SQL and the formatting in the process. In the MDX case, it doesnt even pay to rewrite the query. You have to start over. |

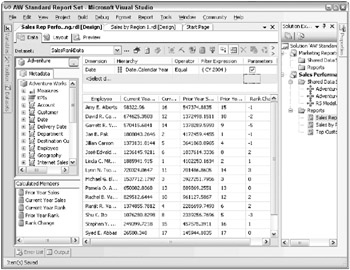

According to the report specs and the mock-up for the Sales Rep Performance Ranking report, the VP of Sales wants to compare current year and prior year sales by sales rep. Like many business requests , this is conceptually simple, but it turns out to be not so easy to do in MDX, SQL, or the Report Designer. For purposes of the example, well create this report using an MDX query against the Analysis Services data warehouse database. The Data tab in the Report Designer shown in Figure 9.8 shows the completed dataset.

Figure 9.8: The completed data tab for the Sales Rep Performance Ranking report

When you create a report, you need to break it down into subsets to make it easier to build. You usually need a minimum of two datasets: one for the report contents and one for the user input parameter prompt list. The report in Figure 9.8 requires identifying two subsets of data, current year sales and prior year sales by employee; ranking each of those subsets independently; and calculating the change in ranking from the prior year to the current year. Let us be clear at this point: We are not MDX experts. In MDX, the easiest way to accomplish the creation of subsets, as far as we can tell, is to create calculated members for each of the two sales subsets (in the Calculated Members pane in the lower-left corner), then create calculated members that rank those subsets , and finally, calculate the difference of the two rank members.

The current year sales calculated member is easy because it can simply be the sales measure. To create this calculated member, simply drag the appropriate measure from the Measures list in the Metadata pane in the upper-left corner into the MDX expression box in the Calculated Member Builder window. For this report, that expression would be:

[Measures].[Reseller Sales Amount]

Technically, you dont need a calculated member for this measure, but it helps clarify the contents of the report and subsequent calculations. Next, recall that the mock-up identified the target year as a user-entered parameter. If you limit the calendar year to CY 2004 in the filter pane at the upper part of the designer, you will see a check box to make this limit a parameter. When you check the box, the Analysis Services report designer creates a Reporting Services parameter along with the query needed to populate the choice list.

The next calculated member is the prior year sales field. This is a bit more complex because it relies on the ParallelPeriod function:

SUM({ParallelPeriod([Date].[Calendar Year].LEVEL,1)}, [Measures].[Current Year Sales]) This says to sum the measure for one year lagged behind the current year, or CY2002 based on the filter we previously set.

The next calculated measure, called Current Year Rank, does a rank on current year sales. Like so much of the MDX language, the RANK function in MDX is not like the RANK function in SQL, although it has the same name. The MDX RANK tells you the location of a member in its current set. For employees , the current set might be in alphabetical order. Therefore, a straight ranking of sales reps might rank Amy Alberts as number one, even though shes actually sixteenth on the list. When you use the RANK, you need to tell it what set it is part of, and what order the set is in, as follows :

IIF (ISEMPTY([Measures].[Current Year Sales]), NULL, RANK ([Employee].[Employee].CurrentMember, ORDER ([Employee].[Employee].[Employee].Members , ([Measures].[Current Year Sales]) , BDESC)))

This MDX first checks to make sure there are Current Year Sales before it does the RANK. This is because only a handful of employees are sales reps, and you dont want the report to list all 296 employees. Next, the expression ranks the current employee according to where it is in a set of employees ordered by Current Year Sales, descending. The Prior Year Rank is the same expression only ordered by Prior Year Sales.

The final column, Rank Change, is simply the difference in the two ranking calculated members:

[Measures].[Prior Year Rank] - [Measures].[Current Year Rank]

While this may seem complicated, there are two aspects to consider that might make it seem relatively easy. First, you can define commonly used calculated members, like Current Year Sales or Prior Year Sales, in the Analysis Services OLAP database once; then they become drag-and-drop fields in the Metadata pane, just like any other measure. Second, the SQL alternative to this query is even less attractive; it goes on for almost a page to accomplish the same results. (You can get a script for the equivalent SQL query at the books web site: www.MsftDWToolkit.com.)

Even though the primary dataset is complete, you still have at least one more dataset to consider. When you select the parameter check box in the Date.Calendar Year limit, the MDX query designer automatically creates a second dataset, called DateCalendarYear, and links that dataset to a parameter also called DateCalendarYear. The dataset retrieves the distinct list of choices for the attribute selected and includes a value field and a label field. The label field is used to populate the choice list of a pulldown menu in the user interface, and the corresponding value field is passed to the DateCalendarYear parameter in the MDX query. If you use the SQL query builder, you have to create the dataset to populate the parameter choice list yourself.

| Tip | To toggle back and forth between the two datasets, select the name of the dataset you would like to see in the pulldown menu in the Data tab header bar in the Report Designer. Hit the exclamation mark button in the header bar to refresh the results set. |

Design the Report Layout

Once your datasets are defined, switch over to the Layout tab in the Report Designer design surface and start putting the report together. Fortunately, the Sales Rep Performance Ranking report has a simple layout. Start with the standard layout, and then add a few subtle items like the DateCalendarYear parameter and some conditional formatting to highlight potential problem areas.

The design surface in the Layout view should look familiar to anyone who has created forms or reports with Access, Visual Basic, VBA, or Visual Studio. The basic process involves dragging various Report Items from the Toolbox onto the design surface and then specifying their properties as appropriate.

The standard template from Figure 9.6 already has predefined Table and Matrix items. The report mock-up looks like a good candidate for the Table control, so delete the Matrix control to clear up a little space. (If youre not using a standard template, just drag a Table from the Toolbox into the report area.) Fill in the Table control by dragging columns from the Fields list in the Dataset pane into the Detail row of the Table control. Once you have the columns in place, select the Preview tab to see how the initial report looks.

| Tip | If the template doesnt have enough columns in the Table, you can add more by right-clicking in the gray header for any column. Select Insert Column to the Right or Left as needed. This will clone any pre-set formatting into the new column. |

When you select Preview, the report should run and generate results for the default calendar year of 2004. Try changing the Date.Calendar Year parameter in the pulldown menu at the top of the report. You need to hit the View Report button to re-execute the query. Note that the pulldown menu allows you to select more than one year. This will break the prior year calculations, so go back and change this.

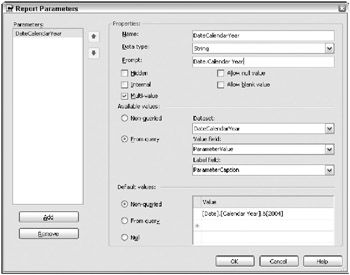

To improve the default settings, return to the Layout pane and select Report Report Parameters to display the Report Parameters dialog box, as illustrated in Figure 9.9.

Figure 9.9: Report Parameters dialog box

Change the prompt string to make sense to your business users, and uncheck the multi-value box in the Properties section. Now, if you select the Preview tab, you should be able to select only a single year in the pulldown menu.

Creating reports is a classic 80/20 process, or even 90/10, in that it takes only 10 percent of the time to create 90 percent of the report. Most of the final 10 percent of creating a report is formatting, and it usually takes much longer than you would expect. In this case, the Sales Rep Performance report now has the key elements it will have when its finished, but it doesnt look very professional. Go back to the Layout view and take a few minutes to clean up some of the following appearance problems:

-

Change the column widths to make better use of the available space.

-

Format the sales columns to get rid of all those decimal values and add some commas. Try selecting the field in the Detail row and putting an N in its Format property, or try a custom format string, like #,##0. (See the Predefined Numeric Formats topic in Books Online for more options.)

Note You might think that number formatting would be easier if the data source is Analysis Services rather than SQL. One of the advantages of Analysis Services is that it contains metadata about such things as formatting for measures.

Alas, no. Reporting Services does not respect the formatting thats defined in the Analysis Services database.

-

Verify the line spacing and borders.

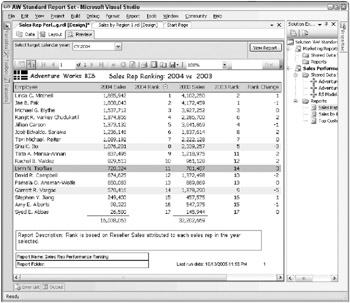

Figure 9.10 shows the finished report in the Report Designer environment. Notice the Total Sales fields, which are expressions added to the Footer row of the table.

Figure 9.10: Final report layout for the Sales Rep Performance Ranking report

The guiding philosophy in laying out and formatting reports is that they should be as clear and self-explanatory as possible. Users will not take the time to look elsewhere for report documentation, nor should they be expected to. Clarity is one of the major challenges the DW/BI team takes on when it includes standard reports as part of its responsibilities. Involve someone who has solid graphic design expertise in the design process of the layout template and the initial report set. Experiment with alternatives and get feedback from users as to which one works best. A bit of extra work at this point will pay off massively in the long run.

| |

To create a clear, understandable, professional-looking report, you will need to go beyond these basic formatting items. Some additional improvements to consider include:

-

Add a report description to the reports metadata. This description lives in the properties of the report itself. To access these properties, select the area outside the design surface in the Layout view and view the properties sheet, or right-click in the same area and select Properties.

-

Add conditional formatting. Reporting Services supports expressions written in Visual Basic .NET. You can use this powerful capability to define most of the properties in the report programmatically. Conditional formatting is a good example of this capability. (See the Common Expressions topic in Books Online for more examples.)

For example, in the Sales Rep Performance Ranking report, the VP of Sales might want to highlight those sales reps that have risen or slipped more than two places in the rankings. You need a soothing green color for the rising stars and an ominous red color for the slackers. To accomplish this, apply conditional formatting to the entire Detail row. Select the row, and then select the pulldown menu for the BackgroundColor property in the Properties window. The first choice in the menu is <Expression >. Select it and the Edit Expression dialog window opens. Delete the contents of the Expression box and enter the following expression:

=iif(Fields!Rank_Change.Value > 2, "LightGreen", iif(Fields!Rank_Change.Value < -2, "MistyRose", "White"))

Select OK, and then select the Preview tab. You should see some nice highlighting at this point. Can you tell who is going to be in trouble when you publish this report?

-

Add parameterized labeling. This is another useful application of expressions. Rather than have generic column labels that dont tell the user exactly what is in the column, you can use expressions to incorporate parameters right into the report text. This is particularly helpful for when reports are printed. Once you separate the report from the Reporting Service, it is unclear which parameters you used to generate the report. Labels can be made more descriptive by using expressions as follows:

Right-click the SalesAmnt header field and select Expression. In the Expression box, delete the text title and enter the following expression:

=Left(Right(Parameters!DateCalendarYear.Value,5),4) & " Sales"

-

Select OK, and then select the Preview tab. If the TargetYear is still set to 2003, the Sales column header should now be 2003 Sales.

-

Make appropriate, similar changes to the CurrentRank, PriorSalesAmnt, and PriorRank header fields. Remember, the parameter is a string value, so you may need to use the Val() and Str() functions to get the prior year into the titles.

-

Add an interactive sort expression to the Current Ranking header field. This will allow your users to sort out the top or bottom performers.

-

Verify print layout. It is rare that a report you have created to fit on the screen will also fit on the printed page the first time. You may need to change the column widths and font sizes to squeeze things in a bit. You can change the page setup as well, adjusting margins and switching from portrait to landscape. If you cant keep all the columns on a single page, you may need to change some of the properties of certain fields and groups to cause them to repeat on subsequent printed pages.

-

| |

Unit Test

Report developers should do the first round of testing right in the development environment. At the very least, the developer should test the parameters and validate the results.

Test different parameters. For example, try the Sales Rep Performance Report with the year 2003. What happens? It looks like some of the rows are missing a few values. This makes sense in this case because you would expect some sales reps to have data in one year and not the other. Fortunately, Analysis Services does an outer join for you to make sure everyone in the target year is represented in the report.

Validate the numbers. Check the numbers as carefully as possible. Compare them to any known alternative sources for the same information. If the numbers should be the same and they are not, figure out why. Research the business rules in the alternative source and compare them to your own. The problem may be in your query, or all the way back somewhere in the ETL process. If theres a problem, resolving it is serious detective work and can take a lot of time and energy.

If the numbers are supposed to be different because they have been improved or corrected in the ETL process, carefully document the reasons for the differences. If possible, show how you can get from the data warehouse numbers back to the alternative source numbers. This documentation should be available in the BI portal, and the report description should refer to it.

Before you actually deploy the report, you may want to create a few more reports and deploy an entire project all at once. In this case, you can add a Sales Rep Detail report or a report that includes quota information and set up the ranking report to drillthrough to the detail.

Deploy to the Test Web Server and Test Some More

In large environments with hundreds or thousands of users pounding on the standard report set, it makes sense to deploy the reports to a test server environment that is as similar to the production environment as possible. This step allows the reporting team to stress test the new reports to ensure they dont reduce the performance of other reports before moving them into production. In medium- sized or smaller organizations where the user population is smaller, it may not be necessary for a full test server environment. The reporting team could deploy the reports to the production Report Server and test them there. You can minimize the risk of this move by limiting access to the new report directories, and by not publishing the new reports in the BI portal until you have completed testing.

This test phase typically involves several steps. The process begins with deploying the project to the target Report Server (either test or production). Once there, the reports need to be retested to ensure proper display and printing. The tester should make sure the reports all perform as expected in the target environment. If they are not working well enough, there are a number of tuning techniques available. These range from tuning the query to creating report snapshots to actually changing the server configurations.

Deploying the project or report to a Reporting Services instance is straightforward. Each project has its own configuration properties, so if you have multiple projects in a solution, you will need to set up the properties for each project. Within each project, there are several configurations and each can have its own target Report Server. The default configurations are DebugLocal, Debug, and Production. To set up the target server in the project properties, in the Project menu, select Properties. This opens a project-specific Properties pages window. To deploy the project, you need to provide a target server URL for the active configuration. In the simplest case, where the web server is on the same machine as the development environment, the target server URL can be http://localhost/ReportServer.

After you test the basics of appearance and performance, the next step is to integrate the new reports into the production process. If there are standard schedules these reports depend on, the reports should be linked to the appropriate schedules in the Report Manager. If there are standard distribution lists that should receive these reports, they should be set up at this point. The DW/BI team should also validate the subscription process to make sure the report is available for users to subscribe to and receive on a regular basis.

Deploy to Production

When there is an actual deployment to the production server, you will need to repeat many of the steps you went through to move the reports into test. These include schedules, snapshots, subscriptions, and email distribution lists. However, in most cases, the deployment to production has already taken place in the test step, so this step is more of an unveiling than anything else. This is especially true when the primary user interface with the reporting environment is through a web site or portal. In this case, the reports are not visible to the users until you make them available in the portal. On the other hand, if users access the reports through the Report Manager interface, the new directories and reports will appear as soon as you deploy them to the server (assuming they have appropriate access rights).

The BI portal is such a powerful tool for the DW/BI system that we explore it in detail in the following section. At this point, it is enough to say that if you are providing reports through a portal, you need to integrate this new set of reports into that portal as part of the production deployment.

An important part of deploying a report to production is setting the security for the report. Reporting Services has several options for managing security, as do the relational engine and Analysis Services. We discuss security in Chapter 12.

The BI Portal

In Chapter 8, we introduced the concept of the navigation portal as the organizing framework for the standard report set. Any time we use the word portal , it invokes visions of a major enterprise effort to collect and categorize all structured and unstructured information and make it available through a rich framework with intelligent search capabilities and the ability to personalize the experience. Building the enterprise information portal may be a useful and important task, but in most cases, it is someone elses task. For our purposes, think about the navigation portal as the web site for the DW/BI system: the BI portal.

The success of the DW/BI system is determined by whether or not the organization gets value out of it. For the organization to get value from the DW/BI system, people have to use it. Since the BI portal is the primary interaction most people have with the DW/BI system, the DW/BI team needs to do everything in its power to make sure the BI portal provides the best possible experience.

As you begin the design process, keep in mind that a significant component of the work a DW/BI team does is about managing organizational change (sometimes it feels like that is just another way to say politics). The BI portal plays a significant role in this change process, so it has to work at several levels. It must be:

-

Usable: People have to be able to find what they need.

-

Content-rich: It should include much more than just the reports. It should include as much support information, documentation, help, examples, and advice as possible.

-

Clean: It should be nicely laid out so people are not confused or overwhelmed by it.

-

Current: It needs to be someones job to keep the content up-to-date. No broken links or 12-month-old items labeled New! allowed.

-

Interactive: It should include functions that engage the users and encourage them to return to the portal. A good search tool, a metadata browser, maybe even a support-oriented news group are all ways for people to interact with the portal. A capability for users to personalize their report home page, and to save reports or report links to it, makes it directly relevant to them. It also helps to have new items appear every so often. Surveys, class notices, and even data problem warnings all help keep it fresh.

-

Value-oriented: This is the organizational change goal. We want everyone who comes to the BI portal to end up with the feeling that the DW/BI system is a valuable resource, something that helps them do a better job. In a way, the BI portal is one of the strongest marketing tools the DW/BI team has and you need to make every impression count.

In short, the design principles that apply to any good web site apply to the BI portal.

Planning the BI Portal

The process of creating the BI portal requires a careful balancing of two basic design principles: density and structure.

-

Density: The human mind can take in an incredible amount of information. The human eye is able to resolve images at a resolution of about 530 pixels per inch at a distance of 20 inches. Compare this with the paltry 72 pixels per inch resolution of the typical computer screen. Our brains have evolved to rapidly process all this information looking for the relevant elements. The browser gives us such a low-resolution platform that we have to use it as carefully and efficiently as possible. Every pixel counts.

-

Structure: Although we need to fill the BI portal home page with information, it doesnt work if we jam it full of hundreds of unordered descriptions and links. Your brain can handle all this information only if its well organized. For example, a typical major daily newspaper has an incredible amount of information but you can handle it because its structured in a way that helps you find what you need. At the top level, the paper is broken up into sections. If youre looking for certain kinds of information, you know which section to start with. Some readers look at every section, but most skip a few that they deem irrelevant to their lives. The next level down within each section may be divided into subsections, but the most common organizing structure is the headline. Headlines (at least non-tabloid headlines) attempt to communicate the content of the article in as few words as possible. These headlines are the relevant elements that allow readers to quickly parse through the newspaper to find information that is interesting to them.

| Reference | For interesting reading about image density, see the work of R. N. Clark:

Edward Tuftes work provides a good general reference for structure and information display, including:

|

Impact on Design

The idea of density translates to the BI portal in a couple of ways. Primarily, it means we flatten the information hierarchy. Categories are often represented as hierarchies in the browser. You see a list of choices, each representing a topic. Click on a topic, and youre taken to a page with another list of choices, and so on until you finally reach some content. Flattening the hierarchies means bringing as much information to the top-level pages as possible. Meaning that was captured in the hierarchy of pages is now collapsed down to an indented list of category and subcategory headings on a single page.

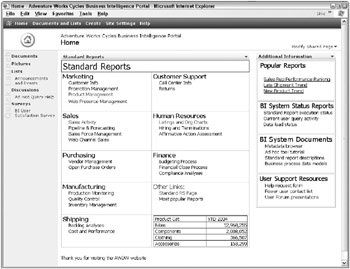

Figure 9.11 translates these concepts into the world of Adventure Works Cycles. The BI portal shown here demonstrates how two levels of report categories have been collapsed into one page. The portal is easy to navigate because you can identify major categories of information based on the headings and ignore them if they dont apply to your current needs, or examine them more closely if they seem relevant. Having the two levels on the same page actually gives the user more information because the two levels help define each other. For example, Sales helps group the sales- related subcategories together, but at the same time, each subcategory helps the user understand what activities are included in Sales.

Figure 9.11: The Adventure Works Cycles BI portal home page

Business Process Categories

Every word you include on the portalevery header, description, function, and linkall need to communicate what content people will find behind it. Generally, the best way to organize the portal is to use the organizations business processes as the main outline. Look at Figure 9.11 from a business process perspective. The left column under Standard Reports includes Adventure Works Cycles major business processes. You can also think about this as the organizations value chain. In Adventure Works Cycles case, Marketing and Sales business processes come early in the value chain, working to bring in new customers and new orders. Once the company has orders, they purchase materials from their suppliers, manufacture the bikes, and ship them out to the customers. Customer Support may interact with the customers at any point along the way, and even after the product has been shipped. There are also internal business processes that generate information that is useful across the organization, like headcount data from HR, or cost data from Finance.

Beyond business process categories, the BI portal needs to have a standard layout so people can easily find what theyre looking for. If your organization has a standard page layout that you can adapt for the BI portal, use it. Your users wont have to learn a new interface when they come to the BI portal.

Additional Functions

Although one of the main points of the BI portal is to provide access to the standard reports, it must offer much more than just reports. In addition to the categories and reports lists, you need to provide several common functions:

-

Search: The search tool serves as an alternative report locator if the business process categories arent helpful. A good search tool that indexes every report, document, and page on the BI web site can dramatically shorten the amount of time it takes a user to find what she wants.

-

Metadata browser: A metadata browser can be as simple as a few ASP.NET pages or even Reporting Services reports that allow the user to browse through the metadatas descriptions of the databases, schemas, tables, columns, business rules, load statistics, report usage, report content, and so on. In short, interested users can learn a lot about the DW/BI system through the metadata browser.

-

Newsgroup: It may make sense to host a support-oriented news group on the BI portal. This can be a good way for users to find help when they need it. It can also create a record of problems and their solutions for future reference. It takes a fairly large user community to generate the critical mass of activity needed to make a newsgroup successful.

-

Personalization: Users should be able to save reports or report links to their personal pages. This personalization can be a powerful incentive for people to return to the portal every day.

-

Information center: It helps keep things interesting to have new items appear on a regular basis. Offer a survey, have people sign up for tool training, or post a notice of an upcoming User Forum meeting.

There is also a whole set of support and administration content the BI portal needs to provide. This includes online training/tutorials, help pages, metadata browser, example reports, data cheat sheets, help request forms, and contact info for the DW/BI team. This information all goes in the lower-right corner, the least valuable real estate on the screen (at least for languages that read from left to right and top to bottom). We discuss this supporting content again in Chapters 14 and 15.

Creating and maintaining the BI portal is much more work than most DW/BI teams ever expect. The effort is worth it because the BI portal is the farthest-reaching tool the DW/BI team has for delivering value to the organization. It is also one of your best tools for marketing the DW/BI system.

SharePoint Portal Server

While it is possible to create a fine BI portal using FrontPage, several major enterprise portal players are on the market, offering an extended set of web functionality. Microsofts SharePoint Portal Server and the supporting Windows SharePoint Services provide local and enterprise-level portal capabilities. The BI portal shown in Figure 9.11 was built in the Windows SharePoint Services environment. These technologies allow you to add a whole range of functionality to your BI portal, including:

-

Embedding live reports on web pages

-

User personalization capabilities (my reports, custom layouts, and so on)

-

Advanced search across the portal environment

-

News and Topics controls to provide information to users

-

Web-based surveys

-

Discussion groups

-

Alerts to notify users when documents or other items of interest change

Reporting Operations

The deployment process included steps to tie the new reports in with existing reporting operations processes like schedules and distribution. These operations processes will also need to be maintained on an ongoing basis, independent of the introduction of new reports.

In addition to building the reports themselves, you may need to cause a set of reports to run automatically when the data load for a business process dimensional model is finished. Reporting Services isnt directly tied to the ETL system, but it does have a control structure called a subscription that can help. Create a set of subscriptions that the main load processes will kick off as part of the regular update process.

One way to accomplish this is to invoke the subscription from within the data load Integration Services package. The problem with this approach is it hard-codes the link between Integration Services and Reporting Services, making it difficult to maintain and enhance.

An alternative solution is to create a metadata layer between the two components . You can use a simple set of tables to drive the relationships between your ETL and reporting systems. The table-driven approach described in the associated sidebar is easy for an administrator to manage.

As you deploy new standard reports to the Report Server, add them to the appropriate schedules and create any system subscriptions to distribute the reports as needed. The DW/BI team will also need to maintain any data-driven subscriptions that involve individual users and email lists. The team may need to add and delete users from these lists as they come and go from the organization.

The same goes for other distribution mechanisms, like file shares. Computers and networks have a tendency to change. For example, the Accounting department may have requested a set of reports distributed to their file server. Then they get a new file server and turn off the old one without telling you. At this point, you have a subscription failing on a regular basis, and a set of users not receiving scheduled reports.

| |

A simple example of event-based notification involves a Reporting Services component and an Integration Services component, with a metadata table in between to define the linkages. On the Reporting Services side, you need to do the following:

-

Use Management Studio to create a shared schedule. Name the shared schedule in a way that describes the event it represents, like Orders Data Load Complete.

-

Link reports to the shared schedule by going to the Execution properties of the Properties tab of each report.

-

Set the Execution properties of the report to create a snapshot on the shared schedule created in Step 1 (for example, Orders Data Load Complete).

-

Set up subscriptions to distribute the report. To do this, go to the Subscriptions property tab of each report and create a new subscription set to Notify me when the report content is refreshed.

-

When the Shared schedule runs, it will cause all reports linked to it to execute, refreshing the snapshot contents and triggering the notification subscription.

-

The administrator could set up master subscriptions using the data-driven subscription feature to send reports to email distribution lists.

A table named LoadToScheduleMap sits between Reporting Services and Integration Services. The following figure shows how this table links a particular load name to any number of schedules. In the example dataset, the Orders Data Load Complete load is linked to three schedules. Each of these schedules is linked to several reports in the Reporting Services metadata. Fortunately, the linkage between reports and schedules is part of the Reporting Services infrastructure. Note that one of the LoadTypes is Test. This allows the Integration Services package to run in test mode to test the entire process. The schedule associated with the Test LoadType is linked to only a few reports that are sent to the developers. Without this flag, everyone will receive a full set of reports every time the IntegrationServices package is run, even if its a test run.

| LoadToScheduleMap | |

|---|---|

| PK PK | LoadID ScheduleID |

| LoadName LoadType ScheduleName ScheduleStatus ModifiedDate | |

| Load ID | Schedule ID | LoadName | LoadType | ScheduleName | ScheduleStatus | ModifiedDate |

|---|---|---|---|---|---|---|

| 23 | 47 | Orders Data Load Complete | Regular | Sales Org Orders Reports | Active | 7/29/2004 |

| 23 | 52 | Orders Data Load Complete | Regular | Product Orders Reports | Active | 8/17/2004 |

| 23 | 37 | Orders Data Load Complete | Test | Test Orders Reports | Active | 6/29/2004 |

| ... | ||||||

| The LoadToScheduleMap table and example rows | ||||||

On the Integration Services side, each load package should execute a shared Notification package at the end of a successful completion. The Integration Services package will need to pass a few parameters, including LoadName, LoadType, and CompletionTimestamp. See Chapter 6 for a discussion of how to communicate between Integration Services packages.

The Notification package uses the LoadName and LoadType to select the list of Schedules that need to be initiated from the LoadToScheduleMap table. It then runs a script that calls the rs.exe utility to set each schedule to run in the next few minutes. You may want to get clever and include a priority value in the LoadToScheduleMap table. The script could then stagger the start time for each schedule, depending on its priority. You can find an example of these scripts and data structures on the books web site at www.MsftDWToolkit.com .

| |

| | ||

| | ||

| | ||

EAN: N/A

Pages: 125

- Chapter VII Objective and Perceived Complexity and Their Impacts on Internet Communication

- Chapter X Converting Browsers to Buyers: Key Considerations in Designing Business-to-Consumer Web Sites

- Chapter XII Web Design and E-Commerce

- Chapter XIII Shopping Agent Web Sites: A Comparative Shopping Environment

- Chapter XVIII Web Systems Design, Litigation, and Online Consumer Behavior