7.2 Areas of Standardization


For some time, digital interface standards have existed for 525/59.94 and 625/50 4:2:2 component video and for 4Fsc composite video. More recently, digital interface standards have been set for a variety of HD scanning standards.

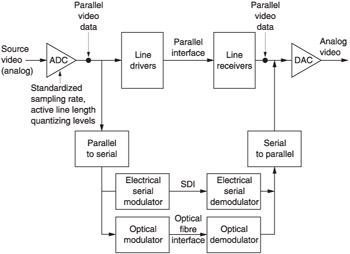

Digital interfaces need to be standardized in the following areas: connectors, to ensure plugs mate with sockets; pinouts; electrical signal specification, to ensure that the correct voltages and timing are transferred; and protocol, to ensure that the meaning of the data words conveyed is the same to both devices. As digital video of any type is only data, it follows that the same physical and electrical standards can be used for a variety of protocols. It also follows that the same protocol can be conveyed down a variety of physical channels. Figure 7.1 shows that serial, parallel and optical fibre interfaces may carry exactly the same data.

Figure 7.1: If a video signal is digitized in a standardized way, the resulting data may be electrically transmitted in parallel or serial or sent over an optical fibre.
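The point that the data are independent of the physical channel can be illustrated with a minimal sketch. The 10-bit word length, the LSB-first bit order and all function names here are assumptions made for illustration only; they are not taken from any interface standard.

```python
from typing import List, Tuple

WORD_BITS = 10  # assumed word length, for illustration only


def send_parallel(words: List[int]) -> List[Tuple[int, ...]]:
    """Model a parallel link: one tuple per clock, one 'wire' per bit."""
    return [tuple((w >> b) & 1 for b in range(WORD_BITS)) for w in words]


def receive_parallel(clocked: List[Tuple[int, ...]]) -> List[int]:
    """Rebuild each word from the wire states sampled on one clock."""
    return [sum(bit << b for b, bit in enumerate(wires)) for wires in clocked]


def send_serial(words: List[int]) -> List[int]:
    """Model a serial link: a single bitstream, LSB first (assumed order)."""
    return [(w >> b) & 1 for w in words for b in range(WORD_BITS)]


def receive_serial(bits: List[int]) -> List[int]:
    """Regroup the bitstream into words of WORD_BITS bits."""
    words = []
    for i in range(0, len(bits), WORD_BITS):
        chunk = bits[i:i + WORD_BITS]
        words.append(sum(bit << b for b, bit in enumerate(chunk)))
    return words


samples = [64, 512, 940, 3]  # arbitrary example sample values
via_parallel = receive_parallel(send_parallel(samples))
via_serial = receive_serial(send_serial(samples))
```

Both routes hand the receiver the same list of words, so whatever protocol gives those words their meaning is unaffected by the choice of channel.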

Parallel interfaces are obsolete, but they are worth studying because the parallel interface standard contains a comprehensive definition of how a television signal is described in the digital domain as a series of binary numbers. This definition includes such factors as how the image is described as a pixel array, the sampling rate needed to do that, how the colour is subsampled, the colorimetry and gamma assumed, the relationship between analog signal voltage and the value of the binary codes, and so on. The actual parallel interface usually consists of little more than a number of ECL line drivers and receivers, one for each bit, along with a clock. A serial interface requires some form of shift register to convert the word-format data into a bitstream, along with a channel coder to turn the bitstream into a self-clocking waveform compatible with the channel, which may be electrical or optical. The SD and HD serial interfaces are quite similar except, of course, for the bit rate.
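The shift register and channel coder described above can be sketched as follows. This is only an illustration: biphase-mark coding is used here because it is a simple self-clocking code, and it is not claimed to be the channel code of the SD or HD serial interfaces. The 10-bit word length, LSB-first order and function names are likewise assumptions.

```python
from typing import List


def shift_out(word: int, width: int = 10) -> List[int]:
    """Shift-register model: emit the bits of one word, LSB first (assumed)."""
    bits = []
    for _ in range(width):
        bits.append(word & 1)
        word >>= 1
    return bits


def biphase_mark_encode(bits: List[int], level: int = 0) -> List[int]:
    """Return two half-cell levels per bit.

    Every bit cell begins with a transition, which carries the clock;
    a 1 adds a second transition in the middle of the cell.
    """
    halves = []
    for bit in bits:
        level ^= 1          # transition at every cell boundary
        halves.append(level)
        if bit:
            level ^= 1      # extra mid-cell transition marks a 1
        halves.append(level)
    return halves


def biphase_mark_decode(halves: List[int]) -> List[int]:
    """A cell whose two halves differ is a 1; otherwise it is a 0."""
    return [int(halves[i] != halves[i + 1]) for i in range(0, len(halves), 2)]


word = 0b1000110101                      # arbitrary example word
bitstream = shift_out(word)
waveform = biphase_mark_encode(bitstream)
recovered = biphase_mark_decode(waveform)
```

Because the decoder only compares the two halves of each cell, the result is the same if the waveform is inverted, and the guaranteed transition at every cell boundary gives the receiver something to lock its clock to.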
