

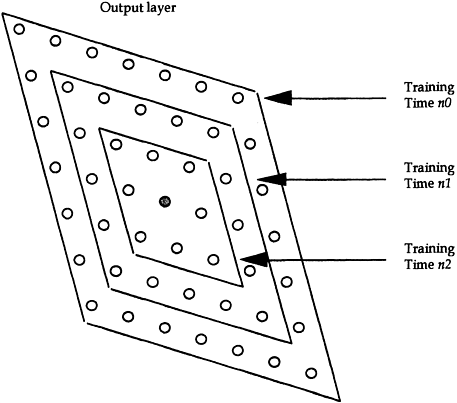

where j′ denotes the neighbourhood of j including j itself. The term γn is also a time-decaying function, and is defined by:

(3.15)

Usually, a value of γmin in the region of 1.0 is chosen, and γmax can be set sufficiently large to make the neighbourhood function βj′ (γn) cover the whole output layer.
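As an illustration of such a time-decaying term, the sketch below shrinks γ from γmax at the start of training to γmin at the final iteration. The geometric form used here is an assumption chosen for illustration, not necessarily the expression given in equation (3.15); the names `gamma_schedule` and `n_max` are likewise hypothetical.

```python
def gamma_schedule(n, n_max, gamma_max, gamma_min=1.0):
    """Assumed geometric decay of the neighbourhood width.

    Returns gamma_max at n = 0 and gamma_min at n = n_max.
    This form is an illustrative assumption, not the book's equation.
    """
    if n_max <= 0:
        raise ValueError("n_max must be positive")
    if gamma_max <= 0 or gamma_min <= 0:
        raise ValueError("gamma values must be positive")
    # Clamp training progress to the interval [0, 1].
    frac = min(max(n / n_max, 0.0), 1.0)
    return gamma_max * (gamma_min / gamma_max) ** frac


# Example: a 10-unit-wide neighbourhood narrowing over 100 iterations.
widths = [gamma_schedule(n, 100, gamma_max=10.0) for n in (0, 25, 50, 75, 100)]
```

With γmax set to roughly the width of the output layer, early iterations update almost every neurone, while late iterations affect little more than the winner.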

Equation (3.14) indicates that, for j′ = j, i.e. for the winning neurone itself, the updating magnitude for the associated weights is proportional to the learning rate α (because βj′ = βj = 1). For any other j′, the magnitude of the weight change decreases as the distance between the neighbouring neurone j′ and the winning neurone j increases. Figure 3.7 illustrates how the neighbourhood shrinks as training time increases.
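The behaviour described above can be sketched in a few lines. The example assumes a Gaussian neighbourhood function and the usual Kohonen-style update, in which each weight moves towards the input by α·β times the difference between them; both are assumptions made for illustration, and the function names are hypothetical. The only properties taken from the text are that β equals 1 at the winning neurone and falls with distance from it.

```python
import math


def neighbourhood(dist, gamma):
    """Assumed Gaussian neighbourhood: 1 at the winner, smaller further away."""
    return math.exp(-(dist ** 2) / (2.0 * gamma ** 2))


def update_weights(weights, positions, x, winner, alpha, gamma):
    """Return new weight vectors after one update step.

    weights   : list of weight vectors, one per output neurone
    positions : grid coordinates of the output neurones
    x         : input vector
    winner    : index j of the winning neurone
    """
    updated = []
    for w, pos in zip(weights, positions):
        dist = math.dist(pos, positions[winner])  # grid distance to the winner
        beta = neighbourhood(dist, gamma)
        # Move each weight towards the input, scaled by alpha * beta.
        updated.append([wi + alpha * beta * (xi - wi) for wi, xi in zip(w, x)])
    return updated


# Three neurones in a row, all weights at zero, winner at index 0.
positions = [(0, 0), (0, 1), (0, 2)]
weights = [[0.0, 0.0] for _ in positions]
new_weights = update_weights(weights, positions, x=[1.0, 1.0],
                             winner=0, alpha=0.5, gamma=1.0)
```

In this example the winner moves by exactly α times the input-weight difference, and its neighbours move by progressively smaller amounts.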

Different values of the functions γ and α produce different effects on the

Figure 3.7 Modification of the weights of those neurones around a selected neurone begins with a wide neighbourhood that decreases in size as training time increases (n2 > n1 > n0).



Pages: 354