Section 3.10. The Kernel Sleep/Wakeup Facility


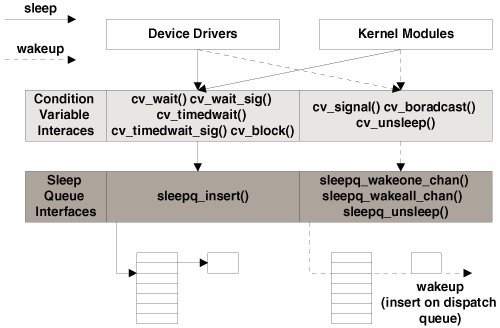

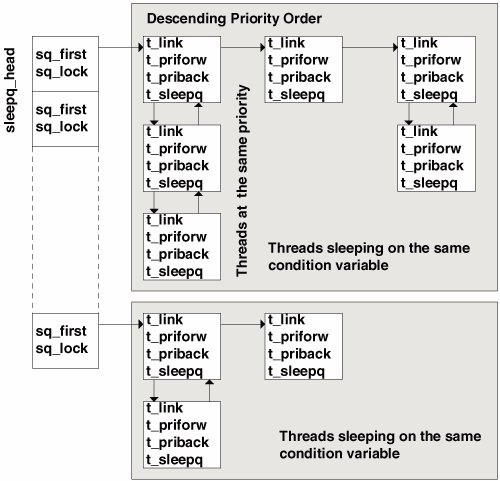

The typical lifetime of a thread includes not only execution time on a processor but also time spent waiting for requested resources to become available. An obvious example is a read or write from disk: the thread issues the read(2) or write(2) system call, then sleeps so another thread can make use of the processor while the I/O is being processed by the kernel. Once the I/O has been completed, the kernel wakes up the thread so it can continue its work.

Threads that are runnable and waiting for a processor reside on dispatch queues. Threads that must block, waiting for an event or resource, are placed on sleep queues. A thread is placed on a sleep queue when it needs to sleep, awaiting availability of a resource (for example, a mutex lock, reader/writer lock, etc.) or awaiting some service by the kernel (for example, a system call). The sleep queues implemented in the kernel vary somewhat, although they all use the same underlying sleep queue structures. Turnstiles are implemented with sleep queues and are used specifically for sleep/wakeup support in the context of priority inheritance, mutex locks, and reader/writer locks. Threads put to sleep for something other than a mutex or reader/writer lock are placed on the system's sleep queues.

3.10.1. Condition Variables

The underlying synchronization primitive used for sleep/wakeup in Solaris is the condition variable. Condition variables are always used in conjunction with mutex locks. A condition variable call is issued according to whether a specific condition is either true or false. The mutex ensures that the tested condition cannot be altered during the test and maintains state while the kernel thread is being set up to block on the condition. Once the condition variable code is entered and the thread is safely on a sleep queue, the mutex can be released.
This is why all entry points to the condition variable code are passed the address of the condition variable and the address of the associated mutex lock.

In implementation, condition variables are data structures that identify an event or a resource for which a kernel thread may need to block and are used in many places around the operating system.

    /*
     * Condition variables.
     */
    typedef struct _condvar_impl {
            ushort_t        cv_waiters;
    } condvar_impl_t;

    #define CV_HAS_WAITERS(cvp)     (((condvar_impl_t *)(cvp))->cv_waiters != 0)

See usr/src/uts/common/sys/condvar_impl.h

The condition variable itself is simply a 2-byte (16-bit) data type with one defined field, cv_waiters, that stores the number of threads waiting on the specific resource the condition variable has been initialized for. The implementation is such that the various kernel subsystems that use condition variables declare a condition variable data type with a unique name either as a stand-alone data item or embedded in a data structure. Try running grep(1) for kcondvar_t in the /usr/include/sys directory, and you'll see dozens of examples of condition variables. A generic kernel cv_init() function sets the condition variable to all zeros during the initialization phase of a kernel module. Other kernel-level condition variable interfaces are defined and called by different areas of the operating system to set up a thread to block on a particular event and to insert the kernel thread on a sleep queue.

At a high level, the sleep/wakeup facility works as follows. At various points in the operating system code, conditional tests are performed to determine if a specific resource is available. If it is not, the code calls any one of several condition variable interfaces, such as cv_wait(), cv_wait_sig(), cv_timedwait(), cv_wait_stop(), etc., passing a pointer to the condition variable and mutex. This sequence is represented in the following small pseudocode segment.

    kernel_function()
        mutex_init(&resource_mutex);            /* one-time setup */
        cv_init(&resource_cv);
        mutex_enter(&resource_mutex);
        while (resource is not available)       /* retest after every wakeup */
            cv_wait(&resource_cv, &resource_mutex);
        consume resource
        mutex_exit(&resource_mutex);

These interfaces provide some flexibility in altering behavior as determined by the condition the kernel thread must wait for. Ultimately, the cv_block() interface is called; this is the kernel routine that actually sets the t_wchan value in the kernel thread and calls sleepq_insert() to place the thread on a sleep queue. The t_wchan, or wait channel, contains the address of the condition variable that the thread is blocking on. This address is listed in the WCHAN column in the output of a ps -efl command.

The notion of a wait channel or wchan is something that's familiar to folks who have been around UNIX for a while. Traditional implementations of UNIX maintained a wchan field in the process structure, and it was always related to an event or resource the process was waiting for (why the process was sleeping). Naturally, in the Solaris multithreaded model, we moved the wait channel into the kernel thread, since kernel threads execute independently of other kernel threads in the same process and can execute system calls and block.

When the event or resource that the thread was sleeping on is made available, the kernel uses the condition variable facility to alert the sleeping thread (or threads) and to initiate a wakeup, a process that moves the thread from a sleep queue to a processor's dispatch queue. Figure 3.12 illustrates the sleep/wake process.

Figure 3.12. Sleep/Wakeup Flow

3.10.2. Sleep Queues

Sleep queues are organized as a linked list of kernel threads, each linked list rooted in an array referenced through a sleepq_head kernel pointer, which references a doubly linked sublist of threads at the same priority. A hashing function indexes the sleepq_head array, hashing on the address of the condition variable.
The singly linked list that establishes the beginning of the doubly linked sublists of kthreads at the same priority is also in ascending order of priority. The sublist is implemented by t_priforw (forward pointer) and t_priback (previous pointer) in the kernel thread. Also, a t_sleepq pointer points back to the array entry in sleepq_head, identifying which sleep queue the thread is on and also affording a quick method to determine if a thread is on a sleep queue at all. (If t_sleepq == NULL, the thread is not on a sleep queue.)

The number of kernel interfaces to the sleep queue facility is minimal. Only a few operations are performed on sleep queues: inserting a kernel thread on a sleep queue (putting a thread to sleep), removing a thread from a sleep queue (waking a thread up), and traversing the sleep queue in search of a kernel thread. There are interfaces that let us wake one thread only or all threads sleeping on the same condition variable.

Insertion of a thread simply involves indexing into the sleepq_head array to find the appropriate sleep queue specified by the condition variable address, then traversing the list, checking thread priorities along the way to determine the proper insertion point. If a sublist for the thread's priority already exists (at least one kernel thread at the same priority), the thread is added to that sublist; if no other thread on the sleep queue has the same priority, a new sublist is started. In either case, the kernel thread is inserted and the pointers are set up properly.

The removal of a kthread involves either searching for and removing a specific thread that has been specified by the code calling into sleepq_dequeue() or sleepq_unsleep(), or waking up all the threads blocking on a particular condition variable.
Waking up all threads or a specified thread is relatively straightforward: the code hashes into the sleepq_head array specified by the address of the condition variable, and walks the list, either waking up each thread or searching for a particular thread and waking the targeted thread. In case a single, unspecified thread needs to be removed, the code implements the list as a FIFO (First In, First Out), so the kthread that has been sleeping the longest on a condition variable is selected for wakeup first.

Figure 3.13. Sleep Queues

3.10.3. The Sleep Process

Now that we've introduced condition variables and sleep queues, let's tie them together to form the complete sleep/wakeup picture in Solaris. The interfaces to the sleep queue (sleepq_insert(), etc.) are, for the most part, called only from the condition variables and turnstiles subsystems. The process of putting a thread to sleep begins with a call into the condition variable code wait functions, one of cv_wait(), cv_wait_sig(), cv_wait_sig_swap(), cv_timedwait(), or cv_timedwait_sig(). Each of these functions is passed the condition variable and a mutex lock. They all ultimately call cv_block() to prepare the thread for sleep queue insertion. cv_wait() is the simplest condition variable sleep interface; it grabs the dispatcher lock for the kthread and invokes the class-specific sleep routine (for example, ts_sleep()).

The timed variants of the cv_wait() routines take an additional time argument, ensuring that the thread will be woken up when the time value expires if it has not yet been removed from the sleep queue. cv_timedwait() and cv_timedwait_sig() use the kernel callout facility for handling the timer expiration. The realtime_timeout() interface is used and places a high-priority timeout on the kernel callout queue. The setrun() function is placed on the callout queue, along with the kernel thread address and time value.
When the timer expires, setrun(), followed by the class-specific setrun function (for example, rt_setrun()), executes on the sleeping thread, making it runnable and placing it on a dispatch queue.

The sig variants of the condition variable code, cv_wait_sig(), cv_timedwait_sig(), etc., are designed for potentially longer-term waits, when it is desirable to test for pending signals before the actual wakeup event. These variants return 0 to the caller if a signal has been posted to the kthread. The swap variant, cv_wait_sig_swap(), can be used if it is safe to swap out the sleeping thread while it's sleeping.

The various condition variable routines are summarized below. Note that all functions described below release the mutex lock after cv_block() returns, and they reacquire the mutex before the function itself returns.

All of the above entry points into the condition variable code call cv_block() or cv_block_sig(), which just sets the T_WAKEABLE flag in the kernel thread and then calls cv_block(). cv_block() does some additional checking of various state flags and invokes the scheduling-class-specific sleep function through the CL_SLEEP() macro, which resolves to ts_sleep() for a TS/IA class thread. The intention of the ts_sleep() code is to boost the priority of the sleeping thread to a SYS priority if such a boost is flagged. As a result, the kthread is placed in an optimal position on the sleep queue for early wakeup and quick rescheduling when the wakeup occurs. Otherwise, the priority is reset according to how long the thread has been waiting to run.

The ts_sleep() function is the most complex of all the class xx_sleep() routines, so we start with ts_sleep(). The assignment of a SYS priority to the kernel thread is not guaranteed every time ts_sleep() is entered. Flags in the kthread structure, along with the kthread's class-specific data (ts_data in the case of a TS class thread), specify whether a kernel mode (SYS) priority is required. A SYS class priority is flagged if the thread is holding either a reader/writer lock or a page lock on a memory page. For most other cases, a SYS class priority is not required and thus will not be assigned to the thread. RT class threads do not have a class sleep routine; because they are fixed-priority threads, there's no priority adjustment work to do. The ts_sleep() function is represented in the following pseudocode.

    ts_sleep()
        if (SYS priority requested)             /* t_kpri_req flag */
            set TSKPRI flag in kthread
            set t_pri to requested SYS priority /* tpri = ts_kmdpris[arg] */
            set kthread trap return flag        /* t_trapret */
            set thread ast flag                 /* t_astflag */
        else if (ts_dispwait > ts_maxwait)      /* has the thread been waiting long */
            calculate new user mode priority
            set ts_timeleft = ts_dptbl[ts_cpupri].ts_quantum
            set ts_dispwait = 0
            set new global priority in thread (t_pri)
            if (thread priority < max priority on dispatch queue)
                call cpu_surrender()            /* preemption time */
        else if (thread is already at a SYS priority)
            set thread priority to TS class priority
            clear TSKPRI flag in kthread
            if (thread priority < max priority on dispatch queue)
                call cpu_surrender()            /* preemption time */

The thread priority setting in ts_sleep() in the second code segment above is entered if the thread has been waiting an inordinate amount of time to run, as determined by ts_dispwait in the ts_data structure and by the ts_maxwait value from the dispatch table, as indexed by the current user-mode priority, ts_umdpri.

The code returns to cv_block() from ts_sleep(), where the thread's t_wchan is set to the address of the condition variable and the thread's t_sobj_ops is set to the address of the condition variable's operations structure.

    /*
     * Type-number definitions for the various synchronization
     * objects defined for the system. The numeric values
     * assigned to the various definitions begin with zero, since
     * the synch-object mapping array depends on these values.
     */
    #define SOBJ_NONE       0   /* undefined synchronization object */
    #define SOBJ_MUTEX      1   /* mutex synchronization object */
    #define SOBJ_RWLOCK     2   /* readers/writer synchronization object */
    #define SOBJ_CV         3   /* cond. variable synchronization object */
    #define SOBJ_SEMA       4   /* semaphore synchronization object */
    #define SOBJ_USER       5   /* user-level synchronization object */
    #define SOBJ_USER_PI    6   /* user-level sobj having Prio Inheritance */
    #define SOBJ_SHUTTLE    7   /* shuttle synchronization object */

    /*
     * The following data structure is used to map
     * synchronization object type numbers to the
     * synchronization object's sleep queue number
     * or the synch. object's owner function.
     */
    typedef struct _sobj_ops {
            int             sobj_type;
            kthread_t       *(*sobj_owner)();
            void            (*sobj_unsleep)(kthread_t *);
            void            (*sobj_change_pri)(kthread_t *, pri_t, pri_t *);
    } sobj_ops_t;

See usr/src/uts/common/sys/sobject.h

This is a generic structure that is used for all types of synchronization objects supported by the operating system. Note the types in the header file; they describe mutex locks, reader/writer locks, semaphores, condition variables, etc. Essentially, this object provides a placeholder for a few routines that are specific to the synchronization object and that may require invocation while the kernel thread is sleeping. In the case of condition variables (our example), the sobj_ops structure is populated with the address of the cv_owner(), cv_unsleep(), and cv_change_pri() functions, with the sobj_type field set to SOBJ_CV. The address of this structure is what the kthread's t_sobj_ops field is set to in the cv_block() code.

With the kthread's wait channel and synchronization object operations pointers set appropriately, the correct sleep queue is located by use of the hashing function on the condition variable address to index into the sleepq_head array. Next, the cv_waiters field in the condition variable is incremented to reflect another kernel thread blocking on the object, and the thread state is set to TS_SLEEP. Finally, the sleepq_insert() function is called to insert the kernel thread into the correct position (based on priority) in the sleep queue.
The kthread is now on a sleep queue in a TS_SLEEP state, waiting for a wakeup.

The remaining xx_sleep() functions are actually quite simple. For FSS class threads, a SYS priority is set if t_kpri_req was true or the FSSKPRI flag was set in fss_flags (which would happen for the same reason as the TS/IA case: a RW lock or memory page lock is being held by the thread). Otherwise, the function just sets the thread's t_stime field (sleep time) and returns. The FX class is much the same. The RT class does not implement a sleep function; RT class threads are already at a priority higher than the SYS class, so there's no reason to request a SYS class priority for an RT thread.

3.10.4. The Wakeup Mechanism

For every cv_wait() (or variant) call on a condition variable, a corresponding wakeup call uses cv_signal(), cv_broadcast(), or cv_unsleep(). cv_signal() wakes up one thread, and cv_broadcast() wakes up all threads sleeping on the same condition variable. Here is the sequence of wakeup events.

The ts_wakeup() code puts the kernel thread back on a dispatch queue so that it can be scheduled for execution on a processor. Threads that have a kernel mode priority (as indicated by the TSKPRI flag in the class-specific data structure, which is set in ts_sleep() if a SYS priority is assigned) are placed at the front of the appropriate dispatch queue. IA class threads also result in setfrontdq() being called; otherwise, setbackdq() is called to place the kernel thread at the back of the dispatch queue.

Threads that are not at a SYS priority are tested to see if their wait for a shot at getting scheduled is longer than the time value set in the ts_maxwait field in the dispatch table for the thread's priority level. If the thread has been waiting an inordinate amount of time to run (if ts_dispwait > ts_dptbl[priority].ts_maxwait), then the thread's priority is recalculated with the ts_slpret value from the dispatch table. This is essentially the same logic used in ts_sleep() and gives a priority boost to threads that have spent an inordinate amount of time on the sleep queue.

For RT and FX class threads, the class wakeup function is very simple: we just reset the time quantum for the thread and call setbackdq() to insert the thread at the back of a dispatch queue.

The fss_wakeup() code is also relatively simple. If the thread already has a SYS priority, we call setbackdq(). If the thread has a kernel priority request flag (t_kpri_req), we boost the thread's priority to a SYS priority, and call setbackdq(). Otherwise, we just recalculate the priority and call setbackdq().

It is in the dispatcher queue insertion code (setfrontdq(), setbackdq()) that the thread state is switched from TS_SLEEP to TS_RUN. It's also in these functions that we determine if the thread we just placed on a queue is of a higher priority than the currently running thread and if so, force a preemption.
At this point, the kthread has been woken up and is sitting on a dispatch queue, ready to get context-switched onto a processor when the dispatcher swtch() function runs again and the newly inserted thread is selected for execution.

For monitoring sleep events, have a look at /usr/demo/dtrace/whatfor.d, which uses the sched provider's off-cpu probe to track when a thread is going to sleep, and aggregates on the type of synchronization object, so we get an idea of how much time was spent sleeping on each type of synchronization object.
