CREATING NORMALS OUT OF GEOMETRY


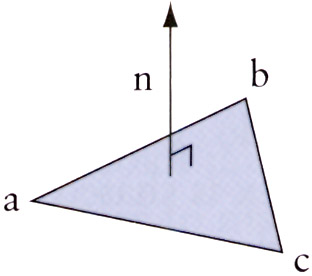

One practical use of the cross product is to create normals for your objects if they don't have them. Suppose you have a triangle with three vertices: a, b, and c (Figure 2.7). You can create two direction vectors by calculating a − b and a − c, and then calculate the normal vector, n, by taking the cross product of the two vectors.

Figure 2.7: The normal n of a triangle.

So, first we create two direction vectors, v1 = a − b and v2 = a − c, from the three points.

Then we create the normal from the cross product of these two vectors. The order of the operands matters: if we cross from ac to ab, we'll get the normal pointing up out of the triangle, as shown in Figure 2.7. If we did it the other way, we'd get the normal pointing out of the bottom of the triangle.


Finally, you'll probably want to normalize the normal vector.
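The three steps above (build two edge vectors, cross them, normalize) can be sketched in a few lines of Python. This is only an illustration: the helper names `subtract`, `cross`, `normalize`, and `face_normal` are made up for this example, and the operand order follows the text's "from ac to ab" wording. Whether that order gives the outward-facing normal for your own mesh depends on its winding order and coordinate system, so treat the order as an assumption to verify.

```python
import math

def subtract(p, q):
    """Component-wise p - q for 3D points given as (x, y, z) tuples."""
    return (p[0] - q[0], p[1] - q[1], p[2] - q[2])

def cross(u, v):
    """Cross product u x v of two 3D vectors."""
    return (u[1] * v[2] - u[2] * v[1],
            u[2] * v[0] - u[0] * v[2],
            u[0] * v[1] - u[1] * v[0])

def normalize(v):
    """Scale v to unit length; a zero vector means a degenerate triangle."""
    length = math.sqrt(v[0] * v[0] + v[1] * v[1] + v[2] * v[2])
    if length == 0.0:
        raise ValueError("degenerate triangle: cannot normalize a zero vector")
    return (v[0] / length, v[1] / length, v[2] / length)

def face_normal(a, b, c):
    """Unit face normal of triangle (a, b, c).

    Assumption: the cross is taken "from ac to ab", i.e. (a - c) x (a - b).
    Swap b and c (or the cross operands) to flip the normal.
    """
    v1 = subtract(a, b)   # direction vector a - b
    v2 = subtract(a, c)   # direction vector a - c
    return normalize(cross(v2, v1))

# Example triangle lying in the xy plane.
n = face_normal((0.0, 0.0, 0.0), (0.0, 1.0, 0.0), (1.0, 0.0, 0.0))
```

Passing the same three points with b and c swapped produces the opposite normal, which is the "other way" case described above.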

Vertex Normals vs. Face Normals

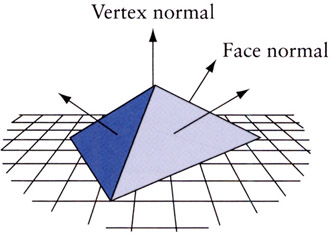

When creating normal vectors from geometry, you frequently don't want the normal that we've calculated here, which is called a face normal (since it's the normal of the triangle's surface, or face). Instead, you want normals for the individual vertices. This is a bit more work: you start with the face normals, find all the faces in which a given vertex is used, and then average the normals from those faces, possibly with some sort of weighting function thrown in. Once you have an averaged normal, you store it as the vertex's normal (Figure 2.8).

Figure 2.8: Vertex normals and face normals.
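A minimal sketch of the averaging step, assuming an indexed triangle list (`positions` is a list of (x, y, z) tuples and `triangles` is a list of index triples). The function name `compute_vertex_normals` and the data layout are assumptions for this example, and the average here is unweighted; an area or angle weighting could be substituted where the face normal is accumulated.

```python
import math

def compute_vertex_normals(positions, triangles):
    """Average the unit face normals of every face that uses each vertex."""
    sums = [[0.0, 0.0, 0.0] for _ in positions]

    for i, j, k in triangles:
        a, b, c = positions[i], positions[j], positions[k]
        v1 = [a[n] - b[n] for n in range(3)]   # a - b
        v2 = [a[n] - c[n] for n in range(3)]   # a - c
        # Assumption: cross "from ac to ab", i.e. v2 x v1.
        fn = [v2[1] * v1[2] - v2[2] * v1[1],
              v2[2] * v1[0] - v2[0] * v1[2],
              v2[0] * v1[1] - v2[1] * v1[0]]
        length = math.sqrt(sum(x * x for x in fn))
        if length == 0.0:
            continue  # skip degenerate (zero-area) triangles
        # Accumulate the unit face normal on each of the face's vertices.
        for idx in (i, j, k):
            for n in range(3):
                sums[idx][n] += fn[n] / length

    normals = []
    for s in sums:
        length = math.sqrt(sum(x * x for x in s))
        if length == 0.0:
            normals.append((0.0, 0.0, 0.0))  # unused vertex or cancelling faces
        else:
            normals.append(tuple(x / length for x in s))
    return normals

# Two triangles meeting at a right angle along the edge between vertices 0 and 1.
positions = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0)]
triangles = [(0, 2, 1), (0, 1, 3)]
vertex_normals = compute_vertex_normals(positions, triangles)
```

In this example the two shared vertices end up with a normal halfway between the two face normals, while the unshared vertices keep the normal of their single face.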

This is fine for models with smoothly varying surfaces, but for models with sharp angles, there's more work to do. Typically, there's some sort of crease angle cutoff: if a vertex is shared between faces and the angle between those faces is too large, as at a crease or a point (imagine the vertex at the corner of a cube), then the vertex needs to be assigned to just one set of faces. Alternatively, the vertex needs to be duplicated into two or more vertices that share the same position, with each copy assigned the averaged normal of one set of faces: one copy uses the average from the faces on one side of the crease, and the other copies use the normals from the faces that were over the crease angle.
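One possible way to apply a crease angle cutoff, sketched under stated assumptions: instead of storing a single normal per vertex, compute one normal per face corner, averaging only the neighboring face normals that lie within the crease angle of that corner's own face. Emitting a separate normal per corner has the same effect as duplicating the vertex. The function name `corner_normals`, its parameters, and the small tolerance are hypothetical, and `face_normals` is assumed to hold precomputed unit-length normals, one per triangle.

```python
import math

def corner_normals(triangles, face_normals, crease_angle_deg):
    """Return, for each triangle, a list of three per-corner unit normals."""
    # Faces whose normals differ by more than the crease angle are not blended.
    cos_limit = math.cos(math.radians(crease_angle_deg)) - 1e-9

    # Map each vertex index to the faces that use it.
    faces_of_vertex = {}
    for f, tri in enumerate(triangles):
        for v in tri:
            faces_of_vertex.setdefault(v, []).append(f)

    result = []
    for f, tri in enumerate(triangles):
        nf = face_normals[f]
        corners = []
        for v in tri:
            total = [0.0, 0.0, 0.0]
            for g in faces_of_vertex[v]:
                ng = face_normals[g]
                dot = nf[0] * ng[0] + nf[1] * ng[1] + nf[2] * ng[2]
                if dot >= cos_limit:          # within the crease angle
                    for n in range(3):
                        total[n] += ng[n]
            length = math.sqrt(sum(x * x for x in total))
            if length == 0.0:
                corners.append(tuple(nf))     # fall back to the face normal
            else:
                corners.append(tuple(x / length for x in total))
        result.append(corners)
    return result

# Two faces meeting at 90 degrees along the edge between vertices 0 and 1.
triangles = [(0, 2, 1), (0, 1, 3)]
face_normals = [(0.0, 0.0, 1.0), (0.0, 1.0, 0.0)]
sharp = corner_normals(triangles, face_normals, 30.0)    # edge stays creased
smooth = corner_normals(triangles, face_normals, 120.0)  # edge is smoothed
```

With the 30 degree cutoff every corner keeps its own face normal, so the shared edge renders as a hard crease; with the 120 degree cutoff the corners at the shared vertices receive the blended normal.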

Another creative example of using a cross product is given by [VERTH 2001]. If you are an object traveling on a reasonably level xy plane in direction d1 and you want to turn to direction d2, then you can examine the z value of the cross product d1 × d2: if it's positive, you'll turn left; if it's negative, you'll turn right.
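As a small sketch of the turning test, only the z component of the cross product is needed when both directions lie in the xy plane. The function name `turn_direction` and the returned strings are made up for this example, and the left/right reading assumes a right-handed coordinate system viewed from the positive z side.

```python
def turn_direction(d1, d2):
    """Decide which way to turn from heading d1 toward heading d2.

    d1 and d2 are (x, y) direction vectors in the ground plane.
    Assumes a right-handed system viewed from +z, so a positive z
    component of d1 x d2 means d2 lies counterclockwise from d1.
    """
    z = d1[0] * d2[1] - d1[1] * d2[0]   # z component of d1 x d2
    if z > 0:
        return "left"
    if z < 0:
        return "right"
    return "collinear"  # same or exactly opposite heading

# Heading along +x and wanting to face +y means turning left.
direction = turn_direction((1.0, 0.0), (0.0, 1.0))
```

A zero z value means the two directions are parallel, so the cross product alone cannot distinguish "keep going" from "turn around"; a dot product would be needed to separate those cases.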

Though you might not be using the cross product in a shader, you'll typically use it to calculate other vectors that are required at some point.
