The Ethics of Persuasive Technology

Overview

An advertising agency creates a Web site that lets children play games online with virtual characters. To progress, kids must answer questions[1] such as, “What is your favorite TV show?” and “How many bathrooms are in your house?”[2] Kids provide these answers quickly in their quest to continue playing.[3]

Kim’s portable device helps her choose affordable products made by companies with good environmental records. In advising Kim on buying a new printer, the system suggests a more expensive product than she wanted. It shows how the company has a much better environmental record. Kim buys the recommended printer. The system fails to point out that the printer she bought will probably break down more often.

Julie has been growing her retirement fund for almost 20 years. To optimize her investment strategy, she signs up for a Web-based service that reportedly can give her individualized expert advice. Using dramatic visual simulations and citing expert opinion, the system persuades Julie to invest more in the stock market and strongly recommends a particular stock. Two months later, the stock drops dramatically and Julie loses much of her hard-earned retirement money. Although the system has information about risk, the information isn’t prominently displayed. Nor is the fact that the site is operated by a company with a major financial investment in the company issuing the stock.

Mark is finally getting back into shape. At the gym he’s using a computerized fitness system that outlines his training routines, monitors each exercise, and acknowledges his progress. The system encourages people to use a full range of motion when lifting weights. Unfortunately, the range of motion it suggests is too extreme for Mark, and he injures his back.

As the hypothetical scenarios above suggest, persuasive technology can raise a number of ethical concerns. It combines two controversial domains, persuasion and technology, each with its own history of moral debate. Debate over the ethics of persuasion dates back to Aristotle and other classical rhetoricians, and the discussion continues to this day. As for technology and ethics, people have expressed misgivings about certain computer applications[4] at least since Joseph Weizenbaum created ELIZA, the computer “therapist” described in Chapter 5.[5]

Is persuasion unethical? That depends. Can it be unethical? Clearly, the answer is yes.

Examining ethical issues is a key component of captology, the study of persuasive technology. When is persuasive technology ethical and when is it not? Because values vary from one culture to the next, there is no easy answer that will satisfy everyone, no single ethical system or set of guidelines that will serve in all cases. The key for those who design, study, or use persuasive technologies is to become sensitive to the range of ethical issues involved. The purpose of this chapter is to provide a foundation for identifying and examining those issues.

[1]Passed by Congress in 1998, the Children’s Online Privacy Protection Act (COPPA) established strict privacy guidelines for child-oriented Web sites. Final rules on COPPA, drafted by the Federal Trade Commission (FTC) in 1999, became enforceable in April 2000. In April of 2002, the Federal Trade Commission reached a settlement with the Ohio Art Company, the maker of the Etch-A-Sketch toy, which was violating the Children’s Online Privacy Protection Rule. The company agreed to modify its data collection practices and pay a civil penalty of $35,000. For more, see http://www.ftc.gov/opa/2002/04/coppaanniv.htm.

[2]The number of bathrooms in a home is a rough indicator of socioeconomic status.

[3]The idea for this concept came from Stanford captology students exploring the dark side of persuasive technologies. The students were Peter Westen, Hannah Goldie, and David Li.

[4]See the following online bibliographies for information about computer ethics:

http://courses.cs.vt.edu/~cs3604/lib/Bibliography/Biblio.acm.html

http://www.rivier.edu/faculty/htavani/biblio.htm

http://www.cs.mdx.ac.uk/harold/srf/justice.html.

Professional societies have developed ethical codes for their members. Below are some codes relating to psychology and to computer science:

American Psychological Association (APA) Ethics Code Draft for Comment: http://anastasi.apa.org/draftethicscode/draftcode.cfm#toc

American Psychological Association (APA) Ethics Code (1992): http://www.apa.org/ethics/code.html

Association for Computing Machinery (ACM) Code of Ethics and Professional Conduct: http://www.acm.org/constitution/code.html

Australian Computer Society (ACS) Code of Ethics: http://www.acs.org.au/national/pospaper/acs131.htm

For printed material on computer ethics, see the following:

a. B. Friedman, Human Values and the Design of Computer Technology (Stanford, CA: CSLI Publications, 1997).

b. D. Gotterbarn, K. Miller, and S. Rogerson, Software engineering code of ethics, Communications of the ACM, 40(11): 110–118 (1997).

[5]Joseph Weizenbaum, Computer Power and Human Reason: From Judgment to Calculation (San Francisco, CA: W.H. Freeman, 1976).

Is Persuasion Unethical?

Is persuasion inherently unethical? The answer to this question depends on whom you ask. Some people believe that attempting to change another person’s attitudes or behaviors always is unethical, or at least questionable. In the extreme, this view holds that persuasion can lead to indoctrination, coercion, brainwashing, and other undesirable outcomes. Even some notable health promotion experts have questioned the foundation of their work, wondering what right they have to tell others how to live and what to believe.[6]

Other people view persuasion as fundamentally good. To some, persuasion is the foundation of ethical leadership,[7] while others see persuasion as essential for participatory democracy.[8]

Can persuasion be unethical? The answer clearly is yes. People can use persuasion to promote outcomes that we as a culture find unacceptable: persuading teens to smoke, advocating that people use addictive drugs[9], persuading people to harm those who are different in race, gender, or belief. Persuasion also is clearly unethical when the tactics used to persuade are deceptive or compromise other positive values. I’ll revisit both of these concepts later in this chapter.

In the end, the answer to the question “Is persuasion unethical?” is neither yes nor no. It depends on how persuasion is used.

[6]Health interventions, Guttman (1997) argues, can do damage when experts imply that others have weak character, when experts compromise individual autonomy, or when they impose middle-class values. As Guttman writes, “Interventions by definition raise ethical concerns” (p. 109). Guttman further states that ethical problems arise when “values emphasized in the intervention [are] not fully compatible with values related to cultural customs, traditions, and some people’s conceptions of what is enjoyable or acceptable” (p. 102). In short, persuasion can become paternalism. See N. Guttman, Beyond strategic research: A value-centered approach to health communication interventions, Communication Theory, 7(2): 95–124 (1997).

For more on the ethics of interventions, see the following:

- C. T. Salmon, Campaigns for social “improvement”: An overview of values, rationales, and impacts, in C. T. Salmon (ed.), Information Campaigns: Balancing Social Values and Social Change (Newbury Park, CA: Sage, 1989).

- K. Witte, The manipulative nature of health communication research: Ethical issues and guidelines, American Behavioral Scientist, 38(2): 285–293 (1994).

[7]R. Greenleaf, Servant (Peterborough, NH: Windy Row Press, 1980).

[8]R. D. Barney and J. Black, Ethics and professional persuasive communications, Public Relations Review, 20(3): 233–248 (1994).

[9]For example, Opioids.com is a site that at one time rationally argued for use of controlled substances.

Unique Ethical Concerns Related to Persuasive Technology

Because persuasion is a value-laden activity, creating an interactive technology designed to persuade also is value laden.

I teach a module on the ethics of persuasive technology in my courses at Stanford. One assignment I give students is to work in small teams to develop a conceptual design for an ethically questionable persuasive technology—the more unethical the better. The purpose is to let students explore the dark side of persuasive technology to help them understand the implications of future technology and how to prevent unethical applications or mitigate their impact. After teaching this course for a number of years, I’ve come to see certain patterns in the ethical concerns that arise. The information I offer in this chapter comes from working with students in this way, as well as from my own observations of the marketplace and investigations into the possibilities of future technologies.

For the most part, the ethical issues relating to persuasive technologies are similar to those for persuasion in general. However, because interactive technology is a new avenue of persuasion, it raises a handful of ethical issues that are unique. Below are six key issues, each of which has implications for assessing the ethics of persuasive technology.

The Novelty of the Technology Can Mask Its Persuasive Intent

While people have been persuaded by other forms of media for generations, most people are relative novices when it comes to dealing with persuasion from interactive computing systems—in part because the technologies themselves are so new. As a result, people may be unaware of the ways in which interactive computer technology can be designed to influence them, and they may not know how to identify or respond to persuasion tactics applied by the technology.

Sometimes the tactics can be subtle. Volvo Ozone Eater (Figure 9.1), an online game produced for the Swedish automaker, provides an example. In this Pacman-like game, players direct a blue Volvo around a city block. Other cars in the simulation give off exhaust and leave pink molecules behind—ozone. As the Volvo drives over the pink molecules, it converts them into blue molecules—oxygen.

Figure 9.1: Volvo Ozone Eater is a simulation game wherein Volvos convert ozone into oxygen.

The point of the game is to drive the Volvo through as many ozone areas as possible, cleaning up the city by producing oxygen. This simple simulation game suggests that driving a Volvo will remove ozone from the air and convert it to oxygen. The truth is that only Volvos containing a special radiator called PremAir can convert ozone to oxygen, and then only ground-level ozone. (The implications of such designer bias were discussed in Chapter 4.) But I hypothesize that those who play the game often enough are likely to start viewing all Volvos as machines that can clean the air.[10] Even if their rational minds don’t accept the claim, a less rational element that views the simulation and enjoys playing the game is likely to affect their opinions. It’s subtle but effective.[11]

Ethical issues are especially prominent when computer technology uses novelty as a distraction to increase persuasion. When dealing with a novel experience, people not only lack expertise but they are distracted by the experience, which impedes their ability to focus on the content presented.[12] This makes it possible for new applications or online games such as Volvo Ozone Eater to deliver persuasive messages that users may not scrutinize because they are focusing on other aspects of the experience.

Another example of distraction at work: If you want to sign up for a particular Web site, the site could make the process so complicated and lengthy that all your mental resources are focused on the registration process. As a result, you may not be entirely aware of all of the ways you are being influenced or manipulated, such as numerous prechecked “default” preferences that you may not want but may overlook to get the registration job done as quickly as possible.

Some Web sites capitalize on users’ relative inexperience to influence them to do things they might not if they were better informed. For instance, some sites have attempted to expand their reach through “pop-up downloads,” which ask users via a pop-up screen if they want to download software. Once they agree (as many people do, sometimes without realizing what they’re agreeing to), a whole range of software might be downloaded to their computers. The software may or may not be legitimate. For instance, it could be used to point users to adult Web sites, create unwanted dial-up accounts, or even interfere with the computer’s operations.[13] At a minimum, these virtual Trojan horses use up computer resources and may require significant time and effort to uninstall.

In summary, being in a novel situation can make people more vulnerable because they are distracted by the newness or complexity of the interaction.

Persuasive Technology Can Exploit the Positive Reputation of Computers

When it comes to persuasion, computers also benefit from their traditional reputation of being intelligent and fair, making them seem credible sources of information and advice. While this reputation isn’t always warranted (especially when it comes to Web credibility, as noted in Chapter 7), it can lead people to accept information and advice too readily from technology systems. Ethical concerns arise when persuasive technologies leverage the traditional reputation of computers as being credible in cases where that reputation isn’t deserved.

If you are looking for a chiropractor in the Yellow Pages, you may find that some display ads mention computers to make this sometimes controversial healing art appear more credible. In my phone book, one ad reads like this:

No “cracking” or “snapping.” Your adjustments are done with a computerized technology advancement that virtually eliminates the guesswork.

It’s hard to judge whether the claim is accurate or not, but it’s clear that this chiropractor, like many others in a wide range of professions, is leveraging the positive reputation of computers to promote her own goals.

Computers Can Be Proactively Persistent

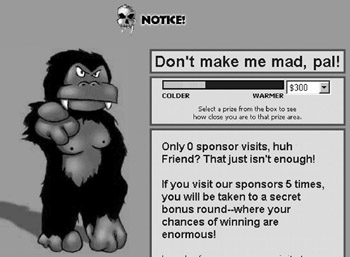

Another advantage of computers is persistence. Unlike human persuaders, computers don’t get tired; they can implement their persuasive strategies over and over. One notable example is TreeLoot.com, which pops up messages again and again to motivate users to keep playing the online game and visit site sponsors (Figure 9.2). The requests for compliance never end, and users may finally give in.

Not only can computers persist in persuading you while you are using an application, they also can be persistent when you are not. Persuasive messages can pop up on your desktop or be streamed to your email inbox on a frequent basis. Such proactive attempts to persuade can have a greater impact than other persistent media. You can always set aside a direct-mail solicitation, but a pop-up screen is hard to avoid; it’s literally in your face.

Computers Control the Interactive Possibilities

A fourth area of ethical uniqueness lies in how people interact with computing technology. When you deal with human persuaders, you can stop the persuasion process and ask for clarification; you can argue, debate, and negotiate. By contrast, when you interact with computing technology, the technology ultimately controls how the interaction unfolds. You can choose either to continue or to stop the interaction, but you can’t go down a path the computer hasn’t been programmed to accept.

Figure 9.2: TreeLoot.com is persistent in its attempts to persuade users to keep playing and to visit sponsors.

Computers Can Affect Emotions But Can’t Be Affected by Them

The next ethical issue has to do with emotional cues. In human persuasion, the process proceeds more smoothly when people use emotional cues. A coach can sense when you’re frustrated and modify her motivational strategy. A salesperson may give subtle signals that he’s exaggerating a bit. These cues help persuasive exchanges reach more equitable and ethical outcomes.

By contrast, computing products don’t (yet) read subtle cues from people,[14] but they do offer emotional cues, which can be applied to persuade. This imbalance puts humans at a relative disadvantage. We are emotional beings, especially when it comes to issues of influence. We expect ethical persuasion to include elements of empathy and reciprocity. But when dealing with interactive technology, there is no emotional reciprocity.

Computers Cannot Shoulder Responsibility

The final ethical issue unique to interactive technology involves taking responsibility for errors. To be an ethical agent of persuasion, I believe you must be able to take responsibility for your actions and at least partial responsibility for what happens to those whom you persuade. Computers cannot take responsibility in the same way.[15] As persuasive entities they can advise, motivate, and badger people, but if computers lead people down the wrong path, computers can’t really shoulder the blame; they are not moral agents. (Consider the last anecdote at the start of this chapter. Who could blame the computerized fitness system for Mark’s injury?)

Making restitution for wrongdoings (or at least being appropriately punished) has been part of the moral code of all major civilizations. But if computers work autonomously from humans, perhaps persuading people down treacherous paths, the computers themselves can’t be punished or follow any paths to make restitution.

The creators of these products may be tempted to absolve themselves of responsibility for their creations. Or they may be nowhere to be found (the company has folded, the project leader has a different job), yet their creations continue to interact with people. Especially now with the Internet, software doesn’t necessarily go away when the creators leave or after the company abandons the product. It still may exist somewhere in cyberspace.

This changes the playing field of persuasion in ways that raise potential ethical concerns: one party in the interaction (the computer product) has the power to persuade but is unable to accept responsibility if things go awry. Imagine a Web-based therapy program that was abandoned by its developer but continues to exist on the Internet. Now imagine a distraught person stumbling upon the site, engaging in an online session of computer-based therapy, requiring additional help from a human being, but being unable to get a referral from the now-abandoned computer therapist.

Ethical Issues in Conducting Research

Ethical issues arise when doing research and evaluation on persuasive technology products. These issues are similar to, but more acute than, those for studies on products designed solely for information (like news Web sites) or straightforward transactions (such as e-commerce sites). Because persuasive technologies set out to change attitudes or behaviors, they may have a greater impact on the participants involved in the studies. And the impact won’t always be positive. As a result, researchers and evaluators should take care when setting up the research experience; they should follow accepted standards in the way they recruit, involve, and debrief participants.

Academic institutions usually have a board that formally reviews proposed research to help prevent abuses of study participants and unplanned outcomes. At Stanford, it typically takes about six to eight weeks for my study protocols to receive approval. To win approval, I must complete a rather involved online application (which is much easier than the old method with paper forms). I outline the purpose of the study, describe what will happen during the experience, explain recruitment procedures, list the research personnel involved, outline the sources of funding (conflict of interest check), and more. I submit examples of the stimuli and the instrument for collecting the measurements (such as questionnaires). A few things complicate the application: involving children as participants, collecting data on video, or using deception as part of the study. In our lab we rarely do any of those things, and if we do, we must carefully describe how we will address and overcome any ethical concerns.

A number of weeks after I submit the application, I hear back from the panel, either giving the okay to move forward or asking for further information. Each time I receive the official approval letter from the Stanford review board, I pass it around at my weekly lab meeting. I want the other researchers in my lab to understand how the research approval system operates, and I want them to know that I consider institutional approval a necessary step in our research process. While the procedure for winning approval from the Stanford board slows down the research cycle, it clearly serves a useful purpose, protecting both the study participants and the researchers.

In my work at technology companies, I also seek approval from review committees. However, in some companies research approval systems don’t exist. It’s up to the individuals designing and conducting the research to ensure that participants are treated with respect: that they understand the nature of the study, give their specific consent, are allowed to withdraw at any time, and are given a way to contact the responsible institution later. In my view, these are the basic steps required to protect participants when conducting research involving persuasive technology products.

Alone or in combination, the six factors outlined above give interactive computing technology an advantage when it comes to persuasion. Said another way, these factors put users of the technology at a relative disadvantage, and this is where the ethical issues arise. These six areas provide a solid starting point for expanding our inquiry into the ethics of persuasive technologies.

[10]At the time of this writing, the Volvo car game is available at http://fibreplay.com/other/portfolio_en.html#.

[11]There are at least three arguments that the Volvo interactive simulation will make an impact on users. First, users are essentially role playing the part of the Volvo. The psychology research on role playing and persuasion suggests that playing roles, even roles we don’t believe or endorse, influences our thinking. For a review of the classic studies in role playing and persuasion, see R. Petty and J. Cacioppo, Attitudes and Persuasion: Classic and Contemporary Approaches (Dubuque, IA: Brown, 1981).

Second, the message processing literature suggests that people have a tendency to forget the source of a message but remember the message, a phenomenon called the “sleeper effect.” In this situation, the message is that Volvo cars clean the air, which people may remember after they have forgotten that the simple simulation was the source of the message. Research on the sleeper effect goes back to the 1930s. For a deeper exploration of this phenomenon, see D. Hannah and B. Sternthal, Detecting and explaining the sleeper effect, Journal of Consumer Research, 11 (Sept.): 632–642 (1984).

Finally, the marketing literature on demonstrations shows that presenting product benefits in dramatic ways can influence buying decisions. For example, see R. N. Laczniak and D. D. Muehling, Toward a better understanding of the role of advertising message involvement in ad processing, Psychology and Marketing, 10(4): 301–319 (1993). Also see Amir Heiman and Eitan Muller, Using demonstration to increase new product acceptance: Controlling demonstration time, Journal of Marketing Research, 33: 1–11 (1996).

[12]The classic study in distraction and persuasion is J. Freedman and D. Sears, Warning, distraction, and resistance to influence, Journal of Personality & Social Psychology, 1(3): 262–266 (1965).

[13]S. Olson, Web surfers brace for pop-up downloads, CNET news.com, April 8, 2002. Available online at http://news.com.com/2100-1023-877568.html.

[14]The most notable research in the area of computers and emotion has been done by Rosalind Picard’s Affective Computing group at MIT Media Lab (see http://affect.media.mit.edu). Thanks to research in this lab and elsewhere, someday computers may deal in emotions, opening new paths for captology.

[15]For more on this issue, see B. Friedman, Human Values and the Design of Computer Technology (Stanford, CA: CSLI Publications, 1997).

Intentions, Methods, and Outcomes: Three Areas Worthy of Inquiry

Many ethical issues involving persuasive technologies fall into one of three categories: intentions, methods, and outcomes. By examining the intentions of the people or the organization that created the persuasive technology, the methods used to persuade, and the outcomes of using the technology, it is possible to assess the ethical implications.

Intentions: Why Was the Product Created?

One reasonable approach to assessing the ethics of a persuasive technology product is to examine what its designers hoped to accomplish. Some forms of intentions are almost always good, such as intending to promote health, safety, or education. Technologies designed to persuade in these areas can be highly ethical.

Other intentions may be less clearly ethical. One common intention behind a growing number of persuasive technologies is to sell products or services. While many people would not consider this intent inherently unethical, others may equate it with less ethical goals such as promoting wasteful consumption. Then there are the clearly unethical intentions, such as advocating violence.

The designer’s intent, methods of persuasion, and outcomes help to determine the ethics of persuasive technology.

To assess intent, you can examine a persuasive product and make an informed guess. According to its creators, the intent of Baby Think It Over (described in Chapter 4) is to teach teens about the responsibilities of parenthood, an intention that most people would consider ethical. Similarly, the intent of Chemical Scorecard (discussed in Chapter 3) would appear to be ethical to most people. Its purpose appears to be mobilizing citizens to contact their political representatives about problems with polluters in their neighborhoods. On the other hand, you could reasonably propose that Volvo commissioned the Volvo Ozone Eater game as a way to sell more cars to people who are concerned about the environment. For some people, this intent may be questionable.

Identifying intent is a key step in making evaluations about ethics. If the designer’s intention is unethical, the interactive product is likely to be unethical as well.

Methods of Persuasion

Examining the methods an interactive technology uses to persuade is another means of establishing intent and assessing ethics. Some methods are clearly unethical, with the most questionable strategies falling outside a strict definition of persuasion. These strategies include making threats, providing skewed information, and backing people into a corner. In contrast, other influence strategies, such as highlighting cause-and-effect relationships, can be ethically sound if they are factual and empower individuals to make good decisions for themselves.

How can you determine if a computer’s influence methods are ethical? The first step is to take technology out of the picture to get a clearer view. Simply ask yourself, “If a human were using this strategy to persuade me, would it be ethical?”

Recall CodeWarriorU.com, a Web site discussed in Chapter 1. While the goals of the online learning site include customer acquisition and retention, the influence methods include offering testimonials, repeatedly asking potential students to sign up, putting students on a schedule for completing their work in each course, and tracking student progress. Most people would agree that these methods would be acceptable ways to influence if they were used by a person. So when it comes to this first step of examining ethical methods of influence by interactive technology, CodeWarriorU.com earns a passing grade.

Now consider another example: a Web banner ad promises information, but after clicking on it you are swept away to someplace completely unexpected. A similar bait-and-switch tactic in the brick-and-mortar world would be misleading and unethical. The cyber version, too, is unethical. (Not only is the approach unethical, it’s also likely to backfire as Web surfers become more familiar with the trickery.) [16]

Using Emotions to Persuade

Making the technology disappear is a good first step in examining the ethics of persuasion strategies. However, it doesn’t reveal one ethical gray area that is unique to human-computer interactions: the expression of emotions.

Because humans respond so readily to emotions, it’s likely that computers that express “emotions” can influence people. When a computer expresses sentiments such as “You’re my best friend,” or “I’m happy to see you,” it is pretending to have human emotions. Both of these statements are uttered by ActiMates Barney, the interactive plush toy by Microsoft that I described in Chapter 5.

The ethical nature of Barney has been the subject of debate.[17] When I monitored a panel discussion of the ethics of the product, I found that panelists were divided into two camps. Some viewed the product as ethically questionable because it lies to kids, saying things that imply emotions and motives, and presenting statements that are not true or accurate, such as “I’m happy to see you.” Others argued that kids know it is only a toy without emotions or motives, part of a fantasy that kids understand.

The social dynamics leveraged by ActiMates characters can make for engaging play, which is probably harmless and may be helpful in teaching children social rules and behaviors.[18] But social dynamics could be used in interactive toys to influence in a negative or exploitative way what children think and do, and this raises ethical questions.

My own view is that the use of emotions in persuasive technology is unethical or ethically questionable only when its intent is to exploit users or when it preys on people’s naturally strong reactions to negative emotions or threatening information expressed by others.[19] For instance, if you play at the Web site TreeLoot.com, discussed earlier in this chapter, you might encounter a character who says he is angry with you for not visiting the site’s sponsors (Figure 9.3).

Figure 9.3: A TreeLoot.com character expresses negative emotions to motivate users.

Because the TreeLoot site is so simple and the ruse is so apparent, you may think this use of emotion is hardly cause for concern. And it’s probably not. But what if the TreeLoot system were much more sophisticated, to the point where users couldn’t tell if the message came from a human or a computer, as in the case of a sophisticated chat bot? Or what if the users believed the computer system that expressed anger had the power to punish them? The ethics of that approach would be more questionable.

The point is that the use of emotions to persuade has unique ethical implications when computers rather than humans are expressing emotions. In addition to the potential ethical problems with products such as ActiMates Barney and TreeLoot.com, there is the problem discussed earlier in this chapter: while computers may convey emotions, they cannot react to emotions, giving them an unfair advantage in persuasion.

Methods That Always Are Unethical

Whether used by a person or a computer system, some methods for changing attitudes and behaviors are almost always unethical. Although they do not fall into the category of persuasion per se, two methods deserve mention here because they are easy to incorporate into computing products: deception and coercion.

Web ads are perhaps the most common example of computer-based deception. Some banner ads (Figure 9.4) seem to do whatever it takes to get you to click on them. They may offer money, sound false alarms about computer problems, or, as noted earlier, promise information that never gets delivered. The unethical nature of these ads is clear. If the Web were not so new, it’s unlikely we’d tolerate these deceptive methods. [20]

Figure 9.4: This banner ad claims it’s checking qualifications—a deception (when you click on the ad, you are simply sent to a gambling site).

Besides deception, computers can use coercion to change people’s behaviors. Software installation programs provide one example. Some installation programs require you to install additional software you may not need but that is bundled as part of the overall product. In other situations, the new software may change your default settings to preferences that benefit the manufacturer rather than the user, affecting how you work in the future (some media players are set up to do this when installed). In many cases, users may feel they are at the mercy of the installation program. This raises ethical questions because the computer product may be intentionally designed to limit user choice for the benefit of the manufacturer.

Methods That Raise Red Flags

While it’s clear that deception and coercion are unethical in technology products, two behavior change strategies that fit into a broad definition of persuasion, operant conditioning and surveillance, are less clear-cut: whether they are ethical depends on how they are applied.

Operant Conditioning

Operant conditioning, described in Chapter 3, consists mainly of using reinforcement or punishment to promote certain behavior. Although few technology products outside of games have used operant conditioning to any great extent, one could imagine a future where operant conditioning is commonly used to change people’s behavior, sometimes without their direct consent or without them realizing what’s going on—and here is where the ethical concerns arise.

For instance, a company could create a Web browser that uses operant conditioning to change people’s Web surfing behavior without their awareness. If the browser were programmed to give faster page downloads to certain Web sites—say, those affiliated with the company’s strategic partners—and delay the download of other sites, users would be subtly rewarded for accessing certain sites and punished for visiting others. In my view, this strategy would be unethical.

Less commonly, operant conditioning uses punishment to reduce the instances of a behavior. As I noted in Chapter 3, I believe this approach is generally fraught with ethical problems and is not an appropriate use of conditioning technology.

Having said that, operant conditioning that incorporates punishment could be ethical, if the user is informed and the punishment is innocuous. For instance, after a trial period, some downloaded software is designed to take progressively longer to launch. If users do not register the software, they are informed that they will have to wait longer and longer for the program to become functional. This innocuous form of punishment (or negative reinforcement, depending on your perspective) is ethical, as long as the user is informed. Another form of innocuous and ethical punishment: shareware programs that bring up screens, often called “nag screens,” to remind users they should register and pay for the product.

Now, suppose a system were created with a stronger form of punishment for failure to register: crashing the computer on the subsequent startup, locking up frequently used documents and holding them for ransom, or sending email to the person’s contact list pointing out that they are using software they have not paid for. Such technology clearly would be unethical.

In general, operant conditioning can be an ethical strategy when incorporated into a persuasive technology if it is overt and harmless. If it violates either of those constraints, however, it must be considered unethical.

Another area of concern is when technologies use punishment—or threats of punishment—to shape behaviors. Technically speaking, punishment is a negative consequence that leads people to perform a behavior less often. A typical example is spanking a child. Punishment is an effective way to change outward behaviors in the short term,[21] but punishment has limited outcomes beyond changing observable behavior.

Surveillance

Surveillance is another method of persuasion that can raise a red flag. Think back to Hygiene Guard, the surveillance system to monitor employees’ hand washing, described in Chapter 3. Is this an ethical system? Is it unethical? Both sides could be argued. At first glance, Hygiene Guard may seem intrusive, a violation of personal privacy. But its purpose is a positive one: to protect public health. Many institutions that install Hygiene Guard belong to the healthcare and food service industries. They use the system to protect their patients and patrons.

So is Hygiene Guard ethical or unethical? In my view, it depends on how it is used. As the system monitors users, it could give gentle reminders if they try to leave the restroom without washing their hands. Or it could be set up mainly to identify infractions and punish people. I view the former use of the technology as ethical and the latter application as unethical.

The Hygiene Guard example brings up an important point about the ethics of surveillance technology in general: it makes a huge difference how a system works—the nature and tone of the human-machine interaction. In general, if surveillance is intended to be supportive or helpful rather than punitive, it may be ethical. However, if it is intended mainly to punish, I believe it is unethical.

Whether or not a surveillance technology is ethical also depends on the context in which it is applied. Think back to AutoWatch, the system described in Chapter 3 that enables parents to track how their teenagers are driving.[22] This surveillance may be a “no confidence” vote in a teenager, but it’s not unethical, since parents are ultimately responsible for their teens’ driving, and the product helps them to fulfill this responsibility.

The same could be said for employers that implement such a system in their company cars. They have the responsibility (financially and legally, if not morally) to see that their employees drive safely while on company time. I believe this is an acceptable use of the technology (although it is not one that I endorse). However, if the company were to install a system to monitor employees’ driving or other activities while they were not on company time, this would be an invasion of privacy and clearly an unethical use of technology.

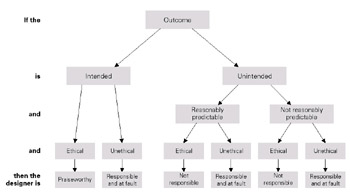

Figure 9.5: The ethical nature of a persuasive technology can hinge on whether or not the outcome was intended.

Outcomes Intended and Unintended

In addition to examining intentions and methods, you can also investigate the outcomes of persuasive technology systems to assess the ethics of a given system, as shown in Figure 9.5. (This line of thinking originated with two of my former students: Eric Neuenschwander and Daniel Berdichevsky.)

If the intended outcome of a persuasive technology is benign, generally there is no significant ethical concern. Many technologies designed for selling legitimate products and strengthening brand loyalty fall into this category.

The intended outcomes of other technologies may raise ethical concerns. Think back to Banana-Rama, the high-tech slot machine described in Chapter 5. This device uses onscreen characters, an ape and a monkey, to motivate players to continue gambling. When you win, the characters celebrate. When you hesitate to drop in more of your money to continue playing, the characters’ expressions change from supportive to impatient.

Some people would find this product ethically objectionable because its intended outcome is to increase gambling, an activity that conflicts with the values of some individuals and cultures. Other people would not consider this intended outcome a cause for ethical alarm; gambling is an accepted part of many cultures and is often promoted by government groups. However, if Banana-Rama were wildly popular, with Las Vegas tourists lining up to lose their fortunes, the outcome might be significant enough to make it a major ethical issue.

Hewlett-Packard’s MOPy (Multiple Original Printouts) is a digital pet screen saver that rewards users for printing on an HP printer (Figure 9.6). The point of the MOPy system is to motivate people to print out multiple originals rather than using a copy machine. As you make original prints, you earn points that can be redeemed for virtual plants and virtual toys for your virtual fish. In this way, people use up HP ink cartridges and will have to buy more sooner.

Figure 9.6: The MOPy screen saver (no longer promoted by Hewlett-Packard) motivates people to make original prints, consuming disposable ink cartridges.

Some might argue that MOPy is unethical because its intended outcome is one that results in higher printing costs and environmental degradation. (To HP’s credit, the company no longer promotes MOPy.) [23] Others could argue that there is no cause for ethical alarm because the personal or environmental impact of using the product is insignificant.

But suppose that Banana-Rama and MOPy were highly successful in achieving their intended outcomes: increasing gambling and the consumption of ink cartridges. If these products produced significant negative impacts—social, personal, and environmental—where would the ethical fault reside? Who should shoulder the blame?

In my view, three parties could be at fault when the outcome of a persuasive technology is ethically unsound: those who create, distribute, or use the product. I believe the balance of culpability shifts on a case-by-case basis.[24] The creators have responsibility because, in the case of MOPy, their work benefited a private company at the expense of individuals and the global environment. Likewise, distributors must also shoulder the ethical responsibility of making unethical technologies widely available.

Finally, users of ethically questionable persuasive technologies must bear at least some responsibility. In the cases of Banana-Rama and MOPy, despite the persuasive strategies in these products, individual users typically choose voluntarily to use the products, thus contributing to outcomes that may be ethically questionable.

Responsibility for Unintended Outcomes

Persuasive technologies can produce unintended outcomes. Although captology focuses on intended outcomes, creators of persuasive technology must take responsibility for unintended unethical outcomes that can reasonably be foreseen.

To act ethically, the creators should carefully anticipate how their product might be used for an unplanned persuasive end, how it might be overused, or how it might be adopted by unintended users. Even if the unintended outcomes are not readily predictable, once the creators become aware of harmful outcomes, they should take action to mitigate them.

Designed to reduce speeding, the Speed Monitoring Awareness Radar Trailer, discussed in Chapter 3, seems to have unintended outcomes that may not have been easy to predict. Often when I discuss this technology with groups of college students, at least one male student will say that for him the SMART trailer has the opposite effect of what was intended: he speeds up to see how fast he can go.

As far as I can tell, law enforcement agencies have not addressed the possibility that people might actually speed up rather than slow down when these trailers are present. It may be that the unintended outcome has not been recognized or is considered to apply to a relatively small number of people, mostly younger male drivers who seek challenges. In any case, if this unintended outcome were to result in a significant number of accidents and injuries, I believe the developers of the SMART trailer would have to take responsibility for removing or altering the system.

Some companies turn a blind eye to the unintentional, though reasonably predictable, outcomes of using their products. Consider the video game Mortal Kombat, which rewards players for virtual killing. In this game, players interact with other players through virtual hand-to-hand combat. This entertainment product can be highly compelling for some people.

Unfortunately, Mortal Kombat and other violent video games not only motivate people to keep playing, they also may have a negative effect on players’ attitudes and behaviors in the real world. Social learning theory [25] suggests that practicing violent acts in a virtual world can lead to performing violent acts in the real world. [26] The effect of video game violence has been much debated for over a decade. After reviewing results of previous studies and presenting results of their own recent work, psychologists Craig Anderson and Karen Dill conclude:

When the choice and action components of video games . . . is coupled with the games’ reinforcing properties, a strong learning experience results. In a sense, violent video games provide a complete learning environment for aggression, with simultaneous exposure to modeling, reinforcement, and rehearsal of behaviors. This combination of learning strategies has been shown to be more powerful than any of these methods used singly.[27]

Although violent real-world behavior is not the intended outcome of the creators of video games such as Mortal Kombat, it is a reasonably predictable outcome of rewarding people for rehearsing violence, creating an ethical responsibility for the makers, distributors, and users of such violent games.

[16]See “Do they need a “trick” to make us click?,” a pilot study that examines a new technique used to boost click-through, by David R. Thompson, Ph.D., Columbia Daily Tribune, and Birgit Wassmuth, Ph.D., University of Missouri. Study conducted September 1998. Paper presented at the annual Association for Education in Journalism and Mass Communication Convention, August 4–7, 1999, New Orleans, Louisiana.

[17]At the 1999 ACM SIGCHI Conference, I organized and moderated a panel discussion on the ethical issues related to high-tech children’s plush toys, including Barney. This panel included the person who led the development of the Microsoft ActiMates products (including Barney) and other specialists in children’s technology. The panelists were Allen Cypher, Stagecast Software; Allison Druin, University of Maryland; Batya Friedman, Colby College; and Erik Strommen, Microsoft Corporation.

You can find a newspaper story of the event at http://www.postgazette.com/businessnews/19990521barney1.asp.

[18]E. Strommen and K. Alexander, Emotional interfaces for interactive aardvarks: Designing affect into social interfaces for children, Proceedings of the CHI 99 Conference on Human Factors in Computing Systems, 528–535 (1999).

[19]In an article reviewing various studies on self-affirmation, Claude Steele discusses his research that showed higher compliance rates from people who were insulted than from people who were flattered. In both cases, the compliance rates were high, but the people receiving the negative assessments about themselves before the request for compliance had significantly higher rates of compliance. See C. M. Steele, The psychology of self affirmation: Sustaining the integrity of the self, in L. Berkowitz (ed.), Advances in Experimental Social Psychology, 21: 261–302 (1988).

For a more recent exploration of compliance after threat, see Amy Kaplan and Joachim Krueger, Compliance after threat: Self-affirmation or self-presentation? Current Research in Social Psychology, 2:15–22 (1999). http://www.uiowa.edu/~grpproc. (This is an online journal. The article is available at http://www.uiowa.edu/~grpproc/crisp/crisp.4.7.htm.)

Also, Pamela Shoemaker makes a compelling argument that humans are naturally geared to pay more attention to negative, threatening information than positive, affirming information. See Pamela Shoemaker, Hardwired for news: Using biological and cultural evolution to explain the surveillance function, Journal of Communication, 46(2), Spring (1996).

[20]For a statement about the “Wild West” nature of the Web in 1998, see R. Kilgore, Publishers must set rules to preserve credibility, Advertising Age, 69 (48): 31 (1998).

[21]For book-length and readable discussions about how discipline works (or doesn’t work) with children in changing behavior, see

a. I. Hyman, The Case Against Spanking: How to Discipline Your Child without Hitting (San Francisco: Jossey-Bass Psychology Series, 1997).

b. J. Maag, Parenting without Punishment: Making Problem Behavior Work for You (Philadelphia, PA: The Charles Press, 1996).

[22]To read about the suggested rationale for AutoWatch, see the archived version at http://web.archive.org/web/19990221041908/http://www.easesim.com/autowatchparents.htm.

[23]While Hewlett-Packard no longer supports MOPy, you can still find information online at the following sites:

http://formen.ign.com/news/16154.html

http://cna.mediacorpnews.com/technology/bytesites/virtualpet2.htm

[24]Others suggest that all parties involved are equally at fault. For example, see K. Andersen, Persuasion Theory and Practice (Boston: Allyn and Bacon, 1971).

[25]A. Bandura, Self-Efficacy: The Exercise of Control (New York: Freeman, 1997).

[26]C. A. Anderson and K. E. Dill, Video games and aggressive thoughts, feelings, and behavior in the laboratory and in life, Journal of Personality and Social Psychology, 78 (4): 772–790 (2000). This study, which includes an excellent bibliography, can be found at http://www.apa.org/journals/psp/psp784772.html.

[27]Other related writings on video games and violence include the following:

- D. Grossman, On Killing (New York: Little Brown and Company, 1996). (Summarized at http://www.mediaandthefamily.org/research/vgrc/1998-2.shtml.)

- Steven J. Kirsh, Seeing the world through “Mortal Kombat” colored glasses: Violent video games and hostile attribution bias. Poster presented at the biennial meeting of the Society for Research in Child Development, Washington, D.C., ED 413 986, April 1997. This paper now also available: Steven J. Kirsh, Seeing the world through “Mortal Kombat” colored glasses: Violent video games and hostile attribution bias, Childhood, 5(2): 177–184 (1998).

When Persuasion Targets Vulnerable Groups

Persuasive technology products can be designed to target vulnerable populations, people who are inordinately susceptible to influence. When they exploit vulnerable groups, the products are unethical.

The most obvious vulnerable group is children, who are the intended users of many of today’s persuasive technology products.[28] It doesn’t take much imagination to see how such technologies can take advantage of children’s vulnerability to elicit private information, make an inappropriate sale, or promote a controversial ideology.

Figure 9.7: A conceptual design for an unethical computer game, by captology students Hannah Goldie, David Li, and Peter Westen.

How easy it is to exploit children through persuasive technology is evident from the classes I teach at Stanford in which I assign students to develop ethically questionable product concepts. One team made a simple prototype of a Web-based Pokémon game for kids. In the prototype, the team showed how this seemingly innocuous game could be designed to elicit personal information from children who play the game, using the popular Pokémon characters (Figure 9.7).

Are You Ready for “Behavioronics”?

The idea of embedding computing functionality in objects and environments is common, but it’s less common to think about embedding computing devices into human bodies. How will we—or should we—respond when implantable interactive technologies are created to extend human capability far beyond the norm? Are we ready to discuss the ethics of the Bionic Human, especially when this is an elective procedure? (“Hey, I got my memory upgraded this weekend!”) And how should we react when implantable devices are created not just to restore human ability but to change human behavior?

Some of these technologies raise ethical concerns. For example, to help them stay clean, drug-dependent people might agree to, or be coerced into, having an implant put into their bodies that would detect the presence of an illegal substance and report it to authorities.

Who decides if and when it is ethical to use such technologies? Who should have access to the information produced? And who should control the functionality of the embedded devices? Important questions.

Unfortunately, our current understanding of what I call “behavioronics” is limited, so we don’t have solid answers to these questions. Especially as we combine computing technology with pharmacological interventions, behavioronics is a frontier that should be explored carefully before we begin to settle the territory.

Children are perhaps the most visible vulnerable group, but there are many others, including the mentally disabled, the elderly, the bereaved, and people who are exceptionally lonely. With the growth of workplace technology, even employees can be considered a vulnerable group, as their jobs are at stake. Those who complain about having to use a surveillance system or other technology designed to motivate or influence them may not be treated well by their employers and could find that their jobs are in jeopardy.

Any technology that preys on the vulnerability of a particular group raises ethical concerns. Whether employers, other organizations, or individuals, those who use persuasive technologies to exploit vulnerable groups are not likely to be their own watchdogs. Outside organizations and individuals must take responsibility for ensuring that persuasive technologies are used ethically.

[28]P. King and J. Tester, Landscape of persuasive technologies, Communications of the ACM, 42(5): 31–38 (1999).

Stakeholder Analysis: A Methodology for Analyzing Ethics

Even if you are not formally trained in ethics, you can evaluate the ethical nature of a persuasive technology product by examining the intentions, methods, and outcomes of the product as well as the populations it targets, as outlined in this chapter. You also can rely on your intuition, your feeling for right and wrong, and your sense of what’s fair and what’s not. In many cases, this intuitive, unstructured approach can work well.

However, when examining the ethics of a complicated situation—or when collaborating with others—a more structured method may be required. One useful approach is to conduct a stakeholder analysis, to identify all those affected by a persuasive technology, and what each stakeholder in the technology stands to gain or lose. By conducting such an analysis, it is possible to identify ethical concerns in a systematic way.[1]

To assess the ethics of a technology, identify each stakeholder and determine what each stands to gain or lose.

With that in mind, I propose applying the following general stakeholder analysis to identify ethical concerns. This seven-step analysis provides a framework for systematically examining the ethics of any persuasive technology product.

Step 1: List All of the Stakeholders

Make a list of all of the stakeholders associated with the technology. A stakeholder is anyone who has an interest in the use of the persuasive technology product. Stakeholders include creators, distributors, users, and sometimes those who are close to the users as well—their families, neighbors, and communities. It is important to be thorough in considering all those who may be affected by a product, not just the most obvious stakeholders.

Step 2: List What Each Stakeholder Has to Gain

List what each stakeholder has to gain when a person uses the persuasive technology product. The most obvious gain is financial profit, but gains can include other factors as well, such as learning, self-esteem, career success, power, or control.

Step 3: List What Each Stakeholder Has to Lose

List what each stakeholder has to lose by virtue of the technology, such as money, autonomy, privacy, reputation, power, or control.

Step 4: Evaluate Which Stakeholder Has the Most to Gain

Review the results of Steps 2 and 3 and decide which stakeholder has the most to gain from the persuasive technology product. You may want to rank all the stakeholders according to what each has to gain.

Step 5: Evaluate Which Stakeholder Has the Most to Lose

Now identify which stakeholder stands to lose the most. Again, losses aren’t limited to time and money. They can include intangibles such as reputation, personal dignity, autonomy, and many other factors.

Step 6: Determine Ethics by Examining Gains and Losses in Terms of Values

Evaluate the gain or loss of each stakeholder relative to the other stakeholders. By identifying inequities in gains and losses, you can determine if the product is ethical or to what degree it raises ethical questions. This is where values, both personal and cultural, enter into the analysis.

Step 7: Acknowledge the Values and Assumptions You Bring to Your Analysis

The last step is perhaps the most difficult: identifying the values and assumptions underlying your analysis. Any investigation of ethics centers on the value system used in conducting the analysis. These values are often not explicit or obvious, so it’s useful to identify the moral assumptions that informed the analysis.

These values and assumptions will not be the same for everyone. In most Western cultures, individual freedom and self-determination are valued over institutional efficiency or collective power. As a result, Westerners are likely to evaluate persuasive technology products as ethical when they enhance individual freedom and unethical when they empower institutions at the expense of individuals. Other cultures may assess the ethics of technology differently, valuing community needs over individual freedoms.
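For readers who like to work through such an analysis in a structured form, the seven steps can be sketched as a small Python bookkeeping exercise. This is only an illustration, not a method from this chapter: the class and function names (Stakeholder, most_to_gain, most_to_lose, inequity_gap) are hypothetical, and every numeric weight below is an invented placeholder for the MOPy example, not measured data. Assigning those weights is itself a value judgment, which is exactly the point of Step 7.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Stakeholder:
    """One party with an interest in the product (Step 1)."""
    name: str
    # Hypothetical weights from 1 (minor) to 5 (major); choosing them
    # encodes the analyst's own values and assumptions (Step 7).
    gains: Dict[str, int] = field(default_factory=dict)   # Step 2
    losses: Dict[str, int] = field(default_factory=dict)  # Step 3

    def total_gain(self) -> int:
        return sum(self.gains.values())

    def total_loss(self) -> int:
        return sum(self.losses.values())

    def net(self) -> int:
        return self.total_gain() - self.total_loss()


def most_to_gain(parties: List[Stakeholder]) -> Stakeholder:
    """Step 4: the stakeholder with the largest total gain."""
    return max(parties, key=lambda s: s.total_gain())


def most_to_lose(parties: List[Stakeholder]) -> Stakeholder:
    """Step 5: the stakeholder with the largest total loss."""
    return max(parties, key=lambda s: s.total_loss())


def inequity_gap(parties: List[Stakeholder]) -> int:
    """Step 6: spread between the best and worst net outcome."""
    nets = [s.net() for s in parties]
    return max(nets) - min(nets)


# Illustrative, made-up weights for a MOPy-style product.
stakeholders = [
    Stakeholder("creator",
                gains={"ink sales": 4, "brand loyalty": 2},
                losses={"reputation": 1}),
    Stakeholder("user",
                gains={"entertainment": 2},
                losses={"printing costs": 3}),
    Stakeholder("community",
                losses={"cartridge waste": 2}),
]

print("Most to gain:", most_to_gain(stakeholders).name)
print("Most to lose:", most_to_lose(stakeholders).name)
print("Inequity gap:", inequity_gap(stakeholders))
```

A large gap between the best and worst net outcomes does not settle the ethical question by itself; it only flags where the inequities lie so that they can be discussed in terms of explicit values.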

[1]The stakeholder approach I present in this chapter brings together techniques I’ve compiled during my years of teaching and research. I’m grateful to Professor June Flora for introducing me to the concept in early 1994. The stakeholder approach originated with a business management book: R. E. Freeman, Strategic Management: A Stakeholder Approach (Boston: Pitman, 1984).

Later work refined stakeholder theory. For example, see K. Goodpaster, Business ethics and stakeholder analysis, Business Ethics Quarterly, 1(1): 53–73 (1991).

Education Is Key

This chapter has covered a range of ethical issues related to persuasive technologies. Some of these issues, such as the use of computers to convey emotions, represent new territory in the discussion of ethics. Other issues, such as using surveillance to change people’s behaviors, are part of a familiar landscape. Whether new or familiar, these ethical issues should be better understood by those who design, distribute, and use persuasive technologies.

Ultimately, education is the key to more ethical persuasive technologies. Designers and distributors who understand the ethical issues outlined in this chapter will be in a better position to create and sell ethical persuasive technology products. Technology users will be better positioned to recognize when computer products are applying unethical or questionably ethical tactics to persuade them. The more educated we all become about the ethics of persuasive technology, the more likely technology products will be designed and used in ways that are ethically sound.

For updates on the topics presented in this chapter, visit www.persuasivetech.info.
