Chapter 18: Miscellaneous Subsystems

You get up in the morning and drive your car to work. Normally you don't pay special attention to the roads, streetlights, stop signs, car engine, or buildings. They're just there. However, consider how difficult driving to work would be if there were no roads, or if there were roads but no stoplights or other traffic controls. A nightmare scenario, wouldn't you say? This chapter takes the standard morning drive to work and exposes the critical infrastructure of the roads and the traffic control system. It describes the Internode Communication Services (ICS, the roads upon which cluster communication travels), the Distributed Lock Manager (DLM, the traffic controls), Kernel Group Services (KGS, which provide car pool membership and decisions during cluster communication), and the Reliable Datagram (RDG) mechanism (messaging between applications on a cluster, the equivalent of horn honking and turn signals). We also look at the Cluster Mount Subsystem (CMS) and the Token subsystem to round out our discussion of miscellaneous subsystems.

These components are essential to the low-level functioning of the cluster; they provide the critical infrastructure for all the components discussed previously in this book.

18.1 Internode Communication Services (ICS)

The internode communications subsystem (ICS) supports communication between members within a cluster. It provides the roads and traffic control infrastructure mentioned in the chapter introduction. In V1.X releases of TruCluster, there was no need for a flexible cluster communications component such as ICS because Memory Channel (MC) was the only supported Cluster Interconnect (CI). Supporting Ethernet as a communications medium between cluster members added a second option for the cluster transport, so the cluster software must be able to use either MC or a LAN as the communications medium (like choosing between the interstate and local roads). ICS was not an afterthought added to support LAN interconnects; it was planned to prepare seamlessly for future cluster interconnects and to remove the interconnect dependencies present in earlier TruCluster software.

ICS provides the means to carry communication between cluster members. It is organized as a layer of abstraction, meaning that it will not have to change radically as the underlying hardware technology changes over time. ICS is divided into two levels: the lower level handles the details of transporting the data, and the upper level provides a hardware-independent interface to the services that rely on ICS.

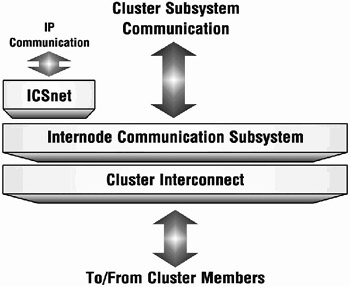

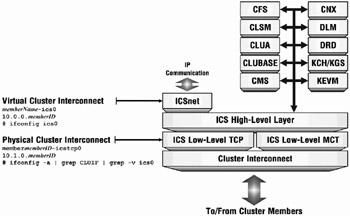

Cluster software components (cluster services such as CFS, CLSM, CNX, CLUA, DLM, and DRD, plus any services added in the future) therefore communicate only with the high level of ICS. They need not grapple with the complexities of the underlying hardware technology, and the relationship between components becomes more hierarchical. The high-level ICS code communicates with the appropriate low-level ICS software on behalf of the service. Figure 18-1 shows how the ICS subsystem fits into the cluster subsystem component hierarchy. It sits above the actual communications hardware and below such critical components as CFS, CNX, DLM, and DRD. Below ICS is the device driver software for the interconnect hardware (Memory Channel, Ethernet, or others).

Figure 18-1: ICS Cluster Subsystem Communication

There are two layers of software within ICS:

- High-level ICS (ics_hl)
  - Used by cluster subsystems (CFS, CLSM, CNX, DRD, DLM, CLUA).
  - Provides hardware-independent support for component initialization (used by DRD).
  - Provides information supporting cluster membership changes (used by CNX).
  - Provides high-level server control code (used by DLM).
  - Provides generic transport of data across the interconnect (used by CFS).
- Low-level ICS (ics_ll_mct or ics_ll_tcp)
  - Provides the transport-specific (not hardware independent) component.
  - Handles the actual transmission and reception of data across the cluster interconnect.
  - Detects communication failures.
  - Low-level ICS software is called solely by routines in the high-level ICS.

Figure 18-2 illustrates the structure of ICS.

Figure 18-2: ICS Cluster Subsystem Communication – Detailed

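The ICS subsystem attributes are documented in section 5 reference pages, which you can locate with sman:

    # sman all sys_attrs_ics
    Section  Reference Page         Description
    -------  --------------------   -------------------------------
    5        sys_attrs_ics_hl       ics_hl subsystem attributes
    5        sys_attrs_ics_ll_tcp   ics-ll-tcp subsystem attributes
    5        sys_attrs_icsnet       icsnet subsystem attributes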

18.1.1 ICSNET

ICSNET is another service that interfaces with the ICS high-level software. ICSNET is essentially a network driver that places TCP/IP (or UDP/IP) headers and data on the CI, encapsulated in an Ethernet format (even though the data may not actually be sent over an Ethernet). Consider the situation where data arrives from the network and must be delivered to a cluster member other than the one that received it. Suppose this cluster uses the Memory Channel for its CI. The Ethernet device physically connected to the receiving member issues an interrupt, causing the Ethernet driver's interrupt service routine to execute.

Eventually the netisr_thread kernel thread executes to handle the mid-layer IP protocol stack decisions. The data is stored in message buffer structures (called mbufs). So far, this is normal processing of network activity. The problem at this point is that the data may be processed on the wrong cluster member. (We are assuming that the receiving member is not the member to which the data is ultimately being sent.) The data should be forwarded to the target member and fully processed by the receiving application there. The data (in the mbufs) must therefore be sent through the CI (the Memory Channel in this case) to the correct cluster member, and the Memory Channel is accessible through ICS (see Figure 18-2). As is customary, control passes to the netisr_thread to process the data through the IP stack, but the netisr_thread calls the ICSNET software to pass the data to the target cluster member over the Memory Channel (or over Ethernet in a LAN-based cluster).

The ICS Network driver (ICSNET) is used during steady state operation of the cluster to channel messages across the CI that emanate from cluster applications (as opposed to cluster kernel services). The cluster kernel services interface directly with ICS as pictured in Figure 18-2.

18.1.2 ICS Implementation

ICS is implemented using a client-server model. A request begins execution within a client service (such as CFS, CLSM, DRD, CLUA, or DLM) and is then transported to the ICS server for execution by means of Remote Procedure Calls (RPCs); see Figure 18-2. The ICS server software is implemented as a potentially dynamic number of daemons. The daemons contain one or more threads, which provide the context in which the server side of the RPCs issued by the ICS clients executes. Whether the number of daemons supporting a client service is static or dynamic depends on the needs of that service.

The ICS subsystem may request the creation of more daemons depending on the workload and the nature of the service. For instance, the number of server-side ICS daemons created for DLM is not dynamic, because DLM requests must be processed in the order in which they are received. DRD's daemons, on the other hand, are drawn from a pool that grows and shrinks with the amount of DRD activity. The following output shows some of the daemons supporting ICS.

    # ps -eo pid,command | grep ics
    524291 [icssvr_nomem_dae]
    524292 [icssvr_throttle_]
    524293 [icssvr_daemon_fr]
    524294 [icssvr_daemon_fr]
    524295 [icssvr_nanny]
    524296 [icscli_throttle_]
    524298 [icssvr_daemon_fr]
    524299 [icssvr_daemon_fr]
    524300 [icssvr_daemon_fr]
    524301 [icssvr_daemon_fr]
    524302 [icssvr_daemon_fr]
    524303 [icssvr_daemon_pe]
    524304 [icssvr_daemon_pe]
    524305 [icssvr_daemon_pe]
    524306 [icssvr_daemon_pe]
    524307 [icssvr_daemon_pe]
    524308 [icssvr_daemon_pe]
    524309 [icssvr_daemon_fr]
    524310 [icssvr_daemon_fr]
    524311 [icssvr_daemon_fr]
    524312 [icssvr_daemon_fr]
    524328 [icssvr_daemon_pe]
    524929 [icssvr_daemon_pe]
    524946 [icssvr_daemon_pe]
    524947 [icssvr_daemon_pe]
    525602 [icssvr_daemon_fr]
    525603 [icssvr_daemon_fr]
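Tallying a listing like this by eye is error-prone. As a sketch, the following POSIX shell function counts each ICS daemon name in `ps -eo pid,command` output and, assuming the 16-character truncated names shown above, separates the `_fr` (from pool) daemons from the `_pe` (per node) daemons described in the following sections. The name count_ics_daemons is hypothetical; it is our own helper, not a TruCluster command.

```sh
# count_ics_daemons: hypothetical helper, not a TruCluster command.
# Reads "ps -eo pid,command" output on stdin (PID in field 1, the bracketed
# kernel daemon name in field 2) and prints a count per ICS daemon name,
# followed by a summary of pool-based versus per-node server daemons.
count_ics_daemons() {
    awk '
        $2 ~ /^\[ics/ {                       # only bracketed ICS daemon names
            n[$2]++
            if ($2 ~ /_fr\]$/)      pool++    # [icssvr_daemon_fr]: shared pool
            else if ($2 ~ /_pe\]$/) pernode++ # [icssvr_daemon_pe]: per node
        }
        END {
            for (d in n) print d, n[d]        # order of these lines is unspecified
            print "pool:", pool + 0, "per-node:", pernode + 0
        }'
}

# Example on a cluster member:
#   ps -eo pid,command | count_ics_daemons
```

Because the pattern requires the command field to begin with `[ics`, a `grep ics` process in the same pipeline is not counted. Fed the listing above, the summary line reads `pool: 13 per-node: 10`.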

The following output, produced by the kernel_idle_threads program, is from a Memory Channel-based cluster. The program will be available on the BRUDEN or Digital Press web site (see Appendix B for the URLs).

    # ./kernel_idle_threads | grep ics
    55:0x0fffffc000f09c380:0x0fffffc0000ef0758:"ics_mct_thread"
    59:0x0fffffc000f09d180:0x0fffffc0000ef03d8:"ics_mct_recv_nohandle"
    118:0x0fffffc000e9eaa80:0x0fffffc00008a2888:"ics_mct_heapwalker_main

The following output was generated on a LAN-based cluster. It shows 16 ICS kernel threads.

    # ./kernel_idle_threads | grep ics
    116:0x0fffffc001db0b500:0x0fffffc001db1800a:"ics_chan_listen"
    117:0x0fffffc001db0b880:0x0fffffc001db1838a:"ics_chan_listen"
    118:0x0fffffc001db0bc00:0x0fffffc001db1870a:"ics_chan_listen"
    119:0x0fffffc001db10000:0x0fffffc001db18a8a:"ics_chan_listen"
    120:0x0fffffc001db10380:0x0fffffc001db18e0a:"ics_chan_listen"
    121:0x0fffffc001db10700:0x0fffffc001db1918a:"ics_chan_listen"
    122:0x0fffffc001db10a80:0x0fffffc001db1950a:"ics_chan_listen"
    123:0x0fffffc001db10e00:0x0fffffc001db1988a:"ics_chan_listen"
    124:0x0fffffc001db11180:0x0fffffc001db19c0a:"ics_chan_listen"
    125:0x0fffffc001db11500:0x0fffffc001db1a00a:"ics_chan_listen"
    126:0x0fffffc001db11880:0x0fffffc001db1a38a:"ics_chan_listen"
    127:0x0fffffc001db11c00:0x0fffffc001db1a70a:"ics_chan_listen"
    128:0x0fffffc001db12000:0x0fffffc001db1aa8a:"ics_chan_listen"
    129:0x0fffffc001db12380:0x0fffffc001db1ae0a:"ics_chan_listen"
    130:0x0fffffc001db12700:0x0fffffc001db1b18a:"ics_chan_listen"
    131:0x0fffffc001db12a80:0x0fffffc001db1b50a:"ics_chan_listen"

    # ps -lmp 524288 | grep ics | wc -l
    16

The following sections further describe selected ICS server processes.

18.1.2.1 ICS Server Daemon from Pool [icssvr_daemon_fr]

Some services are able to share a pool of daemons available to satisfy their processing needs dynamically. Services that use this pool of daemons include CFS, CLSM, DRD, and KGS. The activity level of these services can vary greatly over the life of the cluster. Thus, it makes sense to handle their ICS requests such that the system will dynamically create more of these daemons during bursts of activity.

Note that the count of daemons also has downward flexibility so that at one point you may see N of these daemons, and at another point you may see N-2.

18.1.2.2 ICS Server Daemon Per Node [icssvr_daemon_pe]

Some services require a more predictable server environment, which comes from dedicating a server daemon to each node. It's like having a multi-lane highway with each lane taking you to exactly one location. Technically these lanes are called "channels". (At least you don't have to worry about the exit number, or keeping up with traffic while traveling in these lanes.) Services requiring this kind of server environment include CLUA, CNX, DLM, Memory Channel Transport (MCT), and others.

18.1.2.3 Miscellaneous ICS Server Daemons

Two more ICS server daemons exist to handle special-case situations. The icssvr_nomem_daemon is used when a kernel-level memory allocation fails and a thread context is needed to wait for memory to be freed. This daemon should rarely be required.

The icssvr_throttle_daemon should also rarely be needed. It is awakened to handle client activities that have been throttled due to lack of memory, and it is responsible for waking any throttled clients once their channel or memory issue is resolved.

18.1.2.4 ICS Channels

Traffic between cluster members using ICS goes through channels. Each channel can carry zero or more services. Think of the multi-lane highway from the previous section, and put the Distributed Lock Manager service of one cluster member at one end of a lane (channel) and the Distributed Lock Manager service of another cluster member at the other end. Placing data on the channel guarantees both delivery to the target service and the order of delivery. For a service like DLM, these guarantees are critical to ensure fair access to locked resources.

Now imagine a lane on a super-highway that multiple services share. The receiving end must then dispatch each message to the proper service on the target member. This strategy is used on the channel shared by CFS, AdvFS, and CMS, which works out well because these services are all file-related anyway. Section 18.1.4 shows channel statistics generated by the cfsstat(8) command.

Table 18-1 relates various services and their associated channels.

Table 18-1: ICS Services per ICS Channel

| Channel # | Services | Cluster Subsystem | # of Services | ICS Server Daemon |
|---|---|---|---|---|
| BOOT 0 | CNX | cnx | 3 | per node |
| | (CLUSTER_PANIC) | ics_hl | | custom |
| | SIGNAL | ics_hl | | from pool |
| PRI0 1 | none | n/a | 0 | n/a |
| PRI1 2 | none | n/a | 0 | n/a |
| CFS 3 | ADVFS, CFS, FS | cfs | 4 | from pool |
| | CMS | cms | | from pool |
| NET 4 | CLUMGT | clubase | 2 | from pool |
| | ICSNET | icsnet | | custom |
| CLSM 5 | CLSM | clsm | 1 | from pool |
| DRD 6 | DRDV1, DRDV2 | drd | 2 | from pool |
| TUNL 7 | TUNNEL | clua | 1 | per node |
| CLUA 8 | CLUA | clua | 1 | per node |
| KEVM 9 | KEVM | kevm_clu | 1 | per node |
| CFSR 10 | CFSREC | cfs | 1 | from pool |
| RBLD 11 | RBLD | cnx | 2 | from pool |
| | SSN | n/a | | from pool |
| KCH 12 | KCH | kch | 1 | from pool |
| DLM 13 | DLM | dlm | 1 | per node |
| TEST 14 | (TEST), (TEST1), (TEST2), (TEST3), (TEST4), (TEST5), (TEST6), (TEST7) | n/a | 7 | varies |
| PBT 15 | MCTCTL_SVC | ics_ll_mct | 1 | per node |

18.1.2.5 ICS Server Nanny Process [icssvr_nanny]

So who's in charge of all these hale and hearty ICS server processes? When you were young, you may have had a nanny looking after you (or maybe you just had your older brother, or a neighbor, or weird old Hal from down the street); similarly, the ICS server processes are watched over by the "nanny" process. It causes the creation of pool-based processes when needed and the creation of per-node processes as members come and go from the cluster.

If you were a really rambunctious kid with lots of siblings, you may have needed a second nanny. Similarly, when the ICS server nanny is overstressed, it may be joined by other nannies. (We guess they just call for backup when necessary.) Note that, as of this writing, it would be unusual to see more than one nanny.

18.1.3 ICS Attributes

The following output provides information on the sysconfig attributes supporting ICS.

Note: A typical cluster member will use either the ics_ll_tcp or the ics_ll_mct attributes but not both, although both sets of attributes will be visible. Also note that the low-level attributes are undocumented and should not be altered unless you are directed to change them by HP engineering. We include them here to show the existence of software levels within the ICS subsystem.

    # for i in $(sysconfig -m | grep ics | cut -d: -f1)
    > do
    > print
    > sysconfig -q $i
    > done

    ics_hl:                              ← ICS High-Level Attributes
    ics_hl_debug = 1
    Module_Config_Name = attribute does not allow this operation
    ics_hl_verbose = 2
    ics_idle_daemons_lwm = 4
    ics_idle_daemons_hwm = 15

    ics_ll_tcp:                          ← ICS Low-Level TCP Attributes
    Module_Config_Name = attribute does not allow this operation
    ics_tcp_rendezvous_port = 0
    ics_tcp_ports[0] = 0
    ...
    ics_tcp_ports[15] = 0
    ics_tcp_inetaddr0 =
    ics_tcp_netmask0 =
    ics_tcp_adapter0 =
    ics_tcp_ipmtu0 = 1500
    ics_tcp_nr0[0] =
    ...
    ics_tcp_nr0[7] =

    ics_ll_mct:                          ← ICS Low-Level Memory Channel Transport Attributes
    ics_mct_debug_flags = 0
    ics_mct_fsw_timeout = 4096
    ics_mct_rsw_timeout = 4096
    ics_mct_old_vers = 1
    ics_mct_new_vers = 1
    ics_mct_heap_timeout = 30
    ics_mct_small_bucket = 512
    ics_mct_medium_bucket = 2048
    ics_mct_large_bucket = 8192
    ics_mct_frag_freelim = 32
    ics_mct_nodedown_timeout = 100
    ics_mct_nodedown_paniclimit = 0
    ics_mct_enabled = 1
    ics_mct_auto_oolbucket_up = 0
    ics_mct_hb_disable = 0
    ics_mct_yield_ticks = 10
    Module_Config_Name = attribute does not allow this operation

    icsnet:                              ← Network Interface Communication Attributes
    hdwr_addr = 42:00:00:00:00:01        ← Internally managed MAC address
    verbose = 0
    icsnet_mtu = 7000
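To pull a single value out of output like this in a script, one option is a small awk filter keyed on the subsystem header lines. The sketch below assumes the sysconfig -q layout shown above (a `subsystem:` line followed by `attribute = value` lines); sysconfig_attr is a hypothetical name of our own. For one attribute, `sysconfig -q ics_hl ics_idle_daemons_hwm` already does the job, so the filter is mainly useful when scanning saved output from the loop.

```sh
# sysconfig_attr SUBSYS ATTR: hypothetical helper, not part of Tru64 UNIX.
# Reads "sysconfig -q" output on stdin and prints the value of ATTR within
# the SUBSYS section. Exits non-zero if the attribute is not found there.
sysconfig_attr() {
    awk -v subsys="$1" -v attr="$2" '
        /^[A-Za-z0-9_]+:[ \t]*$/ {            # section header, e.g. "ics_hl:"
            cur = substr($1, 1, length($1) - 1)
            next
        }
        cur == subsys && $1 == attr && $2 == "=" {
            sub(/^[^=]*=[ \t]*/, "")          # strip "name = ", keep the value
            print
            found = 1
            exit
        }
        END { exit !found }'
}

# Example:
#   sysconfig -q ics_hl | sysconfig_attr ics_hl ics_idle_daemons_hwm
```

Scoping the lookup to a section matters because some names, such as Module_Config_Name, appear in every subsystem.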

In order to quickly handle spikes of activity from ICS client services requesting unordered activities (such as CFS and DRD), a pool of idle processes is kept ready. The ics_hl subsystem attribute ics_idle_daemons_lwm sets the low water mark for this pool. The process count may grow up to ics_idle_daemons_hwm and even grow beyond it to handle heavy bursts of activity.

    # sysconfig -q ics_hl ics_idle_daemons_hwm
    ics_hl:
    ics_idle_daemons_hwm = 15

    # ps aux | grep icssvr_daemon_fr | wc -l
    18

Once the process count has grown, it falls back only as far as the high water mark, never below it; if the count never exceeds the high water mark, it does not decrease at all. The previous output shows the attribute value and then counts the current number of processes. In this case, the count exceeds the high water mark. (Because the grep process in that pipeline also matches the pattern, the true daemon count is probably one less, which still exceeds the high water mark.) If this situation arises consistently, ics_idle_daemons_hwm may need to be raised, since it could indicate constant creation and deletion of ICS daemon processes. If the kernel process creator daemon shows noticeable CPU time, you should raise the high water mark.
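Judging whether the count exceeds the high water mark "consistently" is easier with several samples than with one. The following sketch reports how many sampled counts of icssvr_daemon_fr processes were above ics_idle_daemons_hwm. The function name ics_pool_over_hwm and the sampling approach are our own assumptions, not a TruCluster procedure; the commented collection loop anchors the grep pattern so that the grep process itself is not counted.

```sh
# ics_pool_over_hwm HWM COUNT...: hypothetical helper.
# Prints "over/total": how many of the sampled daemon counts exceed HWM.
# The subshell body ( ... ) keeps the loop variables local to the function.
ics_pool_over_hwm() (
    hwm=$1
    shift
    over=0
    total=0
    for c in "$@"; do
        total=$((total + 1))
        if [ "$c" -gt "$hwm" ]; then
            over=$((over + 1))
        fi
    done
    echo "$over/$total"
)

# Possible collection loop on a cluster member (ten samples, 5 seconds apart):
#   hwm=$(sysconfig -q ics_hl ics_idle_daemons_hwm |
#         awk '$1 == "ics_idle_daemons_hwm" { print $3 }')
#   samples=""
#   i=0
#   while [ $i -lt 10 ]; do
#       samples="$samples $(ps -eo command | grep -c '^ *\[icssvr_daemon_fr\]')"
#       i=$((i + 1))
#       sleep 5
#   done
#   ics_pool_over_hwm "$hwm" $samples
```

A result such as `10/10` over a representative period would support raising the high water mark; an occasional excursion during a burst is the behavior the pool is designed for.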

    # ps -eo user,pid,time,command | awk '/^USER|kproc/ { print $0 }'
    USER    PID      TIME     COMMAND
    root    524290   0:00.05  [kproc_creator_da]

The CPU time column in the above output shows negligible CPU time (0.05 seconds), so there is no need to alter the ics_hl subsystem attributes.
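To script this check, the TIME value must first be converted into a number. A minimal sketch, assuming the `ps -eo user,pid,time,command` column order used above and a TIME field of the form [hh:]mm:ss.cc (a day prefix such as 1-02:03:04 is not handled); kproc_cpu_seconds is a hypothetical name. What counts as "noticeable" CPU time is left to your judgment, so the function only reports the number.

```sh
# kproc_cpu_seconds: hypothetical helper.
# Reads "ps -eo user,pid,time,command" output on stdin and prints the CPU
# time of the kernel process creator daemon, in seconds.
kproc_cpu_seconds() {
    awk '$4 == "[kproc_creator_da]" {
        n = split($3, t, ":")         # e.g. "0:00.05" -> "0", "00.05"
        secs = 0
        for (i = 1; i <= n; i++)
            secs = secs * 60 + t[i]   # fold [hh:]mm:ss.cc into seconds
        printf "%.2f\n", secs
    }'
}

# Example:
#   ps -eo user,pid,time,command | kproc_cpu_seconds
```

For the output above this prints 0.05.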

18.1.4 ICS Statistics

The ics option to the cfsstat(8) command provides statistics on ICS activities. Despite the command's name, which suggests CFS statistics only, cfsstat provides a wealth of ICS statistics. Run with an interval (the -i option), it gives excellent insight into ongoing ICS activity.

cfsstat has many options that focus on specific ICS statistics, as shown in Table 18-2.

Table 18-2: ICS options to cfsstat(8)

| Option | V5.1B, V5.1A | V5.1, V5.0A | Description |
|---|---|---|---|
| ics | √ | √ | Displays all ICS statistics |
| icsstat | √ | √ | Displays ICS client/server statistics |
| icschan | √ | √ | Displays ICS channel statistics (same as icschanbytes) |
| icssvc | √ | √ | Displays ICS service statistics (same as icssvcbytes) |
| icschanbps | √ | x | Displays ICS channel statistics (bytes per second mode) |
| icschanops | √ | x | Displays ICS channel statistics (ops per second mode) |
| icschanbytes | √ | x | Displays ICS channel statistics (byte mode) |
| icschancounts | √ | x | Displays ICS channel statistics (count mode) |
| icssvcbytes | √ | x | Displays ICS service statistics (byte mode) |
| icssvccounts | √ | x | Displays ICS service statistics (count mode) |
| icschanhist | √ | x | ICS channel transfer size histograms (total table) |
| icschanhisttot | √ | x | ICS channel transfer size histograms (total table) |
| icschanhistcli | √ | x | ICS channel transfer size histograms (client table) |
| icschanhistsvr | √ | x | ICS channel transfer size histograms (server table) |
| icschanhistclisend | √ | x | ICS channel transfer size histograms (cli send table) |
| icschanhistclirecv | √ | x | ICS channel transfer size histograms (cli recv table) |
| icschanhistsvrrecv | √ | x | ICS channel transfer size histograms (svr recv table) |
| icschanhistsvrsend | √ | x | ICS channel transfer size histograms (svr send table) |
| icschanhistall | √ | x | ICS channel transfer size histograms (all tables) |
| icslog | √ | √ | Displays the last 200 messages sent/received by ICS (available only in DEBUG kernels) |

The following output displays ICS statistics per channel.

    # cfsstat -i 5 icschanbps
      TOTL  BOOT  PRI0  PRI1   CFS   NET  CLSM   DRD  TUNL  CLUA  KEVM  CFSR  RBLD   KCH   DLM  TEST
      680m   15k   90m    9k  282m    1m   520  265m   17k   32m    6m     0     0  316k    2m     0
      136m     0     0     0     0     0     0     0     0   808     0     0     0     0     0     0
        3k     0     0     0     0     0     0     0     0    3k     0     0     0     0   350     0
        2k     0    1k     0   284     0     0     0     0   808     0     0     0     0   175     0
        7k     0     0     0    7k     0     0     0     0     0     0     0     0     0     0     0
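To follow one channel over time, you can select its column from the interval output by name rather than by position. The sketch below assumes the layout shown above: a header line beginning with TOTL (possibly repeated) followed by one line of values per interval. icschan_column is a hypothetical helper name.

```sh
# icschan_column CHANNEL: hypothetical helper.
# Reads "cfsstat -i N icschanbps" output on stdin and prints the value in
# the column headed CHANNEL (for example DLM, CFS, or DRD) for each interval.
icschan_column() {
    awk -v chan="$1" '
        $1 == "TOTL" {                        # header line (may repeat)
            col = 0
            for (i = 1; i <= NF; i++)
                if ($i == chan) col = i
            next
        }
        col > 0 && NF >= col { print $col }'
}

# Example: watch DLM channel traffic every 5 seconds
#   cfsstat -i 5 icschanbps | icschan_column DLM
```

For the sample above, `icschan_column DLM` prints 2m, 0, 350, 175, and 0. Depending on how cfsstat buffers its output when writing to a pipe, values may arrive in bursts rather than every 5 seconds.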

The following output gives you an idea of the spectacular quantity of statistics available from cfsstat. The "-S" option requests that the display be sorted from largest to smallest and eliminates any zero statistics.

    # cfsstat -S ics | more
    ICS static stats:
            16 total number of channels (istat_num_chans)
             3 total number of priority channels (istat_num_prios)
            64 maximum number of services (istat_max_svcs)
            27 number of elements per histogram (istat_hist_num_elems)
             4 histogram element size (istat_hist_elem_size)
    ICS client stats:
         31544 number of sync rpcs sent (istat_clirpc)
          8404 number of async rpcs sent (istat_climsg)
            11 signals forwarded (istat_clisigforward)
            11 signal forward retries (istat_clisigforward_retry)
    ICS server stats:
           319 number allocated handles (istat_svrhand_create)
           319 number of handles in use (istat_svrhand)
        110854 number of sync rpcs recvd (istat_svrrpc)
         11849 number of async rpcs recvd (istat_svrmsg)
             1 handle allocs deferred due to no memory (istat_svrhand_nomem)
            13 number of daemons created (istat_svrdaemon_create)
            13 number of waiting daemons (istat_svrdaemon)
    ICS Channel Stats:
    Total bytes per interval: 403219489 (cli: 8% svr: 91%)
    Channel  Svcs Usage   CliSend   CliRecv   SvrRecv   SvrSend
    _______  ____ _____ _________ _________ _________ _________
     6 DRD      2   70%      7796     21096   2661844 280235728
     3 CFS      4   22%   6984632  16638304  37480556  29464584
     1 PRI0     0    4%   2298888     67800  14105516    188824
     8 CLUA     1    1%   3821840      1208   2545200       928
     9 KEVM     1    1%   2169040         0   2111344         0
    13 DLM      1    0%    716276         0    685032         0
    12 KCH      1    0%    123911     72644    134066     62348
     5 CLSM     1    0%    235564         4         0         0
     4 NET      2    0%    118208         0    116964         0
     7 TUNL     1    0%      2184         0    110080         0
     0 BOOT     3    0%     10800        72     10316        32
    15 PBT      1    0%      4912      1640      5196      1736
     2 PRI1     0    0%       900       456       452       232
    10 CFSR     1    0%       216        36        72        12
    11 RBLD     1    0%         0         0         0         0

    ICS Service Stats:
    Total bytes per interval: 403219569 (cli: 8% svr: 91%)
    Service    Ch #Pr Usage   CliSend   CliRecv   SvrRecv   SvrSend
    _________  __ ___ _____ _________ _________ _________ _________
    16 DRDV1    6   7   70%      5276      1560   2633064 280202392
    12 FS       3  53   20%   6936472  10096108  37396624  27511744
     9 CFS      3  15    6%   2219684   6473128  13979868   1886716
    18 CLUA     8   2    1%   3821840      1208   2545200       928
    19 KEVM     9   1    1%   2169040         0   2111344         0
    23 DLM     13   6    0%    716280         8    685036         8
    26 ADVFS    3  36    0%     59224    135364     82272    253480
    22 KCH     12   2    0%    123911     72644    134066     62348
    25 CLSM     5  13    0%    235564         4         0         0
    14 ICSNET   4   2    0%    118208         0    116964         0
    20 CFSREC  10   5    0%     65940        68    123300        72
    17 TUNNEL   7   2    0%      2184         0    110160         0
    27 DRDV2    6   7    0%      2520     19536     32732     34584
     0 CNX      0  25    0%     10800        72     10316        32
    24 MCTCTL  15   8    0%      4912      1640      5196      1736
    10 CMS      3   9    0%      2032      1424       128       160
    21 RBLD    11   1    0%       896       448       448       224
     7 SIGNAL   0   3    0%       384        48         0         0

    Total:  BOOT  PRI0  PRI1   CFS   NET  CLSM   DRD  TUNL  CLUA  KEVM  CFSR  RBLD   KCH   DLM  TEST
         0     0     0     0     0     0     0     0     0     0     0     0     0     0     0     0
         1     0     0     0     0     0     0     0     0     0     0     0     0     0     0     0
         2     0     0     0     0     0     0     0     0     0     0     0     0     0     0     0
         4     0    1k     2     0     0     1     0     0   534     0    12     0     0     0     0
         8    17     4    86     0     0     0   125     0     0     0     0     0     0     0     0
        16     1     4    84     0     0     0   175     0     0     0    12     0     0     0     0
        32     4   813     0   39k    82     0   530     0     0     0     0     0   299     0     0
        64     3    2k     0    5k    2k     0    5k    1k     0     0     0     0   387     0     0
       128     0    2k     0  120k    11     0   159    25     0   127     0     0    78     0     0
       256    77    1k     0   90k     0     0   153     0     0    9k     0     0   109    4k     0
       512     0    84     0    2k     0     0    23     0     0    24     0     0   311     0     0
        1k     0   169     0   226     0     0    12     0     0     0     0     0     0     0     0
        2k     0   330     0   124     0     0     0     0    1k     0     0     0    12     0     0
        4k     0   305     0    82     0     0     0     0     0     0     0     0     9     0     0
        8k     0    1k     0    2k     0     0    1k     0     0     0     0     0     0     0     0
       16k     0   138     0   118     0     0   126     0     0     0     0     0     3     0     0
       32k     0     3     0    70     0     0   584     0     0     0     0     0     0     0     0
       64k     0     0     0    16     0     0    3k     0     0     0     0     0     0     0     0
      128k     0     0     0     0     0     1   212     0     0     0     0     0     0     0     0
      256k     0     0     0     0     0     0     0     0     0     0     0     0     0     0     0
      512k     0     0     0     0     0     0     0     0     0     0     0     0     0     0     0
        1m     0     0     0     0     0     0     0     0     0     0     0     0     0     0     0
        2m     0     0     0     0     0     0     0     0     0     0     0     0     0     0     0
        4m     0     0     0     0     0     0     0     0     0     0     0     0     0     0     0
        8m     0     0     0     0     0     0     0     0     0     0     0     0     0     0     0
       16m     0     0     0     0     0     0     0     0     0     0     0     0     0     0     0
      more     0     0     0     0     0     0     0     0     0     0     0     0     0     0     0


Pages: 273