Locating data

5.2 Locating data

The collection of data, in the form of measures, is a necessary task if we are to develop metrics. Most data will come from easily available sources, such as budget and schedule reports, defect reports, personnel counts, help line call records, and the like. The data usually reflects defect experience, project planning and status, and user response to the system.

5.2.1 Defect reporting

When software systems and programming staffs were small, the usual method of trouble reporting was to note the location of the defect on a listing or note pad and give it to the person responsible for repair. Documentation defects were merely marked in the margins and passed back to the author for action. As organizations have grown, and software and its defects have become more complex, these old methods have become inadequate. In many cases, though, they have not been replaced with more organized, reliable techniques. It is clear that the more information that can be recorded about a particular defect, the easier it will be to solve. The older methods did not, in general, prompt the reporter for as much information as was available to be written down.

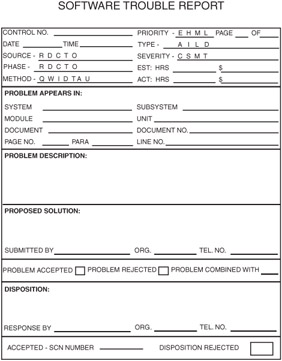

Figure 5.1 depicts a typical defect reporting form. It is a general software defect report. The actual format of the form is less important than the content. Also of less importance is the medium of the form; many organizations are reporting troubles directly on-line for interactive processing and broad, instant distribution.

Figure 5.1: Software trouble report.

It can be seen that the STR in Figure 5.1 is a combined document/software defect report. While many organizations maintain both a document and a software defect processing system, there is much to suggest that such a separation is unnecessary. Using the same form, and then, logically, the same processing system, eliminates duplication of effort. Further, all defect reports are now in the same system and database for easier recording, tracking, and trend analysis. Table 5.1 defines the codes used in the sample shown in Figure 5.1.

| Code or Field | Meaning | |

|---|---|---|

| Control number | Usually a sequential number for keeping track of the individual STRs. | |

| Priority | An indication of the speed with which this STR should be addressed: | |

| E = | emergency; work must stop until this STR is closed. | |

| H = | high; work is impeded while this STR is open. | |

| M = | medium; some negative impact on work exists until this STR is closed. | |

| L = | low; This STR must be closed, but it does not interfere with current work. | |

| Source | The phase in which the error was made that introduced the defect being described (these will depend on the organization's actual life cycle model and its phase names):

| |

| Severity | An estimate of the impact of this defect had it not been found until the software was in operation:

| |

| Phase | The phase in which this defect was detected (typically the same names as for source):

| |

| Estimate: hours and money | An estimate of the costs of correcting this defect and retesting the product | |

| Method | The defect detection technique with which this defect was detected (each organization will have its own set of techniques):

| |

| Actual: hours and money | The actual costs of correcting this defect and retesting the product | |

The STR form shown in Figure 5.1, when used to report documentation defects, asks for data about the specific location and the wording of the defective area. It then calls for a suggested rewording to correct the documentation defect, in addition to the simple what's wrong statement. In this way, the corrector can read the trouble report and, with the suggested solution at hand, can get a more positive picture of what the reviewer had in mind. This is a great deterrent to the comment "wrong" and also can help to avoid the response "nothing wrong." By including the requirement for a concise statement of the defect and a suggested solution, both the author and the reviewer have ownership of the results.

As a tool to report software defects, the STR includes data to be provided by both the initiator (who can be anyone involved with the software throughout its life cycle) and the corrector. Not only does it call for location, circumstances, and visible data values from the initiator, it asks for classification information such as the priority of the repair, criticality of the defect, and the original source of the defect (coding error, requirements deficiency, and so on). This classification information can be correlated later and can often serve to highlight weak portions of the software development process that should receive management attention. Such correlations can also indicate potential problem areas when new projects are started. These potential problem areas can be given to more senior personnel, be targeted for tighter controls, or receive more frequent reviews and testing during development.

5.2.2 Other data

Other, nondefect measures are available from many sources. During development of the software system, project planning and status data is available. These data include budget, schedule, size estimates (such as lines of code, function points, pages counts, and so on).

After installation of the system, data can be collected regarding customer satisfaction, functions most used and most ignored, return on investment, requirements met or not met, and so on.

EAN: 2147483647

Pages: 137