Custom Logging

| Integration Services logging is flexible and there are several options for logging destinations. However, with the standard facilities, logging might not always be as surgical as you'd like. Also, as you've seen previously, you can only create log events that the components support. There are several methods to augment and enhance the standard logging events and infrastructure. What follows is a description of two of those methods. Using the Script Task and Script ComponentThis is by far the easiest and simplest way to do custom logging. In the previous quickstart sample, this method was used to add a custom log entry as well as the sample packages for this chapter. For most cases, this method is the best choice for custom logging. In the Script Task, the code to write to the log is as follows: Dts.Log(messageText as String, dataCode as Integer, dataBytes() as Byte ) And you log the message similar to the following: Dts.Log("This is a log entry message", 42, nothing) You log in the Script transform the same way with the exception that you need to omit the "Dts." prefix. You'd write the log in the script component as follows: Log("This is a script component message", 42, nothing) The dataCode is caller dependent and can be any integer. These log entries show up with the name ScriptLogEntry, so if you plan to parse the logs later and you want to find a particular custom log entry, give it a unique ID to differentiate it from the others. Using the SQL TaskAn even more flexible way to do custom logging is to use the SQL Task. This approach has its advantages and disadvantages. The disadvantages to using the SQL Task are as follows:

The advantages to using the SQL Task are as follows:

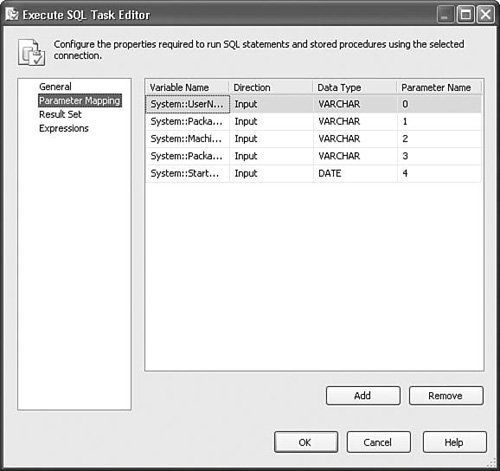

The sample package CustomLogging.dtsx in the S11-Logging solution creates a simple table in the ISSAMPLES sample database called CustomLog with the following statement: USE [SSISSAMPLES] GO if OBJECT_ID('CustomLog') IS NULL CREATE TABLE CustomLog ( username varchar(50), packagename varchar(50), machinename varchar(50), packageID varchar(50), starttime datetime ); GO This is there just for purposes of the sample to create the table. It's not necessary, of course, if the table already exists in a production environment. The second SQL Task has the following INSERT statement: INSERT INTO CustomLog VALUES(?,?,?,?,?); /* username varchar(50), packagename varchar(50), machinename varchar(50), packageID varchar(50), starttime date */ Next, some of the system variables are mapped to the query using parameter mappings. Figure 11.7 shows the Parameter Mapping tab of the Execute SQL Task Editor. This creates a new row with the values of the system variables listed and generates a nice table that tracks executed packages, their names, IDs, where they were executed, and at what time. This log schema doesn't include anything you don't get with the IS log schema, but it's easy to see how you could change the sample to implement your own that conforms to your custom schema. Figure 11.7. Use parameter mappings to include package information in your logs Put this SQL Task in the package at package scope to log simple information about the run of the package. Now, whenever you run the package, it enters a log into the PackageRun table, as shown in Figure 11.7. You can use this pattern to log any information you want in any schema you want inside a package without constraints. |

EAN: 2147483647

Pages: 200

- Integration Strategies and Tactics for Information Technology Governance

- Assessing Business-IT Alignment Maturity

- A View on Knowledge Management: Utilizing a Balanced Scorecard Methodology for Analyzing Knowledge Metrics

- Governance in IT Outsourcing Partnerships

- Governance Structures for IT in the Health Care Industry