6.1 Bioelectronics versus Semiconductor Electronics

One of the best ways to introduce bioelectronic computing is to compare the potential advantages of this new technology with the current advantages of semiconductor electronics, as outlined in table 6.1. Many additional characteristics could have been included, but those listed in table 6.1 were selected to provide the broadest coverage with a minimum number of categories. The first column of table 6.1 lists the principal issues that not only encourage but also challenge scientists seeking to implement bioelectronics. We should also point out that many of the challenges molecular engineers face in achieving miniaturization and speed will soon confront semiconductor engineers as their technology advances. As the molecular level is approached, the problems involved in developing computing systems become the same (i.e., quantum limits, interconnect issues, nanoscale engineering, and stability and reliability issues), independent of the technology used. We discuss each item in table 6.1 separately below.

| Characteristic | Potential Advantages (Bioelectronics) | Current Advantages (Semiconductor) |
|---|---|---|
| Size | Small size of molecular scale offers high intrinsic speed. Picosecond switching rates are common. | Already impressive minimum feature sizes are decreasing by 15% per year. However, advancement into the molecular domain will be limited by hurdles similar to those faced by bioelectronics. |
| Speed | High intrinsic speed is a result of small size. Picosecond switching rates are common. | Current clock speeds are on the order of 1 GHz, and a factor of 3 to 5 improvement is expected before the standard technology reaches its scaling limit. |
| Nanoscale engineering | Synthetic organic chemistry, self-assembly, and genetic engineering provide nanometer resolution. | Nanolithography provides higher scale factors and flexibility than current molecular techniques. |
| Architecture | Neural, associative, and parallel architectures can be implemented directly. | Three-terminal devices and standard logic designs offer high levels of integration. |
| Reliability | Ensemble averaging via optical coupling or state assignment averaging provides high reliability. | Current technology provides highly reliable systems, but advancement toward the molecular realm will create reliability issues. |

Size

Molecules are synthesized "from the bottom up" by additive synthesis, starting with readily available organic compounds. Bulk semiconductor devices are generated "from the top down" via lithographic manipulation of bulk materials. A synthetic chemist can selectively add an oxygen atom to a chromophore with far greater precision than a comparable lithographic step, carried out with electron beams or X-rays, can achieve when patterning an oxide layer on a semiconductor surface. Molecular-based gates can be made hundreds of times smaller than their semiconductor equivalents. At the same time, such gates have yet to approach the reliability or interconnect capacity of their semiconductor counterparts.

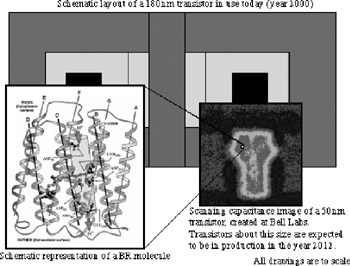

Consider the size of the transistors fabricated in production for use in computers today. A schematic diagram of such a device is shown in figure 6.1. Superimposed over this schematic is a scanning capacitance image of an experimental transistor, created by Bell Labs, that is near the minimum size limit for the standard room-temperature CMOS architecture. The Sematech semiconductor roadmap (ITRS 1999) predicts that transistors of this size will reach production in approximately 2012. The small white square in this image represents, to scale, a bacteriorhodopsin (BR) molecule. The BR molecule by itself is a complete optically triggered binary switching device, which illustrates how extremely small the switching devices considered in bioelectronics are. The key research effort in the bioelectronics field is to develop an architecture that can harness the switching capability of such small components.

Figure 6.1: A schematic representation of a semiconductor transistor currently in production (0.18-µm minimum dimension). A scanning capacitance image of a 0.05-µm transistor fabricated at Bell Labs (© 2000 Lucent Technologies) is superimposed over this schematic at the same scale. The 0.05-µm transistor is considered to be about the minimum dimension for room-temperature CMOS operation, because in smaller devices noise makes the "off" state inseparable from the "on" state. Transistors of this size are expected to be fabricated in production around the year 2012 (Sematech). The small white square inset in the figure represents the relative size of a bacteriorhodopsin molecule, which by itself is a complete optically activated switching device.
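As a rough, back-of-the-envelope check, the 15% per year shrink rate quoted in table 6.1 can be combined with the two feature sizes in figure 6.1. The sketch below assumes a constant exponential shrink rate, which is a simplification; roadmap predictions such as the Sematech date account for many factors that this calculation ignores. The function name is illustrative only.

```python
import math


def years_to_shrink(start_um: float, target_um: float, annual_shrink: float) -> float:
    """Years for a feature size to fall from start_um to target_um,
    assuming (hypothetically) that it shrinks by the constant fraction
    `annual_shrink` every year."""
    if not (0.0 < annual_shrink < 1.0):
        raise ValueError("annual_shrink must be between 0 and 1")
    if start_um <= 0.0 or target_um <= 0.0:
        raise ValueError("feature sizes must be positive")
    # start * (1 - r)**t = target  =>  t = ln(target / start) / ln(1 - r)
    return math.log(target_um / start_um) / math.log(1.0 - annual_shrink)


# 0.18 um (production device) -> 0.05 um (Bell Labs device) at 15% per year
print(f"{years_to_shrink(0.18, 0.05, 0.15):.1f} years")
```

Under this constant-rate assumption the step from 0.18 µm to 0.05 µm takes roughly eight years.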

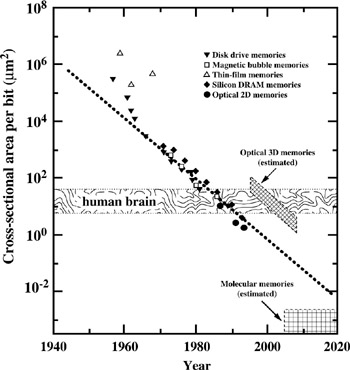

It is also interesting to examine the trends in bit size that have characterized the last few decades of memory development. The results shown in figure 6.2 indicate that the area per bit has decreased exponentially since the early 1970s (Keyes 1992; Birge 1994). For comparison, figure 6.2 also shows the cross-sectional area per bit calculated for the human brain (assuming one neuron is equivalent to one bit) and for proposed three-dimensional memories and proposed molecular memories. Although current technology has surpassed the cross-sectional density of the human brain, the major advantage of the brain's neural system is that information is stored in three dimensions. At present, the mind of a human being can store more "information" than the disk storage allocated to the largest supercomputer. Of course, the human brain is not digital, and such comparisons are tenuous. Nevertheless, the analogy underscores the fact that current memory technology is still anemic compared to the technology inherent in the human brain. It also demonstrates the rationale for, and potential of, the development of three-dimensional memories as described later in this chapter. We can also conclude from figure 6.2 that the trend in memory densities will soon force the bulk semiconductor industry to address some of the same issues that confront scientists who seek to implement molecular electronics.

Figure 6.2: Analysis of the area in square microns required to store a single bit of information as a function of the evolution of computer technology in years. The data for magnetic disk, magnetic bubble, thin-film, and silicon DRAM memories are taken from Keyes (1992). These data are compared to the cross-sectional area per bit (neuron) for the human brain as well as anticipated areas and implementation times for optical three-dimensional memories and molecular memories (Birge 1994). Note that the optical 3D memory, the brain, and the molecular memories are three-dimensional, and therefore the cross-sectional area (A) per bit is plotted for comparison. The area is calculated from the volume per bit, V, by the formula $A = V^{2/3}$.
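The volume-to-area conversion used in figure 6.2 can be written as a small helper. The function name and the example volumes are illustrative assumptions; the point is simply that a three-dimensional store is compared with planar memories through the face area of the cube that holds one bit.

```python
def cross_section_per_bit(volume_per_bit_um3: float) -> float:
    """Cross-sectional area per bit (um^2) of a 3D store, A = V**(2/3),
    where V is the volume allocated to one bit (um^3)."""
    if volume_per_bit_um3 <= 0.0:
        raise ValueError("volume per bit must be positive")
    return volume_per_bit_um3 ** (2.0 / 3.0)


# A bit occupying an 8 um^3 cube (2 um on a side) presents a face of about 4 um^2.
print(round(cross_section_per_bit(8.0), 6))
# A 1000 um^3 cube (10 um on a side) presents a face of about 100 um^2.
print(round(cross_section_per_bit(1000.0), 6))
```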

Speed

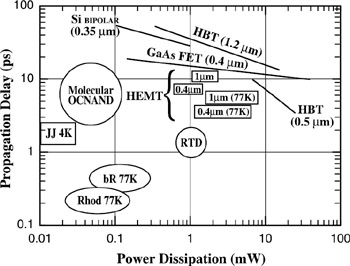

Molecular gates achieve very rapid switching speeds due in large part to their small size. Whether the gate is designed to operate via electron transfer, electron tunneling, or conformational photochromism, a decrease in size will yield a comparable increase in speed. All gates in use, under study, or envisioned are activated by a shift in the position of a charge carrier, and all charge carriers have mass; this creates the speed-versus-size trade-off. Whether the device is classical or relativistic, the mass of the carrier limits how rapidly the conformational change can take place. One can criticize this view as arbitrarily restrictive, because electrostatic changes can be generated via optical excitation, and an excited electronic state can be generated within a large chromophore in less than one femtosecond (1 fs = 10⁻¹⁵ s, the time it takes light to travel ∼0.3 μm). Nevertheless, the response of the system to the charge shift is still a size-dependent property, and the relationship between the total size of the device and its response time remains valid. A comparison of the switching speeds of molecular gates with those of some of the higher-speed semiconductor gates and switches is presented in figure 6.3.

Figure 6.3: The propagation delay and power dissipation of selected molecular systems and semiconductor devices. HBT, heterojunction bipolar transistor; HEMT, high electron mobility transistor; RTD, resonant tunneling device; OCNAND, optically coupled NAND gate; JJ, Josephson junction; bR, bacteriorhodopsin primary photochemical event; Rhod, visual rhodopsin primary photochemical event. Feature sizes of the semiconductor devices are indicated in parentheses. The propagation delay of photonic molecular devices is defined as the time necessary for the absorption spectrum to reach 1/e of the final photoproduct absorption maximum.

When considering extremely small devices, the ultimate speed is determined by quantum mechanical effects. Heisenberg uncertainty limits the maximum frequency of operation, fmax, of a monoelectronic or monomolecular device based on the following relationship (Birge, Lawrence, and Tallent 1991):

![]()

where $\tilde{\nu}_s$ is the energy separation of the two states of the device in wavenumbers and N is the number of state assignments that must be averaged to achieve reliable state assignment. This equation applies only to monoelectronic or monomolecular devices; Heisenberg's uncertainty principle permits higher frequencies for ensemble-averaged devices. For example, if a device requires 1000 state assignment averages to achieve reliability and $\tilde{\nu}_s \approx 1000$ cm⁻¹, it will have a maximum operating frequency of ∼960 MHz. (The concept of state assignment averaging is defined and examined quantitatively in Birge, Lawrence, and Tallent 1991.) Virtually all monomolecular or monoelectronic devices require N > 500 at ambient temperature, but cryogenic devices operating at 1.2 K can approach N = 1. Thus, although molecular devices have an inherent advantage with respect to speed, quantum mechanics places constraints on the maximum operating frequency, and these constraints are significant at ambient temperatures.

Nanoscale Engineering

As indicated in figure 6.2, continued improvement in computer processing power requires a decrease in feature size. Driven by the demand for higher speeds and densities, submicron feature sizes are now commonplace. Ultraviolet lithography must eventually be replaced by higher-resolution techniques such as electron beam or X-ray lithography. Although such lithography is well understood, it is very expensive to implement. As noted above, organic synthesis provides a "bottom-up" approach that offers a 10- to 100-fold improvement in resolution relative to the best lithographic methods. Organic synthesis has been developed to a high level of sophistication, due in large part to the efforts of natural product synthetic chemists to re-create a priori the complex molecules that nature has developed via billions of years of natural selection. Because a sophisticated synthetic effort already exists within the drug industry, a commercially viable molecular electronic device could likely be produced in large quantities using present commercial facilities.

Two alternatives to organic synthesis have had a significant impact on current efforts in molecular electronics: self-assembly and genetic engineering. The use of the Langmuir-Blodgett technique to prepare organized structures is the best-known example of self-assembly (Birge 1994b; Ratner and Jortner 1997). But self-assembly can also be used to generate membrane-based devices, microtubule-based devices, and liquid crystal holographic films (Birge 1994b; Ratner and Jortner 1997). Genetic engineering offers a unique approach to the generation and manipulation of large biological molecules; we discuss this unique element of bioelectronics below. Thus molecular electronics provides at least three distinct methods of generating nanoscale devices: organic synthesis, self-assembly, and mutagenesis. The fact that the latter two methods currently offer access to larger and often more complicated structures has been responsible in part for the early success of biomolecular electronics. All three techniques offer resolutions significantly better than those possible via bulk lithography.

Architecture

The fundamental building block of current computer systems and signal processing circuitry is the three-terminal transistor. Lithography offers an advantage that none of the techniques available to bioelectronics can duplicate: it can be used to construct ultra-large-scale integrated (ULSI) devices involving 10⁷ to 10⁹ discrete components with complex interconnections. The true power of the integrated circuit comes not from the fact that many millions of devices can be fabricated on a chip, but from the ability to interconnect those devices in a complex circuit. This particular architecture is difficult to implement using molecules, although it should be recognized that it is becoming difficult to implement even with semiconductor materials. For example, the industry has had to switch from aluminum to copper as the interconnection material, because aluminum becomes susceptible to electromigration at the wire sizes in use today. It is not clear how interconnect technology would be implemented with molecular-sized semiconductor transistors.

Bioelectronics offers significant potential for exploring new architectures, and this potential is one of the key features prompting the enthusiasm of researchers. The need for new architectures as devices approach the molecular level could encourage the investigation and development of new designs based on neural, associative, or parallel architectures and lead to hybrid systems with enhanced capabilities relative to current technology. For example, as described later in this chapter, optical associative memories and three-dimensional memories with unique capabilities can be implemented using molecular electronics (Birge et al. 1997). Implementation of these memories within hybrid systems is anticipated to have near-term application. Furthermore, the human brain, a computer with capabilities that far exceed those of the most advanced supercomputer, is a prime example of the potential of molecular electronics (Kandel, Schwartz, and Jessell 1991). Although the development of an artificial neural computer is beyond our current technology, it would be illogical to assume that such an accomplishment is impossible. Thus we should view molecular electronics as opening new architectural opportunities that will lead to advances in computer and signal processing systems.

Reliability

The issue of reliability has been invoked repeatedly by semiconductor scientists and engineers as a reason to view molecular electronics as impractical. Some believe that the need to use ensemble averaging in optically coupled molecular gates and switches is symptomatic of the inherent unreliability of molecular electronic devices. This point of view is comparable to suggesting that transistors are inherently unreliable because more than one charge carrier must be used to provide satisfactory performance. The majority of ambient-temperature molecular and bulk semiconductor devices use more than one molecule or charge carrier to represent a bit for two reasons: ensemble averaging improves reliability and it permits higher speeds. The nominal use of ensemble averaging does not, however, rule out reliable monomolecular or monoelectronic devices.

The probability of correctly assigning the state of a single molecule, p1, is never exactly unity. This less-than-perfect assignment capability is due to quantum effects as well as inherent limitations in the state assignment process. The probability of an error in state assignment, Perror, is a function of p1 and the number of molecules, n, within the ensemble used to represent a single bit of information. Perror can be approximated by the following formula (Birge, Lawrence, and Tallent 1991):

![]()

where erf [Z0; Z1] is the differential error function defined by:

![]()

where

![]()

Equation 6.2 is approximate and neglects the error associated with the probability that the number of molecules in the correct conformation can stray from its expectation value on statistical grounds. Nevertheless, it is sufficient to demonstrate the relationship between reliability and ensemble size. First, we define a logarithmic reliability parameter, ξ, which is related to the probability of error in the measurement of the state of the ensemble (device) by $P_{\text{error}} = 10^{-\xi}$. A value of ξ = 10 is considered a minimal requirement for reliability in non-error-correcting digital architectures.
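Because ξ is simply the negative base-10 logarithm of the error probability, it is easy to move between the two. The helper names below are hypothetical; the example uses the value $P_{\text{error}} \approx 8 \times 10^{-11}$ that this section quotes for a 95-molecule ensemble.

```python
import math


def reliability_parameter(p_error: float) -> float:
    """Logarithmic reliability parameter xi, defined by P_error = 10**(-xi)."""
    if not (0.0 < p_error < 1.0):
        raise ValueError("p_error must lie strictly between 0 and 1")
    return -math.log10(p_error)


def error_probability(xi: float) -> float:
    """Inverse relation: probability of a state-assignment error for a given xi."""
    return 10.0 ** (-xi)


# The minimal requirement xi = 10 corresponds to one error in 10^10 assignments.
print(error_probability(10.0))
# An error probability of 8e-11 gives xi of about 10.1, i.e. xi > 10.
print(round(reliability_parameter(8e-11), 2))
```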

If we assume that the state of a single molecule can be assigned correctly with a probability of 90 percent (p1 = 0.9), then equation 6.2 indicates that 95 molecules must collectively represent a single bit to yield ξ > 10 [Perror(95, 0.9) ≅ 8 × 10⁻¹¹]. We must recognize that a value of p1 = 0.9 is larger than is normally observed; some examples of reliability analyses for specific molecular-based devices are given in Birge, Lawrence, and Tallent (1991). In general, ensembles larger than 10³ are required for reliability unless fault-tolerant or fault-correcting architectures can be implemented.
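Equation 6.2 itself is not reproduced here. As a hedged illustration of the same trend, the sketch below uses a different and much simpler model, assumed purely for demonstration: each of n molecules is read independently, each is assigned correctly with probability p1, and the bit is assigned by majority vote. Because this is not the model behind equation 6.2, its numbers should not be compared directly with the 95-molecule figure quoted above; it only shows how rapidly the error probability falls as the ensemble grows. The function name is hypothetical.

```python
from math import comb


def majority_vote_error(n: int, p1: float) -> float:
    """Probability that a majority vote over n independent molecules assigns
    the wrong state, when each molecule is read correctly with probability p1.
    Ties (possible for even n) are counted as errors."""
    if n < 1 or not (0.5 < p1 <= 1.0):
        raise ValueError("need n >= 1 and 0.5 < p1 <= 1")
    # Exact binomial sum over k = number of correctly assigned molecules;
    # the vote fails whenever k <= n // 2.
    return sum(comb(n, k) * p1**k * (1.0 - p1) ** (n - k)
               for k in range(0, n // 2 + 1))


# Error probability shrinks rapidly with ensemble size for p1 = 0.9.
for n in (1, 5, 15, 25):
    print(n, majority_vote_error(n, 0.9))
```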

The question then arises as to whether we can design a reliable computer or memory that uses a single molecule to represent a bit of information. The answer is "yes," provided one of two conditions applies. The first condition is architectural. It is possible to design fault-tolerant architectures that either recover from digital errors or simply operate reliably with occasional errors. One example of digital error correction is the use of additional bits beyond the number required to represent a number. This approach is common in semiconductor memories, and in most implementations these additional bits provide single-bit error correction and multiple-bit error detection. Such architectures lower the required value of ξ to values less than 4. An example of analog error tolerance is embodied in many optical computer designs that use holographic and/or Fourier architectures to carry out complex functions.
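A minimal example of the digital approach is a Hamming(7,4) code, which adds three parity bits to four data bits and can correct any single flipped bit. It is shown here only as a standard textbook instance of single-bit error correction, not as the scheme used in any particular memory; on its own it does not provide the multiple-bit error detection mentioned above, which typically requires an extra overall parity bit. The function names are illustrative.

```python
from typing import List


def hamming74_encode(d: List[int]) -> List[int]:
    """Encode 4 data bits as a 7-bit Hamming codeword.
    Positions 1..7 are laid out as par1 par2 d1 par3 d2 d3 d4."""
    if len(d) != 4 or any(b not in (0, 1) for b in d):
        raise ValueError("expected four bits")
    d1, d2, d3, d4 = d
    par1 = d1 ^ d2 ^ d4  # checks positions 1, 3, 5, 7
    par2 = d1 ^ d3 ^ d4  # checks positions 2, 3, 6, 7
    par3 = d2 ^ d3 ^ d4  # checks positions 4, 5, 6, 7
    return [par1, par2, d1, par3, d2, d3, d4]


def hamming74_decode(c: List[int]) -> List[int]:
    """Correct at most one flipped bit and return the 4 data bits."""
    if len(c) != 7:
        raise ValueError("expected seven bits")
    c = list(c)
    # Recompute each parity group (list indices are 1-based positions minus 1).
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]
    s3 = c[3] ^ c[4] ^ c[5] ^ c[6]
    syndrome = s1 + 2 * s2 + 4 * s3  # 1-based position of the bad bit, 0 if none
    if syndrome:
        c[syndrome - 1] ^= 1
    return [c[2], c[4], c[5], c[6]]


data = [1, 0, 1, 1]
word = hamming74_encode(data)
word[5] ^= 1  # simulate one bit flipped during storage
print(hamming74_decode(word) == data)
```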

The second condition is more subtle. It is possible to design molecular architectures that can undergo a state reading process that does not disturb the state of the molecule. For example, an electrostatic switch could be designed that can be "read" without changing the state of the switch. Alternatively, an optically coupled device can be read by using a wavelength that is absorbed or diffracted but that does not initiate state conversion. Under these conditions, the variable n (which appears in equation 6.2) can be defined as the number of read "operations" rather than the ensemble size. Thus our previous example, which indicated that 95 molecules must be included in the ensemble to achieve reliability, can be restated as follows: a single molecule can be used, provided we can carry out 95 nondestructive measurements to define its state. Multiple-state measurements are equivalent to integrated measurements and should not be interpreted as a start-read-stop cycle repeated n times. A continuous read with digital or analog averaging can achieve the same level of reliability.
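The equivalence between repeated nondestructive reads and a larger ensemble can be illustrated with a toy model of analog averaging. Everything in the sketch is an assumption made for illustration: the ±1 signal levels, additive Gaussian read noise, independence between reads, and the function names. Under those assumptions, averaging n reads shrinks the noise by a factor of √n, and about 25 reads of a molecule whose single-read assignment is 90 percent reliable push the error probability below 10⁻¹⁰. This toy model is not equation 6.2, so its read count differs from the 95 quoted in the text.

```python
import math
import random
from statistics import NormalDist

# Assumed toy model (not from the source): each nondestructive read of one
# molecule returns +1 for state "1" or -1 for state "0", plus Gaussian noise,
# and the state is assigned by thresholding the averaged signal at 0.


def noise_sigma_for(p1: float) -> float:
    """Noise level at which a single read is correct with probability p1."""
    if not (0.5 < p1 < 1.0):
        raise ValueError("p1 must lie strictly between 0.5 and 1")
    return 1.0 / NormalDist().inv_cdf(p1)


def averaged_read_error(n_reads: int, p1: float) -> float:
    """Error probability after averaging n_reads independent reads."""
    sigma_mean = noise_sigma_for(p1) / math.sqrt(n_reads)
    # A "+1" molecule is misassigned if its averaged signal falls below 0.
    return NormalDist(mu=1.0, sigma=sigma_mean).cdf(0.0)


def simulate_error_rate(n_reads: int, p1: float, trials: int, seed: int = 0) -> float:
    """Monte Carlo check: fraction of trials whose averaged reading is misassigned."""
    rng = random.Random(seed)
    sigma = noise_sigma_for(p1)
    errors = 0
    for _ in range(trials):
        mean = sum(1.0 + rng.gauss(0.0, sigma) for _ in range(n_reads)) / n_reads
        if mean < 0.0:
            errors += 1
    return errors / trials


for n in (1, 9, 25):
    print(n, averaged_read_error(n, 0.9))
print(simulate_error_rate(1, 0.9, 20000))
```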
