Protection Mechanisms

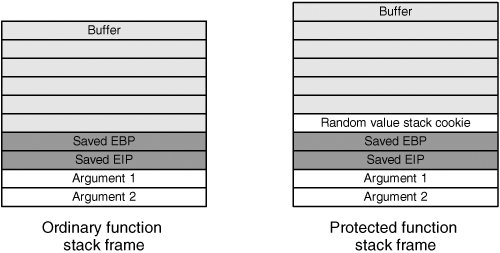

| The basics covered so far represent viable exploitation techniques for some contemporary systems, but the security landscape is changing rapidly. Modern OSs often include preventive technologies to make it difficult to exploit buffer overflows. These technologies typically reduce the attacker's chance of exploiting a bug or at least reduce the chance that a program can be constructed to reliably exploit a bug on a target host. Chapter 3, "Operational Review," discussed several of these technologies from a high-level operations perspective. This section builds on Chapter 3's coverage by focusing on technical details of common anticorruption protections and addressing potential and real weaknesses in these mechanisms. This discussion isn't a comprehensive study of protection mechanisms, but it does touch on the most commonly deployed ones.

Stack Cookies

Stack cookies (also known as "canary values") are a method devised to detect and prevent exploitation of a buffer overflow on the stack. Stack cookies are a compile-time solution present in most default applications and libraries shipped with Windows XP SP2 and later. There are also several UNIX implementations of stack cookie protections, most notably ProPolice and Stackguard.

Stack cookies work by inserting a random 32-bit value (usually generated at runtime) on the stack immediately after the saved return address and saved frame pointer but before the local variables in each stack frame, as shown in Figure 5-13. This cookie is inserted when the function is entered and is checked immediately before the function returns. If the cookie value has been altered, the program can infer that the stack has been corrupted and take appropriate action. This response usually involves logging the problem and terminating immediately. The stack cookie prevents traditional stack overflows from being exploitable, as the corrupted return address is never used.

Figure 5-13. 
Stack frame with and without cookies

Limitations

This technology is effective but not foolproof. Although it prevents overwriting the saved frame pointer and saved return address, it doesn't protect against overwriting adjacent local variables. Figure 5-5 showed how overwriting local variables can subvert system security, especially when you corrupt pointer values the function uses to modify data. Modification of these pointer values usually results in the attacker seizing control of the application by overwriting a function pointer or other useful value. However, many stack protection systems reorder local variables, which can minimize the risk of adjacent variable overwriting.

Another attack is to write past the stack cookie and overwrite the parameters to the current function. Reaching the parameters necessarily corrupts the stack cookie along the way, but the goal of the attack is to not let the function return. In certain cases, overwriting function parameters allows the attacker to gain control of the application before the function returns, thus rendering the stack cookie protection ineffective. Although this technique seems as though it would be useful to attackers, optimization can sometimes inadvertently eliminate the chance of a bug being exploited. When a variable value is used frequently, the compiler usually generates code that reads it off the stack once and then keeps it in a register for the duration of the function or the part of the function in which the value is used repeatedly. So even though an argument or local variable might be accessed frequently after an overflow is triggered, attackers might not be able to use that argument to perform arbitrary overwrites.

Another similar technique on Windows is to not worry about the saved return address and instead shoot for an SEH overwrite. 
This way, the attacker can corrupt SEH records and trigger an access violation before the currently running function returns; therefore, attacker-controlled code runs and the overflow is never detected.

Finally, note that stack cookies are a compile-time solution and might not be a realistic option if developers can't recompile the whole application. The developers might not have access to all the source code, such as code in commercial libraries. There might also be issues with making changes to the build environment for a large application, especially with hand-optimized components.

Heap Implementation Hardening

Heap overflows are typically exploited through the unlinking operations performed by the system's memory allocation and deallocation routines. The list operations in memory management routines can be leveraged to write to arbitrary locations in memory and seize complete control of the application. In response to this threat, a number of systems have hardened their heap implementations to make them more resistant to exploitation. Windows XP SP2 and later have implemented various protections to ensure that heap operations don't inadvertently allow attackers to manipulate the process in a harmful manner. These mechanisms include the following:

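The unlinking operation described above, and the kind of consistency check that hardened heaps add to it, can be modeled in a few lines. The following Python sketch is a toy model under stated assumptions, not the code of any real allocator: memory is a dictionary keyed by invented addresses, the `FD`/`BK` offsets and the "function pointer" at `0x5000` are hypothetical, and `safe_unlink` only shows the general shape of a link check.

```python
"""Toy model of a free-list unlink and a hardened variant (illustrative only)."""

FD, BK = 0, 4  # hypothetical offsets of the forward/back links in a free chunk


class CorruptedListError(Exception):
    """Raised when a chunk's neighbors do not point back at it."""


def make_heap():
    """Model memory as a dict: three linked free chunks plus one unrelated
    word at 0x5000 standing in for a function pointer (all addresses invented)."""
    mem = {}
    chunks = [0x1000, 0x2000, 0x3000]
    for prev, cur in zip(chunks, chunks[1:]):
        mem[prev + FD] = cur   # prev->fd = cur
        mem[cur + BK] = prev   # cur->bk = prev
    mem[0x5000] = 0x7000       # hypothetical function pointer value
    return mem


def unlink(mem, chunk):
    """Classic unlink: two writes whose addresses come from the chunk itself."""
    fd, bk = mem[chunk + FD], mem[chunk + BK]
    mem[fd + BK] = bk  # fd->bk = bk
    mem[bk + FD] = fd  # bk->fd = fd


def safe_unlink(mem, chunk):
    """Hardened unlink: refuse to write unless both neighbors point back."""
    fd, bk = mem[chunk + FD], mem[chunk + BK]
    if mem.get(fd + BK) != chunk or mem.get(bk + FD) != chunk:
        raise CorruptedListError(hex(chunk))
    mem[fd + BK] = bk
    mem[bk + FD] = fd


def corrupt_links(mem, chunk, target, value):
    """Model an overflow that replaces the chunk's links so that a later
    unlink writes `value` to address `target`."""
    mem[chunk + FD] = target - BK
    mem[chunk + BK] = value
```

In this model, calling `corrupt_links(mem, 0x2000, target=0x5000, value=0x4141)` followed by `unlink(mem, 0x2000)` overwrites the word at `0x5000`, whereas `safe_unlink` raises `CorruptedListError` and leaves that word untouched. The check protects only the list links themselves; it says nothing about other data stored in the chunk.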
The UNIX glibc heap implementation has also been hardened to prevent easy heap exploitation. The glibc developers have added unlink checks to their heap management code, similar to the Windows XP SP2 defenses.

Limitations

Heap protection technologies aren't perfect. Most have weaknesses that still allow attackers to leverage heap data structures for reliable (or relatively reliable) exploitation. Some of the published works on defeating Windows heap protection include the following:

UNIX glibc implementations have undergone similar scrutiny. One useful resource is "The Malloc Maleficarum" by Phantasmal Phantasmagoria (www.securityfocus.com/archive/1/413007/30/0/threaded).

The most important limitation of these heap protection mechanisms is that they protect only the internal heap management structures. They don't prevent attackers from modifying application data on the heap. If you are able to modify other meaningful data, exploitation is usually just a matter of time and effort. Modifying program variables is difficult, however, as it requires specific variable layouts. An attacker can create these layouts in many applications, but it isn't always a reliable form of exploitation, especially in multithreaded applications.

Another point to keep in mind is that it's not uncommon for applications to implement their own memory management strategies on top of the system allocation routines. In this situation, the application in question usually requests a page or series of pages from the system at once and then manages them internally with its own algorithm. This can be advantageous for attackers because custom memory-management algorithms are often unprotected, leaving them vulnerable to variations on classic heap overwrite attacks.

Nonexecutable Stack and Heap Protection

Many CPUs provide fine-grained protection for memory pages, allowing the CPU to mark a page in memory as readable, writable, or executable. If the program keeps its code and data completely separate, it's possible to prevent shellcode from running by marking data pages as nonexecutable. By enforcing nonexecutable protections, the CPU prevents the most popular exploitation method, which is to transfer control flow to a location in memory where attacker-created data already resides.

Note: Intel CPUs didn't enforce nonexecutable memory pages until recently (2004). 
Some interesting workarounds were developed to overcome this limitation, most notably by the PaX development team (now part of the GR-Security team). Documentation on the inner workings of PaX is available at http://pax.grsecurity.net/.

Limitations

Because nonexecutable memory is enforced by the CPU, bypassing this protection directly isn't feasible; generally, the attacker cannot direct execution to a location on the stack or the heap. However, this does not prevent attackers from returning to useful code in the executable code sections, whether it's in the application being exploited or a shared library.

One popular technique to circumvent these protections is to have a series of return addresses constructed on the stack so that the attacker can make multiple calls to useful API functions. Often, attackers can return to an API function for unprotecting a region of memory with data they control. This marks the target page as executable and disables the protection, allowing the exploit to run its own shellcode.

In general, this protection mechanism makes exploiting protected systems more difficult, but sophisticated attackers can usually find a way around it. With a little creativity, the existing code can be spliced, diced, and coerced into serving the attacker's purpose.

Address Space Layout Randomization

Address space layout randomization (ASLR) is a technology that attempts to mitigate the threat of buffer overflows by randomizing where application data and code are mapped at runtime. Essentially, data and code sections are mapped at a (somewhat) random memory location when they are loaded. Because a crucial part of buffer overflow exploitation involves overwriting key data structures or returning to specific places in memory, ASLR should, in theory, prevent reliable exploitation because attackers can no longer rely on static addresses. 
Although ASLR is a form of security by obscurity, it's a highly effective technique for preventing exploitation, especially when used with some of the other preventative technologies already discussed.

Limitations

Defeating ASLR essentially relies on finding a weak point in the ASLR implementation. Attackers usually attempt to adopt one of the following approaches:

SafeSEH

Modern Windows systems (XP SP2+, Windows 2003, Vista) implement protection mechanisms for the SEH structures located on the stack. When an exception is triggered, the exception handler target addresses are examined before they are called to ensure that every one is a valid exception handler routine. At the time of this writing, the following procedure determines an exception handler's validity:

Limitations

SafeSEH protection is a good complement to the stack cookies used in recent Windows releases, in that it prevents attackers from using SEH overwrites as a method for bypassing the stack cookie protection. However, as with other protection mechanisms, it has had weaknesses in the past. David Litchfield of Next Generation Security Software (NGSSoftware) wrote a paper detailing some problems with early implementations of SafeSEH that have since been addressed (available at www.ngssoftware.com/papers/defeating-w2k3-stack-protection.pdf). Primary methods for bypassing SafeSEH included returning to a location in memory that doesn't belong to any module (such as the PEB), returning into modules without an exception table registered, or abusing defined exception handlers that might allow indirect running of arbitrary code.

Function Pointer Obfuscation

Long-lived function pointers are often the target of memory corruption exploits because they provide a direct method for seizing control of program execution. One method of preventing this attack is to obfuscate any sensitive pointers stored in globally visible data structures. This protection mechanism doesn't prevent memory corruption, but it does reduce the probability of a successful exploit for any attack other than a denial of service. For example, you saw earlier that an attacker might be able to leverage function pointers in the PEB of a running Windows process. To help mitigate this attack, Microsoft is now using the EncodePointer(), DecodePointer(), EncodeSystemPointer(), and DecodeSystemPointer() functions to obfuscate many of these values. These functions obfuscate a pointer by combining its pointer value with a secret cookie value using an XOR operation. Recent versions of Windows also use this anti-exploitation technique in parts of the heap implementation. 
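The XOR encoding described above can be illustrated with a short model. This Python sketch is not the Windows implementation: the `PointerEncoder` class, the `simulate_overwrite` helper, and the 32-bit pointer width are assumptions made for illustration, and it models only the XOR-with-a-secret-cookie step the text mentions.

```python
import secrets

MASK32 = 0xFFFFFFFF  # assume 32-bit pointers for this model


class PointerEncoder:
    """Hypothetical stand-in for an encode/decode pair keyed by a secret cookie."""

    def __init__(self, cookie=None):
        # A fresh random cookie per process is assumed; a fixed one can be
        # passed in to make the arithmetic reproducible.
        self._cookie = secrets.randbits(32) if cookie is None else cookie & MASK32

    def encode(self, pointer):
        """Obfuscate a pointer before storing it in a long-lived structure."""
        return (pointer ^ self._cookie) & MASK32

    def decode(self, encoded):
        """Recover the pointer immediately before it is called."""
        return (encoded ^ self._cookie) & MASK32


def simulate_overwrite(encoder, attacker_address):
    """Return the address the program would jump to if an attacker replaced
    a stored, encoded pointer with a raw address of their choosing."""
    return encoder.decode(attacker_address)
```

Because decoding is applied to whatever value sits in the slot, a raw address written by an attacker who doesn't know the cookie decodes to `attacker_address ^ cookie`, which is most likely an invalid location. The result in this model is a crash rather than controlled execution, which matches the point that the technique leaves denial of service possible.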
Limitations

This technology certainly raises the bar for exploit developers, especially when combined with other technologies, such as ASLR and nonexecutable memory pages. However, it's not a complete solution in itself and has only limited use. Attackers can still overwrite application-specific function pointers, as compilers currently don't encode function pointers the application uses. An attacker might also be able to overwrite normal unencoded variables that eventually provide execution control through a less direct vector. Finally, attackers might identify circumstances that redirect execution control in a limited but useful way. For example, when user-controlled data is in close proximity to a function pointer, just corrupting the low byte of an encoded function pointer might give attackers a reasonable chance of running arbitrary code, especially when they can make repeated exploit attempts until a successful value is identified. |
