1.3 (Multi)Media Data and Multimedia Metadata

This section describes (multi)media data and multimedia metadata. We give an overview of the MPEG coding family (MPEG-1, MPEG-2, MPEG-4), then introduce the multimedia metadata standard MPEG-7 and, finally, the concepts of (multi)media data and multimedia metadata introduced in MPEG-21. Multimedia metadata models are of obvious interest for the design of content-based multimedia systems, and we refer to them throughout the book.

1.3.1 (Multi)Media Data

Given the broad use of image, audio, and video data nowadays, it should come as no surprise that much effort has been put into developing standards for codecs, that is, for coding and decoding multimedia data. Because much multimedia data is redundant, multimedia codecs use compression algorithms to identify and remove that redundancy.

MPEG video compression [27] is used in many current and emerging products. It is at the heart of digital television set-top boxes, digital subscriber service, high-definition television decoders, digital video disc players, Internet video, and other applications. These applications benefit from video compression in that they now require less storage space for archived video information, less bandwidth for the transmission of the video information from one point to another, or a combination of both.

The basic idea behind MPEG video compression is to remove both spatial redundancy within a video frame and temporal redundancy between video frames. As in Joint Photographic Experts Group (JPEG), the standard for still-image compression, DCT (Discrete Cosine Transform)-based compression is used to reduce spatial redundancy. Motion compensation or estimation is used to exploit temporal redundancy. This is possible because the images in a video stream usually do not change much within small time intervals. The idea of motion compensation is to encode a video frame based on other video frames temporally close to it.
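The idea of predicting a block from a temporally close frame can be illustrated with a minimal block-matching sketch. This is not the search mandated by any MPEG standard (the standards leave the encoder's search strategy open); the function names, the 4 × 4 block size, the ±2 pixel search window, and the sum-of-absolute-differences cost are all assumptions chosen for illustration.

```python
def sad(block_a, block_b):
    """Sum of absolute differences between two equally sized blocks."""
    return sum(abs(a - b)
               for row_a, row_b in zip(block_a, block_b)
               for a, b in zip(row_a, row_b))


def get_block(frame, top, left, size):
    """Cut a size x size block out of a frame stored as a list of rows."""
    return [row[left:left + size] for row in frame[top:top + size]]


def best_motion_vector(ref, cur, top, left, size=4, search=2):
    """Exhaustively search ref for the block that best predicts a block of cur.

    Returns ((dy, dx), cost), where (dy, dx) is the displacement into the
    reference frame and cost is the SAD of the best match.
    """
    target = get_block(cur, top, left, size)
    height, width = len(ref), len(ref[0])
    best = (0, 0)
    best_cost = sad(get_block(ref, top, left, size), target)
    for dy in range(-search, search + 1):
        for dx in range(-search, search + 1):
            y, x = top + dy, left + dx
            if y < 0 or x < 0 or y + size > height or x + size > width:
                continue  # candidate block would leave the frame
            cost = sad(get_block(ref, y, x, size), target)
            if cost < best_cost:
                best, best_cost = (dy, dx), cost
    return best, best_cost


# Hypothetical test frames: the current frame is the reference shifted
# one pixel to the right, so the best match lies one pixel to the left.
ref = [[10 * y + x for x in range(8)] for y in range(8)]
cur = [[ref[y][max(x - 1, 0)] for x in range(8)] for y in range(8)]
print(best_motion_vector(ref, cur, 2, 2))  # ((0, -1), 0)
```

A cost of zero means the block is predicted perfectly, so only the motion vector would need to be transmitted; in real video the residual between prediction and block is coded as well.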

In addition to the fact that MPEG video compression works well in a wide variety of applications, a large part of its popularity is that it is defined in three finalized international standards: MPEG-1, MPEG-2, and MPEG-4.

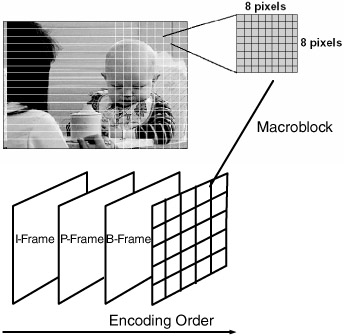

MPEG-1 [28] is the first standard (issued in 1993) by MPEG and is intended for medium-quality, medium-bit rate video and audio compression. It allows videos to be compressed by ratios in the range of 50:1 to 100:1, depending on image sequence type and desired quality. The encoded data rate is targeted at 1.5 megabits per second, for this is a reasonable transfer rate for a double-speed CD-ROM player. This rate includes audio and video. MPEG-1 video compression is based on a macroblock structure, motion compensation, and the conditional replenishment of macroblocks. MPEG-1 encodes the first frame in a video sequence in intraframe mode (I-frame). Each subsequent frame in a given group of pictures (e.g., 15 frames) is coded using interframe prediction (P-frame) or bidirectional prediction (B-frame). Only data from the nearest previously coded I-frame or P-frame is used for prediction of a P-frame. For a B-frame, either the previous or the next I- or P-frame, or both, are used. Exhibit 1.5 illustrates the principles of MPEG-1 video compression.

Exhibit 1.5: Principles of the MPEG-1 video compression technique.
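The prediction rules for I-, P-, and B-frames can be sketched as a small function that lists, for every frame in a group of pictures, the anchor frames it may be predicted from. The frame pattern `IBBPBBPBBPBBPBB` is only one plausible 15-frame arrangement, and the function name is an assumption made for this example.

```python
def references(gop):
    """Map each frame index to the anchor (I or P) frames it may predict from.

    gop is a string of frame types in display order, e.g. "IBBPBB...".
    """
    anchors = [i for i, kind in enumerate(gop) if kind in "IP"]
    refs = {}
    for i, kind in enumerate(gop):
        prev_anchor = max((a for a in anchors if a < i), default=None)
        next_anchor = min((a for a in anchors if a > i), default=None)
        if kind == "I":
            refs[i] = []  # intraframe coded, no reference needed
        elif kind == "P":
            refs[i] = [] if prev_anchor is None else [prev_anchor]
        else:  # B-frame: previous anchor, next anchor, or both
            refs[i] = [a for a in (prev_anchor, next_anchor) if a is not None]
    return refs


gop = "IBBPBBPBBPBBPBB"  # hypothetical 15-frame group of pictures
refs = references(gop)
print(refs[1])  # [0, 3]
print(refs[3])  # [0]
```

Because a B-frame may depend on a later anchor, an encoder has to transmit that anchor before the B-frames that reference it, which is why coding order and display order differ.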

On the encoder side, the DCT is applied to each 8 × 8 luminance and chrominance block, transforming the luminance and chrominance values into the frequency domain. The dominant value of each block (the DC coefficient) sits in the upper-left corner of the resulting 8 × 8 block and has special importance: it is encoded relative to the DC coefficient of the previous block (DPCM coding).
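As a rough illustration of this step, the sketch below computes a direct (unoptimized) orthonormal two-dimensional DCT of one 8 × 8 block and then codes a sequence of DC coefficients differentially. The function names are assumptions, and starting the DC predictor at 0 is a simplification made for this example; real encoders use fast DCT algorithms and the predictor reset rules of the standard.

```python
import math

N = 8  # block size used by the DCT


def dct_2d(block):
    """Orthonormal 2-D DCT-II of an N x N block (direct form, O(N^4))."""
    def scale(k):
        return math.sqrt(1 / N) if k == 0 else math.sqrt(2 / N)

    out = [[0.0] * N for _ in range(N)]
    for u in range(N):          # vertical frequency
        for v in range(N):      # horizontal frequency
            total = 0.0
            for y in range(N):
                for x in range(N):
                    total += (block[y][x]
                              * math.cos((2 * y + 1) * u * math.pi / (2 * N))
                              * math.cos((2 * x + 1) * v * math.pi / (2 * N)))
            out[u][v] = scale(u) * scale(v) * total
    return out


def dpcm(dc_values):
    """Code each DC value as the difference from the previous block's DC.

    Assumption for this sketch: the predictor starts at 0.
    """
    previous = 0
    diffs = []
    for dc in dc_values:
        diffs.append(dc - previous)
        previous = dc
    return diffs


flat = [[100] * N for _ in range(N)]   # a block with no spatial detail
coeffs = dct_2d(flat)
print(round(coeffs[0][0]))             # 800
print(dpcm([800, 808, 790]))           # [800, 8, -18]
```

A flat block puts all of its energy into the DC coefficient, and neighboring blocks tend to have similar DC values, so the differences are small numbers that are cheap to code.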

Then each of the 64 DCT coefficients is uniformly quantized. The nonzero quantized values of the remaining (AC) DCT coefficients and their locations are then zig-zag scanned and run-length entropy coded using variable-length code tables. The scanning of the quantized two-dimensional DCT signal, followed by variable-length code word assignment for the coefficients, serves as a mapping of the two-dimensional image signal into a one-dimensional bit stream. The purpose of zig-zag scanning is to trace the low-frequency DCT coefficients (containing the most energy) before tracing the high-frequency coefficients (which are perceptually less significant).
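The quantization, zig-zag scan, and run-length steps can be sketched as follows. The single quantizer step size of 16 and the `(zero_run, value)` pair representation are assumptions for illustration; an actual MPEG-1 encoder uses a quantization matrix with a scale factor and maps the pairs to code words from the standard's variable-length tables.

```python
def zigzag_order(n=8):
    """Return (row, col) positions of an n x n block in zig-zag scan order."""
    return sorted(
        ((r, c) for r in range(n) for c in range(n)),
        # Walk the anti-diagonals; alternate the direction on each one.
        key=lambda rc: (rc[0] + rc[1],
                        rc[0] if (rc[0] + rc[1]) % 2 else rc[1]),
    )


def quantize(coeffs, step=16):
    """Uniform quantization with one step size (a simplifying assumption)."""
    return [[int(round(c / step)) for c in row] for row in coeffs]


def run_length(block):
    """Return the DC value and (zero_run, value) pairs for the AC values."""
    scan = [block[r][c] for r, c in zigzag_order(len(block))]
    pairs, run = [], 0
    for value in scan[1:]:      # the DC coefficient is coded separately
        if value == 0:
            run += 1
        else:
            pairs.append((run, value))
            run = 0
    pairs.append("EOB")         # end of block: only zeros remain
    return scan[0], pairs


coeffs = [[0.0] * 8 for _ in range(8)]
coeffs[0][0], coeffs[0][1], coeffs[2][0] = 800.0, -35.0, 20.0
print(run_length(quantize(coeffs)))  # (50, [(0, -2), (1, 1), 'EOB'])
```

Because the scan visits low frequencies first, the long tail of zero-valued high-frequency coefficients collapses into the single end-of-block marker.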

MPEG-1 audio compression is based on perceptual coding schemes. It specifies three audio coding schemes, simply called Layer-1, Layer-2, and Layer-3. Encoder complexity and performance (sound quality per bit rate) progressively increase from Layer-1 to Layer-3. Each audio layer extends the features of the layer with the lower number. The simplest form is Layer-1. It has been designed mainly for the DCC (digital compact cassette), where it is used at 384 kilobits per second (kbps) (called "PASC"). Layer-2 achieves good sound quality at bit rates down to 192 kbps, and Layer-3 has been designed for lower bit rates down to 32 kbps. A Layer-3 decoder can also accept audio streams encoded with Layer-2 or Layer-1, whereas a Layer-2 decoder can additionally accept only Layer-1.

MPEG audio compression works differently from MPEG video compression. The audio stream flows into two independent blocks of the encoder. The mapping block of the encoder filters the input and creates 32 equal-width frequency subbands, whereas the psychoacoustics block determines a masking threshold for the audio input. By determining such a threshold, the psychoacoustics block can output information about noise that is imperceptible to the human ear and thereby reduce the size of the stream. Then, the audio stream is quantized to meet the actual bit rate specified by the layer used. Finally, the frame packing block assembles the actual bitstream from the output data of the other blocks and adds header information as necessary before sending it out.

MPEG-2 [29] (issued in 1994) was designed for broadcast television and other applications using interlaced images. It provides higher picture quality than MPEG-1 but uses a higher data rate. At lower bit rates, MPEG-2 provides no advantage over MPEG-1; at higher bit rates, above about 4 megabits per second, MPEG-2 should be used in preference to MPEG-1. Unlike MPEG-1, MPEG-2 supports interlaced television systems and vertical blanking interval signals. It is used for DVD (digital video disc) video.

The concept of I-, P-, and B-pictures is retained in MPEG-2 to achieve efficient motion prediction and to assist random access. Beyond what MPEG-1 offers, new motion-compensated field prediction modes are used to encode field pictures efficiently. The top fields and bottom fields are coded separately. Each bottom field is coded using motion-compensated interfield prediction based on the previously coded top field. The top fields are coded using motion compensation based on either the previously coded top field or the previously coded bottom field.

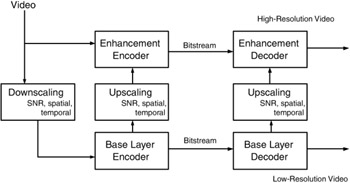

MPEG-2 introduces profiles for scalable coding. The intention of scalable coding is to provide interoperability between different services and to flexibly support receivers with different display capabilities. One of the important purposes of scalable coding is to provide a layered video bit stream that is amenable to prioritized transmission. Exhibit 1.6 depicts the general philosophy of a multiscale video coding scheme.

Exhibit 1.6: Scalable coding in MPEG-2.

MPEG-2 syntax supports up to three different scalable layers. Signal-to-noise ratio scalability is a tool that has been primarily developed to provide graceful quality degradation of the video in prioritized transmission media. Spatial scalability has been developed to support display screens with different spatial resolutions at the receiver. Lower-spatial resolution video can be reconstructed from the base layer. The temporal scalability tool generates different video layers. The lower one (base layer) provides the basic temporal rate, and the enhancement layers are coded with temporal prediction from the lower layer. These layers, when decoded and temporally multiplexed, yield the full temporal resolution of the video. Stereoscopic video coding can be supported with the temporal scalability tool. A possible configuration is as follows. First, the reference video sequence is encoded in the base layer. Then, the other sequence is encoded in the enhancement layer by exploiting binocular and temporal dependencies; that is, disparity and motion estimation or compensation.
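Temporal scalability can be pictured with a toy sketch in which frames are plain list items: every second frame goes into the base layer, the rest into one enhancement layer, and a receiver multiplexes whatever layers it has. The even/odd split and the function names are assumptions for illustration; the sketch ignores the prediction between layers described above.

```python
def split_temporal_layers(frames):
    """Put even-indexed frames in the base layer, odd-indexed in enhancement."""
    return frames[0::2], frames[1::2]


def multiplex(base, enhancement):
    """Interleave decoded layers back into display order."""
    out = []
    for i, frame in enumerate(base):
        out.append(frame)
        if i < len(enhancement):
            out.append(enhancement[i])
    return out


frames = list(range(7))                 # stand-ins for decoded pictures
base, enhancement = split_temporal_layers(frames)
print(multiplex(base, enhancement))     # [0, 1, 2, 3, 4, 5, 6]
print(multiplex(base, []))              # [0, 2, 4, 6]
```

A receiver that gets only the base layer still obtains a watchable video at half the frame rate, which is what makes the layered stream suitable for prioritized transmission.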

MPEG-1 and MPEG-2 use the same family of audio codecs: Layer-1, Layer-2, and Layer-3. The new audio features of MPEG-2 use lower sampling rates in Layer-3 to address low-bit rate applications with limited bandwidth requirements (the bit rates extend down to 8 kbps). Furthermore, a multichannel extension is proposed for sound applications with up to five main audio channels (left, center, right, left surround, right surround).

A non-ISO extension, called MPEG 2.5, was developed by the Fraunhofer Institute to improve the performance of MPEG-2 Audio Layer-3 at lower bit rates. This extension allows sampling rates of 8, 11.025, and 12 kHz, which are half of those used in MPEG-2. Lowering the sampling rate reduces the frequency response but allows the frequency resolution to be increased, so that the result has significantly better quality.

The name of the popular MP3 file format is an abbreviation that covers MPEG-1/2 Layer-3 and MPEG 2.5. It uses the MPEG 2.5 codec for small bit rates (<24 kbps). For bit rates higher than 24 kbps, it uses the MPEG-2 Layer-3 codec.

A comprehensive comparison of MPEG-2 Layer-3, MPEG 2.5, MP3, and AAC (see MPEG-4 below) is given in the MP3 overview by Brandenburg. [30]

MPEG-4 [31], [32], [33] (issued in 1999) is the newest video coding standard by MPEG and moves from a purely pixel-based approach, that is, from coding the raw signal, to an object-based approach. It uses segmentation and a more advanced scheme of description. Object coding was implemented for the first time in JPEG-2000 [34], [35] (issued in 2000) and MPEG-4. [36], [37], [38] Indeed, MPEG-4 is primarily a toolbox of advanced compression algorithms for audiovisual information, and in addition, it is suitable for a variety of display devices and networks, including low-bit rate mobile networks. MPEG-4 organizes its tools into the following eight parts:

- ISO-IEC 14496-1 (systems)
- ISO-IEC 14496-2 (visual)
- ISO-IEC 14496-3 (audio)
- ISO-IEC 14496-4 (conformance)
- ISO-IEC 14496-5 (reference software)
- ISO-IEC 14496-6 (delivery multimedia integration framework)
- ISO-IEC 14496-7 (optimized software for MPEG-4 tools)
- ISO-IEC 14496-8 (carriage of MPEG-4 contents over Internet protocol networks).

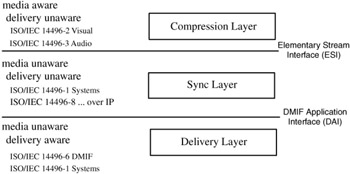

These tools are organized in a hierarchical manner and operate on different interfaces. Exhibit 1.7 illustrates this organization model, which comprises the compression layer, the sync layer, and the delivery layer. The compression layer is media aware and delivery unaware, the sync layer is media unaware and delivery unaware, and the delivery layer is media unaware and delivery aware.

Exhibit 1.7: General organization of MPEG-4.

The compression layer performs media encoding and decoding of elementary streams, the sync layer manages elementary streams and their synchronization and hierarchical relations, and the delivery layer ensures transparent access to MPEG-4 content irrespective of the delivery technology used. The following paragraphs briefly describe the main features supported by the MPEG-4 tools. Note that not all the features of the MPEG-4 toolbox will be implemented in a single application. Which features are implemented is determined by levels and profiles, to be discussed below.

MPEG-4 is object oriented:[39] An MPEG-4 video is a composition of a number of stream objects that build together a complex scene. The temporal and spatial dependencies between the objects have to be described with a description following the BIFS (binary format for scene description).

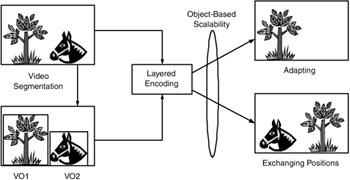

MPEG-4 Systems provides the functionality to merge the objects (natural or synthetic) and render the product in a single scene. Elementary streams can be adapted (e.g., one object is dropped) or mixed with stored and streaming media. MPEG-4 allows for the creation of a scene consisting of objects originating from different locations. Exhibit 1.8 shows an application example of the object-scalability provided in MPEG-4. The objects that are representing videos are called video objects, or VOs. They may be combined with other VOs (or audio objects and three-dimensional objects) to form a complex scene. One possible scalability option is object dropping, which may be used for adaptation purposes at the server or in the Internet. Another option is the changing of objects within a scene; for instance, transposing the positions of the two VOs in Exhibit 1.8. In addition, MPEG-4 defines the MP4 file format. This format is extremely flexible and extensible. It allows the management, exchange, authoring, and presentation of MPEG-4 media applications.

Exhibit 1.8: Object-based scalability in MPEG-4.
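The two scalability options mentioned above, object dropping and changing objects within a scene, can be sketched on a toy scene model. This is only an illustration of the idea, not the BIFS scene description; the `SceneObject` class, its fields, and the object names are hypothetical.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class SceneObject:
    name: str
    kind: str          # "video", "audio", or "3d"
    position: tuple    # (x, y) placement within the scene


def drop_object(scene, name):
    """Adapt a scene by leaving out one object."""
    return [obj for obj in scene if obj.name != name]


def swap_positions(scene, first, second):
    """Transpose the positions of two named objects in the scene."""
    positions = {obj.name: obj.position for obj in scene}
    swapped = {first: positions[second], second: positions[first]}
    return [replace(obj, position=swapped.get(obj.name, obj.position))
            for obj in scene]


scene = [
    SceneObject("vo1", "video", (0, 0)),
    SceneObject("vo2", "video", (320, 0)),
    SceneObject("speech", "audio", (0, 0)),
]
print([obj.name for obj in drop_object(scene, "vo2")])  # ['vo1', 'speech']
print(swap_positions(scene, "vo1", "vo2")[0].position)  # (320, 0)
```

Because each object is a separate stream, both operations leave the remaining objects untouched and need no re-encoding of the scene as a whole.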

MPEG-4 Systems also provides basic support to protect and manage content. This functionality has since been moved into the MPEG-21 intellectual property management and protection (IPMP) framework (MPEG-21, Part 4).

MPEG-4 Visual provides a coding algorithm that is able to produce usable media at a rate of 5 kbps and QCIF (quarter common intermediate format) resolution (176 × 144 pixels). This makes motion video possible on mobile devices. MPEG-4 Visual describes more than 30 profiles that define different combinations of scene resolution, bit rate, audio quality, and so forth to accommodate the different needs of different applications. To mention just two example profiles, there is the simple profile, which provides the most basic audio and video at a bit rate that scales down to 5 kbps. This profile is extremely stable and is designed to operate in exactly the same manner on all MPEG-4 decoders. The studio profile, in contrast, is used for applications involved with digital theater and is capable of bit rates in the 1 Gbps (billions of bits per second) range. Of note is that the popular DivX codec (http://www.divx-digest.com/) is based on the MPEG-4 visual coding using the simple scalable profile.

Fine granular scalability mode allows the delivery of the same MPEG-4 content at different bit rates. MPEG-4 Visual has the ability to take content intended to be experienced with a high-bandwidth connection, extract a subset of the original stream, and deliver usable content to a personal digital assistant or other mobile devices. Error correction, built into the standard, allows users to switch to lower-bit rate streams if connection degradation occurs because of lossy wireless networks or congestion. Objects in a scene may also be assigned a priority level that defines which objects will or will not be viewed during network congestion or other delivery problems.
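Priority-based delivery under congestion can be sketched as a selection problem: keep the most important objects that still fit into the available bit rate. The greedy strategy, the convention that a lower number means higher priority, and the object names and rates are all assumptions made for this example; the standard defines the priority signaling, not this selection policy.

```python
def select_objects(objects, budget_kbps):
    """Pick objects in priority order while they fit into the bit-rate budget.

    objects: list of (name, priority, kbps); lower priority value = more
    important (an assumption of this sketch).
    """
    kept, used = [], 0
    for name, _priority, kbps in sorted(objects, key=lambda obj: obj[1]):
        if used + kbps <= budget_kbps:
            kept.append(name)
            used += kbps
    return kept


objects = [
    ("background", 3, 300),
    ("speaker", 1, 200),
    ("audio", 0, 64),
    ("logo", 2, 20),
]
print(select_objects(objects, 1000))  # ['audio', 'speaker', 'logo', 'background']
print(select_objects(objects, 300))   # ['audio', 'speaker', 'logo']
```

With the smaller budget the background object is the one left out, so the viewer keeps the speech and the speaker even when the connection degrades.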

MPEG-4 Audio offers tools for natural and synthetic audio coding. The compression is so efficient that good speech quality is achieved at 2 kbps. Synthetic music and sound are created from a rich toolset called Structured Audio. Each MPEG-4 decoder has the ability to create and process this synthetic audio, making the sound quality uniform across all MPEG-4 decoders.

In this context, MPEG introduced the advanced audio coding (AAC), which is a new audio coding family based on a psychoacoustics model. Sometimes referred to as MP4, which is misleading because it coincides with the MPEG-4 file format, AAC provides significantly better quality at lower bit rates than MP3. AAC was developed under MPEG-2 and was improved for MPEG-4. Additional tools in MPEG-4 increase the effectiveness of MPEG-2 AAC at lower bit rates and add scalability and error resilience characteristics.

AAC supports a wider range of sampling rates (from 8 to 96 kHz) and up to 48 audio channels and is, thus, more powerful than MP3. Three profiles of AAC provide varying levels of complexity and scalability. MPEG-4 AAC is, therefore, designed as a high-quality general audio codec for 3G (third-generation) wireless terminals. However, compared with MP3, AAC software is much more expensive to license, because the companies that hold related patents decided to keep a tighter rein on it.

The last component proposed is the Delivery Multimedia Integration Framework (DMIF). This framework provides abstraction from the transport protocol (network, broadcast, etc.) and has the ability to identify delivery systems with a different QoS. DMIF also abstracts the application from the delivery type (mobile device versus wired) and handles the control interface and signaling mechanisms of the delivery system. The framework specification makes it possible to write MPEG-4 applications without in-depth knowledge of delivery systems or protocols.

1.3.1.1 Related Video Standards and Joint Efforts.

There exist a handful of other video standards and codecs. [40] Cinepak (http://www.cinepak.com/text.html) was developed by CTI (Compression Technologies, Inc.) to deliver high-quality compressed digital movies for the Internet and game environments.

RealNetworks (http://www.real.com) has developed codecs for audiovisual streaming applications. The codec reduces the spatial resolution and analyzes whether each frame contributes to motion and shapes, dropping the frame if it does not. Priority is given to the encoding of the audio; that is, audio tracks are encoded first and then the video track is added. If network congestion occurs, the audio takes priority and the picture just drops a few frames to keep up. The Sure Stream feature allows the developer to create up to eight versions of the audio and video tracks. If there is network congestion during streaming, Real Player and the Real Server switch between versions to maintain image quality.
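Switching between pre-encoded versions of a stream can be sketched as picking the highest-rate version that fits the currently measured bandwidth. This is a generic illustration, not RealNetworks' actual switching logic; the function name, the fallback to the lowest-rate version, and the example bit rates are assumptions.

```python
def pick_version(version_kbps, bandwidth_kbps):
    """Choose the highest-rate version that fits the measured bandwidth.

    Falls back to the lowest-rate version if none fits (an assumption of
    this sketch).
    """
    fitting = [rate for rate in version_kbps if rate <= bandwidth_kbps]
    return max(fitting) if fitting else min(version_kbps)


versions = [28, 56, 112, 225, 450]  # hypothetical encodings in kbps
print(pick_version(versions, 300))  # 225
print(pick_version(versions, 60))   # 56
print(pick_version(versions, 10))   # 28
```

Calling such a function whenever the bandwidth estimate changes gives the player a simple way to step down under congestion and step back up when the network recovers.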

For broadcast applications, especially video conferencing, the ITU-T (http://www.itu.int/ITU-T/) standardized the H.261 (first version in 1990) and H.263 (first version in 1995) codecs. H.261 was designed to work at bit rates that are multiples of 64 kbps (adapted to Integrated Services Digital Network connections). The H.261 coding algorithm is a hybrid of interframe prediction, transform coding, and motion compensation. Interframe prediction removes temporal redundancy. Transform coding removes spatial redundancy. Motion vectors are used to help the codec compensate for motion. To remove any further redundancy in the transmitted stream, variable-length coding is used. The coding algorithm of H.263 is similar to that of H.261; however, it was improved with respect to performance and error recovery. H.263 uses half-pixel precision for motion compensation, whereas H.261 uses full-pixel precision and a loop filter. Some parts of the hierarchical structure of the stream are optional in H.263, so the codec can be configured for a lower bit rate or better error recovery. Several negotiable options are included to improve performance: unrestricted motion vectors, syntax-based arithmetic coding, advanced prediction, and forward and backward frame prediction, which is a generalization of the concepts introduced in MPEG's P- and B-frames.
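Half-pixel precision means that a motion vector may point between two pixels of the reference frame, so the predicted value has to be interpolated. The sketch below uses plain bilinear averaging with coordinates given in half-pixel units; it is a simplified illustration that returns floating-point values, whereas the standard specifies integer arithmetic with defined rounding. The function name and the tiny test frame are assumptions.

```python
def sample_half_pel(frame, y2, x2):
    """Sample a frame at a position given in half-pixel units.

    (y2, x2) = (2, 3) means row 1, column 1.5. Positions between pixels
    are interpolated by averaging the neighboring pixels.
    """
    y0, x0 = y2 // 2, x2 // 2
    y1 = y0 + (y2 % 2)   # equals y0 for a full-pixel row
    x1 = x0 + (x2 % 2)   # equals x0 for a full-pixel column
    total = frame[y0][x0] + frame[y0][x1] + frame[y1][x0] + frame[y1][x1]
    return total / 4


frame = [[10, 20],
         [30, 40]]
print(sample_half_pel(frame, 0, 0))  # 10.0 (full-pixel position)
print(sample_half_pel(frame, 0, 1))  # 15.0 (halfway between 10 and 20)
print(sample_half_pel(frame, 1, 1))  # 25.0 (center of all four pixels)
```

With full-pixel precision only the first of these three positions would be available to the motion search, which is why the finer grid tends to give better predictions.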

MPEG and ITU-T launched the Joint Video Team in December 2001 to establish a new video coding standard. The new standard, named ISO-IEC MPEG-4 Advanced Video Coding (AVC, Part 10 of MPEG-4)/ITU-T H.264, offers significant bit-rate and quality advantages over the previous ITU/MPEG standards. To improve coding efficiency, the macroblock (see Exhibit 1.3) is broken down into smaller blocks that attempt to contain and isolate the motion. Quantization as well as entropy coding was improved. The standard is available as of autumn 2003. More technical information on MPEG-4 AVC may be found at http://www.islogic.com/products/islands/h264.html.

In addition, many different video file formats exist that are used together with a given codec. For instance, Microsoft introduced a standard for incorporating digital video under Windows with the file format called AVI (Audio Video Interleaved). The AVI format merely defines how the video and audio are stored, not how they have to be encoded.

1.3.2 Multimedia Metadata

Metadata describing a multimedia resource, such as an audio or video stream, can be seen from various perspectives, based on who produces or provides the metadata:

- From the content producer's perspective, typical metadata are bibliographical information about the resource, such as author, title, creation date, resource format, and so forth.
- From the perspective of the service providers, metadata are typically value-added descriptions (mostly in XML format) that qualify information needed for retrieval. These data include the various formats in which a resource is available and semantic information, such as the players of a soccer game. This information is necessary to enable searching with an acceptable precision in multimedia applications.
- From the perspective of the media consumer, additional metadata describing the consumer's preferences and resource availability are useful. These metadata personalize content consumption and have to be considered by the producer. Additional metadata are necessary for delivery over the Internet or mobile networks to guarantee access to the best possible content; for example, metadata describing the adaptation of the video are required when the available bandwidth decreases. One issue that needs to be addressed is whether or not an adaptation process is acceptable to the user.

To describe metadata, various research projects have developed sets of elements to facilitate the retrieval of multimedia resources. [41] Initiatives that appear likely to develop into widely used and general standards for Internet multimedia resources are the Dublin Core Standard, the Metadata Dictionary SMPTE (Society of Motion Picture and Television Engineers), MPEG-7, and MPEG-21. These four standards are general; that is, they do not target a particular industry or application domain and are supported by well-recognized organizations.

Dublin Core [42] is a Resource Description Framework-based standard that represents a metadata element set intended to facilitate the discovery of electronic resources. Many papers have discussed the applicability of Dublin Core to nontextual documents such as images, audio, and video. They have primarily focused on extensions to the core elements through the use of subelements and schemes specific to audiovisual data. The core elements are title, creator, subject, description, publisher, contributor, date, type, format, identifier, source, language, relation, coverage, and rights. Dublin Core is currently used as a metadata standard in many television archives.
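A Dublin Core description of a video resource can be sketched as a handful of element/value pairs serialized to XML. The record values, the wrapping `metadata` element, and the function name are hypothetical; the namespace URI is the one used for the Dublin Core element set, version 1.1.

```python
import xml.etree.ElementTree as ET

DC_NS = "http://purl.org/dc/elements/1.1/"


def dublin_core_xml(record):
    """Serialize a dict of Dublin Core elements to a small XML document."""
    ET.register_namespace("dc", DC_NS)
    root = ET.Element("metadata")  # hypothetical wrapper element
    for element, value in record.items():
        child = ET.SubElement(root, f"{{{DC_NS}}}{element}")
        child.text = value
    return ET.tostring(root, encoding="unicode")


# Hypothetical record for a soccer match recording.
record = {
    "title": "Cup final, second half",
    "creator": "Example Broadcasting",
    "date": "2003-05-31",
    "type": "MovingImage",
    "format": "video/mpeg",
    "language": "en",
}
print(dublin_core_xml(record))
```

Such a record covers the bibliographical view of the resource well, but it says nothing about what happens inside the video, which is where qualifiers and audiovisual extensions come in.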

In this context, the SMPTE Metadata Dictionary should also be mentioned. The dictionary is a large collection of registered names and data types, developed mostly for the television and video industries that form the SMPTE membership. Its hierarchical structure allows expansion and mechanisms for data formatting in television and video signals and provides a common method of implementation. Most metadata are media-specific attributes, such as timing information. Semantic annotation is, however, not possible. The SMPTE Web site contains the standards documents (http://www.smpte.org/).

MPEG-7 [43] is an Extensible Markup Language (XML)-based multimedia metadata standard that proposes description elements for the multimedia processing cycle from capture (e.g., logging descriptors), to analysis and filtering (e.g., descriptors of the MDS [Multimedia Description Schemes]), to delivery (e.g., media variation descriptors), and to interaction (e.g., user preference descriptors). MPEG-7 may, therefore, describe the metadata flow in multimedia applications more adequately than the Dublin Core Standard. There have been several attempts to extend the Dublin Core Standard to describe the multimedia processing cycle. Hunter et al. [44], [45] showed that it is possible to describe both the structure and fine-grained details of video content by using the Dublin Core elements plus qualifiers. The disadvantage of this approach is that the semantic refinement of the Dublin Core through the use of qualifiers may lead to a loss of semantic interoperability. Another advantage of MPEG-7 is that it offers a Systems part that allows coding of descriptions (including compression) for streaming and for associating parts of MPEG-7 descriptions with the media units they describe. MPEG-7 is of major importance for content-based multimedia systems. Detailed information on the practical usage of this standard is given in Chapter 2.

Despite the very complete and detailed set of multimedia metadata descriptions proposed in MPEG-7, the organization of the infrastructure of a distributed multimedia system cannot be described with metadata alone. Therefore, the new MPEG-21 [46] standard was initiated in 2000 to provide mechanisms for distributed multimedia systems design and associated services. A new distribution entity is proposed and validated: the Digital Item. It is used for interaction with all actors (called users in MPEG-21) in a distributed multimedia system. In particular, content management, Intellectual Property Management and Protection (IPMP), and content adaptation shall be regulated to handle different service classes. MPEG-21 shall result in an open framework for multimedia delivery and consumption, with a vision of providing content creators and service providers with equal opportunities in an open electronic market. MPEG-21 is detailed in Chapter 3.

Finally, note that in addition to MPEG with MPEG-7 and MPEG-21, several other consortia have created metadata schemes that describe the context, presentation, and encoding format of multimedia resources. Each of them mainly addresses a partial aspect of the use of metadata in a distributed multimedia system. Broadly used standards are:

- W3C (World Wide Web Consortium) has built the Resource Description Framework-based Composite Capabilities/Preference Profiles (CC/PP) protocol.[47] The WAP Forum has used the CC/PP to define a User Agent Profile (UAProf) which describes the characteristics of WAP-enabled devices.
- W3C introduced the Synchronized Multimedia Integration Language (SMIL, pronounced "smile")[48] which enables simple authoring of interactive audiovisual presentations.
- IETF [49] (Internet Engineering Task Force) has created the Protocol-Independent Content Negotiation Protocol (CONNEG), which was released in 1999.

These standards relate to different parts of MPEG: for instance, CC/PP relates to the MPEG-21 Digital Item Adaptation Usage Environment, CONNEG from IETF relates to event reporting in MPEG-21, and parts of SMIL relate to the MPEG-21 Digital Item Adaptation, whereas other parts relate to MPEG-4. Thus, MPEG has integrated the main concepts of standards in use and, in addition, lets these concepts work cooperatively in a multimedia framework.

[27]Steinmetz, R., Multimedia Technology, 2nd ed., Springer-Verlag, Heidelberg, 2000.

[28]Chiariglione, L., Short MPEG-1 description (final). ISO/IEC JTC1/SC29/WG11 N MPEG96, June 1996, http://www.chiariglione.org/mpeg/.

[29]Chiariglione, L., Short MPEG-2 description (final). ISO/IEC JTC1/SC29/WG11 N MPEG00, October 2000, http://www.chiariglione.org/mpeg/.

[30]Brandenburg, K., MP3 and AAC explained, in Proceedings of the 17th AES International Conference on High Quality Audio Coding, Florence/Italy, 1999.

[31]Koenen, R., MPEG-4 overview. ISO/IEC JTC1/SC29/WG11 N4668, (Jeju Meeting) March 2002, http://www.chiariglione.org/mpeg/.

[32]Pereira, F., Tutorial issue on the MPEG-4 standard, Image Comm., 15, 2000.

[33]Ebrahimi, T. and Pereira, F., The MPEG-4 Book, Prentice-Hall, Englewood Cliffs, NJ, 2002.

[34]Bouras, C., Kapoulas, V., Miras, D., Ouzounis, V., Spirakis, P., and Tatakis, A., On-demand hypermedia/multimedia service using pre-orchestrated scenarios over the Internet, Networking Inf. Syst. J., 2, 741–762, 1999.

[35]Chiariglione, L., Short MPEG-1 description (final). ISO/IEC JTC1/SC29/WG11 N MPEG96, June 1996, http://www.chiariglione.org/mpeg/.

[36]Koenen, R., MPEG-4 overview. ISO/IEC JTC1/SC29/WG11 N4668, (Jeju Meeting) March 2002, http://www.chiariglione.org/mpeg/.

[37]Pereira, F., Tutorial issue on the MPEG-4 standard, Image Comm., 15, 2000.

[38]Ebrahimi, T. and Pereira, F., The MPEG-4 Book, Prentice-Hall, Englewood Cliffs, NJ, 2002.

[39]Not in the sense of object-oriented programming or modeling thoughts.

[40]Gecsei, J., Adaptation in distributed multimedia systems, IEEE MultiMedia, 4, 58–66, 1997.

[41]Hunter, J. and Armstrong, L., A comparison of schemas for video metadata representation. Comput. Networks, 31, 1431–1451, 1999.

[42]Dublin Core Metadata Initiative, Dublin core metadata element set, version 1.1: Reference description, http://www.dublincore.org/documents/dces/, 1997.

[43]Martínez, J.M., Overview of the MPEG-7 standard. ISO/IEC JTC1/SC29/WG11 N4980 (Klagenfurt Meeting), July 2002, http://www.chiariglione.org/mpeg/.

[44]Dublin Core Metadata Initiative, Dublin core metadata element set, version 1.1: Reference description, http://www.dublincore.org/documents/dces/, 1997.

[45]Hunter, J., A proposal for the Integration of Dublin Core and MPEG-7, ISO/IEC JTC1/SC29/WG11 M6500, 54th MPEG Meeting, La Baule, October 2000, http://archive.dstc.edu.au/RDU/staff/jane-hunter/m6500.zip.

[46]Hill, K. and Bormans, J., Overview of the MPEG-21 Standard. ISO/IEC JTC1/SC29/WG11 N4041 (Shanghai Meeting), October 2002, http://www.chiariglione.org/mpeg/.

[47]http://www.w3.org/Mobile/CCPP/.

[48]http://www.w3.org/AudioVideo/.

[49]http://www.imc.org/ietf-medfree/index.html.
