Map Work-Flow Processes

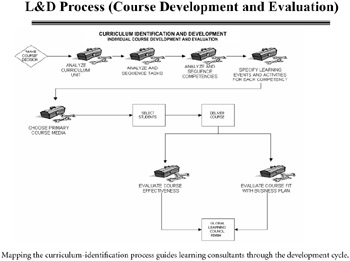

With the work processes and roles defined, we can map the flow of the curriculum design and development value streams, charting in detail the method our team will follow to create or purchase content (see Figure 4-6). The work-flow process should include roles and responsibilities, action items, the time needed to complete each task, and the metrics by which the success of each step will be measured. It is an overarching map of the entire course-development process, from the kick-off meeting to delivery of the product.

Figure 4-6: Curriculum Identification Work-Flow Process.

Built to Learn: The Inside Story of How Rockwell Collins Became a True Learning Organization

ISBN: 0814407722

Year: 2003

Pages: 124
