The Building Blocks of Security

The division of information security into logical components makes it easier to understand, and therefore easier to deploy. These logical components are confidentiality, authentication, authorization, integrity, nonrepudiation, privacy, and availability. These are the check boxes that need to be kept in mind when designing a secure system. If only some of the boxes are checked, security loopholes exist.

Confidentiality

We’ve seen that data in a networked system is either in transit or at rest. In the world of information security, the term confidentiality is used to refer to the requirement for data in transit between two communicating parties not to be available to third parties that may try to snoop on the communication. There are two broad approaches to confidentiality. One approach is to use a private connection between the two parties— either a dedicated line or a virtual private network (VPN). Another approach, used when data is being sent over an untrusted network such as the Internet, is to use encryption.

Introducing Encryption

In the public mind, encryption is often seen as synonymous with computer security. It would be easy to write an introduction to computer security that focused only on encryption. This heightened public awareness of encryption is due in part to the success of SSL (Secure Sockets Layer) for business-to-consumer (B2C) e-commerce. One of the features of SSL is the encryption of data as it passes between a Web browser and a Web server. The Padlock icon on Web browsers provides a neat graphical reminder that encryption is occurring (it also indicates that the Web site was authenticated; we’ll see what that means later). It indicates that eavesdroppers cannot read the data being submitted to a Web site, or requested from a Web site.

Encryption is undoubtedly important, both for confidentiality and as the basis of other security technologies such as digital signatures. However, it is not a kind of magical fairy dust that can be added to a system in order to make it secure. Remember, it is just one of the components of security. A Web-based system is not “secure” simply because it uses SSL for confidentiality; the other security check boxes mentioned earlier must also be ticked.

| Tip | Don’t think of “security” and “cryptography” as synonymous. Much security does not depend on encryption technologies, but rather on content-filtering, caution, and plain common sense. Adding encryption doesn’t make a system “secure” if encrypted traffic can access a system which is not supposed to be publicly available. |

The Terminology of Encryption

Cryptography is a branch of mathematics, and as such it includes a lot of specialized terminology. A number of pieces of encryption terminology are required to understand this book. In the terminology of encryption, plaintext refers to unencrypted data, while ciphertext refers to encrypted data. An encryption algorithm is used to take plaintext and convert it into ciphertext. This algorithm is a combination of mathematical functions that jumble the plaintext, making ciphertext.

However, the algorithm alone isn’t enough to perform the encryption operation. If that were the case, then as soon as the details of the algorithm were discovered, the ciphertext could be decrypted. The answer to this problem might seem obvious: just keep the algorithm secret. This approach is called “security through obscurity” and does not hold much water, because an algorithm that is kept secret, and so is never examined by the world’s cryptography experts, is likely to have security flaws. In addition, an imposter who obtained a copy of the algorithm (for example, in a compiled cryptography toolkit) could use it to decrypt data even if its inner workings, such as the source code, remained secret. Therefore, algorithms have keys, which add a variable element that is known only to the parties who are exchanging confidential data. This means that the algorithm can be public, subject to examination by cryptography experts, but relies on a secret key each time it is used. The key is fed into the algorithm, along with the plaintext, and ciphertext is created.

| Tip | Avoiding “Security through obscurity” goes further than the avoidance of obscure and secret encryption algorithms. It also extends to practices like using obscure, hard-to-guess names for resources. These are all to be avoided. |

An initialization vector is used to add another element of variability to the encryption. This is required because if two parties agree on an algorithm and on a key, but don’t use an initialization vector, then identical source data will always produce identical ciphertext. For example, if the message “Yes, I can do that” happens to be repeated, then the ciphertext will be the same each time, and that is a security risk because an eavesdropper can tell that a message has been repeated without decrypting it. We will see in the chapter on XML Encryption (Chapter 4) how initialization vectors (IVs), keys, and encrypted keys are expressed in XML format.
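The effect of an initialization vector can be sketched in a few lines of Python. The cipher below is a deliberately simple toy (a SHA-256 keystream XORed with the plaintext), chosen only because it needs nothing beyond the standard library; it is not a real encryption algorithm, and the names `keystream` and `toy_encrypt` are illustrative assumptions.

```python
import hashlib
import os


def keystream(key: bytes, iv: bytes, length: int) -> bytes:
    """Toy keystream: SHA-256 over key + IV + block counter (NOT a real cipher)."""
    out = b""
    counter = 0
    while len(out) < length:
        out += hashlib.sha256(key + iv + counter.to_bytes(4, "big")).digest()
        counter += 1
    return out[:length]


def toy_encrypt(key: bytes, iv: bytes, plaintext: bytes) -> bytes:
    """XOR the plaintext with the keystream; calling it again decrypts."""
    stream = keystream(key, iv, len(plaintext))
    return bytes(p ^ s for p, s in zip(plaintext, stream))


key = b"shared-secret-key"
message = b"Yes, I can do that"

# Same key, no varying IV: the repeated message yields a repeated ciphertext.
fixed_iv = b""
c1 = toy_encrypt(key, fixed_iv, message)
c2 = toy_encrypt(key, fixed_iv, message)
print("without IV, ciphertexts match:", c1 == c2)

# Same key, fresh random IV per message: the ciphertexts differ.
iv_a, iv_b = os.urandom(16), os.urandom(16)
c3 = toy_encrypt(key, iv_a, message)
c4 = toy_encrypt(key, iv_b, message)
print("with IVs, ciphertexts match:", c3 == c4)

# The IV is not secret; the recipient needs it (plus the key) to decrypt.
print("round trip ok:", toy_encrypt(key, iv_a, c3) == message)
```

The IV travels with the ciphertext in the clear; only the key has to remain secret.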

Cryptanalysis is the name given to the examination of a cryptographic system with a view to discovering the decryption key. In cryptanalysis, other aspects of the system may be known—the plaintext and the ciphertext, and the encryption algorithm itself— but the key is secret. If the key can be found, then other ciphertext encrypted using the same key can be decrypted.

It is important to realize that the use of strong encryption does not provide absolute certainty that encrypted data will not be decrypted and read. All strong cryptography can do is to make it extremely difficult to decrypt the data. In practice, this means that the timescale and computing power required to break the encryption make the exercise unattractive. Since cryptanalysis of the encryption algorithms in popular use has shown that they do not have known “backdoors” for discovering the key, the attacker is limited to a “brute force” attack. This means guesswork (repeatedly attempting to decrypt the data using a series of decryption keys), which takes time. If it is known that the decryption key is eight characters long, and each character can be any 8-bit ASCII character, there are more than 10^19 possible keys to try (256^8, which is 2^64). The number of possible keys is known as the keyspace. On average, a brute force attacker will have to traverse through half of the keyspace before they get lucky and find the decryption key. The time required to traverse through the keyspace can be reduced by using dedicated hardware or multiple machines, but this costs money. A “back-of-the-envelope” calculation can be used to find out whether the cost of breaking the encryption is greater than the value of the data itself. If it is, then that means that a potential attacker may not bother attempting a brute force attack on the encrypted data.
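The back-of-the-envelope calculation can be written out directly. In this sketch the guessing rate of one billion keys per second is purely an assumption for illustration; substitute whatever rate matches the attacker being modeled.

```python
KEY_LENGTH = 8            # characters in the key
VALUES_PER_CHAR = 256     # any 8-bit value per character
keyspace = VALUES_PER_CHAR ** KEY_LENGTH   # 256**8 == 2**64

GUESSES_PER_SECOND = 10**9                 # assumed attacker speed (hypothetical)
SECONDS_PER_YEAR = 365 * 24 * 60 * 60


def average_years_to_break(keyspace: int, guesses_per_second: int) -> float:
    """On average, half of the keyspace is searched before the key is found."""
    return (keyspace / 2) / guesses_per_second / SECONDS_PER_YEAR


years = average_years_to_break(keyspace, GUESSES_PER_SECOND)
print(f"keyspace: {keyspace:,} keys")
print(f"average time at {GUESSES_PER_SECOND:,} guesses/s: {years:.0f} years")
```

Doubling the hardware halves the time, which is why the comparison that matters is the cost of the attack against the value of the data.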

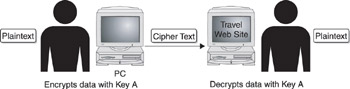

There are two types of encryption in general use for Web Services security: symmetric encryption and asymmetric encryption. In symmetric encryption, the decryption key is the same as the encryption key, as shown here. In asymmetric encryption, the decryption key is not the same as the encryption key. Let’s look at both of these in turn.

Symmetric Encryption

Symmetric encryption involves the use of the same key for encryption as for decryption. Looking at the preceding illustration, we can see why it is known as “symmetric” encryption—the illustration is symmetric horizontally because encryption and decryption use the same key. Examples of symmetric key algorithms are DES (Data Encryption Standard), which has been in use for over 20 years as a recommendation of the U.S. government, and AES (Advanced Encryption Standard), which replaces DES.

Because in symmetric encryption the one-and-only key is also used for decryption, it must be kept secret. Otherwise, the confidentiality requirement isn’t met. If a brute force attack is successful and the key becomes known, all ciphertext encrypted using that key can be decrypted. This is important for Web Services because we are dealing with information in transit. The data is being sent to a recipient, and since it is encrypted, it is confidential. The problem, therefore, is how to send the key safely to the recipient of the data so that only that recipient can read the data. It is obvious that the key cannot be sent in the clear with the data, because that would defeat the purpose of using encryption in the first place. (If the key could be “sniffed” as it is sent to the recipient, there would be no need for a brute force attack at all.)

There are two options for sending the key. Option one is to use a separate communication channel to send the key. For example, the encryption key could be communicated to the recipient over the phone. This is known as sending the key “out of band.” This is feasible for infrequent communications since a DES key, for example, is only eight characters long and could be conveyed in a phone call. However, for Web Services security, this approach is out of the question because the overhead of sending the key out of band would cancel out the integration advantages offered by a service-oriented architecture. All the advantages of publish, find, and bind that we discussed in Chapter 1 would be lost. Also, since Web Services are software talking to other software, the concept of a human being “picking up the phone” isn’t applicable. Of course, the out-of-band communication does not have to be a phone call, and can be another method. However, unless it is a trusted channel, it cannot be guaranteed that the key won’t be “sniffed.”

The second option is to encrypt the key itself, which means that it can be sent using the same communications channel (even in the same message) as the encrypted document. However, if the key is also encrypted using symmetric encryption, we’ve just introduced the problem of how to send the new key that is used to decrypt the first key—and we are back to square one. We’ll see in the next section how asymmetric encryption solves this problem.

Asymmetric Encryption

In the following illustration, we can see how asymmetric encryption gets its name. Two different keys are used so that the diagram is not horizontally symmetric. This may seem counterintuitive. How can one key be used to create ciphertext from plaintext, and then a completely different key be used to convert the ciphertext back into the original plaintext? The answer to this question involves some fairly complex mathematics. The most famous and widely used asymmetric algorithm is the RSA algorithm (named after its creators: Rivest, Shamir, and Adleman), which uses prime number arithmetic. Luckily, we don’t have to concern ourselves with mathematics in this chapter, and so we are more interested in the overall reason why it exists. Asymmetric encryption removes the need to send the decryption key to the recipient of encrypted data.

Recall that we suggested encrypting the symmetric key and sending it to the intended reader of the data, but this seemed to land us back at square one – how is the key itself encrypted? Actually, that problem applies only if symmetric encryption is used to encrypt the key. If asymmetric encryption is used to encrypt the key, the only requirement is that the reader of the data holds the key to be used to decrypt the data—that is, the corresponding key of the key pair. It is this second key that must be kept private; hence the term “private key.” Notice that we don’t call it a “decryption key.” That is because it can be used for more than decryption, as we’ll see later when we discuss digital signatures. Similarly, the key that is used for encryption is not only an “encryption key,” but can also be used in the context of digital signatures. Therefore, the term “public key” is more appropriate for this key, because the key should be available to any party that is encrypting data to be sent to the holder of the private key.

At this stage, some questions might present themselves. These are:

- Since asymmetric encryption is such a good solution for encrypting a key, why not use it for encrypting all the data and not bother using symmetric encryption at all?
- How does the reader of the data get their private key in the first place; how could such a sensitive item be sent to them?
- How does the entity encrypting the data know that they are using the appropriate public key?

The answer to the first question is easy. Symmetric encryption is much faster than asymmetric encryption, so it makes sense to use symmetric encryption to encrypt the data and only use the slower asymmetric encryption to encrypt the key itself. The answer to the second question is also relatively straightforward. The private key is generated by the reader of the data on their computer, and it never leaves their computer. Better still: the private key can be generated on a hardware token such as a smartcard, and never leave the smartcard. The answer to the third question is slightly more complex. We’ll see in the nonrepudiation section that public keys are rarely distributed alone. They are contained in something called a “digital certificate.” A public key alone does not contain identity information, but a digital certificate does. Digital certificates ensure that the correct public key is used for encryption.
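The combination described in the first answer (symmetric encryption for the data, asymmetric encryption for the key) can be sketched as follows. Everything here is a toy under stated assumptions: the RSA key pair is built from two small, well-known Mersenne primes and uses no padding, and `toy_symmetric` is a stand-in XOR cipher rather than DES or AES. It shows only the flow of keys, not a usable implementation.

```python
import hashlib
import math
import os

# Toy RSA key pair from two known Mersenne primes (illustration only).
P, Q = 2**61 - 1, 2**89 - 1
N = P * Q                      # public modulus
E = 65537                      # public exponent
PHI = (P - 1) * (Q - 1)
assert math.gcd(E, PHI) == 1
D = pow(E, -1, PHI)            # private exponent (needs Python 3.8+)


def toy_symmetric(key: bytes, data: bytes) -> bytes:
    """XOR data with a SHA-256 counter keystream; the same call decrypts."""
    stream = b""
    counter = 0
    while len(stream) < len(data):
        stream += hashlib.sha256(key + counter.to_bytes(4, "big")).digest()
        counter += 1
    return bytes(d ^ s for d, s in zip(data, stream))


# Sender: encrypt the data with a fresh symmetric key, then encrypt
# ("wrap") that key with the recipient's PUBLIC key (E, N).
message = b"Quarterly figures attached."
session_key = os.urandom(16)
ciphertext = toy_symmetric(session_key, message)
wrapped_key = pow(int.from_bytes(session_key, "big"), E, N)

# Recipient: unwrap the symmetric key with the PRIVATE key (D, N),
# then decrypt the data. The private key never left the recipient.
unwrapped_key = pow(wrapped_key, D, N).to_bytes(16, "big")
recovered = toy_symmetric(unwrapped_key, ciphertext)
print("recovered original message:", recovered == message)
```

Only the short session key goes through the slow asymmetric operation; the bulk of the data goes through the fast symmetric one, and both can travel in the same message.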

This completes our discussion of confidentiality. Let’s look at the next information security requirement: integrity.

Integrity

In the field of information security, integrity has a special meaning. It does not mean that information cannot be tampered with. It means that if information is tampered with, this tampering can be detected. In an untrusted network, it may be impossible to ensure that the data is tamper-proof when it is in transit to its destination. So, knowledge about the fact that tampering has occurred is the next best thing.

Data integrity relies on mathematical algorithms known as hashing algorithms. A hashing algorithm takes a block of data as input and produces a much smaller piece of data as output. This output is sometimes called a “digest” of the data. If the data is a message, it is called a “message digest.” The digest is bound to the original data in the following way: if the original data changes, however slightly, and the hashing algorithm is rerun, the resulting digest will also be different. Examples of hashing algorithms include SHA-1.
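A short sketch using Python's standard `hashlib` module shows this binding between data and digest. The two messages are invented for illustration; SHA-1 is used because it is the algorithm named above.

```python
import hashlib

original = b"Transfer 100 dollars to account 12345"
tampered = b"Transfer 900 dollars to account 12345"   # one character changed

digest_original = hashlib.sha1(original).hexdigest()
digest_tampered = hashlib.sha1(tampered).hexdigest()

print("original digest:", digest_original)
print("tampered digest:", digest_tampered)
print("digests differ: ", digest_original != digest_tampered)

# The digest has a fixed size (160 bits for SHA-1) regardless of input size.
print("digest length in hex characters:", len(digest_original))
```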

Digital Signatures

A hashing algorithm alone cannot ensure integrity. Think about the scenario where a digest is added to the end of a message before it is sent to a recipient. An attacker who tampers with the message could simply construct a new digest and append it to the message instead. In this case, the tampering would be undetected, and so the integrity requirement would not be met.

This section follows the section on confidentiality for a reason, because encryption is also used for integrity. Specifically, asymmetric encryption is used. Recall that it isn’t appropriate to call the public key the “encryption key” or to call the private key the “decryption key,” even if those names accurately define their roles when they are used to fulfill the confidentiality requirement. The reason is that the process can be run in reverse: the private key can be used for encryption and the public key can be used for decryption. This might seem counterintuitive: if data can be decrypted using a public key, it isn’t confidential at all, is it? The answer is that only the digest of the data is encrypted using the private key, and only the holder of the private key can perform this encryption operation. The corresponding public key is then used by the reader of the data to decrypt the encrypted digest. If the hashing algorithm is rerun, and the resulting digest is found to be the same as the decrypted digest, this verifies the integrity of the data.
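The sign-and-verify sequence just described can be sketched with the same kind of toy arithmetic. The key pair below is an assumption made for illustration (two small Mersenne primes, no padding), SHA-256 is used for the digest, and the digest is reduced modulo the toy modulus so that it fits; real signature schemes differ in all of these details.

```python
import hashlib
import math

# Toy RSA key pair (illustration only; far too small for real use).
P, Q = 2**61 - 1, 2**89 - 1
N = P * Q
E = 65537                      # public exponent
PHI = (P - 1) * (Q - 1)
assert math.gcd(E, PHI) == 1
D = pow(E, -1, PHI)            # private exponent (needs Python 3.8+)


def digest_as_int(message: bytes) -> int:
    """Hash the message and reduce the digest so it fits the toy modulus."""
    return int.from_bytes(hashlib.sha256(message).digest(), "big") % N


def sign(message: bytes) -> int:
    """Encrypt the digest with the PRIVATE key: only the key holder can do this."""
    return pow(digest_as_int(message), D, N)


def verify(message: bytes, signature: int) -> bool:
    """Decrypt the signature with the PUBLIC key and compare with a fresh digest."""
    return pow(signature, E, N) == digest_as_int(message)


document = b"Pay the supplier 500 dollars"
signature = sign(document)

print("untampered document verifies:", verify(document, signature))
print("tampered document verifies:  ", verify(b"Pay the supplier 900 dollars", signature))
```

An attacker who alters the document can recompute the digest, but cannot re-encrypt it without the private key, so the check fails.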

This encrypted digest bears some similarity to a handwritten signature, so it is called a “digital signature.” However, the way in which a digital signature (that is, an encrypted digest) ensures the integrity of data is not similar to a handwritten signature, because handwritten signatures do not ensure the integrity of a signed document. For example, a ten-page paper document could be signed and one of the middle pages replaced, and the signature could not be used to indicate that anything is amiss. Therefore, it is important to understand that digital signatures are not the same as handwritten signatures.

In this book, we will concentrate on XML Signature, which is a means (but not the only means) of rendering a digital signature in XML format. PKCS#7 was an earlier specification that described how a digital signature could be rendered. Later, we will see how WS-Security allows an XML Signature to be embedded in a SOAP message.

| Tip | Don’t confuse digital signatures with other types of electronic signatures. “Electronic signature” is a loose term that is used to describe signing technologies that involve electronics. Digital signatures are a type of electronic signature. Other types of electronic signatures include stylus pads on which a person can electronically pen their handwritten signature (sometimes called a “digitized signature”). These are used by document delivery companies and at credit card payment terminals. |

Recall the third question posed at the end of the section on confidentiality. To refresh your memory, here it is again:

How does the entity encrypting the data know that they are using the appropriate public key?

For integrity, we have a similar question: How can the entity processing a digital signature be sure that they are using the appropriate public key? This question is answered in the next section.

Nonrepudiation

Nonrepudiation literally means that the originator of a message cannot claim not to have sent a given message. We saw in the preceding two sections on confidentiality and integrity that it is important that the appropriate public key is used. If the wrong public key is used for encrypting a document, or to verify a digital signature, the results can be disastrous. Any doubt about the authenticity of the public key throws integrity and confidentiality into question. A public key must be somehow bound to the identity of the party who is digitally signing the data, or decrypting the data. This binding to an identity also helps to deliver nonrepudiation. We will see in this section, however, that nonrepudiation is an elusive goal that requires a combination of technologies.

Digital Certificates

When a private key is generated, the corresponding public key is generated at the same time. Recall that the public key is used to encrypt information that is intended for the holder of the corresponding private key. If the wrong public key is used, the results are either bad or disastrous. They are bad if the intended reader cannot decrypt the data. They are disastrous if an imposter has somehow substituted his or her own public key and now holds the private key, which can be used to decrypt the data. Therefore, it is vitally important that the public key can be bound to the identity of the holder of the private key.

By looking at a public key alone, there is no way of ensuring the identity of the holder of the private key. A key is, after all, just a string of bytes. Digital certificates were invented to solve this problem. A digital certificate typically includes identity information (for example, name, location, and country) about the entity holding the corresponding private key, a serial number and expiry date, and, of course, the public key itself. The X.509 specification is used to format the information in a digital certificate. X.509 is fully extensible, meaning that any additional identity attributes can be added to the digital certificate.

Public Key Infrastructure

A digital certificate is issued by a certificate authority (CA). A digital certificate is bound to the CA that issues the certificate. This binding is performed using a digital signature, which ensures the integrity of the digital certificate. In practice, this means that the operator of the CA is vouching for the identity of the entity that is described by the digital certificate. In order to be able to vouch for this identity, an identification verification procedure must be performed. The identification verification procedure depends on the policy of the CA, and can range from a simple e-mail exchange to a face-to-face passport submission.

While a CA is used for issuing digital certificates, the identification verification is performed by a registration authority (RA). An RA and CA work in lockstep. When an identity is verified, the RA instructs the CA to issue a digital certificate. The RA may be operated by the same organization that provides the CA, or the RA function may be performed by another party such as a regional affiliate.

Once issued, a digital certificate may be required to be publicly available. If so, a popular method for publishing digital certificates is to use an X.500 directory. An X.500 directory is a hierarchical (that is, tree-like) storage mechanism. Lightweight Directory Access Protocol (LDAP) is typically used to request and retrieve X.509 certificates from an X.500 directory.

As we’ve seen, the most important part of an X.509 certificate is the public key. The public key has a corresponding private key. It is obviously important that this private key should not be available to anybody except for the entity who initially created the key pair. If this key is compromised (meaning that there is any chance at all that it has been exposed to a third party), data should no longer be encrypted with the corresponding public key. In addition, data that is digitally signed using the compromised private key should not be trusted. This is a powerful reason why it is recommended that different key pairs be used for encryption and signing.

It is vital that potential users of the corresponding public key be made aware if the private key is compromised, or is even suspected of being compromised. In this scenario, the digital certificate is revoked, meaning that the CA issuer has indicated that it is no longer valid. Information about revoked digital certificates is contained in a certificate revocation list (CRL). Like digital certificates themselves, a CRL can be stored in a directory and accessed using LDAP.
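The checks that a relying party makes before using a certificate's public key can be sketched as a small data structure and function. The field names and the representation of the CRL as a set of serial numbers are simplifying assumptions, not the X.509 encoding, and the sketch deliberately omits verification of the CA's signature on the certificate.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Certificate:
    """Simplified stand-in for an X.509 certificate (hypothetical fields)."""
    subject: str
    issuer: str
    serial_number: int
    expires: date
    public_key: bytes


def is_usable(cert: Certificate, revoked_serials: set, today: date) -> bool:
    """Reject a certificate that has expired or appears on the CRL."""
    if today > cert.expires:
        return False
    if cert.serial_number in revoked_serials:
        return False
    return True


cert = Certificate(
    subject="CN=Example Web Service",
    issuer="CN=Example CA",
    serial_number=1001,
    expires=date(2003, 12, 31),
    public_key=b"...public key bytes...",
)

crl = {2002, 2003}                      # serial numbers revoked by the CA
print(is_usable(cert, crl, date(2002, 8, 14)))           # valid and not revoked
print(is_usable(cert, crl | {1001}, date(2002, 8, 14)))  # revoked
print(is_usable(cert, crl, date(2004, 1, 1)))            # expired
```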

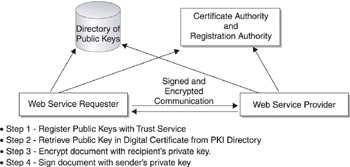

Together, CAs, RAs, and X.500 directories are known as a public key infrastructure (PKI). By linking public keys to actual identities using digital certificates, and by ensuring that a compromised private key results in swift revocation of a digital certificate, a PKI fulfils the nonrepudiation requirement. In Figure 2-1, you can see how PKI can be used for a Web Services transaction.

Figure 2-1: PKI used for Web Services

| Tip | Although digital certificates and PKI may be used to enable trust between a SOAP requester and a Web Service, this does not mean that end users must use digital certificates to authenticate to a system which uses Web Services. PKI is a notoriously difficult technology to deliver to end users. It is more practical for end users to authenticate using a username/password combination. However, WS-Security can then be used to embed information about the end user in a security token, for example, an X.509 certificate or a SAML Assertion, in a SOAP message. |

Four steps are outlined in the diagram. Step 1 is to generate a key pair and register the public key with a registration authority. This ensures that the certificate authority issues a digital certificate containing the public key. XKMS is the XML specification that can be used to provide PKI services over the Web. Note that XKMS is key-centric not certificate-centric, because it can be used without any certificates at all. In the diagram, both the Web Service requester and the Web Service provider register their keys using XKMS, and then their keys are held in a publicly available directory. If the requirement is only for the Web Services provider to be authenticated, then only they need to register their public key.

The public key can then be used to send encrypted data to the Web Service. Step 2 shows a Web Service requester retrieving the public key of the Web Service from a PKI directory. LDAP or Directory Services Markup Language (DSML) can be used for this step. Specific protocols such as SSL allow a Web Service provider to expose their public key to requesters, without the requirement to look up the key in a public directory; however, a public service may still be required in order to ensure that the key is valid. When a public key is enclosed in a digital certificate, the issuer of the certificate (sometimes called a “trusted third party” or “TTP”) signs both the key and the identity information of the key holder, thus binding them together and vouching for the identity of the key holder.

Step 3 shows secure communication between the Web Service provider and the Web Service requester. This data may be encrypted using the Web Service provider’s public key, with the public key’s validity checked using XKMS. XML Encryption can be used to encrypt a portion of the SOAP message, or SSL can be used at a lower communication layer to encrypt the session. XML Signature can be used for message integrity and nonrepudiation. As we can see from this diagram, many of the XML Web Services security specifications take existing procedures and apply an XML interface.

Authentication

In the previous section, we saw how the mathematical algorithms of asymmetric encryption can be linked with an identity-checking registration process and a directory server in order to deliver nonrepudiation. This same PKI technology can also be used for authentication—that is, to establish the identity of a communicating party. However, other methods are available for authentication, including biometrics, passwords, and hardware devices that issue one-time passwords. What these have in common is that a token is in the possession of the entity that is authenticated. In the case of a smartcard, the token is hardware-based; and in the case of a private key on a hard drive, the token is software-based. At a theoretical level, however, they are both tokens.

This book is about Web Services security, which adds a new twist on authentication. While a human user may authenticate directly to their systems, they will not be running Web Services directly. As we saw in the previous chapter, Web Services is software talking to other software. However, Web Services may well be run on behalf of a human user who has authenticated using a human-to-machine authentication technique (for example, a password). For example, a currency trader may authenticate to their dealing terminal at 8:00 A.M., and then press a button to perform a trade at 8:15 A.M. The processing of this trade may involve Web Services being run, perhaps both inside the dealer’s company and at a third-party settlement company. It may be a requirement for the Web Services to be called on behalf of the trader. Therefore, information about the dealer’s authentication status must be carried in the Web Service communication. This scenario, which is essential for Web Services, is enabled by SAML. SAML defines an XML syntax used for exchanging information about authentication and authorization.

Public Key Infrastructure for Authentication

We’ve seen that digital certificates bind a public key to the identity of the holder of the corresponding private key. When a digital certificate is used for authentication, it must be accompanied with proof-of-possession (POP) of the corresponding private key. After all, if this wasn’t required, anybody could retrieve digital certificates from an online directory and use them for authentication. A common way to implement proof-of-possession is to digitally sign a piece of data and send the digital certificate as part of the digital signature.

It is important when digital signatures are used for authentication to avoid the possibility of a replay attack. A replay attack involves intercepting data and then sending it again. In the case of an authentication request, a successful replay attack would result in an imposter assuming another person’s identity. It is important, therefore, that the signed data include a random element. This can be achieved using a challenge-response algorithm, whereby the entity that wishes to authenticate is presented with a piece of data they must digitally sign. The digital signature, possibly including the digital certificate, is then returned. If the signature is valid, the user has been authenticated.
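A challenge-response exchange that resists replay can be sketched as follows. To stay within the Python standard library, this sketch substitutes an HMAC over a shared key for the digital signature described above; the structure of the exchange (fresh random challenge, signed response, challenge accepted only once) is the point, and the class and function names are illustrative assumptions.

```python
import hashlib
import hmac
import secrets


class Verifier:
    """Issues random challenges and accepts each one at most once."""

    def __init__(self, key: bytes):
        self._key = key
        self._outstanding = set()

    def issue_challenge(self) -> bytes:
        nonce = secrets.token_bytes(16)      # the random element
        self._outstanding.add(nonce)
        return nonce

    def check(self, nonce: bytes, response: bytes) -> bool:
        if nonce not in self._outstanding:   # unknown or already used
            return False
        self._outstanding.discard(nonce)     # a challenge is single-use
        expected = hmac.new(self._key, nonce, hashlib.sha256).digest()
        return hmac.compare_digest(expected, response)


def respond(key: bytes, nonce: bytes) -> bytes:
    """The authenticating party proves possession of the key."""
    return hmac.new(key, nonce, hashlib.sha256).digest()


key = b"key-held-by-the-legitimate-party"
verifier = Verifier(key)

nonce = verifier.issue_challenge()
response = respond(key, nonce)

first_attempt = verifier.check(nonce, response)     # genuine authentication
replay_attempt = verifier.check(nonce, response)    # attacker resends captured data
print("first attempt accepted: ", first_attempt)
print("replay attempt accepted:", replay_attempt)
```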

The most popular application of digital certificates for authentication is Secure Sockets Layer (SSL), which uses asymmetric cryptography to perform authentication over HTTP. A requester (for example, a Web browser) issues a random challenge to a Web server and examines the response to ensure that the Web server holds the private key that corresponds to the public key in their digital certificate.

We learned in Chapter 1 that Web Services does not rely on HTTP, so SSL is not always an option for message authentication. WS-SecureConversation, part of the Microsoft Global XML Web Services Architecture (GXA), re-creates the functionality of SSL at the SOAP layer. In addition, the Web Services Development Kit (WSDK) supports XML Signature over a Timestamp element in a SOAP header. This functionality guards against a replay attack, since the SOAP message is only valid between the “Created” and “Expires” times contained within the Timestamp element.

| Tip | Be careful not to confuse replay attacks with denial of service attacks. If a single message is sent 1000 times to a Web Service, with a view to bringing the Web Service to its knees, that’s a denial of service attack. If a single message, containing authentication information, is detected and replayed in order to obtain illegal access to the Web Service, that’s a replay attack. |

An example of a timestamp is shown in Listing 2-1. This conforms to the WS-Security specification. A digital signature over this timestamp binds it to the identity of the signer, and limits the possibility of a replay attack.

Listing 2-1

<wsu:Timestamp xmlns:wsu="http://schemas.xmlsoap.org/ws/2002/07/utility">
  <wsu:Created>2002-08-14T17:33:27Z</wsu:Created>
  <wsu:Expires>2002-08-14T17:38:27Z</wsu:Expires>
</wsu:Timestamp>

| Tip | Ensure that the time period between the creation and expiration of a timestamp is short enough to limit the window of opportunity for a replay attack. |
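A receiver's freshness check on such a timestamp might look like the following sketch. It assumes the timestamp has already been extracted from the SOAP header and that its signature has been verified separately; the function names `parse_utc` and `is_fresh` are hypothetical.

```python
import xml.etree.ElementTree as ET
from datetime import datetime, timezone

WSU = "http://schemas.xmlsoap.org/ws/2002/07/utility"

TIMESTAMP_XML = """
<wsu:Timestamp xmlns:wsu="http://schemas.xmlsoap.org/ws/2002/07/utility">
  <wsu:Created>2002-08-14T17:33:27Z</wsu:Created>
  <wsu:Expires>2002-08-14T17:38:27Z</wsu:Expires>
</wsu:Timestamp>
""".strip()


def parse_utc(text: str) -> datetime:
    """Parse a UTC timestamp of the form 2002-08-14T17:33:27Z."""
    parsed = datetime.strptime(text.strip(), "%Y-%m-%dT%H:%M:%SZ")
    return parsed.replace(tzinfo=timezone.utc)


def is_fresh(xml_text: str, now: datetime) -> bool:
    """Accept the message only between its Created and Expires times."""
    root = ET.fromstring(xml_text)
    created = parse_utc(root.findtext(f"{{{WSU}}}Created"))
    expires = parse_utc(root.findtext(f"{{{WSU}}}Expires"))
    return created <= now <= expires


inside_window = datetime(2002, 8, 14, 17, 35, 0, tzinfo=timezone.utc)
after_window = datetime(2002, 8, 14, 17, 40, 0, tzinfo=timezone.utc)

print("accepted inside window:", is_fresh(TIMESTAMP_XML, inside_window))
print("accepted after expiry: ", is_fresh(TIMESTAMP_XML, after_window))
```

A replayed copy of the message is still accepted until the Expires time passes, which is why the window should be kept short.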

Smartcards

The word “smartcard” is a relatively loose term, and refers to any credit-card-sized device that contains a microchip. Smartcards can be used to store private keys for use in asymmetric encryption. Recall that when a public and private key pair is generated, and a smartcard is used, the private key never leaves the smartcard. This makes for a very secure solution. Of course, if the smartcard is somehow compromised or lost, the corresponding public key should not be used and its digital certificate should be entered into its PKI’s certificate revocation list.

If the smartcard stores a private key, the process used for authentication is similar to the process when the private key is not on a smartcard. However, the overall security of the system is enhanced because smartcard-based authentication is “two-factor” rather than “one-factor.” Here, “two-factor” refers to “something you have” (the smartcard) and “something you know” (the password to use the private key on the smartcard). When the private key is not on a smartcard and is instead resident on the hard drive, the authentication is “one-factor”—just “something you know” (the password required to use the private key on the hard drive).

Two-factor authentication can also be used without asymmetric encryption. This is the approach used by tools such as RSA Security’s SecurID cards. These tokens present one-time keys on an LCD screen. “One-time keys” are so-called because they do not repeat. The user may have a password that they must enter into the device to retrieve a one-time key (often called a “one-time password”), or the key may simply be presented onscreen. If the user is required to use a password, that is two-factor authentication (something you know, something you have). If there is no password, it is only one-factor authentication (something you have). These one-time key devices interact with a server that verifies the keys. One-time key devices are extremely secure, especially when two-factor authentication is used. However, they are not particularly applicable to Web Services since Web Services involves software communicating with other software, which means there may be literally nobody around to read the display on the one-time key card and enter it into a dialog box. If an end user has authenticated using a smartcard, then this fact may be conveyed in a SAML Authentication Assertion, which “asserts” that the end user used a hardware token to authenticate.
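The way a device and a server can agree on keys that never repeat can be sketched with HOTP, the counter-based one-time password algorithm published as RFC 4226. This is not the algorithm used by SecurID, which is proprietary; it is shown only as an openly specified example of the same idea. The `OneTimeVerifier` class is a simplified, hypothetical server that accepts each key once.

```python
import hashlib
import hmac
import struct


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """Counter-based one-time password as specified in RFC 4226."""
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F                            # dynamic truncation
    code = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10**digits).zfill(digits)


class OneTimeVerifier:
    """Server side: shares the secret and tracks the next expected counter."""

    def __init__(self, secret: bytes):
        self._secret = secret
        self._counter = 0

    def verify(self, code: str) -> bool:
        if hmac.compare_digest(code, hotp(self._secret, self._counter)):
            self._counter += 1                         # each key works once
            return True
        return False


secret = b"12345678901234567890"       # test secret from RFC 4226, Appendix D
server = OneTimeVerifier(secret)

device_code = hotp(secret, 0)          # what the token would display first
print("code shown on device:", device_code)
print("first use accepted: ", server.verify(device_code))
print("second use accepted:", server.verify(device_code))
```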

Biometrics

Biometrics presents an excellent authentication solution, but it is not directly applicable to Web Services security. It is indirectly applicable when a user authenticates to a system using biometrics, and information about that authentication action is then used as part of a “single sign-on” system.

Username and Password

Username and password is perhaps the most common form of authentication on the Internet. The WS-Security Addendum, published in August 2002, describes a means to include a username and password combination as a “security token” in a SOAP message. The password may be sent in clear text, or alternatively a digest of the password may be sent.
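The digest option can be illustrated with a short sketch. It assumes the digest is computed as Base64(SHA-1(nonce + created + password)), which is the formula used in the OASIS UsernameToken Profile. The dictionary layout and the names `password_digest`, `build_token`, and `verify_token` are hypothetical and stand in for the XML elements of a real SOAP header.

```python
import base64
import hashlib
import os
from datetime import datetime, timezone


def password_digest(nonce: bytes, created: str, password: str) -> str:
    """Base64(SHA-1(nonce + created + password)); assumed digest formula."""
    sha1 = hashlib.sha1(nonce + created.encode("utf-8") + password.encode("utf-8"))
    return base64.b64encode(sha1.digest()).decode("ascii")


def build_token(username: str, password: str) -> dict:
    """Build the fields a sender would place in the security token."""
    nonce = os.urandom(16)  # fresh random value for every message
    created = datetime.now(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ")
    return {
        "Username": username,
        "Nonce": base64.b64encode(nonce).decode("ascii"),
        "Created": created,
        "PasswordDigest": password_digest(nonce, created, password),
    }


def verify_token(token: dict, stored_password: str) -> bool:
    """The receiver recomputes the digest from its own copy of the password."""
    nonce = base64.b64decode(token["Nonce"])
    expected = password_digest(nonce, token["Created"], stored_password)
    return expected == token["PasswordDigest"]


if __name__ == "__main__":
    token = build_token("alice", "s3cret")
    print(token["PasswordDigest"], verify_token(token, "s3cret"))
```

The password itself never appears in the token, and the nonce and timestamp make each digest different. The receiver must still know the clear-text password (or an equivalent) in order to recompute the digest.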

Because end users rarely authenticate directly to a Web Service, SAML can be used to construct an assertion to indicate that a user has authenticated using username and password. Then, the SOAP message sent on the user’s behalf can include this SAML assertion but not the actual username or password.

Authorization

Authorization is an information security requirement that is sometimes linked with authentication, and it is important to see the difference between the two. While authentication is about “who you are,” authorization is about “what you are allowed to do.” Just because a user is authenticated doesn’t mean that they are authorized. Authentication is necessary for authorization, however. Authorization software allows an administrator to manage a policy for access control to services. It also typically provides the provisioning of access control rights to users. Authorization software, from vendors such as Netegrity and Oblix, can make use of users and groups that have already been configured in corporate directories. Users may be assigned to groups and roles.

The principal motivation of role-based access control (RBAC) is to map security management to an organization's structure. Traditionally, managing security has required mapping an organization's security policy to a relatively low-level set of controls, typically access control lists (ACLs). With RBAC, each user’s role may be based on the user's job responsibilities in the organization. Each role is assigned one or more privileges (for example, access to certain information). It is a user's membership in defined roles that determines the privileges the user is permitted.
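A minimal sketch of this model follows, in which privileges attach to roles and users acquire privileges only through role membership. The role names, privilege names, and user names are invented for illustration.

```python
# Each role is assigned one or more privileges (hypothetical names).
ROLE_PRIVILEGES = {
    "clerk": {"read_orders"},
    "manager": {"read_orders", "approve_orders"},
}

# Each user is assigned roles that reflect job responsibilities.
USER_ROLES = {
    "alice": {"manager"},
    "bob": {"clerk"},
}


def privileges_for(user: str) -> set:
    """Union of the privileges of every role the user belongs to."""
    granted = set()
    for role in USER_ROLES.get(user, set()):
        granted |= ROLE_PRIVILEGES.get(role, set())
    return granted


def is_permitted(user: str, privilege: str) -> bool:
    """A user holds a privilege only through membership in some role."""
    return privilege in privileges_for(user)


if __name__ == "__main__":
    print(is_permitted("alice", "approve_orders"))
    print(is_permitted("bob", "approve_orders"))
```

When a person changes jobs, only the entry in `USER_ROLES` changes. The privileges attached to each role stay the same, which is the management advantage over per-user access control lists.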

When looking at authentication software, we saw that single sign-on technologies such as SAML may be used to convey information about an authentication event that has occurred. Similarly, we will see how a SOAP profile of SAML may provide a means for an authorization event to be conveyed to a Web Service. When multiple Web Services are being run in quick succession, and time is of the essence, it is important that the overhead of authorization not occur each time another Web Service is being run.

Policy Enforcement Points and Policy Decision Points

One of the important architectural features of authorization software is that the location where the authorization decision is made is separated from the location where the policy is enforced. These two locations are known as the policy decision point (PDP) and the policy enforcement point (PEP), respectively. The PEP is at the location where potential users present their credentials, for example at a Web server. The PDP is not located on this system, but is on another system that is not exposed to users. This distinction is important because the PDP must be kept safe from tampering. It must not be exposed to the outside world. If it were on the Web server, which is an untrusted system, there would be a chance that it could be compromised and the policy changed. When we encounter SAML, we will see this distinction between the PDP and PEP expressed in the form of the SAML Protocol.
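The separation of the two roles can be sketched as two small classes. In this sketch the PEP calls the PDP through an ordinary method call, which stands in for a request to a separate, protected system. The class names, the “Permit”/“Deny” strings, and the sample policy are all assumptions made for illustration.

```python
class PolicyDecisionPoint:
    """Holds the policy and answers decision requests; never faces users."""

    def __init__(self, policy: dict):
        self._policy = policy  # maps role -> set of services it may call

    def decide(self, role: str, service: str) -> str:
        allowed = self._policy.get(role, set())
        return "Permit" if service in allowed else "Deny"


class PolicyEnforcementPoint:
    """Sits where requests arrive; holds no policy, only enforces decisions."""

    def __init__(self, pdp: PolicyDecisionPoint):
        self._pdp = pdp  # in practice, a connection to another system

    def handle(self, role: str, service: str) -> str:
        if self._pdp.decide(role, service) != "Permit":
            return "403 Forbidden"
        return f"200 OK: {service} invoked"


if __name__ == "__main__":
    pdp = PolicyDecisionPoint({"manager": {"approveOrder"}})
    pep = PolicyEnforcementPoint(pdp)
    print(pep.handle("manager", "approveOrder"))
    print(pep.handle("clerk", "approveOrder"))
```

The enforcement class contains no policy data, so compromising the system it runs on does not by itself let an attacker rewrite the rules.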

Availability

Availability may not strike the reader as being an obvious security requirement. However, if critical information is not available when needed, that is costly for any business. Availability is required not only of the Web Service itself but also of the security services it depends on. For example, a certificate revocation list is used for nonrepudiation. If the list is not available, the nonrepudiation feature is lost.

Denial of Service Attacks

One of the means of denying availability is to mount a denial of service (DoS) attack. A DoS attack aims to use up all the resources of a service so that it is unavailable to legitimate users. DoS attacks have evolved over the past few years, and the corresponding defensive technologies have also evolved. One of the chief innovations was the distributed denial of service (DDoS) attack. This involves gaining unauthorized access to many computers and installing DoS software on these compromised computers. Some compromised computers act as “handlers” that each orchestrate large numbers of “agents,” which are the computers that perform the actual DoS attack. DDoS attacks can be very destructive in terms of limiting availability to services.

Privacy

Like availability, privacy is a requirement that might not come immediately to mind when information security is discussed. Sometimes privacy is mistaken for confidentiality. We’ve seen that confidentiality is the requirement that data that is in transit is not available to eavesdroppers. The privacy requirement concerns the privacy rights of the subject of the data.

Many privacy protection laws are now in force worldwide, requiring that private data not be disclosed without consent from the subject of the data. Many of the publicized Internet security breaches are privacy breaches. When credit card data is stolen on the Internet, it is rarely a breach of confidentiality for transport-level security, because strong encryption is almost universally used for data in transit on the Internet. However, when credit card data is in storage at a Web site, for example, it may well not be encrypted. If there are any back doors in the Web application, or a direct database connection is available, the credit card data may be stolen. When this happens, it is both a breach of confidentiality (at the server) and a breach of privacy, because the data was supposed to remain private.

Other privacy violations are possible if authorization solutions are misconfigured, or not applied at all. Web Services offers a whole new means of accessing information, and, therefore, a new means of violating privacy if security is not correctly applied.

| Tip | Privacy rules, and data protection rules in particular, vary from country to country. Consult your national government web site to determine what the requirements are in your jurisdiction. |
