12.1 File System Hierarchy Revisited


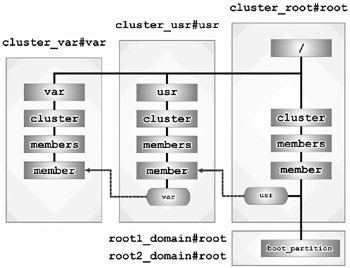

We begin our journey with the changes to the file system hierarchy now that the cluster has been created. The first change is the location of the file systems. In our standalone system, we had three file systems on two AdvFS domains:

- the root (/) file system
- the /usr file system
- the /var file system

Now that we have a two-member cluster, there are five file systems:

- the root (/) file system
- the /usr file system
- the /var file system
- the boot partition on member1's boot disk
- the boot partition on member2's boot disk

Figure 12-1 shows the TruCluster Server file system layout.

Figure 12-1: File System Hierarchy in a Cluster

The second change is that there are now more member directories. Each of the /, /usr, and /var file systems has its own /cluster/members directory:

    # for i in / /usr /var
    > do
    > cd ${i}/cluster/members
    > print "\n[${PWD}]"
    > ls -ld *
    > done

    [/cluster/members]
    lrwxr-xr-x   1 root     system         6 Dec 13 16:39 member -> {memb}
    drwxr-xr-x   9 root     system      8192 Dec 13 16:43 member0
    drwxr-xr-x   9 root     system      8192 Dec 13 16:54 member1
    drwxr-xr-x   9 root     system      8192 Dec 14 13:12 member2

    [/usr/cluster/members]
    lrwxr-xr-x   1 root     system         6 Dec 13 16:40 member -> {memb}
    drwxr-xr-x   7 root     system      8192 Dec 13 16:40 member0
    drwxr-xr-x   7 root     system      8192 Dec 13 16:43 member1
    drwxr-xr-x   7 root     system      8192 Dec 14 13:04 member2

    [/var/cluster/members]
    lrwxr-xr-x   1 root     system         6 Dec 13 16:42 member -> {memb}
    drwxr-xr-x  21 root     system      8192 Dec 13 16:42 member0
    drwxr-xr-x  21 root     system      8192 Dec 13 16:42 member1
    drwxr-xr-x  21 root     system      8192 Dec 14 13:05 member2

This is to be expected now that we have a two-member cluster. A more interesting directory, however, lies within each cluster member's directory under /cluster/members: the member's boot partition (boot_partition).

12.1.1 The Boot Partition

The boot partition holds the files that a member requires in order to boot. It is the boot partition, not the root file system, that a member boots from. When we created the cluster, the root file system, formerly root_domain#root, was replaced by cluster_root#root. Because the root file system is mounted clusterwide, it cannot be a bootable partition; each member therefore needs a member-specific boot partition, with a boot block, that it can use to boot itself into the cluster. Each member's boot partition is mounted at /cluster/members/member/boot_partition.
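The mount point /cluster/members/member/boot_partition is the same string on every member, yet each member reaches its own boot partition. The listing above shows why: member is a link to {memb}. The sketch below mimics that expansion in user space, assuming (as on TruCluster Server) that {memb} stands for "member" followed by the local member ID. The function names expand_memb and member_path are hypothetical helpers for illustration; on a real member the kernel performs this expansion itself.

```shell
#!/bin/sh
# Illustrative sketch only: mimic how the {memb} token in a link target
# expands to "member<ID>" on the member that follows the link.
# Assumption: the expansion is simply "member" + member ID.

# expand_memb TARGET ID: replace every {memb} token in TARGET with member<ID>
expand_memb() {
    printf '%s\n' "$1" | sed "s/{memb}/member$2/g"
}

# member_path ID REST: the directory that /cluster/members/member/REST
# reaches on member ID, given that "member" is a link to {memb}
member_path() {
    printf '/cluster/members/%s/%s\n' "$(expand_memb '{memb}' "$1")" "$2"
}

member_path 1 boot_partition    # /cluster/members/member1/boot_partition
member_path 2 boot_partition    # /cluster/members/member2/boot_partition
```

The same text in the link therefore resolves to a different directory on each member, which is how a single mount point can name member-specific storage.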

Two questions immediately spring to mind when considering this new file system hierarchy:

- If root is cluster-common and that is where the kernel is located, how can I boot without root?
- If I now have a boot partition, what is in it?

To answer the first question, let's expand on what we stated earlier in this section. Every member must have its own boot disk. Why? First, every member must have its own kernel because of potential hardware differences between members. Second, a member's boot partition cannot be opened by another member attempting to boot from it. So we need a separate disk that only one member will attempt to access, which solves the second problem, and on that disk we place the kernel and its support files, which solves the first.

A member boot disk contains three partitions:

- the "a" partition, at the head of the disk
- the "b" partition
- the "h" partition, a 1MB partition at the end of the disk

At block 0 of the member's boot disk is the boot block (rzboot.advfs for an AdvFS domain). This boot block points to the primary bootstrap code (bootrz.advfs) located at logical block number (LBN) 64. It is this bootstrap code that opens the osf_boot executable, which in turn loads the kernel. Both the osf_boot file and the kernel are located on the boot partition. Besides osf_boot and the kernel, what else should we expect? How about the sysconfigtab file? What about some of the hardware databases?
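The boot chain above is described in terms of logical block numbers. Assuming 512-byte disk blocks, LBN 64 begins 64 × 512 = 32768 bytes into the disk. The sketch below turns an LBN into a byte offset and copies raw blocks with dd so the boot block and bootstrap region could be saved for inspection. lbn_offset and read_blocks are hypothetical helpers, and the block size is an assumption stated in the code.

```shell
#!/bin/sh
# Sketch: locate and copy raw regions of a boot disk by logical block number.
# Assumption: 512-byte disk blocks.
BLOCK_SIZE=512

# lbn_offset LBN: byte offset at which logical block LBN begins
lbn_offset() {
    echo $(( $1 * $BLOCK_SIZE ))
}

# read_blocks DEVICE LBN COUNT: write COUNT blocks starting at LBN to stdout
read_blocks() {
    dd if="$1" bs="$BLOCK_SIZE" skip="$2" count="$3" 2>/dev/null
}

echo "boot block starts at byte $(lbn_offset 0)"
echo "primary bootstrap starts at byte $(lbn_offset 64)"
```

On a member, something like `read_blocks "$DISK" 0 1 > bootblock.bin` would save block 0, where DISK is a placeholder for the raw device of that member's boot disk. The text does not give the length of the bootstrap code, so any COUNT used for LBN 64 is a guess; the helpers only read, never write, the device.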

Returning to the second question ("If I now have a boot partition, what is in it?"), let's take a look.

    # ls -R /.local../boot_partition
    .tags        genvmunix    osf_boot     quota.user
    etc          mdec         quota.group  vmunix

    /.local../boot_partition/.tags:

    /.local../boot_partition/etc:
    clu_bdmgr.conf    dec_devsw_db      dec_hwc_ldb       dvrdevtab
    clu_recover.dat   dec_devsw_db.bak  dec_hwc_ldb.bak   fwevdb
    ddr.db            dec_hw_db         dec_scsi_db       gen_databases
    ddr.dbase         dec_hw_db.bak     dec_scsi_db.bak   sysconfigtab

    /.local../boot_partition/mdec:
    bootblks

As you can see, the partition holds only a small number of files, many of which you have probably seen before. On our test cluster, the boot partition currently uses about 35MB:

    # du -sk /.local../boot_partition
    35645   /.local../boot_partition
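du -sk reports kilobytes, so 35645 KB is 35645 / 1024 ≈ 34.8 MB, which is the "about 35MB" quoted above. The sketch below pairs that conversion with a quick presence check for the boot files this section names (osf_boot, vmunix, and etc/sysconfigtab). kb_to_mb and check_boot_files are hypothetical helpers, and the file list is only the handful mentioned in the text, not an authoritative inventory of what a member needs to boot.

```shell
#!/bin/sh
# Sketch: sanity-check a boot partition and convert its du -sk size to MB.
# The file list below is illustrative, taken from this section's discussion.

# kb_to_mb KB: convert a du -sk figure to megabytes (1 MB = 1024 KB)
kb_to_mb() {
    awk -v kb="$1" 'BEGIN { printf "%.1f\n", kb / 1024 }'
}

# check_boot_files DIR: print each named file missing from DIR;
# return 0 if all are present, 1 otherwise
check_boot_files() {
    missing=0
    for f in osf_boot vmunix etc/sysconfigtab; do
        if [ ! -f "$1/$f" ]; then
            echo "missing: $1/$f"
            missing=1
        fi
    done
    return $missing
}

kb_to_mb 35645    # the du -sk figure above; prints 34.8
```

On a member, `check_boot_files /.local../boot_partition` would print nothing and succeed if those three files are in place.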

12.1.2 Member-Specific or Cluster-Common? (Revisited)

Now that we have a cluster, it should be easier to understand why we must have both member-specific and cluster-common files. Because a cluster is meant to act like one large system rather than many smaller systems working independently, the cluster members share most of their files. They also share a common configuration for many, but not all, applications.

As we pointed out in the previous section, every member requires its own files in order to boot. For example, the kernel must be tailored to the member's specific hardware configuration. Because each member may have a unique hardware configuration, each member has its own /dev directory as well as member-specific hardware databases. Each member also needs its own hostname and IP address; therefore, the rc.config file is member-specific. We discussed much of this in Chapter 6 but felt it important enough to reiterate here.
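One way to tell the two kinds of files apart from the command line is sketched below. It assumes, as the member -> {memb} link in the earlier listing suggests, that a member-specific file is reached through a symbolic link whose target contains the {memb} token. is_member_specific is a hypothetical helper; it inspects only the final path component, so a file reached through a linked parent directory is not detected.

```shell
#!/bin/sh
# Sketch: guess whether a path is member-specific by checking whether it is a
# symbolic link whose target contains {memb}. Only the last component is
# examined. Assumption: member-specific files are reached via such links.

is_member_specific() {
    [ -L "$1" ] || return 1
    # ls -ld prints "... name -> target"; keep only the target text
    target=$(ls -ld "$1" | sed 's/.* -> //')
    case $target in
        *'{memb}'*) return 0 ;;
        *)          return 1 ;;
    esac
}
```

On a cluster member, `is_member_specific /etc/rc.config && echo member-specific` would then report rc.config as member-specific if it is reached through such a link.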


Pages: 273